Learning Web Scraping, Part 2: Scraping 58.com Rental Listings with BeautifulSoup

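It's the weekend, I'm a bit tired, and I don't feel like reading anything else, so I'm relaxing by learning some web scraping. I took a quick look at the BeautifulSoup and Requests libraries and used them to scrape rental listings from 58.com (58同城). The code is fairly simple. I'm just starting out and there is plenty left to improve, so experts, please go easy on me. I'm posting it only as a record. Once this post is done I'll play a round of Honor of Kings and go to bed.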

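The program is set to crawl 3 pages. To avoid triggering anti-scraping measures, it simply waits 2 seconds between pages. The code is as follows: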

import time

import requests
from bs4 import BeautifulSoup

# Request headers
headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) '
                  'Chrome/49.0.2623.112 Safari/537.36'
}

# Get the detail-page links from a listing page
def get_links(url):
    web_data = requests.get(url, headers=headers)
    soup = BeautifulSoup(web_data.text, 'lxml')
    links = soup.select('.des h2 a')
    for link in links:
        href = link.get("href")
        get_info(href)

# Get the information from a detail page
def get_info(url):
    if url is None:
        return
    web_data = requests.get(url, headers=headers)
    soup = BeautifulSoup(web_data.text, 'lxml')
    titles = soup.select('body > div.main-wrap > div.house-title > h1')
    prices = soup.select(
        'body > div.main-wrap > div.house-basic-info > div.house-basic-right.fr > div.house-basic-desc > div.house-desc-item.fl.c_333 > div > span.c_ff552e > b')
    areas = soup.select(
        'body > div.main-wrap > div.house-basic-info > div.house-basic-right.fr > div.house-basic-desc > div.house-desc-item.fl.c_333 > ul > li:nth-of-type(2) > span:nth-of-type(2)')
    # zip stops at the shortest list, so a page where any selector matches nothing prints no record
    for title, price, area in zip(titles, prices, areas):
        data = {
            '房屋名称': title.get_text().strip(),  # listing title
            '价格': price.get_text().strip(),  # price
            '面积': area.get_text().strip(),  # area
        }
        print(data)

if __name__ == '__main__':

urls = ['http://cx.58.com/chuzu/pn{}'.format(number) for number in range(1,3)]

fileName = 'D:/58.json'

for single_url in urls:

get_links(single_url)

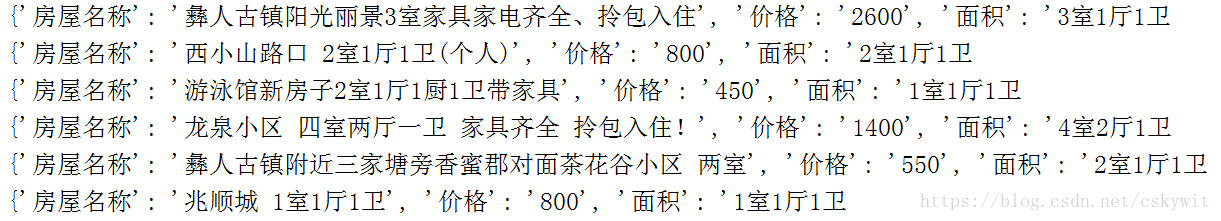

time.sleep(2)没有保存到文件,只是简单的print,运行效果部分截图如下:
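
The script only prints each record, so here is a minimal sketch of one way the records could be written to a JSON file. It is not part of the original program. The helper names `save_records` and `load_records` are hypothetical, the sample records are made up purely for illustration, and the sketch assumes `get_info` would be changed to return its `data` dict so that the records can be collected into a list first.

```python
import json
import os
import tempfile


def save_records(records, path):
    """Write a list of dict records to `path` as UTF-8 JSON."""
    with open(path, "w", encoding="utf-8") as f:
        # ensure_ascii=False keeps Chinese text readable instead of \uXXXX escapes
        json.dump(records, f, ensure_ascii=False, indent=2)


def load_records(path):
    """Read the records back, mainly to check the round trip."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


# Made-up sample records, shaped like the dict built in get_info
sample = [
    {"房屋名称": "示例房源一", "价格": "1200", "面积": "60"},
    {"房屋名称": "示例房源二", "价格": "1500", "面积": "75"},
]

# A temporary path is used for the demo; a path such as 'D:/58.json' would work the same way
demo_path = os.path.join(tempfile.gettempdir(), "58_demo.json")
save_records(sample, demo_path)
print(load_records(demo_path) == sample)
```

Collecting everything into one list and writing it once at the end keeps the file valid JSON. If the crawl might be interrupted partway, writing one JSON object per line as each record arrives may be a safer choice.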