Packages to prepare

1. hadoop-2.7.2.tar.gz

http://mirror.bit.edu.cn/apache/hadoop/common/

2. scala-2.10.4.tgz

http://www.scala-lang.org/download/2.10.4.html

3. spark-2.0.0-bin-hadoop2.7.tar

http://spark.apache.org/downloads.html

I. Environment preparation

Three CentOS 7 virtual machines:

172.16.92.115 spark01 #namenode

172.16.92.117 spark02 #datanode

172.16.92.80 spark03 #datanode

1.1. Disable the firewall and SELinux
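This step lists no commands. The following is a minimal sketch for CentOS 7, assuming the stock firewalld service and the default SELinux config path /etc/selinux/config. The function names are made up for illustration, and both functions must be run as root on each of the three nodes.

```shell
# Stop firewalld now and keep it from starting at boot.
disable_firewall() {
    systemctl stop firewalld
    systemctl disable firewalld
}

# Put SELinux into permissive mode for the running system, then mark it
# disabled in the config file so the setting survives a reboot.
# $1: config file path (assumed default: /etc/selinux/config).
disable_selinux() {
    conf=${1:-/etc/selinux/config}
    # setenforce fails when SELinux is already disabled; ignore that case.
    setenforce 0 || true
    # Only the SELINUX= line is rewritten; SELINUXTYPE= is left alone.
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$conf"
}
```

Calling `disable_firewall` and then `disable_selinux` with no arguments applies both changes to the local machine.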

1.2. Configure hosts and set up passwordless SSH login so that the three machines can reach one another

    [root@spark01 ~]# vi /etc/hosts
    172.16.92.115 spark01 #namenode
    172.16.92.117 spark02 #datanode
    172.16.92.80 spark03 #datanode
    [root@spark01 ~]# ssh-keygen
    [root@spark01 ~]# ssh-copy-id spark01
    [root@spark01 ~]# ssh-copy-id spark02
    [root@spark01 ~]# ssh-copy-id spark03
    [root@spark01 ~]# scp /etc/hosts spark02:/etc/
    [root@spark01 ~]# scp /etc/hosts spark03:/etc/
    [root@spark02 ~]# ssh-keygen
    [root@spark02 ~]# ssh-copy-id spark01
    [root@spark02 ~]# ssh-copy-id spark02
    [root@spark02 ~]# ssh-copy-id spark03
    [root@spark03 ~]# ssh-keygen
    [root@spark03 ~]# ssh-copy-id spark01
    [root@spark03 ~]# ssh-copy-id spark02
    [root@spark03 ~]# ssh-copy-id spark03
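The same steps can be scripted so that they are safe to repeat. The sketch below is only one way to do it: `add_hosts_entries` and `copy_key_to_nodes` are hypothetical helper names, and the script assumes `ssh-keygen` has already been run for the current user.

```shell
# Append "IP hostname" pairs to a hosts file, skipping hostnames already present.
# $1: hosts file (e.g. /etc/hosts); remaining args: alternating IP and hostname.
add_hosts_entries() {
    hosts_file=$1
    shift
    while [ "$#" -ge 2 ]; do
        ip=$1
        name=$2
        shift 2
        # -w matches whole words only and -F treats the name as a literal string.
        grep -qwF "$name" "$hosts_file" ||
            printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    done
}

# Copy the current user's public key to every listed node.
# ssh-copy-id asks for each node's password once.
copy_key_to_nodes() {
    for host in "$@"; do
        ssh-copy-id "$host"
    done
}
```

For the three machines above this would be `add_hosts_entries /etc/hosts 172.16.92.115 spark01 172.16.92.117 spark02 172.16.92.80 spark03` followed by `copy_key_to_nodes spark01 spark02 spark03`, repeated on each node.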

1.3. Install JDK 1.8

It is already installed here:

    [root@spark01 ~]# yum list|grep jdk
    Repodata is over 2 weeks old. Install yum-cron? Or run: yum makecache fast
    jdk1.8.0_91.x86_64 2000:1.8.0_91-fcs installed

1.4. Configure environment variables

    [root@spark01 ~]# export JAVA_HOME=/usr/java/jdk1.8.0_91
    [root@spark01 ~]# export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib:$CLASSPATH
    [root@spark01 ~]# export PATH=$JAVA_HOME/bin:$PATH
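Variables exported at the prompt last only for the current shell session. One way to keep them across logins is to write them to a script under /etc/profile.d, which CentOS sources for login shells. The sketch below assumes that approach; the file name java.sh and the function name `write_java_profile` are illustrative choices, not something the original setup prescribes.

```shell
# Write the JDK variables to a profile script so that new login shells pick them up.
# $1: target file (assumed: /etc/profile.d/java.sh); $2: JDK install directory.
write_java_profile() {
    target=${1:-/etc/profile.d/java.sh}
    jdk_dir=${2:-/usr/java/jdk1.8.0_91}
    printf 'export JAVA_HOME=%s\n' "$jdk_dir" > "$target"
    # Single quotes keep $JAVA_HOME, $CLASSPATH and $PATH unexpanded in the file,
    # so they are resolved when the file is sourced, not when it is written.
    printf '%s\n' \
        'export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib:$CLASSPATH' \
        'export PATH=$JAVA_HOME/bin:$PATH' >> "$target"
}
```

After running `write_java_profile` as root, `source /etc/profile.d/java.sh` applies the variables to the current shell as well.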

II. Install Hadoop

2.1. Extract the archive and create the Hadoop directories

    [root@spark01 ~]# tar -xzvf hadoop-2.7.2.tar.gz -C /opt
    [root@spark01 ~]# cd /opt/hadoop-2.7.2
    [root@spark01 hadoop-2.7.2]# mkdir -p dfs/name
    [root@spark01 hadoop-2.7.2]# mkdir -p dfs/data
    [root@spark01 hadoop-2.7.2]# mkdir tmp/

2.2. Edit the configuration files

The files to change are mainly the following:

etc/hadoop/slaves

etc/hadoop/core-site.xml

etc/hadoop/hdfs-site.xml

etc/hadoop/mapred-site.xml

etc/hadoop/yarn-site.xml

2.2.1. Edit the slaves file (add the data nodes)

    [root@spark01 hadoop-2.7.2]# vi etc/hadoop/slaves
    spark02
    spark03

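2.2.2. Edit core-site.xml (core Hadoop settings; the HDFS port is 9000)

    [root@spark01 hadoop-2.7.2]# vi etc/hadoop/core-site.xml
    <configuration>
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://spark01:9000</value>
      </property>
      <property>
        <name>io.file.buffer.size</name>
        <!-- 131072 bytes = 128 KB; the default is 4096 bytes (4 KB) -->
        <value>131072</value>
      </property>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>file:/opt/hadoop-2.7.2/tmp</value>
        <description>A base for other temporary directories.</description>
      </property>
      <property>
        <name>hadoop.proxyuser.spark.hosts</name>
        <value>*</value>
      </property>
      <property>
        <name>hadoop.proxyuser.spark.groups</name>
        <value>*</value>
      </property>
    </configuration>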

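2.2.3. Edit hdfs-site.xml (HDFS settings: NameNode and DataNode ports and directory locations)

    [root@spark01 hadoop-2.7.2]# vi etc/hadoop/hdfs-site.xml
    <configuration>
      <property>
        <name>dfs.namenode.secondary.http-address</name>
        <value>spark01:9001</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:/opt/hadoop-2.7.2/dfs/name</value>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>file:/opt/hadoop-2.7.2/dfs/data</value>
      </property>
      <property>
        <name>dfs.replication</name>
        <!-- number of replicas; here equal to the number of DataNodes -->
        <value>2</value>
      </property>
      <property>
        <name>dfs.webhdfs.enabled</name>
        <value>true</value>
      </property>
    </configuration>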

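2.2.4. Edit mapred-site.xml (MapReduce settings: use the YARN framework, and set the JobHistory address and its web address)

    [root@spark01 hadoop-2.7.2]# vi etc/hadoop/mapred-site.xml
    <configuration>
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>
      <property>
        <name>mapreduce.jobhistory.address</name>
        <value>spark01:10020</value>
      </property>
      <property>
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>spark01:19888</value>
      </property>
    </configuration>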

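    [root@spark01 hadoop-2.7.2]# vi etc/hadoop/yarn-site.xml
    <configuration>
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
        <value>org.apache.hadoop.mapred.ShuffleHandler</value>
      </property>
      <property>
        <name>yarn.resourcemanager.address</name>
        <value>spark01:8032</value>
      </property>
      <property>
        <name>yarn.resourcemanager.scheduler.address</name>
        <value>spark01:8030</value>
      </property>
      <property>
        <name>yarn.resourcemanager.resource-tracker.address</name>
        <value>spark01:8035</value>
      </property>
      <property>
        <name>yarn.resourcemanager.admin.address</name>
        <value>spark01:8033</value>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.address</name>
        <value>spark01:8088</value>
      </property>
    </configuration>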
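Before copying the configuration to the other nodes, it can help to read a few values back from the edited files. The helper below is a rough sketch, not a Hadoop tool: `get_prop` is a hypothetical name, and it assumes the usual Hadoop layout in which each `<name>` and each `<value>` element fits on a single line. On a working installation, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop actually resolved.

```shell
# Print the <value> that follows a given <name> in a Hadoop *-site.xml file.
# $1: XML file; $2: property name. Prints nothing if the property is absent.
get_prop() {
    awk -v key="$2" '
        /<name>/ {
            # Remember the most recent property name.
            n = $0
            sub(/.*<name>/, "", n)
            sub(/<\/name>.*/, "", n)
        }
        /<value>/ {
            # Print the value only when it belongs to the requested name.
            if (n == key) {
                v = $0
                sub(/.*<value>/, "", v)
                sub(/<\/value>.*/, "", v)
                print v
                exit
            }
        }
    ' "$1"
}
```

For example, `get_prop etc/hadoop/core-site.xml fs.defaultFS` run from the Hadoop directory should echo the HDFS address configured above.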

2.2.5. Set JAVA_HOME in the hadoop-env.sh configuration file

    [root@spark01 hadoop-2.7.2]# vi etc/hadoop/hadoop-env.sh
    # The java implementation to use.
    export JAVA_HOME=/usr/java/jdk1.8.0_91

2.3. Copy the configured Hadoop directory to all the other slave machines

    [root@spark01 ~]# scp -r /opt/hadoop-2.7.2/ spark02:/opt/
    [root@spark01 ~]# scp -r /opt/hadoop-2.7.2/ spark03:/opt/
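With more than a couple of workers, the copy can be driven from the slaves file instead of being typed once per host. This is a sketch under the assumption that the slaves file holds one hostname per line; `for_each_worker` and `copy_hadoop_to` are hypothetical helpers.

```shell
# Run a command once per host listed in a slaves file, passing the host as
# the last argument. Blank lines and lines starting with # are skipped.
for_each_worker() {
    slaves_file=$1
    shift
    # The || clause keeps a final line that lacks a trailing newline.
    while read -r host || [ -n "$host" ]; do
        case "$host" in
            ''|'#'*) continue ;;
        esac
        # Redirecting stdin stops ssh/scp from consuming the rest of the file.
        "$@" "$host" < /dev/null
    done < "$slaves_file"
}

# Copy the configured Hadoop tree to one host (same paths as the commands above).
copy_hadoop_to() {
    scp -r /opt/hadoop-2.7.2/ "$1:/opt/"
}
```

Running `for_each_worker /opt/hadoop-2.7.2/etc/hadoop/slaves copy_hadoop_to` on spark01 then performs the same two copies.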

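2.4. Configure the Hadoop environment variables

    [root@spark01 ~]# export HADOOP_HOME=/opt/hadoop-2.7.2
    [root@spark01 ~]# export PATH=$HADOOP_HOME/bin:$PATH
    [root@spark02 ~]# export HADOOP_HOME=/opt/hadoop-2.7.2
    [root@spark02 ~]# export PATH=$HADOOP_HOME/bin:$PATH
    [root@spark03 ~]# export HADOOP_HOME=/opt/hadoop-2.7.2
    [root@spark03 ~]# export PATH=$HADOOP_HOME/bin:$PATH

Note: these are local shell environment variables and will not necessarily take effect inside Hadoop.

Hadoop's own environment variables are set in etc/hadoop/hadoop-env.sh.

The same holds for Spark: when a job submitted to the cluster with spark-submit needs to call libraries and the like, the environment variables must be configured in Spark itself, that is, added to the spark-env.sh file.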

2.5. Format the NameNode

    [root@spark01 hadoop-2.7.2]# ./bin/hdfs namenode -format
    17/07/18 09:52:36 INFO namenode.NameNode: STARTUP_MSG:
    /************************************************************
    STARTUP_MSG: Starting NameNode
    STARTUP_MSG: host = spark01/172.16.92.115
    STARTUP_MSG: args = [-format]
    STARTUP_MSG: version = 2.7.2
    ......
    /************************************************************
    SHUTDOWN_MSG: Shutting down NameNode at spark01/172.16.92.115
    ************************************************************/

2.6. Start the Hadoop file system

    [root@spark01 hadoop-2.7.2]# ./sbin/start-dfs.sh
    Starting namenodes on [spark01]
    spark01: Warning: Permanently added the ECDSA host key for IP address '172.16.92.115' to the list of known hosts.
    spark01: starting namenode, logging to /opt/hadoop-2.7.2/logs/hadoop-root-namenode-spark01.out
    spark03: starting datanode, logging to /opt/hadoop-2.7.2/logs/hadoop-root-datanode-spark03.out
    spark02: starting datanode, logging to /opt/hadoop-2.7.2/logs/hadoop-root-datanode-spark02.out
    Starting secondary namenodes [spark01]
    spark01: starting secondarynamenode, logging to /opt/hadoop-2.7.2/logs/hadoop-root-secondarynamenode-spark01.out

2.7. Check the processes with jps

    [root@spark01 hadoop-2.7.2]# jps
    25954 Jps
    25749 SecondaryNameNode
    25533 NameNode
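Reading jps output by eye gets tedious across several nodes. The sketch below checks the listing for a set of expected daemon names; `missing_daemons` is a hypothetical helper, and it assumes the default jps format of a PID followed by the class name.

```shell
# Read `jps` output on stdin and print each expected daemon name that is absent.
# Prints nothing when every expected daemon is present.
missing_daemons() {
    jps_out=$(cat)
    for daemon in "$@"; do
        # Compare the second column exactly, so that NameNode
        # does not match SecondaryNameNode.
        printf '%s\n' "$jps_out" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }' ||
            echo "$daemon"
    done
}
```

On spark01 this would be `jps | missing_daemons NameNode SecondaryNameNode`. For a worker, `ssh spark02 jps | missing_daemons DataNode` does the same, provided jps is on the PATH of the remote non-interactive shell.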

To make the hadoop commands easier to use, add an environment variable:

    [root@spark01 ~]# vi /etc/profile
    export PATH=$PATH:/opt/hadoop-2.7.2/bin

2.8. Other commands

2.8.1. Stop the file system

    [root@spark01 hadoop-2.7.2]# ./sbin/stop-dfs.sh

2.8.2. Start or stop all Hadoop services

    [root@spark01 hadoop-2.7.2]# ./sbin/start-all.sh
    [root@spark01 hadoop-2.7.2]# ./sbin/stop-all.sh

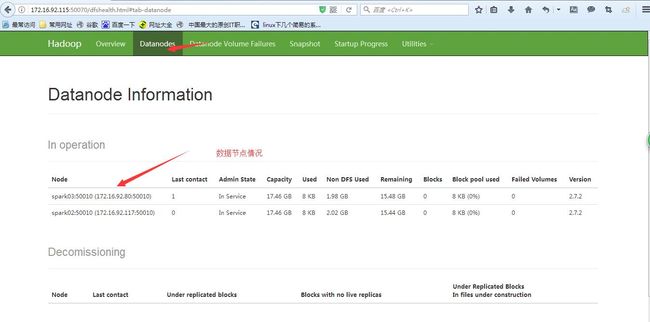

Open the following address in a browser to view the Hadoop cluster:

http://spark01:50070/
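Besides the web page, a quick command-line check is to print the cluster report and round-trip a small file through HDFS. The following is a minimal sketch, assuming the `hdfs` command is on the PATH. The function name `hdfs_smoke_test` and the scratch directory /tmp/smoke-test are illustrative.

```shell
# Print the cluster report, then write, read back, and delete a small file in HDFS.
# $1: scratch directory in HDFS (hypothetical default: /tmp/smoke-test).
hdfs_smoke_test() {
    dir=${1:-/tmp/smoke-test}
    local_file=$(mktemp)
    echo "hello hdfs" > "$local_file"

    # Each step runs only if the previous one succeeded.
    hdfs dfsadmin -report &&
        hdfs dfs -mkdir -p "$dir" &&
        hdfs dfs -put -f "$local_file" "$dir/hello.txt" &&
        hdfs dfs -cat "$dir/hello.txt" &&
        hdfs dfs -rm -r -skipTrash "$dir"
    status=$?

    rm -f "$local_file"
    return "$status"
}
```

The report should list both DataNodes as live, and the `-cat` step should echo the line that was written.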

III. Install Spark 2.0.0

The Hadoop cluster is already installed on the three nodes.

3.1.1. Install Scala

    [root@spark01 ~]# tar -zxvf scala-2.10.4.tgz -C /usr/local

3.1.2. Configure the Scala environment variables (Spark is written in Scala)

    [root@spark01 ~]# vi /etc/profile
    export SCALA_HOME=/usr/local/scala-2.10.4
    export PATH=${JAVA_HOME}/bin:${HADOOP_HOME}/bin:${SCALA_HOME}/bin:$PATH
    [root@spark01 ~]# source /etc/profile

3.1.3. Test the Scala runtime

    [root@spark01 ~]# scala
    Welcome to Scala version 2.10.4 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_91).
    Type in expressions to have them evaluated.
    Type :help for more information.

    scala> 9*3
    res0: Int = 27

    scala> exit

3.2. Install Spark

3.2.1. Extract the Spark package

    [root@spark01 ~]# tar -xvf spark-2.0.0-bin-hadoop2.7.tar -C /usr/local/

3.2.2. Configure the local environment variables

    [root@spark01 ~]# vi /etc/profile
    export SPARK_HOME=/usr/local/spark-2.0.0-bin-hadoop2.7/
    export PATH=${JAVA_HOME}/bin:${HADOOP_HOME}/bin:${SCALA_HOME}/bin:${SPARK_HOME}/bin:$PATH
    [root@spark01 ~]# source /etc/profile

3.2.3. Configure the Spark environment variables

    [root@spark01 ~]# cd $SPARK_HOME/conf
    [root@spark01 conf]# cp spark-env.sh.template spark-env.sh
    [root@spark01 conf]# vi spark-env.sh
    export JAVA_HOME=/usr/java/jdk1.8.0_91
    export SCALA_HOME=/usr/local/scala-2.10.4
    export SPARK_MASTER_IP=spark01      # hostname of the Spark cluster's master node
    export SPARK_WORKER_CORES=1         # CPU cores available to each worker node
    export SPARK_WORKER_MEMORY=512M     # memory available to each worker node
    export HADOOP_CONF_DIR=/opt/hadoop-2.7.2/etc/hadoop

3.2.4. Configure the worker nodes

    [root@spark01 conf]# cp slaves.template slaves
    [root@spark01 conf]# vi slaves
    spark01
    spark02
    spark03

3.2.5. Copy the configured Spark directory to all the other slave machines

    [root@spark01 ~]# scp -r /usr/local/spark-2.0.0-bin-hadoop2.7/ spark02:/usr/local/
    [root@spark01 ~]# scp -r /usr/local/spark-2.0.0-bin-hadoop2.7/ spark03:/usr/local/

3.2.6. Start Spark

    [root@spark01 ~]# cd $SPARK_HOME/sbin
    [root@spark01 sbin]# ./start-all.sh
    starting org.apache.spark.deploy.master.Master, logging to /usr/local/spark-2.0.0-bin-hadoop2.7//logs/spark-root-org.apache.spark.deploy.master.Master-1-spark01.out
    localhost: Warning: Permanently added 'localhost' (ECDSA) to the list of known hosts.
    spark02: starting org.apache.spark.deploy.worker.Worker, logging to /usr/local/spark-2.0.0-bin-hadoop2.7/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-spark02.out
    spark01: starting org.apache.spark.deploy.worker.Worker, logging to /usr/local/spark-2.0.0-bin-hadoop2.7/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-spark01.out
    localhost: starting org.apache.spark.deploy.worker.Worker, logging to /usr/local/spark-2.0.0-bin-hadoop2.7/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-spark01.out
    spark03: starting org.apache.spark.deploy.worker.Worker, logging to /usr/local/spark-2.0.0-bin-hadoop2.7/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-spark03.out

3.2.7. Check the processes with jps; Master and Worker processes now appear

    [root@spark01 sbin]# jps
    25749 SecondaryNameNode
    13413 Worker
    13719 Jps
    13322 Master
    13434 Worker
    25533 NameNode

View the Spark cluster:

http://spark01:8080/
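To confirm that the cluster accepts jobs, one option is to submit the SparkPi example that ships with Spark. The sketch below makes several assumptions: the master listens on the standalone default port 7077, the examples jar sits under $SPARK_HOME/examples/jars as in the prebuilt 2.x packages, and `run_spark_pi` is a made-up wrapper name.

```shell
# Submit the bundled SparkPi example to the standalone master.
# $1: master URL (assumed default: spark://spark01:7077).
# $2: number of partitions used for the Pi estimate.
run_spark_pi() {
    master=${1:-spark://spark01:7077}
    slices=${2:-10}
    # The glob avoids hard-coding the Scala and Spark versions in the jar name.
    jar=$(ls "$SPARK_HOME"/examples/jars/spark-examples_*.jar | head -n 1)
    "$SPARK_HOME/bin/spark-submit" \
        --master "$master" \
        --class org.apache.spark.examples.SparkPi \
        "$jar" "$slices"
}
```

If the job runs, its output contains a line starting with "Pi is roughly", and the application shows up on the master's web page.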