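Introduction to Kubernetes

Kubernetes is Google's open-source container cluster management system. It provides container scheduling on top of Docker, with a suite of features covering resource scheduling, load balancing and disaster recovery, service registration, and dynamic scaling. Kubernetes offers mechanisms for deploying, maintaining, and scaling applications, and makes it easy to manage containerized applications that run across machines. Its main functions are:

1) Use Docker to package, instantiate, and run applications.

2) Abstract multiple Docker hosts into a single resource and run and manage containers across machines as a cluster, including task scheduling, resource management, elastic scaling, and rolling upgrades.

3) Use an orchestration definition (YAML file) to build container clusters quickly, provide load balancing, and solve the problem of linking and communication between containers.

4) Solve the communication problem between Docker containers on different machines.

5) Manage and repair containers automatically. For example, if you create a cluster of ten containers and one of them exits abnormally, Kubernetes tries to restart or reschedule it so that ten containers are always running; conversely, it kills any surplus containers.

Kubernetes' self-healing mechanism keeps the container cluster running in the state the user expects. At present Kubernetes supports GCE, vSphere, CoreOS, and OpenShift.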

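Differences between Kubernetes and Mesos

1) Mesos is an open-source distributed resource management framework under Apache, often called the kernel of distributed systems. Kubernetes is Google's open-source container cluster management system; it builds on Docker containers and makes it easy to manage the containers on multiple Docker hosts.

2) Mesos manages cluster resources (dynamically at run time: when a machine has spare resources, it notifies the master, which allocates them). Kubernetes abstracts a new container composition model and orchestrates it. It solves the problem of freely combining containers to provide a service, which is what lets microservices, serverless, and similar approaches be implemented gracefully without development and operations fighting over them, and it makes many formerly hard operations tasks easier. Compared with OpenStack, for example, Kubernetes replaces OpenStack's VMs with containers, but the implementation is cleaner, leaner, and more abstract, and it is easier to use.

3) Mesos has been developed for longer than Kubernetes, is more mature overall, and has more production experience. Overseas users of Mesos include Twitter, Apple, Airbnb, and Uber; many well-known Chinese companies use it too, such as Xiaomi, Dangdang, Douban, Qunar, Ctrip, Vipshop, Zhihu, Sina Weibo, iQiyi, Qiniu, bilibili, China Unicom, China Mobile, China Telecom, Huawei, and Shurenyun. Medium and large companies tend to prefer Mesos because they have in-house development capability; Mesos provides a good API and many mature frameworks run on it. Mesos + Marathon + ZooKeeper normally covers most needs: you only write JSON or a DSL to define the service/application, and only special cases really require writing your own framework. Kubernetes (k8s) is gradually maturing, but it clearly needs more time to be proven in production; JD.com is already running more than 150,000 containers on Kubernetes. Mesos is increasingly adopting Kubernetes concepts and supports many Kubernetes APIs. So if you need them, Mesos is a convenient way to give your Kubernetes applications more capabilities (such as a highly available master, more advanced scheduling semantics, and the ability to manage a very large number of nodes), and it is well suited to production environments (after all, Kubernetes is still an early release).

4) If you are new to clusters, Kubernetes is a great starting point. It is the quickest, simplest, and most lightweight way to get started with cluster-oriented development. It offers a high degree of portability because it is backed by a range of contributors (for example Microsoft, IBM, Red Hat, CoreOS, Mesosphere, and VMware). If you already have existing workloads (Hadoop, Spark, Kafka, and so on), Mesos gives you a framework for interleaving different workloads and mixing in new ones, including Kubernetes applications. If you are not yet able to deliver a project with the Kubernetes family of frameworks, Mesos gives you a relief valve.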

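Kubernetes architecture diagram

Kubernetes roles

1) Pod

In Kubernetes the smallest unit of scheduling is not a bare container but a Pod: the smallest deployable unit that can be created, destroyed, scheduled, and managed. A Pod consists of one or more containers. All containers of a Pod run on the same host and share the same volumes, network, and namespace.

2) ReplicationController (RC)

An RC manages Pods; one RC can cover one or more Pods. After the RC is created, the system creates as many Pods as the defined replica count. At run time, if the number of Pods falls below the defined count, stopped Pods are restarted or new ones are scheduled; if there are too many, the surplus is killed. You can also scale the running Pods dynamically or change their properties. The RC is associated with its Pods through labels, and in a rolling upgrade it replaces the Pods to be updated one at a time.

The Replication Controller is the most useful feature in Kubernetes. It runs multiple replicas of a Pod (an application often needs several Pods to support it) and guarantees the replica count: even if the host a replica was scheduled to fails, the Replication Controller makes sure the same number of Pods is started on other hosts. A Replication Controller can create multiple Pod replicas from a repcon template, or take over existing Pods, which are associated through a label selector.

3) Service

A Service defines an abstract resource for a logical set of Pods whose containers provide the same function. The set is determined by the defined labels and selector. When a Service is created it is assigned a Cluster IP; this IP, together with the defined port, gives the set a single access endpoint and provides load balancing.

Services are the outermost unit of Kubernetes: through a virtual IP and service port they give access to the Pods we defined. In the current version this is implemented by iptables NAT forwarding, with the forwarding target being a random port generated by kube-proxy; for now access scheduling is only provided on Google's cloud, such as GCE.

4) Label

Labels are key/value pairs used to distinguish Pods, Services, and RCs. They are used only to identify the relationships among Pods, Services, and Replication Controllers; to operate on the units themselves you must use their name. A Pod, Service, or RC can have multiple labels, but each label key maps to only one value. Their main use is to route Service requests, via labels, to the set of backend Pods that provide the service.

A few personal observations: Kubernetes currently ships a minor release every week and a major one every month. This fast iteration means the operating procedure differs between versions, and the official documentation lags behind and has gaps, which is a challenge for beginners. On the upstream access layer the project's focus is still on integration with GCE (Google Compute Engine), and no workable access solution for private clouds has been released yet. The service proxy forwarding mechanism was only introduced in v0.5, and because it is implemented with iptables its performance under high concurrency is a concern. Even so, the author remains optimistic about Kubernetes: no other platform is yet as systematic or has as healthy an ecosystem, and it should be able to support production services by v1.0.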
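The replica-count behaviour described for the ReplicationController can be sketched as a small decision function. This is only an illustration of the idea in POSIX shell; `reconcile_action` is a hypothetical helper name and not part of Kubernetes, which implements this logic inside kube-controller-manager.

```shell
# reconcile_action DESIRED ACTUAL
# Hypothetical helper: prints what a replication controller would have to do
# to bring the number of running Pods back to the desired replica count.
reconcile_action() {
  desired=$1
  actual=$2
  if [ "$actual" -lt "$desired" ]; then
    # Too few Pods: start the missing ones.
    echo "create $(( $desired - $actual ))"
  elif [ "$actual" -gt "$desired" ]; then
    # Too many Pods: kill the surplus.
    echo "delete $(( $actual - $desired ))"
  else
    echo "none"
  fi
}

reconcile_action 2 1   # prints "create 1"
reconcile_action 2 3   # prints "delete 1"
reconcile_action 2 2   # prints "none"
```

The real controller runs this comparison continuously against the Pods selected by its label selector, which is why a Pod that disappears is replaced without manual intervention.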

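Kubernetes components

1) kubectl: the command-line client. It formats the commands it receives and sends them to kube-apiserver, serving as the operator's entry point to the system.

2) kube-apiserver: the control entry point of the whole system, exposed as a REST API service.

3) kube-controller-manager: runs the system's background tasks, including node status, Pod counts, and the association between Pods and Services.

4) kube-scheduler: manages node resources; it accepts Pod creation tasks from kube-apiserver and assigns them to a node.

5) etcd: handles service discovery and configuration sharing between nodes.

6) kube-proxy: runs on every compute node and proxies Pod networking. It periodically fetches Service information from etcd and applies the corresponding rules.

7) kubelet: runs on every compute node as an agent. It accepts the Pods assigned to its node, manages their containers, and periodically collects container status and reports it to kube-apiserver.

8) DNS: an optional DNS service that creates a DNS record for every Service object so that all Pods can reach Services by name.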

Kubelet

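As the diagram above shows, the Kubelet is the connection point between each Minion in the cluster and the Master API Server. It runs on every Minion and acts as the bridge between the Master API Server and the Minion: it receives the commands and work the Master API Server assigns to it, and reads configuration by interacting with the persistent key-value store etcd, files, servers, and HTTP. The Kubelet's main job is to manage the life cycle of Pods and containers. It comprises the Docker Client, Root Directory, Pod Workers, Etcd Client, Cadvisor Client, and Health Checker components, and does the following:

1) Runs specific actions for a Pod asynchronously through a worker.

2) Sets container environment variables.

3) Binds volumes to containers.

4) Binds ports to containers.

5) Runs a single container for a given Pod.

6) Kills containers.

7) Creates the network container for a given Pod.

8) Deletes all containers of a Pod.

9) Synchronizes Pod status.

10) Gets container info, pod info, root info, and machine info from Cadvisor.

11) Checks the health of a Pod's containers.

12) Runs commands inside containers.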

Basic Kubernetes deployment steps

1) Install Docker on the minion nodes.

2) Configure cross-host container networking on the minion nodes.

3) Deploy etcd, kube-apiserver, kube-controller-manager, and kube-scheduler on the master node.

4) Deploy kubelet and kube-proxy on the minion nodes.

Note: running etcd as a cluster, with etcd installed on all nodes, is recommended. A private registry is also set up here for the minion nodes to pull from.

Tip: if Docker is not installed on a minion host, kubelet reports the following errors on startup:

Could not load kubeconfig file /var/lib/kubelet/kubeconfig: stat /var/lib/kubelet/kubeconfig: no such file or directory. Trying auth path instead.

Could not load kubernetes auth path /var/lib/kubelet/kubernetes_auth: stat /var/lib/kubelet/kubernetes_auth: no such file or directory. Continuing with defaults.

No cloud provider specified.

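Kubernetes cluster deployment walkthrough

| Hostname | IP | Role | OS version |
| --- | --- | --- | --- |
| registry | 10.10.172.201 | registry | CentOS 7.2 |
| k8s-master | 10.10.172.202 | Master, etcd | CentOS 7.2 |
| k8s-node-1 | 10.10.172.203 | Node1 | CentOS 7.2 |
| k8s-node-2 | 10.10.172.204 | Node2 | CentOS 7.2 |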

0) Set the hostname on each machine

On the registry host:

[root@localhost ~]# hostnamectl --static set-hostname registry

On the master:

[root@localhost ~]# hostnamectl --static set-hostname k8s-master

On Node1:

[root@localhost ~]# hostnamectl --static set-hostname k8s-node-1

On Node2:

[root@localhost ~]# hostnamectl --static set-hostname k8s-node-2

Every machine also needs the same hosts entries; edit /etc/hosts on each of them:

[root@k8s-master ~]# vim /etc/hosts

10.10.172.201 registry

10.10.172.202 k8s-master

10.10.172.202 etcd

10.10.172.203 k8s-node-1

10.10.172.204 k8s-node-2
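Editing /etc/hosts by hand on four machines is easy to get wrong, so here is a minimal sketch of an idempotent alternative. `add_host_entry` is a hypothetical helper written for this article, and the demo below writes to a temporary file instead of the real /etc/hosts.

```shell
# add_host_entry FILE IP NAME
# Hypothetical helper: appends "IP NAME" to FILE unless NAME is already
# present as a hostname field on some line of FILE.
add_host_entry() {
  hosts_file=$1
  ip=$2
  name=$3
  # awk exits 0 only when NAME matches a hostname field (field 2 onwards) exactly.
  if ! awk -v n="$name" '{ for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$hosts_file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
  fi
}

# Demo against a scratch file; running it twice adds nothing new.
demo_hosts=$(mktemp)
for pass in 1 2; do
  add_host_entry "$demo_hosts" 10.10.172.201 registry
  add_host_entry "$demo_hosts" 10.10.172.202 k8s-master
  add_host_entry "$demo_hosts" 10.10.172.202 etcd
  add_host_entry "$demo_hosts" 10.10.172.203 k8s-node-1
  add_host_entry "$demo_hosts" 10.10.172.204 k8s-node-2
done
cat "$demo_hosts"
rm -f "$demo_hosts"
```

On a real host you would pass /etc/hosts as the first argument and run the calls as root.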

1) Disable the firewall and SELinux on every machine

[root@k8s-master ~]# systemctl disable firewalld.service

[root@k8s-master ~]# systemctl stop firewalld.service

Note: installing the iptables firewall instead is recommended: yum install iptables-services -y; systemctl start iptables.service; systemctl enable iptables.service

[root@k8s-master ~]# sed -i "/SELINUX/s/enforcing/disabled/g" /etc/selinux/config

[root@k8s-master ~]# reboot

[root@k8s-master ~]# getenforce

Disabled

Note: Docker 1.13.1 only starts if SELinux is disabled or the kernel is upgraded. Otherwise startup fails with: Error starting daemon: SELinux is not supported with the overlay2 graph driver on this kernel

2) Set up the private Docker registry

Environment:

We use 10.10.172.201 as the private registry address.

yum install docker -y

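1. Start the Docker registry service (the registry v2 image keeps its data under /var/lib/registry, so that is the container path to mount for persistence)

docker run -d -p 5000:5000 --name=registry --privileged=true --restart=always -v /data/history:/var/lib/registry registry

2. Check the Docker registry service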

# curl -XGET http://10.10.172.201:5000/v2/_catalog

# curl -XGET http://10.10.172.201:5000/v2/image_name/tags/list

3. Add your own image to the registry

1). Push it to your private registry

docker pull busybox

docker tag busybox 10.10.172.201:5000/busybox

docker push 10.10.172.201:5000/busybox

When pushing the image to the private registry, docker push fails with:

The push refers to a repository [10.10.172.201:5000/busybox]

Get https://10.10.172.201:5000/v1/_ping: http: server gave HTTP response to HTTPS client

Fix:

Edit the Docker config file /etc/sysconfig/docker on the server, add the following, and restart the docker service:

OPTIONS='--selinux-enabled --log-driver=journald --signature-verification=false --insecure-registry 10.10.172.201:5000'

ADD_REGISTRY='--add-registry 10.10.172.201:5000'

2). Check that the image arrived in the private registry

[root@k8s-master ~]# curl -XGET http://10.10.172.201:5000/v2/_catalog

{"repositories":["busybox"]}

[root@k8s-master ~]# curl -XGET http://10.10.172.201:5000/v2/busybox/tags/list

{"name":"busybox","tags":["latest"]}

4. Use the private registry from a Docker client:

Edit the config file /etc/sysconfig/docker, add the following, and restart the docker service:

OPTIONS='--selinux-enabled --log-driver=journald --signature-verification=false --insecure-registry 10.10.172.201:5000'

ADD_REGISTRY='--add-registry 10.10.172.201:5000'

Or:

Edit the config file /etc/docker/daemon.json, add the following, and restart the docker service:

{ "insecure-registries":["10.10.172.201:5000"] }

Or:

{ "insecure-registries":["10.10.172.201:5000"],

"registry-mirrors": ["http://df98fb04.m.daocloud.io"]}

The private Docker registry is now deployed, and images can be added to it or updated in it.

3) Deploy the Master

1) Install Docker first
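To script the catalog check instead of reading the JSON by eye, something like the following can be used. It is a rough sketch: `repo_in_catalog` is a hypothetical helper, and it assumes the registry answers with compact single-line JSON of the form shown above rather than parsing JSON properly.

```shell
# repo_in_catalog JSON NAME
# Hypothetical helper: succeeds if NAME is listed in the "repositories"
# array of a /v2/_catalog response (compact, single-line JSON assumed).
repo_in_catalog() {
  printf '%s\n' "$1" \
    | sed -n 's/.*"repositories": *\[\(.*\)\].*/\1/p' \
    | tr ',' '\n' \
    | grep -qx "\"$2\""
}

# Sample response copied from the output above.
catalog='{"repositories":["busybox"]}'
if repo_in_catalog "$catalog" busybox; then
  echo "busybox is in the registry"
fi
```

Against the live registry the first argument would be the output of curl -s http://10.10.172.201:5000/v2/_catalog.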

[root@k8s-master ~]# yum install -y docker

Configure Docker so that it is allowed to pull images from the registry

[root@k8s-master ~]# vim /etc/sysconfig/docker #add the line below

......

OPTIONS='--insecure-registry registry:5000'

[root@k8s-master ~]# systemctl start docker

2) Install etcd

Kubernetes depends on etcd, so etcd must be deployed first. Here it is installed with yum:

[root@k8s-master ~]# yum install etcd -y

The yum package puts the default config file at /etc/etcd/etcd.conf. Edit it:

[root@k8s-master ~]# cp /etc/etcd/etcd.conf{,.bak}

[root@k8s-master ~]# cat /etc/etcd/etcd.conf

#[member]

ETCD_NAME=master #node name

ETCD_DATA_DIR="/var/lib/etcd/default.etcd" #data directory

#ETCD_WAL_DIR=""

#ETCD_SNAPSHOT_COUNT="10000"

#ETCD_HEARTBEAT_INTERVAL="100"

#ETCD_ELECTION_TIMEOUT="1000"

#ETCD_LISTEN_PEER_URLS="http://0.0.0.0:2380"

ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379,http://0.0.0.0:4001" #addresses to listen on for client traffic

#ETCD_MAX_SNAPSHOTS="5"

#ETCD_MAX_WALS="5"

#ETCD_CORS=""

#

#[cluster]

#ETCD_INITIAL_ADVERTISE_PEER_URLS="http://localhost:2380"

# if you use different ETCD_NAME (e.g. test), set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."

#ETCD_INITIAL_CLUSTER="default=http://localhost:2380"

#ETCD_INITIAL_CLUSTER_STATE="new"

#ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_ADVERTISE_CLIENT_URLS="http://etcd:2379,http://etcd:4001" #client addresses advertised to clients

#ETCD_DISCOVERY=""

#ETCD_DISCOVERY_SRV=""

#ETCD_DISCOVERY_FALLBACK="proxy"

#ETCD_DISCOVERY_PROXY=""

Start etcd and verify its status

[root@k8s-master ~]# systemctl start etcd;systemctl enable etcd

[root@k8s-master ~]# ps -ef|grep etcd

etcd 28145 1 1 14:38 ? 00:00:00 /usr/bin/etcd --name=master --data-dir=/var/lib/etcd/default.etcd --listen-client-urls=http://0.0.0.0:2379,http://0.0.0.0:4001

root 28185 24819 0 14:38 pts/1 00:00:00 grep --color=auto etcd

[root@k8s-master ~]# lsof -i:2379

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

etcd 28145 etcd 6u IPv6 1283822 0t0 TCP *:2379 (LISTEN)

etcd 28145 etcd 18u IPv6 1284133 0t0 TCP localhost:53203->localhost:2379 (ESTABLISHED)

........

[root@k8s-master ~]# etcdctl set testdir/testkey0 0

0

[root@k8s-master ~]# etcdctl get testdir/testkey0

0

[root@k8s-master ~]# etcdctl -C http://etcd:4001 cluster-health

member 8e9e05c52164694d is healthy: got healthy result from http://etcd:2379

cluster is healthy

[root@k8s-master ~]# etcdctl -C http://etcd:2379 cluster-health

member 8e9e05c52164694d is healthy: got healthy result from http://etcd:2379

cluster is healthy

[root@k8s-master ~]# etcdctl member list

8e9e05c52164694d: name=default peerURLs=http://localhost:2380 clientURLs=http://etcd:2379,http://etcd:4001 isLeader=true

3) Install Kubernetes

[root@k8s-master ~]# yum install kubernetes -y #only kubernetes-master is actually needed

Configure and start Kubernetes

The Kubernetes master runs the following components: Kubernetes API Server, Kubernetes Controller Manager, Kubernetes Scheduler

[root@k8s-master ~]# cp /etc/kubernetes/apiserver{,.bak}

[root@k8s-master ~]# vim /etc/kubernetes/apiserver

###

# kubernetes system config

#

# The following values are used to configure the kube-apiserver

#

# The address on the local server to listen to.

KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"

# The port on the local server to listen on.

KUBE_API_PORT="--port=8080"

# Port minions listen on

# KUBELET_PORT="--kubelet-port=10250"

# Comma separated list of nodes in the etcd cluster

KUBE_ETCD_SERVERS="--etcd-servers=http://etcd:2379"

# Address range to use for services

KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=192.168.21.0/24"

# default admission control policies

#KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota"

KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ResourceQuota"

# Add your own!

KUBE_API_ARGS=""

[root@k8s-master ~]# cp /etc/kubernetes/config{,.bak}

[root@k8s-master ~]# vim /etc/kubernetes/config

###

# kubernetes system config

#

# The following values are used to configure various aspects of all

# kubernetes services, including

#

# kube-apiserver.service

# kube-controller-manager.service

# kube-scheduler.service

# kubelet.service

# kube-proxy.service

# logging to stderr means we get it in the systemd journal

KUBE_LOGTOSTDERR="--logtostderr=true"

# journal message level, 0 is debug

KUBE_LOG_LEVEL="--v=0"

# Should this cluster be allowed to run privileged docker containers

KUBE_ALLOW_PRIV="--allow-privileged=false"

# How the controller-manager, scheduler, and proxy find the apiserver

KUBE_MASTER="--master=http://k8s-master:8080"

Start the services and enable them at boot

[root@k8s-master ~]# systemctl enable kube-apiserver.service

[root@k8s-master ~]# systemctl start kube-apiserver.service

[root@k8s-master ~]# systemctl enable kube-controller-manager.service

[root@k8s-master ~]# systemctl start kube-controller-manager.service

[root@k8s-master ~]# systemctl enable kube-scheduler.service

[root@k8s-master ~]# systemctl start kube-scheduler.service

4) Deploy the Nodes (repeat on both node machines)

1) Install Docker

[root@k8s-node-1 ~]# yum install -y docker

Configure Docker so that it is allowed to pull images from the registry

[root@k8s-node-1 ~]# vim /etc/sysconfig/docker #add the line below

......

OPTIONS='--insecure-registry registry:5000'

[root@k8s-node-1 ~]# systemctl start docker;systemctl enable docker

2) Install Kubernetes

[root@k8s-node-1 ~]# yum install kubernetes -y #only kubernetes-node is actually needed

Configure and start Kubernetes

Each Kubernetes node runs the following components: Kubelet, Kubernetes Proxy

[root@k8s-node-1 ~]# cp /etc/kubernetes/config{,.bak}

[root@k8s-node-1 ~]# vim /etc/kubernetes/config

###

# kubernetes system config

#

# The following values are used to configure various aspects of all

# kubernetes services, including

#

# kube-apiserver.service

# kube-controller-manager.service

# kube-scheduler.service

# kubelet.service

# kube-proxy.service

# logging to stderr means we get it in the systemd journal

KUBE_LOGTOSTDERR="--logtostderr=true"

# journal message level, 0 is debug

KUBE_LOG_LEVEL="--v=0"

# Should this cluster be allowed to run privileged docker containers

KUBE_ALLOW_PRIV="--allow-privileged=false"

# How the controller-manager, scheduler, and proxy find the apiserver

KUBE_MASTER="--master=http://k8s-master:8080"

[root@k8s-node-1 ~]# cp /etc/kubernetes/kubelet{,.bak}

[root@k8s-node-1 ~]# vim /etc/kubernetes/kubelet

###

# kubernetes kubelet (minion) config

# The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)

KUBELET_ADDRESS="--address=0.0.0.0"

# The port for the info server to serve on

# KUBELET_PORT="--port=10250"

# You may leave this blank to use the actual hostname

KUBELET_HOSTNAME="--hostname-override=k8s-node-1" #important: on the second node (node2), change this to k8s-node-2

# location of the api-server

KUBELET_API_SERVER="--api-servers=http://k8s-master:8080"

# pod infrastructure container

KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest"

# Add your own!

KUBELET_ARGS=""

Registry mirror (accelerator) configuration (optional)

[root@k8s-node-1 ~]# cat /etc/docker/daemon.json

{"registry-mirrors": ["http://df98fb04.m.daocloud.io"]}

[root@k8s-node-1 ~]#

Start the services and enable them at boot

[root@k8s-node-1 ~]# systemctl enable kubelet.service

[root@k8s-node-1 ~]# systemctl start kubelet.service

[root@k8s-node-1 ~]# systemctl enable kube-proxy.service

[root@k8s-node-1 ~]# systemctl start kube-proxy.service

Check the status

[root@k8s-master ~]# kubectl -s http://k8s-master:8080 get node

NAME STATUS AGE

k8s-node-1 Ready 29s

k8s-node-2 Ready 28s

[root@k8s-master ~]# kubectl get nodes

NAME STATUS AGE

k8s-node-1 Ready 44s

k8s-node-2 Ready 43s
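When this check is part of a script, it helps to count the Ready nodes rather than read the table. The function below is a small sketch that parses the three-column output shown above from standard input; `count_ready_nodes` is a hypothetical name, and newer kubectl versions print extra columns that this simple parser ignores.

```shell
# count_ready_nodes
# Hypothetical helper: reads `kubectl get nodes` output on stdin and prints
# how many nodes have a STATUS column that is exactly "Ready".
count_ready_nodes() {
  awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}

# Demo with the output captured above.
printf '%s\n' \
  'NAME STATUS AGE' \
  'k8s-node-1 Ready 29s' \
  'k8s-node-2 Ready 28s' | count_ready_nodes   # prints 2
```

On the master this would be used as kubectl -s http://k8s-master:8080 get nodes | count_ready_nodes, for example in a loop that waits until the count reaches 2.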

Common Kubernetes commands

List the node hosts

[root@k8s-master ~]# kubectl get node //some environments still use the older term minion, in which case the command is "kubectl get minions"

List the pods

[root@k8s-master ~]# kubectl get pods

List the services

[root@k8s-master ~]# kubectl get services //or use "kubectl get services -o json"

List the replicationControllers

[root@k8s-master ~]# kubectl get replicationControllers

Delete all pods (replace pods in the command below with services or replicationControllers to delete all services or replicationControllers)

[root@k8s-master ~]# for i in `kubectl get pod|tail -n +2|awk '{print $1}'`; do kubectl delete pod $i; done

--------------------------------------------------------------------------

Besides the commands above, you can also query the API server's REST interface directly (this returns more up-to-date results)

Show the Kubernetes version

[root@k8s-master ~]# curl -s -L http://10.10.172.202:8080/version | python -mjson.tool

List the pods

[root@k8s-master ~]# curl -s -L http://10.10.172.202:8080/api/v1/pods | python -mjson.tool

List the replicationControllers

[root@k8s-master ~]# curl -s -L http://10.10.172.202:8080/api/v1/replicationcontrollers | python -mjson.tool

List the node hosts (called minions in older releases)

[root@k8s-master ~]# curl -s -L http://10.10.172.202:8080/api/v1/nodes | python -m json.tool

List the services

[root@k8s-master ~]# curl -s -L http://10.10.172.202:8080/api/v1/services | python -m json.tool

Tip:

In newer Kubernetes versions all commands are consolidated into kubectl, which replaces kubecfg, kubectl.sh, kubecfg.sh, and so on.

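5) Create the overlay network with Flannel

1) Install Flannel (run the following on the master and on every node)

[root@k8s-master ~]# yum install flannel -y #flannel is needed on the etcd node and on the worker nodes

2) Configure Flannel (edit /etc/sysconfig/flanneld on the master and on every node)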

[root@k8s-master ~]# cp /etc/sysconfig/flanneld{,.bak}

[root@k8s-master ~]# vim /etc/sysconfig/flanneld

# Flanneld configuration options

# etcd url location. Point this to the server where etcd runs

FLANNEL_ETCD_ENDPOINTS="http://etcd:2379"

# etcd config key. This is the configuration key that flannel queries

# For address range assignment

FLANNEL_ETCD_PREFIX="/atomic.io/network"

# Any additional options that you want to pass

#FLANNEL_OPTIONS=""

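3) Configure the flannel key in etcd (on the master only)

Flannel stores its configuration in etcd so that all Flannel instances see the same settings, so the following must be set in etcd. The key '/atomic.io/network/config' corresponds to the FLANNEL_ETCD_PREFIX option in /etc/sysconfig/flanneld above; if the two do not match, flanneld fails to start.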

[root@k8s-master ~]# etcdctl rm /atomic.io/network/ --recursive

[root@k8s-master ~]# etcdctl mk /atomic.io/network/config '{ "Network": "10.10.0.0/16" }'

{ "Network": "10.10.0.0/16" }

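Note: atomic.io can be replaced with coreos.com. Also note that 10.10.0.0/16 contains the hosts' own 10.10.172.0/24 network; in your own environment it is safer to choose a flannel range that does not overlap the host network.

List the subnets registered in etcd

etcdctl ls /atomic.io/network/subnets

4) Start Flannel

After starting Flannel, restart docker and then the Kubernetes services, in that order.

On the master: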

[root@k8s-master ~]# systemctl enable flanneld.service

[root@k8s-master ~]# systemctl start flanneld.service

[root@k8s-master ~]# service docker restart

[root@k8s-master ~]# systemctl restart kube-apiserver.service

[root@k8s-master ~]# systemctl restart kube-controller-manager.service

[root@k8s-master ~]# systemctl restart kube-scheduler.service

On each node:

[root@k8s-node-1 ~]# systemctl enable flanneld.service

[root@k8s-node-1 ~]# systemctl start flanneld.service

[root@k8s-node-1 ~]# service docker restart

[root@k8s-node-1 ~]# systemctl restart kubelet.service

[root@k8s-node-1 ~]# systemctl restart kube-proxy.service

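Then check the master and the nodes with ifconfig: the docker0 bridge now has an IP in the 10.10.0.0 range specified above, and containers created on the master and on the nodes can reach one another (they can ping each other).

On the master: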

[root@k8s-master ~]# ifconfig

docker0: flags=4099 mtu 1500

inet 10.10.34.1 netmask 255.255.255.0 broadcast 0.0.0.0

ether 02:42:e1:c2:b5:88 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth0: flags=4163 mtu 1500

inet 10.10.172.202 netmask 255.255.255.0 broadcast 10.10.172.255

inet6 fe80::250:56ff:fe86:6833 prefixlen 64 scopeid 0x20

ether 00:50:56:86:68:33 txqueuelen 1000 (Ethernet)

RX packets 87982 bytes 126277968 (120.4 MiB)

RX errors 0 dropped 40 overruns 0 frame 0

TX packets 47274 bytes 6240061 (5.9 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

flannel0: flags=4305 mtu 1472

inet 10.10.34.0 netmask 255.255.0.0 destination 10.10.34.0

unspec 00-00-00-00-00-00-00-00-00-00-00-00-00-00-00-00 txqueuelen 500 (UNSPEC)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73 mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10

loop txqueuelen 0 (Local Loopback)

RX packets 91755 bytes 38359378 (36.5 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 91755 bytes 38359378 (36.5 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@k8s-master ~]#

On the nodes:

[root@k8s-node-1 ~]# ifconfig

docker0: flags=4099 mtu 1500

inet 10.10.66.1 netmask 255.255.255.0 broadcast 0.0.0.0

ether 02:42:2c:1d:19:14 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth0: flags=4163 mtu 1500

inet 10.10.172.203 netmask 255.255.255.0 broadcast 10.10.172.255

inet6 fe80::250:56ff:fe86:3ed8 prefixlen 64 scopeid 0x20

ether 00:50:56:86:3e:d8 txqueuelen 1000 (Ethernet)

RX packets 69554 bytes 116340717 (110.9 MiB)

RX errors 0 dropped 34 overruns 0 frame 0

TX packets 35925 bytes 2949594 (2.8 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

flannel0: flags=4305 mtu 1472

inet 10.10.66.0 netmask 255.255.0.0 destination 10.10.66.0

unspec 00-00-00-00-00-00-00-00-00-00-00-00-00-00-00-00 txqueuelen 500 (UNSPEC)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73 mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10

loop txqueuelen 0 (Local Loopback)

RX packets 24 bytes 1856 (1.8 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 24 bytes 1856 (1.8 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@k8s-node-1 ~]#

[root@k8s-node-2 ~]# ifconfig

docker0: flags=4099 mtu 1500

inet 10.10.59.1 netmask 255.255.255.0 broadcast 0.0.0.0

ether 02:42:08:8b:65:48 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth0: flags=4163 mtu 1500

inet 10.10.172.204 netmask 255.255.255.0 broadcast 10.10.172.255

inet6 fe80::250:56ff:fe86:22d8 prefixlen 64 scopeid 0x20

ether 00:50:56:86:22:d8 txqueuelen 1000 (Ethernet)

RX packets 69381 bytes 116036521 (110.6 MiB)

RX errors 0 dropped 27 overruns 0 frame 0

TX packets 35545 bytes 2943130 (2.8 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

flannel0: flags=4305 mtu 1472

inet 10.10.59.0 netmask 255.255.0.0 destination 10.10.59.0

unspec 00-00-00-00-00-00-00-00-00-00-00-00-00-00-00-00 txqueuelen 500 (UNSPEC)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73 mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10

loop txqueuelen 0 (Local Loopback)

RX packets 24 bytes 1856 (1.8 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 24 bytes 1856 (1.8 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@k8s-node-2 ~]#
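To confirm that each docker0 address really falls inside the flannel network configured in etcd, the check can be done with integer arithmetic. The two functions below are a sketch written for this article (hypothetical names, IPv4 only, no input validation, and they assume a shell with 64-bit arithmetic).

```shell
# ip_to_int A.B.C.D
# Hypothetical helper: prints the IPv4 address as a 32-bit integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1          # split the address into its four octets
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# ip_in_cidr IP NETWORK/PREFIX
# Hypothetical helper: succeeds if IP lies inside NETWORK/PREFIX.
ip_in_cidr() {
  addr=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  bits=${2#*/}
  mask=$(( (4294967295 << (32 - $bits)) & 4294967295 ))
  [ $(( $addr & $mask )) -eq $(( $net & $mask )) ]
}

# docker0 addresses taken from the ifconfig output above.
for ip in 10.10.34.1 10.10.66.1 10.10.59.1; do
  ip_in_cidr "$ip" 10.10.0.0/16 && echo "$ip is inside 10.10.0.0/16"
done
```

The same check applied to a host address such as 10.10.172.202 also succeeds, which shows that the flannel range in this setup overlaps the host network.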

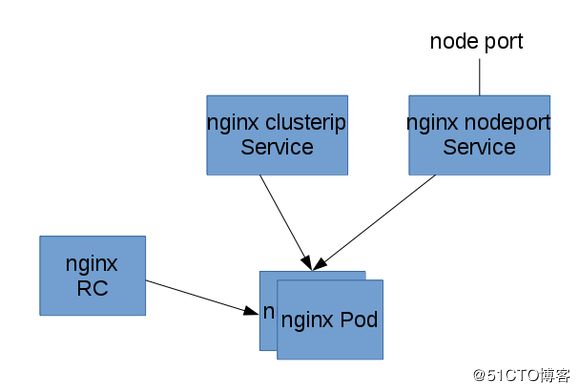

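6) Deploy the nginx Pods and replication controller

Following the diagram below, we install a simple nginx application that serves static content:

A replication controller starts an nginx Pod with 2 replicas, and Services are placed in front of it: one Service is reachable only from inside the cluster, the other from hosts outside the cluster. All commands below are run on the master.

1) First deploy the nginx Pods and the replication controller---------------------------------------------------------------------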

[root@k8s-master ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/nginx latest 3448f27c273f 8 days ago 109.4 MB

The following command shows that the apiVersion is v1

[root@k8s-master ~]# curl -s -L http://10.10.172.202:8080/api/v1beta1/version | python -mjson.tool

{

"apiVersion": "v1",

.......

}

Create the Pods

[root@k8s-master ~]# mkdir -p /data/kubermange && cd /data/kubermange

[root@k8s-master kubermange]# vim nginx-rc.yaml

apiVersion: v1

kind: ReplicationController

metadata:

  name: nginx-controller

spec:

  replicas: 2 # two replicas

  selector:

    name: nginx

  template:

    metadata:

      labels:

        name: nginx

    spec:

      containers:

      - name: nginx

        image: docker.io/nginx

        ports:

        - containerPort: 80

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 create -f nginx-rc.yaml

replicationcontroller "nginx-controller" created

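Kubernetes first has to pull the pod infrastructure (pause) image, which in this setup is registry.access.redhat.com/rhel7/pod-infrastructure as configured for the kubelet, and then the nginx image, so the new Pods need some time to reach the Running state.

Then list the pods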

[root@k8s-master kubermange]# kubectl -s http://k8s-master:8080 get pods

NAME READY STATUS RESTARTS AGE

nginx-controller-3n1ct 0/1 ContainerCreating 0 8s

nginx-controller-4bnfn 0/1 ContainerCreating 0 8s

Use the describe command to see which node a pod was assigned to:

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 describe pod nginx-controller-3n1ct |more

Name: nginx-controller-3n1ct

Namespace: default

Node: k8s-node-1/10.10.172.203

.......

Likewise for the other pod

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 describe pod nginx-controller-4bnfn |more

Name: nginx-controller-4bnfn

Namespace: default

Node: k8s-node-2/10.10.172.204

.......

As shown above, the replication controller started two Pods, running on the nodes 10.10.172.203 and 10.10.172.204. Checking on those two nodes confirms that the nginx containers have been created.

Tip: it is best to run yum install *rhsm* -y (yum install python-rhsm python-rhsm-certificates [python-dateutil] -y) on the nodes beforehand, then docker pull registry.access.redhat.com/rhel7/pod-infrastructure:latest, and only then run kubectl -s http://10.10.172.202:8080 create -f nginx-rc.yaml to create the Pods.

[root@k8s-node-1 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/nginx latest 3f8a4339aadd 12 days ago 108.5 MB

registry.access.redhat.com/rhel7/pod-infrastructure latest 99965fb98423 12 weeks ago 208.6 MB

[root@k8s-node-1 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

e60877d9d5e4 docker.io/nginx "nginx -g 'daemon off" 10 minutes ago Up 10 minutes k8s_nginx.3d610115_nginx-controller-b05d6_default_aadfd74a-f43a-11e7-a1bf-005056866833_6de59c2d

cba61f9bda3b registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" 11 minutes ago Up 11 minutes k8s_POD.a8590b41_nginx-controller-b05d6_default_aadfd74a-f43a-11e7-a1bf-005056866833_e60a56ca

[root@k8s-node-1 ~]# docker inspect e60877d9d5e4 |grep -i ip

"IpcMode": "container:cba61f9bda3b9e68859098f16ae4c77c09189ace3b8dc4656b797f5dd7dcb615",

"LinkLocalIPv6Address": "",

"LinkLocalIPv6PrefixLen": 0,

"SecondaryIPAddresses": null,

"SecondaryIPv6Addresses": null,

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"IPAddress": "",

"IPPrefixLen": 0,

"IPv6Gateway": "",

[root@k8s-node-1 ~]#

[root@k8s-node-2 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/nginx latest 3f8a4339aadd 12 days ago 108.5 MB

registry.access.redhat.com/rhel7/pod-infrastructure latest 99965fb98423 12 weeks ago 208.6 MB

[root@k8s-node-2 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

491df793c5d8 docker.io/nginx "nginx -g 'daemon off" 12 minutes ago Up 12 minutes k8s_nginx.3d610115_nginx-controller-8ddph_default_aadfcd91-f43a-11e7-a1bf-005056866833_785ceefb

647bf56d61b8 registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" 12 minutes ago Up 12 minutes k8s_POD.a8590b41_nginx-controller-8ddph_default_aadfcd91-f43a-11e7-a1bf-005056866833_145d0863

[root@k8s-node-2 ~]# docker inspect 491df793c5d8 |grep -i ip

"IpcMode": "container:647bf56d61b8b46a01dbf422ab273a11aa36c6b38bce594d73bec1ac42068829",

"LinkLocalIPv6Address": "",

"LinkLocalIPv6PrefixLen": 0,

"SecondaryIPAddresses": null,

"SecondaryIPv6Addresses": null,

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"IPAddress": "",

"IPPrefixLen": 0,

"IPv6Gateway": "",

[root@k8s-node-2 ~]#

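2) Deploy the nginx service reachable from inside the cluster------------------------------------------------------------------------

A Service's type can be ClusterIP or NodePort. The default is ClusterIP, and a Service of this type is reachable only from inside the cluster. The config file is: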

[root@k8s-master kubermange]# vim nginx-service-clusterip.yaml

apiVersion: v1

kind: Service

metadata:

  name: nginx-service-clusterip

spec:

  ports:

  - port: 8001

    targetPort: 80

    protocol: TCP

  selector:

    name: nginx

Then create the service with the command below:

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 create -f nginx-service-clusterip.yaml

Or

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 create -f ./nginx-service-clusterip.yaml

service "nginx-service-clusterip" created

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 get service

NAME CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes 192.168.21.1 443/TCP 2d

nginx-service-clusterip 192.168.21.174 8001/TCP 12s

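Verify that the service is reachable (from the nodes):

The output above tells us that this Service's Cluster IP is 192.168.21.174 and its port is 8001. Let's check that this Cluster IP works:

Log in to a node over ssh to verify (you can set up passwordless ssh trust beforehand; if you do not, you have to type the login password during the check)

[root@k8s-master kubermange]# ssh 10.10.172.203 curl -s 192.168.21.174:8001 //or run "curl -s 192.168.21.174:8001" directly on the node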

The authenticity of host '10.10.172.203 (10.10.172.203)' can't be established.

ECDSA key fingerprint is 66:41:1f:d2:77:b6:eb:ce:3f:a1:68:47:7e:14:ee:cb.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '10.10.172.203' (ECDSA) to the list of known hosts.

root@10.10.172.203's password:

Welcome to nginx!

Welcome to nginx!

If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.

For online documentation and support please refer to

nginx.org.

Commercial support is available at

nginx.com.

Thank you for using nginx.

[root@k8s-master kubermange]#

The same check from the other node also succeeds

[root@k8s-master kubermange]# ssh 10.10.172.204 curl -s 192.168.21.174:8001

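As the replication controller deployment above showed, the nginx Pods run on the nodes 10.10.172.203 and 10.10.172.204.

Accessing the service from both nodes demonstrates that the Service Cluster IP is reachable from every node in the cluster.

3) Deploy the nginx service reachable from outside the cluster-------------------------------------------------------------------

Next we create a Service of type NodePort, which is reachable from outside the cluster. The config file used in this article is: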

[root@k8s-master kubermange]# vim nginx-service-nodeport.yaml

apiVersion: v1

kind: Service

metadata:

  name: nginx-service-nodeport

spec:

  ports:

  - port: 8000

    targetPort: 80

    protocol: TCP

  type: NodePort

  selector:

    name: nginx

Create the service with the command below:

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 create -f ./nginx-service-nodeport.yaml

service "nginx-service-nodeport" created

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 get service

NAME CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes 192.168.21.1 443/TCP 2d

nginx-service-clusterip 192.168.21.174 8001/TCP 27m

nginx-service-nodeport 192.168.21.140 8000:31099/TCP 13s

Use the command below to get this service's node-level port:

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 describe service nginx-service-nodeport 2>/dev/null | grep NodePort

Type: NodePort

NodePort: 31099/TCP
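If the port number is needed in a script, for instance to build the URL for the curl check, it can be pulled out of the describe output. This is a sketch only: `extract_node_port` is a hypothetical helper, and it assumes the "NodePort:" line has the port/protocol form shown above (some kubectl versions print an extra port-name column before it, which the loop skips).

```shell
# extract_node_port
# Hypothetical helper: reads `kubectl describe service` output on stdin and
# prints the numeric node port from the "NodePort:" line.
extract_node_port() {
  awk '$1 == "NodePort:" {
         for (i = 2; i <= NF; i++)
           if ($i ~ /^[0-9]+\/(TCP|UDP)$/) { split($i, p, "/"); print p[1] }
       }'
}

# Demo with the two lines captured above.
printf '%s\n' 'Type: NodePort' 'NodePort: 31099/TCP' | extract_node_port   # prints 31099
```

On the master the input would come from kubectl -s http://10.10.172.202:8080 describe service nginx-service-nodeport.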

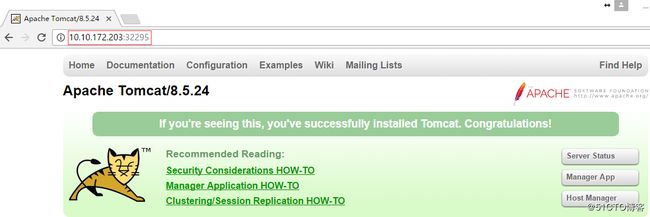

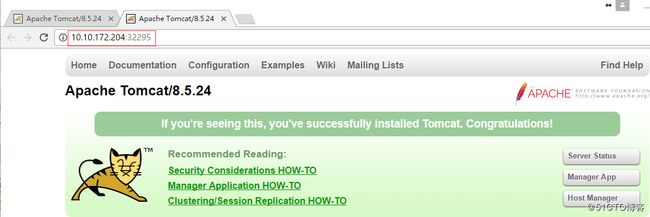

Verify that the service is reachable (from the nodes):

The output above tells us that this Service's node-level port is 31099. Let's check that the Service works:

[root@k8s-master kubermange]# curl 10.10.172.203:31099

Welcome to nginx!

Welcome to nginx!

If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.

For online documentation and support please refer to

nginx.org.

Commercial support is available at

nginx.com.

Thank you for using nginx.

[root@k8s-master kubermange]#

The same check against the other node also succeeds

[root@k8s-master kubermange]# curl 10.10.172.204:31099

----------------------------------------------------------

Log in to the two nodes: the nginx application containers have been created

[root@k8s-node-1 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

e60877d9d5e4 docker.io/nginx "nginx -g 'daemon off" About an hour ago Up About an hour k8s_nginx.3d610115_nginx-controller-b05d6_default_aadfd74a-f43a-11e7-a1bf-005056866833_6de59c2d

cba61f9bda3b registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" About an hour ago Up About an hour k8s_POD.a8590b41_nginx-controller-b05d6_default_aadfd74a-f43a-11e7-a1bf-005056866833_e60a56ca

[root@k8s-node-2 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

491df793c5d8 docker.io/nginx "nginx -g 'daemon off" About an hour ago Up About an hour k8s_nginx.3d610115_nginx-controller-8ddph_default_aadfcd91-f43a-11e7-a1bf-005056866833_785ceefb

647bf56d61b8 registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" About an hour ago Up About an hour k8s_POD.a8590b41_nginx-controller-8ddph_default_aadfcd91-f43a-11e7-a1bf-005056866833_145d0863

Access test from Google Chrome:

--------------------------------------------------------------------------------------------------------------

1) To scale out the nginx application, add the corresponding pod, service-clusterip, and service-nodeport YAML files in turn. Pay attention to the name values in the YAML files.

2) You can also add other application containers, such as tomcat, by creating pod, service-clusterip, and service-nodeport YAML files in the same order. Make sure the name values and ports in the YAML files do not duplicate existing ones.

3) Once the application container cluster is complete (the externally accessible ports are fixed), consider clustering the master control machine (that is, building an etcd cluster). You can put a load balancer in front of the control nodes and use keepalived for high availability.

---------------------------------------------------------

Below are the three YAML files used in the tomcat application container example:

[root@k8s-master kubermange]# cat tomcat-rc.yaml

apiVersion: v1
kind: ReplicationController
metadata:
  name: tomcat-controller
spec:
  replicas: 2
  selector:
    name: tomcat
  template:
    metadata:
      labels:
        name: tomcat
    spec:
      containers:
        - name: tomcat
          image: docker.io/tomcat
          ports:
            - containerPort: 8080

[root@k8s-master kubermange]# cat tomcat-service-clusterip.yaml

apiVersion: v1
kind: Service
metadata:
  name: tomcat-service-clusterip
spec:
  ports:
    - port: 8801
      targetPort: 8080
      protocol: TCP
  selector:
    name: tomcat

[root@k8s-master kubermange]# cat tomcat-service-nodeport.yaml

apiVersion: v1
kind: Service
metadata:
  name: tomcat-service-nodeport
spec:
  ports:
    - port: 8880
      targetPort: 8080
      protocol: TCP
  type: NodePort
  selector:
    name: tomcat

Check the externally accessible port of the tomcat service:

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 describe service tomcat-service-nodeport 2>/dev/null | grep NodePort

Type: NodePort

NodePort: 32295/TCP
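When adding another application as described in point 2, the NodePort service file differs from the tomcat one only in its name, label, and ports. A minimal sketch of a generator follows. `nodeport_service_yaml` is a hypothetical helper, and it assumes the pods carry a `name=<app>` label as in the tomcat files.

```shell
# Hypothetical generator: print a NodePort Service manifest for an app whose
# pods carry the label name=<app>, mirroring tomcat-service-nodeport.yaml.
nodeport_service_yaml() {
    app=$1          # label value and name prefix, e.g. tomcat
    port=$2         # service port inside the cluster, e.g. 8880
    target_port=$3  # container port, e.g. 8080
    cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: ${app}-service-nodeport
spec:
  ports:
    - port: ${port}
      targetPort: ${target_port}
      protocol: TCP
  type: NodePort
  selector:
    name: ${app}
EOF
}

# Usage: nodeport_service_yaml tomcat 8880 8080 > tomcat-service-nodeport.yaml
```

Deriving the service name from the application name keeps names unique as long as each application name is used once; the service port still has to be chosen so that it does not collide with an existing one.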

The steps are as follows:

1) First deploy the tomcat pods and the replication controller

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 create -f tomcat-rc.yaml

[root@k8s-master kubermange]# kubectl -s http://k8s-master:8080 get pods

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 describe pod tomcat-controller-* |more

2) Deploy the tomcat service that is reachable from inside the nodes

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 create -f tomcat-service-clusterip.yaml

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 get service

3) Deploy the externally accessible tomcat service

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 create -f ./tomcat-service-nodeport.yaml

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 get service

[root@k8s-master kubermange]# kubectl -s http://10.10.172.202:8080 describe service tomcat-service-nodeport 2>/dev/null | grep NodePort
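The three create steps above follow the same pattern for every application, so they can be wrapped in one function. This is a sketch, not part of the original procedure: `deploy_app` and `API_SERVER` are hypothetical names, and the `<app>-rc.yaml`, `<app>-service-clusterip.yaml`, `<app>-service-nodeport.yaml` file naming is assumed from this walkthrough.

```shell
# Hypothetical wrapper around the three create steps. API_SERVER and the
# <app>-*.yaml file naming follow this walkthrough; adjust for your cluster.
API_SERVER=${API_SERVER:-http://10.10.172.202:8080}

deploy_app() {
    app=$1
    for manifest in "${app}-rc.yaml" "${app}-service-clusterip.yaml" "${app}-service-nodeport.yaml"; do
        echo "creating ${manifest}"
        # Stop at the first failure so later manifests are not applied blindly.
        kubectl -s "$API_SERVER" create -f "$manifest" || return 1
    done
}

# Usage, run from the directory that holds the YAML files:
#   deploy_app tomcat
```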

Access test in Google Chrome:

At this point, we have deployed nginx and tomcat containers on each of the nodes.

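Summary: only the node machines need to have all the images. The master does not run the application containers, so installing Docker on it is optional.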
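To confirm that a node holds every image the applications need, the `docker images` listing can be compared against a list of required repositories. A minimal sketch, with `missing_images` as a hypothetical helper name:

```shell
# Hypothetical check: read `docker images` output on stdin and print each
# required repository (given as arguments) that is not listed.
missing_images() {
    listing=$(cat)
    for repo in "$@"; do
        # The repository name is the first column of each row.
        echo "$listing" | awk -v r="$repo" '$1 == r { found = 1 } END { exit !found }' \
            || echo "missing: ${repo}"
    done
}

# Usage on a node, with the three repositories used in this walkthrough:
#   docker images | missing_images docker.io/nginx docker.io/tomcat \
#       registry.access.redhat.com/rhel7/pod-infrastructure
```

The function prints nothing when all required repositories are present.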

[root@k8s-master ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/nginx latest 3f8a4339aadd 12 days ago 108.5 MB

[root@k8s-master ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

[root@k8s-master ~]#

[root@k8s-node-1 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/nginx latest 3f8a4339aadd 12 days ago 108.5 MB

docker.io/tomcat latest 3dcfe809147d 3 weeks ago 557.4 MB

registry.access.redhat.com/rhel7/pod-infrastructure latest 99965fb98423 12 weeks ago 208.6 MB

[root@k8s-node-1 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

a30873206f1a docker.io/tomcat "catalina.sh run" 23 minutes ago Up 23 minutes k8s_tomcat.cdbc0245_tomcat-controller-9dm45_default_07397545-f448-11e7-a1bf-005056866833_d753af9d

967e614e667b registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" 25 minutes ago Up 25 minutes k8s_POD.24f70ba9_tomcat-controller-9dm45_default_07397545-f448-11e7-a1bf-005056866833_46708437

e60877d9d5e4 docker.io/nginx "nginx -g 'daemon off" 2 hours ago Up 2 hours k8s_nginx.3d610115_nginx-controller-b05d6_default_aadfd74a-f43a-11e7-a1bf-005056866833_6de59c2d

cba61f9bda3b registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" 2 hours ago Up 2 hours k8s_POD.a8590b41_nginx-controller-b05d6_default_aadfd74a-f43a-11e7-a1bf-005056866833_e60a56ca

[root@k8s-node-1 ~]#

[root@k8s-node-2 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/nginx latest 3f8a4339aadd 12 days ago 108.5 MB

docker.io/tomcat latest 3dcfe809147d 3 weeks ago 557.4 MB

registry.access.redhat.com/rhel7/pod-infrastructure latest 99965fb98423 12 weeks ago 208.6 MB

[root@k8s-node-2 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

18b91f21a691 docker.io/tomcat "catalina.sh run" 24 minutes ago Up 24 minutes k8s_tomcat.cdbc0245_tomcat-controller-2b17b_default_07395f07-f448-11e7-a1bf-005056866833_de861f22

5bfe2ec09a0a registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" 25 minutes ago Up 25 minutes k8s_POD.24f70ba9_tomcat-controller-2b17b_default_07395f07-f448-11e7-a1bf-005056866833_69dd72ef

491df793c5d8 docker.io/nginx "nginx -g 'daemon off" 2 hours ago Up 2 hours k8s_nginx.3d610115_nginx-controller-8ddph_default_aadfcd91-f43a-11e7-a1bf-005056866833_785ceefb

647bf56d61b8 registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" 2 hours ago Up 2 hours k8s_POD.a8590b41_nginx-controller-8ddph_default_aadfcd91-f43a-11e7-a1bf-005056866833_145d0863

[root@k8s-node-2 ~]#