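In the previous article we finished walking through Peer's start() method. This article digs into the methods that start() calls to analyze how a Peer sends and receives messages. The first step in start() is to exchange Version messages, so let's look at negotiateInboundProtocol():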

    //btcd/peer/peer.go

    // negotiateInboundProtocol waits to receive a version message from the peer
    // then sends our version message. If the events do not occur in that order then
    // it returns an error.
    func (p *Peer) negotiateInboundProtocol() error {
        if err := p.readRemoteVersionMsg(); err != nil {
            return err
        }

        return p.writeLocalVersionMsg()
    }

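Its main steps are:

- Wait for the Version message sent by the Peer, then read and process it;

- Send our own Version message to the Peer.

negotiateOutboundProtocol() is similar to negotiateInboundProtocol(), except that the two steps run in the opposite order. readRemoteVersionMsg() first reads and parses the Version message, then calls the Peer's handleRemoteVersionMsg() to process it, and finally invokes the OnVersion callback in MessageListeners for further processing. Here we focus on handleRemoteVersionMsg():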

    //btcd/peer/peer.go

    // handleRemoteVersionMsg is invoked when a version bitcoin message is received
    // from the remote peer. It will return an error if the remote peer's version
    // is not compatible with ours.
    func (p *Peer) handleRemoteVersionMsg(msg *wire.MsgVersion) error {
        // Detect self connections.
        if !allowSelfConns && sentNonces.Exists(msg.Nonce) {
            return errors.New("disconnecting peer connected to self")
        }

        // Notify and disconnect clients that have a protocol version that is
        // too old.
        if msg.ProtocolVersion < int32(wire.MultipleAddressVersion) {
            // Send a reject message indicating the protocol version is
            // obsolete and wait for the message to be sent before
            // disconnecting.
            reason := fmt.Sprintf("protocol version must be %d or greater",
                wire.MultipleAddressVersion)
            rejectMsg := wire.NewMsgReject(msg.Command(), wire.RejectObsolete,
                reason)
            return p.writeMessage(rejectMsg)
        }

        // Updating a bunch of stats.
        p.statsMtx.Lock()
        p.lastBlock = msg.LastBlock
        p.startingHeight = msg.LastBlock
        // Set the peer's time offset.
        p.timeOffset = msg.Timestamp.Unix() - time.Now().Unix()
        p.statsMtx.Unlock()

        // Negotiate the protocol version.
        p.flagsMtx.Lock()
        p.advertisedProtoVer = uint32(msg.ProtocolVersion)
        p.protocolVersion = minUint32(p.protocolVersion, p.advertisedProtoVer)
        p.versionKnown = true
        log.Debugf("Negotiated protocol version %d for peer %s",
            p.protocolVersion, p)

        // Set the peer's ID.
        p.id = atomic.AddInt32(&nodeCount, 1)

        // Set the supported services for the peer to what the remote peer
        // advertised.
        p.services = msg.Services

        // Set the remote peer's user agent.
        p.userAgent = msg.UserAgent
        p.flagsMtx.Unlock()

        return nil
    }

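As we can see, when handling a Version message the Peer mainly does the following:

- It checks whether the Nonce in the Version message is one of the nonce values it has cached itself. If so, the Version message was sent by the node to itself. On a real network a node is not allowed to peer with itself, so an error is returned in this case;

- It checks the ProtocolVersion in the Version message. If the Peer's version is lower than 209 (wire.MultipleAddressVersion), it sends a reject message and refuses the connection;

- Once the Nonce and ProtocolVersion checks pass, it updates the Peer's information, such as the Peer's latest block height and the time offset between the Peer and the local node;

- Finally, it updates the Peer's protocol version, supported services, UserAgent, and so on, and assigns it an id.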
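The two checks and the version negotiation described above can be sketched in isolation. This is only an illustration under stated assumptions: negotiate and isSelfConnection are hypothetical helpers rather than btcd functions, and a plain map stands in for the nonce cache.

```go
package main

import (
	"fmt"
)

// minProtocolVersion mirrors the value 209 mentioned in the text
// (wire.MultipleAddressVersion).
const minProtocolVersion = 209

// negotiate is a hypothetical helper, not part of btcd. It rejects remotes
// older than minProtocolVersion and otherwise returns the lower of the two
// versions, which is what the minUint32 call in the listing achieves.
func negotiate(local, remote uint32) (uint32, error) {
	if remote < minProtocolVersion {
		return 0, fmt.Errorf("protocol version must be %d or greater",
			minProtocolVersion)
	}
	if remote < local {
		return remote, nil
	}
	return local, nil
}

// isSelfConnection reports whether a received nonce is one we sent ourselves.
// The map is an assumption standing in for the real nonce cache.
func isSelfConnection(sent map[uint64]struct{}, nonce uint64) bool {
	_, ok := sent[nonce]
	return ok
}

func main() {
	sent := map[uint64]struct{}{42: {}}
	fmt.Println("self connection:", isSelfConnection(sent, 42))

	v, err := negotiate(70013, 70002)
	fmt.Println("negotiated:", v, err)

	_, err = negotiate(70013, 106)
	fmt.Println("too old:", err)
}
```

Taking the minimum means both sides speak the newest protocol version that each of them understands.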

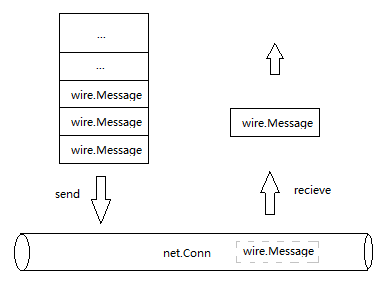

Peer的区块高度、支持的服务等信息将用于本地节点判断是否同步Peer的区块,我们将在后文中介绍,总之,交换Version消息是为了保证后续的消息交换及同步区块过程能够顺利进行。向Peer发送Version消息时,最主要的是填充及封装Version消息,我们将在介绍btcd/wire时再详细说明,这里暂不展开。接下来,我们开始介绍start()中新起的goroutine里运行的各个Handler的实现,由于这些goroutine里大量处理了channel消息,为了便于理解后续代码,我们先给出各个goroutine与其关联的channel的关系图:

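In the diagram, each large black circle with arrows represents a goroutine, and each blue or red "pipe" represents a channel. All of the channels here are bidirectional. When analyzing or designing concurrent Go code, you may also find it helpful to draw goroutines as circles with pipes plugged into them, which makes the goroutines and their synchronization relationships easier to grasp.

Let's first look at inHandler():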

    //btcd/peer/peer.go

    // inHandler handles all incoming messages for the peer. It must be run as a
    // goroutine.
    func (p *Peer) inHandler() {
        // Peers must complete the initial version negotiation within a shorter
        // timeframe than a general idle timeout. The timer is then reset below
        // to idleTimeout for all future messages.
        idleTimer := time.AfterFunc(idleTimeout, func() {
            log.Warnf("Peer %s no answer for %s -- disconnecting", p, idleTimeout)
            p.Disconnect()
        })

    out:
        for atomic.LoadInt32(&p.disconnect) == 0 {
            // Read a message and stop the idle timer as soon as the read
            // is done. The timer is reset below for the next iteration if
            // needed.
            rmsg, buf, err := p.readMessage()
            idleTimer.Stop()

            ......

            atomic.StoreInt64(&p.lastRecv, time.Now().Unix())
            p.stallControl <- stallControlMsg{sccReceiveMessage, rmsg}

            // Handle each supported message type.
            p.stallControl <- stallControlMsg{sccHandlerStart, rmsg}
            switch msg := rmsg.(type) {
            case *wire.MsgVersion:
                p.PushRejectMsg(msg.Command(), wire.RejectDuplicate,
                    "duplicate version message", nil, true)
                break out

            ......

            case *wire.MsgGetAddr:
                if p.cfg.Listeners.OnGetAddr != nil {
                    p.cfg.Listeners.OnGetAddr(p, msg)
                }

            case *wire.MsgAddr:
                if p.cfg.Listeners.OnAddr != nil {
                    p.cfg.Listeners.OnAddr(p, msg)
                }

            case *wire.MsgPing:
                p.handlePingMsg(msg)
                if p.cfg.Listeners.OnPing != nil {
                    p.cfg.Listeners.OnPing(p, msg)
                }

            case *wire.MsgPong:
                p.handlePongMsg(msg)
                if p.cfg.Listeners.OnPong != nil {
                    p.cfg.Listeners.OnPong(p, msg)
                }

            ......

            case *wire.MsgBlock:
                if p.cfg.Listeners.OnBlock != nil {
                    p.cfg.Listeners.OnBlock(p, msg, buf)
                }

            ......

            default:
                log.Debugf("Received unhandled message of type %v "+
                    "from %v", rmsg.Command(), p)
            }
            p.stallControl <- stallControlMsg{sccHandlerDone, rmsg}

            // A message was received so reset the idle timer.
            idleTimer.Reset(idleTimeout)
        }

        // Ensure the idle timer is stopped to avoid leaking the resource.
        idleTimer.Stop()

        // Ensure connection is closed.
        p.Disconnect()

        close(p.inQuit)
        log.Tracef("Peer input handler done for %s", p)
    }

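Its main steps are:

- Set up an idleTimer with a timeout of 5 minutes. If no message is received from the Peer within 5 minutes, the node actively disconnects from it. As we will see when analyzing pingHandler, ping messages are sent to the Peer every 2 minutes, so a Pong reply should arrive within a little over 2 minutes at most (2min + RTT + the time the Peer takes to process the Ping, where RTT is usually on the order of milliseconds). If nothing has been received for 5 minutes, the Peer can be considered lost;

- Loop, reading and processing messages from the Peer. When a message arrives within 5 minutes, idleTimer is stopped for the time being. Note that once a message has been read, inHandler sends sccReceiveMessage to stallHandler over the stallControl channel, followed by sccHandlerStart. stallHandler uses these messages to measure how long the node spends receiving and processing messages, which we describe in detail when analyzing stallHandler;

- When processing a message from the Peer, inHandler may first handle it itself, as with MsgPing and MsgPong, or do nothing with it, as with MsgBlock, and then invokes the corresponding MessageListener callback;

- After processing a message, inHandler sends sccHandlerDone to stallHandler to signal that processing is complete. It also resets idleTimer so that it starts counting again, then waits for the next message;

- After Disconnect() is called to disconnect from the Peer, the read-and-process loop exits and the inHandler goroutine prepares to exit as well. Before exiting it stops idleTimer, calls Disconnect() once more to force the connection closed, and finally notifies stallHandler through the inQuit channel that it has exited.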

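The inHandler goroutine mainly receives messages and invokes the message-handling callbacks in MessageListener to process them. Note that a callback must not take too long to process a message, otherwise it will cause a timeout and a disconnect. outHandler mainly sends messages. Let's look at its code: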

    //btcd/peer/peer.go

    // outHandler handles all outgoing messages for the peer. It must be run as a
    // goroutine. It uses a buffered channel to serialize output messages while
    // allowing the sender to continue running asynchronously.
    func (p *Peer) outHandler() {
    out:
        for {
            select {
            case msg := <-p.sendQueue:
                switch m := msg.msg.(type) {
                case *wire.MsgPing:
                    // Only expects a pong message in later protocol
                    // versions. Also set up statistics.
                    if p.ProtocolVersion() > wire.BIP0031Version {
                        p.statsMtx.Lock()
                        p.lastPingNonce = m.Nonce
                        p.lastPingTime = time.Now()
                        p.statsMtx.Unlock()
                    }
                }

                p.stallControl <- stallControlMsg{sccSendMessage, msg.msg}
                if err := p.writeMessage(msg.msg); err != nil {
                    p.Disconnect()

                    ......

                    continue
                }

                ......

                p.sendDoneQueue <- struct{}{}

            case <-p.quit:
                break out
            }
        }

        <-p.queueQuit

        // Drain any wait channels before we go away so we don't leave something
        // waiting for us. We have waited on queueQuit and thus we can be sure
        // that we will not miss anything sent on sendQueue.
    cleanup:
        for {
            select {
            case msg := <-p.sendQueue:
                if msg.doneChan != nil {
                    msg.doneChan <- struct{}{}
                }
                // no need to send on sendDoneQueue since queueHandler
                // has been waited on and already exited.
            default:
                break cleanup
            }
        }
        close(p.outQuit)
        log.Tracef("Peer output handler done for %s", p)
    }

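As we can see, outHandler mainly loops taking messages off sendQueue and calls writeMessage() to send them to the Peer. Before sending a message, it sends sccSendMessage to stallHandler, telling it to start tracking whether the response to this message times out. After the message has been sent successfully, it notifies queueHandler through the sendDoneQueue channel to send the next one. Note that sendQueue is a channel with a buffer size of 1. It works together with sendDoneQueue to ensure that the messages submitted through outputQueue are sent one by one, in order. When the Peer disconnects, the receive on p.quit fires and the loop exits. Through the queueQuit synchronization, outHandler waits for queueHandler to exit before exiting itself, so that queueHandler can drain the messages in the send buffer. Finally, it notifies stallHandler of its exit through the outQuit channel.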
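To see why a one-slot sendQueue plus a sendDoneQueue acknowledgement preserves ordering, here is a reduced model of the handshake. It is a sketch under assumptions, not btcd code: sendInOrder is a hypothetical function, messages are plain strings, the "writer" goroutine merely records what it would have written, and the pending list anticipates the one queueHandler keeps.

```go
package main

import (
	"container/list"
	"fmt"
)

// sendInOrder pushes msgs through a muxer loop and a writer goroutine that
// talk over a one-slot sendQueue and a sendDoneQueue acknowledgement. It
// returns the order in which the writer saw the messages.
func sendInOrder(msgs []string) []string {
	outputQueue := make(chan string, len(msgs))
	sendQueue := make(chan string, 1)
	sendDoneQueue := make(chan struct{}, 1)
	written := make(chan []string)

	// Writer side: take one message, "write" it, acknowledge it.
	go func() {
		var out []string
		for m := range sendQueue {
			out = append(out, m)
			sendDoneQueue <- struct{}{}
		}
		written <- out
	}()

	for _, m := range msgs {
		outputQueue <- m
	}

	// Muxer side: at most one message is in flight at any time.
	pending := list.New()
	waiting := false
	for acked := 0; acked < len(msgs); {
		select {
		case m := <-outputQueue:
			if !waiting {
				sendQueue <- m
				waiting = true
			} else {
				pending.PushBack(m)
			}
		case <-sendDoneQueue:
			acked++
			next := pending.Front()
			if next == nil {
				waiting = false
				continue
			}
			sendQueue <- pending.Remove(next).(string)
		}
	}
	close(sendQueue)
	return <-written
}

func main() {
	fmt.Println(sendInOrder([]string{"version", "verack", "getaddr", "ping"}))
}
```

Because a new message is handed over only after the previous one has been acknowledged, neither side ever blocks on the one-slot channel, and the pending list keeps later messages in arrival order.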

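The queue of outgoing messages is maintained by queueHandler, which passes queued messages through sendQueue to outHandler to be sent to the Peer. queueHandler also has dedicated handling for sending Inventory. Let's look at its code: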

    //btcd/peer/peer.go

    // queueHandler handles the queuing of outgoing data for the peer. This runs as
    // a muxer for various sources of input so we can ensure that server and peer
    // handlers will not block on us sending a message. That data is then passed on
    // to outHandler to be actually written.
    func (p *Peer) queueHandler() {
        pendingMsgs := list.New()
        invSendQueue := list.New()
        trickleTicker := time.NewTicker(trickleTimeout)
        defer trickleTicker.Stop()

        // We keep the waiting flag so that we know if we have a message queued
        // to the outHandler or not. We could use the presence of a head of
        // the list for this but then we have rather racy concerns about whether
        // it has gotten it at cleanup time - and thus who sends on the
        // message's done channel. To avoid such confusion we keep a different
        // flag and pendingMsgs only contains messages that we have not yet
        // passed to outHandler.
        waiting := false

        // To avoid duplication below.
        queuePacket := func(msg outMsg, list *list.List, waiting bool) bool { // (1)
            if !waiting {
                p.sendQueue <- msg
            } else {
                list.PushBack(msg)
            }
            // we are always waiting now.
            return true
        }

    out:
        for { // (2)
            select {
            case msg := <-p.outputQueue: // (3)
                waiting = queuePacket(msg, pendingMsgs, waiting)

            // This channel is notified when a message has been sent across
            // the network socket.
            case <-p.sendDoneQueue: // (4)
                // No longer waiting if there are no more messages
                // in the pending messages queue.
                next := pendingMsgs.Front()
                if next == nil {
                    waiting = false
                    continue
                }

                // Notify the outHandler about the next item to
                // asynchronously send.
                val := pendingMsgs.Remove(next)
                p.sendQueue <- val.(outMsg)

            case iv := <-p.outputInvChan: // (5)
                // No handshake? They'll find out soon enough.
                if p.VersionKnown() {
                    invSendQueue.PushBack(iv)
                }

            case <-trickleTicker.C: // (6)
                // Don't send anything if we're disconnecting or there
                // is no queued inventory.
                // version is known if send queue has any entries.
                if atomic.LoadInt32(&p.disconnect) != 0 ||
                    invSendQueue.Len() == 0 {
                    continue
                }

                // Create and send as many inv messages as needed to
                // drain the inventory send queue.
                invMsg := wire.NewMsgInvSizeHint(uint(invSendQueue.Len()))
                for e := invSendQueue.Front(); e != nil; e = invSendQueue.Front() {
                    iv := invSendQueue.Remove(e).(*wire.InvVect)

                    // Don't send inventory that became known after
                    // the initial check.
                    if p.knownInventory.Exists(iv) { // (7)
                        continue
                    }

                    invMsg.AddInvVect(iv)
                    if len(invMsg.InvList) >= maxInvTrickleSize {
                        waiting = queuePacket( // (8)
                            outMsg{msg: invMsg},
                            pendingMsgs, waiting)
                        invMsg = wire.NewMsgInvSizeHint(uint(invSendQueue.Len()))
                    }

                    // Add the inventory that is being relayed to
                    // the known inventory for the peer.
                    p.AddKnownInventory(iv) // (9)
                }
                if len(invMsg.InvList) > 0 {
                    waiting = queuePacket(outMsg{msg: invMsg}, // (10)
                        pendingMsgs, waiting)
                }

            case <-p.quit:
                break out
            }
        }

        // Drain any wait channels before we go away so we don't leave something
        // waiting for us.
        for e := pendingMsgs.Front(); e != nil; e = pendingMsgs.Front() { // (11)
            val := pendingMsgs.Remove(e)
            msg := val.(outMsg)
            if msg.doneChan != nil {
                msg.doneChan <- struct{}{}
            }
        }
    cleanup:
        for { // (12)
            select {
            case msg := <-p.outputQueue:
                if msg.doneChan != nil {
                    msg.doneChan <- struct{}{}
                }
            case <-p.outputInvChan:
                // Just drain channel
            // sendDoneQueue is buffered so doesn't need draining.
            default:
                break cleanup
            }
        }
        close(p.queueQuit) // (13)
        log.Tracef("Peer queue handler done for %s", p)
    }

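The main steps in queueHandler() are:

- Marker (1) defines a function value whose main logic is: when a message to send is received from outputQueue, it is passed straight to outHandler through sendQueue if no message is currently in flight, and otherwise appended to the list passed in, which is pendingMsgs at every call site shown here;

- Marker (2) starts the loop that processes channel messages. Note that the select statement here has no default branch, so the loop blocks at the select whenever no channel has data;

- When there is a request to send a message, the sender writes to outputQueue. The receive at marker (3) fires and calls queuePacket(), which either forwards the message to outHandler immediately or caches it to wait its turn;

- When outHandler finishes sending a message, it writes to sendDoneQueue. The receive at marker (4) fires, and queueHandler takes the next message cached in pendingMsgs and sends it to outHandler;

- When Inventory needs to be sent, the sender writes to outputInvChan. The receive at marker (5) fires, and the Inventory to send is cached in invSendQueue;

- At marker (6), trickleTicker fires every 10s. It takes Inventory entries off invSendQueue one at a time and checks whether each has already been sent to the Peer, as shown at marker (7). New entries are added as Inventory Vectors to an inv message that is sent to the Peer. Note that marker (8) limits each inv message to at most 1000 Inventory Vectors. Beyond that limit, the Inventory in invSendQueue is split across multiple inv messages. Marker (9) records the Inventory that has been sent so that it is not sent again later, and marker (10) queues the final, partially filled inv message;

- When the Peer's Disconnect() is called, the receive on p.quit fires and the loop exits. Marker (11) then drains the pending messages in pendingMsgs, marker (12) drains the messages left in the channels, and marker (13) notifies outHandler through the queueQuit channel that it may exit.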
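The batching performed on each trickle tick (markers (6) through (10)) can be modelled with plain slices. This is an illustrative sketch, not btcd's implementation: batchInventory is a hypothetical function, inventory entries are strings, and a map stands in for the Peer's known-inventory cache.

```go
package main

import "fmt"

// batchInventory drains queued, skips entries the peer already knows, and
// groups the rest into batches of at most maxPerMsg entries. Every relayed
// entry is recorded in known so it is not relayed again later.
func batchInventory(queued []string, known map[string]bool, maxPerMsg int) [][]string {
	var batches [][]string
	var current []string
	for _, iv := range queued {
		// Skip inventory the peer is already known to have.
		if known[iv] {
			continue
		}
		current = append(current, iv)
		known[iv] = true
		// A full batch corresponds to one inv message.
		if len(current) >= maxPerMsg {
			batches = append(batches, current)
			current = nil
		}
	}
	// Flush the final, partially filled batch.
	if len(current) > 0 {
		batches = append(batches, current)
	}
	return batches
}

func main() {
	known := map[string]bool{"tx-b": true}
	queued := []string{"tx-a", "tx-b", "tx-c", "tx-d", "tx-e"}
	for i, b := range batchInventory(queued, known, 2) {
		fmt.Println("inv message", i, b)
	}
}
```

In the article the limit is 1000 entries per inv message. The example uses 2 only to keep the output short.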

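Using the two buffered channels outputQueue and outputInvChan together with the two Lists pendingMsgs and invSendQueue, queueHandler() implements the outgoing message queue. In addition, the channel sendQueue, with a buffer size of 1, ensures that pending messages are sent serially and in order. inHandler, outHandler, and queueHandler run in separate goroutines, which makes sending and receiving asynchronous. However, as we saw in inHandler, received messages are also processed serially, one at a time. Without timeout control, if the send queue held a large number of pending messages during some period and inHandler took so long processing certain messages that subsequent messages could not be read, message exchange between Peers would become severely "congested". To prevent this, stallHandler implements timeout handling: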

    //btcd/peer/peer.go

    // stallHandler handles stall detection for the peer. This entails keeping
    // track of expected responses and assigning them deadlines while accounting for
    // the time spent in callbacks. It must be run as a goroutine.
    func (p *Peer) stallHandler() {
        // These variables are used to adjust the deadline times forward by the
        // time it takes callbacks to execute. This is done because new
        // messages aren't read until the previous one is finished processing
        // (which includes callbacks), so the deadline for receiving a response
        // for a given message must account for the processing time as well.
        var handlerActive bool
        var handlersStartTime time.Time
        var deadlineOffset time.Duration

        // pendingResponses tracks the expected response deadline times.
        pendingResponses := make(map[string]time.Time)

        // stallTicker is used to periodically check pending responses that have
        // exceeded the expected deadline and disconnect the peer due to
        // stalling.
        stallTicker := time.NewTicker(stallTickInterval)
        defer stallTicker.Stop()

        // ioStopped is used to detect when both the input and output handler
        // goroutines are done.
        var ioStopped bool

    out:
        for {
            select {
            case msg := <-p.stallControl:
                switch msg.command {
                case sccSendMessage: // (1)
                    // Add a deadline for the expected response
                    // message if needed.
                    p.maybeAddDeadline(pendingResponses,
                        msg.message.Command())

                case sccReceiveMessage: // (2)
                    // Remove received messages from the expected
                    // response map. Since certain commands expect
                    // one of a group of responses, remove
                    // everything in the expected group accordingly.
                    switch msgCmd := msg.message.Command(); msgCmd {
                    case wire.CmdBlock:
                        fallthrough
                    case wire.CmdMerkleBlock:
                        fallthrough
                    case wire.CmdTx:
                        fallthrough
                    case wire.CmdNotFound:
                        delete(pendingResponses, wire.CmdBlock)
                        delete(pendingResponses, wire.CmdMerkleBlock)
                        delete(pendingResponses, wire.CmdTx)
                        delete(pendingResponses, wire.CmdNotFound)

                    default:
                        delete(pendingResponses, msgCmd) // (3)
                    }

                case sccHandlerStart: // (4)
                    // Warn on unbalanced callback signalling.
                    if handlerActive {
                        log.Warn("Received handler start " +
                            "control command while a " +
                            "handler is already active")
                        continue
                    }

                    handlerActive = true
                    handlersStartTime = time.Now()

                case sccHandlerDone: // (5)
                    // Warn on unbalanced callback signalling.
                    if !handlerActive {
                        log.Warn("Received handler done " +
                            "control command when a " +
                            "handler is not already active")
                        continue
                    }

                    // Extend active deadlines by the time it took
                    // to execute the callback.
                    duration := time.Since(handlersStartTime)
                    deadlineOffset += duration
                    handlerActive = false

                default:
                    log.Warnf("Unsupported message command %v",
                        msg.command)
                }

            case <-stallTicker.C: // (6)
                // Calculate the offset to apply to the deadline based
                // on how long the handlers have taken to execute since
                // the last tick.
                now := time.Now()
                offset := deadlineOffset
                if handlerActive {
                    offset += now.Sub(handlersStartTime) // (7)
                }

                // Disconnect the peer if any of the pending responses
                // don't arrive by their adjusted deadline.
                for command, deadline := range pendingResponses {
                    if now.Before(deadline.Add(offset)) { // (8)
                        continue
                    }

                    log.Debugf("Peer %s appears to be stalled or "+
                        "misbehaving, %s timeout -- "+
                        "disconnecting", p, command)
                    p.Disconnect()
                    break
                }

                // Reset the deadline offset for the next tick.
                deadlineOffset = 0

            case <-p.inQuit: // (9)
                // The stall handler can exit once both the input and
                // output handler goroutines are done.
                if ioStopped {
                    break out
                }
                ioStopped = true

            case <-p.outQuit: // (10)
                // The stall handler can exit once both the input and
                // output handler goroutines are done.
                if ioStopped {
                    break out
                }
                ioStopped = true
            }
        }

        // Drain any wait channels before going away so there is nothing left
        // waiting on this goroutine.
    cleanup:
        for { // (11)
            select {
            case <-p.stallControl:
            default:
                break cleanup
            }
        }
        log.Tracef("Peer stall handler done for %s", p)
    }

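The main logic is:

- On receiving sccSendMessage from outHandler, it sets a deadline by which the response to the message being sent must arrive and stores it in pendingResponses, as shown at marker (1);

- On receiving sccReceiveMessage from inHandler, if the message is a response, the corresponding command and its deadline are removed from pendingResponses, and that response no longer needs to be tracked for timeout, as shown at markers (2) and (3). Note that requests and responses are matched only by message command or type, not strictly by a sequence number or request ID. This is partly because the node serializes both sending and receiving, and partly because message exchange between Peers is not very frequent once a node has synced to the latest block;

- On receiving sccHandlerStart from inHandler (marker (4)), inHandler has started processing a received message. To prevent the next response from timing out because the current message takes too long to process, stallHandler measures the processing time of the current message between sccHandlerStart and sccHandlerDone and takes it into account when checking whether the next response has timed out;

- At marker (5), receiving sccHandlerDone from inHandler means the current message has been fully processed. Subtracting the time at which processing started from the current time gives the time spent processing the message, which is added to deadlineOffset. This offset is used to adjust the timeout threshold for the next response;

- At marker (6), stallTicker fires every 15s to periodically check whether any response has timed out. If one has, the node actively disconnects from the Peer. If a received message is being processed, or has just finished processing, between the current check and the previous one, the timeout threshold is extended by that message's processing time, as shown at markers (7) and (8), so that the next response does not time out merely because the previous message took long to process. However, if processing one message takes so long that more than one response is delayed in being read and processed, the responses after the next one will probably still time out, so time-consuming operations should be avoided in the callbacks that handle received messages. High network latency that makes inHandler wait too long for the next response will also cause a timeout;

- Markers (9) and (10) ensure that stallHandler ends its processing loop and prepares to exit only after both inHandler and outHandler have exited;

- At marker (11), stallHandler drains the stallControl channel and finally exits.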

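stallHandler tracks sent messages and their corresponding responses, checks every 15s whether any response has timed out, and compensates for the effect that processing the current message has on the timeout check for the next response. When a timeout occurs it actively disconnects from the Peer so that another Peer can be chosen for syncing, which keeps network latency or slow processing from hurting block synchronization efficiency. Of course, to keep the connection with a Peer alive, the current node and the Peer exchange Ping/Pong heartbeats periodically. Sending Ping messages is handled by pingHandler, and the Peer replies with a Pong message upon receiving one.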

    //btcd/peer/peer.go

    // pingHandler periodically pings the peer. It must be run as a goroutine.
    func (p *Peer) pingHandler() {
        pingTicker := time.NewTicker(pingInterval)
        defer pingTicker.Stop()

    out:
        for {
            select {
            case <-pingTicker.C:
                nonce, err := wire.RandomUint64()
                if err != nil {
                    log.Errorf("Not sending ping to %s: %v", p, err)
                    continue
                }
                p.QueueMessage(wire.NewMsgPing(nonce), nil)

            case <-p.quit:
                break out
            }
        }
    }

The logic of pingHandler is relatively simple: it sends a Ping message to the Peer every 2 minutes, and exits when p.quit is closed.

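At this point we have gone through the execution of all 5 Handlers, or goroutines, which form the framework for sending and receiving messages between Peers. However, we have not yet covered who actually sends a message out or where it is read from. To find out, let's look at Peer's readMessage and writeMessage methods: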

    //btcd/peer/peer.go

    // readMessage reads the next bitcoin message from the peer with logging.
    func (p *Peer) readMessage() (wire.Message, []byte, error) {
        n, msg, buf, err := wire.ReadMessageN(p.conn, p.ProtocolVersion(),
            p.cfg.ChainParams.Net)
        atomic.AddUint64(&p.bytesReceived, uint64(n))
        if p.cfg.Listeners.OnRead != nil {
            p.cfg.Listeners.OnRead(p, n, msg, err)
        }
        if err != nil {
            return nil, nil, err
        }

        ......

        return msg, buf, nil
    }

    // writeMessage sends a bitcoin message to the peer with logging.
    func (p *Peer) writeMessage(msg wire.Message) error {
        // Don't do anything if we're disconnecting.
        if atomic.LoadInt32(&p.disconnect) != 0 {
            return nil
        }

        ......

        // Write the message to the peer.
        n, err := wire.WriteMessageN(p.conn, msg, p.ProtocolVersion(),
            p.cfg.ChainParams.Net)
        atomic.AddUint64(&p.bytesSent, uint64(n))
        if p.cfg.Listeners.OnWrite != nil {
            p.cfg.Listeners.OnWrite(p, n, msg, err)
        }
        return err
    }

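As we can see, the actual sending and receiving is done by wire's ReadMessageN() and WriteMessageN(). We do not analyze them here and will cover them in a later article on btcd/wire. Ultimately, sending and receiving messages means reading or writing p.conn, which is a net.Conn, so all message traffic is reads and writes on the network connection between Peers. p.conn is initialized in Peer's AssociateConnection() method, which is called after connMgr has successfully established the TCP connection between the Peers.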

    // AssociateConnection associates the given conn to the peer. Calling this
    // function when the peer is already connected will have no effect.
    func (p *Peer) AssociateConnection(conn net.Conn) {
        // Already connected?
        if !atomic.CompareAndSwapInt32(&p.connected, 0, 1) {
            return
        }

        p.conn = conn
        p.timeConnected = time.Now()

        ......

        go func() {
            if err := p.start(); err != nil {
                log.Debugf("Cannot start peer %v: %v", p, err)
                p.Disconnect()
            }
        }()
    }

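We now have the full picture of how a Peer sends and receives messages. Its basic mechanism is shown in the figure below:

As we can see, Peers can only exchange messages once a network connection has been successfully established. So how do Peers establish and maintain the TCP connections between them? We will cover that in the next article, 《Btcd区块在P2P网络上的传播之ConnMgr》.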