各种聚类算法(原理+代码+对比分析)最全总结

序言

还是要持续总结,持续积累。

一、聚类的目标

使同一类对象的相似度尽可能地大;不同类对象之间的相似度尽可能地小。

二、聚类算法分类

1.基于划分

给定一个有N个元组或者纪录的数据集,分裂法将构造K个分组,每一个分组就代表一个聚类,K<N。

特点:计算量大。很适合发现中小规模的数据库中小规模的数据库中的球状簇。

算法:K-MEANS算法、K-MEDOIDS算法、CLARANS算法

2.基于层次

对给定的数据集进行层次似的分解,直到某种条件满足为止。具体又可分为“自底向上”和“自顶向下”两种方案。

特点:较小的计算开销。然而这种技术不能更正错误的决定。

算法:BIRCH算法、CURE算法、CHAMELEON算法

3.基于密度

只要一个区域中的点的密度大过某个阈值,就把它加到与之相近的聚类中去。

特点:能克服基于距离的算法只能发现“类圆形”的聚类的缺点。

算法:DBSCAN算法、OPTICS算法、DENCLUE算法

4.基于网格

将数据空间划分成为有限个单元(cell)的网格结构,所有的处理都是以单个的单元为对象的。

特点:处理速度很快,通常这是与目标数据库中记录的个数无关的,只与把数据空间分为多少个单元有关。

算法:STING算法、CLIQUE算法、WAVE-CLUSTER算法

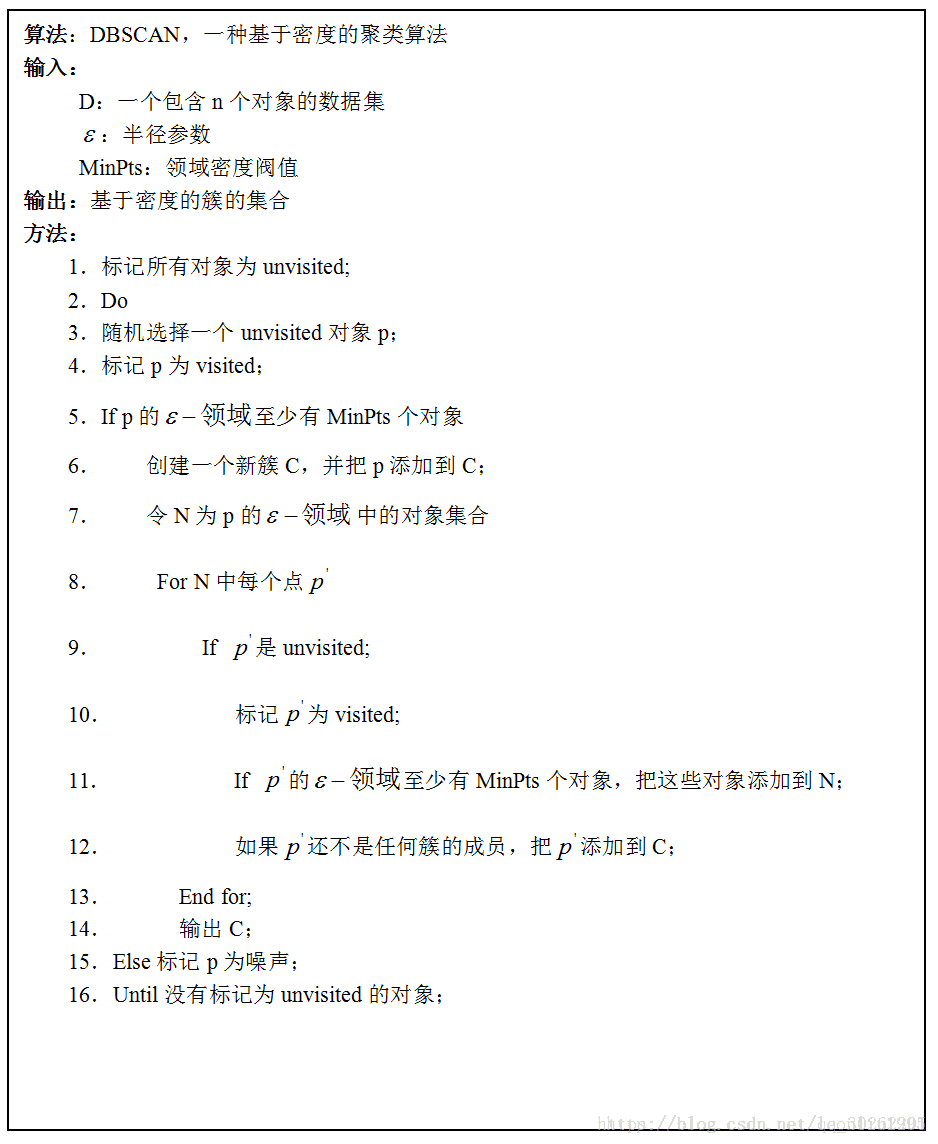

三、DBscan聚类

1.算法原理

DBSCAN(Density-Based Spatial Clustering of Application with Noise)是一种典型的基于密度的聚类算法,在DBSCAN算法中将数据点分为一下三类:

核心点:在半径Eps内含有超过MinPts数目的点

边界点:在半径Eps内点的数量小于MinPts,但是落在核心点的邻域内

噪音点:既不是核心点也不是边界点的点

在这里有两个量,一个是半径Eps,另一个是指定的数目MinPts

2.代码

# encoding=utf-8

import numpy as np

from sklearn.cluster import DBSCAN

from sklearn import metrics

from sklearn.datasets.samples_generator import make_blobs

from sklearn.preprocessing import StandardScaler

import matplotlib.pyplot as plt

class DBScan (object):

"""

the class inherits from object, encapsulate the DBscan algorithm

"""

def __init__(self, p, l_stauts):

self.point = p

self.labels_stats = l_stauts

self.db = DBSCAN(eps=0.2, min_samples=10).fit(self.point)

def draw(self):

coreSamplesMask = np.zeros_like(self.db.labels_, dtype=bool)

coreSamplesMask[self.db.core_sample_indices_] = True

labels = self.db.labels_

nclusters = jiangzao(labels)

# 输出模型评估参数,包括估计的集群数量、均匀度、完整性、V度量、

# 调整后的兰德指数、调整后的互信息量、轮廓系数

print('Estimated number of clusters: %d' % nclusters)

print("Homogeneity: %0.3f" % metrics.homogeneity_score(self.labels_stats, labels))

print("Completeness: %0.3f" % metrics.completeness_score(self.labels_stats, labels))

print("V-measure: %0.3f" % metrics.v_measure_score(self.labels_stats, labels))

print("Adjusted Rand Index: %0.3f"

% metrics.adjusted_rand_score(self.labels_stats, labels))

print("Adjusted Mutual Information: %0.3f"

% metrics.adjusted_mutual_info_score(self.labels_stats, labels))

print("Silhouette Coefficient: %0.3f"

% metrics.silhouette_score(self.point, labels))

# 绘制结果

# 黑色被移除,并被标记为噪音。

unique_labels = set(labels)

colors = plt.cm.Spectral(np.linspace(0, 1, len(unique_labels)))

for k, col in zip(unique_labels, colors):

if k == -1:

# 黑色用于噪声

col = 'k'

classMemberMask = (labels == k)

# 画出分类点集

xy = self.point[classMemberMask & coreSamplesMask]

plt.plot(xy[:, 0], xy[:, 1], 'o', markerfacecolor=col,

markeredgecolor='k', markersize=6)

# 画出噪声点集

xy = self.point[classMemberMask & ~coreSamplesMask]

plt.plot(xy[:, 0], xy[:, 1], 'o', markerfacecolor=col,

markeredgecolor='k', markersize=3)

# 加标题,显示分类数

plt.title('Estimated number of clusters: %d' % nclusters)

plt.show()

def jiangzao (labels):

# 标签中的簇数,忽略噪声(如果存在)

clusters = len(set(labels)) - (1 if -1 in labels else 0)

return clusters

def standar_scaler(points):

p = StandardScaler().fit_transform(points)

return p

if __name__ == "__main__":

"""

test class dbScan

"""

centers = [[1, 1], [-1, -1], [-1, 1], [1, -1]]

point, labelsTrue = make_blobs(n_samples=2000, centers=centers, cluster_std=0.4,

random_state=0)

point = standar_scaler(point)

db = DBScan(point, labelsTrue)

db.draw()3.图形输出

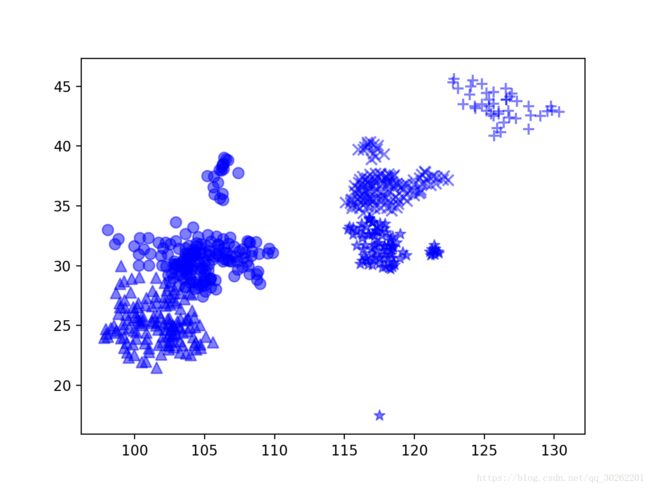

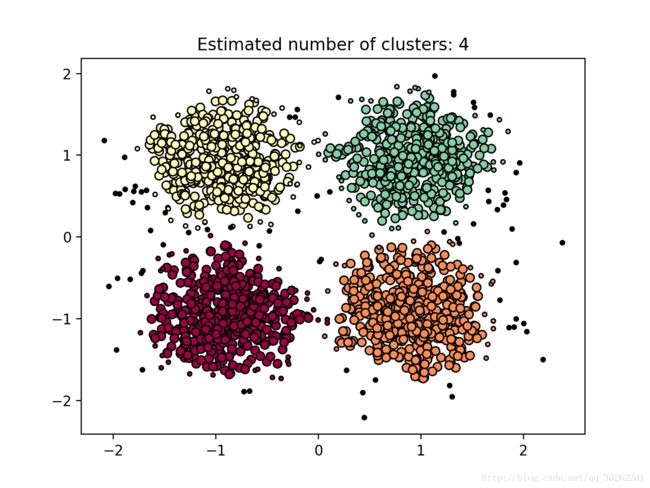

如图算法自动将数据集分成了4簇,用四种颜色代表。每一簇内较大的点代表核心对象,较小的点代表边界点(与簇内其他点密度相连,但是自身不是核心对象)。黑色的点代表离群点或者叫噪声点。

4.控制台输出

Estimated number of clusters: 4

Homogeneity: 0.928

Completeness: 0.862

V-measure: 0.894

Adjusted Rand Index: 0.928

Adjusted Mutual Information: 0.862

Silhouette Coefficient: 0.584四、K-means聚类

1.算法原理

2.代码

#coding=utf-8

import numpy as np

import matplotlib.pyplot as plt

from sklearn.cluster import KMeans

#从磁盘读取城市经纬度数据

X = []

f = open('city.txt')

for v in f:

X.append([float(v.split(',')[1]), float(v.split(',')[2])])

#转换成numpy array

X = np.array(X)

#类簇的数量

n_clusters = 5

#现在把数据和对应的分类书放入聚类函数中进行聚类

cls = KMeans(n_clusters).fit(X)

#X中每项所属分类的一个列表

cls.labels_

#画图

markers = ['^', 'x', 'o', '*', '+']

for i in range(n_clusters):

members = cls.labels_ == i

plt.scatter(X[members, 0], X[members, 1], s=60, marker=markers[i], c='b', alpha=0.5)

plt.title(' ')

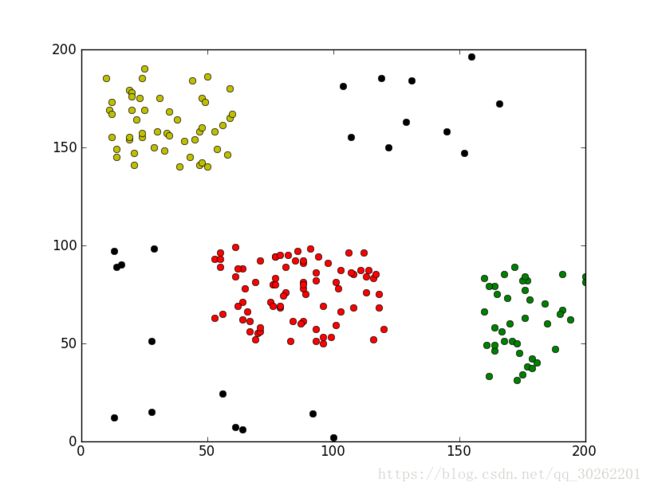

plt.show()3.图像输出

五、层次聚类

1.算法简介

凝聚层次聚类:所谓凝聚的,指的是该算法初始时,将每个点作为一个簇,每一步合并两个最接近的簇。另外即使到最后,对于噪音点或是离群点也往往还是各占一簇的,除非过度合并。对于这里的“最接近”,有下面三种定义。我在实现是使用了MIN,该方法在合并时,只要依次取当前最近的点对,如果这个点对当前不在一个簇中,将所在的两个簇合并就行:

- 单链(MIN):定义簇的邻近度为不同两个簇的两个最近的点之间的距离。

- 全链(MAX):定义簇的邻近度为不同两个簇的两个最远的点之间的距离。

- 组平均:定义簇的邻近度为取自两个不同簇的所有点对邻近度的平均值。

2.代码

# scoding=utf-8

# Agglomerative Hierarchical Clustering(AHC)

import pylab as pl

from operator import itemgetter

from collections import OrderedDict,Counter

points = [[int(eachpoint.split('#')[0]), int(eachpoint.split('#')[1])] for eachpoint in open("points","r")]

# 初始时每个点指派为单独一簇

groups = [idx for idx in range(len(points))]

# 计算每个点对之间的距离

disP2P = {}

for idx1,point1 in enumerate(points):

for idx2,point2 in enumerate(points):

if (idx1 < idx2):

distance = pow(abs(point1[0]-point2[0]),2) + pow(abs(point1[1]-point2[1]),2)

disP2P[str(idx1)+"#"+str(idx2)] = distance

# 按距离降序将各个点对排序

disP2P = OrderedDict(sorted(disP2P.iteritems(), key=itemgetter(1), reverse=True))

# 当前有的簇个数

groupNum = len(groups)

# 过分合并会带入噪音点的影响,当簇数减为finalGroupNum时,停止合并

finalGroupNum = int(groupNum*0.1)

while groupNum > finalGroupNum:

# 选取下一个距离最近的点对

twopoins,distance = disP2P.popitem()

pointA = int(twopoins.split('#')[0])

pointB = int(twopoins.split('#')[1])

pointAGroup = groups[pointA]

pointBGroup = groups[pointB]

# 当前距离最近两点若不在同一簇中,将点B所在的簇中的所有点合并到点A所在的簇中,此时当前簇数减1

if(pointAGroup != pointBGroup):

for idx in range(len(groups)):

if groups[idx] == pointBGroup:

groups[idx] = pointAGroup

groupNum -= 1

# 选取规模最大的3个簇,其他簇归为噪音点

wantGroupNum = 3

finalGroup = Counter(groups).most_common(wantGroupNum)

finalGroup = [onecount[0] for onecount in finalGroup]

dropPoints = [points[idx] for idx in range(len(points)) if groups[idx] not in finalGroup]

# 打印规模最大的3个簇中的点

group1 = [points[idx] for idx in range(len(points)) if groups[idx]==finalGroup[0]]

group2 = [points[idx] for idx in range(len(points)) if groups[idx]==finalGroup[1]]

group3 = [points[idx] for idx in range(len(points)) if groups[idx]==finalGroup[2]]

pl.plot([eachpoint[0] for eachpoint in group1], [eachpoint[1] for eachpoint in group1], 'or')

pl.plot([eachpoint[0] for eachpoint in group2], [eachpoint[1] for eachpoint in group2], 'oy')

pl.plot([eachpoint[0] for eachpoint in group3], [eachpoint[1] for eachpoint in group3], 'og')

# 打印噪音点,黑色

pl.plot([eachpoint[0] for eachpoint in dropPoints], [eachpoint[1] for eachpoint in dropPoints], 'ok')

pl.show()