Learning Hadoop: Building a Search Engine with a Web Crawler, Word Segmentation, and an Inverted Index

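This project works as follows. First, write your own web crawler that scrapes news headlines from Sohu (or blog titles from CSDN). Then upload each headline together with its link to HDFS, one file per headline and link. Next, segment the title in each file into words, and build an inverted index from the segmented words to provide the core function of a search engine. If you are not familiar with building an inverted index, see my previous blog post: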

Hadoop–Inverted Index Construction Explained (Hadoop–倒排索引过程详解)
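To make the "search engine" part concrete: once the indexing job later in this post has produced its output, answering a query is just a lookup in that index. The following is a minimal sketch that is not part of the original project. The class `IndexQuery`, its methods, and the ranking rule (AND query, scored by summed term frequency) are hypothetical choices, and it assumes each index line has the form `word<TAB>url1:count1;url2:count2;` that the reducer writes.

```java
import java.util.*;

/**
 * Hypothetical query helper over the inverted-index output (not the author's code).
 * Assumes each line looks like: word \t url1:count1;url2:count2;
 */
final class IndexQuery {

    /** Parses index lines into word -> (url -> count). */
    static Map<String, Map<String, Integer>> parse(List<String> lines) {
        Map<String, Map<String, Integer>> index = new HashMap<>();
        for (String line : lines) {
            int tab = line.indexOf('\t');
            if (tab < 0) continue; // skip malformed lines
            Map<String, Integer> postings = new LinkedHashMap<>();
            for (String entry : line.substring(tab + 1).split(";")) {
                int colon = entry.lastIndexOf(':'); // URLs contain ':' too, so split at the last one
                if (entry.isEmpty() || colon < 0) continue;
                postings.merge(entry.substring(0, colon),
                        Integer.parseInt(entry.substring(colon + 1)), Integer::sum);
            }
            index.put(line.substring(0, tab), postings);
        }
        return index;
    }

    /** AND query: URLs containing every term, highest summed frequency first, ties alphabetical. */
    static List<String> search(Map<String, Map<String, Integer>> index, List<String> terms) {
        Map<String, Integer> scores = null;
        for (String term : terms) {
            Map<String, Integer> postings = index.getOrDefault(term, Collections.<String, Integer>emptyMap());
            if (scores == null) {
                scores = new HashMap<>(postings);
            } else {
                scores.keySet().retainAll(postings.keySet()); // keep URLs that contain this term too
                for (Map.Entry<String, Integer> e : scores.entrySet()) {
                    e.setValue(e.getValue() + postings.get(e.getKey()));
                }
            }
        }
        if (scores == null) return new ArrayList<>();
        final Map<String, Integer> s = scores;
        List<String> urls = new ArrayList<>(s.keySet());
        urls.sort((a, b) -> {
            int c = Integer.compare(s.get(b), s.get(a));
            return c != 0 ? c : a.compareTo(b);
        });
        return urls;
    }
}
```

Reading the reducer's `part-r-*` files line by line and passing them to `parse` is enough to try it out; a real engine would also segment the query string with the same analyzer used at index time.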

First, write your own web crawler.

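When I first wrote the crawler I used htmlparser, pushed every link it found onto a queue, and kept following those links deeper ("vertical" crawling). That was far too much work: after an hour of crawling it still had not finished, and my computer froze. So I have now removed the vertical crawling and only crawl the news article links on Sohu's homepage.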
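The blow-up described above is typical of an unbounded crawl: every page fetched adds more links to the queue. A common remedy is to remember visited URLs and to cap both the link depth and the total number of pages. Below is a minimal breadth-first sketch of that idea, not the author's code; `BoundedCrawler`, `linksOf` (a stand-in for "download the page and extract its links"), `maxDepth`, and `maxPages` are all hypothetical names.

```java
import java.util.*;
import java.util.function.Function;

/**
 * Hypothetical bounded breadth-first crawl frontier.
 * linksOf stands in for "download this page and extract its links".
 */
final class BoundedCrawler {

    /** Returns the URLs in the order they were crawled. */
    static List<String> crawl(String seed, Function<String, List<String>> linksOf,
                              int maxDepth, int maxPages) {
        List<String> crawled = new ArrayList<>();
        Set<String> seen = new HashSet<>();          // URLs already queued or crawled
        Map<String, Integer> depth = new HashMap<>(); // link distance from the seed
        Deque<String> queue = new ArrayDeque<>();
        queue.add(seed);
        seen.add(seed);
        depth.put(seed, 0);
        while (!queue.isEmpty() && crawled.size() < maxPages) {
            String url = queue.poll();
            crawled.add(url);
            int d = depth.get(url);
            if (d == maxDepth) continue; // do not expand links beyond the depth limit
            for (String next : linksOf.apply(url)) {
                if (seen.add(next)) {    // add() returns false for duplicates
                    depth.put(next, d + 1);
                    queue.add(next);
                }
            }
        }
        return crawled;
    }
}
```

With `maxDepth = 1`, only the seed page and the links found directly on it are crawled, which corresponds to the homepage-only approach the author settled on.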

Enough talk; here is the code.

First, the download utility class (see the comments for explanations):

```java
package com.yc.spider;

import java.io.BufferedInputStream;
import java.io.IOException;
import java.net.HttpURLConnection;
import java.net.URL;
import java.util.Scanner;

/**
 * Download utility class
 *
 * @author 汤高
 */
public class DownLoadTool {

    /**
     * Downloads the content of a page, i.e. the complete HTML at the given address.
     *
     * @param addr page address; "http://" is prepended when no scheme is given
     * @return the page HTML, or an empty string if the download fails
     */
    public String downLoadUrl(final String addr) {
        StringBuilder sb = new StringBuilder();
        HttpURLConnection con = null;
        try {
            // Prepend the scheme if it is missing
            String urladdr = (addr.startsWith("http://") || addr.startsWith("https://"))
                    ? addr : "http://" + addr;
            System.out.println(urladdr);
            URL url = new URL(urladdr);
            con = (HttpURLConnection) url.openConnection();
            con.setConnectTimeout(5000);
            con.setReadTimeout(5000);
            con.connect();
            if (con.getResponseCode() == HttpURLConnection.HTTP_OK) {
                // Decode the page as GBK; try-with-resources closes the stream
                try (Scanner sc = new Scanner(new BufferedInputStream(con.getInputStream()), "gbk")) {
                    while (sc.hasNextLine()) {
                        // Join lines with a space so tags split across lines still match
                        // the link regex, while the result stays on a single line
                        sb.append(sc.nextLine()).append(' ');
                    }
                }
            }
        } catch (IOException e) {
            e.printStackTrace();
        } finally {
            if (con != null) {
                con.disconnect();
            }
        }
        return sb.toString();
    }
}
```
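`downLoadUrl` decodes every page as GBK. If you crawl sites that use other encodings, one option is to take the charset from the `Content-Type` response header instead. A minimal sketch, assuming a hypothetical helper `CharsetUtil.charsetOf` that falls back to a default (here GBK) when the header carries no charset:

```java
import java.util.Locale;

/** Hypothetical helper: reads the charset parameter of a Content-Type header. */
final class CharsetUtil {

    /** e.g. "text/html; charset=GBK" -> "GBK"; returns defaultCharset if none is present. */
    static String charsetOf(String contentType, String defaultCharset) {
        if (contentType == null) return defaultCharset;
        for (String part : contentType.split(";")) {
            String p = part.trim();
            if (p.toLowerCase(Locale.ROOT).startsWith("charset=")) {
                String cs = p.substring("charset=".length()).trim();
                // Strip optional quotes, as in charset="utf-8"
                if (cs.length() >= 2 && cs.startsWith("\"") && cs.endsWith("\"")) {
                    cs = cs.substring(1, cs.length() - 1);
                }
                if (!cs.isEmpty()) return cs;
            }
        }
        return defaultCharset;
    }
}
```

Inside `downLoadUrl` it could be used as `new Scanner(con.getInputStream(), CharsetUtil.charsetOf(con.getContentType(), "gbk"))`. Pages that declare their encoding only in a `<meta>` tag would still need the fallback.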

Next, the class that matches article links:

```java
package com.yc.spider;

import java.util.HashSet;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/**
 * Article download class
 *
 * @author 汤高
 */
public class ArticleDownLoad {

    /**
     * Extracts the href and the link text of article <a> tags:
     * group(1) = absolute http(s) URL, group(2) = anchor text (the headline)
     */
    static final String ARTICLE_URL = "<a[^<>]*\\s+href=\"?(http[^<>\"]*)\"[^<>]*>([^<]*)";

    // Compile once and reuse for every page
    private static final Pattern ARTICLE_PATTERN = Pattern.compile(ARTICLE_URL, Pattern.CASE_INSENSITIVE);

    /** Despite its name, this method collects article links as "url\ttitle" strings. */
    static Set<String> getImageLink(String html) {
        Set<String> result = new HashSet<>();
        Matcher matcher = ARTICLE_PATTERN.matcher(html);
        while (matcher.find()) {
            String title = matcher.group(2).trim();
            if (title.isEmpty()) {
                continue; // skip image links and other links without text
            }
            System.out.println("=======" + matcher.group(1) + "\t" + title);
            result.add(matcher.group(1) + "\t" + title);
        }
        return result;
    }
}
```
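To check what such a link pattern actually captures, it helps to run it offline on a small HTML snippet. The sketch below uses a slightly stricter, hypothetical pattern (it requires a quoted `href` and a closing `</a>`) rather than the article's `ARTICLE_URL`, and keeps only links with non-blank anchor text; `LinkExtractor` is an illustrative name.

```java
import java.util.*;
import java.util.regex.*;

/** Hypothetical link extractor used to test a link regex on sample HTML. */
final class LinkExtractor {

    // Assumed pattern: <a ... href="http..." ...>anchor text</a>
    private static final Pattern LINK = Pattern.compile(
            "<a\\s[^>]*?href=\"(http[^\"]*)\"[^>]*>([^<]*)</a>", Pattern.CASE_INSENSITIVE);

    /** Returns "url\ttitle" strings in document order, skipping blank anchor text. */
    static Set<String> extract(String html) {
        Set<String> result = new LinkedHashSet<>();
        Matcher m = LINK.matcher(html);
        while (m.find()) {
            String title = m.group(2).trim();
            if (!title.isEmpty()) {
                result.add(m.group(1) + "\t" + title);
            }
        }
        return result;
    }
}
```

Relative links such as `href="/news/1.shtml"` are not matched by either pattern; resolving them against the page URL (for example with `java.net.URI.resolve`) would be the next step if you need them.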

Next, the crawler class:

```java
package com.yc.spider;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;
import java.nio.charset.StandardCharsets;
import java.util.Set;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;

/**
 * Uploads the crawled content to HDFS
 *
 * @author 汤高
 */
public class Spider {

    private DownLoadTool dlt = new DownLoadTool();

    public void crawling(String url) {
        Configuration conf = new Configuration();
        try (FileSystem hdfs = FileSystem.get(new URI("hdfs://192.168.52.140:9000"), conf)) {
            String html = dlt.downLoadUrl(url);
            Set<String> allneed = ArticleDownLoad.getImageLink(html);
            long batch = System.currentTimeMillis();
            int i = 0;
            for (String addr : allneed) {
                // Build the file path: one file per "url\ttitle" record. The counter keeps
                // names unique even when several files are created in the same millisecond.
                Path p1 = new Path("/myspider/" + batch + "_" + (i++));
                // try-with-resources closes each file, which flushes its data to HDFS
                try (FSDataOutputStream dos = hdfs.create(p1)) {
                    // Write UTF-8, the encoding Hadoop's Text type expects
                    dos.write((addr + "\n").getBytes(StandardCharsets.UTF_8));
                }
            }
        } catch (IllegalArgumentException | URISyntaxException | IOException e) {
            e.printStackTrace();
        }
    }
}
```
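Each HDFS file holds a single line of the form `url<TAB>title`. That layout fits `KeyValueTextInputFormat`, which the indexing job below uses: it splits each line at the first separator (a tab by default, configurable through `mapreduce.input.keyvaluelinerecordreader.key.value.separator`), so the mapper receives the URL as its key and the title as its value; a line without a separator becomes the key with an empty value. The helper below only mimics that split for offline testing. It is a sketch, not Hadoop's code, and `KeyValueSplit` is a hypothetical name.

```java
/** Hypothetical helper mimicking how KeyValueTextInputFormat splits a line. */
final class KeyValueSplit {

    /** Returns {key, value}: split at the first separator, or {line, ""} if there is none. */
    static String[] split(String line, char separator) {
        int pos = line.indexOf(separator);
        if (pos < 0) {
            return new String[] {line, ""};
        }
        return new String[] {line.substring(0, pos), line.substring(pos + 1)};
    }
}
```

Because only the first tab counts, a title that itself contains a tab still ends up entirely in the value.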

Finally, a test class that runs the crawl:

```java
package com.yc.spider;

/**
 * Test class: crawls Sohu's homepage and uploads the headlines to HDFS
 */
public class Test {

    public static void main(String[] args) {
        Spider s = new Spider();
        // s.crawling("http://blog.csdn.net");
        s.crawling("http://www.sohu.com");
    }
}
```

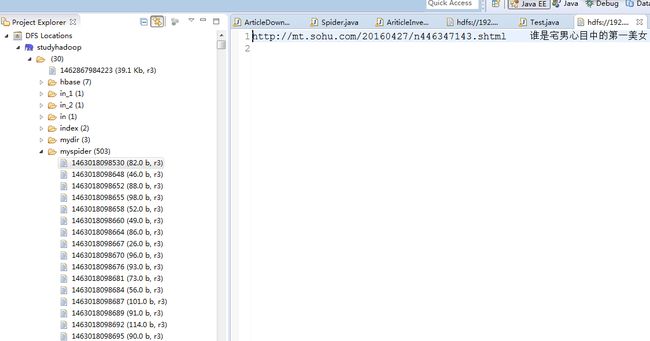

The crawled content has been uploaded to HDFS:

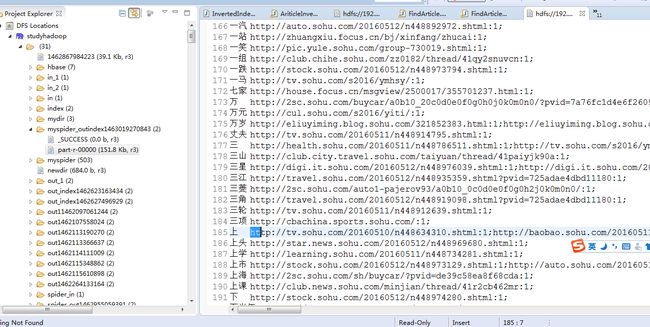

Next comes word segmentation and building the inverted index.

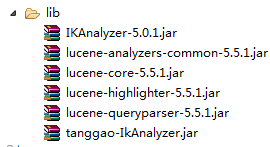

The libraries used for word segmentation:

I used Lucene 5.5.1 for tokenization.

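For Chinese word segmentation I used IKAnalyzer 5.0.1, but it is not compatible with my Lucene 5.5.1, so I adapted it for compatibility and packaged it myself as tanggao-IkAnalyzer.jar. You can also try the latest IKAnalyzer2012 release, which may be compatible with Lucene 5.5.1.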
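IKAnalyzer is a dictionary-based segmenter. To illustrate what dictionary-based Chinese segmentation does in its simplest form, here is a toy forward-maximum-matching segmenter: at each position it takes the longest dictionary word and otherwise falls back to a single character. This is a swapped-in textbook technique for illustration, not IKAnalyzer's actual algorithm, and `ForwardMaxMatch` and its parameters are hypothetical.

```java
import java.util.*;

/** Toy forward-maximum-matching segmenter (illustration only, not IKAnalyzer). */
final class ForwardMaxMatch {

    /** Greedily takes the longest dictionary word (up to maxWordLen UTF-16 chars) at each position. */
    static List<String> segment(String text, Set<String> dict, int maxWordLen) {
        List<String> tokens = new ArrayList<>();
        int i = 0;
        while (i < text.length()) {
            int one = Character.charCount(text.codePointAt(i));
            String token = text.substring(i, i + one); // fallback: a single character
            int end = Math.min(text.length(), i + maxWordLen);
            for (int j = end; j > i + one; j--) {       // try longer candidates first
                String candidate = text.substring(i, j);
                if (dict.contains(candidate)) {
                    token = candidate;
                    break;
                }
            }
            tokens.add(token);
            i += token.length();
        }
        return tokens;
    }
}
```

For example, with a dictionary containing 搜索, 搜索引擎, 引擎 and 技术 and `maxWordLen = 4`, `segment("搜索引擎技术", dict, 4)` returns `[搜索引擎, 技术]`. Real segmenters add much larger dictionaries and ambiguity resolution on top of this basic idea.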

Here is the code:

```java
package com.tg.hadoop;

import java.io.IOException;
import java.io.StringReader;
import java.util.LinkedHashMap;
import java.util.Map;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.KeyValueTextInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.analysis.TokenStream;
import org.apache.lucene.analysis.tokenattributes.CharTermAttribute;

import com.tg.Ik.IKAnalyzer5x;

/**
 * @author 汤高
 */
public class AriticleInvertedIndex {

    /** Input: key = article URL, value = headline. Output: <word, "URL:1">. */
    public static class ArticleInvertedIndexMapper extends Mapper<Text, Text, Text, Text> {

        private final Text keyInfo = new Text();   // the word
        private final Text valueInfo = new Text(); // "URL:1"
        private Analyzer analyzer;

        @Override
        protected void setup(Context context) {
            // One analyzer per task instead of one per record
            analyzer = new IKAnalyzer5x(true);
        }

        @Override
        protected void map(Text key, Text value, Context context)
                throws IOException, InterruptedException {
            // Segment the headline into words
            try (TokenStream ts = analyzer.tokenStream("field", new StringReader(value.toString().trim()))) {
                // CharTermAttribute holds the text of the current token
                CharTermAttribute termAtt = ts.addAttribute(CharTermAttribute.class);
                ts.reset();
                while (ts.incrementToken()) {
                    keyInfo.set(termAtt.toString());
                    valueInfo.set(key + ":1");
                    context.write(keyInfo, valueInfo);
                }
                ts.end();
            }
        }

        @Override
        protected void cleanup(Context context) {
            analyzer.close();
        }
    }

    /**
     * Sums the counts per URL for each word. A combiner must keep the key and the value
     * format unchanged, because Hadoop may run it zero, one, or several times.
     */
    public static class ArticleInvertedIndexCombiner extends Reducer<Text, Text, Text, Text> {

        private final Text info = new Text();

        @Override
        protected void reduce(Text key, Iterable<Text> values, Context context)
                throws IOException, InterruptedException {
            for (Map.Entry<String, Integer> e : countByUrl(values).entrySet()) {
                info.set(e.getKey() + ":" + e.getValue());
                context.write(key, info);
            }
        }
    }

    /** Builds the document list: word -> "URL1:count1;URL2:count2;" */
    public static class ArticleInvertedIndexReducer extends Reducer<Text, Text, Text, Text> {

        private final Text result = new Text();

        @Override
        protected void reduce(Text key, Iterable<Text> values, Context context)
                throws IOException, InterruptedException {
            StringBuilder fileList = new StringBuilder();
            for (Map.Entry<String, Integer> e : countByUrl(values).entrySet()) {
                fileList.append(e.getKey()).append(':').append(e.getValue()).append(';');
            }
            result.set(fileList.toString());
            context.write(key, result);
        }
    }

    /** Merges "URL:count" values; splits at the last ':' because URLs contain ':' too. */
    static Map<String, Integer> countByUrl(Iterable<Text> values) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (Text value : values) {
            String s = value.toString();
            int splitIndex = s.lastIndexOf(':');
            String url = s.substring(0, splitIndex);
            int n = Integer.parseInt(s.substring(splitIndex + 1));
            Integer old = counts.get(url);
            counts.put(url, old == null ? n : old + n);
        }
        return counts;
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf, "InvertedIndex");
        job.setJarByClass(AriticleInvertedIndex.class);
        // Each input line is "URL<TAB>title": key = URL, value = title
        job.setInputFormatClass(KeyValueTextInputFormat.class);
        // map: segment each title and emit intermediate <word, URL:1> pairs
        job.setMapperClass(ArticleInvertedIndexMapper.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(Text.class);
        job.setCombinerClass(ArticleInvertedIndexCombiner.class);
        job.setReducerClass(ArticleInvertedIndexReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);
        FileInputFormat.addInputPath(job, new Path("hdfs://192.168.52.140:9000/myspider/"));
        FileOutputFormat.setOutputPath(job,
                new Path("hdfs://192.168.52.140:9000/myspider_outindex" + System.currentTimeMillis() + "/"));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
```
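Before submitting the job to a cluster, it can help to check the expected output on a few hand-made records. The sketch below simulates the map, combine and reduce steps in memory. It is not Hadoop code: `InvertedIndexSimulation` is a hypothetical name, titles are assumed to be already segmented (IKAnalyzer does that in the real job), and URLs are sorted only to make the output deterministic, since the real reducer gives no such ordering guarantee for values.

```java
import java.util.*;

/** Hypothetical in-memory simulation of the inverted-index job, for checking its logic. */
final class InvertedIndexSimulation {

    /** docs: url -> segmented title words. Returns word -> "url:count;url:count;". */
    static SortedMap<String, String> build(Map<String, List<String>> docs) {
        // map + combine: count occurrences of each (word, url) pair
        SortedMap<String, SortedMap<String, Integer>> counts = new TreeMap<>();
        for (Map.Entry<String, List<String>> doc : docs.entrySet()) {
            for (String word : doc.getValue()) {
                counts.computeIfAbsent(word, w -> new TreeMap<>())
                      .merge(doc.getKey(), 1, Integer::sum);
            }
        }
        // reduce: join each word's postings into a single index line
        SortedMap<String, String> index = new TreeMap<>();
        for (Map.Entry<String, SortedMap<String, Integer>> e : counts.entrySet()) {
            StringBuilder postings = new StringBuilder();
            for (Map.Entry<String, Integer> p : e.getValue().entrySet()) {
                postings.append(p.getKey()).append(':').append(p.getValue()).append(';');
            }
            index.put(e.getKey(), postings.toString());
        }
        return index;
    }
}
```

For instance, a page `http://a.com/1` whose title segments into `[hadoop, search, hadoop]` yields the index entry `hadoop` → `http://a.com/1:2;`.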

When reposting, please credit the original source: http://blog.csdn.net/tanggao1314/article/details/51382382