TensorFlow in Practice: Chapter 8, Part 1 (Mask R-CNN: Introduction and Implementation)

Introduction

Paper: Mask R-CNN

Source code: matterport - github

The code comes from the matterport team; you can fork their work on GitHub.

Requirements

This reproduction of Mask R-CNN is built on Python 3, Keras, and TensorFlow.

- Python 3.4+

- TensorFlow 1.3+

- Keras 2.0.8+

- Jupyter Notebook

- Numpy, skimage, scipy

A recent Anaconda3 distribution together with the GPU build of TensorFlow is recommended.

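A Review of the Mask R-CNN Paper

Mask R-CNN (MRCNN for short) builds on the R-CNN family, FPN, FCIS, and related work. Its idea is simple: Faster R-CNN has two outputs for each candidate region, a class label and a bounding-box (bbox) offset. MRCNN adds one more branch to Faster R-CNN and therefore one more output, the object mask.

First, a recap of Faster R-CNN. It consists of two stages: a Region Proposal Network (RPN) and the underlying Fast R-CNN model.

MRCNN adopts the same two stages, with an identical first stage (the RPN). In the second stage, in addition to predicting the class and regressing the bbox, it predicts a binary mask for each RoI in parallel. A schematic follows:

This turns the whole task into a multi-stage pipeline and decouples the subtasks from one another, which so far has proven to bring many benefits.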

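Main Work

Definition of the Loss Function

A multi-task loss is still used. For each RoI it is defined as $L = L_{cls} + L_{box} + L_{mask}$.

The mask branch produces a $Km^2$-dimensional output for each RoI, i.e. $K$ binary masks of resolution $m \times m$, where $K$ is the number of object classes. Given the class $i$ of the RoI (the ground-truth class during training, the class predicted by the classification branch at inference), only the $i$-th binary mask contributes to $L_{mask}$.

The loss of the mask branch is computed as illustrated below:

- The mask branch predicts $m \times m$ binary masks for $K$ classes

- From the class prediction branch (the Faster R-CNN part), the object class of the current RoI is $i$

- The $i$-th binary mask output gives the loss $L_{mask}$ of this RoI

For the predicted binary mask output, a sigmoid is applied to every pixel, and the overall loss is defined as the average binary cross-entropy loss.

Predicting $K$ outputs lets every class generate its own mask and avoids competition among classes, which decouples mask prediction from class prediction. FCN-style methods, by contrast, apply a per-pixel softmax with a multinomial cross-entropy loss; this makes the classes compete and ultimately gives worse segmentation.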
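To make the per-class mask loss concrete, here is a minimal NumPy sketch. It assumes the mask branch output for one RoI is an array of raw logits with shape (K, m, m); the function name `mask_loss` and the random inputs are made up for illustration and are not taken from the matterport code.

```python
import numpy as np

def mask_loss(mask_logits, gt_mask, class_id):
    """Average binary cross-entropy on the mask channel of a single class.

    mask_logits: (K, m, m) raw mask-branch outputs for one RoI (assumed layout).
    gt_mask:     (m, m) binary ground-truth mask for that RoI.
    class_id:    index of the class whose channel contributes to the loss.
    """
    logits = mask_logits[class_id]             # only this channel is used
    probs = 1.0 / (1.0 + np.exp(-logits))      # per-pixel sigmoid
    eps = 1e-7                                 # keeps log() finite
    probs = np.clip(probs, eps, 1.0 - eps)
    bce = -(gt_mask * np.log(probs) + (1 - gt_mask) * np.log(1 - probs))
    return float(bce.mean())

rng = np.random.default_rng(0)
K, m = 3, 28
logits = rng.normal(size=(K, m, m))
gt = (rng.random((m, m)) > 0.5).astype(np.float64)
loss_k1 = mask_loss(logits, gt, class_id=1)

# Changing the channel of another class leaves the loss untouched,
# which is what "no competition among classes" means in practice.
logits_changed = logits.copy()
logits_changed[2] += 10.0
loss_k1_again = mask_loss(logits_changed, gt, class_id=1)
```

With a per-pixel softmax across the K channels, the second call would give a different value, because raising one class's logit lowers the probability of every other class.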

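From Mask Representation to the RoIAlign Layer

In Faster R-CNN, class labels and bbox offsets are predicted by collapsing the feature map through FC layers into an output vector. This collapsing discards the spatial (planar) structure, whereas a mask encodes the spatial layout of the input object, so it is best expressed through the pixel-to-pixel correspondence that convolutions provide.

Producing a mask does not require collapsing the output to a vector, so an FCN (Fully Convolutional Network) can be used, which is efficient and needs few parameters. To better preserve the pixel correspondence between the RoI input and the FCN output features, the RoIAlign layer is proposed.

First, a recap of the RoIPool layer:

Its core idea: RoIs of different sizes are fed into the RoIPool layer, which quantizes each RoI into a feature map of a given granularity (a grid of bins) and then applies pooling to extract features.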

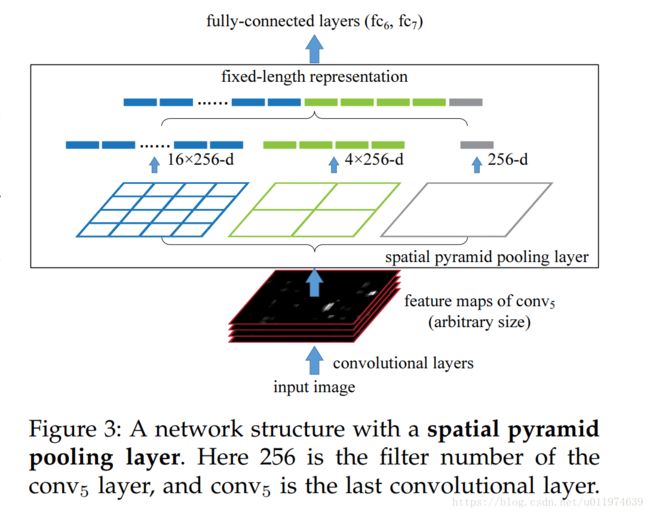

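The figure below shows how SPPNet handles RoIs; Faster R-CNN uses only a single granularity of feature map:

A planar schematic:

There is a problem here. The quantization above is actually computed as $[x/16]$, where $16$ is the feature-map stride and $[\cdot]$ denotes rounding. Quantizing and rounding keeps feature extraction fairly robust (detection tolerates small translations), but it hurts mask localization considerably.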
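A small worked example shows how much the rounding can shift an RoI. The coordinates are made up for illustration, and `quantize_roi` is a hypothetical helper, not a function from any library.

```python
def quantize_roi(x1, x2, stride=16):
    """RoIPool-style quantization: map image coordinates to feature-map
    cells by dividing by the stride and rounding."""
    return round(x1 / stride), round(x2 / stride)

x1, x2 = 100.0, 300.0                  # RoI extent along one axis, in pixels
q1, q2 = quantize_roi(x1, x2)          # feature-map cell indices
back1, back2 = q1 * 16, q2 * 16        # what those cells cover in the image
shift = (back1 - x1, back2 - x2)       # misalignment introduced by rounding
```

Here the RoI edges move by 4 pixels in the image on each side. A classifier barely notices, but a pixel-accurate mask does.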

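To address this, the RoIAlign layer avoids any quantization of the RoI boundaries or bins and uses bilinear interpolation to compute the feature values at the sampled locations. The backbone architecture used here comes from the FPN paper:

The original Faster R-CNN made its predictions from the topmost feature map only, which loses high-resolution information; the direct effect is that small objects are missed and fine detail is handled poorly. Like SSD, FPN predicts from several feature levels. Its top-down pathway upsamples the high-level features and merges them into the lower levels, so those levels carry both semantics and spatial precision. Note that the bilinear interpolation mentioned in the MRCNN paper belongs to RoIAlign, where it computes feature values at non-integer sampling positions; it is a separate step from the upsampling in FPN's top-down pathway.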
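For readers who have not used bilinear interpolation before, here is a minimal sketch of sampling a 2-D feature map at a fractional position, which is the basic operation RoIAlign relies on. The helper name `bilinear_sample` is an assumption for illustration, and the sketch assumes 0 ≤ y ≤ H-1 and 0 ≤ x ≤ W-1.

```python
import numpy as np

def bilinear_sample(feature, y, x):
    """Sample a 2-D feature map at a fractional (y, x) location."""
    h, w = feature.shape
    y0, x0 = int(np.floor(y)), int(np.floor(x))
    y1, x1 = min(y0 + 1, h - 1), min(x0 + 1, w - 1)   # clamp at the border
    wy, wx = y - y0, x - x0                           # fractional offsets
    top = (1 - wx) * feature[y0, x0] + wx * feature[y0, x1]
    bottom = (1 - wx) * feature[y1, x0] + wx * feature[y1, x1]
    return (1 - wy) * top + wy * bottom

feature = np.array([[0.0, 1.0],
                    [2.0, 3.0]])
center = bilinear_sample(feature, 0.5, 0.5)   # average of the four cells
corner = bilinear_sample(feature, 0.0, 1.0)   # exactly on a grid point
```

Because the sampling position is never rounded, a sub-pixel shift of the RoI produces a correspondingly small change in the sampled value.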

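Summary

MRCNN performs very well. Apart from the contribution of the mask branch, much of this comes from a stronger backbone: the paper uses ResNeXt-101 with FPN's top-down architecture, which learns very strong features, and the experiments also fold in a number of engineering tuning tricks.

That said, MRCNN has clear drawbacks: it needs a lot of compute and is slow, so there is still a long way to go before practical deployment. Here's hoping the experts push it forward!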

How to Use the Code

The project source is at: github/Mask R-CNN

Runtime requirements

- Python 3.4+

- TensorFlow 1.3+

- Keras 2.0.8+

- Jupyter Notebook

- Numpy, skimage, scipy, Pillow (installing Anaconda3 covers all of these)

- cv2

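Downloading the Code

On Linux, clone the repository directly:

git clone https://github.com/matterport/Mask_RCNN.git

On Windows, simply download the code from the address above.

Download the weights pre-trained on the COCO dataset (mask_rcnn_coco.h5) from the releases page.

To train or test on the COCO dataset, install pycocotools: clone it, run make to build it, and copy the result into the project directory (see the README.md in the repos below).

- Linux: https://github.com/waleedka/coco

- Windows: https://github.com/philferriere/cocoapi. You must have the Visual C++ 2015 build tools on your path (see the repo for additional details)

If you use the COCO dataset, you need:

- pycocotools (described above)

- MS COCO Dataset, the 2014 training set

- COCO subsets: the 5K minival and the 35K validation-minus-minival. (These two subsets download slowly, so I linked my CSDN uploads instead of the original addresses; message me if you do not have enough points to download them.)

All of the code analysis below runs in Jupyter.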

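Code Analysis: Data Preprocessing

Project source: matterport - github

inspect_data.ipynb shows the preprocessing steps that prepare the training data.

Imports

The coco package imported here needs the data-handling code from coco/PythonAPI, built locally with make. Copy the generated pycocotools into the project root, i.e. the same directory as inspect_data.ipynb.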

import os

import sys

import itertools

import math

import logging

import json

import re

import random

from collections import OrderedDict

import numpy as np

import matplotlib

import matplotlib.pyplot as plt

import matplotlib.patches as patches

import matplotlib.lines as lines

from matplotlib.patches import Polygon

import utils

import visualize

from visualize import display_images

import model as modellib

from model import log

%matplotlib inline

ROOT_DIR = os.getcwd()

# Pick one of the two blocks below
# import shapes
# config = shapes.ShapesConfig()  # dataset generated in code, introduced later

# MS COCO dataset
import coco
config = coco.CocoConfig()
COCO_DIR = "/root/模型复现/Mask_RCNN-master/coco"  # location of the COCO data

Loading the Dataset

The COCO training set contains 82,081 images and 81 classes (including the background class).

# COCO is used here
if config.NAME == 'shapes':
    dataset = shapes.ShapesDataset()
    dataset.load_shapes(500, config.IMAGE_SHAPE[0], config.IMAGE_SHAPE[1])
elif config.NAME == "coco":
    dataset = coco.CocoDataset()
    dataset.load_coco(COCO_DIR, "train")

# Must call before using the dataset
dataset.prepare()

print("Image Count: {}".format(len(dataset.image_ids)))
print("Class Count: {}".format(dataset.num_classes))
for i, info in enumerate(dataset.class_info):
    print("{:3}. {:50}".format(i, info['name']))

>>>

>>>

loading annotations into memory...

Done (t=7.68s)

creating index...

index created!

Image Count: 82081

Class Count: 81

0. BG

1. person

2. bicycle

...

77. scissors

78. teddy bear

79. hair drier

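80. toothbrush

Pick a few random images to look at:

# Load and display a few random images with their masks
image_ids = np.random.choice(dataset.image_ids, 4)
for image_id in image_ids:
    image = dataset.load_image(image_id)
    mask, class_ids = dataset.load_mask(image_id)
    visualize.display_top_masks(image, mask, class_ids, dataset.class_names)

Bounding Boxes (bbox)

Here we do not use the bbox coordinates that ship with the dataset; instead we compute the bboxes from the masks, so that bboxes are handled the same way across datasets. Deriving the bbox from the mask also makes it easier to scale, rotate, and crop images: the bbox is simply recomputed from the transformed mask, with no separate bbox transformation for each kind of image transformation.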
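Computing a box from a mask takes only a few lines. The sketch below handles a single (H, W) boolean mask; `bbox_from_mask` is a hypothetical name written for this post, whereas the repository's `utils.extract_bboxes` works on a whole (H, W, N) stack of masks.

```python
import numpy as np

def bbox_from_mask(mask):
    """Return [y1, x1, y2, x2] for a 2-D boolean mask.

    y2 and x2 are exclusive (one past the last mask pixel). An empty mask
    gives an all-zero box.
    """
    rows = np.where(mask.any(axis=1))[0]   # rows that contain mask pixels
    cols = np.where(mask.any(axis=0))[0]   # columns that contain mask pixels
    if rows.size == 0:
        return np.zeros(4, dtype=np.int32)
    return np.array([rows[0], cols[0], rows[-1] + 1, cols[-1] + 1],
                    dtype=np.int32)

mask = np.zeros((8, 10), dtype=bool)
mask[2:5, 3:7] = True
box = bbox_from_mask(mask)
```

After any geometric transformation of the mask, calling the same function again yields the matching box.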

# Load random image and mask.

image_id = random.choice(dataset.image_ids)

image = dataset.load_image(image_id)

mask, class_ids = dataset.load_mask(image_id)

# Compute Bounding box

bbox = utils.extract_bboxes(mask)

# Display image and additional stats

print("image_id ", image_id, dataset.image_reference(image_id))

log("image", image)

log("mask", mask)

log("class_ids", class_ids)

log("bbox", bbox)

# Display image and instances

visualize.display_instances(image, bbox, mask, class_ids, dataset.class_names)

>>>

>>>

image_id 41194 http://cocodataset.org/#explore?id=190360

image shape: (428, 640, 3) min: 0.00000 max: 255.00000

mask shape: (428, 640, 5) min: 0.00000 max: 1.00000

class_ids shape: (5,) min: 1.00000 max: 59.00000

bbox shape: (5, 4) min: 1.00000 max: 640.00000

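Resizing Images

Training runs in batches, with several images per batch, so the model needs a fixed input size. The training images are therefore rescaled to a fixed size (1024×1024) with the aspect ratio preserved; if an image is not square, its borders are padded with zeros. (The R-CNN paper examines this choice.)

Note that since the image is rescaled, the corresponding mask must be rescaled too. Because our bboxes are computed from the masks, no further code changes are needed.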
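The scale factor and the padding can be worked out by hand. The sketch below only computes the geometry and does not touch pixel data; `resize_geometry` is a hypothetical helper, and the defaults min_dim=800 and max_dim=1024 are assumed values for the COCO configuration rather than something stated in this post.

```python
def resize_geometry(h, w, min_dim=800, max_dim=1024):
    """Compute scale, resized shape and zero padding for a square canvas.

    The shorter side is scaled up to min_dim unless that would push the
    longer side past max_dim; the result is centred on a max_dim canvas.
    """
    scale = max(1.0, min_dim / min(h, w))
    if round(max(h, w) * scale) > max_dim:
        scale = max_dim / max(h, w)
    new_h, new_w = round(h * scale), round(w * scale)
    top = (max_dim - new_h) // 2
    bottom = max_dim - new_h - top
    left = (max_dim - new_w) // 2
    right = max_dim - new_w - left
    return scale, (new_h, new_w), (top, bottom, left, right)

scale, new_shape, padding = resize_geometry(426, 640)
```

For a 426×640 image the longer side limits the scale to 1.6, and the remaining rows are split between the top and the bottom of the canvas.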

# Load random image and mask.

image_id = np.random.choice(dataset.image_ids, 1)[0]

image = dataset.load_image(image_id)

mask, class_ids = dataset.load_mask(image_id)

original_shape = image.shape

# Resize to a fixed size
image, window, scale, padding = utils.resize_image(
    image,
    min_dim=config.IMAGE_MIN_DIM,
    max_dim=config.IMAGE_MAX_DIM,
    padding=config.IMAGE_PADDING)
mask = utils.resize_mask(mask, scale, padding)  # the mask must be resized too

# Compute Bounding box

bbox = utils.extract_bboxes(mask)

# Display image and additional stats

print("image_id: ", image_id, dataset.image_reference(image_id))

print("Original shape: ", original_shape)

log("image", image)

log("mask", mask)

log("class_ids", class_ids)

log("bbox", bbox)

# Display image and instances

visualize.display_instances(image, bbox, mask, class_ids, dataset.class_names)

>>>

>>>

image_id: 6104 http://cocodataset.org/#explore?id=139889

Original shape: (426, 640, 3)

image shape: (1024, 1024, 3) min: 0.00000 max: 255.00000

mask shape: (1024, 1024, 2) min: 0.00000 max: 1.00000

class_ids shape: (2,) min: 24.00000 max: 24.00000

bbox shape: (2, 4) min: 169.00000 max: 917.00000

The original image is scaled up from (426, 640, 3) to (1024, 1024, 3), with zeros (the black regions) padded at the top and bottom:

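Mini Mask

When training on high-resolution images, the binary mask of each object is also large. For example, for a 1024×1024 image the mask of a single object takes 1 MB of memory (one boolean per pixel), so an image with 100 objects needs 100 MB. That would be acceptable if the masks were densely filled, but in practice most of the mask matrix is zeros, which wastes space.

To save memory and speed up training, we change how masks are represented: rather than storing all those zeros, we store the mask relative to the object's bounding box, similar in spirit to a compression scheme.

- We store only the mask pixels inside the object's bounding box rather than the mask for the whole image. Most objects are small relative to the image, so space is saved by not storing the zeros around the object.

- The mask is resized to a small size, 56×56. Large objects lose some precision, but most object annotations are not very accurate to begin with, so the loss is negligible in most cases. (The mini mask size can be set in the config class.)

In short, during data processing we first compute the bbox from the annotated mask and then use that bbox to change how the mask is represented. The goal is a uniform procedure with lower storage and computational cost.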
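The two steps above, cropping to the box and shrinking to 56×56, can be sketched in plain NumPy. This is an illustration only: `to_mini_mask` is a hypothetical name, and it shrinks the crop by nearest-neighbour sampling to stay dependency-free, which may differ from the interpolation the repository uses.

```python
import numpy as np

def to_mini_mask(mask, box, mini_shape=(56, 56)):
    """Crop a full-size boolean mask to its box and shrink the crop
    by nearest-neighbour sampling."""
    y1, x1, y2, x2 = box
    crop = mask[y1:y2, x1:x2]
    # Index of the source row/column for every row/column of the mini mask.
    rows = (np.arange(mini_shape[0]) * crop.shape[0] / mini_shape[0]).astype(int)
    cols = (np.arange(mini_shape[1]) * crop.shape[1] / mini_shape[1]).astype(int)
    return crop[np.ix_(rows, cols)]

full = np.zeros((1024, 1024), dtype=bool)
full[100:324, 200:424] = True                  # one 224x224 object
mini = to_mini_mask(full, (100, 200, 324, 424))
full_bytes, mini_bytes = full.nbytes, mini.nbytes
```

The full mask occupies 1,048,576 bytes and the mini mask 3,136 bytes, a reduction of more than 300 times for this object.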

image_id = np.random.choice(dataset.image_ids, 1)[0]

# Use load_image_gt to get the bbox and mask
image, image_meta, bbox, mask = modellib.load_image_gt(
    dataset, config, image_id, use_mini_mask=False)

log("image", image)

log("image_meta", image_meta)

log("bbox", bbox)

log("mask", mask)

display_images([image]+[mask[:,:,i] for i in range(min(mask.shape[-1], 7))])

>>>

>>>

image shape: (1024, 1024, 3) min: 0.00000 max: 252.00000

image_meta shape: (89,) min: 0.00000 max: 66849.00000

bbox shape: (1, 5) min: 62.00000 max: 987.00000

mask shape: (1024, 1024, 1) min: 0.00000 max: 1.00000

Pick a random image; the object is small relative to the image:

visualize.display_instances(image, bbox[:,:4], mask, bbox[:,4], dataset.class_names)

Call load_image_gt with use_mini_mask=True to apply the mini mask:

# load_image_gt integrates the mini mask step
image, image_meta, bbox, mask = modellib.load_image_gt(
    dataset, config, image_id, augment=True, use_mini_mask=True)

log("mask", mask)

display_images([image]+[mask[:,:,i] for i in range(min(mask.shape[-1], 7))])

>>>

>>>

mask shape: (56, 56, 1) min: 0.00000 max: 1.00000

To visualize the effect, expand the mini mask back to a full-image mask with expand_mask and draw it again:

mask = utils.expand_mask(bbox, mask, image.shape)

visualize.display_instances(image, bbox[:,:4], mask, bbox[:,4], dataset.class_names)

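The edges are jagged, a side effect of the compression, but overall the result is acceptable.

Anchors

Anchors were introduced in Faster R-CNN.

The model has several feature-map levels at run time and therefore a very large number of anchors, so getting the anchor order right matters. For example, the order in which anchors are used must match the order in which the convolutions process the feature maps.

For an FPN network, the anchor order must match the output of the convolution layers:

- Sort by pyramid level first: all anchors of the first level, then all anchors of the second level, and so on. Ordering by level makes it easy to separate the anchors.

- Within each level, sort the anchors in the order the feature map is processed. Typically, a convolution layer processes a feature map starting from the top-left corner and moving right, row by row.

- Within each feature-map cell, any order can be chosen for the anchors of different ratios. Here the order follows the ratios passed to the function.

Anchor stride: in the FPN architecture, the feature maps of the first few levels are high resolution. For example, with a 1024×1024 input, the first-level feature map is 256×256, which yields about 200K anchors (256 × 256 × 3). These anchors are 32×32 pixels and their stride relative to image pixels is 4 (1024 / 256 = 4), so they overlap heavily. Generating anchors for every other cell of the feature map, instead of for every cell, reduces the load significantly: an anchor stride of 2 cuts the number of anchors by a factor of 4.

We use a stride of 2 here, which differs from the paper. The Config class defines anchors with 3 ratios ([0.5, 1, 2]). Taking the first-level feature map of size 256×256 as an example, the number of anchors is $\frac{feature\_map^2 \times ratios}{stride^2} = \frac{256 \times 256 \times 3}{2^2} = 49152$.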
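The same arithmetic can be repeated for every pyramid level. A small sketch, using the feature-map shapes, ratios and anchor stride that appear in this post; the function name `anchors_per_pyramid_level` is made up for illustration.

```python
def anchors_per_pyramid_level(feature_shapes, ratios, anchor_stride):
    """Count anchors per level: one anchor per ratio at every
    anchor_stride-th cell along each axis of the feature map."""
    counts = []
    for h, w in feature_shapes:
        positions = len(range(0, h, anchor_stride)) * len(range(0, w, anchor_stride))
        counts.append(positions * len(ratios))
    return counts

shapes = [(256, 256), (128, 128), (64, 64), (32, 32), (16, 16)]
counts = anchors_per_pyramid_level(shapes, ratios=[0.5, 1, 2], anchor_stride=2)
total = sum(counts)
```

Each level has a quarter of the anchors of the level before it, so the first level dominates the total.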

# Generate anchors

anchors = utils.generate_pyramid_anchors(config.RPN_ANCHOR_SCALES,

config.RPN_ANCHOR_RATIOS,

config.BACKBONE_SHAPES,

config.BACKBONE_STRIDES,

config.RPN_ANCHOR_STRIDE)

# Print summary of anchors

print("Scales: ", config.RPN_ANCHOR_SCALES)

print("ratios: {}, \nAnchors_per_cell:{}".format(config.RPN_ANCHOR_RATIOS , len(config.RPN_ANCHOR_RATIOS)))

print("backbone_shapes: ",config.BACKBONE_SHAPES)

print("backbone_strides: ",config.BACKBONE_STRIDES)

print("rpn_anchor_stride: ",config.RPN_ANCHOR_STRIDE)

num_levels = len(config.BACKBONE_SHAPES)
anchors_per_cell = len(config.RPN_ANCHOR_RATIOS)  # one anchor per ratio in each cell
print("Count: ", anchors.shape[0])
print("Levels: ", num_levels)
anchors_per_level = []
for l in range(num_levels):
    num_cells = config.BACKBONE_SHAPES[l][0] * config.BACKBONE_SHAPES[l][1]
    anchors_per_level.append(anchors_per_cell * num_cells // config.RPN_ANCHOR_STRIDE**2)
    print("Anchors in Level {}: {}".format(l, anchors_per_level[l]))

>>>

>>>

Scales: (32, 64, 128, 256, 512)

ratios: [0.5, 1, 2],

anchors_per_cell:3

backbone_shapes: [[256 256] [128 128] [ 64 64] [ 32 32] [ 16 16]]

backbone_strides: [4, 8, 16, 32, 64]

rpn_anchor_stride: 2

Count: 65472

Levels: 5

Anchors in Level 0: 49152

Anchors in Level 1: 12288

Anchors in Level 2: 3072

Anchors in Level 3: 768

Anchors in Level 4: 192

Look at the anchors of each level at the cell in the center of the image:

# Load and draw random image

image_id = np.random.choice(dataset.image_ids, 1)[0]

image, image_meta, _, _ = modellib.load_image_gt(dataset, config, image_id)

fig, ax = plt.subplots(1, figsize=(10, 10))

ax.imshow(image)

levels = len(config.BACKBONE_SHAPES)  # 5 levels, 15 anchors drawn in total
for level in range(levels):
    colors = visualize.random_colors(levels)
    # Compute the index of the anchors at the center of the image
    level_start = sum(anchors_per_level[:level])  # sum of anchors of previous levels
    level_anchors = anchors[level_start:level_start+anchors_per_level[level]]
    print("Level {}. Anchors: {:6} Feature map Shape: {}".format(level, level_anchors.shape[0],
                                                                config.BACKBONE_SHAPES[level]))
    center_cell = config.BACKBONE_SHAPES[level] // 2
    center_cell_index = (center_cell[0] * config.BACKBONE_SHAPES[level][1] + center_cell[1])
    level_center = center_cell_index * anchors_per_cell
    center_anchor = anchors_per_cell * (
        (center_cell[0] * config.BACKBONE_SHAPES[level][1] / config.RPN_ANCHOR_STRIDE**2)
        + center_cell[1] / config.RPN_ANCHOR_STRIDE)
    level_center = int(center_anchor)

    # Draw anchors. Brightness shows the order in the array, dark to bright.
    for i, rect in enumerate(level_anchors[level_center:level_center+anchors_per_cell]):
        y1, x1, y2, x2 = rect
        p = patches.Rectangle((x1, y1), x2-x1, y2-y1, linewidth=2, facecolor='none',
                              edgecolor=(i+1)*np.array(colors[level]) / anchors_per_cell)
        ax.add_patch(p)

>>>

>>>

Level 0. Anchors: 49152 Feature map Shape: [256 256]

Level 1. Anchors: 12288 Feature map Shape: [128 128]

Level 2. Anchors: 3072 Feature map Shape: [64 64]

Level 3. Anchors: 768 Feature map Shape: [32 32]

Level 4. Anchors: 192 Feature map Shape: [16 16]

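Code Analysis: Training the Model on Your Own Dataset

Project source: matterport - github

train_shapes.ipynb shows how to train Mask R-CNN on your own dataset.

To train the model on your own dataset, create two subclasses, one for each of the following base classes:

- Config: holds the default configuration; subclass it and customize the settings for your dataset.

- Dataset: provides a common API; a new dataset subclasses it and overrides the relevant methods. This makes it possible to use several datasets (even at the same time) without changing the model code.

Both Dataset and Config are base classes; inherit from them and customize as needed. The demo below serves as an example.

Imports

The dataset in this demo is generated with OpenCV, so nothing extra needs to be downloaded. To make sure the model runs properly, the demo should still be run on a GPU.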

import os

import sys

import random

import math

import re

import time

import numpy as np

import cv2

import matplotlib

import matplotlib.pyplot as plt

from config import Config

import utils

import model as modellib

import visualize

from model import log

%matplotlib inline

ROOT_DIR = os.getcwd() # Root directory of the project

MODEL_DIR = os.path.join(ROOT_DIR, "logs") # Directory to save logs and trained model

COCO_MODEL_PATH = os.path.join(ROOT_DIR, "mask_rcnn_coco.h5") # Path to COCO trained weights

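Building Your Own Dataset

Here we create a dataset directly with OpenCV. It consists of canvases with simple geometric shapes (triangles, squares, circles).

The dataset class must inherit from utils.Dataset, expose a load_shapes() method for loading the data, and override the following methods:

- load_image()

- load_mask()

- image_reference()

Code that builds the dataset: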

class ShapesDataset(utils.Dataset):
    """
    Generates a dataset of images with simple shapes (triangles, squares,
    circles) placed on a blank canvas.
    """

    def load_shapes(self, count, height, width):
        """
        Generate the requested number of fixed-size images.
        count: number of images to generate
        height, width: size of the generated images
        """
        # Add classes
        self.add_class("shapes", 1, "square")
        self.add_class("shapes", 2, "circle")
        self.add_class("shapes", 3, "triangle")

        # Generate random shape specifications; each image is identified by its image_id
        for i in range(count):
            bg_color, shapes = self.random_image(height, width)
            self.add_image("shapes", image_id=i, path=None,
                           width=width, height=height,
                           bg_color=bg_color, shapes=shapes)

    def load_image(self, image_id):
        """
        Generate the image for the given image_id.
        Typically this function reads a file; here we look up the
        specification in image_info and draw the image on the fly.
        """
        info = self.image_info[image_id]
        bg_color = np.array(info['bg_color']).reshape([1, 1, 3])
        image = np.ones([info['height'], info['width'], 3], dtype=np.uint8)
        image = image * bg_color.astype(np.uint8)
        for shape, color, dims in info['shapes']:
            image = self.draw_shape(image, shape, dims, color)
        return image

    def image_reference(self, image_id):
        """Return the shapes data of the image."""
        info = self.image_info[image_id]
        if info["source"] == "shapes":
            return info["shapes"]
        else:
            # Fall back to the base class and return its result
            return super().image_reference(image_id)

    def load_mask(self, image_id):
        """Generate the instance masks for the shapes of the given image_id."""
        info = self.image_info[image_id]
        shapes = info['shapes']
        count = len(shapes)
        mask = np.zeros([info['height'], info['width'], count], dtype=np.uint8)
        for i, (shape, _, dims) in enumerate(info['shapes']):
            mask[:, :, i:i+1] = self.draw_shape(mask[:, :, i:i+1].copy(),
                                                shape, dims, 1)
        # Handle occlusions
        occlusion = np.logical_not(mask[:, :, -1]).astype(np.uint8)
        for i in range(count-2, -1, -1):
            mask[:, :, i] = mask[:, :, i] * occlusion
            occlusion = np.logical_and(occlusion, np.logical_not(mask[:, :, i]))
        # Map class names to class IDs.
        class_ids = np.array([self.class_names.index(s[0]) for s in shapes])
        return mask, class_ids.astype(np.int32)

    def draw_shape(self, image, shape, dims, color):
        """Draw the given shape."""
        # Get the center x, y and the size s
        x, y, s = dims
        if shape == 'square':
            image = cv2.rectangle(image, (x-s, y-s), (x+s, y+s), color, -1)
        elif shape == "circle":
            image = cv2.circle(image, (x, y), s, color, -1)
        elif shape == "triangle":
            points = np.array([[(x, y-s),
                                (x-s/math.sin(math.radians(60)), y+s),
                                (x+s/math.sin(math.radians(60)), y+s),
                                ]], dtype=np.int32)
            image = cv2.fillPoly(image, points, color)
        return image

    def random_shape(self, height, width):
        """
        Generate a random shape within the given height and width.
        Returns a tuple of three values:
        * shape: name of the shape (square, circle, ...)
        * color: color of the shape (a tuple of 3 values, RGB.)
        * dimensions: center and size of the shape (center_x, center_y, size)
        """
        # Shape
        shape = random.choice(["square", "circle", "triangle"])
        # Color
        color = tuple([random.randint(0, 255) for _ in range(3)])
        # Center x, y
        buffer = 20
        y = random.randint(buffer, height - buffer - 1)
        x = random.randint(buffer, width - buffer - 1)
        # Size
        s = random.randint(buffer, height//4)
        return shape, color, (x, y, s)

    def random_image(self, height, width):
        """
        Create the specification of a random image with several shapes.
        Returns the background color and a list of shape specifications
        that can be used to draw the image.
        """
        # Random color for each of the three channels
        bg_color = np.array([random.randint(0, 255) for _ in range(3)])
        # Generate a few random shapes and record their bboxes
        shapes = []
        boxes = []
        N = random.randint(1, 4)
        for _ in range(N):
            shape, color, dims = self.random_shape(height, width)
            shapes.append((shape, color, dims))
            x, y, s = dims
            boxes.append([y-s, x-s, y+s, x+s])
        # Apply non-max suppression with a 0.3 threshold so shapes do not cover each other
        keep_ixs = utils.non_max_suppression(np.array(boxes), np.arange(N), 0.3)
        shapes = [s for i, s in enumerate(shapes) if i in keep_ixs]
        return bg_color, shapes
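random_image above relies on utils.non_max_suppression to discard shapes that overlap too much. For readers unfamiliar with the technique, here is a minimal NumPy sketch of greedy non-maximum suppression. It is written for illustration and is not copied from the repository's utils module; the function name `nms` is hypothetical.

```python
import numpy as np

def nms(boxes, scores, threshold):
    """Greedy non-maximum suppression.

    boxes:  (N, 4) array-like of [y1, x1, y2, x2].
    scores: (N,) array-like; higher scores are kept first.
    Returns the indices of the kept boxes, best score first.
    """
    boxes = np.asarray(boxes, dtype=np.float64)
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    order = np.argsort(scores)[::-1]
    keep = []
    while order.size > 0:
        i = order[0]
        keep.append(int(i))
        rest = order[1:]
        # Intersection of the picked box with every remaining box.
        y1 = np.maximum(boxes[i, 0], boxes[rest, 0])
        x1 = np.maximum(boxes[i, 1], boxes[rest, 1])
        y2 = np.minimum(boxes[i, 2], boxes[rest, 2])
        x2 = np.minimum(boxes[i, 3], boxes[rest, 3])
        inter = np.maximum(y2 - y1, 0) * np.maximum(x2 - x1, 0)
        iou = inter / (areas[i] + areas[rest] - inter)
        # Drop every box that overlaps the picked one too much.
        order = rest[iou <= threshold]
    return keep

boxes = [[0, 0, 10, 10], [1, 1, 11, 11], [20, 20, 30, 30]]
kept = nms(boxes, scores=np.arange(3), threshold=0.3)
```

As in random_image, the scores here are simply the box indices, so when two shapes overlap the one generated later survives.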

Build a dataset with the class above and take a look (config here is the ShapesConfig instance defined further down, so run that cell first):

# Build a training set of 500 images
dataset_train = ShapesDataset()
dataset_train.load_shapes(500, config.IMAGE_SHAPE[0], config.IMAGE_SHAPE[1])
dataset_train.prepare()

# Build a validation set of 50 images
dataset_val = ShapesDataset()
dataset_val.load_shapes(50, config.IMAGE_SHAPE[0], config.IMAGE_SHAPE[1])
dataset_val.prepare()

# Display 4 random samples
image_ids = np.random.choice(dataset_train.image_ids, 4)
for image_id in image_ids:
    image = dataset_train.load_image(image_id)
    mask, class_ids = dataset_train.load_mask(image_id)
    visualize.display_top_masks(image, mask, class_ids, dataset_train.class_names)

Define a ShapesConfig class for this dataset. It gathers the model's configuration parameters in one place and must inherit from Config:

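class ShapesConfig(Config):
    """
    Training configuration for the shapes dataset.
    Inherits from the base Config class.
    """
    NAME = "shapes"  # identifier of this configuration

    # Batch size is 8 (GPUs * images/GPU).
    GPU_COUNT = 1  # number of GPUs
    IMAGES_PER_GPU = 8  # images per GPU (the generated images are small, so several fit)

    # Number of classes (including background)
    NUM_CLASSES = 1 + 3  # background + 3 shapes

    # Small images train faster
    IMAGE_MIN_DIM = 128  # shorter side of the image
    IMAGE_MAX_DIM = 128  # longer side of the image

    # Use smaller anchors because the images and objects are small
    RPN_ANCHOR_SCALES = (8, 16, 32, 64, 128)  # anchor side in pixels

    # Reduce training ROIs per image because the images are small and have few objects.
    # Aim to allow ROI sampling to pick 33% positive ROIs.
    TRAIN_ROIS_PER_IMAGE = 32

    STEPS_PER_EPOCH = 100  # small epoch because the data is simple
    VALIDATION_STPES = 5  # few validation steps because the epoch is small

config = ShapesConfig()
config.print()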

>>>

>>>

Configurations:

BACKBONE_SHAPES [[32 32]

[16 16]

[ 8 8]

[ 4 4]

[ 2 2]]

BACKBONE_STRIDES [4, 8, 16, 32, 64]

BATCH_SIZE 8

BBOX_STD_DEV [ 0.1 0.1 0.2 0.2]

DETECTION_MAX_INSTANCES 100

DETECTION_MIN_CONFIDENCE 0.7

DETECTION_NMS_THRESHOLD 0.3

GPU_COUNT 1

IMAGES_PER_GPU 8

IMAGE_MAX_DIM 128

IMAGE_MIN_DIM 128

IMAGE_PADDING True

IMAGE_SHAPE [128 128 3]

LEARNING_MOMENTUM 0.9

LEARNING_RATE 0.002

MASK_POOL_SIZE 14

MASK_SHAPE [28, 28]

MAX_GT_INSTANCES 100

MEAN_PIXEL [ 123.7 116.8 103.9]

MINI_MASK_SHAPE (56, 56)

NAME shapes

NUM_CLASSES 4

POOL_SIZE 7

POST_NMS_ROIS_INFERENCE 1000

POST_NMS_ROIS_TRAINING 2000

ROI_POSITIVE_RATIO 0.33

RPN_ANCHOR_RATIOS [0.5, 1, 2]

RPN_ANCHOR_SCALES (8, 16, 32, 64, 128)

RPN_ANCHOR_STRIDE 2

RPN_BBOX_STD_DEV [ 0.1 0.1 0.2 0.2]

RPN_TRAIN_ANCHORS_PER_IMAGE 256

STEPS_PER_EPOCH 100

TRAIN_ROIS_PER_IMAGE 32

USE_MINI_MASK True

USE_RPN_ROIS True

VALIDATION_STPES 5

WEIGHT_DECAY 0.0001

Loading the Model and Training

With the dataset and its Config in place, load the pre-trained model:

# The model has two modes: training and inference
# Create the model in training mode
model = modellib.MaskRCNN(mode="training", config=config,
                          model_dir=MODEL_DIR)

# Choose which weights to start from; here we use the COCO pre-trained weights
init_with = "coco"  # imagenet, coco, or last

if init_with == "imagenet":
    model.load_weights(model.get_imagenet_weights(), by_name=True)
elif init_with == "coco":
    # Load weights pre-trained on MS COCO, skipping the layers that
    # differ because of the different number of classes
    model.load_weights(COCO_MODEL_PATH, by_name=True,
                       exclude=["mrcnn_class_logits", "mrcnn_bbox_fc",
                                "mrcnn_bbox", "mrcnn_mask"])
elif init_with == "last":
    # Load the last model you trained and continue training
    model.load_weights(model.find_last()[1], by_name=True)

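Training the Model

The base layers were loaded from the pre-trained model. Training on top of them proceeds in two steps:

- Train only the heads. To preserve the feature extraction ability of the base layers, all backbone layers are frozen and only the randomly initialized layers are trained. To do so, pass layers='heads' to train().

- Fine-tune all layers. After the heads have trained for a while, fine-tune the whole network with layers='all' so that it adapts better to the new dataset.

These two steps are the standard recipe for transfer learning.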
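Selecting which layers to train comes down to matching layer names against a pattern. The sketch below illustrates the idea in plain Python with a few layer names taken from the training logs in this post. The pattern in HEADS_PATTERN is an assumption about what 'heads' expands to, and `trainable_layers` is a hypothetical helper, not part of the library.

```python
import re

# Assumed pattern for the "heads": FPN, RPN and the Mask R-CNN branches.
HEADS_PATTERN = r"(mrcnn_.*)|(rpn_.*)|(fpn_.*)"

def trainable_layers(layer_names, pattern):
    """Return the layer names that fully match the regular expression."""
    return [name for name in layer_names if re.fullmatch(pattern, name)]

layers = ["conv1", "res2a_branch2a", "fpn_c5p5", "rpn_conv_shared", "mrcnn_mask"]
heads = trainable_layers(layers, HEADS_PATTERN)   # step 1: heads only
everything = trainable_layers(layers, ".*")       # step 2: all layers
```

Layers that do not match stay frozen, which is why the backbone names (conv1, res2a_branch2a, ...) only appear in the log of the second training step.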

1. Training the heads

# Passing layers="heads" freezes all layers except the heads. You can also pass a regular expression to select which layers to train

model.train(dataset_train, dataset_val,

learning_rate=config.LEARNING_RATE,

epochs=1,

layers='heads')

>>>

>>>

Starting at epoch 0. LR=0.002

Checkpoint Path: /root/Mask_RCNNmaster/logs/shapes20171103T2047/mask_rcnn_shapes_{epoch:04d}.h5

Selecting layers to train

fpn_c5p5 (Conv2D)

fpn_c4p4 (Conv2D)

fpn_c3p3 (Conv2D)

fpn_c2p2 (Conv2D)

fpn_p5 (Conv2D)

fpn_p2 (Conv2D)

fpn_p3 (Conv2D)

fpn_p4 (Conv2D)

In model: rpn_model

rpn_conv_shared (Conv2D)

rpn_class_raw (Conv2D)

rpn_bbox_pred (Conv2D)

mrcnn_mask_conv1 (TimeDistributed)

...

mrcnn_mask_conv4 (TimeDistributed)

mrcnn_mask_bn4 (TimeDistributed)

mrcnn_bbox_fc (TimeDistributed)

mrcnn_mask_deconv (TimeDistributed)

mrcnn_class_logits (TimeDistributed)

mrcnn_mask (TimeDistributed)

Epoch 1/1

100/100 [==============================] - 37s 371ms/step - loss: 2.5472 - rpn_class_loss: 0.0244 - rpn_bbox_loss: 1.1118 - mrcnn_class_loss: 0.3692 - mrcnn_bbox_loss: 0.3783 - mrcnn_mask_loss: 0.3223 - val_loss: 1.7634 - val_rpn_class_loss: 0.0143 - val_rpn_bbox_loss: 0.9989 - val_mrcnn_class_loss: 0.1673 - val_mrcnn_bbox_loss: 0.0857 - val_mrcnn_mask_loss: 0.1559

2. Fine-tuning all layers

# Passing layers="all" trains all layers

model.train(dataset_train, dataset_val,

learning_rate=config.LEARNING_RATE / 10,

epochs=2,

layers="all")

>>>

>>>

Starting at epoch 1. LR=0.0002

Checkpoint Path: /root/Mask_RCNN-master/logs/shapes20171103T2047/mask_rcnn_shapes_{epoch:04d}.h5

Selecting layers to train

conv1 (Conv2D)

bn_conv1 (BatchNorm)

res2a_branch2a (Conv2D)

bn2a_branch2a (BatchNorm)

res2a_branch2b (Conv2D)

...

...

res5c_branch2c (Conv2D)

bn5c_branch2c (BatchNorm)

fpn_c5p5 (Conv2D)

fpn_c4p4 (Conv2D)

fpn_c3p3 (Conv2D)

fpn_c2p2 (Conv2D)

fpn_p5 (Conv2D)

fpn_p2 (Conv2D)

fpn_p3 (Conv2D)

fpn_p4 (Conv2D)

In model: rpn_model

rpn_conv_shared (Conv2D)

rpn_class_raw (Conv2D)

rpn_bbox_pred (Conv2D)

mrcnn_mask_conv1 (TimeDistributed)

mrcnn_mask_bn1 (TimeDistributed)

mrcnn_mask_conv2 (TimeDistributed)

mrcnn_class_conv1 (TimeDistributed)

mrcnn_mask_bn2 (TimeDistributed)

mrcnn_class_bn1 (TimeDistributed)

mrcnn_mask_conv3 (TimeDistributed)

mrcnn_mask_bn3 (TimeDistributed)

mrcnn_class_conv2 (TimeDistributed)

mrcnn_class_bn2 (TimeDistributed)

mrcnn_mask_conv4 (TimeDistributed)

mrcnn_mask_bn4 (TimeDistributed)

mrcnn_bbox_fc (TimeDistributed)

mrcnn_mask_deconv (TimeDistributed)

mrcnn_class_logits (TimeDistributed)

mrcnn_mask (TimeDistributed)

Epoch 2/2

100/100 [==============================] - 38s 381ms/step - loss: 11.4351 - rpn_class_loss: 0.0190 - rpn_bbox_loss: 0.9108 - mrcnn_class_loss: 0.2085 - mrcnn_bbox_loss: 0.1606 - mrcnn_mask_loss: 0.2198 - val_loss: 11.2957 - val_rpn_class_loss: 0.0173 - val_rpn_bbox_loss: 0.8740 - val_mrcnn_class_loss: 0.1590 - val_mrcnn_bbox_loss: 0.0997 - val_mrcnn_mask_loss: 0.2296

Model Prediction

Prediction also needs a configuration class, InferenceConfig; most of its settings are the same as for training:

class InferenceConfig(ShapesConfig):
    GPU_COUNT = 1
    IMAGES_PER_GPU = 1

inference_config = InferenceConfig()

# Recreate the model in inference mode
model = modellib.MaskRCNN(mode="inference",
                          config=inference_config,
                          model_dir=MODEL_DIR)

# Get the path to the saved weights, or set it manually
# model_path = os.path.join(ROOT_DIR, ".h5 file name here")
model_path = model.find_last()[1]

# Load the weights
assert model_path != "", "Provide path to trained weights"
print("Loading weights from ", model_path)
model.load_weights(model_path, by_name=True)

# Test on a random image
image_id = random.choice(dataset_val.image_ids)
original_image, image_meta, gt_bbox, gt_mask =\
    modellib.load_image_gt(dataset_val, inference_config,
                           image_id, use_mini_mask=False)

log("original_image", original_image)
log("image_meta", image_meta)
log("gt_bbox", gt_bbox)
log("gt_mask", gt_mask)

visualize.display_instances(original_image, gt_bbox[:,:4], gt_mask, gt_bbox[:,4],
                            dataset_train.class_names, figsize=(8, 8))

>>>

>>>

original_image shape: (128, 128, 3) min: 18.00000 max: 231.00000

image_meta shape: (12,) min: 0.00000 max: 128.00000

gt_bbox shape: (2, 5) min: 1.00000 max: 115.00000

gt_mask shape: (128, 128, 2) min: 0.00000 max: 1.00000

A random image from the validation set:

Run the model on it:

def get_ax(rows=1, cols=1, size=8):
    """Return a Matplotlib Axes array for visualization; a central place to control figure size."""
    _, ax = plt.subplots(rows, cols, figsize=(size*cols, size*rows))
    return ax

results = model.detect([original_image], verbose=1)  # predict
r = results[0]
visualize.display_instances(original_image, r['rois'], r['masks'], r['class_ids'],
                            dataset_val.class_names, r['scores'], ax=get_ax())

>>>

>>>

Processing 1 images

image shape: (128, 128, 3) min: 18.00000 max: 231.00000

molded_images shape: (1, 128, 128, 3) min: -98.80000 max: 127.10000

image_metas shape: (1, 12) min: 0.00000 max: 128.00000

Compute the AP:

# Compute VOC-Style mAP @ IoU=0.5
# Running on 10 images. Increase for better accuracy.
image_ids = np.random.choice(dataset_val.image_ids, 10)
APs = []
for image_id in image_ids:
    # Load the data
    image, image_meta, gt_bbox, gt_mask =\
        modellib.load_image_gt(dataset_val, inference_config,
                               image_id, use_mini_mask=False)
    molded_images = np.expand_dims(modellib.mold_image(image, inference_config), 0)
    # Run object detection
    results = model.detect([image], verbose=0)
    r = results[0]
    # Compute AP
    AP, precisions, recalls, overlaps =\
        utils.compute_ap(gt_bbox[:,:4], gt_bbox[:,4],
                         r["rois"], r["class_ids"], r["scores"])
    APs.append(AP)

print("mAP: ", np.mean(APs))

>>>

>>>

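mAP: 0.9

Code Analysis: Inspecting the Mask R-CNN Model

Test, debug, and evaluate the Mask R-CNN model.

Imports

This section uses the custom COCO subsets: the 5K minival and the 35K validation-minus-minival. (These two subsets download slowly, so I linked my CSDN uploads instead of the original addresses; message me if you do not have enough points to download them.)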

import os

import sys

import random

import math

import re

import time

import numpy as np

import scipy.misc

import tensorflow as tf

import matplotlib

import matplotlib.pyplot as plt

import matplotlib.patches as patches

import utils

import visualize

from visualize import display_images

import model as modellib

from model import log

%matplotlib inline

ROOT_DIR = os.getcwd() # Root directory of the project

MODEL_DIR = os.path.join(ROOT_DIR, "logs") # Directory to save logs and trained model

COCO_MODEL_PATH = os.path.join(ROOT_DIR, "coco/mask_rcnn_coco.h5") # Path to trained weights file

SHAPES_MODEL_PATH = os.path.join(ROOT_DIR, "logs/shapes20171103T2047/mask_rcnn_shapes_0002.h5") # Path to Shapes trained weights

# Shapes toy dataset

# import shapes

# config = shapes.ShapesConfig()

# MS COCO Dataset

import coco

config = coco.CocoConfig()

COCO_DIR = os.path.join(ROOT_DIR, "coco") # TODO: enter value here

def get_ax(rows=1, cols=1, size=16):
    """Control the figure size."""
    _, ax = plt.subplots(rows, cols, figsize=(size*cols, size*rows))
    return ax

# Create an InferenceConfig class for testing the pre-trained model
class InferenceConfig(config.__class__):
    # Run detection on one image at a time
    GPU_COUNT = 1
    IMAGES_PER_GPU = 1

config = InferenceConfig()

DEVICE = "/cpu:0"  # /cpu:0 or /gpu:0
TEST_MODE = "inference"  # values: 'inference' or 'training'

# Load the validation set
if config.NAME == 'shapes':
    dataset = shapes.ShapesDataset()
    dataset.load_shapes(500, config.IMAGE_SHAPE[0], config.IMAGE_SHAPE[1])
elif config.NAME == "coco":
    dataset = coco.CocoDataset()
    dataset.load_coco(COCO_DIR, "minival")

# Must call before using the dataset
dataset.prepare()

# Create the model in inference mode
with tf.device(DEVICE):
    model = modellib.MaskRCNN(mode="inference", model_dir=MODEL_DIR,
                              config=config)

# Set weights file path
if config.NAME == "shapes":
    weights_path = SHAPES_MODEL_PATH
elif config.NAME == "coco":
    weights_path = COCO_MODEL_PATH
# Or, uncomment to load the last model you trained
# weights_path = model.find_last()[1]

# Load weights
print("Loading weights ", weights_path)
model.load_weights(weights_path, by_name=True)

image_id = random.choice(dataset.image_ids)

image, image_meta, gt_bbox, gt_mask =\

modellib.load_image_gt(dataset, config, image_id, use_mini_mask=False)

info = dataset.image_info[image_id]

print("image ID: {}.{} ({}) {}".format(info["source"], info["id"], image_id,

dataset.image_reference(image_id)))

gt_class_id = gt_bbox[:, 4]

# Run object detection

results = model.detect([image], verbose=1)

# Display results

ax = get_ax(1)

r = results[0]

# visualize.display_instances(image, gt_bbox[:,:4], gt_mask, gt_bbox[:,4],

# dataset.class_names, ax=ax[0], title="Ground Truth")

visualize.display_instances(image, r['rois'], r['masks'], r['class_ids'],

dataset.class_names, r['scores'], ax=ax,

title="Predictions")

log("gt_class_id", gt_class_id)

log("gt_bbox", gt_bbox)

log("gt_mask", gt_mask)

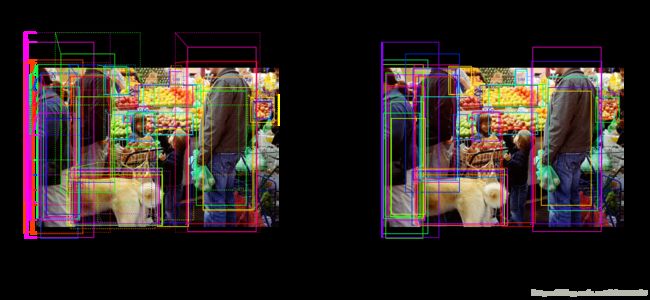

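A random image picked from the dataset:

Region Proposal Network (RPN)

The RPN's job is to propose object regions. From the Selective Search used in R-CNN to the anchors used in Faster R-CNN, the aim has been to produce better RoIs faster.

The RPN lays a large number of boxes (anchors) over the image and runs a lightweight binary classifier on them that returns object / no-object scores. High-scoring anchors (positive anchors) are passed to the next stage for classification.

Positive anchors usually do not cover the object exactly, so alongside the score the RPN also regresses an offset and a scale factor that correct the anchor's position and size.

RPN Target

The RPN targets identify the anchors that contain objects, which are passed on to the rest of the model for classification and the other tasks. The RPN covers the whole image with anchors of various shapes and computes the IoU between each anchor and the annotated ground-truth (GT) boxes. Anchors with IoU ≥ 0.7 are positive, anchors with IoU ≤ 0.3 are negative, and those in between are neutral and are not used in training.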
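The labelling rule can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions: it applies only the two IoU thresholds from the paragraph above and leaves out anything else the real target builder does (for example balancing the number of positive and negative anchors). The names `iou_matrix` and `label_anchors` are hypothetical.

```python
import numpy as np

def iou_matrix(anchors, gt_boxes):
    """IoU between every anchor and every GT box; boxes are [y1, x1, y2, x2]."""
    a = np.asarray(anchors, dtype=np.float64)[:, None, :]    # (A, 1, 4)
    g = np.asarray(gt_boxes, dtype=np.float64)[None, :, :]   # (1, G, 4)
    inter_h = np.clip(np.minimum(a[..., 2], g[..., 2]) - np.maximum(a[..., 0], g[..., 0]), 0, None)
    inter_w = np.clip(np.minimum(a[..., 3], g[..., 3]) - np.maximum(a[..., 1], g[..., 1]), 0, None)
    inter = inter_h * inter_w
    area_a = (a[..., 2] - a[..., 0]) * (a[..., 3] - a[..., 1])
    area_g = (g[..., 2] - g[..., 0]) * (g[..., 3] - g[..., 1])
    return inter / (area_a + area_g - inter)

def label_anchors(anchors, gt_boxes, pos=0.7, neg=0.3):
    """Return 1 (positive), -1 (negative) or 0 (neutral) for each anchor."""
    best = iou_matrix(anchors, gt_boxes).max(axis=1)   # best IoU per anchor
    labels = np.zeros(len(anchors), dtype=np.int32)
    labels[best >= pos] = 1
    labels[best <= neg] = -1
    return labels

gt = [[0, 0, 100, 100]]
anchors = [[0, 0, 100, 100],      # IoU 1.0 -> positive
           [0, 0, 100, 50],       # IoU 0.5 -> neutral
           [200, 200, 300, 300]]  # IoU 0.0 -> negative
labels = label_anchors(anchors, gt)
```

The 1 / -1 / 0 encoding mirrors the one used for target_rpn_match in the notebook code.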

As noted above, the RPN also regresses an offset and a scale factor during training. They correct the anchor's position and size so that it covers the ground truth better.
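How an offset and a scale factor refine a box can be sketched as follows. The parameterization assumed here is the common R-CNN one, [dy, dx, log(dh), log(dw)] with the shifts expressed as fractions of the box height and width; the helper name `apply_deltas` is made up for this illustration.

```python
import numpy as np

def apply_deltas(boxes, deltas):
    """Refine boxes [y1, x1, y2, x2] with deltas [dy, dx, log(dh), log(dw)]."""
    boxes = np.asarray(boxes, dtype=np.float64)
    deltas = np.asarray(deltas, dtype=np.float64)
    h = boxes[:, 2] - boxes[:, 0]
    w = boxes[:, 3] - boxes[:, 1]
    cy = boxes[:, 0] + 0.5 * h
    cx = boxes[:, 1] + 0.5 * w
    # Shift the center by a fraction of the box size.
    cy = cy + deltas[:, 0] * h
    cx = cx + deltas[:, 1] * w
    # Scale height and width.
    h = h * np.exp(deltas[:, 2])
    w = w * np.exp(deltas[:, 3])
    y1 = cy - 0.5 * h
    x1 = cx - 0.5 * w
    return np.stack([y1, x1, y1 + h, x1 + w], axis=1)

anchor = [[10, 10, 30, 50]]   # height 20, width 40
refined = apply_deltas(anchor, [[0.5, 0.0, np.log(2.0), 0.0]])
unchanged = apply_deltas(anchor, [[0.0, 0.0, 0.0, 0.0]])
```

In the example the center moves down by half the box height and the height doubles, so the anchor [10, 10, 30, 50] becomes roughly [10, 10, 50, 50].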

# Generate RPN training targets

# target_rpn_match: 1 = positive anchors, -1 = negative, 0 = neutral.

target_rpn_match, target_rpn_bbox = modellib.build_rpn_targets(

image.shape, model.anchors, gt_bbox, model.config)

log("target_rpn_match", target_rpn_match)

log("target_rpn_bbox", target_rpn_bbox)

# Split the anchors by label

positive_anchor_ix = np.where(target_rpn_match[:] == 1)[0]

negative_anchor_ix = np.where(target_rpn_match[:] == -1)[0]

neutral_anchor_ix = np.where(target_rpn_match[:] == 0)[0]

positive_anchors = model.anchors[positive_anchor_ix]

negative_anchors = model.anchors[negative_anchor_ix]

neutral_anchors = model.anchors[neutral_anchor_ix]

log("positive_anchors", positive_anchors)

log("negative_anchors", negative_anchors)

log("neutral anchors", neutral_anchors)

# Refine the positive anchors

refined_anchors = utils.apply_box_deltas(

positive_anchors,

target_rpn_bbox[:positive_anchors.shape[0]] * model.config.RPN_BBOX_STD_DEV)

log("refined_anchors", refined_anchors, )

>>>

>>>

target_rpn_match shape: (65472,) min: -1.00000 max: 1.00000

target_rpn_bbox shape: (256, 4) min: -3.66348 max: 7.29204

positive_anchors shape: (19, 4) min: -53.01934 max: 1030.62742

negative_anchors shape: (237, 4) min: -90.50967 max: 1038.62742

neutral anchors shape: (65216, 4) min: -362.03867 max: 1258.03867

refined_anchors shape: (19, 4) min: -0.00000 max: 1024.00000

Look at the positive anchors before and after refinement:

visualize.draw_boxes(image, boxes=positive_anchors, refined_boxes=refined_anchors, ax=get_ax())

RPN Prediction

# Run RPN sub-graph

pillar = model.keras_model.get_layer("ROI").output # node to start searching from

rpn = model.run_graph([image], [

("rpn_class", model.keras_model.get_layer("rpn_class").output),

("pre_nms_anchors", model.ancestor(pillar, "ROI/pre_nms_anchors:0")),

("refined_anchors", model.ancestor(pillar, "ROI/refined_anchors:0")),

("refined_anchors_clipped", model.ancestor(pillar, "ROI/refined_anchors_clipped:0")),

("post_nms_anchor_ix", model.ancestor(pillar, "ROI/rpn_non_max_suppression:0")),

("proposals", model.keras_model.get_layer("ROI").output),

])

>>>

>>>

rpn_class shape: (1, 65472, 2) min: 0.00000 max: 1.00000

pre_nms_anchors shape: (1, 10000, 4) min: -362.03867 max: 1258.03870

refined_anchors shape: (1, 10000, 4) min: -1030.40588 max: 2164.92578

refined_anchors_clipped shape: (1, 10000, 4) min: 0.00000 max: 1024.00000

post_nms_anchor_ix shape: (1000,) min: 0.00000 max: 1879.00000

proposals shape: (1, 1000, 4) min: 0.00000 max: 1.00000
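The value ranges in the output above show the effect of two simple steps: the refined anchors range well outside the image (about -1030 to 2165), the clipped ones stay within 0 to 1024, and the proposals lie in 0 to 1. A minimal sketch of clipping and normalization follows. The helper names are made up for illustration, and the normalization is assumed to be a plain division by `[h, w, h, w]`, which matches the `* np.array([h, w, h, w])` conversion used for display in this walkthrough.

```python
import numpy as np

def clip_boxes(boxes, window):
    """Clip [N, (y1, x1, y2, x2)] boxes to window (y1, x1, y2, x2)."""
    wy1, wx1, wy2, wx2 = window
    clipped = boxes.astype(np.float32).copy()
    clipped[:, [0, 2]] = np.clip(clipped[:, [0, 2]], wy1, wy2)
    clipped[:, [1, 3]] = np.clip(clipped[:, [1, 3]], wx1, wx2)
    return clipped

def normalize_boxes(boxes, height, width):
    """Convert pixel coordinates to the [0, 1] range (assumed simple division)."""
    return boxes / np.array([height, width, height, width], dtype=np.float32)

boxes = np.array([[-30.0, 100.0, 500.0, 1200.0]])
clipped = clip_boxes(boxes, (0, 0, 1024, 1024))
normalized = normalize_boxes(clipped, 1024, 1024)
print(clipped)
print(normalized)
```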

Look at the top-scoring anchors (before refinement):

limit = 100

sorted_anchor_ids = np.argsort(rpn['rpn_class'][:,:,1].flatten())[::-1]

visualize.draw_boxes(image, boxes=model.anchors[sorted_anchor_ids[:limit]], ax=get_ax())

Next, look at the top-scoring anchors after refinement. Boxes that extend past the image boundary are clipped:

limit = 50

ax = get_ax(1, 2)

visualize.draw_boxes(image, boxes=rpn["pre_nms_anchors"][0, :limit],

refined_boxes=rpn["refined_anchors"][0, :limit], ax=ax[0])

visualize.draw_boxes(image, refined_boxes=rpn["refined_anchors_clipped"][0, :limit], ax=ax[1])

Apply non-maximum suppression to the anchors above:

limit = 50

ixs = rpn["post_nms_anchor_ix"][:limit]

visualize.draw_boxes(image, refined_boxes=rpn["refined_anchors_clipped"][0, ixs], ax=get_ax())

The final proposals are the same boxes as in the previous step, except that their coordinates are normalized:

limit = 50

# Convert back to image coordinates for display

h, w = config.IMAGE_SHAPE[:2]

proposals = rpn['proposals'][0, :limit] * np.array([h, w, h, w])

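visualize.draw_boxes(image, refined_boxes=proposals, ax=get_ax())

Measure the RPN recall (the fraction of ground-truth objects covered by anchors). Recall is computed here for three sets of boxes:

- All anchors

- All refined anchors

- Refined anchors after non-maximum suppression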

iou_threshold = 0.7

recall, positive_anchor_ids = utils.compute_recall(model.anchors, gt_bbox, iou_threshold)

print("All Anchors ({:5}) Recall: {:.3f} Positive anchors: {}".format(

model.anchors.shape[0], recall, len(positive_anchor_ids)))

recall, positive_anchor_ids = utils.compute_recall(rpn['refined_anchors'][0], gt_bbox, iou_threshold)

print("Refined Anchors ({:5}) Recall: {:.3f} Positive anchors: {}".format(

rpn['refined_anchors'].shape[1], recall, len(positive_anchor_ids)))

recall, positive_anchor_ids = utils.compute_recall(proposals, gt_bbox, iou_threshold)

print("Post NMS Anchors ({:5}) Recall: {:.3f} Positive anchors: {}".format(

proposals.shape[0], recall, len(positive_anchor_ids)))

>>>

>>>

All Anchors (65472) Recall: 0.263 Positive anchors: 5

Refined Anchors (10000) Recall: 0.895 Positive anchors: 126

Post NMS Anchors ( 50) Recall: 0.526 Positive anchors: 12
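To make the recall numbers above concrete, here is a minimal NumPy sketch of one way to compute them: a box counts as positive if its best IoU with any ground-truth box reaches the threshold, and recall is the fraction of ground-truth boxes matched by at least one positive box. The function names are illustrative, and the logic is an assumption about what such a metric typically does, not a copy of `utils.compute_recall`.

```python
import numpy as np

def iou_matrix(boxes1, boxes2):
    """Pairwise IoU between [N, 4] and [M, 4] boxes in (y1, x1, y2, x2) order."""
    b1 = boxes1[:, None, :].astype(np.float64)
    b2 = boxes2[None, :, :].astype(np.float64)
    inter_h = np.maximum(
        np.minimum(b1[..., 2], b2[..., 2]) - np.maximum(b1[..., 0], b2[..., 0]), 0)
    inter_w = np.maximum(
        np.minimum(b1[..., 3], b2[..., 3]) - np.maximum(b1[..., 1], b2[..., 1]), 0)
    inter = inter_h * inter_w
    area1 = (b1[..., 2] - b1[..., 0]) * (b1[..., 3] - b1[..., 1])
    area2 = (b2[..., 2] - b2[..., 0]) * (b2[..., 3] - b2[..., 1])
    return inter / (area1 + area2 - inter)

def recall_sketch(pred_boxes, gt_boxes, iou_threshold):
    """Return (recall, indices of predictions that match some GT box)."""
    overlaps = iou_matrix(pred_boxes, gt_boxes)
    best_iou = overlaps.max(axis=1)
    best_gt = overlaps.argmax(axis=1)
    positive_ids = np.where(best_iou >= iou_threshold)[0]
    matched_gt = set(best_gt[positive_ids].tolist())
    return len(matched_gt) / gt_boxes.shape[0], positive_ids

gt = np.array([[0, 0, 10, 10], [20, 20, 40, 40]])
pred = np.array([[0, 0, 10, 9], [0, 0, 10, 10], [100, 100, 110, 110]])
recall, positive_ids = recall_sketch(pred, gt, iou_threshold=0.7)
print(recall, positive_ids)
```

In this toy case two predictions cover the same ground-truth box and the second object is missed, so there are two positive boxes but only half of the objects are recalled. This is why the positive count and the recall in the output above can move independently.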

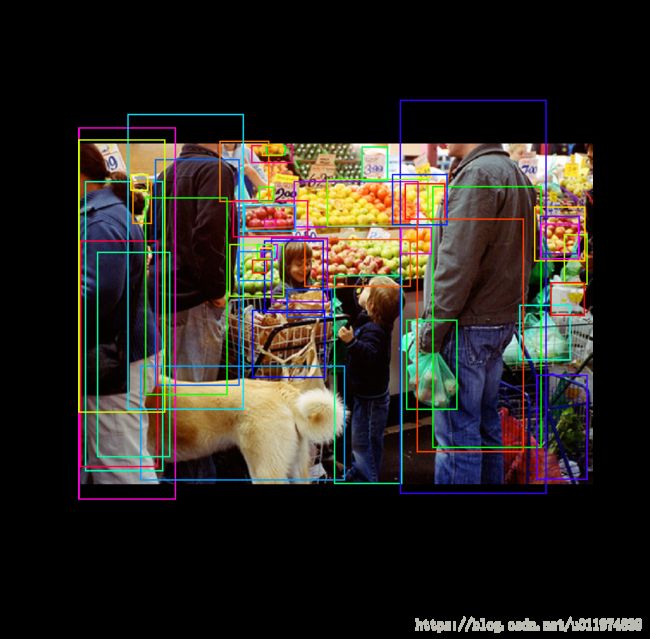

Classifying Proposals

The RPN stage above generated the region proposals; this stage classifies them.

Proposal Classification

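The proposals selected by the RPN are passed to the classification head, which outputs a class probability distribution and bbox regression deltas for each proposal.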

# Get input and output to classifier and mask heads.

mrcnn = model.run_graph([image], [

("proposals", model.keras_model.get_layer("ROI").output),

("probs", model.keras_model.get_layer("mrcnn_class").output),

("deltas", model.keras_model.get_layer("mrcnn_bbox").output),

("masks", model.keras_model.get_layer("mrcnn_mask").output),

("detections", model.keras_model.get_layer("mrcnn_detection").output),

])

>>>

>>>

proposals shape: (1, 1000, 4) min: 0.00000 max: 1.00000

probs shape: (1, 1000, 81) min: 0.00000 max: 0.99825

deltas shape: (1, 1000, 81, 4) min: -3.31265 max: 2.86541

masks shape: (1, 100, 28, 28, 81) min: 0.00003 max: 0.99986

detections shape: (1, 100, 6) min: 0.00000 max: 930.00000

Get the detected class IDs and trim the zero padding:

det_class_ids = mrcnn['detections'][0, :, 4].astype(np.int32)

det_count = np.where(det_class_ids == 0)[0][0]

det_class_ids = det_class_ids[:det_count]

detections = mrcnn['detections'][0, :det_count]

print("{} detections: {}".format(

det_count, np.array(dataset.class_names)[det_class_ids]))

captions = ["{} {:.3f}".format(dataset.class_names[int(c)], s) if c > 0 else ""

for c, s in zip(detections[:, 4], detections[:, 5])]

visualize.draw_boxes(

image,

refined_boxes=detections[:, :4],

visibilities=[2] * len(detections),

captions=captions, title="Detections",

ax=get_ax())

>>>

>>>

11 detections: ['person' 'person' 'person' 'person' 'person' 'orange' 'person' 'orange'

'dog' 'handbag' 'apple']

Step by Step Detection

# Proposals are in normalized coordinates; scale them back to image coordinates

h, w = config.IMAGE_SHAPE[:2]

proposals = np.around(mrcnn["proposals"][0] * np.array([h, w, h, w])).astype(np.int32)

# Class ID, score, and mask per proposal

roi_class_ids = np.argmax(mrcnn["probs"][0], axis=1)

roi_scores = mrcnn["probs"][0, np.arange(roi_class_ids.shape[0]), roi_class_ids]

roi_class_names = np.array(dataset.class_names)[roi_class_ids]

roi_positive_ixs = np.where(roi_class_ids > 0)[0]

# How many ROIs vs empty rows?

print("{} Valid proposals out of {}".format(np.sum(np.any(proposals, axis=1)), proposals.shape[0]))

print("{} Positive ROIs".format(len(roi_positive_ixs)))

# Class counts

print(list(zip(*np.unique(roi_class_names, return_counts=True))))

>>>

>>>

1000 Valid proposals out of 1000

106 Positive ROIs

[('BG', 894), ('apple', 25), ('cup', 2), ('dog', 4), ('handbag', 2), ('orange', 36), ('person', 36), ('sandwich', 1)]
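The per-proposal class, score, and class-specific deltas above are all selected with the same NumPy pattern: take the argmax over the class axis, then use paired row and class indices to pick one entry per proposal. A small self-contained illustration with made-up numbers (3 proposals, 3 classes, class 0 standing for background):

```python
import numpy as np

# Toy classifier outputs: class probabilities and per-class box deltas
probs = np.array([[0.7, 0.2, 0.1],
                  [0.1, 0.8, 0.1],
                  [0.2, 0.3, 0.5]])
deltas = np.arange(3 * 3 * 4, dtype=np.float32).reshape(3, 3, 4)

rows = np.arange(probs.shape[0])
class_ids = np.argmax(probs, axis=1)       # best class per proposal
scores = probs[rows, class_ids]            # probability of that class
class_deltas = deltas[rows, class_ids]     # deltas for that class only
positive_ixs = np.where(class_ids > 0)[0]  # proposals not labeled background

print(class_ids, scores, positive_ixs)
print(class_deltas.shape)
```

Indexing with `[rows, class_ids]` pairs the two index arrays element by element, so the result has one row per proposal rather than a full cross product.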

Look at a random sample of proposals. Background (BG) proposals get no caption; the others are labeled with their class and score:

limit = 200

ixs = np.random.randint(0, proposals.shape[0], limit)

captions = ["{} {:.3f}".format(dataset.class_names[c], s) if c > 0 else ""

for c, s in zip(roi_class_ids[ixs], roi_scores[ixs])]

visualize.draw_boxes(image, boxes=proposals[ixs],

visibilities=np.where(roi_class_ids[ixs] > 0, 2, 1),

captions=captions, title="ROIs Before Refinement",

ax=get_ax())

Refine the bounding boxes:

# Class-specific bounding box shifts.

roi_bbox_specific = mrcnn["deltas"][0, np.arange(proposals.shape[0]), roi_class_ids]

log("roi_bbox_specific", roi_bbox_specific)

# Apply bounding box transformations

# Shape: [N, (y1, x1, y2, x2)]

refined_proposals = utils.apply_box_deltas(

proposals, roi_bbox_specific * config.BBOX_STD_DEV).astype(np.int32)

log("refined_proposals", refined_proposals)

# Show positive proposals

# ids = np.arange(roi_boxes.shape[0]) # Display all

limit = 5

ids = np.random.randint(0, len(roi_positive_ixs), limit) # Display random sample

captions = ["{} {:.3f}".format(dataset.class_names[c], s) if c > 0 else ""

for c, s in zip(roi_class_ids[roi_positive_ixs][ids], roi_scores[roi_positive_ixs][ids])]

visualize.draw_boxes(image, boxes=proposals[roi_positive_ixs][ids],

refined_boxes=refined_proposals[roi_positive_ixs][ids],

visibilities=np.where(roi_class_ids[roi_positive_ixs][ids] > 0, 1, 0),

captions=captions, title="ROIs After Refinement",

ax=get_ax())

>>>

>>>

roi_bbox_specific shape: (1000, 4) min: -3.31265 max: 2.86541

refined_proposals shape: (1000, 4) min: -1.00000 max: 1024.00000

Filter out low-confidence detections:

# Remove boxes classified as background

keep = np.where(roi_class_ids > 0)[0]

print("Keep {} detections:\n{}".format(keep.shape[0], keep))

# Remove low confidence detections

keep = np.intersect1d(keep, np.where(roi_scores >= config.DETECTION_MIN_CONFIDENCE)[0])

print("Remove boxes below {} confidence. Keep {}:\n{}".format(

config.DETECTION_MIN_CONFIDENCE, keep.shape[0], keep))

>>>

>>>

Keep 106 detections:

[ 0 1 2 3 4 5 6 7 9 10 11 12 13 14 15 16 17 18

19 22 23 24 25 26 27 28 31 34 35 36 37 38 41 43 47 51

56 65 66 67 68 71 73 75 82 87 91 92 101 102 105 109 110 115

117 120 123 138 156 164 171 175 177 184 197 205 241 253 258 263 265 280

287 325 367 430 451 452 464 469 491 514 519 527 554 597 610 686 697 712

713 748 750 780 815 871 911 917 933 938 942 947 949 953 955 981]

Remove boxes below 0.7 confidence. Keep 44:

[ 0 1 2 3 4 5 6 9 12 13 14 17 19 26 31 34 38 41

43 47 67 75 82 87 92 120 123 164 171 175 177 205 258 325 452 469

519 697 713 815 871 911 917 949]

Apply non-maximum suppression:

# Apply per-class non-max suppression

pre_nms_boxes = refined_proposals[keep]

pre_nms_scores = roi_scores[keep]

pre_nms_class_ids = roi_class_ids[keep]

nms_keep = []

for class_id in np.unique(pre_nms_class_ids):

    # Pick detections of this class

    ixs = np.where(pre_nms_class_ids == class_id)[0]

    # Apply NMS

    class_keep = utils.non_max_suppression(pre_nms_boxes[ixs],

                                           pre_nms_scores[ixs],

                                           config.DETECTION_NMS_THRESHOLD)

    # Map indices back to positions in the full proposal list

    class_keep = keep[ixs[class_keep]]

    nms_keep = np.union1d(nms_keep, class_keep)

    print("{:22}: {} -> {}".format(dataset.class_names[class_id][:20],

                                   keep[ixs], class_keep))

keep = np.intersect1d(keep, nms_keep).astype(np.int32)

print("\nKept after per-class NMS: {}\n{}".format(keep.shape[0], keep))

>>>

>>>

person : [ 0 1 2 3 5 9 12 13 14 19 26 41 43 47 82 92 120 123

175 177 258 452 469 519 871 911 917] -> [ 5 12 1 2 3 19]

dog : [ 6 75 171] -> [75]

handbag : [815] -> [815]

apple : [38] -> [38]

orange : [ 4 17 31 34 67 87 164 205 325 697 713 949] -> [ 4 87]

Kept after per-class NMS: 11

[ 1 2 3 4 5 12 19 38 75 87 815]
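The per-class suppression above can be sketched without the library. The following is a minimal greedy NMS in NumPy, run separately for each class so that overlapping boxes of different classes do not suppress each other. The function names and the threshold value 0.3 are illustrative assumptions, and this is not claimed to match `utils.non_max_suppression` line for line.

```python
import numpy as np

def nms_sketch(boxes, scores, threshold):
    """Greedy NMS: keep the best box, drop boxes whose IoU with it exceeds threshold."""
    boxes = boxes.astype(np.float64)
    area = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    order = np.argsort(scores)[::-1]  # highest score first
    keep = []
    while order.size > 0:
        i = order[0]
        keep.append(i)
        rest = order[1:]
        y1 = np.maximum(boxes[i, 0], boxes[rest, 0])
        x1 = np.maximum(boxes[i, 1], boxes[rest, 1])
        y2 = np.minimum(boxes[i, 2], boxes[rest, 2])
        x2 = np.minimum(boxes[i, 3], boxes[rest, 3])
        inter = np.maximum(y2 - y1, 0) * np.maximum(x2 - x1, 0)
        iou = inter / (area[i] + area[rest] - inter)
        order = rest[iou <= threshold]
    return np.array(keep, dtype=np.int32)

def per_class_nms(boxes, scores, class_ids, threshold):
    """Run NMS independently within each class; return sorted kept indices."""
    keep = []
    for class_id in np.unique(class_ids):
        ixs = np.where(class_ids == class_id)[0]
        class_keep = nms_sketch(boxes[ixs], scores[ixs], threshold)
        keep.extend(ixs[class_keep].tolist())  # map back to original indices
    return np.sort(np.array(keep, dtype=np.int32))

boxes = np.array([[0, 0, 10, 10], [1, 1, 11, 11], [0, 0, 10, 10], [50, 50, 60, 60]])
scores = np.array([0.9, 0.8, 0.95, 0.7])
class_ids = np.array([1, 1, 2, 1])
kept = per_class_nms(boxes, scores, class_ids, threshold=0.3)
print(kept)
```

Box 1 is dropped because it overlaps the higher-scoring box 0 of the same class, while box 2 survives even though it coincides with box 0, since it belongs to a different class.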

Look at the final result:

ixs = np.arange(len(keep)) # Display all

# ixs = np.random.randint(0, len(keep), 10) # Display random sample

captions = ["{} {:.3f}".format(dataset.class_names[c], s) if c > 0 else ""

for c, s in zip(roi_class_ids[keep][ixs], roi_scores[keep][ixs])]

visualize.draw_boxes(

image, boxes=proposals[keep][ixs],

refined_boxes=refined_proposals[keep][ixs],

visibilities=np.where(roi_class_ids[keep][ixs] > 0, 1, 0),

captions=captions, title="Detections after NMS",

ax=get_ax())

Generating Masks

This stage takes the detections from the previous stage and runs the mask head to produce a segmentation mask for each instance.

Mask Target

These are the training targets for the mask branch:

display_images(np.transpose(gt_mask, [2, 0, 1]), cmap="Blues")

Predicted Masks

# Get predictions of mask head

mrcnn = model.run_graph([image], [

("detections", model.keras_model.get_layer("mrcnn_detection").output),

("masks", model.keras_model.get_layer("mrcnn_mask").output),

])

# Get detection class IDs. Trim zero padding.

det_class_ids = mrcnn['detections'][0, :, 4].astype(np.int32)

det_count = np.where(det_class_ids == 0)[0][0]

det_class_ids = det_class_ids[:det_count]

print("{} detections: {}".format(

det_count, np.array(dataset.class_names)[det_class_ids]))

# Masks

det_boxes = mrcnn["detections"][0, :, :4].astype(np.int32)

det_mask_specific = np.array([mrcnn["masks"][0, i, :, :, c]

for i, c in enumerate(det_class_ids)])

det_masks = np.array([utils.unmold_mask(m, det_boxes[i], image.shape)

for i, m in enumerate(det_mask_specific)])

log("det_mask_specific", det_mask_specific)

log("det_masks", det_masks)

display_images(det_mask_specific[:4] * 255, cmap="Blues", interpolation="none")

>>>

>>>

detections shape: (1, 100, 6) min: 0.00000 max: 930.00000

masks shape: (1, 100, 28, 28, 81) min: 0.00003 max: 0.99986

11 detections: ['person' 'person' 'person' 'person' 'person' 'orange' 'person' 'orange'

'dog' 'handbag' 'apple']

det_mask_specific shape: (11, 28, 28) min: 0.00016 max: 0.99985

det_masks shape: (11, 1024, 1024) min: 0.00000 max: 1.00000

display_images(det_masks[:4] * 255, cmap="Blues", interpolation="none")
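The step from the 28x28 `det_mask_specific` outputs to the full-size `det_masks` can be illustrated as: resize the small soft mask to the size of its detection box, threshold it, and paste it into an empty image-sized canvas. The sketch below makes two simplifying assumptions that are not taken from the source: it uses nearest-neighbor resizing to stay NumPy-only (a real implementation would more likely use bilinear interpolation from an image library), and it assumes a threshold of 0.5. The helper names are made up for this example.

```python
import numpy as np

def resize_nearest(mask, out_h, out_w):
    """Nearest-neighbor resize of a 2D array (stand-in for bilinear resizing)."""
    rows = np.minimum((np.arange(out_h) * mask.shape[0] / out_h).astype(int),
                      mask.shape[0] - 1)
    cols = np.minimum((np.arange(out_w) * mask.shape[1] / out_w).astype(int),
                      mask.shape[1] - 1)
    return mask[rows[:, None], cols[None, :]]

def unmold_mask_sketch(mask, bbox, image_shape, threshold=0.5):
    """Place a small soft mask into a full-size binary mask.

    mask: [h, w] float scores; bbox: integer (y1, x1, y2, x2) in image pixels.
    """
    y1, x1, y2, x2 = bbox
    full = np.zeros(image_shape[:2], dtype=np.uint8)
    if y2 <= y1 or x2 <= x1:
        return full  # degenerate box: nothing to paste
    resized = resize_nearest(mask, y2 - y1, x2 - x1)
    full[y1:y2, x1:x2] = (resized >= threshold).astype(np.uint8)
    return full

# Toy 28x28 mask whose right half is confidently foreground
small = np.zeros((28, 28), dtype=np.float32)
small[:, 14:] = 0.9
full = unmold_mask_sketch(small, (10, 20, 66, 76), (100, 100, 3))
print(full.shape, full.sum())
```

The result is binary and image-sized, which is consistent with the `det_masks` log above reporting a minimum of 0 and a maximum of 1 at 1024x1024.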