Using Common Python Web-Scraping Libraries

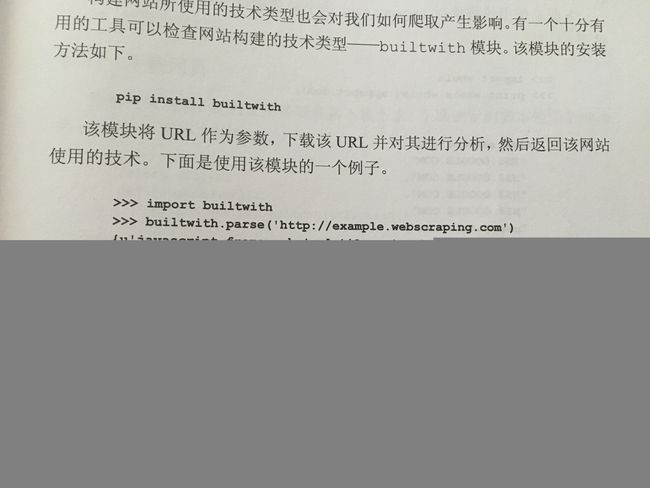

Using builtwith

Using the Requests library

An introductory example

    import requests

    response = requests.get('https://www.baidu.com/')
    print(type(response))
    print(response.status_code)
    print(type(response.text))
    print(response.text)
    print(response.cookies)

Request methods

    import requests

    requests.post('http://httpbin.org/post')
    requests.put('http://httpbin.org/put')
    requests.delete('http://httpbin.org/delete')
    requests.head('http://httpbin.org/get')
    requests.options('http://httpbin.org/get')

Basic GET requests

Basic usage

    import requests

    response = requests.get('http://httpbin.org/get')
    print(response.text)

GET requests with parameters

    import requests

    # Parameters written directly into the query string
    response = requests.get("http://httpbin.org/get?name=germey&age=22")
    print(response.text)

    import requests

    # The same request, with the parameters passed as a dict
    data = {
        'name': 'germey',
        'age': 22
    }
    response = requests.get("http://httpbin.org/get", params=data)
    print(response.text)

Parsing JSON

    import requests
    import json

    response = requests.get("http://httpbin.org/get")
    print(type(response.text))
    print(response.json())
    print(json.loads(response.text))
    print(type(response.json()))

Fetching binary data

    import requests

    response = requests.get("https://github.com/favicon.ico")
    print(type(response.text), type(response.content))
    print(response.text)
    print(response.content)

    import requests

    response = requests.get("https://github.com/favicon.ico")
    # The with block closes the file automatically
    with open('favicon.ico', 'wb') as f:
        f.write(response.content)

Adding headers

    import requests

    # Request sent with the default headers
    response = requests.get("https://www.zhihu.com/explore")
    print(response.text)

    import requests

    # Request sent with a browser User-Agent
    headers = {
        'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_4) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36'
    }
    response = requests.get("https://www.zhihu.com/explore", headers=headers)
    print(response.text)

Basic POST requests

    import requests

    data = {'name': 'germey', 'age': '22'}
    response = requests.post("http://httpbin.org/post", data=data)
    print(response.text)

    import requests

    data = {'name': 'germey', 'age': '22'}
    headers = {
        'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_4) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36'
    }
    response = requests.post("http://httpbin.org/post", data=data, headers=headers)
    print(response.json())

Response attributes

    import requests

    response = requests.get('http://www.jianshu.com')
    print(type(response.status_code), response.status_code)
    print(type(response.headers), response.headers)
    print(type(response.cookies), response.cookies)
    print(type(response.url), response.url)
    print(type(response.history), response.history)

Checking the status code

    import requests

    response = requests.get('http://www.jianshu.com/hello.html')
    # Exit unless the server answered 404
    if response.status_code == requests.codes.not_found:
        print('404 Not Found')
    else:
        exit()

    import requests

    response = requests.get('http://www.jianshu.com')
    # Exit unless the server answered 200
    if response.status_code == 200:
        print('Request successful')
    else:
        exit()

Names available on requests.codes for each status code:

100: ('continue',),

101: ('switching_protocols',),

102: ('processing',),

103: ('checkpoint',),

122: ('uri_too_long', 'request_uri_too_long'),

200: ('ok', 'okay', 'all_ok', 'all_okay', 'all_good', '\\o/', '✓'),

201: ('created',),

202: ('accepted',),

203: ('non_authoritative_info', 'non_authoritative_information'),

204: ('no_content',),

205: ('reset_content', 'reset'),

206: ('partial_content', 'partial'),

207: ('multi_status', 'multiple_status', 'multi_stati', 'multiple_stati'),

208: ('already_reported',),

226: ('im_used',),

# Redirection.

300: ('multiple_choices',),

301: ('moved_permanently', 'moved', '\\o-'),

302: ('found',),

303: ('see_other', 'other'),

304: ('not_modified',),

305: ('use_proxy',),

306: ('switch_proxy',),

307: ('temporary_redirect', 'temporary_moved', 'temporary'),

308: ('permanent_redirect', 'resume_incomplete', 'resume',), # These 2 to be removed in 3.0

# Client Error.

400: ('bad_request', 'bad'),

401: ('unauthorized',),

402: ('payment_required', 'payment'),

403: ('forbidden',),

404: ('not_found', '-o-'),

405: ('method_not_allowed', 'not_allowed'),

406: ('not_acceptable',),

407: ('proxy_authentication_required', 'proxy_auth', 'proxy_authentication'),

408: ('request_timeout', 'timeout'),

409: ('conflict',),

410: ('gone',),

411: ('length_required',),

412: ('precondition_failed', 'precondition'),

413: ('request_entity_too_large',),

414: ('request_uri_too_large',),

415: ('unsupported_media_type', 'unsupported_media', 'media_type'),

416: ('requested_range_not_satisfiable', 'requested_range', 'range_not_satisfiable'),

417: ('expectation_failed',),

418: ('im_a_teapot', 'teapot', 'i_am_a_teapot'),

421: ('misdirected_request',),

422: ('unprocessable_entity', 'unprocessable'),

423: ('locked',),

424: ('failed_dependency', 'dependency'),

425: ('unordered_collection', 'unordered'),

426: ('upgrade_required', 'upgrade'),

428: ('precondition_required', 'precondition'),

429: ('too_many_requests', 'too_many'),

431: ('header_fields_too_large', 'fields_too_large'),

444: ('no_response', 'none'),

449: ('retry_with', 'retry'),

450: ('blocked_by_windows_parental_controls', 'parental_controls'),

451: ('unavailable_for_legal_reasons', 'legal_reasons'),

499: ('client_closed_request',),

# Server Error.

500: ('internal_server_error', 'server_error', '/o\\', '✗'),

501: ('not_implemented',),

502: ('bad_gateway',),

503: ('service_unavailable', 'unavailable'),

504: ('gateway_timeout',),

505: ('http_version_not_supported', 'http_version'),

506: ('variant_also_negotiates',),

507: ('insufficient_storage',),

509: ('bandwidth_limit_exceeded', 'bandwidth'),

510: ('not_extended',),

511: ('network_authentication_required', 'network_auth', 'network_authentication'),

File upload

    import requests

    # Open the file in binary mode and close it once the upload finishes
    with open('favicon.ico', 'rb') as f:
        files = {'file': f}
        response = requests.post("http://httpbin.org/post", files=files)
    print(response.text)

Getting cookies

    import requests

    response = requests.get("https://www.baidu.com")
    print(response.cookies)
    for key, value in response.cookies.items():
        print(key + '=' + value)

Session persistence

Simulating login

    import requests

    # Two independent calls do not share cookies
    requests.get('http://httpbin.org/cookies/set/number/123456789')
    response = requests.get('http://httpbin.org/cookies')
    print(response.text)

    import requests

    # A Session keeps cookies across requests
    s = requests.Session()
    s.get('http://httpbin.org/cookies/set/number/123456789')
    response = s.get('http://httpbin.org/cookies')
    print(response.text)

Certificate verification

    import requests

    response = requests.get('https://www.12306.cn')
    print(response.status_code)

    import requests
    from requests.packages import urllib3

    # Skip certificate verification and silence the resulting warning
    urllib3.disable_warnings()
    response = requests.get('https://www.12306.cn', verify=False)
    print(response.status_code)

    import requests

    # Supply a client certificate and key
    response = requests.get('https://www.12306.cn', cert=('/path/server.crt', '/path/key'))
    print(response.status_code)

Proxy settings

    import requests

    proxies = {
        "http": "http://127.0.0.1:9743",
        "https": "https://127.0.0.1:9743",
    }
    response = requests.get("https://www.taobao.com", proxies=proxies)
    print(response.status_code)

    import requests

    # Proxy that requires authentication (user, password and host are placeholders)
    proxies = {
        "http": "http://user:password@127.0.0.1:9743/",
    }
    response = requests.get("https://www.taobao.com", proxies=proxies)
    print(response.status_code)

SOCKS proxies need an optional dependency:

    pip3 install 'requests[socks]'

    import requests

    proxies = {
        'http': 'socks5://127.0.0.1:9742',
        'https': 'socks5://127.0.0.1:9742'
    }
    response = requests.get("https://www.taobao.com", proxies=proxies)
    print(response.status_code)

Timeout settings

    import requests
    from requests.exceptions import ReadTimeout

    try:
        response = requests.get("http://httpbin.org/get", timeout=0.5)
        print(response.status_code)
    except ReadTimeout:
        print('Timeout')

Authentication

    import requests
    from requests.auth import HTTPBasicAuth

    r = requests.get('http://120.27.34.24:9001', auth=HTTPBasicAuth('user', '123'))
    print(r.status_code)

    import requests

    # A (user, password) tuple is shorthand for HTTP basic auth
    r = requests.get('http://120.27.34.24:9001', auth=('user', '123'))
    print(r.status_code)

Exception handling

    import requests
    from requests.exceptions import ReadTimeout, ConnectionError, RequestException

    try:
        response = requests.get("http://httpbin.org/get", timeout=0.5)
        print(response.status_code)
    except ReadTimeout:
        print('Timeout')
    except ConnectionError:
        print('Connection error')
    except RequestException:
        print('Error')

Output:

    Connection error

Using the urllib library

urllib.request.urlopen

GET request

    import urllib.request

    response = urllib.request.urlopen('http://asahii.cn')
    print(response.read().decode('utf-8'))

POST request

    import urllib.parse
    import urllib.request

    data = bytes(urllib.parse.urlencode({'word': 'hello'}), encoding='utf8')
    response = urllib.request.urlopen('http://httpbin.org/post', data=data)
    print(response.read().decode('utf-8'))

Output:

    {
      "args": {},
      "data": "",
      "files": {},
      "form": {
        "word": "hello"
      },
      "headers": {
        "Accept-Encoding": "identity",
        "Connection": "close",
        "Content-Length": "10",
        "Content-Type": "application/x-www-form-urlencoded",
        "Host": "httpbin.org",
        "User-Agent": "Python-urllib/3.6"
      },
      "json": null,
      "origin": "14.154.29.216",
      "url": "http://httpbin.org/post"
    }
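The `data` argument of `urlopen` has to be bytes, which is why the dict is first passed through `urlencode` and then encoded. A minimal offline sketch of just that step (no request is sent; the variable names `form`, `query` and `body` are illustrative):

```python
from urllib.parse import urlencode

# Form fields as a plain dict, as in the POST example above.
form = {'word': 'hello'}

# urlencode() produces a str query string ...
query = urlencode(form)

# ... and urlopen() expects bytes for the request body.
body = query.encode('utf-8')

print(query)
print(body)
# The body length is what httpbin echoes back as Content-Length.
print(len(body))
```

Calling `query.encode('utf-8')` is equivalent to the `bytes(..., encoding='utf8')` form used in the example.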

Setting a timeout. If the request takes longer than this value (in seconds), an exception is raised.

    import urllib.request

    response = urllib.request.urlopen('http://httpbin.org/get?data=haha', timeout=1)
    print(response.read().decode('utf-8'))

Output:

    {
      "args": {
        "data": "haha"
      },
      "headers": {
        "Accept-Encoding": "identity",
        "Connection": "close",
        "Host": "httpbin.org",
        "User-Agent": "Python-urllib/3.6"
      },
      "origin": "14.154.29.216",
      "url": "http://httpbin.org/get?data=haha"
    }

Catching exceptions

    import socket
    import urllib.request
    import urllib.error

    try:
        response = urllib.request.urlopen('http://httpbin.org/get', timeout=0.1)
    except urllib.error.URLError as e:
        if isinstance(e.reason, socket.timeout):
            print('Time Out')

Output:

    Time Out
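Depending on where the timeout happens, it may arrive wrapped in `URLError` (as in the example above) or be raised directly as `socket.timeout`, which is an alias of `TimeoutError` since Python 3.10. The helper below is a sketch that covers both cases; `is_timeout` is a hypothetical name, and the exceptions are built by hand so the snippet runs offline:

```python
import socket
import urllib.error


def is_timeout(exc):
    """Return True if exc looks like a timeout raised by urlopen()."""
    # A timeout while reading the response may be raised directly.
    if isinstance(exc, socket.timeout):
        return True
    # A timeout while connecting is usually wrapped in URLError.reason.
    if isinstance(exc, urllib.error.URLError):
        return isinstance(exc.reason, socket.timeout)
    return False


# Hand-built exceptions, only to exercise the helper without a network.
print(is_timeout(urllib.error.URLError(socket.timeout('timed out'))))
print(is_timeout(socket.timeout('timed out')))
print(is_timeout(urllib.error.URLError('name resolution failed')))
```

In real code the helper would be called inside an `except (urllib.error.URLError, socket.timeout) as e:` branch.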

Response

Response type

    import urllib.request

    resp = urllib.request.urlopen('http://asahii.cn')
    print(type(resp))
    #resp = urllib.request.urlopen('https://www.python.org')
    #print(type(resp))
    print(resp.status)
    print(resp.getheaders())
    print(resp.getheader('Server'))

Output:

    200
    [('Server', 'GitHub.com'), ('Content-Type', 'text/html; charset=utf-8'), ('Last-Modified', 'Sat, 20 Jan 2018 04:10:07 GMT'), ('Access-Control-Allow-Origin', '*'), ('Expires', 'Mon, 22 Jan 2018 13:32:43 GMT'), ('Cache-Control', 'max-age=600'), ('X-GitHub-Request-Id', '71A8:11832:91183A:9A71F6:5A65E5A3'), ('Content-Length', '106'), ('Accept-Ranges', 'bytes'), ('Date', 'Mon, 22 Jan 2018 14:26:04 GMT'), ('Via', '1.1 varnish'), ('Age', '480'), ('Connection', 'close'), ('X-Served-By', 'cache-hnd18748-HND'), ('X-Cache', 'HIT'), ('X-Cache-Hits', '1'), ('X-Timer', 'S1516631164.467386,VS0,VE0'), ('Vary', 'Accept-Encoding'), ('X-Fastly-Request-ID', 'f3adc99bea78d082bc4447a4a04f427731a40dad')]
    GitHub.com

Request

Build a request object, for example to add request headers.

    from urllib import request, parse

    # The modules are imported by name, so use request.Request, not urllib.request.Request
    req = request.Request('http://asahii.cn')
    resp = request.urlopen(req)
    print(resp.read().decode('utf-8'))

    ########################## separator ##########################

    url = 'http://httpbin.org/post'
    headers = {
        'User-Agent': 'Mozilla/4.0(compatible;MSIE 5.5;Windows NT)',
        'Host': 'httpbin.org'
    }
    # Named form rather than dict, to avoid shadowing the built-in
    form = {
        'name': 'Germey'
    }
    data = bytes(parse.urlencode(form), encoding='utf8')
    req = request.Request(url=url, data=data, headers=headers, method='POST')
    resp = request.urlopen(req)
    print(resp.read().decode('utf-8'))

Output:

    {
      "args": {},
      "data": "",
      "files": {},
      "form": {
        "name": "Germey"
      },
      "headers": {
        "Accept-Encoding": "identity",
        "Connection": "close",
        "Content-Length": "11",
        "Content-Type": "application/x-www-form-urlencoded",
        "Host": "httpbin.org",
        "User-Agent": "Mozilla/4.0(compatible;MSIE 5.5;Windows NT)"
      },
      "json": null,
      "origin": "14.154.29.0",
      "url": "http://httpbin.org/post"
    }
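A `Request` object can be inspected before it is sent, which is handy for checking the method, body and headers without touching the network. A small sketch under that assumption, reusing the values from the example above:

```python
from urllib import parse, request

form = {'name': 'Germey'}
data = parse.urlencode(form).encode('utf-8')
headers = {'User-Agent': 'Mozilla/4.0(compatible;MSIE 5.5;Windows NT)'}

# Building a Request does not open a connection.
req = request.Request('http://httpbin.org/post', data=data,
                      headers=headers, method='POST')

print(req.get_method())   # HTTP verb that will be used
print(req.full_url)
print(req.data)
# urllib stores header names capitalized, so the key is 'User-agent'.
print(req.get_header('User-agent'))
```

The capitalized key only matters when reading headers back with `get_header`; HTTP header names are case-insensitive on the wire.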

    from urllib import request, parse

    url = 'http://httpbin.org/post'
    form = {
        'name': 'China'
    }
    data = bytes(parse.urlencode(form), encoding='utf8')
    req = request.Request(url=url, data=data, method='POST')
    # Headers can also be added after the Request has been created
    req.add_header('User-Agent', 'Mozilla/4.0(compatible;MSIE 5.5;Windows NT)')
    resp = request.urlopen(req)
    print(resp.read().decode('utf-8'))

Output:

    {
      "args": {},
      "data": "",
      "files": {},
      "form": {
        "name": "China"
      },
      "headers": {
        "Accept-Encoding": "identity",
        "Connection": "close",
        "Content-Length": "10",
        "Content-Type": "application/x-www-form-urlencoded",
        "Host": "httpbin.org",
        "User-Agent": "Mozilla/4.0(compatible;MSIE 5.5;Windows NT)"
      },
      "json": null,
      "origin": "14.154.28.26",
      "url": "http://httpbin.org/post"
    }

Handler

Setting a proxy. Rotating between proxies can help keep the server from banning our IP.

    import urllib.request

    proxy_handler = urllib.request.ProxyHandler({
        'http': 'http://127.0.0.1:9743',
        'https': 'https://127.0.0.1:9743'
    })
    opener = urllib.request.build_opener(proxy_handler)
    resp = opener.open('http://www.baidu.com')
    print(resp.read())

Cookie handling

Cookies are used to maintain a login state, which helps on sites that require login.

    # Get cookies
    import http.cookiejar, urllib.request

    cookie = http.cookiejar.CookieJar()
    handler = urllib.request.HTTPCookieProcessor(cookie)
    opener = urllib.request.build_opener(handler)
    resp = opener.open('http://www.baidu.com')
    for item in cookie:
        print(item.name + "=" + item.value)

Output:

    BAIDUID=417382BEB774A45EA7FC2C374C846E04:FG=1
    BIDUPSID=417382BEB774A45EA7FC2C374C846E04
    H_PS_PSSID=25639_1446_21107_20930
    PSTM=1516632429
    BDSVRTM=0
    BD_HOME=0

Saving cookies to a local file

    import http.cookiejar, urllib.request

    filename = "cookie.txt"
    cookie = http.cookiejar.MozillaCookieJar(filename)
    handler = urllib.request.HTTPCookieProcessor(cookie)
    opener = urllib.request.build_opener(handler)
    resp = opener.open('http://www.baidu.com')
    cookie.save(ignore_discard=True, ignore_expires=True)

Another cookie file format

    import http.cookiejar, urllib.request

    filename = "cookie.txt"
    cookie = http.cookiejar.LWPCookieJar(filename)
    handler = urllib.request.HTTPCookieProcessor(cookie)
    opener = urllib.request.build_opener(handler)
    resp = opener.open('http://www.baidu.com')
    cookie.save(ignore_discard=True, ignore_expires=True)

Loading local cookies into a request. Read the cookies with the same class that was used to save them.

    import http.cookiejar, urllib.request

    cookie = http.cookiejar.LWPCookieJar()
    cookie.load('cookie.txt', ignore_discard=True, ignore_expires=True)
    handler = urllib.request.HTTPCookieProcessor(cookie)
    opener = urllib.request.build_opener(handler)
    resp = opener.open('http://httpbin.org/get')
    print(resp.read().decode('utf8'))

Output:

    {
      "args": {},
      "headers": {
        "Accept-Encoding": "identity",
        "Connection": "close",
        "Host": "httpbin.org",
        "User-Agent": "Python-urllib/3.6"
      },
      "origin": "14.154.29.0",
      "url": "http://httpbin.org/get"
    }
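The advice about reading cookies with the same class that saved them can be checked offline. The sketch below builds a cookie by hand (normally the server sets it), saves it in LWP format to a temporary file, loads it back, and then shows that `MozillaCookieJar` refuses the same file. The helper `make_cookie` and the cookie values are illustrative assumptions:

```python
import http.cookiejar
import os
import tempfile


def make_cookie(name, value, domain):
    """Build a session cookie by hand, for demonstration only."""
    return http.cookiejar.Cookie(
        version=0, name=name, value=value,
        port=None, port_specified=False,
        domain=domain, domain_specified=True, domain_initial_dot=False,
        path='/', path_specified=True,
        secure=False, expires=None, discard=True,
        comment=None, comment_url=None, rest={},
    )


path = os.path.join(tempfile.mkdtemp(), 'cookie.txt')

# Save in LWP format ...
saved = http.cookiejar.LWPCookieJar(path)
saved.set_cookie(make_cookie('number', '123456789', 'httpbin.org'))
saved.save(ignore_discard=True, ignore_expires=True)

# ... and read it back with the same class.
loaded = http.cookiejar.LWPCookieJar()
loaded.load(path, ignore_discard=True, ignore_expires=True)
pairs = [(c.name, c.value) for c in loaded]
print(pairs)

# Reading the LWP file with the Mozilla class is rejected.
mismatch_rejected = False
try:
    http.cookiejar.MozillaCookieJar().load(path)
except http.cookiejar.LoadError as exc:
    mismatch_rejected = True
    print('LoadError:', exc)
```

`ignore_discard=True` matters here: the hand-built cookie is a session cookie, which would otherwise be skipped on both save and load.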

Exception handling for page requests

    from urllib import request, error

    try:
        resp = request.urlopen('http://asahii.cn/index.htm')
    except error.URLError as e:
        print(e.reason)  # a retry could be attempted here

Output:

    Not Found
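The code comment above hints at retrying a failed request. One possible shape for that is sketched below; `fetch_with_retry`, its parameters, and the choice not to retry on `HTTPError` are all assumptions made for illustration, not something the original specifies. The `opener` parameter exists only so the function can be exercised without a network.

```python
import time
from urllib import error, request


def fetch_with_retry(url, retries=3, delay=1.0, opener=request.urlopen):
    """Return the response body, retrying on URLError up to `retries` times."""
    for attempt in range(1, retries + 1):
        try:
            with opener(url) as resp:
                return resp.read()
        except error.HTTPError:
            # The server answered with an error status (for example 404);
            # retrying is unlikely to help, so re-raise immediately.
            raise
        except error.URLError as e:
            print(f'attempt {attempt} failed: {e.reason}')
            if attempt == retries:
                raise
            # Wait a little before the next attempt.
            time.sleep(delay)
```

A call such as `fetch_with_retry('http://httpbin.org/get', retries=3, delay=2)` would then make up to three attempts. Note that the 404 in the example above is an `HTTPError`, so this sketch would not retry it.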

More specific error types

    from urllib import request, error

    try:
        resp = request.urlopen('http://asahii.cn/index.htm')
    except error.HTTPError as ee:
        print(ee.reason, ee.code, ee.headers, sep='\n')
    except error.URLError as e:
        print(e.reason)

Output:

    Not Found
    404
    Server: GitHub.com
    Content-Type: text/html; charset=utf-8
    ETag: "5a62c123-215e"
    Access-Control-Allow-Origin: *
    X-GitHub-Request-Id: F758:1D9CC:916D4F:9B5FD5:5A65FE22
    Content-Length: 8542
    Accept-Ranges: bytes
    Date: Mon, 22 Jan 2018 15:09:43 GMT
    Via: 1.1 varnish
    Age: 148
    Connection: close
    X-Served-By: cache-hnd18733-HND
    X-Cache: HIT
    X-Cache-Hits: 1
    X-Timer: S1516633784.646920,VS0,VE0
    Vary: Accept-Encoding
    X-Fastly-Request-ID: fb6b42a4706a17c3de8bd318b76be3513b68c49b
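The order of the `except` clauses matters because `HTTPError` is a subclass of `URLError`: if `URLError` were listed first, it would catch HTTP errors too. A short offline sketch of that relationship; `describe` is a hypothetical helper, and the exceptions are constructed by hand rather than raised by a real request:

```python
import io
from urllib import error

# HTTPError derives from URLError, so the more specific class goes first.
print(issubclass(error.HTTPError, error.URLError))


def describe(exc):
    """Summarize an exception of the kind raised by urlopen()."""
    if isinstance(exc, error.HTTPError):
        return f'HTTP error {exc.code}: {exc.reason}'
    if isinstance(exc, error.URLError):
        return f'URL error: {exc.reason}'
    return f'other error: {exc!r}'


# Hand-built exceptions, only to exercise describe() without a network.
http_err = error.HTTPError('http://asahii.cn/index.htm', 404,
                           'Not Found', None, io.BytesIO())
url_err = error.URLError('connection refused')

print(describe(http_err))
print(describe(url_err))
```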

Checking the reason

    import socket
    from urllib import request, error

    try:
        resp = request.urlopen('http://asahii.cn/index.html', timeout=0.01)
    except error.URLError as e:
        print(type(e.reason))
        if isinstance(e.reason, socket.timeout):
            print('TIME OUT')

Output:

    TIME OUT

Web page parsing

Parsing URLs

    from urllib.parse import urlparse

    result = urlparse('http://www.baidu.com/index.html;user?id=5#comment')
    print(type(result), result, sep='\n')

    # scheme= is only a default, used when the URL itself has no scheme
    result = urlparse('www.baidu.com/index.html;user?id=5#comment', scheme='https')
    print(result)
    result = urlparse('http://www.baidu.com/index.html;user?id=5#comment', scheme='https')
    print(result)

    # With allow_fragments=False the fragment stays in the preceding component
    result = urlparse('http://www.baidu.com/index.html;user?id=5#comment', allow_fragments=False)
    print(result)
    result = urlparse('http://www.baidu.com/index.html#comment', allow_fragments=False)
    print(result)

Output:

    ParseResult(scheme='http', netloc='www.baidu.com', path='/index.html', params='user', query='id=5', fragment='comment')
    ParseResult(scheme='https', netloc='', path='www.baidu.com/index.html', params='user', query='id=5', fragment='comment')
    ParseResult(scheme='http', netloc='www.baidu.com', path='/index.html', params='user', query='id=5', fragment='comment')
    ParseResult(scheme='http', netloc='www.baidu.com', path='/index.html', params='user', query='id=5#comment', fragment='')
    ParseResult(scheme='http', netloc='www.baidu.com', path='/index.html#comment', params='', query='', fragment='')
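Since `ParseResult` is a named tuple, its parts can be read by attribute or by index, and the query string can be broken down further with `parse_qs`. A brief sketch using the same URL as above:

```python
from urllib.parse import parse_qs, urlparse

result = urlparse('http://www.baidu.com/index.html;user?id=5#comment')

# Fields are available by name or by position.
print(result.scheme, result[0])
print(result.netloc, result.hostname)
print(result.query)

# parse_qs() turns the query string into a dict of lists,
# because a key may appear more than once.
query = parse_qs(result.query)
print(query)
```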

Assembling URLs

    from urllib.parse import urlunparse

    # Six parts: scheme, netloc, path, params, query, fragment
    data = ['http', 'www.baidu.com', 'index.html', 'user', 'a=6', 'comment']
    print(urlunparse(data))

Output:

    http://www.baidu.com/index.html;user?a=6#comment

    from urllib.parse import urlencode

    params = {
        'name': 'germey',
        'age': 22
    }
    base_url = 'http://www.baidu.com?'
    url = base_url + urlencode(params)
    print(url)

Output:

    http://www.baidu.com?name=germey&age=22
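`urlencode` also percent-encodes characters that are not safe in a query string, such as spaces, `&` and non-ASCII text. The sketch below shows this and decodes the result again with `parse_qs`; the `city` and `q` parameters and the `/s` path are made-up sample values:

```python
from urllib.parse import parse_qs, urlencode, urlsplit

# Sample values containing non-ASCII text, a space and an ampersand.
params = {'name': 'germey', 'city': '北京', 'q': 'a b&c'}

url = 'http://www.baidu.com/s?' + urlencode(params)
print(url)

# Round trip: decode the query string back into a dict of lists.
decoded = parse_qs(urlsplit(url).query)
print(decoded)
```

Building the query with `urlencode` rather than by string concatenation avoids producing a malformed URL when a value contains reserved characters.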