hang_detect类死锁解题案例分析

背景知识

【hang detect 机制】

MTK在内核中新增了一个用于监视system_server的SW watchdog字符设备驱动:/dev/RT_Monitor,该设备会启动一个内核线程循环去check system_server线程的状态,若system_server不存在则表明Andriod在执行restart,若system_server存在,hang_detect_counter

就开始超时流程,每过30s,hang_detect_counter--

,hang_detect_counter

初始值为10,由SW watchdog通过/dev/RT_Monitor设备节点操作ioctl设置(喂狗)。也就是说,如果操作10*30s,还没有收到来自SW watchdog的喂狗操作,则表明system_server 的SW watchdog已经被hang住,无法转变异常为SWT类型,此时RT_Monitor进程将打印出所有D态(深睡)进程的backtrace到kernel log供调试分析,最后执行BUG(),结束自己。

主要实现:

434 static int hang_detect_thread(void *arg)

435 {

..

445 while (1) {

446 if ((1 == hd_detect_enabled) && (FindTaskByName("system_server") != -1)) {

447 LOGE("[Hang_Detect] hang_detect thread counts down %d:%d.\n", hang_detect_counter, hd_timeout);

448

449 if (hang_detect_counter <= 0)

450 ShowStatus();

451

452 if (hang_detect_counter == 0) {

..

461 } else { /* only Customer user load trigger KE */

462 LOGE("[Hang_Detect] we should triger KE...\n");

463 aee_kernel_exception_api(__FILE__, __LINE__,

464 DB_OPT_NE_JBT_TRACES | DB_OPT_DISPLAY_HANG_DUMP,

465 "\nCRDISPATCH_KEY:SS Hang\n",

466 "we triger Kernel API DB ");

467 msleep(30 * 1000);

468 BUG();

469 }

现象

mt6580平台,反复执行【camera连拍后-返回】操作5-30min出现手机卡住,后面自动重启, user版本.

初步分析

解开db,kernel log提示发生了KE,而且是由BUG()触发.

[ 1832.311664] -(0)[117:hang_detect]------------[ cut here ]------------

[ 1832.311696] -(0)[117:hang_detect]Kernel BUG at c0741d80 [verbose debug info unavailable]

[ 1832.311709] -(0)[117:hang_detect]Internal error: Oops - BUG: 0 [#1] PREEMPT SMP ARM

[ 1832.311723] -(0)[117:hang_detect]disable aee kernel api

[ 1833.311741] -(0)[117:hang_detect]Non-crashing CPUs did not react to IPI

[ 1833.311768] -(0)[117:hang_detect]CPU: 0 PID: 117 Comm: hang_detect Tainted: G W 3.18.35+ #2

[ 1833.311783] -(0)[117:hang_detect]task: df117200 ti: de608000 task.ti: de608000

[ 1833.311804] -(0)[117:hang_detect]PC is at hang_detect_thread+0x240/0x2e8

[ 1833.311821] -(0)[117:hang_detect]LR is at _raw_spin_unlock_irqrestore+0x30/0x5c

..

[ 1839.517818] -(0)[117:hang_detect]Backtrace:

[ 1839.518142] -(0)[117:hang_detect][<c0741b40>] (hang_detect_thread) from [<c013d634>] (kthread+0xe8/0xfc)

[ 1839.518286] -(0)[117:hang_detect] r9:00000000 r8:00000000 r7:c0741b40 r6:00000000 r5:00000000 r4:de5ebf80

[ 1839.518953] -(0)[117:hang_detect][

取出mini_ramdump+vmlinux,启动gdb调试, 可以清晰的此题属于hang detect类问题分析方向.

#0 0xc0741d80 in hang_detect_thread (arg=)

at /home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/drivers/misc/mediatek/aee/aed/monitor_hang.c:467

467 msleep(30 * 1000);

(gdb) list

462 LOGE("[Hang_Detect] we should triger KE...\n");

463 aee_kernel_exception_api(__FILE__, __LINE__,

464 DB_OPT_NE_JBT_TRACES | DB_OPT_DISPLAY_HANG_DUMP,

465 "\nCRDISPATCH_KEY:SS Hang\n",

466 "we triger Kernel API DB ");

467 msleep(30 * 1000);

468 BUG();

469 }

分析kernel log,查看最开始打印和最后hang detect 计数器的时间点:

<3>[ 1441.021890] (1)[117:hang_detect][Hang_Detect] hang_detect thread counts down 10:10.

...

<3>[ 1801.141892] (0)[117:hang_detect][Hang_Detect] hang_detect thread counts down 0:10.

这里一共执行了10*30s时间,

然后会依次打印出D状态进程,由于需要厘清各个D进程之间卡住的关系,所以需要排查所有的D状态进程(深睡)依赖关系,首先从system_server进程被卡住的watchdog线程停下的地方开始:

..

<3>[ 1802.041672] (1)[117:hang_detect]watchdog D (1)[117:hang_detect] c0aad124

..

<3>[ 1802.284342] (0)[117:hang_detect]Camera@Display D

..

<3>[ 1802.294514] (0)[117:hang_detect]com.myos.camera D

..

<3>[ 1802.295677] (0)[117:hang_detect]Binder:9840_1 D

<3>[ 1802.296097] (0)[117:hang_detect]Binder:9840_2 D

<3>[ 1802.296392] (0)[117:hang_detect]AsyncTask #1 D

<3>[ 1802.296711] (0)[117:hang_detect]AsyncTask #2 D

<3>[ 1802.297264] (0)[117:hang_detect]AsyncTask #3 D

..

<3>[ 1802.301450] (0)[117:hang_detect]service D

分析

watchdog

的调用栈

<3>[ 1802.041672] (1)[117:hang_detect]watchdog D (1)[117:hang_detect] c0aad124

<3>[ 1802.041709] (1)[117:hang_detect] 0 1139 263 0x00000000

<6>[ 1802.041722] (1)[117:hang_detect]Backtrace:

<6>[ 1802.041750] (1)[117:hang_detect][] (mutex_ lock+0x54/0x58)

<6>[ 1802.041848] (1)[117:hang_detect] r10:00000000 r9:de1cc700 r8:de30e958 r7:ca785480 r6:c1040eac r5:00a0003a

<6>[ 1802.041875] (1)[117:hang_detect] r4:c1040eac

<6>[ 1802.041896] (1)[117:hang_detect][] (misc_ open+0x2c/0x104)

<6>[ 1802.041904] (1)[117:hang_detect] r4:c1040eac r3:c03bdd30

<6>[ 1802.041928] (1)[117:hang_detect][] (chrdev_ open+0x88/0x140)

<6>[ 1802.041936] (1)[117:hang_detect] r8:00000000 r7:ca785480 r6:de30e958 r5:df2f6540 r4:00000000 r3:c03bdd30

<6>[ 1802.041972] (1)[117:hang_detect][] (do_ dentry_open+0x180/0x2d0)

<6>[ 1802.041980] (1)[117:hang_detect] r8:00000000 r7:c0224158 r6:ca785488 r5:de30e958 r4:ca785480

<6>[ 1802.042014] (1)[117:hang_detect][

上面就是watchdog线程的调用栈情况,发现此刻已经被调度走了,看上去是在等待misc_open的mutex lock,具体是不是呢?解开地址验证下:

(gdb) list *0xc03bdd5c

0xc03bdd5c is in misc_open (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/drivers/char/misc.c:121).

116 int err = -ENODEV;

117 const struct file_operations *new_fops = NULL;

118

119 mutex_lock(&misc_mtx);

120

121 list_for_each_entry(c, &misc_list, list) {

122 if (c->minor == minor) {

123 new_fops = fops_get(c->fops);

124 break;

125 }

(gdb)

果然,当前线程是在尝试获取

misc_mtx这个lock,当前lock被其他线程拿走了,mutex lock是可以睡眠的,所以watchdog线程进入睡眠D态,那这个lock被谁拿走了呢?

打印出来看看:

(gdb) p misc_mtx

$1 = {count = {counter = 1}, wait_lock = {{rlock = {raw_lock = {{slock = 0, tickets = {owner = 0, next = 0}}}}}}, wait_list = {

next = 0xc1040eb4 , prev = 0xc1040eb4 }, owner = 0x0 <__vectors_start>, osq = {tail = {counter = 0}}}

(gdb)

counter = 1表示unlock状态,owner显示0,这是怎么回事呢?虽然dump取出来的时候跟上面的bt不是同一个时间,但是依然是出问题的情况呀?这个问题留后面看,推测是mini dump的问题,因为mini dump只保留异常现场32kb范围内容,需要抓full dump才更可靠。

继续排除D状态进程,搜索看哪个进程还在操作misc_open的misc_mtx lock,

C:\Documents and Settings\user.com\桌面\stability training\k300死机\12.09\APLog_2017_1219_140241\kernel_log (9 hits)

Line 49131: <6>[ 1801.473767] (1)[117:hang_detect][] (mutex_lock) from [] (misc_open+0x2c/0x104)

Line 50630: <6>[ 1801.953114] (0)[117:hang_detect][] (mutex_lock) from [] (misc_open+0x2c/0x104)

Line 50851: <6>[ 1802.041896] (1)[117:hang_detect][] (mutex_lock) from [] (misc_open+0x2c/0x104)

Line 51694: <6>[ 1802.283537] (0)[117:hang_detect][] (mutex_lock) from [] (misc_open+0x2c/0x104)

Line 52024: <6>[ 1802.289427] (0)[117:hang_detect][] (mutex_lock) from [] (misc_open+0x2c/0x104)

Line 52057: <6>[ 1802.290022] (0)[117:hang_detect][] (mutex_lock) from [] (misc_open+0x2c/0x104)

Line 52279: <6>[ 1802.294083] (0)[117:hang_detect][] (mutex_lock) from [] (misc_open+0x2c/0x104)

Line 52617: <6>[ 1802.299955] (0)[117:hang_detect][] (mutex_lock) from [] (misc_open+0x2c/0x104)

Line 52715: <6>[ 1802.301778] (0)[117:hang_detect][] (binder_open) from [] (misc_open+0x90/0x104)

果然,

c03bdd5c的所有进程都是在等拿misc_open的mutex lock,只有

c03bddc0不同,而这是就是需要要找的进程.

分析service进程

<3>[ 1802.301450] (0)[117:hang_detect]service D

<3>[ 1802.301461] (0)[117:hang_detect] c0aad124 <3>[ 1802.301476] (0)[117:hang_detect] 0 10387 10164 0x00000000

<6>[ 1802.301485] (0)[117:hang_detect]Backtrace:

<6>[ 1802.301504] (0)[117:hang_detect][] (mutex_ lock+0x54/0x58)

<6>[ 1802.301682] (0)[117:hang_detect] r10:00000000 r9:cc64a580 r8:c50c2180 r7:c109ec54 r6:c5296000 r5:c5296000

<6>[ 1802.301709] (0)[117:hang_detect] r4:c1082774

<6>[ 1802.301740] (0)[117:hang_detect][] (binder_ open+0xf4/0x330)

<6>[ 1802.301748] (0)[117:hang_detect] r4:ca18ac00 r3:00000000

<6>[ 1802.301778] (0)[117:hang_detect][] (misc_ open+0x90/0x104)

<6>[ 1802.301790] (0)[117:hang_detect] r9:cc64a580 r8:de30fd40 r7:c50c2180 r6:c1040eac r5:00000034 r4:c0879300

<6>[ 1802.301834] (0)[117:hang_detect][] (chrdev_ open+0x88/0x140)

解开调用栈,发现该进程拿到了

misc_mtx,但是一直没释放,阻塞在下面的open函数,那么service进程在等谁呢?

(gdb) list *0xc03bddc0

0xc03bddc0 is in misc_open (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/drivers/char/misc.c:147).

143 err = 0;

144 replace_fops(file, new_fops);

145 if (file->f_op->open) {

146 file->private_data = c;

147 err = file->f_op->open(inode,file);

148 }

149 fail:

150 mutex_unlock(&misc_mtx);

151 return err;

(gdb)

..

这个是misc_open的调用是从binder_open过来的,分析它的调用栈:

(gdb) list *0xc0879300

0xc0879300 is in binder_open (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/drivers/staging/android/binder.c:4361).

4360 static int binder_open(struct inode *nodp, struct file *filp)

4361 {

4362 struct binder_proc *proc;

..

4380 binder_lock(__func__);

..

(gdb) list *0xc08793f4(gdb) list *0xc08793f4

0xc08793f4 is in binder_open (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/include/linux/jump_label.h:88).

84 #include <linux/atomic.h>

85

86 static inline int static_key_count(struct static_key *key)

87 {

88 return atomic_read(&key->enabled);

89 }

90

91 #ifdef HAVE_JUMP_LABEL

92

(gdb)

上面这个atomic_read是什么?跟踪内核代码发现,其实这个就是mutex lock的实现,所以最终这个service进程这里在等

binder lock,那binder lock又是被谁拿去了呢??

既然是等待binder lock,那么推测定有其它进程有binder thread read/write操作,排查既是D态进程,又在操作binder_thread_read/write的进程,发现下面两个进程同事满足条件:

<3>[ 1802.284342] (0)[117:hang_detect]Camera@Display D

<3>[ 1802.295677] (0)[117:hang_detect]Binder:9840_1 D

分析Binder:9840_1线程

<3>[ 1802.295677] (0)[117:hang_detect]Binder:9840_1 D

<3>[ 1802.295689] (0)[117:hang_detect] c0aad124 <3>[ 1802.295704] (0)[117:hang_detect] 0 9851 263 0x00000000

<6>[ 1802.295712] (0)[117:hang_detect]Backtrace:

<6>[ 1802.295731] (0)[117:hang_detect][] (mutex_ lock+0x54/0x58)

<6>[ 1802.295825] (0)[117:hang_detect] r10:c109ec54 r9:ca18a600 r8:ca18a668 r7:ca18a660 r6:dbf5de40 r5:dae2fd00

<6>[ 1802.295852] (0)[117:hang_detect] r4:c1082774

<6>[ 1802.295872] (0)[117:hang_detect][] (binder_ thread_read+0x168/0x12f0)

<6>[ 1802.295880] (0)[117:hang_detect] r4:00000000 r3:00000000

<6>[ 1802.295903] (0)[117:hang_detect][

仔细看这个进程的调用栈,发现也是陷入了获取不到binder lock的等待,然后被调度走了,说明该进程不是当前binder lock的持有者!

那就只剩下binder write的进程了。

分析Camera@Display线程

<3>[ 1802.284342] (0)[117:hang_detect]Camera@Display D

<3>[ 1802.284354] (0)[117:hang_detect] c0aad124 <3>[ 1802.284368] (0)[117:hang_detect] 0 10194 1 0x00000000

<6>[ 1802.284377] (0)[117:hang_detect]Backtrace:

<6>[ 1802.284396] (0)[117:hang_detect][] (down_ write+0x64/0x68)

<6>[ 1802.284475] (0)[117:hang_detect] r10:d045bd00 r9:c13374dc r8:00000001 r7:00000000 r6:e7a03000 r5:ca18a600

<6>[ 1802.284501] (0)[117:hang_detect] r4:de110ff8

<6>[ 1802.284521] (0)[117:hang_detect][] (binder_ update_page_range+0x190/0x384)

<6>[ 1802.284529] (0)[117:hang_detect] r4:c109ec54 r3:c109ec54

<6>[ 1802.284552] (0)[117:hang_detect][] (binder_ thread_write+0x16d4/0x310c)

<6>[ 1802.284560] (0)[117:hang_detect] r10:d045bd00 r9:c13374dc r8:e7a01f44 r7:aa27f134 r6:00000000 r5:c1082750

<6>[ 1802.284587] (0)[117:hang_detect] r4:c109ec54

<6>[ 1802.284606] (0)[117:hang_detect][] (do_ vfs_ioctl+0x3e4/0x5a8)

从上面分析调用栈发现,刚好是改进程拿到了binder lock,但是在执行

down_write这个信号量的时候被schedule出去了,

Camera@Display进程也陷入D状态,所以导致后面需要拿binder lock的线程都会被block住!

那么

down_write是哪个情况呢??继续撸:

(gdb) list *0xc087bf28

0xc087bf28 is in binder_update_page_range (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/drivers/staging/android/binder.c:1459).

1454 else

1455 mm = get_task_mm(proc->tsk);

1456

1457 if (mm) {

1458 down_write(&mm->mmap_sem);

1459 vma = proc->vma;

1460 if (vma && mm != proc->vma_vm_mm) {

1461 pr_err("%d: vma mm and task mm mismatch\n", proc->pid);

1462 vma = NULL;

1463 }

(gdb)

原来

Camera@Display进程在等待

mm->mmap_sem这个信号量锁没等到被调度走了,从上面代码得知,mm是由get_task_mm函数所得,就是task_struct->mmap_sem信号量锁。

那这个task_struct->mmap_sem(mm lock)又被谁拿走了呢?(是不是撸得有点晕了..?)

分析AsyncTask #2 进程

继续分析排查D态调进程,发现

AsyncTask #2 进程中的

sdcardfs_setattr函数可疑:

<3>[ 1802.296711] (0)[117:hang_detect]AsyncTask #2 D

<3>[ 1802.296723] (0)[117:hang_detect] c0aad124 <3>[ 1802.296738] (0)[117:hang_detect] 0 9897 263 0x00000000

<6>[ 1802.296747] (0)[117:hang_detect]Backtrace:

<6>[ 1802.296765] (0)[117:hang_detect][] (mutex_ lock+0x54/0x58)

<6>[ 1802.296859] (0)[117:hang_detect] r10:cd044000 r9:da3a4110 r8:cd045d98 r7:cd045ce0 r6:deec53a0 r5:00000000

<6>[ 1802.296885] (0)[117:hang_detect] r4:deec5410

<6>[ 1802.296906] (0)[117:hang_detect][] (sdcardfs_ setattr+0x140/0x33c)

<6>[ 1802.296914] (0)[117:hang_detect] r4:c24414a8 r3:0000a068

<6>[ 1802.296936] (0)[117:hang_detect][] (notify_ change+0x1d0/0x33c)

..

gdb+撸代码:

(gdb) list *0xc02f1610

0xc02f1610 is in sdcardfs_setattr (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/fs/sdcardfs/inode.c:462).

461 static int sdcardfs_setattr(struct dentry *dentry, struct iattr *ia)

462 {

463 int err;

..

516 if (current->mm)

(gdb)

517 down_write(¤t->mm->mmap_sem);

..(gdb) list *0xc02f1750

0xc02f1750 is in sdcardfs_setattr (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/fs/sdcardfs/inode.c:543).

538 * Note: we use lower_dentry->d_inode, because lower_inode may be

539 * unlinked (no inode->i_sb and i_ino==0. This happens if someone

540 * tries to open(), unlink(), then ftruncate() a file.

541 */

542 mutex_lock(&lower_dentry->d_inode->i_mutex);

543 err = notify_change(lower_dentry, &lower_ia, /* note: lower_ia */

544 NULL);

545 mutex_unlock(&lower_dentry->d_inode->i_mutex);

546 if (current->mm)

547 up_write(¤t->mm->mmap_sem);

从上面调用栈结合撸代码可以明显看出,

AsyncTask #2先拿到了mm lock(

current->mm->mmap_sem,LINE517),但是还没来得急释放(LINE547),就有陷入了

i_mutex的等待!

所以后面所有要拿mm lock的进程都会被阻塞住陷入等待.

搜索sdcardfs关键字:

C:\Documents and Settings\user.com\桌面\stability training\k300死机\12.09\APLog_2017_1219_140241\kernel_log (4 hits)

Line 52441: <6>[ 1802.296906] (0)[117:hang_detect][] (mutex_lock) from [] (sdcardfs_setattr+0x140/0x33c)

Line 52443: <6>[ 1802.296936] (0)[117:hang_detect][] (sdcardfs_setattr) from [] (notify_change+0x1d0/0x33c)

Line 52493: <6>[ 1802.297793] (0)[117:hang_detect][] (vfs_write) from [] (sdcardfs_write+0x44/0x190)

Line 52495: <6>[ 1802.297834] (0)[117:hang_detect][] (sdcardfs_write) from [] (vfs_write+0xac/0x1b8)

发现是 AsyncTask #3 进程在操作sdcardfs_write:

分析AsyncTask #3进程

<3>[ 1802.297264] (0)[117:hang_detect]AsyncTask #3 D

<3>[ 1802.297275] (0)[117:hang_detect] c0aad124 <3>[ 1802.297290] (0)[117:hang_detect] 0 9951 263 0x00000000

<6>[ 1802.297299] (0)[117:hang_detect]Backtrace:

<6>[ 1802.297318] (0)[117:hang_detect][] (down_ read+0x38/0x3c)

<6>[ 1802.297395] (0)[117:hang_detect] r6:c212f200 r5:c6712018 r4:c6713cb0

<6>[ 1802.297421] (0)[117:hang_detect][] (do_ page_fault+0xb4/0x3ec)

<6>[ 1802.297435] (0)[117:hang_detect][] (_ _dabt_svc+0x38/0x60)

<6>[ 1802.297497] (0)[117:hang_detect]Exception stack(0xc6713cb0 to 0xc6713cf8)

<6>[ 1802.297510] (0)[117:hang_detect]3ca0: 00000000 8e47900b 00001000 00000034

<6>[ 1802.297525] (0)[117:hang_detect]3cc0: 00001000 c6713e04 deec5474 00001000 00000000 c0b1a040 00001000 c6713d0c

<6>[ 1802.297538] (0)[117:hang_detect]3ce0: 000d3394 c6713cf8 c6713dfc c01fd150 200f0013 ffffffff

<6>[ 1802.297545] (0)[117:hang_detect] r10:00001000 r9:c0b1a040 r8:00000000 r7:c6713ce4 r6:ffffffff r5:200f0013

<6>[ 1802.297572] (0)[117:hang_detect] r4:c01fd150

<6>[ 1802.297594] (0)[117:hang_detect][] (new_ sync_write+0x9c/0xc4)

<6>[ 1802.297725] (0)[117:hang_detect] r7:db4a0300 r6:c6713f78 r5:00000000 r4:00000000

<6>[ 1802.297753] (0)[117:hang_detect][] (sdcardfs_ write+0x44/0x190)

<6>[ 1802.297801] (0)[117:hang_detect] r9:ccf380c0 r8:da3a4e58 r7:00133394 r6:c6713f78 r5:8e41800c r4:db4a0300

<6>[ 1802.297834] (0)[117:hang_detect][] (vfs_ write+0xac/0x1b8)

解析调用栈撸代码:

(gdb) list *0xc02f13dc

0xc02f13dc is in sdcardfs_write (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/fs/sdcardfs/file.c:61).

59 static ssize_t sdcardfs_write(struct file *file, const char __user *buf,

60 size_t count, loff_t *ppos)

61 {

62 int err;

..

73 err = vfs_write(lower_file, buf, count, ppos);

74 /* update our inode times+sizes upon a successful lower write */

75 if (err >= 0) {

(gdb) (gdb) list *0xc02208d8

0xc02208d8 is in new_sync_write (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/fs/read_write.c:467).

466 ssize_t new_sync_write(struct file *filp, const char __user *buf, size_t len, loff_t *ppos)

467 {

468 struct iovec iov = { .iov_base = (void __user *)buf, .iov_len = len };

469 struct kiocb kiocb;

470 struct iov_iter iter;

471 ssize_t ret;

(gdb)

..

(gdb) list *0xc0220b54

0xc0220b54 is in vfs_write (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/fs/read_write.c:534).

529 ret = rw_verify_area(WRITE, file, pos, count);

530 if (ret >= 0) {

531 count = ret;

532 file_start_write(file);

533 if (file->f_op->write)

534 ret = file->f_op->write(file, buf, count, pos);

..

(gdb) list *0xc01dc738

0xc01dc738 is in generic_file_write_iter (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/mm/filemap.c:2673).

2668 struct file *file = iocb->ki_filp;

2669 struct inode *inode = file->f_mapping->host;

2670 ssize_t ret;

2671

2672 mutex_lock(&inode->i_mutex);

2673 ret = __generic_file_write_iter(iocb, from);

2674 mutex_unlock(&inode->i_mutex);

..

这里可以明显得出,i_mutex lock被

AsyncTask #3进程拿住了,但是AsyncTask #3也最终被调度走了,那么

AsyncTask #3在等什么呢?继续分析调用栈:

(gdb) list *0xc0114688

0xc0114688 is in __do_page_fault (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/arch/arm/mm/fault.c:236).

231 unsigned int flags, struct task_struct *tsk)

232 {

233 struct vm_area_struct *vma;

234 int fault;

235

236 vma = find_vma(mm, addr); ???

237 fault = VM_FAULT_BADMAP;

238 if (unlikely(!vma))

239 goto out;

240 if (unlikely(vma->vm_start > addr))

..

这里是发生了缺页异常的处理流程,继续看:

(gdb) list *0xc01145d4

0xc01145d4 is in do_page_fault (/home/android/.jenkins/jobs/K300AG_7.0_TSDS/workspace/kernel-3.18/arch/arm/mm/fault.c:266).

264 static int __kprobes

265 do_page_fault(unsigned long addr, unsigned int fsr, struct pt_regs *regs)

266 {

267 struct task_struct *tsk;

268 struct mm_struct *mm;

..

299 if (!down_read_trylock(&mm->mmap_sem)) {

300 if (!user_mode(regs) && !search_exception_tables(regs->ARM_pc))

(gdb)

301 goto no_context;

302 retry:

303 down_read(&mm->mmap_sem);

304 } else {

305 /*

306 * The above down_read_trylock() might have succeeded in

307 * which case, we'll have missed the might_sleep() from

308 * down_read()

309 */

310 might_sleep();

(gdb)

311 #ifdef CONFIG_DEBUG_VM

312 if (!user_mode(regs) &&

313 !search_exception_tables(regs->ARM_pc))

314 goto no_context;

315 #endif

316 }

317

318 fault = __do_page_fault(mm, addr, fsr, flags, tsk);

这里的gdb解析显示代码虽然有点问题,symbols提示的代码位置跟调用栈有点出入(可能是工具的原因,所以需要结合调用栈一起分析), 但可以根据当前的调用栈推出当前进程是在等待

mm lock (mm->mmap_sem信号量锁)(

LINE303),到现在这步,线程卡住的关系就很明显了:

AsyncTask #2进程持有mm lock,但它在等i_mutex,

AsyncTask #3进程持有i_mutex, 但它在等待mm lock

==》

dead lock

!

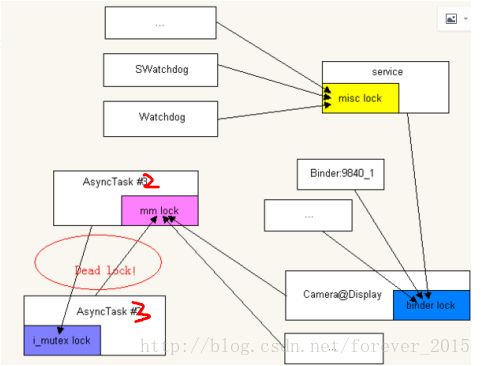

用一张简图表示

解决方案

拿i_mutex前,释放mm lock.

fs/sdcardfs/inode.c

461 static int sdcardfs_setattr(struct dentry *dentry, struct iattr *ia)

462 {

..

516 if (current->mm)

517

down_write(¤t->mm->mmap_sem);

518 if (ia->ia_valid & ATTR_SIZE) {

519 err = inode_newsize_ok(inode, ia->ia_size);

520 if (err) {

521 if (current->mm)

522 up_write(¤t->mm->mmap_sem);

523 lockdep_on();

524 goto out;

525 }

526 truncate_setsize(inode, ia->ia_size);

527 }

+ if (current->mm)

+ up_write(¤t->mm->mmap_sem);

529 /*

530 * mode change is for clearing setuid/setgid bits. Allow lower fs

531 * to interpret this in its own way.

532 */

533 if (lower_ia.ia_valid & (ATTR_KILL_SUID | ATTR_KILL_SGID))

534 lower_ia.ia_valid &= ~ATTR_MODE;

535

536 /* notify the (possibly copied-up) lower inode */

537 /*

538 * Note: we use lower_dentry->d_inode, because lower_inode may be

539 * unlinked (no inode->i_sb and i_ino==0. This happens if someone

540 * tries to open(), unlink(), then ftruncate() a file.

541 */

542

mutex_lock(&lower_dentry->d_inode->i_mutex);

543 err = notify_change(lower_dentry, &lower_ia, /* note: lower_ia */

544 NULL);

545

mutex_unlock(&lower_dentry->d_inode->i_mutex);

546 - if (current->mm)

547 - up_write(¤t->mm->mmap_sem);

548 lockdep_on();