SSD-Tensorflow超详细解析【一】:加载模型对图片进行测试

SSD-tensorflow——github下载地址:SSD-Tensorflow

目标检测的块速实现

下载完成之后我们打开工程,可以看到如下图所示的文件布局:

首先我们打开checkpoints文件,解压缩ssd_300_vgg.ckpt.zip文件到checkpoints目录下面。注意:解压到checkpoints文件夹下即可,不要有子文件夹。

然后打开notebooks的ssd_tests.ipyb文件(使用Jupyter或者ipython)。为了方便调试,将其保存为.py文件,或者直接在notebooks下新建一个test.py文件,然后复制如下代码:

# coding: utf-8

# In[1]:

import os

import math

import random

import numpy as np

import tensorflow as tf

import cv2

slim = tf.contrib.slim

# In[2]:

import matplotlib.pyplot as plt

import matplotlib.image as mpimg

# In[3]:

import sys

sys.path.append('./')

# In[4]:

from nets import ssd_vgg_300, ssd_common, np_methods

from preprocessing import ssd_vgg_preprocessing

from notebooks import visualization

# In[5]:

# TensorFlow session: grow memory when needed. TF, DO NOT USE ALL MY GPU MEMORY!!!

gpu_options = tf.GPUOptions(allow_growth = True)

config = tf.ConfigProto(log_device_placement = False, gpu_options = gpu_options)

isess = tf.InteractiveSession(config = config)

# ## SSD 300 Model

#

# The SSD 300 network takes 300x300 image inputs. In order to feed any image, the latter is resize to this input shape (i.e.`Resize.WARP_RESIZE`). Note that even though it may change the ratio width / height, the SSD model performs well on resized images (and it is the default behaviour in the original Caffe implementation).

#

# SSD anchors correspond to the default bounding boxes encoded in the network. The SSD net output provides offset on the coordinates and dimensions of these anchors.

# In[6]:

# Input placeholder.

net_shape = (300, 300)

data_format = 'NHWC'

img_input = tf.placeholder(tf.uint8, shape = (None, None, 3))

# Evaluation pre-processing: resize to SSD net shape.

image_pre, labels_pre, bboxes_pre, bbox_img = ssd_vgg_preprocessing.preprocess_for_eval(

img_input, None, None, net_shape, data_format, resize = ssd_vgg_preprocessing.Resize.WARP_RESIZE)

image_4d = tf.expand_dims(image_pre, 0)

# Define the SSD model.

reuse = True if 'ssd_net' in locals() else None

ssd_net = ssd_vgg_300.SSDNet()

with slim.arg_scope(ssd_net.arg_scope(data_format = data_format)):

predictions, localisations, _, _ = ssd_net.net(image_4d, is_training = False, reuse = reuse)

# Restore SSD model.

ckpt_filename = 'D:\py project\SSD-Tensorflow\checkpoints/ssd_300_vgg.ckpt'

# ckpt_filename = '../checkpoints/VGG_VOC0712_SSD_300x300_ft_iter_120000.ckpt'

isess.run(tf.global_variables_initializer())

saver = tf.train.Saver()

saver.restore(isess, ckpt_filename)

# SSD default anchor boxes.

ssd_anchors = ssd_net.anchors(net_shape)

# ## Post-processing pipeline

#

# The SSD outputs need to be post-processed to provide proper detections. Namely, we follow these common steps:

#

# * Select boxes above a classification threshold;

# * Clip boxes to the image shape;

# * Apply the Non-Maximum-Selection algorithm: fuse together boxes whose Jaccard score > threshold;

# * If necessary, resize bounding boxes to original image shape.

# In[7]:

# Main image processing routine.

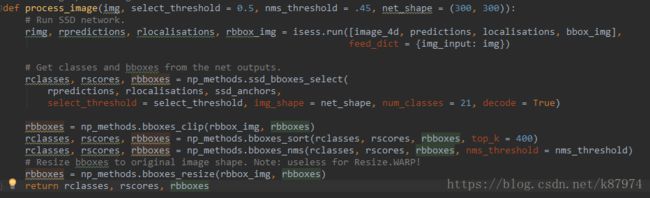

def process_image(img, select_threshold = 0.5, nms_threshold = .45, net_shape = (300, 300)):

# Run SSD network.

rimg, rpredictions, rlocalisations, rbbox_img = isess.run([image_4d, predictions, localisations, bbox_img],

feed_dict = {img_input: img})

# Get classes and bboxes from the net outputs.

rclasses, rscores, rbboxes = np_methods.ssd_bboxes_select(

rpredictions, rlocalisations, ssd_anchors,

select_threshold = select_threshold, img_shape = net_shape, num_classes = 21, decode = True)

rbboxes = np_methods.bboxes_clip(rbbox_img, rbboxes)

rclasses, rscores, rbboxes = np_methods.bboxes_sort(rclasses, rscores, rbboxes, top_k = 400)

rclasses, rscores, rbboxes = np_methods.bboxes_nms(rclasses, rscores, rbboxes, nms_threshold = nms_threshold)

# Resize bboxes to original image shape. Note: useless for Resize.WARP!

rbboxes = np_methods.bboxes_resize(rbbox_img, rbboxes)

return rclasses, rscores, rbboxes

# In[21]:

# Test on some demo image and visualize output.

path = 'D:\py project\SSD-Tensorflow\demo/'

image_names = sorted(os.listdir(path))

img = mpimg.imread(path + image_names[-1])

rclasses, rscores, rbboxes = process_image(img)

# visualization.bboxes_draw_on_img(img, rclasses, rscores, rbboxes, visualization.colors_plasma)

visualization.plt_bboxes(img, rclasses, rscores, rbboxes)修改代码中的ckpt_filename为你的工程目录下的chepoints下的ckpt文件。path为你想要进行测试的图片目录(代码中只对该目录下的最后一个文件夹进行测试,如果要想测试多幅图片或者做成视频的方式,需大家自行修改代码)。

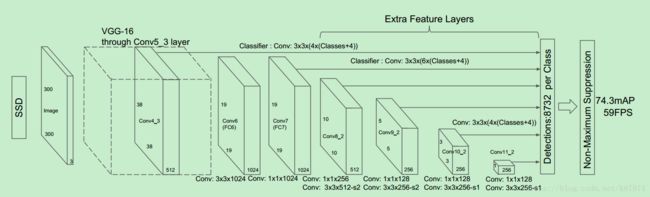

SSD的网络结构

理论上来讲,现在代码已经可以跑了。但是我们还是需要学习一下它是如何实现SSD这一目标检测过程的。

我们打开nets中的ssd_vgg_300.py文件,里面就是整个基于vgg的ssd目标检测网络。其网络结构设计参照下图:

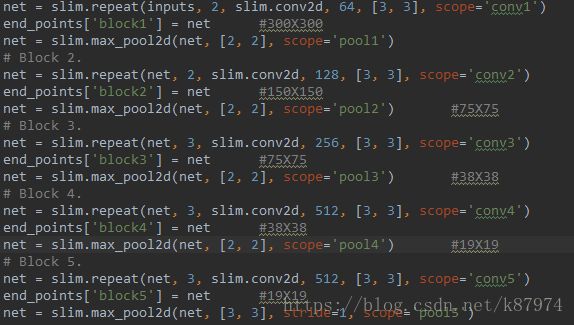

下图为代码的一个实现,

这个里面实现的是input-conv-conv-block1-pool-conv-conv-block2-pool-conv-conv-conv-block3-pool-conv-conv-conv-block4-pool-conv-conv-conv-block5-pool-conv-block6-dropout-conv-block7-dropout-conv-conv-block8-conv-conv-block9-conv-conv-block11。

每一层的feature maps都是存到了end_points这个dic里面,这是整个基本网络结构的设计。再看一下SSDParams里面的定义:

default_params = SSDParams(

img_shape=(300, 300), #输入的size

num_classes=21, # 类数

no_annotation_label=21,

feat_layers=['block4', 'block7', 'block8', 'block9', 'block10', 'block11'], #需要抽取并做额外卷积来做目标检测的层

feat_shapes=[(38, 38), (19, 19), (10, 10), (5, 5), (3, 3), (1, 1)], #对应上面的层的feature maps的size大小

anchor_size_bounds=[0.15, 0.90],

# anchor_size_bounds=[0.20, 0.90],

anchor_sizes=[(21., 45.), # anchor size,定义的default box的size,之后再计算anchor的时候用到

(45., 99.),

(99., 153.),

(153., 207.),

(207., 261.),

(261., 315.)],

# anchor_sizes=[(30., 60.),

# (60., 111.),

# (111., 162.),

# (162., 213.),

# (213., 264.),

# (264., 315.)],

anchor_ratios=[[2, .5], # 长宽比,即论文里面提到的 ratios(2,1/2,3,1/3)

[2, .5, 3, 1./3],

[2, .5, 3, 1./3],

[2, .5, 3, 1./3],

[2, .5],

[2, .5]],

anchor_steps=[8, 16, 32, 64, 100, 300], #caffe实现的时候使用的初始化anchor方法,后面会讲到

anchor_offset=0.5, #偏移,计算中心点时用到

normalizations=[20, -1, -1, -1, -1, -1],

prior_scaling=[0.1, 0.1, 0.2, 0.2]

)其中feat-layers里面保存的是我们在目标检测时需要用到的feature层,一共有6个层,其size对应feat_shapes里面的数值。

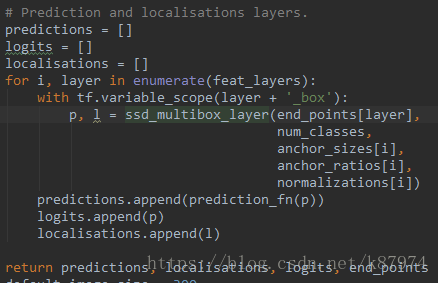

我们再继续看SSD是如何预测目标的类别以及框的位置的,下图接SSD网络结构设计部分:

这里的一个for循环依次计算feat_layers里面的类别与位置——从block4、7、8、9、10、11,然后append到相应的数组里面。对于里面的细节,我们转到ssd_multibox_layer,如下代码所示:

def ssd_multibox_layer(inputs,

num_classes,

sizes,

ratios=[1],

normalization=-1,

bn_normalization=False):

"""Construct a multibox layer, return a class and localization predictions.

"""

net = inputs

if normalization > 0:

net = custom_layers.l2_normalization(net, scaling=True) #对特征层做l2的标准化处理

# Number of anchors.

num_anchors = len(sizes) + len(ratios) #计算default box的数量,分别为 4 6 6 6 4 4

# Location.

num_loc_pred = num_anchors * 4 #预测的位置信息= 4*num_anchors , 即 ymin,xmin,ymax,xmax.

loc_pred = slim.conv2d(net, num_loc_pred, [3, 3], activation_fn=None, #对特征层做卷积,输出channels为4*num_anchors

scope='conv_loc')

loc_pred = custom_layers.channel_to_last(loc_pred) # ensure data format be "NWHC"

loc_pred = tf.reshape(loc_pred,

tensor_shape(loc_pred, 4)[:-1]+[num_anchors, 4]) #将得到的feature maps reshape为[N,W,H,num_anchors,4]

# Class prediction.

num_cls_pred = num_anchors * num_classes # 预测的类别信息 21*4=84

cls_pred = slim.conv2d(net, num_cls_pred, [3, 3], activation_fn=None,

scope='conv_cls')

cls_pred = custom_layers.channel_to_last(cls_pred)

cls_pred = tf.reshape(cls_pred,

tensor_shape(cls_pred, 4)[:-1]+[num_anchors, num_classes]) # reshape 为[N,W,H,num_anchors,classes]

return cls_pred, loc_pred该段代码实现对指定feature layers的位置预测以及类别预测。首先计算anchors的数量,对于位置信息,输出16通道的feature map,将其reshape为[N,W,H,num_anchors,4]。对于类别信息,输出84通道的feature maps,再将其reshape为[N,W,H,num_anchors,num_classes]。返回计算得到的位置和类别预测。

anchor box的初始化

初始过程的思想大致如此:设置好相应的anchor size,ratios(长宽比),offset(偏移)等,对于每一层feature layers,计算出相对的anchor size,论文中如右所示: 。smin=0.2。300*0.2=60,而SSDNet中定义的anchor size 为(21., 45.),(45., 99.),(99., 153.), (153., 207.),(207., 261.),(261., 315.)]。可见其大小为自己设置的,并非由公式得到的。

。smin=0.2。300*0.2=60,而SSDNet中定义的anchor size 为(21., 45.),(45., 99.),(99., 153.), (153., 207.),(207., 261.),(261., 315.)]。可见其大小为自己设置的,并非由公式得到的。

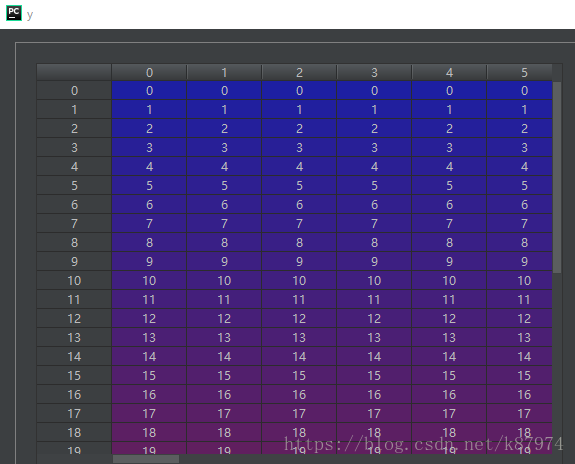

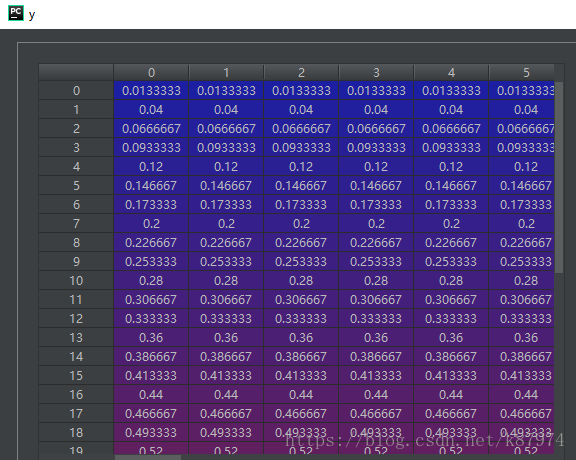

代码中的实现,以第一层feat_layer(block4,38x38)为例:对于y,x各生成一个相对应的网格矩阵,矩阵数值如下:

然后对其做标准化处理,比较简单的方法为(y+offset)/feat_shape,以第一层为例,即(y+0.5)/38可得到归一化的坐标,归一化的数组如下:

然后定义anchors的宽度和高度,其宽度和高度分别对应论文中![]() 、

、![]() ,以及当ratios=1时对应

,以及当ratios=1时对应![]() 。其中ar即为定义好的anchor_ratios。以第一层为例,共有4个anchors,其中第一个的h和w为

。其中ar即为定义好的anchor_ratios。以第一层为例,共有4个anchors,其中第一个的h和w为![]() 21/300。第二个h和w为

21/300。第二个h和w为

其初始化主要由下面三个函数完成,其调用关系为从上到下:

def ssd_anchor_one_layer(img_shape,

feat_shape,

sizes,

ratios,

step,

offset=0.5,

dtype=np.float32):

"""Computer SSD default anchor boxes for one feature layer.

Determine the relative position grid of the centers, and the relative

width and height.

Arguments:

feat_shape: Feature shape, used for computing relative position grids;

size: Absolute reference sizes;

ratios: Ratios to use on these features;

img_shape: Image shape, used for computing height, width relatively to the

former;

offset: Grid offset.

Return:

y, x, h, w: Relative x and y grids, and height and width.

"""

# Compute the position grid: simple way. #计算中心点的归一化距离

# y, x = np.mgrid[0:feat_shape[0], 0:feat_shape[1]]

# y = (y.astype(dtype) + offset) / feat_shape[0]

# x = (x.astype(dtype) + offset) / feat_shape[1]

# Weird SSD-Caffe computation using steps values...

y, x = np.mgrid[0:feat_shape[0], 0:feat_shape[1]] #生成一个网格矩阵 y,x均为[38,38],其中y为从上到下从0到37。y为从左到右0到37

y = (y.astype(dtype) + offset) * step / img_shape[0] #以第一个元素为例,简单方法为:(0+0.5)/38即为相对距离,而SSD-Caffe使用的是(0+0.5)*step/img_shape

x = (x.astype(dtype) + offset) * step / img_shape[1]

# Expand dims to support easy broadcasting.

y = np.expand_dims(y, axis=-1) # [38,38,1]

x = np.expand_dims(x, axis=-1)

# Compute relative height and width.

# Tries to follow the original implementation of SSD for the order.

num_anchors = len(sizes) + len(ratios)

h = np.zeros((num_anchors, ), dtype=dtype)

w = np.zeros((num_anchors, ), dtype=dtype)

# Add first anchor boxes with ratio=1.

h[0] = sizes[0] / img_shape[0]

w[0] = sizes[0] / img_shape[1]

di = 1

if len(sizes) > 1:

h[1] = math.sqrt(sizes[0] * sizes[1]) / img_shape[0]

w[1] = math.sqrt(sizes[0] * sizes[1]) / img_shape[1]

di += 1

for i, r in enumerate(ratios):

h[i+di] = sizes[0] / img_shape[0] / math.sqrt(r)

w[i+di] = sizes[0] / img_shape[1] * math.sqrt(r)

return y, x, h, w最终返回y,x,h,w的数值信息。

边界框的选取

上面讲了SSDNet的网络结构以及anchor_bbox的初始化,通过上述方法可以得到初步的类别与位置预测。这里我们进入到np_methods.ssd_bboxes_select函数里面,查看SSD是如何选择对应的bboxes的。

其函数如下(函数调用关系为从上往下):

def ssd_bboxes_select_layer(predictions_layer,

localizations_layer,

anchors_layer,

select_threshold=0.5,

img_shape=(300, 300),

num_classes=21,

decode=True):

"""Extract classes, scores and bounding boxes from features in one layer.

Return:

classes, scores, bboxes: Numpy arrays...

"""

# First decode localizations features if necessary.

if decode:

localizations_layer = ssd_bboxes_decode(localizations_layer, anchors_layer) #解码成ymin,xmin,ymax,xmax的形式

# Reshape features to: Batches x N x N_labels | 4.

p_shape = predictions_layer.shape #以第一层为例,这里的值为(1,38,38,4,21)单幅图片

batch_size = p_shape[0] if len(p_shape) == 5 else 1

predictions_layer = np.reshape(predictions_layer,

(batch_size, -1, p_shape[-1])) #reshape之后为(1,5776,21)

l_shape = localizations_layer.shape #(1,38,38,4,4) 为边界框的坐标信息

localizations_layer = np.reshape(localizations_layer,

(batch_size, -1, l_shape[-1])) #reshape之后为(1,5776,4)

# Boxes selection: use threshold or score > no-label criteria.

if select_threshold is None or select_threshold == 0:

# Class prediction and scores: assign 0. to 0-class

classes = np.argmax(predictions_layer, axis=2)

scores = np.amax(predictions_layer, axis=2)

mask = (classes > 0)

classes = classes[mask]

scores = scores[mask]

bboxes = localizations_layer[mask]

else:

sub_predictions = predictions_layer[:, :, 1:] #去掉背景类别

idxes = np.where(sub_predictions > select_threshold) #得到预测的类别中阈值大于select_threshold的索引值。

classes = idxes[-1]+1 #因为去掉了背景类别,全体的类别索引减了1,所以该处的类需要+1

scores = sub_predictions[idxes] #得到所有已选定(大于给定阈值)的类别的分数。这里的话数组维度应该会大幅减少

bboxes = localizations_layer[idxes[:-1]] #得到所有已选定的类的坐标值

return classes, scores, bboxes

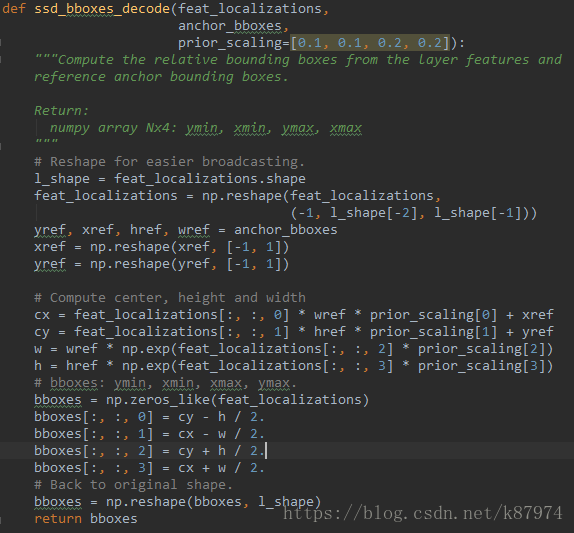

对于每一层的feat_layer与anchor boxes,需要先对其decode,返回边界框的ymin,xmin,ymax,xmax。再根据给定的阈值选择大于其值的类和边界框。并appen到一个数组中返回。

边界框的裁剪

此时得到的rbboxes数组中的部分值是大于1的,而在我们的归一化表示中,整个框的宽度和高度都是等于1的,因此需要对其进行裁剪,保证最大值不超过1,最小值不小于0。其代码如下所示:

def bboxes_clip(bbox_ref, bboxes):

"""Clip bounding boxes with respect to reference bbox.

"""

bboxes = np.copy(bboxes)

bboxes = np.transpose(bboxes)

bbox_ref = np.transpose(bbox_ref) #其中 bbox_ref为一个[0,0,1,1]的数组

bboxes[0] = np.maximum(bboxes[0], bbox_ref[0])

bboxes[1] = np.maximum(bboxes[1], bbox_ref[1])

bboxes[2] = np.minimum(bboxes[2], bbox_ref[2])

bboxes[3] = np.minimum(bboxes[3], bbox_ref[3])

bboxes = np.transpose(bboxes)

return bboxes保留指定数量的边界框

得到了裁剪之后的边界框和类别,我们需要对其做一个降序排序,并保留指定数量的最优的那一部分。个中参数可以自行调节,这里只保留至多400个。其代码如下:

def bboxes_sort(classes, scores, bboxes, top_k=400):

"""Sort bounding boxes by decreasing order and keep only the top_k

"""

# if priority_inside:

# inside = (bboxes[:, 0] > margin) & (bboxes[:, 1] > margin) & \

# (bboxes[:, 2] < 1-margin) & (bboxes[:, 3] < 1-margin)

# idxes = np.argsort(-scores)

# inside = inside[idxes]

# idxes = np.concatenate([idxes[inside], idxes[~inside]])

idxes = np.argsort(-scores)

classes = classes[idxes][:top_k]

scores = scores[idxes][:top_k]

bboxes = bboxes[idxes][:top_k]

return classes, scores, bboxes非极大值抑制(NMS)

在得到了指定数量的边界框和类别之后。对于同一个类存在多个框的情况下,要找到一个最合适的,并去掉其他冗余的框,需要进行非极大值抑制的操作,其代码实现如下:

def bboxes_nms(classes, scores, bboxes, nms_threshold=0.45):

"""Apply non-maximum selection to bounding boxes.

"""

keep_bboxes = np.ones(scores.shape, dtype=np.bool)

for i in range(scores.size-1):

if keep_bboxes[i]:

# Computer overlap with bboxes which are following.

overlap = bboxes_jaccard(bboxes[i], bboxes[(i+1):]) #计算当前框与之后所有框的IOU

# Overlap threshold for keeping + checking part of the same class

keep_overlap = np.logical_or(overlap < nms_threshold, classes[(i+1):] != classes[i]) #对于所有IOU小于0.45或者当前类别与之后类别不相同的位置,置为True

keep_bboxes[(i+1):] = np.logical_and(keep_bboxes[(i+1):], keep_overlap) # 将上面得到的所有IOU小于0.45或类别不同的位置赋给keep_bboxes

idxes = np.where(keep_bboxes)

return classes[idxes], scores[idxes], bboxes[idxes]边界框的Resize

代码中有一步是对bboxes进行resize,使其恢复到original image shape。但是由于这里使用的是归一化的表示方式,所以意义不大,但还是贴一下代码:

目标检测的可视化

在完成了上述所有操作之后,我们得到了最终的classes,scores,localizations。我们需要对其进行可视化,将所有的目标类别、分数以及边界框的坐标画在我们的原图上,这里由如下代码完成:

def plt_bboxes(img, classes, scores, bboxes, figsize=(10,10), linewidth=1.5):

"""Visualize bounding boxes. Largely inspired by SSD-MXNET!

"""

fig = plt.figure(figsize=figsize)

plt.imshow(img)

height = img.shape[0]

width = img.shape[1]

colors = dict()

for i in range(classes.shape[0]):

cls_id = int(classes[i])

if cls_id >= 0:

score = scores[i]

if cls_id not in colors:

colors[cls_id] = (random.random(), random.random(), random.random())

ymin = int(bboxes[i, 0] * height)

xmin = int(bboxes[i, 1] * width)

ymax = int(bboxes[i, 2] * height)

xmax = int(bboxes[i, 3] * width)

rect = plt.Rectangle((xmin, ymin), xmax - xmin,

ymax - ymin, fill=False,

edgecolor=colors[cls_id],

linewidth=linewidth)

plt.gca().add_patch(rect)

class_name = str(cls_id)

plt.gca().text(xmin, ymin - 2,

'{:s} | {:.3f}'.format(class_name, score),

bbox=dict(facecolor=colors[cls_id], alpha=0.5),

fontsize=12, color='white')

plt.show()值得一提的是,因为边界框的表示是归一化的,所以要恢复成原图的尺寸的话,只需要对相应的坐标乘上原图的Height和width即可。

最终的结果如下:

好了,本篇博文到此结束,下一篇讲讲如何利用SSD_tensorflow训练自己的数据集。

如果你觉得有用,帮忙扫个红包支持一下吧: