Deep Learning Study Notes - Planar data classification with one hidden layer (Week 3 assignment)

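0 - Background:

In the previous assignment, the neural network had only an output layer and no hidden layer. This post builds a neural network model with a single hidden layer, covering: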

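- A 2-class classification neural network with a single hidden layer

- Neurons with a non-linear activation function, such as tanh

- Computing the cross-entropy loss

- Implementing forward and backward propagation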

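1 - Prerequisites:

This exercise uses the following Python libraries:

- numpy is the fundamental package for scientific computing in Python

- sklearn provides simple and efficient tools for data mining and data analysis

- matplotlib is a library for plotting graphs

- testCases provides test examples for checking the corresponding functions

- planar_utils provides various other helper functions used in this exercise

Module import code: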

import numpy as np
import matplotlib.pyplot as plt
from testCases import *
import sklearn
import sklearn.datasets
import sklearn.linear_model
from planar_utils import plot_decision_boundary, sigmoid, load_planar_dataset, load_extra_datasets

%matplotlib inline

np.random.seed(1)  # set a seed so that the results are consistent

Installing sklearn (the package is published on PyPI as scikit-learn; the old "sklearn" package name is deprecated):

pip install scikit-learn

2 - Dataset

The following code loads a 2-class dataset and visualizes it:

X, Y = load_planar_dataset()

# Visualize the data:

plt.scatter(X[0, :], X[1, :], c=Y, s=40, cmap=plt.cm.Spectral);

Visualization result:

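The data points form a flower shape: red points have label y=0 and blue points have label y=1. The goal is to build a model that separates the two classes.

X is a matrix containing the features (x1, x2).

Y is a vector containing the corresponding labels (red: 0, blue: 1).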

Shapes:

### START CODE HERE ### (≈ 3 lines of code)
shape_X = X.shape
shape_Y = Y.shape
m = shape_X[1]  # training set size
### END CODE HERE ###

print('The shape of X is: ' + str(shape_X))
print('The shape of Y is: ' + str(shape_Y))
print('I have m = %d training examples!' % (m))

Output:

The shape of X is: (2, 400)

The shape of Y is: (1, 400)

I have m = 400 training examples!

3 - Simple Logistic Regression

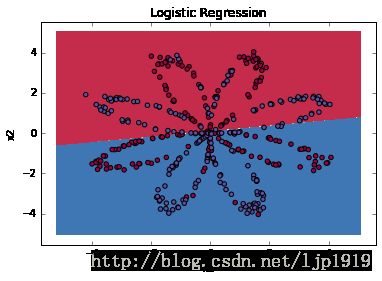

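Before building a full neural network, let's first see how logistic regression performs on this problem. We can use sklearn's built-in functions for this:

# Train the logistic regression classifier
clf = sklearn.linear_model.LogisticRegressionCV();
clf.fit(X.T, Y.T);

Running this produces the following warning: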

c:\users\jason\appdata\local\programs\python\python35\lib\site-packages\sklearn\utils\validation.py:547: DataConversionWarning: A column-vector y was passed when a 1d array was expected. Please change the shape of y to (n_samples, ), for example using ravel().

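y = column_or_1d(y, warn=True)

As the warning suggests, flattening the labels with ravel() changes their shape from (1, 400) to (400,) and silences it. Pass the flattened copy only to fit, e.g. clf.fit(X.T, Y.ravel()), because the rest of the code expects Y to keep its (1, 400) shape.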
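Here is a minimal sketch of what ravel() does to a label row vector like Y. It uses a made-up 4-element Y instead of the real dataset. ravel() returns a 1-D array of shape (m,) and leaves the original (1, m) array unchanged.

```python
import numpy as np

# Made-up labels stored the way load_planar_dataset returns them: a (1, m) row vector.
Y = np.array([[0, 1, 1, 0]])
y_flat = Y.ravel()              # 1-D array of shape (m,), the form sklearn expects for y
print(Y.shape, y_flat.shape)    # (1, 4) (4,)
# Y itself keeps its (1, m) shape, so code relying on Y.shape[1] still works.
```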

Now plot the model's decision boundary:

# Plot the decision boundary for logistic regression
plot_decision_boundary(lambda x: clf.predict(x), X, Y)  # may emit warnings
plt.title("Logistic Regression")

# Print accuracy
LR_predictions = clf.predict(X.T)
print('Accuracy of logistic regression: %d ' % float((np.dot(Y, LR_predictions) + np.dot(1 - Y, 1 - LR_predictions)) / float(Y.size) * 100) +
      '% ' + "(percentage of correctly labelled datapoints)")

Output:

Accuracy of logistic regression: 47 % (percentage of correctly labelled datapoints)

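The accuracy is only 47%. The dataset is not linearly separable, so logistic regression cannot fit it well. This motivates the main topic of this post: a neural network.

4 - Neural Network Model

Logistic regression did not work well on the flower dataset, so we try a neural network with a single hidden layer.

The model has one hidden layer between the input layer and the output layer. The hidden layer uses the tanh activation function and the output layer uses the sigmoid function σ.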

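Mathematically, for one training example x^{(i)}:

$$z^{[1](i)} = W^{[1]} x^{(i)} + b^{[1]}$$
$$a^{[1](i)} = \tanh(z^{[1](i)})$$
$$z^{[2](i)} = W^{[2]} a^{[1](i)} + b^{[2]}$$
$$\hat{y}^{(i)} = a^{[2](i)} = \sigma(z^{[2](i)})$$
$$y^{(i)}_{prediction} = \begin{cases} 1 & \text{if } a^{[2](i)} > 0.5 \\ 0 & \text{otherwise} \end{cases}$$

The loss for a single example is the same as in logistic regression:

$$\mathcal{L}(a^{[2](i)}, y^{(i)}) = -\left( y^{(i)} \log a^{[2](i)} + (1 - y^{(i)}) \log\left(1 - a^{[2](i)}\right) \right)$$

This loss is also called the cross-entropy loss. It measures how far the predicted probability distribution is from the true one.

After computing the predictions for all m examples, the overall cost J is the average loss:

$$J = -\frac{1}{m} \sum_{i=1}^{m} \left( y^{(i)} \log a^{[2](i)} + (1 - y^{(i)}) \log\left(1 - a^{[2](i)}\right) \right)$$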
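To make the cost formula concrete, here is a small NumPy sketch. cross_entropy_cost is a hypothetical helper that mirrors compute_cost, and the labels are made up. A network that outputs 0.5 for every example has cost ln 2 ≈ 0.693. This is why the cost printed at iteration 0 in section 4-5-1 (0.693048) is almost exactly that value: with weights initialized near zero, the sigmoid output starts close to 0.5.

```python
import numpy as np

def cross_entropy_cost(A2, Y):
    """J = -(1/m) * sum(y*log(a) + (1-y)*log(1-a)) for A2, Y of shape (1, m)."""
    m = Y.shape[1]
    logprobs = Y * np.log(A2) + (1 - Y) * np.log(1 - A2)
    return float(-np.sum(logprobs) / m)

Y = np.array([[1, 0, 1, 0]])
# Outputting 0.5 everywhere carries no information: J = ln 2.
print(cross_entropy_cost(np.full((1, 4), 0.5), Y))   # ≈ 0.6931
# Confident and correct predictions give a cost close to 0.
print(cross_entropy_cost(np.array([[0.99, 0.01, 0.99, 0.01]]), Y))
```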

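Reminder: the general methodology for building a neural network is:

1. Define the network structure (number of input units, number of hidden units, etc.).

2. Initialize the model's parameters.

3. Loop:

- Implement forward propagation

- Compute the loss

- Implement backward propagation to get the gradients

- Update the parameters (gradient descent)

It is customary to implement steps 1-3 as separate helper functions and then merge them into one model function (nn_model() below). Once the model is built and the parameters have been learned by iteration, it can make predictions on new data.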

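4-1 Defining the neural network structure

Define three variables:

- n_x: the size of the input layer

- n_h: the size of the hidden layer (set to 4 here)

- n_y: the size of the output layer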

# GRADED FUNCTION: layer_sizes

def layer_sizes(X, Y):
    """
    Arguments:
    X -- input dataset of shape (input size, number of examples)
    Y -- labels of shape (output size, number of examples)

    Returns:
    n_x -- the size of the input layer
    n_h -- the size of the hidden layer
    n_y -- the size of the output layer
    """
    ### START CODE HERE ### (≈ 3 lines of code)
    n_x = X.shape[0]  # size of input layer
    n_h = 4
    n_y = Y.shape[0]  # size of output layer
    ### END CODE HERE ###
    return (n_x, n_h, n_y)

4-2 Initializing the model parameters

Make sure the parameter shapes match the model above. Initialize the weight matrices randomly: np.random.randn(a,b) * 0.01 gives a random matrix of shape (a,b).

Initialize the biases b to zeros: np.zeros((a,b)).
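The text does not say why W is randomized while b can start at zero. The usual explanation is symmetry breaking. If every hidden unit starts with the same weights, the units compute the same activation and receive the same gradient, so they stay identical no matter how long you train. The sketch below illustrates this under stated assumptions:

- train_steps is a hypothetical helper that repeats this post's forward and backward equations for a few gradient-descent steps.
- The data are synthetic, not the flower dataset.

```python
import numpy as np

def train_steps(W1, b1, W2, b2, X, Y, steps=10, lr=1.2):
    """Hypothetical helper: a few gradient-descent steps on the tanh/sigmoid network."""
    m = X.shape[1]
    for _ in range(steps):
        A1 = np.tanh(W1 @ X + b1)
        A2 = 1 / (1 + np.exp(-(W2 @ A1 + b2)))
        dZ2 = A2 - Y
        dW2 = dZ2 @ A1.T / m
        db2 = dZ2.sum(axis=1, keepdims=True) / m
        dZ1 = (W2.T @ dZ2) * (1 - A1 ** 2)
        dW1 = dZ1 @ X.T / m
        db1 = dZ1.sum(axis=1, keepdims=True) / m
        W1, b1 = W1 - lr * dW1, b1 - lr * db1
        W2, b2 = W2 - lr * dW2, b2 - lr * db2
    return W1, W2

rng = np.random.default_rng(0)
X = rng.standard_normal((2, 20))                 # synthetic 2-D inputs
Y = (X[0:1] * X[1:2] > 0).astype(float)          # synthetic 0/1 labels, shape (1, 20)
n_h = 4

# "Bad" init for illustration: every hidden unit starts with identical weights.
W1_same, _ = train_steps(np.full((n_h, 2), 0.01), np.zeros((n_h, 1)),
                         np.full((1, n_h), 0.01), np.zeros((1, 1)), X, Y)
# Init as in initialize_parameters: small random weights, zero biases.
W1_rand, _ = train_steps(rng.standard_normal((n_h, 2)) * 0.01, np.zeros((n_h, 1)),
                         rng.standard_normal((1, n_h)) * 0.01, np.zeros((1, 1)), X, Y)

print(np.allclose(W1_same, W1_same[0]))  # all rows of W1 still identical
print(np.allclose(W1_rand, W1_rand[0]))  # rows differ: hidden units learn different features
```

Zero biases are harmless because the random W already makes the hidden units differ.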

Initialization code:

# GRADED FUNCTION: initialize_parameters

def initialize_parameters(n_x, n_h, n_y):
    """
    Argument:
    n_x -- size of the input layer
    n_h -- size of the hidden layer
    n_y -- size of the output layer

    Returns:
    params -- python dictionary containing your parameters:
                    W1 -- weight matrix of shape (n_h, n_x)
                    b1 -- bias vector of shape (n_h, 1)
                    W2 -- weight matrix of shape (n_y, n_h)
                    b2 -- bias vector of shape (n_y, 1)
    """
    np.random.seed(2)  # we set up a seed so that your output matches ours although the initialization is random.

    ### START CODE HERE ### (≈ 4 lines of code)
    W1 = np.random.randn(n_h, n_x) * 0.01
    b1 = np.zeros((n_h, 1))
    W2 = np.random.randn(n_y, n_h) * 0.01
    b2 = np.zeros((n_y, 1))
    ### END CODE HERE ###

    assert (W1.shape == (n_h, n_x))
    assert (b1.shape == (n_h, 1))
    assert (W2.shape == (n_y, n_h))
    assert (b2.shape == (n_y, 1))

    parameters = {"W1": W1,
                  "b1": b1,
                  "W2": W2,
                  "b2": b2}

    return parameters

4-3 The loop

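4-3-1 Forward propagation

Define a function for forward propagation that uses tanh as the hidden-layer activation and sigmoid as the output activation. Use the initialized parameters to compute Z[1], A[1], Z[2] and A[2]. Store these values in cache, because backward propagation needs them later.

Forward propagation code: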

# GRADED FUNCTION: forward_propagation

def forward_propagation(X, parameters):
    """
    Argument:
    X -- input data of size (n_x, m)
    parameters -- python dictionary containing your parameters (output of initialization function)

    Returns:
    A2 -- The sigmoid output of the second activation
    cache -- a dictionary containing "Z1", "A1", "Z2" and "A2"
    """
    # Retrieve each parameter from the dictionary "parameters"
    ### START CODE HERE ### (≈ 4 lines of code)
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    ### END CODE HERE ###

    # Implement Forward Propagation to calculate A2 (probabilities)
    ### START CODE HERE ### (≈ 4 lines of code)
    Z1 = np.dot(W1, X) + b1
    A1 = np.tanh(Z1)
    Z2 = np.dot(W2, A1) + b2
    A2 = 1 / (1 + np.exp(-Z2))
    ### END CODE HERE ###

    # Print the shapes of the intermediate variables for checking
    # (remove these before calling nn_model, which runs this function thousands of times)
    print("Z1 size=", Z1.shape)
    print("A1 size=", A1.shape)
    print("Z2 size=", Z2.shape)
    print("A2 size=", A2.shape)

    assert(A2.shape == (1, X.shape[1]))

    cache = {"Z1": Z1,
             "A1": A1,
             "Z2": Z2,
             "A2": A2}

    return A2, cache

Test code:

def forward_propagation_test_case():
    np.random.seed(1)
    X_assess = np.random.randn(2, 3)
    parameters = {'W1': np.array([[-0.00416758, -0.00056267],
                                  [-0.02136196,  0.01640271],
                                  [-0.01793436, -0.00841747],
                                  [ 0.00502881, -0.01245288]]),
                  'W2': np.array([[-0.01057952, -0.00909008,  0.00551454,  0.02292208]]),
                  'b1': np.array([[ 0.],
                                  [ 0.],
                                  [ 0.],
                                  [ 0.]]),
                  'b2': np.array([[ 0.]])}
    return X_assess, parameters

X_assess, parameters = forward_propagation_test_case()
print(X_assess.shape)
A2, cache = forward_propagation(X_assess, parameters)

# Note: we use the mean here just to make sure that your output matches ours.
print(np.mean(cache['Z1']), np.mean(cache['A1']), np.mean(cache['Z2']), np.mean(cache['A2']))

Output:

(2, 3)

Z1 size= (4, 3)

A1 size= (4, 3)

Z2 size= (1, 3)

A2 size= (1, 3)

-0.000499755777742 -0.000496963353232 0.000438187450959 0.500109546852

Note the shape of each variable.

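4-3-2 Cost function

The cache stores A[2] (A2 in the code), which contains a[2](i) for every example. We can therefore compute the cost over the whole training set:

$$J = -\frac{1}{m} \sum_{i=1}^{m} \left( y^{(i)} \log a^{[2](i)} + (1 - y^{(i)}) \log\left(1 - a^{[2](i)}\right) \right)$$

In Python, the term $-\sum_{i=1}^{m} y^{(i)} \log(a^{[2](i)})$ of the cross-entropy loss can be computed in two steps:

logprobs = np.multiply(np.log(A2), Y)  # element-wise product
cost = - np.sum(logprobs)  # no need to use a for loop!

Implementation (it also adds the (1 - y) log(1 - a) term and divides by m):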

# GRADED FUNCTION: compute_cost

def compute_cost(A2, Y, parameters):
    """
    Computes the cross-entropy cost given in equation (13)

    Arguments:
    A2 -- The sigmoid output of the second activation, of shape (1, number of examples)
    Y -- "true" labels vector of shape (1, number of examples)
    parameters -- python dictionary containing your parameters W1, b1, W2 and b2

    Returns:
    cost -- cross-entropy cost given equation (13)
    """
    m = Y.shape[1]  # number of examples

    # Compute the cross-entropy cost
    ### START CODE HERE ### (≈ 2 lines of code)
    logprobs = np.multiply(np.log(A2), Y) + np.multiply(np.log(1 - A2), 1 - Y)
    cost = -np.sum(logprobs) / m
    ### END CODE HERE ###

    cost = np.squeeze(cost)  # makes sure cost is the dimension we expect.
                             # E.g., turns [[17]] into 17
    assert(isinstance(cost, float))

    return cost

Test code:

def compute_cost_test_case():
    np.random.seed(1)
    Y_assess = np.random.randn(1, 3)
    parameters = {'W1': np.array([[-0.00416758, -0.00056267],
                                  [-0.02136196,  0.01640271],
                                  [-0.01793436, -0.00841747],
                                  [ 0.00502881, -0.01245288]]),
                  'W2': np.array([[-0.01057952, -0.00909008,  0.00551454,  0.02292208]]),
                  'b1': np.array([[ 0.],
                                  [ 0.],
                                  [ 0.],
                                  [ 0.]]),
                  'b2': np.array([[ 0.]])}
    a2 = (np.array([[ 0.5002307 ,  0.49985831,  0.50023963]]))
    return a2, Y_assess, parameters

A2, Y_assess, parameters = compute_cost_test_case()
print("cost = " + str(compute_cost(A2, Y_assess, parameters)))

Output:

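cost = 0.692919893776

4-3-3 Backward propagation

Using the values stored in cache during forward propagation, we can now implement backward propagation. The equations are: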

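$$\frac{\partial J}{\partial z^{[2](i)}} = \frac{1}{m}\left(a^{[2](i)} - y^{(i)}\right)$$

$$\frac{\partial J}{\partial W^{[2]}} = \sum_i \frac{\partial J}{\partial z^{[2](i)}} \, a^{[1](i)T}$$

$$\frac{\partial J}{\partial b^{[2]}} = \sum_i \frac{\partial J}{\partial z^{[2](i)}}$$

$$\frac{\partial J}{\partial z^{[1](i)}} = W^{[2]T} \frac{\partial J}{\partial z^{[2](i)}} * \left(1 - \left(a^{[1](i)}\right)^2\right)$$

$$\frac{\partial J}{\partial W^{[1]}} = \sum_i \frac{\partial J}{\partial z^{[1](i)}} \, x^{(i)T}$$

$$\frac{\partial J}{\partial b^{[1]}} = \sum_i \frac{\partial J}{\partial z^{[1](i)}}$$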

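- Note that ∗ denotes element-wise multiplication.

The names in the code correspond to:

- dW1 = $\frac{\partial J}{\partial W^{[1]}}$

- db1 = $\frac{\partial J}{\partial b^{[1]}}$

- dW2 = $\frac{\partial J}{\partial W^{[2]}}$

- db2 = $\frac{\partial J}{\partial b^{[2]}}$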

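Tips:

- To compute dZ1 you first need $g^{[1]\prime}(Z^{[1]})$. Because $g^{[1]}(\cdot)$ is the tanh activation, if $a = g^{[1]}(z)$ then $g^{[1]\prime}(z) = 1 - a^2$. You can therefore compute $g^{[1]\prime}(Z^{[1]})$ with (1 - np.power(A1, 2)).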

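A finite-difference gradient check is one way to convince yourself that the backward-propagation equations and the tanh tip are correct. The original assignment code does not use this technique. The sketch below is self-contained and runs on small synthetic data; the helper names (cost_fn, backprop, numerical_grads) are hypothetical. It compares each analytic gradient with the centered difference (J(θ+ε) − J(θ−ε)) / 2ε and reports the relative error. If the formulas are implemented correctly, the error should be tiny (far below 1e-6).

```python
import numpy as np

def cost_fn(params, X, Y):
    """Cross-entropy cost J of the tanh/sigmoid network."""
    A1 = np.tanh(params["W1"] @ X + params["b1"])
    A2 = 1 / (1 + np.exp(-(params["W2"] @ A1 + params["b2"])))
    m = X.shape[1]
    return -np.sum(Y * np.log(A2) + (1 - Y) * np.log(1 - A2)) / m

def backprop(params, X, Y):
    """Analytic gradients using the six backward-propagation equations."""
    m = X.shape[1]
    A1 = np.tanh(params["W1"] @ X + params["b1"])
    A2 = 1 / (1 + np.exp(-(params["W2"] @ A1 + params["b2"])))
    dZ2 = A2 - Y
    dZ1 = (params["W2"].T @ dZ2) * (1 - A1 ** 2)      # tanh'(z) = 1 - a^2
    return {"W1": dZ1 @ X.T / m, "b1": dZ1.sum(axis=1, keepdims=True) / m,
            "W2": dZ2 @ A1.T / m, "b2": dZ2.sum(axis=1, keepdims=True) / m}

def numerical_grads(params, X, Y, eps=1e-6):
    """Centered finite differences, perturbing one parameter entry at a time."""
    grads = {}
    for name, P in params.items():
        G = np.zeros_like(P)
        for idx in np.ndindex(P.shape):
            old = P[idx]
            P[idx] = old + eps                        # P is modified in place,
            plus = cost_fn(params, X, Y)              # so cost_fn sees the change
            P[idx] = old - eps
            minus = cost_fn(params, X, Y)
            P[idx] = old                              # restore the original value
            G[idx] = (plus - minus) / (2 * eps)
        grads[name] = G
    return grads

rng = np.random.default_rng(0)
X = rng.standard_normal((2, 5))
Y = (rng.random((1, 5)) > 0.5).astype(float)
params = {"W1": rng.standard_normal((4, 2)) * 0.5, "b1": rng.standard_normal((4, 1)) * 0.1,
          "W2": rng.standard_normal((1, 4)) * 0.5, "b2": np.zeros((1, 1))}

analytic = backprop(params, X, Y)
numeric = numerical_grads(params, X, Y)
rel_errors = {}
for name in params:
    a, n = analytic[name], numeric[name]
    rel_errors[name] = np.linalg.norm(a - n) / (np.linalg.norm(a) + np.linalg.norm(n))
    print(name, rel_errors[name])
```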

Implementation:

# GRADED FUNCTION: backward_propagation

def backward_propagation(parameters, cache, X, Y):
    """
    Implement the backward propagation using the instructions above.

    Arguments:
    parameters -- python dictionary containing our parameters
    cache -- a dictionary containing "Z1", "A1", "Z2" and "A2".
    X -- input data of shape (2, number of examples)
    Y -- "true" labels vector of shape (1, number of examples)

    Returns:
    grads -- python dictionary containing your gradients with respect to different parameters
    """
    m = X.shape[1]

    # First, retrieve W1 and W2 from the dictionary "parameters".
    ### START CODE HERE ### (≈ 2 lines of code)
    W1 = parameters["W1"]
    W2 = parameters["W2"]
    ### END CODE HERE ###

    # Retrieve also A1 and A2 from dictionary "cache".
    ### START CODE HERE ### (≈ 2 lines of code)
    A1 = cache["A1"]
    A2 = cache["A2"]
    ### END CODE HERE ###

    # Backward propagation: calculate dW1, db1, dW2, db2.
    ### START CODE HERE ### (≈ 6 lines of code, corresponding to 6 equations on slide above)
    dZ2 = A2 - Y
    dW2 = np.dot(dZ2, A1.T) / m
    db2 = np.sum(dZ2, axis=1, keepdims=True) / m
    dZ1 = np.dot(W2.T, dZ2) * (1 - np.power(A1, 2))  # same as np.multiply(np.dot(W2.T, dZ2), (1 - np.power(A1, 2)))
    dW1 = np.dot(dZ1, X.T) / m
    db1 = np.sum(dZ1, axis=1, keepdims=True) / m
    ### END CODE HERE ###

    grads = {"dW1": dW1,
             "db1": db1,
             "dW2": dW2,
             "db2": db2}

    return grads

Test code:

def backward_propagation_test_case():
    np.random.seed(1)
    X_assess = np.random.randn(2, 3)
    Y_assess = np.random.randn(1, 3)
    parameters = {'W1': np.array([[-0.00416758, -0.00056267],
                                  [-0.02136196,  0.01640271],
                                  [-0.01793436, -0.00841747],
                                  [ 0.00502881, -0.01245288]]),
                  'W2': np.array([[-0.01057952, -0.00909008,  0.00551454,  0.02292208]]),
                  'b1': np.array([[ 0.],
                                  [ 0.],
                                  [ 0.],
                                  [ 0.]]),
                  'b2': np.array([[ 0.]])}
    cache = {'A1': np.array([[-0.00616578,  0.0020626 ,  0.00349619],
                             [-0.05225116,  0.02725659, -0.02646251],
                             [-0.02009721,  0.0036869 ,  0.02883756],
                             [ 0.02152675, -0.01385234,  0.02599885]]),
             'A2': np.array([[ 0.5002307 ,  0.49985831,  0.50023963]]),
             'Z1': np.array([[-0.00616586,  0.0020626 ,  0.0034962 ],
                             [-0.05229879,  0.02726335, -0.02646869],
                             [-0.02009991,  0.00368692,  0.02884556],
                             [ 0.02153007, -0.01385322,  0.02600471]]),
             'Z2': np.array([[ 0.00092281, -0.00056678,  0.00095853]])}
    return parameters, cache, X_assess, Y_assess

parameters, cache, X_assess, Y_assess = backward_propagation_test_case()
grads = backward_propagation(parameters, cache, X_assess, Y_assess)
print("dW1 = " + str(grads["dW1"]))
print("db1 = " + str(grads["db1"]))
print("dW2 = " + str(grads["dW2"]))
print("db2 = " + str(grads["db2"]))

Output:

dW1 = [[ 0.01018708 -0.00708701]

[ 0.00873447 -0.0060768 ]

[-0.00530847 0.00369379]

[-0.02206365 0.01535126]]

db1 = [[-0.00069728]

[-0.00060606]

[ 0.000364 ]

[ 0.00151207]]

dW2 = [[ 0.00363613 0.03153604 0.01162914 -0.01318316]]

db2 = [[ 0.06589489]]

4-3-4 Updating the parameters

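With the gradients from backward propagation, we can update the parameters by gradient descent:

$$\theta = \theta - \alpha \frac{\partial J}{\partial \theta}$$

where α is the learning rate and θ is the parameter being updated.

With a well-chosen learning rate, gradient descent converges. If the learning rate is too large, the updates oscillate and diverge.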
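Here is a toy illustration of the learning-rate remark. It assumes the simplest possible cost, J(θ) = θ², rather than the network's cost. Each update multiplies θ by (1 − 2α), so θ shrinks toward the minimum when |1 − 2α| < 1 and grows without bound when α is too large. gradient_descent is a hypothetical helper for this example only.

```python
def gradient_descent(alpha, theta=1.0, steps=20):
    """Minimize J(theta) = theta**2, whose gradient is 2*theta, with theta -= alpha * dJ/dtheta."""
    for _ in range(steps):
        theta = theta - alpha * 2 * theta
    return theta

print(gradient_descent(alpha=0.1))  # 0.8**20, about 0.0115: converges toward 0
print(gradient_descent(alpha=1.1))  # (-1.2)**20, about 38.3: oscillates in sign and diverges
```

The default learning_rate = 1.2 in update_parameters suits this particular problem. This toy cost shows that the safe range depends on the curvature of J.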

Parameter update code:

# GRADED FUNCTION: update_parameters

def update_parameters(parameters, grads, learning_rate = 1.2):
    """
    Updates parameters using the gradient descent update rule given above

    Arguments:
    parameters -- python dictionary containing your parameters
    grads -- python dictionary containing your gradients

    Returns:
    parameters -- python dictionary containing your updated parameters
    """
    # Retrieve each parameter from the dictionary "parameters"
    ### START CODE HERE ### (≈ 4 lines of code)
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    ### END CODE HERE ###

    # Retrieve each gradient from the dictionary "grads"
    ### START CODE HERE ### (≈ 4 lines of code)
    dW1 = grads["dW1"]
    db1 = grads["db1"]
    dW2 = grads["dW2"]
    db2 = grads["db2"]
    ### END CODE HERE ###

    # Update rule for each parameter (in place: the caller's arrays are modified too)
    ### START CODE HERE ### (≈ 4 lines of code)
    W1 -= learning_rate * dW1
    b1 -= learning_rate * db1
    W2 -= learning_rate * dW2
    b2 -= learning_rate * db2
    ### END CODE HERE ###

    parameters = {"W1": W1,
                  "b1": b1,
                  "W2": W2,
                  "b2": b2}

    return parameters

Test code:

def update_parameters_test_case():
    parameters = {'W1': np.array([[-0.00615039,  0.0169021 ],
                                  [-0.02311792,  0.03137121],
                                  [-0.0169217 , -0.01752545],
                                  [ 0.00935436, -0.05018221]]),
                  'W2': np.array([[-0.0104319 , -0.04019007,  0.01607211,  0.04440255]]),
                  'b1': np.array([[ -8.97523455e-07],
                                  [  8.15562092e-06],
                                  [  6.04810633e-07],
                                  [ -2.54560700e-06]]),
                  'b2': np.array([[  9.14954378e-05]])}
    grads = {'dW1': np.array([[ 0.00023322, -0.00205423],
                              [ 0.00082222, -0.00700776],
                              [-0.00031831,  0.0028636 ],
                              [-0.00092857,  0.00809933]]),
             'dW2': np.array([[ -1.75740039e-05,   3.70231337e-03,  -1.25683095e-03,
                                -2.55715317e-03]]),
             'db1': np.array([[  1.05570087e-07],
                              [ -3.81814487e-06],
                              [ -1.90155145e-07],
                              [  5.46467802e-07]]),
             'db2': np.array([[ -1.08923140e-05]])}
    return parameters, grads

parameters, grads = update_parameters_test_case()
parameters = update_parameters(parameters, grads)
print("W1 = " + str(parameters["W1"]))
print("b1 = " + str(parameters["b1"]))
print("W2 = " + str(parameters["W2"]))
print("b2 = " + str(parameters["b2"]))

Output:

W1 = [[-0.00643025 0.01936718]

[-0.02410458 0.03978052]

[-0.01653973 -0.02096177]

[ 0.01046864 -0.05990141]]

b1 = [[ -1.02420756e-06]

[ 1.27373948e-05]

[ 8.32996807e-07]

[ -3.20136836e-06]]

W2 = [[-0.01041081 -0.04463285 0.01758031 0.04747113]]

b2 = [[ 0.00010457]]

4-4 Integrating everything in nn_model()

Combine the modules from 4-1 to 4-3 into a complete neural network model:

# GRADED FUNCTION: nn_model

def nn_model(X, Y, n_h, num_iterations = 10000, print_cost=False):
    """
    Arguments:
    X -- dataset of shape (2, number of examples)
    Y -- labels of shape (1, number of examples)
    n_h -- size of the hidden layer
    num_iterations -- Number of iterations in gradient descent loop
    print_cost -- if True, print the cost every 1000 iterations

    Returns:
    parameters -- parameters learnt by the model. They can then be used to predict.
    """
    np.random.seed(3)
    n_x = layer_sizes(X, Y)[0]
    n_y = layer_sizes(X, Y)[2]

    # Initialize parameters, then retrieve W1, b1, W2, b2. Inputs: "n_x, n_h, n_y". Outputs = "W1, b1, W2, b2, parameters".
    ### START CODE HERE ### (≈ 5 lines of code)
    parameters = initialize_parameters(n_x, n_h, n_y)
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    ### END CODE HERE ###

    # Loop (gradient descent)
    for i in range(0, num_iterations):
        ### START CODE HERE ### (≈ 4 lines of code)
        # Forward propagation. Inputs: "X, parameters". Outputs: "A2, cache".
        A2, cache = forward_propagation(X, parameters)

        # Cost function. Inputs: "A2, Y, parameters". Outputs: "cost".
        cost = compute_cost(A2, Y, parameters)

        # Backpropagation. Inputs: "parameters, cache, X, Y". Outputs: "grads".
        grads = backward_propagation(parameters, cache, X, Y)

        # Gradient descent parameter update. Inputs: "parameters, grads". Outputs: "parameters".
        parameters = update_parameters(parameters, grads)
        ### END CODE HERE ###

        # Print the cost every 1000 iterations
        if print_cost and i % 1000 == 0:
            print("Cost after iteration %i: %f" % (i, cost))

    return parameters

Test code:

def nn_model_test_case():
    np.random.seed(1)
    X_assess = np.random.randn(2, 3)
    Y_assess = np.random.randn(1, 3)
    return X_assess, Y_assess

X_assess, Y_assess = nn_model_test_case()
parameters = nn_model(X_assess, Y_assess, 4, num_iterations=10000, print_cost=False)
print("W1 = " + str(parameters["W1"]))
print("b1 = " + str(parameters["b1"]))
print("W2 = " + str(parameters["W2"]))
print("b2 = " + str(parameters["b2"]))

Output:

W1 = [[-4.18494502 5.33220306]

[-7.52989352 1.24306198]

[-4.19295477 5.32631754]

[ 7.52983748 -1.24309404]]

b1 = [[ 2.32926814]

[ 3.79459053]

[ 2.3300254 ]

[-3.79468789]]

W2 = [[-6033.83672183 -6008.12981297 -6033.10095335 6008.0663689 ]]

b2 = [[-52.666077]]

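c:\users\jason\appdata\local\programs\python\python35\lib\site-packages\ipykernel_launcher.py:26: RuntimeWarning: overflow encountered in exp

The overflow comes from np.exp(-Z2) in the sigmoid. Once Z2 is very negative (W2 has grown into the thousands here), np.exp(-Z2) overflows to inf and 1/(1+inf) evaluates to 0, so the run does not crash. The weights grow this large because the test labels Y_assess are drawn with np.random.randn rather than being 0/1, so the cross-entropy has no finite minimum.

4-5 Predictions

Now that the parameters have been trained, we can use the model to predict on new data: predict 1 (blue) if a[2] > 0.5, and 0 (red) otherwise.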

As an example, if you would like to set the entries of a matrix X to 0 and 1 based on a threshold you would do: X_new = (X > threshold)
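Here is a small thresholding sketch with made-up sigmoid outputs. Comparing against 0.5 yields booleans, and astype(int) turns them into 0/1. np.around(A2), the commented-out alternative in predict, gives the same labels here. NumPy rounds exactly 0.5 to the nearest even value, 0, which agrees with the strict > 0.5 test.

```python
import numpy as np

A2 = np.array([[0.2, 0.5, 0.51, 0.9]])   # made-up sigmoid outputs
pred_bool = A2 > 0.5                       # booleans: False, False, True, True
pred_int = pred_bool.astype(int)           # 0/1 labels: 0, 0, 1, 1
pred_round = np.around(A2)                 # 0., 0., 1., 1. (0.5 rounds to even, i.e. 0)
print(pred_int, pred_round)
```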

Code:

# GRADED FUNCTION: predict

def predict(parameters, X):
    """
    Using the learned parameters, predicts a class for each example in X

    Arguments:
    parameters -- python dictionary containing your parameters
    X -- input data of size (n_x, m)

    Returns
    predictions -- vector of predictions of our model (red: 0 / blue: 1)
    """
    # Computes probabilities using forward propagation, and classifies to 0/1 using 0.5 as the threshold.
    ### START CODE HERE ### (≈ 2 lines of code)
    A2, cache = forward_propagation(X, parameters)
    predictions = (A2 > 0.5)
    # predictions = np.around(A2)  # this also works
    ### END CODE HERE ###

    print("predictions:", predictions)

    return predictions

Test code:

def predict_test_case():
    np.random.seed(1)
    X_assess = np.random.randn(2, 3)
    parameters = {'W1': np.array([[-0.00615039,  0.0169021 ],
                                  [-0.02311792,  0.03137121],
                                  [-0.0169217 , -0.01752545],
                                  [ 0.00935436, -0.05018221]]),
                  'W2': np.array([[-0.0104319 , -0.04019007,  0.01607211,  0.04440255]]),
                  'b1': np.array([[ -8.97523455e-07],
                                  [  8.15562092e-06],
                                  [  6.04810633e-07],
                                  [ -2.54560700e-06]]),
                  'b2': np.array([[  9.14954378e-05]])}
    return parameters, X_assess

parameters, X_assess = predict_test_case()
predictions = predict(parameters, X_assess)
print("predictions mean = " + str(np.mean(predictions)))

Output:

predictions: [[ True False True]]

predictions mean = 0.666666666667

4-5-1 Plotting the decision boundary

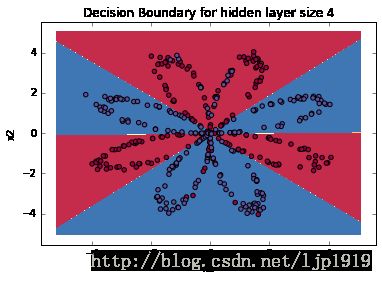

Plot the decision boundary for the network with a hidden layer of size 4:

# Build a model with a n_h-dimensional hidden layer
parameters = nn_model(X, Y, n_h = 4, num_iterations = 10000, print_cost=True)

# Plot the decision boundary
plot_decision_boundary(lambda x: predict(parameters, x.T), X, Y)
plt.title("Decision Boundary for hidden layer size " + str(4))

Output:

Cost after iteration 0: 0.693048

Cost after iteration 1000: 0.288083

Cost after iteration 2000: 0.254385

Cost after iteration 3000: 0.233864

Cost after iteration 4000: 0.226792

Cost after iteration 5000: 0.222644

Cost after iteration 6000: 0.219731

Cost after iteration 7000: 0.217504

Cost after iteration 8000: 0.219528

Cost after iteration 9000: 0.218627

predictions: [[ 1. 1. 1. ..., 0. 0. 0.]]

Warning messages:

c:\users\jason\appdata\local\programs\python\python35\lib\site-packages\numpy\ma\core.py:6385: MaskedArrayFutureWarning: In the future the default for ma.maximum.reduce will be axis=0, not the current None, to match np.maximum.reduce. Explicitly pass 0 or None to silence this warning.

return self.reduce(a)

c:\users\jason\appdata\local\programs\python\python35\lib\site-packages\numpy\ma\core.py:6385: MaskedArrayFutureWarning: In the future the default for ma.minimum.reduce will be axis=0, not the current None, to match np.minimum.reduce. Explicitly pass 0 or None to silence this warning.

return self.reduce(a)

4-5-2 Accuracy

Accuracy is the fraction of positions where the true label matrix and the prediction matrix hold the same value.
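The accuracy expression counts matches in two parts:

- np.dot(Y, predictions.T) counts positions where both the label and the prediction are 1.
- np.dot(1 - Y, 1 - predictions.T) counts positions where both are 0.

Here is a worked example with made-up labels, cross-checked against the more direct np.mean(predictions == Y):

```python
import numpy as np

Y = np.array([[1, 0, 1, 1, 0]])               # made-up true labels, shape (1, m)
predictions = np.array([[1, 0, 0, 1, 1]])     # made-up model output, shape (1, m)
true_pos = np.dot(Y, predictions.T)           # both 1 at indices 0 and 3 -> [[2]]
true_neg = np.dot(1 - Y, 1 - predictions.T)   # both 0 at index 1 -> [[1]]
accuracy = float((true_pos + true_neg)[0, 0]) * 100 / Y.size
print(accuracy)                               # 60.0
print(np.mean(predictions == Y) * 100)        # same fraction of matching positions
```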

# Print accuracy
predictions = predict(parameters, X)
print('Accuracy: %d' % float((np.dot(Y, predictions.T) + np.dot(1 - Y, 1 - predictions.T)) / float(Y.size) * 100) + '%')

Output:

Accuracy: 90%

Compared with the 47% of logistic regression, an accuracy of 90% is a large improvement.

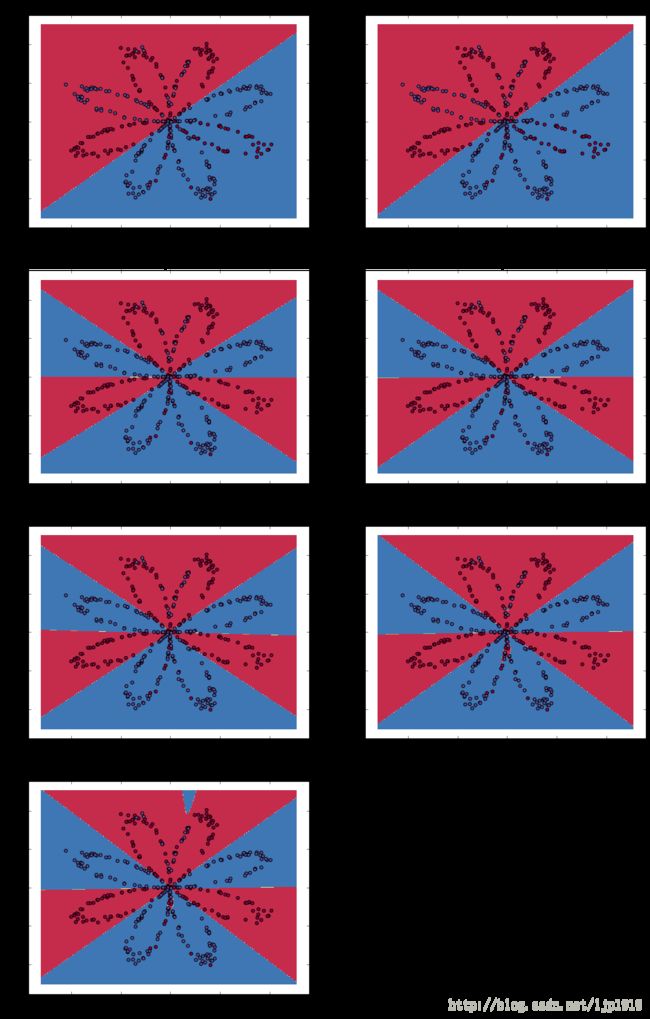

4-6 Tuning the hidden layer size

Let's observe how the model behaves with hidden layer sizes of 1, 2, 3, 4, 5, 10 and 20:

# This may take about 2 minutes to run
plt.figure(figsize=(16, 32))
hidden_layer_sizes = [1, 2, 3, 4, 5, 10, 20]
for i, n_h in enumerate(hidden_layer_sizes):
    plt.subplot(5, 2, i + 1)
    plt.title('Hidden Layer of size %d' % n_h)
    parameters = nn_model(X, Y, n_h, num_iterations = 5000)
    plot_decision_boundary(lambda x: predict(parameters, x.T), X, Y)
    predictions = predict(parameters, X)
    accuracy = float((np.dot(Y, predictions.T) + np.dot(1 - Y, 1 - predictions.T)) / float(Y.size) * 100)
    print("Accuracy for {} hidden units: {} %".format(n_h, accuracy))

Warning messages:

c:\users\jason\appdata\local\programs\python\python35\lib\site-packages\numpy\ma\core.py:6385: MaskedArrayFutureWarning: In the future the default for ma.maximum.reduce will be axis=0, not the current None, to match np.maximum.reduce. Explicitly pass 0 or None to silence this warning.

return self.reduce(a)

c:\users\jason\appdata\local\programs\python\python35\lib\site-packages\numpy\ma\core.py:6385: MaskedArrayFutureWarning: In the future the default for ma.minimum.reduce will be axis=0, not the current None, to match np.minimum.reduce. Explicitly pass 0 or None to silence this warning.

return self.reduce(a)

Output:

Accuracy for 1 hidden units: 67.5 %

Accuracy for 2 hidden units: 67.25 %

Accuracy for 3 hidden units: 90.75 %

Accuracy for 4 hidden units: 90.5 %

Accuracy for 5 hidden units: 91.25 %

Accuracy for 10 hidden units: 90.25 %

Accuracy for 20 hidden units: 90.5 %

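The comparison shows that larger hidden layers fit the training data better: accuracy jumps from about 67% with 1 or 2 hidden units to about 90% with 3 or more. Very large hidden layers, however, tend to overfit. Here the best hidden layer size seems to be around n_h = 5, which fits the training data well without noticeable overfitting. Overfitting caused by a large n_h can be reduced with regularization, which will be covered in a later post.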