Phoenix Series, Part 3 of 4: Hive Integration

0. Preparation: a pseudo-distributed HBase setup (a quick walkthrough)

Pseudo-distributed HBase installation (three processes on one host act as the cluster)

Download: http://mirror.bit.edu.cn/apache/hbase/1.2.3/hbase-1.2.3-bin.tar.gz

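This link is for version 1.2.3; the commands below, and the rest of this post, use HBase 1.2.6 (hbase-1.2.6-bin.tar.gz).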

For which HBase versions work with which Hadoop versions, see the official reference guide:

http://hbase.apache.org/book/configuration.html#basic.prerequisites

tar -zxvf hbase-1.2.6-bin.tar.gz -C /opt/soft/

cd /opt/soft/hbase-1.2.6/

vim conf/hbase-env.sh

# Inside the file, add export JAVA_HOME=/usr/local/jdk1.8 (or source /etc/profile)

# Configure HBase

mkdir /opt/soft/hbase-1.2.6/data

vim conf/hbase-site.xml

##### Entries to add to hbase-site.xml #####

<configuration>
  <property>
    <name>hbase.rootdir</name>
    <value>hdfs://yyhhdfs/hbase</value>
  </property>
  <property>
    <name>hbase.zookeeper.property.dataDir</name>
    <value>/opt/soft/hbase-1.2.6/data/zookeeper</value>
  </property>
  <property>
    <name>hbase.cluster.distributed</name>
    <value>true</value>
  </property>
</configuration>
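If you provision HBase from a script, Python's standard library can generate the same three properties instead of editing them by hand. This is a minimal sketch. It assumes the paths and the yyhhdfs HDFS nameservice used above. build_hbase_site is a hypothetical helper, not part of HBase, and it prints the XML rather than overwriting conf/hbase-site.xml.

```python
import xml.etree.ElementTree as ET

# Values from the pseudo-distributed setup above; adjust for your own cluster.
HBASE_PROPS = {
    "hbase.rootdir": "hdfs://yyhhdfs/hbase",
    "hbase.zookeeper.property.dataDir": "/opt/soft/hbase-1.2.6/data/zookeeper",
    "hbase.cluster.distributed": "true",
}


def build_hbase_site(props):
    """Hypothetical helper: return hbase-site.xml content for the given properties."""
    root = ET.Element("configuration")
    for name, value in props.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Print for review instead of overwriting conf/hbase-site.xml.
    print(build_hbase_site(HBASE_PROPS))
```

Because the XML is built from a dict, an unclosed tag like the ones garbled above cannot occur.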

# Write localhost into conf/regionservers

echo "localhost" > conf/regionservers

Start HBase (ZooKeeper first, then the master, then the RegionServer):

[root@yyh4 ~]# /opt/soft/hbase-1.2.6/bin/hbase-daemon.sh start zookeeper

starting zookeeper, logging to /opt/soft/hbase-1.2.6/logs/hbase-root-zookeeper-yyh4.out

[root@yyh4 ~]# /opt/soft/hbase-1.2.6/bin/hbase-daemon.sh start master

starting master, logging to /opt/soft/hbase-1.2.6/logs/hbase-root-master-yyh4.out

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option PermSize=128m; support was removed in 8.0

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=128m; support was removed in 8.0

[root@yyh4 ~]# /opt/soft/hbase-1.2.6/bin/hbase-daemon.sh start regionserver

starting regionserver, logging to /opt/soft/hbase-1.2.6/logs/hbase-root-regionserver-yyh4.out

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option PermSize=128m; support was removed in 8.0

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=128m; support was removed in 8.0

[root@yyh4 ~]# jps

25536 HMaster

25417 HQuorumPeer

25757 HRegionServer

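26014 Jps

Three new processes are now running: HQuorumPeer (the ZooKeeper instance managed by HBase), HMaster and HRegionServer. The PermSize/MaxPermSize warnings above are harmless, because JDK 8 ignores these options.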
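To make this check scriptable, a small sketch can parse jps output and report which of the three daemons are missing. It assumes the JDK's jps is on PATH. missing_hbase_daemons is a hypothetical helper name.

```python
import shutil
import subprocess

EXPECTED = {"HQuorumPeer", "HMaster", "HRegionServer"}


def missing_hbase_daemons(jps_output):
    """Hypothetical helper: return the expected HBase daemons absent from jps output."""
    running = set()
    for line in jps_output.splitlines():
        parts = line.split()
        # Each jps line looks like "<pid> <main class name>".
        if len(parts) >= 2:
            running.add(parts[1])
    return EXPECTED - running


if __name__ == "__main__":
    if shutil.which("jps") is None:
        print("jps not found; is the JDK bin directory on PATH?")
    else:
        out = subprocess.run(["jps"], capture_output=True, text=True).stdout
        missing = missing_hbase_daemons(out)
        print("all HBase daemons running" if not missing else "missing: %s" % sorted(missing))
```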

The RegionServer web UI is at http://yyh4:16030/ (the HMaster web UI listens on port 16010 by default).

The pseudo-distributed HBase cluster is now set up.

1. Installation

1.1 Installing the prebuilt Phoenix binaries

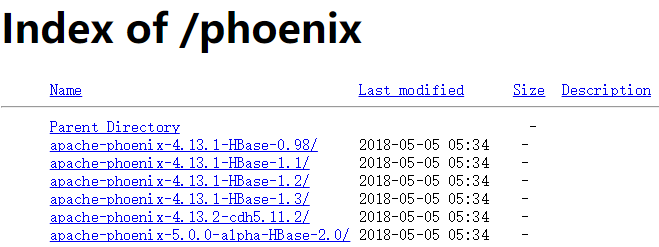

1. Download and extract the latest phoenix-[version]-bin.tar.gz built for your HBase version.

Download: http://apache.fayea.com/phoenix/apache-phoenix-4.13.1-HBase-1.2/bin/

Why this build: HBase here is 1.2.6, so we use Phoenix 4.13.1 built for HBase 1.2.

Index of all releases: http://apache.fayea.com/phoenix/

2. Put phoenix-[version]-server.jar in the HBase lib directory on every RegionServer and on the master node.

3. Restart HBase.

4. Add phoenix-[version]-client.jar to the classpath of every Phoenix client.


[root@yyh4 /]# cd /opt/soft

[root@yyh4 soft]# tar -zxvf apache-phoenix-4.13.1-HBase-1.2-bin.tar.gz

[root@yyh4 soft]# cp apache-phoenix-4.13.1-HBase-1.2-bin/phoenix-4.13.1-HBase-1.2-server.jar hbase-1.2.6/lib/

[root@yyh4 soft]# cp apache-phoenix-4.13.1-HBase-1.2-bin/phoenix-4.13.1-HBase-1.2-client.jar hbase-1.2.6/lib/

2. Using Phoenix

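To run SQL interactively from the command line:

Requirements: Python and the argparse module (yum install python-argparse).

Run sqlline.py from the phoenix-{version}/bin directory, passing the ZooKeeper host:

$ /opt/soft/apache-phoenix-4.13.1-HBase-1.2-bin/bin/sqlline.py localhost

This opens the interactive shell:

0: jdbc:phoenix:localhost>

To exit the shell, run !quit:

0: jdbc:phoenix:localhost>!quit

0: jdbc:phoenix:localhost> help

The help command lists the other common commands: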

!all Execute the specified SQL against all the current connections

!autocommit Set autocommit mode on or off

!batch Start or execute a batch of statements

!brief Set verbose mode off

!call Execute a callable statement

!close Close the current connection to the database

!closeall Close all current open connections

!columns List all the columns for the specified table

!commit Commit the current transaction (if autocommit is off)

!connect Open a new connection to the database.

!dbinfo Give metadata information about the database

!describe Describe a table

!dropall Drop all tables in the current database

!exportedkeys List all the exported keys for the specified table

!go Select the current connection

!help Print a summary of command usage

!history Display the command history

!importedkeys List all the imported keys for the specified table

!indexes List all the indexes for the specified table

!isolation Set the transaction isolation for this connection

!list List the current connections

!manual Display the SQLLine manual

!metadata Obtain metadata information

!nativesql Show the native SQL for the specified statement

!outputformat Set the output format for displaying results (table,vertical,csv,tsv,xmlattrs,xmlelements)

!primarykeys List all the primary keys for the specified table

!procedures List all the procedures

!properties Connect to the database specified in the properties file(s)

!quit Exits the program

!reconnect Reconnect to the database

!record Record all output to the specified file

!rehash Fetch table and column names for command completion

!rollback Roll back the current transaction (if autocommit is off)

!run Run a script from the specified file

!save Save the current variabes and aliases

!scan Scan for installed JDBC drivers

!script Start saving a script to a file

!set Set a sqlline variable

!sql Execute a SQL command

!tables List all the tables in the database

!typeinfo Display the type map for the current connection

!verbose Set verbose mode on

To run a SQL script from the command line:

$ sqlline.py localhost ../examples/stock_symbol.sql

Testing Phoenix:

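# Create a table

CREATE TABLE yyh ( pk VARCHAR PRIMARY KEY,val VARCHAR );

# Insert or update rows (UPSERT does both)

upsert into yyh values ('1','Helldgfho');

# Query the table

select * from yyh;

# Drop a table (for a table, this deletes both the Phoenix table and the HBase data; for a view, the HBase data is kept)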

drop table test;

3. Integrating Hive with Phoenix: the Phoenix Storage Handler for Hive

The Apache Phoenix Storage Handler is a plugin that lets Apache Hive access Phoenix tables with HiveQL from the Hive command line.

Hive setup

Make phoenix-{version}-hive.jar available to Hive:

# On the Phoenix node:

[root@yyh4 apache-phoenix-4.13.1-HBase-1.2-bin]# scp phoenix-4.13.1-HBase-1.2-hive.jar yyh3:/opt/soft/apache-hive-1.2.1-bin/lib/

# Step 1 on the HiveServer node: set HIVE_AUX_JARS_PATH in hive-env.sh (not needed here, because the jar is already in Hive's lib directory)

#HIVE_AUX_JARS_PATH =

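Creating and dropping tables

The Phoenix Storage Handler supports both INTERNAL and EXTERNAL Hive tables.

Creating an INTERNAL table

For INTERNAL tables, Hive manages the lifecycle of both the table and its data. Creating the Hive table also creates the corresponding Phoenix table, and dropping the Hive table drops the Phoenix table too.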

create table phoenix_yyh (

s1 string,

s2 string

)

STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler'

TBLPROPERTIES (

"phoenix.table.name" = "yyh",

"phoenix.zookeeper.quorum" = "yyh4",

"phoenix.zookeeper.znode.parent" = "/hbase",

"phoenix.zookeeper.client.port" = "2181",

"phoenix.rowkeys" = "s1",

"phoenix.column.mapping" = "s1:s1, s2:s2",

"phoenix.table.options" = "SALT_BUCKETS=10, DATA_BLOCK_ENCODING='DIFF'"

);

Creating an EXTERNAL table

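For EXTERNAL tables, Hive works with an existing Phoenix table and manages only the Hive metadata. Dropping an EXTERNAL table from Hive deletes only the Hive metadata; the Phoenix table is kept.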

create external table ayyh

(pk string,

value string)

STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler'

TBLPROPERTIES (

"phoenix.table.name" = "yyh",

"phoenix.zookeeper.quorum" = "yyh4",

"phoenix.zookeeper.znode.parent" = "/hbase",

"phoenix.column.mapping" = "pk:PK,value:VAL",

"phoenix.rowkeys" = "pk",

"phoenix.table.options" = "SALT_BUCKETS=10, DATA_BLOCK_ENCODING='DIFF'"

);

Properties

- phoenix.table.name
  - Specifies the Phoenix table name
  - Default: the same as the Hive table
- phoenix.zookeeper.quorum
  - Specifies the ZooKeeper quorum for HBase
  - Default: localhost
- phoenix.zookeeper.znode.parent
  - Specifies the ZooKeeper parent node for HBase
  - Default: /hbase
- phoenix.zookeeper.client.port
  - Specifies the ZooKeeper port
  - Default: 2181
- phoenix.rowkeys
  - The list of columns that form the primary key of the Phoenix table
  - Required
- phoenix.column.mapping
  - Mappings between Hive and Phoenix column names. See Limitations for details
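phoenix.rowkeys is required, and the mapping refers to column names. A generator that checks the properties before the DDL reaches beeline can therefore catch mismatches early. The sketch below is a hypothetical helper, not part of Phoenix. It fills in the documented defaults listed above. It also assumes, as in this post's examples, that both the row-key columns and the left-hand side of each hive:phoenix mapping pair are Hive column names.

```python
# Documented defaults from the property list above.
DEFAULTS = {
    "phoenix.zookeeper.quorum": "localhost",
    "phoenix.zookeeper.znode.parent": "/hbase",
    "phoenix.zookeeper.client.port": "2181",
}


def phoenix_external_ddl(hive_table, columns, props):
    """Hypothetical helper: build CREATE EXTERNAL TABLE DDL for the Phoenix storage handler.

    columns: list of (hive_column, hive_type) pairs.
    props: TBLPROPERTIES; phoenix.rowkeys is required.
    Raises ValueError when a row key or mapping entry does not name a Hive column.
    """
    merged = dict(DEFAULTS)
    merged["phoenix.table.name"] = hive_table  # default: same as the Hive table
    merged.update(props)

    hive_cols = {name.lower() for name, _ in columns}
    if "phoenix.rowkeys" not in merged:
        raise ValueError("phoenix.rowkeys is required")
    for key in merged["phoenix.rowkeys"].split(","):
        if key.strip().lower() not in hive_cols:
            raise ValueError("row key %r is not a Hive column" % key.strip())
    # Assumed format: "hive_col:phoenix_col, ..." as in the examples above.
    for pair in merged.get("phoenix.column.mapping", "").split(","):
        if pair.strip():
            hive_col = pair.split(":", 1)[0].strip().lower()
            if hive_col not in hive_cols:
                raise ValueError("mapping %r does not start with a Hive column" % pair.strip())

    col_sql = ",\n  ".join("%s %s" % (name, typ) for name, typ in columns)
    prop_sql = ",\n  ".join('"%s" = "%s"' % (k, v) for k, v in merged.items())
    return (
        "CREATE EXTERNAL TABLE %s (\n  %s\n)\n"
        "STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler'\n"
        "TBLPROPERTIES (\n  %s\n);" % (hive_table, col_sql, prop_sql)
    )


if __name__ == "__main__":
    # Same table as the EXTERNAL example above.
    print(phoenix_external_ddl(
        "ayyh",
        [("pk", "string"), ("value", "string")],
        {
            "phoenix.table.name": "yyh",
            "phoenix.zookeeper.quorum": "yyh4",
            "phoenix.column.mapping": "pk:PK,value:VAL",
            "phoenix.rowkeys": "pk",
        },
    ))
```

With the original INTERNAL example's rowkeys "s1, i1" and Hive columns s1 and s2, the helper raises ValueError for i1.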

Data ingestion, deletes and updates

Data can be ingested in any way that Hive or Phoenix supports:

Hive:

insert into table T values (....);

insert into table T select c1,c2,c3 from source_table;

Phoenix:

upsert into T values (.....);

Phoenix CSV BulkLoad tools

All delete and update operations should be performed on the Phoenix side. See Limitations for more details.

Additional configuration options

These options can be set in the Hive command line interface (CLI) environment.

Performance tuning

| Parameter | Default Value | Description |
|---|---|---|
| phoenix.upsert.batch.size | 1000 | Batch size for upsert. |
| [phoenix-table-name].disable.wal | false | Temporarily sets the table attribute DISABLE_WAL to true. Sometimes used to improve performance. |
| [phoenix-table-name].auto.flush | false | When WAL is disabled and if this value is true, then MemStore is flushed to an HFile. |

Query data

You can use HiveQL for querying data in a Phoenix table. A Hive query on a single table can be as fast as running the query in the Phoenix CLI with the following property settings: hive.fetch.task.conversion=more and hive.exec.parallel=true


| Parameter | Default Value | Description |
|---|---|---|
| hbase.scan.cache | 100 | Read row size for a unit request |
| hbase.scan.cacheblock | false | Whether or not to cache blocks |
| split.by.stats | false | If true, mappers use table statistics. One mapper per guide post. |
| [hive-table-name].reducer.count | 1 | Number of reducers. In Tez mode, this affects only single-table queries. See Limitations. |
| [phoenix-table-name].query.hint | | Hint for Phoenix query (for example, NO_INDEX) |
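The bracketed parts of these keys stand for actual table names. The small sketch below renders this section's settings as Hive SET statements. It assumes the names from section 3 (Hive table ayyh over Phoenix table yyh), and it assumes the prefix is simply the table name. hive_set_statements and its {hive}/{phoenix} placeholders are hypothetical, and the values are examples rather than recommendations.

```python
def hive_set_statements(hive_table, phoenix_table, settings):
    """Hypothetical helper: render Hive SET statements for the options above.

    Keys may contain the placeholders {hive} and {phoenix}, which stand for the
    [hive-table-name] and [phoenix-table-name] prefixes (illustrative convention).
    """
    lines = []
    for key, value in settings.items():
        full_key = key.format(hive=hive_table, phoenix=phoenix_table)
        lines.append("SET %s=%s;" % (full_key, value))
    return "\n".join(lines)


if __name__ == "__main__":
    # Example values only; tune them for your own data and cluster.
    settings = {
        "hive.fetch.task.conversion": "more",
        "hive.exec.parallel": "true",
        "phoenix.upsert.batch.size": 1000,
        "hbase.scan.cache": 100,
        "{hive}.reducer.count": 1,
        "{phoenix}.query.hint": "NO_INDEX",
    }
    # Paste the output into beeline or the Hive CLI before querying ayyh.
    print(hive_set_statements("ayyh", "yyh", settings))
```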

Limitations

- Hive update and delete operations require transaction manager support on both Hive and Phoenix sides. Related Hive and Phoenix JIRAs are listed in the Resources section.
- Column mapping does not work correctly with mapping row key columns.
- MapReduce and Tez jobs always have a single reducer.

Resources

- PHOENIX-2743: Implementation, accepted by Apache Phoenix community. Original pull request contains modification for Hive classes.
- PHOENIX-331: An outdated implementation with support of Hive 0.98.

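4. End-to-end walkthrough

# Pay attention to how identifiers are written here, especially upper versus lower case; written this way, they work consistently across all the components.

##### Test on the virtual cluster: summary #####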

#hbase shell

# On the HBase node: two columns, three cells

create 'YINGGDD','info'

put 'YINGGDD', 'row021','info:name','phoenix'

put 'YINGGDD', 'row012','info:name','hbase'

put 'YINGGDD', 'row012','info:sname','shbase'

# On the Phoenix node

# /opt/soft/apache-phoenix-4.13.1-HBase-1.2-bin/bin/sqlline.py yyh4

create table "YINGGDD" ("id" varchar primary key, "info"."name" varchar, "info"."sname" varchar);

select * from "YINGGDD";
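The note about letter case comes from how Phoenix resolves identifiers. Unquoted names are folded to upper case, while double-quoted names are used exactly as written. That is why the lower-case HBase qualifiers info:name and info:sname have to be quoted in the Phoenix DDL. The table name YINGGDD is already upper case, so it would match with or without quotes. The sketch below models only that rule; it is not Phoenix's SQL parser. phoenix_identifier and hbase_cell are hypothetical helpers.

```python
def phoenix_identifier(token):
    """Hypothetical helper modelling Phoenix name resolution:
    double-quoted names keep their case, unquoted names are upper-cased."""
    token = token.strip()
    if len(token) >= 2 and token.startswith('"') and token.endswith('"'):
        return token[1:-1]
    return token.upper()


def hbase_cell(column_ref):
    """Hypothetical helper: map a Phoenix column reference such as "info"."name"
    to the (family, qualifier) pair it would read in HBase.

    Simplification: splits on the first '.', so names must not contain dots.
    """
    family, sep, qualifier = column_ref.partition(".")
    if not sep:
        raise ValueError("expected family.qualifier, got %r" % column_ref)
    return phoenix_identifier(family), phoenix_identifier(qualifier)


if __name__ == "__main__":
    print(hbase_cell('"info"."name"'))  # quoted: matches the HBase cell info:name
    print(hbase_cell("info.name"))      # unquoted: resolves to INFO:NAME, which does not exist
```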

# On the Hive node

#beeline -u jdbc:hive2://yyh3:10000

create external table yingggy ( id string,

name string,

sname string)

STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler'

TBLPROPERTIES (

"phoenix.table.name" = "YINGGDD" ,

"phoenix.zookeeper.quorum" = "yyh4" ,

"phoenix.zookeeper.znode.parent" = "/hbase",

"phoenix.column.mapping" = "pk:id,name:name,sname:sname",

"phoenix.rowkeys" = "id",

"phoenix.table.options" = "SALT_BUCKETS=10, DATA_BLOCK_ENCODING='DIFF'"

);

select * from yingggy;

############ Test on the company cluster ############

# Hive on .111, Phoenix on .113

# Phoenix-backed table

beeline -u jdbc:hive2://192.168.1.111:10000/dm -f /usr/local/apache-hive-2.3.2-bin/bin/yyh.sql

add jar /usr/local/apache-hive-2.3.2-bin/lib/phoenix-4.9.0-cdh5.9.1-hive.jar;

add jar /usr/local/apache-hive-2.3.2-bin/lib/phoenix-4.9.0-HBase-1.2-hive.jar;

add jar /usr/local/apache-hive-2.3.2-bin/lib/phoenix-4.9.0-HBase-1.2-client.jar;

# Driver class: org.apache.phoenix.jdbc.PhoenixDriver (from phoenix-4.9.0-HBase-1.2-hive.jar)

create external table ayyh

(pk string,

value string)

STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler'

TBLPROPERTIES (

"phoenix.table.name" = "yyh",

"phoenix.zookeeper.quorum" = "192.168.1.112",

"phoenix.zookeeper.znode.parent" = "/hbase",

"phoenix.column.mapping" = "pk:PK,value:VAL",

"phoenix.rowkeys" = "pk",

"phoenix.table.options" = "SALT_BUCKETS=10, DATA_BLOCK_ENCODING='DIFF'"

);

# On .113, acting as a Hive client, create the table

cd ~

beeline -u jdbc:hive2://192.168.1.111:10000/dm -n hive -f yyha.sql

# From the .117 host, log in to Hive and check the table

beeline -u jdbc:hive2://192.168.1.111:10000/dm -e "select * from ayyh limit 3"

5. Pitfalls so far

5.1 The STORED BY class cannot be found

Error: Error while compiling statement: FAILED: SemanticException Cannot find class 'org.apache.phoenix.hive.PhoenixStorageHandler' (state=42000,code=40000)

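## Cause: the jar is missing from Hive's classpath

# Fix: load it with add jar

5.2 No suitable driver found for jdbc:phoenix

# So, after entering beeline, load the jar with add jar (on the company cluster, Hive cannot be restarted often):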

0: jdbc:hive2://yyh3:10000> add jar /opt/soft/apache-hive-1.2.1-bin/lib/phoenix-4.13.1-HBase-1.2-hive.jar;

INFO : Added [/opt/soft/apache-hive-1.2.1-bin/lib/phoenix-4.13.1-HBase-1.2-hive.jar] to class path

INFO : Added resources: [/opt/soft/apache-hive-1.2.1-bin/lib/phoenix-4.13.1-HBase-1.2-hive.jar]

This produced a new error:

Error: Error while processing statement: FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask. MetaException(message:No suitable driver found for jdbc:phoenix:yyh4:2181:/hbase;) (state=08S01,code=1)

This error actually first appeared on the company cluster; for clarity, that full session is reproduced separately below.

[import@slave113 phoenix-4.9.0-cdh5.9.1]$ beeline -u jdbc:hive2://192.168.1.111:10000/dm

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/usr/local/apache-hive-2.3.2-bin/lib/log4j-slf4j-impl-2.6.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/usr/local/apache-hive-2.3.2-bin/lib/phoenix-4.13.1-HBase-1.2-hive.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/usr/local/apache-hive-2.3.2-bin/lib/phoenix-4.9.0-cdh5.9.1-hive.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/cloudera/parcels/CDH-5.9.0-1.cdh5.9.0.p0.23/jars/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Connecting to jdbc:hive2://192.168.1.111:10000/dm

Connected to: Apache Hive (version 2.3.2)

Driver: Hive JDBC (version 2.3.2)

Transaction isolation: TRANSACTION_REPEATABLE_READ

Beeline version 2.3.2 by Apache Hive

0: jdbc:hive2://192.168.1.111:10000/dm> add jar /usr/local/apache-hive-2.3.2-bin/lib/phoenix-4.13.1-HBase-1.2-hive.jar

. . . . . . . . . . . . . . . . . . . > ;

Error: Error while processing statement: /usr/local/apache-hive-2.3.2-bin/lib/phoenix-4.13.1-HBase-1.2-hive.jar does not exist (state=,code=1)

0: jdbc:hive2://192.168.1.111:10000/dm> add jar /usr/local/apache-hive-2.3.2-bin/lib/phoenix-4.13.1-HBase-1.2-hive.jar;

No rows affected (0.051 seconds)

0: jdbc:hive2://192.168.1.111:10000/dm> create external table ayyh

. . . . . . . . . . . . . . . . . . . > (pk string,

. . . . . . . . . . . . . . . . . . . > value string)

. . . . . . . . . . . . . . . . . . . > STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler'

. . . . . . . . . . . . . . . . . . . > TBLPROPERTIES (

. . . . . . . . . . . . . . . . . . . > "phoenix.table.name" = "yyh",

. . . . . . . . . . . . . . . . . . . > "phoenix.zookeeper.quorum" = "192.168.1.113:2181",

. . . . . . . . . . . . . . . . . . . > "phoenix.zookeeper.znode.parent" = "/hbase",

. . . . . . . . . . . . . . . . . . . > "phoenix.column.mapping" = "pk:PK,value:VAL",

. . . . . . . . . . . . . . . . . . . > "phoenix.rowkeys" = "pk",

. . . . . . . . . . . . . . . . . . . > "phoenix.table.options" = "SALT_BUCKETS=10, DATA_BLOCK_ENCODING='DIFF'"

. . . . . . . . . . . . . . . . . . . > );

Error: org.apache.hive.service.cli.HiveSQLException: Error while processing statement: FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask. MetaException(message:No suitable driver found for jdbc:phoenix:192.168.1.113:2181:2181:/hbase;)

at org.apache.hive.service.cli.operation.Operation.toSQLException(Operation.java:380)

at org.apache.hive.service.cli.operation.SQLOperation.runQuery(SQLOperation.java:257)

at org.apache.hive.service.cli.operation.SQLOperation.access$800(SQLOperation.java:91)

at org.apache.hive.service.cli.operation.SQLOperation$BackgroundWork$1.run(SQLOperation.java:348)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1698)

at org.apache.hive.service.cli.operation.SQLOperation$BackgroundWork.run(SQLOperation.java:362)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745)

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: MetaException(message:No suitable driver found for jdbc:phoenix:192.168.1.113:2181:2181:/hbase;)

at org.apache.hadoop.hive.ql.metadata.Hive.createTable(Hive.java:862)

at org.apache.hadoop.hive.ql.metadata.Hive.createTable(Hive.java:867)

at org.apache.hadoop.hive.ql.exec.DDLTask.createTable(DDLTask.java:4356)

at org.apache.hadoop.hive.ql.exec.DDLTask.execute(DDLTask.java:354)

at org.apache.hadoop.hive.ql.exec.Task.executeTask(Task.java:199)

at org.apache.hadoop.hive.ql.exec.TaskRunner.runSequential(TaskRunner.java:100)

at org.apache.hadoop.hive.ql.Driver.launchTask(Driver.java:2183)

at org.apache.hadoop.hive.ql.Driver.execute(Driver.java:1839)

at org.apache.hadoop.hive.ql.Driver.runInternal(Driver.java:1526)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1237)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1232)

at org.apache.hive.service.cli.operation.SQLOperation.runQuery(SQLOperation.java:255)

... 11 more

Caused by: MetaException(message:No suitable driver found for jdbc:phoenix:192.168.1.113:2181:2181:/hbase;)

at org.apache.phoenix.hive.PhoenixMetaHook.preCreateTable(PhoenixMetaHook.java:84)

at org.apache.hadoop.hive.metastore.HiveMetaStoreClient.createTable(HiveMetaStoreClient.java:747)

at org.apache.hadoop.hive.metastore.HiveMetaStoreClient.createTable(HiveMetaStoreClient.java:740)

at sun.reflect.GeneratedMethodAccessor74.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.invoke(RetryingMetaStoreClient.java:173)

at com.sun.proxy.$Proxy34.createTable(Unknown Source)

at sun.reflect.GeneratedMethodAccessor74.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.hive.metastore.HiveMetaStoreClient$SynchronizedHandler.invoke(HiveMetaStoreClient.java:2330)

at com.sun.proxy.$Proxy34.createTable(Unknown Source)

at org.apache.hadoop.hive.ql.metadata.Hive.createTable(Hive.java:852)

... 22 more (state=08S01,code=1)

0: jdbc:hive2://192.168.1.111:10000/dm> Closing: 0: jdbc:hive2://192.168.1.111:10000/dm


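The error above occurs because add jar does not load the Phoenix JDBC driver.

The fix is to put the jar in Hive's lib directory and restart Hive.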
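Separately, note the URL in the company-cluster log: jdbc:phoenix:192.168.1.113:2181:2181:/hbase contains the port twice. Compare it with the virtual-cluster message, jdbc:phoenix:yyh4:2181:/hbase. The storage handler appears to join the quorum, the client port and the znode parent with colons. A phoenix.zookeeper.quorum value that already ends in :2181 therefore gets the port appended a second time. The sketch below reproduces that inferred format, not the handler's actual code, so a TBLPROPERTIES combination can be checked before running the DDL. Keeping the port only in phoenix.zookeeper.client.port avoids the duplicate, as the later company-cluster DDL does with 192.168.1.112. phoenix_jdbc_url and quorum_has_port are hypothetical helpers.

```python
def phoenix_jdbc_url(props):
    """Hypothetical helper: rebuild the JDBC URL in the quorum:port:znode form
    inferred from the error messages above (defaults from the property list)."""
    quorum = props.get("phoenix.zookeeper.quorum", "localhost")
    port = props.get("phoenix.zookeeper.client.port", "2181")
    znode = props.get("phoenix.zookeeper.znode.parent", "/hbase")
    return "jdbc:phoenix:%s:%s:%s" % (quorum, port, znode)


def quorum_has_port(props):
    """True if any quorum host already carries an explicit port, e.g. host:2181."""
    hosts = props.get("phoenix.zookeeper.quorum", "localhost").split(",")
    return any(":" in host for host in hosts)


if __name__ == "__main__":
    props = {"phoenix.zookeeper.quorum": "192.168.1.113:2181",
             "phoenix.zookeeper.znode.parent": "/hbase"}
    print(phoenix_jdbc_url(props))  # jdbc:phoenix:192.168.1.113:2181:2181:/hbase
    if quorum_has_port(props):
        print("quorum already contains a port; set it via phoenix.zookeeper.client.port instead")
```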

5.3 java.lang.NoSuchMethodError: org.apache.hadoop.hbase.client.HBaseAdmin

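After adding the jar and restarting Hive, a new error appeared. I suspect a version mismatch: it happens on the company cluster but not on the virtual machines, and I am still investigating.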

0: jdbc:hive2://192.168.1.111:10000/dm> create external table ayyh

. . . . . . . . . . . . . . . . . . . > (pk string,

. . . . . . . . . . . . . . . . . . . > value string)

. . . . . . . . . . . . . . . . . . . > STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler'

. . . . . . . . . . . . . . . . . . . > TBLPROPERTIES (

. . . . . . . . . . . . . . . . . . . > "phoenix.table.name" = "yyh",

. . . . . . . . . . . . . . . . . . . > "phoenix.zookeeper.quorum" = "192.168.1.112",

. . . . . . . . . . . . . . . . . . . > "phoenix.zookeeper.znode.parent" = "/hbase",

. . . . . . . . . . . . . . . . . . . > "phoenix.column.mapping" = "pk:PK,value:VAL",

. . . . . . . . . . . . . . . . . . . > "phoenix.rowkeys" = "pk",

. . . . . . . . . . . . . . . . . . . > "phoenix.table.options" = "SALT_BUCKETS=10, DATA_BLOCK_ENCODING='DIFF'"

. . . . . . . . . . . . . . . . . . . > );

Error: org.apache.hive.service.cli.HiveSQLException: Error while processing statement: FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask. org.apache.hadoop.hbase.client.HBaseAdmin.<init>(Lorg/apache/hadoop/hbase/client/HConnection;)V

at org.apache.hive.service.cli.operation.Operation.toSQLException(Operation.java:380)

at org.apache.hive.service.cli.operation.SQLOperation.runQuery(SQLOperation.java:257)

at org.apache.hive.service.cli.operation.SQLOperation.access$800(SQLOperation.java:91)

at org.apache.hive.service.cli.operation.SQLOperation$BackgroundWork$1.run(SQLOperation.java:348)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1714)

at org.apache.hive.service.cli.operation.SQLOperation$BackgroundWork.run(SQLOperation.java:362)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.lang.NoSuchMethodError: org.apache.hadoop.hbase.client.HBaseAdmin.<init>(Lorg/apache/hadoop/hbase/client/HConnection;)V

at org.apache.phoenix.query.ConnectionQueryServicesImpl.getAdmin(ConnectionQueryServicesImpl.java:3282)

at org.apache.phoenix.query.ConnectionQueryServicesImpl$13.call(ConnectionQueryServicesImpl.java:2377)

at org.apache.phoenix.query.ConnectionQueryServicesImpl$13.call(ConnectionQueryServicesImpl.java:2352)

at org.apache.phoenix.util.PhoenixContextExecutor.call(PhoenixContextExecutor.java:76)

at org.apache.phoenix.query.ConnectionQueryServicesImpl.init(ConnectionQueryServicesImpl.java:2352)

at org.apache.phoenix.jdbc.PhoenixDriver.getConnectionQueryServices(PhoenixDriver.java:232)

at org.apache.phoenix.jdbc.PhoenixEmbeddedDriver.createConnection(PhoenixEmbeddedDriver.java:147)

at org.apache.phoenix.jdbc.PhoenixDriver.connect(PhoenixDriver.java:202)

at java.sql.DriverManager.getConnection(DriverManager.java:664)

at java.sql.DriverManager.getConnection(DriverManager.java:270)

at org.apache.phoenix.hive.util.PhoenixConnectionUtil.getConnection(PhoenixConnectionUtil.java:81)

at org.apache.phoenix.hive.PhoenixMetaHook.preCreateTable(PhoenixMetaHook.java:55)

at org.apache.hadoop.hive.metastore.HiveMetaStoreClient.createTable(HiveMetaStoreClient.java:747)

at org.apache.hadoop.hive.metastore.HiveMetaStoreClient.createTable(HiveMetaStoreClient.java:740)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.invoke(RetryingMetaStoreClient.java:173)

at com.sun.proxy.$Proxy34.createTable(Unknown Source)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.hive.metastore.HiveMetaStoreClient$SynchronizedHandler.invoke(HiveMetaStoreClient.java:2330)

at com.sun.proxy.$Proxy34.createTable(Unknown Source)

at org.apache.hadoop.hive.ql.metadata.Hive.createTable(Hive.java:852)

at org.apache.hadoop.hive.ql.metadata.Hive.createTable(Hive.java:867)

at org.apache.hadoop.hive.ql.exec.DDLTask.createTable(DDLTask.java:4356)

at org.apache.hadoop.hive.ql.exec.DDLTask.execute(DDLTask.java:354)

at org.apache.hadoop.hive.ql.exec.Task.executeTask(Task.java:199)

at org.apache.hadoop.hive.ql.exec.TaskRunner.runSequential(TaskRunner.java:100)

at org.apache.hadoop.hive.ql.Driver.launchTask(Driver.java:2183)

at org.apache.hadoop.hive.ql.Driver.execute(Driver.java:1839)

at org.apache.hadoop.hive.ql.Driver.runInternal(Driver.java:1526)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1237)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1232)

at org.apache.hive.service.cli.operation.SQLOperation.runQuery(SQLOperation.java:255)

... 11 more (state=08S01,code=1)
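One lead for this NoSuchMethodError is consistent with the version-mismatch suspicion above, but it is not confirmed. The SLF4J lines in 5.2 show that /usr/local/apache-hive-2.3.2-bin/lib holds both phoenix-4.13.1-HBase-1.2-hive.jar and phoenix-4.9.0-cdh5.9.1-hive.jar. The company-cluster script in section 4 also adds further 4.9.0 jars. When jars from different Phoenix or HBase builds share a classpath, a class can load from one build while calling methods that exist only in another, which surfaces as NoSuchMethodError. The sketch below groups the Phoenix jars in a directory by version and build. The file-name pattern is an assumption based on the jar names in this post, and phoenix_builds is a hypothetical helper.

```python
import os
import re
from collections import defaultdict

# Assumed naming scheme, based on the jars in this post:
# phoenix-<phoenix version>-<hbase build>-<artifact>.jar
JAR_RE = re.compile(r"^phoenix-(\d+(?:\.\d+)+)-(.+?)-(hive|client|server|thin-client)\.jar$")


def phoenix_builds(jar_names):
    """Hypothetical helper: group Phoenix jar names by (phoenix version, hbase build)."""
    builds = defaultdict(list)
    for name in jar_names:
        match = JAR_RE.match(name)
        if match:
            builds[(match.group(1), match.group(2))].append(name)
    return dict(builds)


if __name__ == "__main__":
    lib_dir = "/usr/local/apache-hive-2.3.2-bin/lib"  # Hive lib dir on the company cluster
    if os.path.isdir(lib_dir):
        builds = phoenix_builds(sorted(os.listdir(lib_dir)))
        for (version, hbase_build), jars in sorted(builds.items()):
            print(version, hbase_build, jars)
        if len(builds) > 1:
            print("WARNING: %d different Phoenix builds in %s" % (len(builds), lib_dir))
    else:
        print("directory not found:", lib_dir)
```

More than one group in Hive's lib directory is a reason to remove the extra jars before looking further.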

Official Phoenix documentation (English)

Home page: http://phoenix.apache.org/index.html

Hive integration: http://phoenix.apache.org/hive_storage_handler.html