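TensorFlow Peking University Open Course Study Notes 6.2: Building a Dataset

The previous post covered how to run predictions on images and print the results. This post covers the following.

Today's topics:

tfrecords files: what they are, how to generate them, and how to parse them.

Changing the interface that fetches images and labels in the backpropagation file. The key step is to use multiple threads to speed up batch fetching of images and labels, by placing the batch-fetch operation between starting and stopping the thread coordinator.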
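To make the "fetch between start and stop" idea concrete, here is a rough standard-library analogy. It is not TensorFlow code: the `producer` and `fetch_batches` names, the queue size, and the integer "samples" are all assumptions made for illustration. A `threading.Event` stands in for the coordinator, and two worker threads stand in for the queue runners.

```python
import queue
import threading


def producer(q, stop_event, start):
    """Hypothetical worker: keeps pushing sample ids until asked to stop."""
    i = start
    while not stop_event.is_set():
        try:
            q.put(i, timeout=0.05)  # wait briefly if the queue is full
            i += 2
        except queue.Full:
            continue  # re-check the stop flag, then try again


def fetch_batches(num_batches, batch_size):
    q = queue.Queue(maxsize=100)
    stop_event = threading.Event()  # plays the role of the coordinator
    threads = [
        threading.Thread(target=producer, args=(q, stop_event, s))
        for s in (0, 1)
    ]
    for t in threads:
        t.start()  # analogous to starting the queue runners
    batches = []
    try:
        # All batch fetching happens between start and stop
        for _ in range(num_batches):
            batches.append([q.get() for _ in range(batch_size)])
    finally:
        stop_event.set()  # analogous to coord.request_stop()
        for t in threads:
            t.join()  # analogous to coord.join(threads)
    return batches


if __name__ == "__main__":
    print(fetch_batches(num_batches=3, batch_size=4))
```

The order of items inside each batch depends on thread scheduling, which is also why the real pipeline does not guarantee a fixed sample order.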

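When I ran mnist_generateds.py, one result surprised me a little.

After a line of the label file is read in and split,

# split each line on whitespace
value = content.split()

I would not have expected this: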

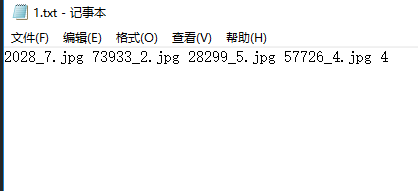

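value equals ['8876_3.jpg', '3'], so value[0] and value[1] are 8876_3.jpg and 3. That left me with a big question mark.

Answer: testing shows that a \n must have been appended to each line when this file was created, which is why we see the following:

So nothing is wrong!

But after the change below, we find:

So when the txt file was created, each line was written in the format: image_name label\n. The \n is just not visible when viewing the txt file; it only shows up when the line is printed.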
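A quick way to check this is to look at one line with repr(). The sketch below uses a hand-written line (an assumption; it is not read from the real label file) and compares a no-argument split() with a split on a literal space.

```python
# Hypothetical line, written the way the label file appears to store it
line = "8876_3.jpg 3\n"

# repr() makes the trailing newline visible
print(repr(line))

# split() with no arguments splits on any run of whitespace,
# so the trailing "\n" is dropped along with the space
value = line.split()
print(value)

# Splitting on a literal space keeps the newline attached to the label
print(line.split(" "))
```

This is why value[1] can be passed straight to int() in the script: the newline never reaches it.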

# coding:utf-8
import tensorflow as tf
import numpy as np
from PIL import Image
import os

image_train_path = './mnist_data_jpg/mnist_train_jpg_60000/'
label_train_path = './mnist_data_jpg/mnist_train_jpg_60000.txt'
tfRecord_train = './data/mnist_train.tfrecords'
image_test_path = './mnist_data_jpg/mnist_test_jpg_10000/'
label_test_path = './mnist_data_jpg/mnist_test_jpg_10000.txt'
tfRecord_test = './data/mnist_test.tfrecords'
data_path = './data'
resize_height = 28
resize_width = 28

# Generate the tfrecords file

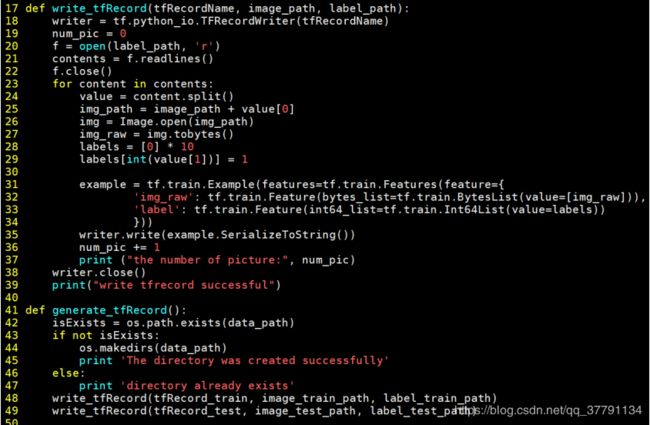

def write_tfRecord(tfRecordName, image_path, label_path):
    # Create a writer
    writer = tf.python_io.TFRecordWriter(tfRecordName)
    # Counter used to show progress
    num_pic = 0
    # Open the label file for reading
    f = open(label_path, 'r')
    # Read all lines of the file
    contents = f.readlines()
    # Close the file
    f.close()
    # Loop over every image and label
    for content in contents:
        # Split each line on whitespace
        value = content.split()
        print(value)
        img_path = image_path + value[0]
        img = Image.open(img_path)
        # Convert the image to raw bytes
        img_raw = img.tobytes()
        # Initialize every element of the label to 0
        labels = [0] * 10
        # Set the element at the label index to 1
        labels[int(value[1])] = 1
        # Wrap each image and label in an Example
        example = tf.train.Example(features=tf.train.Features(feature={
            'img_raw': tf.train.Feature(bytes_list=tf.train.BytesList(value=[img_raw])),
            'label': tf.train.Feature(int64_list=tf.train.Int64List(value=labels))
        }))
        # Serialize the example and write it to the file
        writer.write(example.SerializeToString())
        # Increment the counter for every image saved
        num_pic += 1
        print("the number of picture:", num_pic)
    # Close the writer
    writer.close()
    print("write tfrecord successful")

def generate_tfRecord():
    # Check whether the output directory exists
    isExists = os.path.exists(data_path)
    if not isExists:
        os.makedirs(data_path)
        print('The directory was created successfully')
    else:
        print('directory already exists')
    write_tfRecord(tfRecord_train, image_train_path, label_train_path)
    write_tfRecord(tfRecord_test, image_test_path, label_test_path)

# Parse the tfrecords file
def read_tfRecord(tfRecord_path):
    # Creates a first-in, first-out queue of file names that the reader pulls from
    filename_queue = tf.train.string_input_producer([tfRecord_path], shuffle=True)
    # Create a reader
    reader = tf.TFRecordReader()
    # Each sample read is stored in serialized_example and then deserialized.
    # The keys for the label and the image must match the keys used when the
    # tfrecords file was written; the label shape gives the number of classes.
    _, serialized_example = reader.read(filename_queue)
    # Parse the tf.train.Example protocol buffer into tensors
    features = tf.parse_single_example(serialized_example,
                                       features={
                                           'label': tf.FixedLenFeature([10], tf.int64),
                                           'img_raw': tf.FixedLenFeature([], tf.string)
                                       })
    # Decode the img_raw string into 8-bit unsigned integers
    img = tf.decode_raw(features['img_raw'], tf.uint8)
    # Set the static shape to a vector of 784 elements
    img.set_shape([784])
    # Convert to floats between 0 and 1
    img = tf.cast(img, tf.float32) * (1. / 255)
    # Convert the label to float as well
    label = tf.cast(features['label'], tf.float32)
    # Return the image and the label
    return img, label

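# Read from a tfrecords file; the caller specifies num and isTrain
def get_tfrecord(num, isTrain=True):
    if isTrain:
        tfRecord_path = tfRecord_train
    else:
        tfRecord_path = tfRecord_test
    img, label = read_tfRecord(tfRecord_path)
    # Randomly read one batch of data (images and labels).
    # Two threads (num_threads=2) fill a queue with capacity 1000, and batches
    # are drawn from it at random. min_after_dequeue=700 is the minimum number
    # of elements that must remain in the queue after a dequeue, so the queue
    # is refilled from the data before further batches are drawn.
    img_batch, label_batch = tf.train.shuffle_batch([img, label],
                                                    batch_size=num,
                                                    num_threads=2,
                                                    capacity=1000,
                                                    min_after_dequeue=700)
    # The returned images and labels are batch_size randomly drawn samples
    return img_batch, label_batch


def main():
    generate_tfRecord()


if __name__ == '__main__':
    main()

Building a dataset for a specific application: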

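1. Dataset generation and reading file (mnist_generateds.py)

√ tfrecords files

1) tfrecords: a binary file format. Images and labels can first be packed into a file of this format. Reading data through tfrecords improves memory utilization.

2) tf.train.Example: stores the training data. The features of the training data are expressed as key-value pairs, for example 'img_raw': value and 'label': value, where each value is a BytesList, FloatList, or Int64List.

3) SerializeToString(): serializes the data into a string for storage.

√ Generating the tfrecords file

Notes:

1) writer = tf.python_io.TFRecordWriter(tfRecordName) # create a writer

2) A for loop iterates over every image and label

3) example = tf.train.Example(features=tf.train.Features(feature={
'img_raw': tf.train.Feature(bytes_list=tf.train.BytesList(value=[img_raw])),
'label': tf.train.Feature(int64_list=tf.train.Int64List(value=labels))})) # wrap each image and label in an example

4) writer.write(example.SerializeToString()) # serialize the example and write it

5) writer.close() # close the writer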
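The label stored in step 3 is a one-hot list of ten integers. The sketch below isolates that step in plain Python so it can be run without TensorFlow; the helper names `one_hot` and `parse_label_line` are assumptions for illustration and do not appear in the course code.

```python
def one_hot(label, num_classes=10):
    """Return a list of zeros with a single 1 at the label index."""
    vec = [0] * num_classes
    vec[int(label)] = 1
    return vec


def parse_label_line(line):
    """Split 'image_name label' into the file name and a one-hot label."""
    name, label = line.split()
    return name, one_hot(label)


# Hypothetical line in the same format as the label txt file
name, labels = parse_label_line("8876_3.jpg 3\n")
print(name)    # 8876_3.jpg
print(labels)  # [0, 0, 0, 1, 0, 0, 0, 0, 0, 0]
```

Because the label is written as ten int64 values, the reader has to declare it as a fixed-length feature of length 10 when parsing.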

# coding:utf-8
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data
import mnist_forward
import os
import mnist_generateds  # 1

BATCH_SIZE = 200
LEARNING_RATE_BASE = 0.1
LEARNING_RATE_DECAY = 0.99
REGULARIZER = 0.0001
STEPS = 50000
MOVING_AVERAGE_DECAY = 0.99
MODEL_SAVE_PATH = "./model/"
MODEL_NAME = "mnist_model"
# Total number of training samples, given by hand: 60,000
train_num_examples = 60000  # 2

def backward():
    x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
    y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])
    y = mnist_forward.forward(x, REGULARIZER)
    global_step = tf.Variable(0, trainable=False)

    ce = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))
    cem = tf.reduce_mean(ce)
    loss = cem + tf.add_n(tf.get_collection('losses'))

    learning_rate = tf.train.exponential_decay(
        LEARNING_RATE_BASE,
        global_step,
        train_num_examples / BATCH_SIZE,
        LEARNING_RATE_DECAY,
        staircase=True)

    train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

    ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
    ema_op = ema.apply(tf.trainable_variables())
    with tf.control_dependencies([train_step, ema_op]):
        train_op = tf.no_op(name='train')

    saver = tf.train.Saver()
    # Fetch batch_size images and labels per batch
    img_batch, label_batch = mnist_generateds.get_tfrecord(BATCH_SIZE, isTrain=True)  # 3

    with tf.Session() as sess:
        init_op = tf.global_variables_initializer()
        sess.run(init_op)

        ckpt = tf.train.get_checkpoint_state(MODEL_SAVE_PATH)
        if ckpt and ckpt.model_checkpoint_path:
            saver.restore(sess, ckpt.model_checkpoint_path)

        # Use multiple threads to speed up batch fetching of images and labels
        coord = tf.train.Coordinator()  # 4
        # Start the input queue threads
        threads = tf.train.start_queue_runners(sess=sess, coord=coord)  # 5

        for i in range(STEPS):
            # Fetch one batch of images and labels
            xs, ys = sess.run([img_batch, label_batch])  # 6
            _, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})
            if i % 1000 == 0:
                print("After %d training step(s), loss on training batch is %g." % (step, loss_value))
                saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step)

        # Stop the thread coordinator and wait for the threads to finish
        coord.request_stop()  # 7
        coord.join(threads)  # 8


def main():
    backward()  # 9


if __name__ == '__main__':
    main()
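The learning-rate schedule in the script above can be worked through by hand. The sketch below reproduces the documented staircase formula, base * decay ** floor(global_step / decay_steps), in plain Python using the constants from the script. The function name `decayed_learning_rate` is an assumption for illustration, and the function is a stand-in for the TensorFlow op rather than a replacement for it.

```python
BATCH_SIZE = 200
LEARNING_RATE_BASE = 0.1
LEARNING_RATE_DECAY = 0.99
train_num_examples = 60000


def decayed_learning_rate(global_step):
    """Staircase exponential decay with the constants used in the script."""
    # 60000 / 200 = 300 steps, i.e. one pass over the training set
    decay_steps = train_num_examples // BATCH_SIZE
    # Integer division gives the staircase: the rate only changes every 300 steps
    return LEARNING_RATE_BASE * LEARNING_RATE_DECAY ** (global_step // decay_steps)


for step in (0, 299, 300, 3000):
    print(step, decayed_learning_rate(step))
```

With these settings the rate stays at 0.1 for the first 300 steps and is multiplied by 0.99 after each full pass over the 60,000 training images.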

# coding:utf-8
import time
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data
import mnist_forward
import mnist_backward
import mnist_generateds

TEST_INTERVAL_SECS = 5
# Total number of test samples, given by hand: 10,000
TEST_NUM = 10000  # 1

def test():
    with tf.Graph().as_default() as g:
        x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
        y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])
        y = mnist_forward.forward(x, None)

        ema = tf.train.ExponentialMovingAverage(mnist_backward.MOVING_AVERAGE_DECAY)
        ema_restore = ema.variables_to_restore()
        saver = tf.train.Saver(ema_restore)

        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

        # Use get_tfrecord to read all 10,000 test images, replacing the previous reader
        img_batch, label_batch = mnist_generateds.get_tfrecord(TEST_NUM, isTrain=False)  # 2

        while True:
            with tf.Session() as sess:
                ckpt = tf.train.get_checkpoint_state(mnist_backward.MODEL_SAVE_PATH)
                if ckpt and ckpt.model_checkpoint_path:
                    saver.restore(sess, ckpt.model_checkpoint_path)
                    global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]

                    # Use multiple threads to speed up batch fetching of images and labels
                    coord = tf.train.Coordinator()  # 3
                    # Start the input queue threads
                    threads = tf.train.start_queue_runners(sess=sess, coord=coord)  # 4

                    # Fetch the batch of images and labels
                    xs, ys = sess.run([img_batch, label_batch])  # 5

                    accuracy_score = sess.run(accuracy, feed_dict={x: xs, y_: ys})
                    print("After %s training step(s), test accuracy = %g" % (global_step, accuracy_score))

                    # Stop the thread coordinator and wait for the threads to finish
                    coord.request_stop()  # 6
                    coord.join(threads)  # 7
                else:
                    print('No checkpoint file found')
                    return
            # Wait 5 seconds between evaluation rounds
            time.sleep(TEST_INTERVAL_SECS)


def main():
    test()  # 8


if __name__ == '__main__':
    main()
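The accuracy node in the test script compares the index of the largest output with the index of the 1 in the one-hot label, then averages the matches. A plain-Python sketch of the same computation, using made-up numbers and the hypothetical helper names `argmax` and `accuracy`, looks like this:

```python
def argmax(row):
    """Index of the largest value in a list."""
    return max(range(len(row)), key=lambda i: row[i])


def accuracy(outputs, one_hot_labels):
    """Fraction of rows whose predicted index matches the label index."""
    correct = [argmax(p) == argmax(t) for p, t in zip(outputs, one_hot_labels)]
    return sum(correct) / len(correct)


# Made-up network outputs and labels for a 3-class toy problem
outputs = [[0.1, 2.0, 0.3], [1.5, 0.2, 0.1], [0.0, 0.1, 0.9]]
labels = [[0, 1, 0], [0, 0, 1], [0, 0, 1]]
print(accuracy(outputs, labels))  # two of the three rows match
```

The outputs do not need to pass through softmax first, because softmax preserves the position of the largest value.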

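# coding:utf-8
# 1. Forward propagation
import tensorflow as tf

# The network has 784 input nodes (the number of pixels in each input image)
INPUT_NODE = 784
# 10 output nodes (a ten-way classification of the digits 0-9)
OUTPUT_NODE = 10
# 500 hidden-layer nodes
LAYER1_NODE = 500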

def get_weight(shape, regularizer):
    # Weights are drawn from a truncated normal distribution, with optional regularization
    w = tf.Variable(tf.truncated_normal(shape, stddev=0.1))
    # w = tf.Variable(tf.random_normal(shape,stddev=0.1))
    # Add the regularization loss of each weight to the total loss
    if regularizer is not None:
        tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))
    return w


def get_bias(shape):
    # One-dimensional array initialized to all zeros
    b = tf.Variable(tf.zeros(shape))
    return b

def forward(x, regularizer):
    # Weights w1 from the input layer to the hidden layer have shape [784, 500]
    w1 = get_weight([INPUT_NODE, LAYER1_NODE], regularizer)
    # Bias b1 from the input layer to the hidden layer is a 1-D array of length 500
    b1 = get_bias([LAYER1_NODE])
    # First layer: multiply the input x by w1, add the bias b1, then apply relu
    # to get the hidden-layer output y1
    y1 = tf.nn.relu(tf.matmul(x, w1) + b1)
    # Weights w2 from the hidden layer to the output layer have shape [500, 10]
    w2 = get_weight([LAYER1_NODE, OUTPUT_NODE], regularizer)
    # Bias b2 from the hidden layer to the output layer is a 1-D array of length 10
    b2 = get_bias([OUTPUT_NODE])
    # Second layer: multiply the hidden output y1 by w2 and add the bias b2 to get y.
    # Because y later goes through softmax to become a probability distribution,
    # it is not passed through relu
    y = tf.matmul(y1, w2) + b2
    return y

# coding:utf-8
import tensorflow as tf
import numpy as np
from PIL import Image
import mnist_backward
import mnist_forward

def restore_model(testPicArr):
    # Use tf.Graph() to rebuild the computation graph defined earlier
    with tf.Graph().as_default() as tg:
        x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
        # Call the forward() function from the mnist_forward file
        y = mnist_forward.forward(x, None)
        # Take the class with the largest output as the prediction
        preValue = tf.argmax(y, 1)

        # Create a saver that restores the moving-average values
        variable_averages = tf.train.ExponentialMovingAverage(mnist_backward.MOVING_AVERAGE_DECAY)
        variables_to_restore = variable_averages.variables_to_restore()
        saver = tf.train.Saver(variables_to_restore)

        with tf.Session() as sess:
            # Get the most recently saved model through ckpt
            ckpt = tf.train.get_checkpoint_state(mnist_backward.MODEL_SAVE_PATH)
            if ckpt and ckpt.model_checkpoint_path:
                saver.restore(sess, ckpt.model_checkpoint_path)
                preValue = sess.run(preValue, feed_dict={x: testPicArr})
                return preValue
            else:
                print("No checkpoint file found")
                return -1

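# Preprocessing: resize, convert to grayscale, binarize
def pre_pic(picName):
    img = Image.open(picName)
    # LANCZOS is the same filter as the older ANTIALIAS alias, which was removed in Pillow 10
    reIm = img.resize((28, 28), Image.LANCZOS)
    im_arr = np.array(reIm.convert('L'))
    # Binarize the image (this filters out noise; the threshold can be tuned while debugging)
    threshold = 50
    # The model expects white digits on a black background, but the input image is
    # black on white, so each pixel is replaced by 255 minus its value to invert it
    for i in range(28):
        for j in range(28):
            im_arr[i][j] = 255 - im_arr[i][j]
            if im_arr[i][j] < threshold:
                im_arr[i][j] = 0
            else:
                im_arr[i][j] = 255
    # Reshape the image to 1 row by 784 columns and convert the values to float
    # (pixels are required to be floats between 0 and 1)
    nm_arr = im_arr.reshape([1, 784])
    nm_arr = nm_arr.astype(np.float32)
    # Scale the grayscale values from the range 0-255 to floats in the range 0-1
    img_ready = np.multiply(nm_arr, 1.0 / 255.0)
    return img_ready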

def application():
    # Ask how many images to recognize (int() avoids evaluating arbitrary input)
    testNum = int(input("input the number of test pictures:"))
    for i in range(testNum):
        # Path and file name of the image to recognize
        testPic = input("the path of test picture:")
        # Preprocess the image
        testPicArr = pre_pic(testPic)
        # Get the prediction
        preValue = restore_model(testPicArr)
        print("The prediction number is:", preValue)


def main():
    application()


if __name__ == '__main__':
    main()
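The invert-then-threshold step in pre_pic can be checked on a tiny example. The sketch below works on nested lists instead of a NumPy array so it runs with the standard library alone; `invert_and_binarize` and `scale_to_unit` are hypothetical helper names, and the 2x3 grid of pixel values is made up.

```python
def invert_and_binarize(pixels, threshold=50):
    """Invert each gray value (255 - p), then map it to 0 or 255."""
    return [
        [0 if (255 - p) < threshold else 255 for p in row]
        for row in pixels
    ]


def scale_to_unit(pixels):
    """Flatten the grid into one row and scale 0-255 values to 0-1 floats."""
    return [p / 255.0 for row in pixels for p in row]


# Made-up 2x3 grayscale patch: light background with a few dark strokes
patch = [[255, 250, 0],
         [200, 206, 205]]

binary = invert_and_binarize(patch)
print(binary)                 # [[0, 0, 255], [255, 0, 255]]
print(scale_to_unit(binary))  # [0.0, 0.0, 1.0, 1.0, 0.0, 1.0]
```

A pixel of 206 inverts to 49, just under the threshold, and becomes background, while 205 inverts to 50 and becomes part of the digit. This boundary behaviour is what changes when the threshold is tuned.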