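This post introduces how to use TensorBoard, the tool that comes with TensorFlow for visualizing the data produced during training. It does not need to be installed separately, because installing TensorFlow installs it as well. TensorBoard reads the log files that a TensorFlow program writes while it runs and visualizes the program's state from them; it runs in a separate process from the TensorFlow program. The walkthrough below is based on the source of the official example, mnist_with_summaries.py.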

Coding stage

1. Add the tensors or Variables you care about to TensorBoard

tf.summary.image: add the image data you want to inspect

    with tf.name_scope('input_reshape'):  # a name scope groups related nodes so the graph stays tidy
        image_shaped_input = tf.reshape(x, [-1, 28, 28, 1])
        tf.summary.image('input', image_shaped_input, 10)  # arguments: name, tensor, max_outputs
        # max_outputs defaults to 3; it is set to 10 here to display more images
        # with the name scope, the image is named like: input_reshape/input/xxxxx
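To make the naming rule concrete, here is a toy model in plain Python of how nested name scopes prefix the names of the nodes created inside them. It is only an illustration: `ToyGraph`, `name_scope`, and `add_node` are hypothetical names invented for this sketch, not TensorFlow's implementation, and real TensorFlow additionally makes repeated names unique.

```python
from contextlib import contextmanager


class ToyGraph:
    """Hypothetical stand-in for a graph; it only tracks node names."""

    def __init__(self):
        self._scopes = []   # stack of currently open scope names
        self.nodes = []     # fully qualified names, in creation order

    @contextmanager
    def name_scope(self, name):
        # Entering a scope pushes its name; leaving pops it again.
        self._scopes.append(name)
        try:
            yield
        finally:
            self._scopes.pop()

    def add_node(self, name):
        # Full name = every open scope joined by '/', then the node's own name.
        full_name = "/".join(self._scopes + [name])
        self.nodes.append(full_name)
        return full_name


graph = ToyGraph()
with graph.name_scope("input_reshape"):
    image_name = graph.add_node("input")
with graph.name_scope("layer1"):
    with graph.name_scope("weights"):
        with graph.name_scope("summaries"):
            max_name = graph.add_node("max")

print(image_name)  # input_reshape/input
print(max_name)    # layer1/weights/summaries/max
```

This is why the summaries show up in TensorBoard grouped under prefixes such as input_reshape/ or xxx/summaries/.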

tf.summary.scalar: add the scalar values you want to track

    # A shared helper for Variables (here the weights and biases): inside one name scope it
    # computes their mean, stddev, max, and min, plus a histogram, and records them
    # so they can be viewed in TensorBoard
    def variable_summaries(var):
        """Attach a lot of summaries to a Tensor (for TensorBoard visualization)."""
        with tf.name_scope('summaries'):
            mean = tf.reduce_mean(var)
            tf.summary.scalar('mean', mean)  # arguments: name, tensor
            with tf.name_scope('stddev'):
                stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
            tf.summary.scalar('stddev', stddev)
            tf.summary.scalar('max', tf.reduce_max(var))  # named like: xxx/summaries/max
            tf.summary.scalar('min', tf.reduce_min(var))
            tf.summary.histogram('histogram', var)
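As a sanity check on what these scalars mean, the same statistics can be computed in plain Python for a small list. This is a sketch, not part of the example: `summarize` is a hypothetical helper and the weight values are made up. It shows that the stddev expression above is the population standard deviation (it divides by N, not N - 1).

```python
import math
import statistics


def summarize(values):
    """Return the scalar statistics that variable_summaries logs, for a flat list."""
    mean = sum(values) / len(values)
    # Same formula as the TensorFlow snippet: sqrt(mean((x - mean)^2)),
    # i.e. the population standard deviation.
    stddev = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    return {"mean": mean, "stddev": stddev, "max": max(values), "min": min(values)}


weights = [0.5, -0.25, 0.0, 0.75]  # made-up values for illustration
stats = summarize(weights)
print(stats)

# Cross-check against the standard library's population standard deviation.
print(math.isclose(stats["stddev"], statistics.pstdev(weights)))
```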

tf.summary.histogram: add a histogram of the range of values taken by a Variable or tensor

    with tf.name_scope('Wx_plus_b'):
        preactivate = tf.matmul(input_tensor, weights) + biases
        tf.summary.histogram('pre_activations', preactivate)  # arguments: name, values
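Conceptually, a histogram summary records, at each step, how many of the tensor's values fall into each range. The sketch below counts values into equal-width buckets in plain Python. The equal-width choice and the name `bucket_counts` are assumptions made for illustration; TensorBoard uses its own bucketing scheme, so this only conveys the idea.

```python
def bucket_counts(values, num_buckets):
    """Count how many values fall into each of num_buckets equal-width buckets."""
    lo, hi = min(values), max(values)
    if lo == hi:
        # All values identical: put everything in the first bucket.
        return [len(values)] + [0] * (num_buckets - 1)
    width = (hi - lo) / num_buckets
    counts = [0] * num_buckets
    for v in values:
        # The maximum value would land one past the last bucket, so clamp it.
        index = min(int((v - lo) / width), num_buckets - 1)
        counts[index] += 1
    return counts


pre_activations = [-1.0, -0.5, 0.0, 0.25, 0.5, 1.0]  # made-up values
print(bucket_counts(pre_activations, 4))  # [1, 1, 2, 2]
```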

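2. Merge all the summary ops and use FileWriter to create the files that store the information produced at run time

tf.summary.merge_all: merge all the summary ops into one op, then define two FileWriter instances

    # Merge all the summaries and define two file writers
    merged = tf.summary.merge_all()
    train_writer = tf.summary.FileWriter(FLAGS.log_dir + '/train', sess.graph)  # also writes the whole computation graph
    test_writer = tf.summary.FileWriter(FLAGS.log_dir + '/test')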
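The two writers log into sibling subdirectories of one log directory, and the full example clears that directory before training so that old event files do not mix with new ones. A standard-library sketch of that directory handling is shown here; `prepare_log_dirs` is a hypothetical helper, and it uses `shutil` and `os` where the example itself uses `tf.gfile`.

```python
import os
import shutil
import tempfile


def prepare_log_dirs(log_dir):
    """Start from an empty log_dir and return the train/test run directories."""
    if os.path.exists(log_dir):
        shutil.rmtree(log_dir)      # drop event files left over from earlier runs
    os.makedirs(log_dir)
    train_dir = os.path.join(log_dir, "train")
    test_dir = os.path.join(log_dir, "test")
    return train_dir, test_dir


base = tempfile.mkdtemp()           # throwaway location for the demo
log_dir = os.path.join(base, "logs")
train_dir, test_dir = prepare_log_dirs(log_dir)
print(train_dir)
print(test_dir)
```

Using `os.path.join` instead of string concatenation with '/' keeps the paths correct on Windows as well.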

3. Run the merged op during training or testing; each run produces summary data, which is then written to the files created in the previous step

train_writer.add_summary: write the summary data to the file

    # Record compute time and memory usage during training
    run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)  # set the trace level
    run_metadata = tf.RunMetadata()  # holds the metadata TensorFlow collects for this run
    summary, _ = sess.run([merged, train_step],
                          feed_dict=feed_dict(True),
                          options=run_options,
                          run_metadata=run_metadata)
    train_writer.add_run_metadata(run_metadata, 'step%03d' % i)  # add the run metadata for this step
    train_writer.add_summary(summary, i)
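In the full example this traced run is not executed on every step: the training loop evaluates on test data every 10th step, traces the steps where i % 100 == 99, and does an ordinary training step otherwise. The schedule can be sketched without TensorFlow; `step_kind` is a hypothetical helper that only mirrors the loop's conditions.

```python
from collections import Counter


def step_kind(i):
    """Classify training-loop step i the way the full example's loop does."""
    if i % 10 == 0:
        return "test"            # evaluate on test data, write to test_writer
    if i % 100 == 99:
        return "train+trace"     # train with FULL_TRACE and add run metadata
    return "train"               # train and write an ordinary summary


counts = Counter(step_kind(i) for i in range(1000))  # 1000 is the default max_steps
print(counts["test"], counts["train+trace"], counts["train"])  # 100 10 890
```

Tracing only a few steps keeps the overhead of FULL_TRACE low while still giving the GRAPHS tab timing and memory data to display.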

The complete code is as follows:

    # coding=UTF-8
    import argparse
    import os
    import sys

    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data

    FLAGS = None


    def train():
        # Import data
        mnist = input_data.read_data_sets(FLAGS.data_dir,
                                          fake_data=FLAGS.fake_data)

        # InteractiveSession installs itself as the default session, so ops can be defined
        # after the session is created; with tf.Session() the usual pattern is to build the
        # whole graph first and then launch it. It also allows calling run() and eval()
        # without passing a session explicitly.
        sess = tf.InteractiveSession()

        # Create a multilayer model.

        # Input placeholders
        with tf.name_scope('input'):  # a name scope groups related nodes so the graph stays tidy
            x = tf.placeholder(tf.float32, [None, 784], name='x-input')
            y_ = tf.placeholder(tf.int64, [None], name='y-input')

        with tf.name_scope('input_reshape'):
            image_shaped_input = tf.reshape(x, [-1, 28, 28, 1])
            tf.summary.image('input', image_shaped_input, 10)  # named like: input_reshape/input/xxxxx

        # We can't initialize these variables to 0 - the network will get stuck.
        # Model parameter initialization
        def weight_variable(shape):
            """Create a weight variable with appropriate initialization."""
            initial = tf.truncated_normal(shape, stddev=0.1)
            return tf.Variable(initial)

        def bias_variable(shape):
            """Create a bias variable with appropriate initialization."""
            initial = tf.constant(0.1, shape=shape)
            return tf.Variable(initial)

        # A shared helper for Variables (here the weights and biases): inside one name scope it
        # computes their mean, stddev, max, and min, plus a histogram, and records them
        # so they can be viewed in TensorBoard
        def variable_summaries(var):
            """Attach a lot of summaries to a Tensor (for TensorBoard visualization)."""
            with tf.name_scope('summaries'):
                mean = tf.reduce_mean(var)
                tf.summary.scalar('mean', mean)
                with tf.name_scope('stddev'):
                    stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
                tf.summary.scalar('stddev', stddev)
                tf.summary.scalar('max', tf.reduce_max(var))  # named like: xxx/summaries/max
                tf.summary.scalar('min', tf.reduce_min(var))
                tf.summary.histogram('histogram', var)

        def nn_layer(input_tensor, input_dim, output_dim, layer_name, act=tf.nn.relu):
            """Reusable code for making a simple neural net layer.

            It does a matrix multiply, bias add, and then uses ReLU to nonlinearize.
            It also sets up name scoping so that the resultant graph is easy to read,
            and adds a number of summary ops.
            """
            # One layer of the MLP: initialize the weights and biases, do a matrix multiply
            # plus a bias term, then apply a nonlinear activation function.
            # Adding a name scope ensures logical grouping of the layers in the graph.
            with tf.name_scope(layer_name):
                # This Variable will hold the state of the weights for the layer
                with tf.name_scope('weights'):
                    weights = weight_variable([input_dim, output_dim])
                    variable_summaries(weights)
                with tf.name_scope('biases'):
                    biases = bias_variable([output_dim])
                    variable_summaries(biases)
                with tf.name_scope('Wx_plus_b'):
                    preactivate = tf.matmul(input_tensor, weights) + biases
                    tf.summary.histogram('pre_activations', preactivate)
                activations = act(preactivate, name='activation')
                tf.summary.histogram('activations', activations)
                return activations

        hidden1 = nn_layer(x, 784, 500, 'layer1')  # use the layer helper defined above

        # Apply dropout
        with tf.name_scope('dropout'):
            keep_prob = tf.placeholder(tf.float32)
            tf.summary.scalar('dropout_keep_probability', keep_prob)
            dropped = tf.nn.dropout(hidden1, keep_prob)

        # Do not apply softmax activation yet, see below.
        # The activation here is the identity mapping, which passes its input through unchanged
        y = nn_layer(dropped, 500, 10, 'layer2', act=tf.identity)

        with tf.name_scope('cross_entropy'):
            # The raw formulation of cross-entropy,
            # tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(tf.nn.softmax(y)), reduction_indices=[1]))
            # can be numerically unstable.
            # So here we use tf.losses.sparse_softmax_cross_entropy on the
            # raw logit outputs of the nn_layer above, and then average across
            # the batch.
            with tf.name_scope('total'):
                # Compute the softmax and the cross-entropy
                cross_entropy = tf.losses.sparse_softmax_cross_entropy(
                    labels=y_, logits=y)
        tf.summary.scalar('cross_entropy', cross_entropy)

        # Minimize the loss with the Adam optimizer
        with tf.name_scope('train'):
            train_step = tf.train.AdamOptimizer(FLAGS.learning_rate).minimize(
                cross_entropy)

        # Compute the accuracy
        with tf.name_scope('accuracy'):
            with tf.name_scope('correct_prediction'):
                correct_prediction = tf.equal(tf.argmax(y, 1), y_)
            with tf.name_scope('accuracy'):
                accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
        tf.summary.scalar('accuracy', accuracy)

        # Merge all the summaries and write them out to
        # ./logs/ (by default)
        # Two file writers are defined: one for training and one for testing
        merged = tf.summary.merge_all()
        train_writer = tf.summary.FileWriter(FLAGS.log_dir + '/train', sess.graph)
        test_writer = tf.summary.FileWriter(FLAGS.log_dir + '/test')
        tf.global_variables_initializer().run()

        # Train the model, and also write summaries.
        # Every 10th step, measure test-set accuracy, and write test summaries
        # All other steps, run train_step on training data, & add training summaries

        # feed_dict decides whether to feed a training batch or the test data
        def feed_dict(train):
            """Make a TensorFlow feed_dict: maps data onto Tensor placeholders."""
            if train or FLAGS.fake_data:
                xs, ys = mnist.train.next_batch(100, fake_data=FLAGS.fake_data)
                k = FLAGS.dropout
            else:
                xs, ys = mnist.test.images, mnist.test.labels
                k = 1.0
            return {x: xs, y_: ys, keep_prob: k}

        for i in range(FLAGS.max_steps):
            if i % 10 == 0:  # Record summaries and test-set accuracy
                summary, acc = sess.run([merged, accuracy], feed_dict=feed_dict(False))
                test_writer.add_summary(summary, i)
                print('Accuracy at step %s: %s' % (i, acc))
            else:  # Record train set summaries, and train
                if i % 100 == 99:  # Record execution stats
                    # Record compute time and memory usage during training
                    run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
                    run_metadata = tf.RunMetadata()
                    summary, _ = sess.run([merged, train_step],
                                          feed_dict=feed_dict(True),
                                          options=run_options,
                                          run_metadata=run_metadata)
                    train_writer.add_run_metadata(run_metadata, 'step%03d' % i)  # add the run metadata for this step
                    train_writer.add_summary(summary, i)
                    print('Adding run metadata for', i)
                else:  # Record a summary
                    summary, _ = sess.run([merged, train_step], feed_dict=feed_dict(True))
                    train_writer.add_summary(summary, i)
        train_writer.close()  # remember to close the writers
        test_writer.close()

    def main(_):
        if tf.gfile.Exists(FLAGS.log_dir):  # if the log directory exists, delete it and regenerate it by retraining
            tf.gfile.DeleteRecursively(FLAGS.log_dir)
        tf.gfile.MakeDirs(FLAGS.log_dir)
        train()


    if __name__ == '__main__':
        parser = argparse.ArgumentParser()  # parse command-line arguments; every option has a default
        # Note: type=bool treats any non-empty string as True
        parser.add_argument('--fake_data', nargs='?', const=True, type=bool,
                            default=False,
                            help='If true, uses fake data for unit testing.')
        parser.add_argument('--max_steps', type=int, default=1000,
                            help='Number of steps to run trainer.')
        parser.add_argument('--learning_rate', type=float, default=0.001,
                            help='Initial learning rate')
        parser.add_argument('--dropout', type=float, default=0.9,
                            help='Keep probability for training dropout.')
        parser.add_argument(
            '--data_dir',
            type=str,
            default="./mnist_data",
            help='Directory for storing input data')
        parser.add_argument(
            '--log_dir',
            type=str,
            default="./logs",
            help='Summaries log directory')
        FLAGS, unparsed = parser.parse_known_args()
        tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)
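Two details of the argument parsing are easy to miss: `parse_known_args` returns the flags it does not recognize instead of failing, and `type=bool` converts any non-empty string, including "False", to True. The standard-library sketch below demonstrates both. `str2bool` and `--fake_data_strict` are hypothetical names added for illustration and are not part of the example script.

```python
import argparse


def str2bool(text):
    """Hypothetical helper: turn 'true'/'false'-style text into a real bool."""
    lowered = text.lower()
    if lowered in ("true", "1", "yes"):
        return True
    if lowered in ("false", "0", "no"):
        return False
    raise argparse.ArgumentTypeError("expected a boolean, got %r" % text)


parser = argparse.ArgumentParser()
# Same declaration as in the example: type=bool calls bool() on the string.
parser.add_argument("--fake_data", nargs="?", const=True, type=bool, default=False)
# Alternative declaration using the helper above.
parser.add_argument("--fake_data_strict", nargs="?", const=True, type=str2bool,
                    default=False)
parser.add_argument("--max_steps", type=int, default=1000)

flags, unparsed = parser.parse_known_args(
    ["--fake_data", "False", "--fake_data_strict", "False", "--unknown_flag", "1"])

print(flags.fake_data)         # True, because bool("False") is True
print(flags.fake_data_strict)  # False
print(flags.max_steps)         # 1000 (the default)
print(unparsed)                # ['--unknown_flag', '1']
```

In the example script the unrecognized arguments are passed on to `tf.app.run` through `argv`.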

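Generating the TensorBoard visualization files

After the code above finishes, files with names like "events.out.tfevents.1513910245.N22411D1" appear in the directories given to train_writer and test_writer. Then run the following on the command line. Note that --logdir only needs to point at the parent directory of the generated files:

    tensorboard --logdir=path/to/log-directory

It prints something like this:

    F:\>tensorboard --logdir=./log
    TensorBoard 0.4.0rc3 at http://N22411D1:6006 (Press CTRL+C to quit)

Finally, open "http://N22411D1:6006" in a browser such as Chrome or Firefox.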

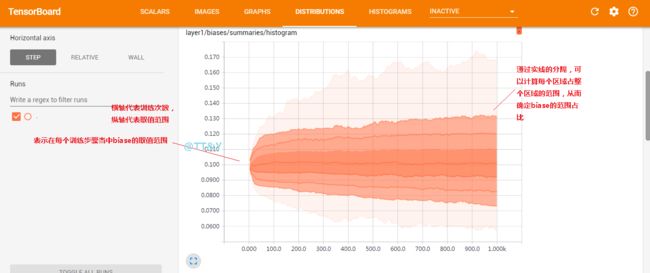
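If TensorBoard starts but shows no data, a quick check is whether the directory passed to --logdir actually contains event files. The sketch below walks a directory and lists files whose names start with "events.out.tfevents."; `find_event_files` is a hypothetical helper, and the demo builds a throwaway directory with empty placeholder files instead of real training output.

```python
import os
import tempfile


def find_event_files(log_dir):
    """Return paths, relative to log_dir, of files that look like event files."""
    found = []
    for root, _dirs, files in os.walk(log_dir):
        for name in files:
            if name.startswith("events.out.tfevents."):
                found.append(os.path.relpath(os.path.join(root, name), log_dir))
    return sorted(found)


# Build a throwaway directory that mimics the layout written by the example.
log_dir = tempfile.mkdtemp()
for run in ("train", "test"):
    os.makedirs(os.path.join(log_dir, run))
    # Empty placeholder file; the name reuses the sample file name from the text.
    placeholder = os.path.join(log_dir, run, "events.out.tfevents.1513910245.N22411D1")
    open(placeholder, "w").close()

for path in find_event_files(log_dir):
    print(path)
```

Pointing --logdir at the directory that holds both train/ and test/ lets TensorBoard display them as two separate runs.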

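Reading the TensorBoard visualizations

Enlarge the original image to read it; the annotations are in the image itself.

SCALARS

Plots how single values such as the accuracy, the loss, and the weight statistics change over the training steps.

IMAGES

Shows the images you logged with tf.summary.image.

GRAPHS

Shows the whole computation graph you defined, with details for each node such as its input and output shapes, memory usage, compute time, and node name.

DISTRIBUTIONS

Shows how the distribution of the values you logged with tf.summary.histogram (for example the model parameters) changes as the number of iterations grows.

HISTOGRAMS

Shows the same logged values as one histogram per recorded step, so you can see how their shape changes as the number of iterations grows.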

Reference: 《TensorFlow实战》