Reposted from: https://www.cnblogs.com/sxchengchen/p/7805667.html

GlusterFS Configuration and Usage

Creating a GlusterFS Cluster

I. Introduction

GlusterFS overview

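- GlusterFS is an open-source distributed file system and the core of scale-out storage. It can serve thousands of clients. In traditional deployments, GlusterFS can flexibly combine physical, virtual and cloud resources to deliver highly available, enterprise-grade storage performance.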

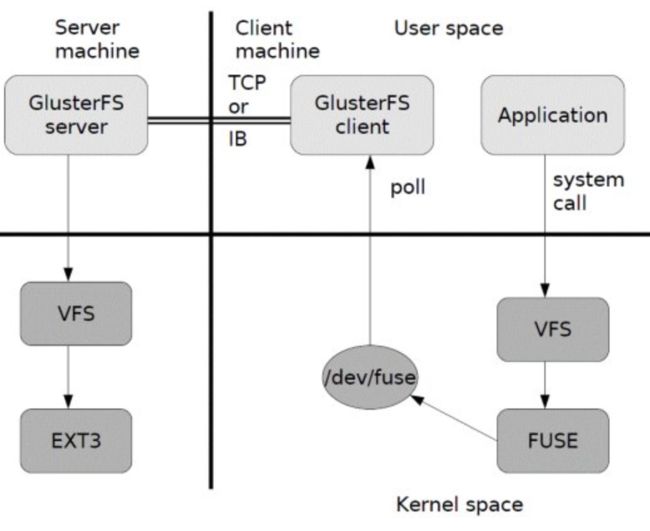

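- GlusterFS aggregates storage resources over TCP/IP or InfiniBand RDMA interconnects. It manages data, disk and memory resources in a single global namespace.

- GlusterFS is built on a stackable user-space design and can deliver high performance for a variety of workloads.

- GlusterFS supports standard clients running standard applications over any standard IP network. As shown in Figure 1, users can access application data in the globally unified namespace through standard protocols such as NFS and CIFS.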

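Main features of GlusterFS

- Scalability and high performance

- High availability

- Global unified namespace

- Elastic hash algorithm

- Elastic volume management

- Based on standard protocols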

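How it works:

1) On the client, users read and write data through the GlusterFS mount point. The cluster is completely transparent to them: they cannot tell whether they are working on the local system or on a remote cluster.

2) The operation is passed to the VFS layer of the local Linux system.

3) VFS hands the data to the FUSE kernel file system. Before the GlusterFS client starts, FUSE must be registered with the system as a real file system. As shown in the figure above, it sits at the same layer as ext3. ext3 works on a physical disk, whereas FUSE passes the data to the GlusterFS client through the /dev/fuse device file. FUSE can therefore be thought of as a proxy.

4) Once FUSE has handed the data to the GlusterFS client, the client processes it according to the client configuration (volume) file. Older setups passed this file when starting the GlusterFS client. With the mount -t glusterfs server:/volume form used later in this article, the client fetches it from the server named in the mount.

5) At the end of the client's processing chain, the data is sent over the network to the GlusterFS server and written to the storage devices that server controls.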
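The FUSE hand-off in steps 3 and 4 is visible from the client. A GlusterFS mount shows up in the kernel's mount table with the file-system type `fuse.glusterfs`.

The sketch below checks this for a directory. Some details are assumptions:
- The function name `is_gluster_mount` is hypothetical.
- The mount table path is a parameter (default `/proc/mounts`) only so the sketch can be tried without a real mount.

```shell
#!/usr/bin/env bash
# Succeeds if DIR is mounted through the GlusterFS FUSE client, i.e. the
# mount table lists it with file-system type "fuse.glusterfs".
# Usage: is_gluster_mount DIR [MOUNT_TABLE]
is_gluster_mount() {
  local dir=${1%/}                    # drop one trailing slash
  local table=${2:-/proc/mounts}      # columns: source, mount point, type, options, ...
  [ -n "$dir" ] || dir=/
  awk -v d="$dir" '$2 == d && $3 == "fuse.glusterfs" { found = 1 }
                   END { exit !found }' "$table"
}
```

For example, on node7-client after the native mount in section IV, `is_gluster_mount /gfs_test && echo "served by GlusterFS"` should print the message.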

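Common volume types

Distributed

Replicated

Striped

Basic volumes:

(1) Distributed volume (distribute): files are spread across the bricks by the elastic hash. Each file is stored whole on one brick, with no redundancy.

(2) Striped volume (stripe): each file is split into chunks that are spread across the bricks.

(3) Replicated volume (replica): each file is copied to every brick of a replica set.

Composite volumes:

(4) Distributed striped volume (distribute stripe)

(5) Distributed replicated volume (distribute replica)

(6) Striped replicated volume (stripe replica)

(7) Distributed striped replicated volume (distribute stripe replica)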
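The volume type follows from the brick count and from the `stripe`/`replica` counts passed to `gluster volume create`:
- The bricks must form whole sets of stripe × replica bricks.
- More than one set adds distribution on top.

The sketch below encodes that rule. Note the following:
- `volume_type` is a hypothetical helper.
- The type names are, as far as we know, the ones `gluster volume info` prints. Only Distribute, Disperse and Distributed-Disperse actually appear in this article's output.
- Dispersed volumes are left out.

```shell
#!/usr/bin/env bash
# Hypothetical helper: predict the volume type for
#   gluster volume create VOL [stripe S] [replica R] BRICK...
# Usage: volume_type BRICKS [STRIPE] [REPLICA]   (1 = option not given)
volume_type() {
  local bricks=$1 stripe=${2:-1} replica=${3:-1}
  local set_size=$(( stripe * replica )) base
  # Bricks must form whole stripe x replica sets.
  if (( bricks < set_size || bricks % set_size != 0 )); then
    echo "invalid: $bricks bricks do not form whole sets of $set_size" >&2
    return 1
  fi
  if (( stripe > 1 && replica > 1 )); then
    base="Striped-Replicate"
  elif (( stripe > 1 )); then
    base="Stripe"
  elif (( replica > 1 )); then
    base="Replicate"
  else
    echo "Distribute"            # plain distribution, no sets
    return 0
  fi
  # More than one set adds distribution across the sets.
  if (( bricks > set_size )); then
    echo "Distributed-$base"
  else
    echo "$base"
  fi
}
```

For example, `volume_type 4 1 2` matches 3.5 (replica 2 over 4 bricks) and prints Distributed-Replicate.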

II. Environment planning

Note: node1-node6 are the servers and node7-client is the client.

| OS | IP | Hostname | Disks (three per server) |
|---|---|---|---|
| CentOS 7.3 | 172.16.2.51 | node1 | sdb: 2G, sdc: 2G, sdd: 2G |
| CentOS 7.3 | 172.16.2.52 | node2 | sdb: 2G, sdc: 2G, sdd: 2G |
| CentOS 7.3 | 172.16.2.53 | node3 | sdb: 2G, sdc: 2G, sdd: 2G |
| CentOS 7.3 | 172.16.2.54 | node4 | sdb: 2G, sdc: 2G, sdd: 2G |
| CentOS 7.3 | 172.16.2.55 | node5 | sdb: 2G, sdc: 2G, sdd: 2G |
| CentOS 7.3 | 172.16.2.56 | node6 | sdb: 2G, sdc: 2G, sdd: 2G |
| CentOS 7.3 | 172.16.2.57 | node7-client | sda: 20G |

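1. Environment preparation (run on node1-node6)

1.1 Add three 2G disks to each of node1-node6. In this setup they are mounted at /glusterfs/sdb, /glusterfs/sdc and /glusterfs/sdd: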

[root@node1 ~]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/cl-root 18G 4.2G 14G 24% /

devtmpfs 473M 0 473M 0% /dev

tmpfs 489M 84K 489M 1% /dev/shm

tmpfs 489M 7.1M 482M 2% /run

tmpfs 489M 0 489M 0% /sys/fs/cgroup

/dev/sdd 2.0G 33M 2.0G 2% /glusterfs/sdd

/dev/sdc 2.0G 33M 2.0G 2% /glusterfs/sdc

/dev/sdb 2.0G 33M 2.0G 2% /glusterfs/sdb

/dev/sda1 297M 158M 140M 54% /boot

tmpfs 98M 16K 98M 1% /run/user/42

tmpfs 98M 0 98M 0% /run/user/0
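The article does not show how the three disks were formatted and mounted. The sketch below fills that gap under stated assumptions:
- The bricks use XFS. The 33M used on each empty 2.0G file system suggests this, but it is not stated.
- mkfs.xfs gets `-i size=512`, the inode size commonly recommended for GlusterFS bricks.
- Each disk is mounted at /glusterfs/DISK as in the df output.

The hypothetical `print_brick_prep` only prints the commands, so they can be reviewed before running them as root.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print (not run) the commands that would turn each
# given disk into a brick file system mounted at /glusterfs/<disk>.
# Assumes XFS with 512-byte inodes; destructive once the output is executed.
print_brick_prep() {
  local dev
  for dev in "$@"; do
    echo "mkfs.xfs -f -i size=512 /dev/$dev"
    echo "mkdir -p /glusterfs/$dev"
    echo "echo '/dev/$dev /glusterfs/$dev xfs defaults 0 0' >> /etc/fstab"
  done
  echo "mount -a"                      # mount everything listed in fstab
}
```

Review the output of `print_brick_prep sdb sdc sdd` first, then pipe it to `sh` on each of node1-node6. mkfs wipes whatever is on those disks.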

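1.2 Disable the firewall and SELinux, and synchronize the time

Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

Disable SELinux. The config file change (sed -i edits it in place) takes effect after a reboot. setenforce 0 switches the running system to permissive mode right away.

sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

setenforce 0

Synchronize the time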

ntpdate ntp.gwadar.cn
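The SELinux edit is easy to get wrong for two reasons:
- A pattern such as `s/=permissive/=disabled/` only matches one possible value. A default CentOS 7 install uses `enforcing`.
- Without `-i`, sed just prints the result instead of editing the file.

The sketch below rewrites whatever value is set and echoes the resulting line for checking. `disable_selinux_config` is a hypothetical name. The file path is a parameter so the sketch can be tried on a copy.

```shell
#!/usr/bin/env bash
# Set SELINUX=disabled in an SELinux config file and show the result.
# Matches any current value (enforcing or permissive); SELINUXTYPE is untouched.
disable_selinux_config() {
  local conf=${1:-/etc/selinux/config}
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$conf"   # -i: edit in place
  grep '^SELINUX=' "$conf"
}
```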

1.3 Host name resolution (/etc/hosts)

[root@node1 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.16.2.51 node1

172.16.2.52 node2

172.16.2.53 node3

172.16.2.54 node4

172.16.2.55 node5

172.16.2.56 node6
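Because the addresses are consecutive, the entries can be generated instead of typed. This sketch assumes the addressing in the planning table (172.16.2.51 for node1 onwards). `gen_hosts` is a hypothetical helper.

The same entries are presumably needed on node7-client too. The native client mounts by host name and then talks to the brick hosts directly.

```shell
#!/usr/bin/env bash
# Print /etc/hosts entries node1..nodeN, assuming consecutive IPs.
# Usage: gen_hosts COUNT [PREFIX] [FIRST_HOST_OCTET]
gen_hosts() {
  local count=$1 prefix=${2:-172.16.2} start=${3:-51} i
  for (( i = 1; i <= count; i++ )); do
    printf '%s.%d node%d\n' "$prefix" $(( start + i - 1 )) "$i"
  done
}
```

For example, run `gen_hosts 6 >> /etc/hosts` on each machine, after checking that the entries are not already present.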

1.4 Test network connectivity to all storage nodes

for i in {1..6}; do ping -c3 node$i &> /dev/null && echo "node$i up" || echo "node$i down"; done

1.5 Configure the EPEL repository

yum install http://mirrors.163.com/centos/7.3.1611/extras/x86_64/Packages/epel-release-7-9.noarch.rpm

1.6 Configure the GlusterFS yum repository (online repository)

vim /etc/yum.repos.d/gluster.repo

[root@node1 ~]# cat /etc/yum.repos.d/gluster.repo

[gluster]

name=gluster

baseurl=https://buildlogs.centos.org/centos/7/storage/x86_64/gluster-3.8/

gpgcheck=0

enabled=1
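The same repo file can be written non-interactively instead of with vim. `write_gluster_repo` is a hypothetical helper. Its target path is a parameter (default /etc/yum.repos.d/gluster.repo) so the sketch can be tried on a temporary file.

```shell
#!/usr/bin/env bash
# Write the GlusterFS 3.8 repo definition used in this article.
write_gluster_repo() {
  local target=${1:-/etc/yum.repos.d/gluster.repo}
  cat > "$target" <<'EOF'
[gluster]
name=gluster
baseurl=https://buildlogs.centos.org/centos/7/storage/x86_64/gluster-3.8/
gpgcheck=0
enabled=1
EOF
}
```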

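1.7 Install GlusterFS and rpcbind. rpcbind is an RPC service: for NFS sharing, it tells clients which port the server's NFS service uses. Put simply, it acts as a broker. samba is installed as well, presumably for CIFS access, although it is not used later in this article.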

yum install -y glusterfs-server samba rpcbind

systemctl start glusterd.service

systemctl enable glusterd.service

systemctl start rpcbind    # rpcbind is needed for mounting over NFS

systemctl enable rpcbind

systemctl status rpcbind

Run all of the above on node1-node6.

III. Gluster management

1. gluster command help

[root@node1 ~]# gluster peer help

peer detach {

peer help - Help command for peer

peer probe {

peer status - list status of peers

pool list - list all the nodes in the pool (including localhost)

2. Add GlusterFS nodes (running this on node1 is enough)

[root@node1 ~]# gluster peer probe node2

peer probe: success.

[root@node1 ~]# gluster peer probe node3

peer probe: success.

[root@node1 ~]# gluster peer probe node4

peer probe: success.
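Only node2-node4 are probed here, yet later volumes also use node5 and node6. Volume 3.4 fails for exactly that reason. The later status output does list node5 and node6, so they were evidently probed at some point.

The loop below probes every storage node from node1. Two parts of it are hypothetical conveniences:
- the `probe_all` function;
- the `GLUSTER` override. Setting `GLUSTER=echo` prints the commands instead of running them.

```shell
#!/usr/bin/env bash
# Probe every listed storage node except the one we are running on.
# GLUSTER can be overridden (e.g. GLUSTER=echo) for a dry run.
GLUSTER=${GLUSTER:-gluster}
probe_all() {
  local self=$1 node
  shift
  for node in "$@"; do
    [ "$node" = "$self" ] && continue   # a node does not probe itself
    $GLUSTER peer probe "$node"
  done
}
```

On node1: `probe_all node1 node{1..6}`.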

Check the status of the added nodes

[root@node1 ~]# gluster peer status

Number of Peers: 3

Hostname: node2

Uuid: 67c60312-a312-43d6-af77-87cbbc29e1aa

State: Peer in Cluster (Connected)

Hostname: node3

Uuid: d79c3a0b-585a-458d-b202-f88ac1439d0d

State: Peer in Cluster (Connected)

Hostname: node4

Uuid: 97094c6e-afc8-4cfb-9d26-616aedc55236

State: Peer in Cluster (Connected)

Remove a node from the storage pool (and add it back)

[root@node1 ~]# gluster peer detach node2

peer detach: success

[root@node1 ~]# gluster peer probe node2

peer probe: success.

3. Create volumes

3.1 Create a distributed volume

[root@node1 ~]# gluster volume create dis_vol \

> node1:/glusterfs/sdb/dv1 \

> node2:/glusterfs/sdb/dv1 \

> node3:/glusterfs/sdb/dv1

volume create: dis_vol: success: please start the volume to access data

View the distributed volume

[root@node1 ~]# gluster volume info dis_vol

3.2 Create a replicated volume

[root@node1 ~]# gluster volume create rep_vol replica 3 \

> node1:/glusterfs/sdb/rv2 \

> node2:/glusterfs/sdb/rv2 \

> node3:/glusterfs/sdb/rv2

volume create: rep_vol: success: please start the volume to access data

View the replicated volume

[root@node1 ~]# gluster volume info rep_vol

3.3 Create a striped volume

[root@node1 ~]# gluster volume create str_vol stripe 3 \

> node1:/glusterfs/sdb/sv3 \

> node2:/glusterfs/sdb/sv3 \

> node3:/glusterfs/sdb/sv3

volume create: str_vol: success: please start the volume to access data

View the striped volume: gluster volume info str_vol

3.4 Create a distributed striped volume

[root@node1 ~]# gluster volume create dir_str_vol stripe 4 \

> node1:/glusterfs/sdb/dsv4 \

> node2:/glusterfs/sdb/dsv4 \

> node3:/glusterfs/sdb/dsv4 \

> node4:/glusterfs/sdb/dsv4 \

> node5:/glusterfs/sdb/dsv4 \

> node6:/glusterfs/sdb/dsv4 \

> node1:/glusterfs/sdc/dsv4 \

> node2:/glusterfs/sdc/dsv4

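volume create: dir_str_vol: failed: Host node5 is not in ' Peer in Cluster' state

This fails because node5 (like node6) had not yet been added to the trusted storage pool; step 2 only probed node2-node4. Probe node5 and node6 with gluster peer probe first, then rerun the command. With 8 bricks and stripe 4, it would create a 2 x 4 distributed striped volume.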

3.5 Create a distributed replicated volume

[root@node1 ~]# gluster volume create dir_rep_vol replica 2 \

> node2:/glusterfs/sdb/drv5 \

> node1:/glusterfs/sdb/drv5 \

> node3:/glusterfs/sdb/drv5 \

> node4:/glusterfs/sdb/drv5

volume create: dir_rep_vol: success: please start the volume to access data
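In a replicated volume, the order of bricks on the command line matters. With `replica N`, each run of N consecutive bricks forms one replica set, and files are then distributed across the sets. For dir_rep_vol this pairs node2 with node1 and node3 with node4, so each pair spans two servers.

The sketch below prints the grouping. `replica_sets` is a hypothetical helper, not a gluster command.

```shell
#!/usr/bin/env bash
# Print the replica sets that `replica N` would form from the brick list:
# every N consecutive bricks make up one set.
# Usage: replica_sets N BRICK...
replica_sets() {
  local n=$1 brick count=0 idx=1 members=""
  shift
  for brick in "$@"; do
    members="${members:+$members }$brick"
    count=$(( count + 1 ))
    if (( count == n )); then
      echo "set $idx: $members"
      idx=$(( idx + 1 )); count=0; members=""
    fi
  done
  if [ -n "$members" ]; then
    echo "error: brick count is not a multiple of $n" >&2
    return 1
  fi
}
```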

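3.6 Create a distributed striped replicated volume

[root@node1 ~]# gluster volume create dis_str_rep_vol stripe 2 replica 2 \

> node1:/glusterfs/sdb/dsrv6 \

> node2:/glusterfs/sdb/dsrv6 \

> node3:/glusterfs/sdb/dsrv6 \

> node4:/glusterfs/sdb/dsrv6

volume create: dis_str_rep_vol: success: please start the volume to access data

Note: 4 bricks are exactly one stripe 2 × replica 2 set, so this command actually creates a striped replicated volume, the same layout as 3.7. A distributed striped replicated volume needs a multiple of 4 bricks greater than 4, for example 8.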

3.7 Create a striped replicated volume

[root@node1 ~]# gluster volume create str_rep_vol stripe 2 replica 2 \

> node1:/glusterfs/sdb/srv7 \

> node2:/glusterfs/sdb/srv7 \

> node3:/glusterfs/sdb/srv7 \

> node4:/glusterfs/sdb/srv7

volume create: str_rep_vol: success: please start the volume to access data

3.8 Create a dispersed volume (rarely used)

[root@node1 ~]# gluster volume create disperse_vol disperse 4 \

> node1:/glusterfs/sdb/dv8 \

> node2:/glusterfs/sdb/dv8 \

> node3:/glusterfs/sdb/dv8 \

> node4:/glusterfs/sdb/dv8

There isn't an optimal redundancy value for this configuration. Do you want to create the volume with redundancy 1 ? (y/n) y

volume create: disperse_vol: success: please start the volume to access data

View the volume information

[root@node1 ~]# gluster volume info disperse_vol

Volume Name: disperse_vol

Type: Disperse

Volume ID: 8be1cd6f-49f8-4b11-bcfb-ef5a4f22e224

Status: Created

Snapshot Count: 0

Number of Bricks: 1 x (3 + 1) = 4

Transport-type: tcp

Bricks:

Brick1: node1:/glusterfs/sdb/dv8

Brick2: node2:/glusterfs/sdb/dv8

Brick3: node3:/glusterfs/sdb/dv8

Brick4: node4:/glusterfs/sdb/dv8

Options Reconfigured:

transport.address-family: inet

performance.readdir-ahead: on

nfs.disable: on
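The line `Number of Bricks: 1 x (3 + 1) = 4` means one disperse set of 3 data bricks plus 1 redundancy brick. The layout rules are:
- The redundancy must be at least 1.
- The redundancy must be less than half the disperse count.
- To our knowledge, GlusterFS treats a layout as optimal when the data brick count (bricks minus redundancy) is a power of two.

That last rule would explain why the prompt above appears for 4 bricks but not for the 3-brick sets of 3.9.

The sketch below does that arithmetic. `disperse_layout` is hypothetical; it takes the total bricks, the disperse count and the redundancy.

```shell
#!/usr/bin/env bash
# Describe a (distributed-)dispersed layout like `gluster volume info` does,
# and whether the data brick count per set is a power of two.
# Usage: disperse_layout TOTAL_BRICKS DISPERSE_COUNT REDUNDANCY
disperse_layout() {
  local bricks=$1 disperse=$2 redundancy=$3
  if (( redundancy < 1 || disperse <= 2 * redundancy || bricks % disperse != 0 )); then
    echo "invalid layout" >&2
    return 1
  fi
  local data=$(( disperse - redundancy )) optimal=no
  (( (data & (data - 1)) == 0 )) && optimal=yes   # power-of-two check
  echo "$(( bricks / disperse )) x ($data + $redundancy) = $bricks, optimal: $optimal"
}
```

`disperse_layout 4 4 1` corresponds to disperse_vol here. `disperse_layout 6 3 1` corresponds to disperse_vol_3 in 3.9.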

3.9 Create a distributed dispersed volume (rarely used)

[root@node1 ~]# gluster volume create disperse_vol_3 disperse 3 \

> node1:/glusterfs/sdb/d9 \

> node2:/glusterfs/sdb/d9 \

> node3:/glusterfs/sdb/d9 \

> node4:/glusterfs/sdb/d9 \

> node5:/glusterfs/sdb/d9 \

> node6:/glusterfs/sdb/d9

volume create: disperse_vol_3: success: please start the volume to access data

4. View volumes

4.1 View the details of a single volume

[root@node1 ~]# gluster volume info disperse_vol_3

Volume Name: disperse_vol_3

Type: Distributed-Disperse

Volume ID: 3065d729-8a4f-4717-b8dc-cd73950d8ef7

Status: Created

Snapshot Count: 0

Number of Bricks: 2 x (2 + 1) = 6

Transport-type: tcp

Bricks:

Brick1: node1:/glusterfs/sdb/d9

Brick2: node2:/glusterfs/sdb/d9

Brick3: node3:/glusterfs/sdb/d9

Brick4: node4:/glusterfs/sdb/d9

Brick5: node5:/glusterfs/sdb/d9

Brick6: node6:/glusterfs/sdb/d9

Options Reconfigured:

transport.address-family: inet

performance.readdir-ahead: on

nfs.disable: on

4.2 View the status of all volumes

[root@node1 ~]# gluster volume status

Status of volume: dir_rep_vol

Gluster process TCP Port RDMA Port Online Pid

------------------------------------------------------------------------------

Brick node2:/glusterfs/sdb/drv5 49152 0 Y 17676

Brick node1:/glusterfs/sdb/drv5 49152 0 Y 16821

Brick node3:/glusterfs/sdb/drv5 49152 0 Y 16643

Brick node4:/glusterfs/sdb/drv5 49152 0 Y 17365

Self-heal Daemon on localhost N/A N/A Y 16841

Self-heal Daemon on node3 N/A N/A Y 16663

Self-heal Daemon on node6 N/A N/A Y 16557

Self-heal Daemon on node2 N/A N/A N N/A

Self-heal Daemon on node5 N/A N/A Y 15374

Self-heal Daemon on node4 N/A N/A Y 17386

Task Status of Volume dir_rep_vol

------------------------------------------------------------------------------

There are no active volume tasks

Volume dis_str_rep_vol is not started

Volume dis_vol is not started

Volume disperse_vol is not started

Volume disperse_vol_3 is not started

Volume rep_vol is not started

Volume str_rep_vol is not started

Volume str_vol is not started

5. Start, stop and delete a volume (mamm-volume is a placeholder name)

$ gluster volume start mamm-volume

$ gluster volume stop mamm-volume

$ gluster volume delete mamm-volume
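The stop and delete commands ask for y/n confirmation. In scripts, the gluster CLI's `--mode=script` option answers the prompts automatically. `drop_volume` and the `GLUSTER` override (set it to `echo` for a dry run) are hypothetical.

```shell
#!/usr/bin/env bash
# Stop and then delete a volume without interactive prompts.
GLUSTER=${GLUSTER:-gluster}
drop_volume() {
  local vol=$1
  $GLUSTER --mode=script volume stop "$vol" &&
    $GLUSTER --mode=script volume delete "$vol"
}
```

Deleting a volume does not remove the files or the GlusterFS extended attributes from the brick directories. Reusing a brick path for a new volume therefore usually requires cleaning it first.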

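6. Expand and shrink volumes

$ gluster volume add-brick mamm-volume [strip|repli

$ gluster volume remove-brick mamm-volume [repl

When expanding or shrinking a volume, the number of bricks added or removed must also fit the volume type:
- For a replicated or striped volume, it must be a multiple of the replica or stripe count.
- For a striped replicated volume, it must be a multiple of stripe × replica.
- For a dispersed volume, it must be a multiple of the disperse count.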
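This rule can be checked before running add-brick or remove-brick. The number of bricks must be a positive multiple of the set size: the replica × stripe count, or the disperse count. `check_brick_delta` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Check that adding/removing DELTA bricks keeps whole sets of SET_SIZE.
# Usage: check_brick_delta DELTA SET_SIZE
check_brick_delta() {
  local delta=$1 set_size=$2
  if (( delta > 0 && delta % set_size == 0 )); then
    echo "ok: $(( delta / set_size )) set(s) of $set_size"
  else
    echo "invalid: $delta is not a multiple of $set_size" >&2
    return 1
  fi
}
```

For dir_rep_vol (replica 2) in the example that follows, `check_brick_delta 4 2` confirms that the 4 new bricks form 2 new replica pairs.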

Status before expansion

[root@node1 ~]# gluster volume status dir_rep_vol

Status of volume: dir_rep_vol

Gluster process TCP Port RDMA Port Online Pid

------------------------------------------------------------------------------

Brick node2:/glusterfs/sdb/drv5 49153 0 Y 2409

Brick node1:/glusterfs/sdb/drv5 49153 0 Y 1162

Brick node3:/glusterfs/sdb/drv5 49153 0 Y 1140

Brick node4:/glusterfs/sdb/drv5 49153 0 Y 1430

Self-heal Daemon on localhost N/A N/A Y 2560

Self-heal Daemon on node3 N/A N/A Y 2507

Self-heal Daemon on node6 N/A N/A Y 2207

Self-heal Daemon on node5 N/A N/A Y 2749

Self-heal Daemon on node4 N/A N/A Y 2787

Self-heal Daemon on node2 N/A N/A Y 2803

Task Status of Volume dir_rep_vol

------------------------------------------------------------------------------

Expand the volume

[root@node1 ~]# gluster volume add-brick dir_rep_vol \

> node1:/glusterfs/sdb/drv6 \

> node2:/glusterfs/sdb/drv6 \

> node3:/glusterfs/sdb/drv6 \

> node4:/glusterfs/sdb/drv6

volume add-brick: success

Status after expansion

[root@node1 ~]# gluster volume status dir_rep_vol

Status of volume: dir_rep_vol

Gluster process TCP Port RDMA Port Online Pid

------------------------------------------------------------------------------

Brick node2:/glusterfs/sdb/drv5 49153 0 Y 2409

Brick node1:/glusterfs/sdb/drv5 49153 0 Y 1162

Brick node3:/glusterfs/sdb/drv5 49153 0 Y 1140

Brick node4:/glusterfs/sdb/drv5 49153 0 Y 1430

Brick node1:/glusterfs/sdb/drv6 49154 0 Y 5248

Brick node2:/glusterfs/sdb/drv6 49154 0 Y 5093

Brick node3:/glusterfs/sdb/drv6 49154 0 Y 5017

Brick node4:/glusterfs/sdb/drv6 49154 0 Y 5103

Self-heal Daemon on localhost N/A N/A Y 5268

Self-heal Daemon on node3 N/A N/A Y 5037

Self-heal Daemon on node6 N/A N/A Y 5041

Self-heal Daemon on node5 N/A N/A Y 5055

Self-heal Daemon on node4 N/A N/A Y 5132

Self-heal Daemon on node2 N/A N/A N N/A

Task Status of Volume dir_rep_vol

------------------------------------------------------------------------------

There are no active volume tasks
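After add-brick the new bricks are online, but the volume is not yet balanced. Existing files stay where they are, and existing directories do not hash to the new bricks until the volume is rebalanced.

The sketch below starts a rebalance and shows its status. `rebalance_volume` and the `GLUSTER` override are hypothetical. `gluster volume rebalance <VOLNAME> start|status` are standard sub-commands.

```shell
#!/usr/bin/env bash
# Start a rebalance after add-brick and print its status.
# GLUSTER=echo gives a dry run.
GLUSTER=${GLUSTER:-gluster}
rebalance_volume() {
  local vol=$1
  $GLUSTER volume rebalance "$vol" start &&
    $GLUSTER volume rebalance "$vol" status
}
```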

Shrink the volume

[root@node1 ~]# gluster volume remove-brick dir_rep_vol \

> node1:/glusterfs/sdb/drv5 \

> node2:/glusterfs/sdb/drv5 \

> node3:/glusterfs/sdb/drv5 \

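> node4:/glusterfs/sdb/drv5 force

Removing brick(s) can result in data loss. Do you want to Continue? (y/n) y

volume remove-brick commit force: success

With force, the bricks are removed immediately without migrating their data, hence the data-loss warning. Files stored only on the drv5 bricks are no longer reachable through the volume (they remain in the brick directories).

To keep the data, use this sequence instead:
1) Run gluster volume remove-brick dir_rep_vol BRICK... start.
2) Check progress with the same command ending in status.
3) Finish with the same command ending in commit.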

[root@node1 ~]# gluster volume status dir_rep_vol

Status of volume: dir_rep_vol

Gluster process TCP Port RDMA Port Online Pid

------------------------------------------------------------------------------

Brick node1:/glusterfs/sdb/drv6 49154 0 Y 5248

Brick node2:/glusterfs/sdb/drv6 49154 0 Y 5093

Brick node3:/glusterfs/sdb/drv6 49154 0 Y 5017

Brick node4:/glusterfs/sdb/drv6 49154 0 Y 5103

Self-heal Daemon on localhost N/A N/A Y 5591

Self-heal Daemon on node4 N/A N/A Y 5377

Self-heal Daemon on node6 N/A N/A Y 5291

Self-heal Daemon on node5 N/A N/A Y 5305

Self-heal Daemon on node2 N/A N/A Y 5341

Self-heal Daemon on node3 N/A N/A Y 5282

Task Status of Volume dir_rep_vol

------------------------------------------------------------------------------

There are no active volume tasks
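Because `force` skips data migration, a safer way to shrink is:
1. `remove-brick ... start`
2. `status`, repeated until migration finishes
3. `commit`

The sketch below runs that sequence. Some parts are assumptions:
- `remove_bricks_safely`, `GLUSTER` and `POLL_SECONDS` are hypothetical.
- Matching the strings "in progress" and "failed" in the status output is an assumption to verify against your version's output.

```shell
#!/usr/bin/env bash
# Hypothetical helper: shrink a volume with data migration instead of "force".
# GLUSTER=echo gives a dry run; POLL_SECONDS sets the polling interval.
GLUSTER=${GLUSTER:-gluster}
POLL_SECONDS=${POLL_SECONDS:-10}
remove_bricks_safely() {
  local vol=$1 out
  shift                                   # remaining arguments are the bricks
  $GLUSTER volume remove-brick "$vol" "$@" start || return 1
  while :; do
    out=$($GLUSTER volume remove-brick "$vol" "$@" status) || return 1
    # Assumption: a running migration is reported as "in progress".
    grep -q "in progress" <<< "$out" || break
    sleep "$POLL_SECONDS"
  done
  # Assumption: a failed migration is reported as "failed"; never commit then.
  if grep -q "failed" <<< "$out"; then
    echo "migration failed on $vol; not committing" >&2
    return 1
  fi
  $GLUSTER --mode=script volume remove-brick "$vol" "$@" commit
}
```

For a replicated volume, pass whole replica sets, for example all four drv5 bricks of dir_rep_vol.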

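7. Migrate (replace) bricks

Syntax: gluster volume replace-brick VOLNAME SOURCE-BRICK NEW-BRICK commit force. The volume must be started, as the first attempt below shows.

Example: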

[root@node1 ~]# gluster volume replace-brick rep_vol node2:/glusterfs/sdb/rv2 node2:/glusterfs/sdb/rv3 commit force

volume replace-brick: failed: volume: rep_vol is not started

[root@node1 ~]# gluster volume start rep_vol

volume start: rep_vol: success

[root@node1 ~]# gluster volume replace-brick rep_vol node2:/glusterfs/sdb/rv2 node2:/glusterfs/sdb/rv3 commit force

volume replace-brick: success: replace-brick commit force operation successful
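For a replicated volume like rep_vol, `commit force` swaps the brick immediately, and self-heal then fills the new, empty brick.

The sketch below triggers a full heal right after the replacement. `replace_and_heal` and the `GLUSTER` override are hypothetical. `gluster volume heal <VOLNAME> full` is a standard sub-command.

```shell
#!/usr/bin/env bash
# Replace a brick, then ask the self-heal daemon to repopulate it.
# GLUSTER=echo gives a dry run.
GLUSTER=${GLUSTER:-gluster}
replace_and_heal() {
  local vol=$1 old=$2 new=$3
  $GLUSTER volume replace-brick "$vol" "$old" "$new" commit force &&
    $GLUSTER volume heal "$vol" full
}
```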

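#In older GlusterFS releases, migration was a sequence of steps. Suppose we want to replace brick3 in mamm-volume with brick5.

#Note: these start/pause/abort/status/commit sub-commands belong to older releases. Newer ones, including the 3.8 series installed here, only accept commit force as in the example above.

#Start the migration

$ gluster volume replace-brick mamm-volume node3:/exp3 node5:/exp5 start

#Pause the migration

$ gluster volume replace-brick mamm-volume node3:/exp3 node5:/exp5 pause

#Abort the migration

$ gluster volume replace-brick mamm-volume node3:/exp3 node5:/exp5 abort

#Check the migration status

$ gluster volume replace-brick mamm-volume node3:/exp3 node5:/exp5 status

#Commit once the migration has finished

$ gluster volume replace-brick mamm-volume node3:/exp3 node5:/exp5 commit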

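IV. Client management

1. Installation

[root@node7-client ~]# yum install glusterfs glusterfs-fuse attr -y

2. Mount with the native GlusterFS client (FUSE)

Mount (takes effect immediately but does not survive a reboot; create the mount point first with mkdir -p /gfs_test)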

[root@node7 ~]# mount -t glusterfs node2:/rep_vol /gfs_test/

[root@node7 ~]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/cl-root 18G 4.1G 14G 24% /

devtmpfs 473M 0 473M 0% /dev

tmpfs 489M 84K 489M 1% /dev/shm

tmpfs 489M 7.1M 482M 2% /run

tmpfs 489M 0 489M 0% /sys/fs/cgroup

/dev/sda1 297M 156M 142M 53% /boot

tmpfs 98M 16K 98M 1% /run/user/42

tmpfs 98M 0 98M 0% /run/user/0

node2:/rep_vol 2.0G 33M 2.0G 2% /rep

node2:/rep_vol 2.0G 33M 2.0G 2% /gfs_test

Mount (persistent, via /etc/fstab)

[root@node7 ~]# echo "node2:/rep_vol /gfs_test glusterfs defaults,_netdev 0 0" >> /etc/fstab

[root@node7 ~]# mount -a
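With the fstab line above, the client only asks node2 for the volume file at mount time. If node2 is down, the mount fails even though the other replicas are up.

mount.glusterfs is assumed here to accept a `backup-volfile-servers` option that lists fallback servers separated by colons. Check that your mount.glusterfs version supports it. The sketch below builds such a line; `gluster_fstab_line` is hypothetical.

```shell
#!/usr/bin/env bash
# Build an /etc/fstab line for a GlusterFS FUSE mount, with optional
# fallback volfile servers (assumed option: backup-volfile-servers).
# Usage: gluster_fstab_line SERVER VOLUME MOUNTPOINT [BACKUP_SERVER...]
gluster_fstab_line() {
  local server=$1 vol=$2 mnt=$3
  shift 3
  local opts="defaults,_netdev"
  if (( $# > 0 )); then
    local IFS=:                       # "$*" below joins the servers with ':'
    opts+=",backup-volfile-servers=$*"
  fi
  echo "$server:/$vol $mnt glusterfs $opts 0 0"
}
```

For example: `gluster_fstab_line node2 rep_vol /gfs_test node1 node3 >> /etc/fstab`.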

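3. Mount via NFS

Mount (takes effect immediately, not persistent)

[root@node7 ~]# mount -t nfs node3:/dis_vol /gfs_nfs

mount.nfs: requested NFS version or transport protocol is not supported

Solution:

1) Install nfs-utils and rpcbind on the client: yum install -y nfs-utils rpcbind

2) Enable NFS access on the volume. In the volume info below, nfs.disable: on shows that Gluster's built-in NFS server is turned off for dis_vol: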

[root@node1 ~]# gluster volume info dis_vol

Volume Name: dis_vol

Type: Distribute

Volume ID: c501f4ad-5a54-4835-b163-f508aa1c07ba

Status: Started

Snapshot Count: 0

Number of Bricks: 3

Transport-type: tcp

Bricks:

Brick1: node1:/glusterfs/sdb/dv1

Brick2: node2:/glusterfs/sdb/dv1

Brick3: node3:/glusterfs/sdb/dv1

Options Reconfigured:

transport.address-family: inet

performance.readdir-ahead: on

nfs.disable: on

[root@node1 ~]# gluster volume set dis_vol nfs.disable off

volume set: success
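Before mounting over NFS, it can help to confirm that the setting took effect. The sketch below reads `gluster volume info` output on stdin and reports whether `nfs.disable: on` is present. `nfs_status` is hypothetical.

Gluster's built-in NFS server speaks NFSv3 only. If the client still reports an unsupported version, forcing it with `mount -t nfs -o vers=3 node3:/dis_vol /gfs_nfs` is worth trying.

```shell
#!/usr/bin/env bash
# Read `gluster volume info VOL` output on stdin and report whether the
# built-in NFS server is disabled for that volume.
nfs_status() {
  if grep -qx '[[:space:]]*nfs\.disable:[[:space:]]*on[[:space:]]*'; then
    echo "nfs disabled"
  else
    echo "nfs not disabled"
  fi
}
```

Usage: `gluster volume info dis_vol | nfs_status`.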

[root@node7 ~]# mount -t nfs node3:/dis_vol /gfs_nfs

[root@node7 ~]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/cl-root 18G 4.2G 14G 24% /

devtmpfs 473M 0 473M 0% /dev

tmpfs 489M 84K 489M 1% /dev/shm

tmpfs 489M 7.1M 482M 2% /run

tmpfs 489M 0 489M 0% /sys/fs/cgroup

/dev/sda1 297M 156M 142M 53% /boot

tmpfs 98M 16K 98M 1% /run/user/42

tmpfs 98M 0 98M 0% /run/user/0

node3:/dis_vol 6.0G 97M 5.9G 2% /gfs_nfs

Permanent mount

[root@node7 ~]# echo "node3:/dis_vol /gfs_nfs nfs defaults,_netdev 0 0" >> /etc/fstab

[root@node7 ~]# mount -a

[root@node7 ~]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/cl-root 18G 4.1G 14G 24% /

devtmpfs 473M 0 473M 0% /dev

tmpfs 489M 84K 489M 1% /dev/shm

tmpfs 489M 7.1M 482M 2% /run

tmpfs 489M 0 489M 0% /sys/fs/cgroup

/dev/sda1 297M 156M 142M 53% /boot

tmpfs 98M 12K 98M 1% /run/user/42

tmpfs 98M 0 98M 0% /run/user/0

node3:/dis_vol 6.0G 97M 5.9G 2% /gfs_nfs