Example page: https://python123.io/ws/demo....

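```python
>>> import requests
>>> r = requests.get('https://python123.io/ws/demo.html')
>>> r.text
'<html><head><title>This is a python demo page</title></head>\r\n<body>\r\n<p class="title"><b>The demo python introduces several python courses.</b></p>\r\n<p class="course">Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:\r\n<a href="http://www.icourse163.org/course/BIT-268001" class="py1" id="link1">Basic Python</a> and <a href="http://www.icourse163.org/course/BIT-1001870001" class="py2" id="link2">Advanced Python</a>.</p>\r\n</body></html>'
>>> demo = r.text
>>> from bs4 import BeautifulSoup
>>> soup = BeautifulSoup(demo, 'html.parser')
>>> print(soup.prettify())
<html>
 <head>
  <title>
   This is a python demo page
  </title>
 </head>
 <body>
  <p class="title">
   <b>
    The demo python introduces several python courses.
   </b>
  </p>
  <p class="course">
   Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:
   <a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">
    Basic Python
   </a>
   and
   <a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">
    Advanced Python
   </a>
   .
  </p>
 </body>
</html>
```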

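The soup object's `prettify()` method pretty-prints the HTML page. Each tag and each string goes on its own line, indented by nesting depth.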
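You can also try `prettify()` without a network request by parsing an inline HTML string. This minimal sketch uses a small made-up snippet instead of the demo page:

```python
from bs4 import BeautifulSoup

# A small hypothetical snippet; any HTML string is parsed the same way.
html = "<p class='title'><b>Hello</b></p>"
soup = BeautifulSoup(html, "html.parser")

pretty = soup.prettify()
print(pretty)

# prettify() puts every tag and string on its own line, indented by depth.
lines = [line.strip() for line in pretty.splitlines() if line.strip()]
print(lines)
```

Note that the output normalizes attribute quotes to double quotes, whatever the input used.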

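Basic elements of the BeautifulSoup library

Anatomy of an HTML element:

`<p class="title"><b>The demo python introduces several python courses.</b></p>`

In the snippet above, `<p>..</p>` is a tag (Tag), and the tag's name is `p`. The `class="title"` part of the `p` tag is an attribute (Attributes). Attributes are made up of key-value pairs; here the key is `class` and the value is `title`.

A `BeautifulSoup` object corresponds to an entire HTML document, that is, the whole tag tree.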

Parsers supported by BeautifulSoup

Each of the following parsers can parse an HTML document:

| Parser | Usage | Requirement |
|---|---|---|
| Python's built-in HTML parser | `BeautifulSoup(mk, 'html.parser')` | Install the bs4 library (no extra package) |
| lxml's HTML parser | `BeautifulSoup(mk, 'lxml')` | `pip install lxml` |
| lxml's XML parser | `BeautifulSoup(mk, 'xml')` | `pip install lxml` |
| html5lib parser | `BeautifulSoup(mk, 'html5lib')` | `pip install html5lib` |
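Only `html.parser` is guaranteed to be available, so a script may want to prefer lxml and fall back when lxml is missing. This is a minimal sketch of that idea. The helper name `make_soup` is hypothetical. It relies on the fact that bs4 raises `FeatureNotFound` when the requested parser is not installed.

```python
from bs4 import BeautifulSoup, FeatureNotFound


def make_soup(markup):
    """Hypothetical helper: parse with lxml if installed, else html.parser."""
    try:
        return BeautifulSoup(markup, "lxml")
    except FeatureNotFound:
        # lxml is not installed; the built-in parser needs no extra package.
        return BeautifulSoup(markup, "html.parser")


soup = make_soup("<p>Hello <b>world</b></p>")
print(soup.p.b.string)
```

Different parsers can build slightly different trees from the same markup. For example, lxml wraps a fragment in `<html><body>`. Searching by tag name, as above, works either way.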

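Basic elements of the BeautifulSoup class

| Element | Description |
|---|---|
| Tag | A tag, the most basic unit of information organization, opened with `<>` and closed with `</>` |
| Name | The tag's name; the name of `<p>..</p>` is `'p'`. Format: `<tag>.name` |
| Attributes | The tag's attributes, organized as a dict. Format: `<tag>.attrs` |
| NavigableString | The non-attribute string inside a tag, i.e. the string in `<>..</>`. Format: `<tag>.string` |
| Comment | The comment part of a string inside a tag; a special Comment type |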
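This minimal sketch shows the element types in the table on a small made-up snippet. One `<p>` tag contains a comment and a `<b>` tag. The snippet is an assumption chosen for illustration.

```python
from bs4 import BeautifulSoup
from bs4.element import Comment, NavigableString, Tag

html = '<p class="title"><!--a note--><b>Hello</b></p>'
soup = BeautifulSoup(html, "html.parser")

p = soup.p
print(type(p).__name__, p.name, p.attrs)  # a Tag, its Name and its Attributes

comment, b = p.contents                   # children: a Comment, then the <b> Tag
print(type(comment).__name__, str(comment))
print(type(b.string).__name__, str(b.string))
```

`Comment` is a subclass of `NavigableString`. A check like `isinstance(s, NavigableString)` therefore does not by itself separate comments from ordinary text.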

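```python
>>> tag = soup.a
>>> tag
<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a>
```

Every tag in the soup can be accessed as `soup.<tag>`. When the document contains several tags with the same name, only the first one is returned.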

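Accessing a tag's name:

```python
>>> tag.name
'a'
>>> tag.parent.name
'p'
>>> tag.parent.parent.name
'body'
```

Accessing a tag's attributes: `.attrs` always returns a dict, whether or not the tag has any attributes. A tag with no attributes gives an empty dict.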

```python
>>> tag.attrs
{'href': 'http://www.icourse163.org/course/BIT-268001', 'class': ['py1'], 'id': 'link1'}
>>> tag.attrs['href']
'http://www.icourse163.org/course/BIT-268001'
>>> tag.attrs['class']
['py1']
>>> type(tag.attrs)
<class 'dict'>
>>> type(tag)
<class 'bs4.element.Tag'>
```
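Besides `.attrs`, a tag supports dict-style lookups directly. This minimal sketch contrasts `tag[...]`, which raises `KeyError` for a missing attribute, with `tag.get(...)`, which returns `None`. It also shows that `class` is parsed as a list of values. The markup and the `https://example.com` URL are placeholders.

```python
from bs4 import BeautifulSoup

html = '<a href="https://example.com" class="py1 extra" id="link1">Demo</a><b>plain</b>'
soup = BeautifulSoup(html, "html.parser")
a, b = soup.a, soup.b

print(a["href"])        # dict-style access; raises KeyError if the attribute is missing
print(a.get("title"))   # .get() returns None for a missing attribute
print(a["class"])       # class is multi-valued, so its value is a list
print(b.attrs)          # a tag without attributes still has a dict (empty)
```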

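Accessing a NavigableString:

```python
>>> tag.string
'Basic Python'
>>> soup.p
<p class="title"><b>The demo python introduces several python courses.</b></p>
>>> soup.p.string
'The demo python introduces several python courses.'
>>> type(soup.p.string)  # not an ordinary str
<class 'bs4.element.NavigableString'>
```

Accessing a Comment:

```python
>>> newsoup = BeautifulSoup("<b><!--This is a comment--></b><p>This is not a comment</p>", 'html.parser')
>>> newsoup.b.string
'This is a comment'
>>> type(newsoup.b.string)
<class 'bs4.element.Comment'>
>>> newsoup.p.string
'This is not a comment'
>>> type(newsoup.p.string)
<class 'bs4.element.NavigableString'>
```

`.string` returns the comment text without the `<!-- -->` markers, so a comment looks just like ordinary text. If you do not need comments when analyzing text, check each string's type to filter them out.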
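Here is a minimal sketch of that type check, reusing the markup from the Comment example. `find_all(string=True)` returns every string node, comments included. The `isinstance` test then drops the `Comment` objects.

```python
from bs4 import BeautifulSoup
from bs4.element import Comment

html = "<b><!--This is a comment--></b><p>This is not a comment</p>"
soup = BeautifulSoup(html, "html.parser")

all_strings = soup.find_all(string=True)               # includes the Comment
texts = [s for s in all_strings if not isinstance(s, Comment)]
print(texts)
```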

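Ways to traverse HTML:

1. Downward traversal, from the root node toward the leaf nodes
2. Upward traversal, from the leaf nodes toward the root node
3. Parallel (sibling) traversal, between nodes at the same level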

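Downward traversal:

| Attribute | Description |
|---|---|
| .contents | List of child nodes: stores all children of `<tag>` in a list |
| .children | Iterator over child nodes, like .contents; used to loop over the children |
| .descendants | Iterator over all descendant nodes (children, grandchildren, and so on); used to loop over them |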
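To make the difference between the three attributes concrete, this minimal sketch runs them on a small made-up `<div>`. `.contents` and `.children` yield the same direct children. `.descendants` walks the whole subtree depth-first, and that includes string nodes.

```python
from bs4 import BeautifulSoup
from bs4.element import NavigableString, Tag

html = "<div><p>One <b>two</b></p><p>three</p></div>"
soup = BeautifulSoup(html, "html.parser")
div = soup.div

contents = div.contents               # list of direct children
children = list(div.children)         # same nodes, produced by an iterator
descendants = list(div.descendants)   # every node below <div>, depth-first

print(len(contents), len(descendants))
print([type(n).__name__ for n in descendants])
```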

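```python
>>> soup.body
<body>
<p class="title"><b>The demo python introduces several python courses.</b></p>
<p class="course">Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:
<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a> and <a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>.</p>
</body>
>>> soup.body.contents
['\n', <p class="title"><b>The demo python introduces several python courses.</b></p>, '\n', <p class="course">Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:
<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a> and <a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>.</p>, '\n']
>>> len(soup.body.contents)
5
```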

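```python
>>> for child in soup.body.children:
...     print(child)
...
<p class="title"><b>The demo python introduces several python courses.</b></p>
<p class="course">Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:
<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a> and <a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>.</p>
>>> for child in soup.body.descendants:
...     print(child)
...
<p class="title"><b>The demo python introduces several python courses.</b></p>
<b>The demo python introduces several python courses.</b>
The demo python introduces several python courses.
<p class="course">Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:
<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a> and <a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>.</p>
Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:
<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a>
Basic Python
 and
<a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>
Advanced Python
.
```

Upward traversal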

| Attribute | Description |
|---|---|
| .parent | The node's parent tag |
| .parents | Iterator over the node's ancestor tags; used to loop over the ancestors |
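This minimal sketch collects a node's ancestors with `.parents` on a small made-up document. The walk stops at the `BeautifulSoup` object itself, whose name is `'[document]'` and whose own `.parent` is `None`.

```python
from bs4 import BeautifulSoup

html = "<html><body><p><a href='#'>link</a></p></body></html>"
soup = BeautifulSoup(html, "html.parser")

# .parents walks upward from <a> to the BeautifulSoup object.
ancestors = [parent.name for parent in soup.a.parents]
print(ancestors)
print(soup.parent)  # the document root has no parent
```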

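```python
>>> soup.title.parent
<head><title>This is a python demo page</title></head>
>>> soup.html.parent  # the parent of <html> is the BeautifulSoup object itself
<html><head><title>This is a python demo page</title></head>
<body>
<p class="title"><b>The demo python introduces several python courses.</b></p>
<p class="course">Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:
<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a> and <a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>.</p>
</body></html>
>>> soup.parent  # None: the document root has no parent, so nothing is printed
>>> for parent in soup.a.parents:
...     if parent is None:
...         print(parent)
...     else:
...         print(parent.name)
...
p
body
html
[document]
```

Parallel (sibling) traversal of the tag tree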

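| Attribute | Description |
|---|---|
| .next_sibling | The next sibling node in HTML document order |
| .previous_sibling | The previous sibling node in HTML document order |
| .next_siblings | Iterator over all following sibling nodes, in HTML document order |
| .previous_siblings | Iterator over all preceding sibling nodes, nearest first |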

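Important: sibling traversal only happens between nodes that share the same parent. A sibling can also be a NavigableString rather than a tag. In the demo page, for example, `soup.a.next_sibling` is the string `' and '`.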
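Siblings often include strings, so loops over them usually filter for tags. This minimal sketch uses a made-up paragraph shaped like the demo page's course line. It shows the raw `.next_siblings` sequence, an `isinstance` filter, and `find_next_sibling()`, which skips non-matching nodes for you.

```python
from bs4 import BeautifulSoup
from bs4.element import Tag

html = "<p>Intro <a id='l1'>A</a> and <a id='l2'>B</a>.</p>"
soup = BeautifulSoup(html, "html.parser")
first = soup.a

all_after = list(first.next_siblings)                      # strings and tags
tags_after = [s for s in first.next_siblings if isinstance(s, Tag)]
nxt = first.find_next_sibling("a")                         # skips the ' and ' string

print([str(s) for s in all_after])
print([t["id"] for t in tags_after], nxt["id"])
```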

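```python
>>> soup.a.next_sibling
' and '
>>> soup.a.next_sibling.next_sibling
<a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>
>>> soup.a.previous_sibling
'Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:\r\n'
>>> soup.a.previous_sibling.previous_sibling  # None: nothing precedes that string, so nothing is printed
```

Friendly output of an HTML page with bs4:

```python
print(soup.prettify())
```

XML (eXtensible Markup Language) is similar in format to HTML. It was the earliest general-purpose information markup language; it is highly extensible, but verbose. It mainly uses …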

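```python
>>> for link in soup.find_all('a'):
...     print(link.get('href'))
...
http://www.icourse163.org/course/BIT-268001
http://www.icourse163.org/course/BIT-1001870001
```

The `find_all()` method: `<>.find_all(name, attrs, recursive, string, **kwargs)`

It returns a list (more precisely a `ResultSet`, a subclass of list) holding the search results.

`name`: a search string matched against tag names.

```python
>>> soup.find_all('a')
[<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a>, <a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>]
>>> soup.find_all('a')[0]
<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a>
>>> soup.find_all(['a', 'b'])  # to search for several tag names at once, pass a list
[<b>The demo python introduces several python courses.</b>, <a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a>, <a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>]
```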

>>> for tag in soup.find_all(True):

print(tag.name)

html

head

title

body

p

b

p

a

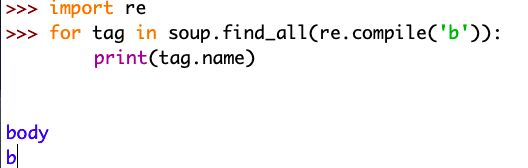

a现在要搜索所有以‘b’开头的标签,包括和标签。
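One way to do this is to pass a compiled regular expression as `name`. bs4 matches a regex against tag names with its `search()` method, so the pattern is anchored with `^` to mean "starts with". This minimal sketch uses a small document shaped like the demo page (an assumption, not the real page):

```python
import re

from bs4 import BeautifulSoup

html = ("<html><head><title>Demo</title></head>"
        "<body><p><b>bold</b></p><p><a href='#'>link</a></p></body></html>")
soup = BeautifulSoup(html, "html.parser")

# '^b' anchors the match at the start of the tag name.
names = [tag.name for tag in soup.find_all(re.compile("^b"))]
print(names)
```

Without the `^`, `re.compile("b")` would also match any tag name that merely contains a "b" somewhere, such as `tbody`.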

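`attrs`: a search string matched against the values of tag attributes; you can also name a specific attribute to search on.

`soup(..)` is equivalent to `soup.find_all(..)`, and likewise `<tag>(..)` is equivalent to `<tag>.find_all(..)`.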
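This minimal sketch shows the remaining `find_all()` arguments and the call shorthand on a made-up page fragment. The `example.com` URLs are placeholders. A plain string in the `attrs` position filters on CSS class. A keyword argument such as `id=` filters on that attribute. `recursive=False` restricts the search to direct children. `string=` searches string nodes instead of tags.

```python
import re

from bs4 import BeautifulSoup

html = (
    '<body><p class="course">Courses: '
    '<a href="https://example.com/1" class="py1" id="link1">Basic Python</a> and '
    '<a href="https://example.com/2" class="py2" id="link2">Advanced Python</a>.</p></body>'
)
soup = BeautifulSoup(html, "html.parser")

by_class = soup.find_all("p", "course")                # string in attrs position: CSS class
by_id = soup.find_all(id="link1")                      # keyword argument: that attribute
top_level = soup.body.find_all("a", recursive=False)   # direct children of <body> only
strings = soup.find_all(string=re.compile("Python"))   # string nodes, not tags
shorthand = soup("a")                                  # same as soup.find_all("a")

print(len(by_class), [t["id"] for t in by_id], len(top_level))
print([str(s) for s in strings], [t["id"] for t in shorthand])
```

`top_level` is empty because the `<a>` tags are children of `<p>`, not of `<body>`.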

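Example: a crawler for the Chinese university rankings

The URL is: http://www.zuihaodaxue.com/zu...

All the information we need appears directly in the page's HTML, so requests plus BeautifulSoup are enough. We can now sketch a preliminary program structure.

Step 1: fetch the university ranking page from the web, `getHTMLText()`

Step 2: extract the information in the page into a suitable data structure, `fillUnivList()` (the key step)

Step 3: use the data structure to display and print the results, `printUnivList()`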

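```python
import requests
import bs4
from bs4 import BeautifulSoup as bs


def getHTMLText(url):
    """Download a page and return its text, or an empty string on failure."""
    try:
        r = requests.get(url, timeout=30)
        r.raise_for_status()                  # raise HTTPError for 4xx/5xx responses
        r.encoding = r.apparent_encoding      # guess the encoding from the content
        return r.text
    except requests.RequestException:
        print('Failed to fetch the page')
        return ''


def fillUnivList(ulist, html):
    """Append [rank, name, province/city] for each table row to ulist."""
    soup = bs(html, 'html.parser')
    tbody = soup.find('tbody')
    if tbody is None:                         # fetch failed or the page layout changed
        return
    for tr in tbody.children:
        if isinstance(tr, bs4.element.Tag):   # skip non-tag nodes such as '\n'; needs `import bs4`
            tds = tr('td')                    # same as tr.find_all('td'): all <td> tags in the row
            if len(tds) >= 3:
                ulist.append([tds[0].string, tds[1].string, tds[2].string])


def printUnivList(ulist, num):
    geshi = "{:^10}\t{:^6}\t{:^10}"           # geshi ("format"): centered column template
    print(geshi.format('Rank', 'University', 'Province/City'))
    for u in ulist[:num]:                     # slicing avoids IndexError if there are fewer rows
        print(geshi.format(u[0], u[1], u[2]))


def main():
    uinfo = []
    url = 'http://www.zuihaodaxue.com/zuihaodaxuepaiming2019.html'
    html = getHTMLText(url)
    fillUnivList(uinfo, html)
    printUnivList(uinfo, 20)                  # print the top 20 universities


if __name__ == '__main__':
    main()
```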
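The ranking page's layout may have changed since this was written, so it helps to check the parsing step offline against a hand-written table. The sketch below repeats `fillUnivList` from above and runs it on a hypothetical two-row table. The rows are placeholders, not real ranking data.

```python
import bs4
from bs4 import BeautifulSoup as bs


def fillUnivList(ulist, html):
    """Same parsing logic as above: one [rank, name, place] list per row."""
    soup = bs(html, "html.parser")
    tbody = soup.find("tbody")
    if tbody is None:
        return
    for tr in tbody.children:
        if isinstance(tr, bs4.element.Tag):   # the '\n' strings between rows are skipped
            tds = tr("td")
            if len(tds) >= 3:
                ulist.append([tds[0].string, tds[1].string, tds[2].string])


# Hypothetical sample markup; whitespace between tags becomes '\n' string nodes.
sample = """
<table><tbody>
<tr><td>1</td><td>University A</td><td>Province X</td></tr>
<tr><td>2</td><td>University B</td><td>Province Y</td></tr>
</tbody></table>
"""

rows = []
fillUnivList(rows, sample)
print(rows)
```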