1 Media Capture

1.1 Overview of the Media Capture Classes

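- AVCaptureSession: the core class of AVFoundation capture. It manages the signal flow coming from physical devices and can be configured with a preset that controls the format and quality of the captured data.

- AVCaptureDevice: the interface defined for physical devices such as cameras and microphones. It defines a large number of methods for controlling the hardware, such as the camera's focus, exposure, white balance, and flash.

- AVCaptureDeviceInput: a wrapper around a physical device's AVCaptureDevice. A device can be added to an AVCaptureSession only after it has been wrapped in an instance of this class.

- AVCaptureOutput: an abstract class whose concrete subclasses, such as ...StillImageOutput, output the captured media. The ...DataOutput subclasses process the video and audio streams in real time.

- AVCaptureConnection: connects inputs to outputs (the arrows in the figure) and gives low-level control over the signal flow, such as disabling a particular connection or accessing individual audio channels on an audio connection.

- AVCaptureVideoPreviewLayer: renders the video currently being captured; its videoGravity property controls how the image is scaled to fit the layer.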

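1.2 Image Capture

1.2.1 Initializing the Camera

First, create THPreviewView.h, which is responsible for previewing the captured image and for all gesture-interaction logic.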

#import <UIKit/UIKit.h>

#import <AVFoundation/AVFoundation.h>

// Delegate methods for gesture interaction

@protocol THPreviewViewDelegate <NSObject>

- (void)tappedToFocusAtPoint:(CGPoint)point;

- (void)tappedToExposeAtPoint:(CGPoint)point;

- (void)tappedToResetFocusAndExposure;

@end

@interface THPreviewView : UIView

@property (strong, nonatomic) AVCaptureSession *session;

@property (weak, nonatomic) id<THPreviewViewDelegate> delegate;

@property (nonatomic) BOOL tapToFocusEnabled;

@property (nonatomic) BOOL tapToExposeEnabled;

@end

THPreviewView.m

@interface THPreviewView ()

@property (strong, nonatomic) UIView *focusBox;

@property (strong, nonatomic) UIView *exposureBox;

@property (strong, nonatomic) NSTimer *timer;

@property (strong, nonatomic) UITapGestureRecognizer *singleTapRecognizer;

@property (strong, nonatomic) UITapGestureRecognizer *doubleTapRecognizer;

@property (strong, nonatomic) UITapGestureRecognizer *doubleDoubleTapRecognizer;

@end

@implementation THPreviewView

- (id)initWithFrame:(CGRect)frame {

self = [super initWithFrame:frame];

if (self) {

[self setupView];

}

return self;

}

+ (Class)layerClass {

return [AVCaptureVideoPreviewLayer class];

}

- (AVCaptureSession*)session {

return [(AVCaptureVideoPreviewLayer*)self.layer session];

}

- (void)setSession:(AVCaptureSession *)session {

[(AVCaptureVideoPreviewLayer*)self.layer setSession:session];

}

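// Convert a point from screen (view) coordinates, measured in points, to capture device coordinates (with the Home button on the right: top-left is (0,0), bottom-right is (1,1))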

- (CGPoint)captureDevicePointForPoint:(CGPoint)point {

AVCaptureVideoPreviewLayer *layer =

(AVCaptureVideoPreviewLayer *)self.layer;

return [layer captureDevicePointOfInterestForPoint:point];

}

@end
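To give a feel for what captureDevicePointOfInterestForPoint: computes, here is a minimal sketch in plain C (it compiles unchanged in an Objective-C .m file). It covers only the simplest case, under assumptions that are ours rather than the framework's: a portrait interface, the unmirrored back camera, and a preview stretched to fill the view (AVLayerVideoGravityResize, so nothing is cropped). Point2D and devicePointForViewPoint are hypothetical names.

```c
/* Hypothetical point type standing in for CGPoint. */
typedef struct {
    double x;
    double y;
} Point2D;

/*
 * Convert a point in a portrait view (origin at the top-left, in points) to
 * normalized capture-device coordinates: (0,0) is the top-left and (1,1) the
 * bottom-right of the sensor image with the Home button on the right.
 * Assumes a portrait UI, the unmirrored back camera, and a stretched preview.
 */
Point2D devicePointForViewPoint(Point2D viewPoint, double viewWidth, double viewHeight) {
    Point2D devicePoint;
    devicePoint.x = viewPoint.y / viewHeight;      /* view's vertical axis becomes the sensor's x axis */
    devicePoint.y = 1.0 - viewPoint.x / viewWidth; /* view's horizontal axis, reversed, becomes the sensor's y axis */
    return devicePoint;
}
```

For example, the center of a 375x667 view maps to (0.5, 0.5) and its top-right corner maps to (0, 0). With aspect-fill gravity or the mirrored front camera, the real conversion must also account for cropping and mirroring, which is why the listing above relies on the preview layer's method instead of hand-written math.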

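Create THCameraController.h as the camera manager class, responsible for the concrete camera-related logic.

#import <AVFoundation/AVFoundation.h>

extern NSString *const THThumbnailCreatedNotification;

// Called when an error occurs

@protocol THCameraControllerDelegate <NSObject>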

- (void)deviceConfigurationFailedWithError:(NSError *)error;

- (void)mediaCaptureFailedWithError:(NSError *)error;

- (void)assetLibraryWriteFailedWithError:(NSError *)error;

@end

@interface THCameraController : NSObject

@property (weak, nonatomic) id<THCameraControllerDelegate> delegate;

@property (nonatomic, strong, readonly) AVCaptureSession *captureSession;

// Session Configuration: set up, start, and stop the capture session

- (BOOL)setupSession:(NSError **)error;

- (void)startSession;

- (void)stopSession;

// Camera Device Support: switch cameras, check for and enable the flash and torch, check whether tap-to-focus and tap-to-expose are supported

- (BOOL)switchCameras;

- (BOOL)canSwitchCameras;

@property (nonatomic, readonly) NSUInteger cameraCount;

@property (nonatomic, readonly) BOOL cameraHasTorch;

@property (nonatomic, readonly) BOOL cameraHasFlash;

@property (nonatomic, readonly) BOOL cameraSupportsTapToFocus;

@property (nonatomic, readonly) BOOL cameraSupportsTapToExpose;

@property (nonatomic) AVCaptureTorchMode torchMode;

@property (nonatomic) AVCaptureFlashMode flashMode;

// Tap to * Methods: tap to focus and tap to expose

- (void)focusAtPoint:(CGPoint)point;

- (void)exposeAtPoint:(CGPoint)point;

- (void)resetFocusAndExposureModes;

/** Media Capture Methods: capture still images and video **/

// Still Image Capture

- (void)captureStillImage;

// Video Recording

- (void)startRecording;

- (void)stopRecording;

- (BOOL)isRecording;

- (CMTime)recordedDuration;

@end

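THCameraController.m implements the image and audio capture logic. The class extension at the top redeclares captureSession as readwrite and declares the private properties that the methods below rely on.

#import "THCameraController.h"

#import <Photos/Photos.h>

@interface THCameraController () <AVCaptureFileOutputRecordingDelegate>

@property (strong, nonatomic, readwrite) AVCaptureSession *captureSession;

@property (strong, nonatomic) AVCaptureDeviceInput *activeVideoInput;

@property (strong, nonatomic) AVCaptureStillImageOutput *imageOutput;

@property (strong, nonatomic) AVCaptureMovieFileOutput *movieOutput;

@property (strong, nonatomic) NSURL *outputURL;

@property (strong, nonatomic) dispatch_queue_t videoQueue;

@end

// The methods below belong to @implementation THCameraController

- (BOOL)setupSession:(NSError **)error {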

self.captureSession = [[AVCaptureSession alloc] init];

self.captureSession.sessionPreset = AVCaptureSessionPresetHigh;

// Set up the default camera as a device input

AVCaptureDevice *videoDevice = [AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeVideo];

AVCaptureDeviceInput *videoInput = [AVCaptureDeviceInput deviceInputWithDevice:videoDevice error:error];

if (videoInput) {

if ([self.captureSession canAddInput:videoInput]) {

[self.captureSession addInput:videoInput];

self.activeVideoInput = videoInput;

}

} else {

return NO;

}

// Set up the default microphone as a device input

AVCaptureDevice *audioDevice = [AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeAudio];

AVCaptureDeviceInput *audioInput = [AVCaptureDeviceInput deviceInputWithDevice:audioDevice error:error];

if (audioInput) {

if ([self.captureSession canAddInput:audioInput]) {

[self.captureSession addInput:audioInput];

}

} else {

return NO;

}

// Set up the still image output

self.imageOutput = [[AVCaptureStillImageOutput alloc] init];

self.imageOutput.outputSettings = @{AVVideoCodecKey : AVVideoCodecJPEG};

if ([self.captureSession canAddOutput:self.imageOutput]) {

[self.captureSession addOutput:self.imageOutput];

}

// Set up the movie file output

self.movieOutput = [[AVCaptureMovieFileOutput alloc] init];

if ([self.captureSession canAddOutput:self.movieOutput]) {

[self.captureSession addOutput:self.movieOutput];

}

self.videoQueue = dispatch_queue_create("com.tapharmonic.VideoQueue", DISPATCH_QUEUE_SERIAL);

return YES;

}

// Starting and stopping the session are blocking, time-consuming calls, so run them asynchronously on a serial queue

- (void)startSession {

if (![self.captureSession isRunning]) {

dispatch_async(self.videoQueue, ^{

[self.captureSession startRunning];

});

}

}

- (void)stopSession {

if ([self.captureSession isRunning]) {

dispatch_async(self.videoQueue, ^{

[self.captureSession stopRunning];

});

}

}

Create THViewController to implement the camera view controller, which manages the preview view (previewView) and the camera controller (cameraController).

- (void)viewDidLoad {

[super viewDidLoad];

[[NSNotificationCenter defaultCenter] addObserver:self

selector:@selector(updateThumbnail:)

name:THThumbnailCreatedNotification

object:nil];

self.cameraMode = THCameraModeVideo;

self.cameraController = [[THCameraController alloc] init];

NSError *error;

if ([self.cameraController setupSession:&error]) {

[self.previewView setSession:self.cameraController.captureSession];

self.previewView.delegate = self;

[self.cameraController startSession];

} else {

NSLog(@"Error: %@", [error localizedDescription]);

}

self.previewView.tapToFocusEnabled = self.cameraController.cameraSupportsTapToFocus;

self.previewView.tapToExposeEnabled = self.cameraController.cameraSupportsTapToExpose;

}

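Finally, add the NSCameraUsageDescription and NSMicrophoneUsageDescription keys to the project's Info.plist to request camera and microphone access. Because the captured media is also saved to the photo library, a photo library usage key (NSPhotoLibraryAddUsageDescription on iOS 11 and later) is needed as well. This completes the camera initialization.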

1.2.2 Switching Cameras

Helper methods for switching cameras

- (AVCaptureDevice *)cameraWithPosition:(AVCaptureDevicePosition)position {

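// Note: devicesWithMediaType: is deprecated as of iOS 10; AVCaptureDeviceDiscoverySession is its replacement

NSArray *devices = [AVCaptureDevice devicesWithMediaType:AVMediaTypeVideo];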

for (AVCaptureDevice *device in devices) {

if (device.position == position) {

return device;

}

}

return nil;

}

- (AVCaptureDevice *)activeCamera {

return self.activeVideoInput.device;

}

- (AVCaptureDevice *)inactiveCamera {

AVCaptureDevice *device = nil;

if (self.cameraCount > 1) {

if ([self activeCamera].position == AVCaptureDevicePositionBack) {

device = [self cameraWithPosition:AVCaptureDevicePositionFront];

} else {

device = [self cameraWithPosition:AVCaptureDevicePositionBack];

}

}

return device;

}

- (BOOL)canSwitchCameras {

return self.cameraCount > 1;

}

- (NSUInteger)cameraCount {

return [[AVCaptureDevice devicesWithMediaType:AVMediaTypeVideo] count];

}

The core camera-switching method

- (BOOL)switchCameras {

if (![self canSwitchCameras]) {

return NO;

}

NSError *error;

AVCaptureDevice *videoDevice = [self inactiveCamera];

AVCaptureDeviceInput *videoInput = [AVCaptureDeviceInput deviceInputWithDevice:videoDevice error:&error];

if (videoInput) {

[self.captureSession beginConfiguration];

[self.captureSession removeInput:self.activeVideoInput];

if ([self.captureSession canAddInput:videoInput]) {

[self.captureSession addInput:videoInput];

self.activeVideoInput = videoInput;

} else {

[self.captureSession addInput:self.activeVideoInput];

}

[self.captureSession commitConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

return NO;

}

return YES;

}

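1.2.3 Configuring the Capture Device

AVCaptureDevice defines many methods for controlling the camera's focus, exposure, flash, and white balance. Every configuration follows the same four steps: 1) check whether the setting is supported, 2) lock the device for configuration, 3) apply the setting, and 4) unlock the device.

1.2.4 Adjusting Focus and Exposure

Most iOS devices support point-of-interest focus and exposure, and by default they continuously auto-focus and auto-expose on the center of the screen. In practice, we may want the device to lock focus and exposure on a point of interest other than the center of the view.

Locking focus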

#pragma mark - Focus Methods

- (BOOL)cameraSupportsTapToFocus {

return [[self activeCamera] isFocusPointOfInterestSupported];

}

- (void)focusAtPoint:(CGPoint)point {

AVCaptureDevice *device = [self activeCamera];

if (device.isFocusPointOfInterestSupported && [device isFocusModeSupported:AVCaptureFocusModeAutoFocus]) {

NSError *error;

if ([device lockForConfiguration:&error]) {

// The point has already been converted from screen coordinates to device coordinates (in the preview view)

device.focusPointOfInterest = point;

device.focusMode = AVCaptureFocusModeAutoFocus;

[device unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

}

Locking exposure

#pragma mark - Exposure Methods

- (BOOL)cameraSupportsTapToExpose {

return [[self activeCamera] isExposurePointOfInterestSupported];

}

static const NSString *THCameraAdjustingExposureContext;

- (void)exposeAtPoint:(CGPoint)point {

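// Use continuous auto-exposure here and observe the camera with KVO; once the exposure has been adjusted, lock the exposure level.

// Continuous auto-exposure allows finer control of the exposure level. Continuous auto-focus would likewise give finer control of focus, but a single auto-focus pass is enough for most needs.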

AVCaptureDevice *device = [self activeCamera];

if (device.isExposurePointOfInterestSupported && [device isExposureModeSupported:AVCaptureExposureModeContinuousAutoExposure]) {

NSError *error;

if ([device lockForConfiguration:&error]) {

device.exposurePointOfInterest = point;

device.exposureMode = AVCaptureExposureModeContinuousAutoExposure;

if ([device isExposureModeSupported:AVCaptureExposureModeLocked]) {

[device addObserver:self forKeyPath:@"adjustingExposure" options:NSKeyValueObservingOptionNew context:&THCameraAdjustingExposureContext];

}

[device unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

}

- (void)observeValueForKeyPath:(NSString *)keyPath

ofObject:(id)object

change:(NSDictionary *)change

context:(void *)context {

if (context == &THCameraAdjustingExposureContext) {

AVCaptureDevice *device = (AVCaptureDevice *)object;

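// AVCaptureExposureModeContinuousAutoExposure keeps adjusting the exposure at the point, so the adjustingExposure property changes many times;

// remove the observer only once the device has stopped adjusting. The observer is added only when AVCaptureExposureModeLocked is supported,

// so checking the same condition here guarantees that every observer that was added gets removed.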

if (!device.isAdjustingExposure && [device isExposureModeSupported:AVCaptureExposureModeLocked]) {

[object removeObserver:self forKeyPath:@"adjustingExposure" context:&THCameraAdjustingExposureContext];

dispatch_async(dispatch_get_main_queue(), ^{

NSError *error;

if ([device lockForConfiguration:&error]) {

device.exposureMode = AVCaptureExposureModeLocked;

[device unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

});

}

} else {

[super observeValueForKeyPath:keyPath ofObject:object change:change context:context];

}

}

Restoring continuous auto-focus and auto-exposure centered on the screen

- (void)resetFocusAndExposureModes {

AVCaptureDevice *device = [self activeCamera];

AVCaptureFocusMode focusMode = AVCaptureFocusModeContinuousAutoFocus;

BOOL canResetFocus = [device isFocusPointOfInterestSupported] && [device isFocusModeSupported:focusMode];

AVCaptureExposureMode exposureMode = AVCaptureExposureModeContinuousAutoExposure;

BOOL canResetExposure = [device isExposurePointOfInterestSupported] && [device isExposureModeSupported:exposureMode];

CGPoint centerPoint = CGPointMake(0.5f, 0.5f);

NSError *error;

if ([device lockForConfiguration:&error]) {

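if (canResetFocus) {

// Set the point of interest first: setting it alone does not start a focus operation; setting the mode afterwards applies it

device.focusPointOfInterest = centerPoint;

device.focusMode = focusMode;

}

if (canResetExposure) {

// Same order for exposure: point first, then mode

device.exposurePointOfInterest = centerPoint;

device.exposureMode = exposureMode;

}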

[device unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

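1.2.5 Adjusting the Flash and Torch

Apple uses the rear LED both as the flash in photo mode and as the torch in video mode. The torch can also be turned on in photo mode by setting torchMode.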

#pragma mark - Flash and Torch Modes

- (BOOL)cameraHasFlash {

return [[self activeCamera] hasFlash];

}

- (AVCaptureFlashMode)flashMode {

return [[self activeCamera] flashMode];

}

- (void)setFlashMode:(AVCaptureFlashMode)flashMode {

AVCaptureDevice *device = [self activeCamera];

if ([device isFlashModeSupported:flashMode]) {

NSError *error;

if ([device lockForConfiguration:&error]) {

device.flashMode = flashMode;

[device unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

}

- (BOOL)cameraHasTorch {

return [[self activeCamera] hasTorch];

}

- (AVCaptureTorchMode)torchMode {

return [[self activeCamera] torchMode];

}

- (void)setTorchMode:(AVCaptureTorchMode)torchMode {

AVCaptureDevice *device = [self activeCamera];

if ([device isTorchModeSupported:torchMode]) {

NSError *error;

if ([device lockForConfiguration:&error]) {

device.torchMode = torchMode;

[device unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

}

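1.2.6 Capturing Still Photos

When you create a session and add capture device inputs and outputs, AVFoundation automatically establishes the connections between them. To capture photos or video, you first have to obtain the right AVCaptureConnection.

Processing a photo involves CMSampleBuffer from the Core Media framework. It is a Core Foundation-style type, which ARC does not manage. Core Foundation objects you obtain from functions whose names contain Create or Copy must be released manually (the Create Rule), whereas a sample buffer handed to a system completion block is owned by the framework, so you do not release it; you would only CFRetain it if you needed to keep it after the block returns.

When creating the still image output, you can specify outputSettings to choose the output image format, so that the captured signal is compressed into binary data in that format (JPEG in the code below). Note that AVCaptureStillImageOutput has been deprecated since iOS 10 in favor of AVCapturePhotoOutput.

Saving video and images to the system photo album requires the Photos framework.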

#pragma mark - Image Capture Methods

- (void)captureStillImage {

AVCaptureConnection *connection = [self.imageOutput connectionWithMediaType:AVMediaTypeVideo];

if (connection.isVideoOrientationSupported) {

connection.videoOrientation = [self currentVideoOrientation];

}

id handler = ^(CMSampleBufferRef sampleBuffer, NSError *error) {

if (sampleBuffer != NULL) {

NSData *imageData = [AVCaptureStillImageOutput jpegStillImageNSDataRepresentation:sampleBuffer];

UIImage *image = [[UIImage alloc] initWithData:imageData];

[self writeImageToPhotosLibrary:image];

} else {

NSLog(@"NULL sampleBuffer: %@", [error localizedDescription]);

}

};

// 捕获静态图像

[self.imageOutput captureStillImageAsynchronouslyFromConnection:connection completionHandler:handler];

}

- (AVCaptureVideoOrientation)currentVideoOrientation {

AVCaptureVideoOrientation orientation;

switch ([UIDevice currentDevice].orientation) {

// The camera's left and right are the reverse of the device's left and right

case UIDeviceOrientationLandscapeRight:

orientation = AVCaptureVideoOrientationLandscapeLeft;

break;

case UIDeviceOrientationLandscapeLeft:

orientation = AVCaptureVideoOrientationLandscapeRight;

break;

case UIDeviceOrientationPortraitUpsideDown:

orientation = AVCaptureVideoOrientationPortraitUpsideDown;

break;

case UIDeviceOrientationPortrait:

default:

orientation = AVCaptureVideoOrientationPortrait;

break;

}

return orientation;

}

- (void)writeImageToPhotosLibrary:(UIImage *)image {

NSError *error = nil;

__block PHObjectPlaceholder *createdAsset = nil;

[[PHPhotoLibrary sharedPhotoLibrary] performChangesAndWait:^{

createdAsset = [PHAssetCreationRequest creationRequestForAssetFromImage:image].placeholderForCreatedAsset;

} error:&error];

if (error || !createdAsset) {

NSLog(@"Error: %@", [error localizedDescription]);

} else {

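// The capture completion handler may run on a background queue, so post the notification on the main queue for UI observers

dispatch_async(dispatch_get_main_queue(), ^{

[self postThumbnailNotifification:image];

});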

}

}

- (void)postThumbnailNotifification:(UIImage *)image {

NSNotificationCenter *nc = [NSNotificationCenter defaultCenter];

[nc postNotificationName:THThumbnailCreatedNotification object:image];

}

1.2.7 Recording Video Files

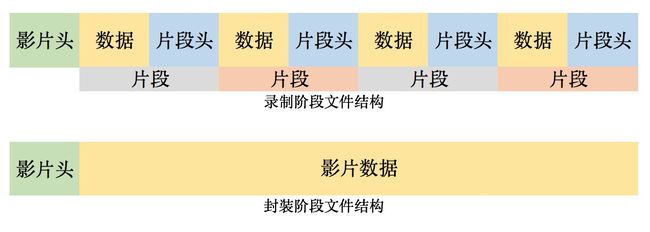

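Producing a video file involves two parts: recording the data and packaging it into a file. During recording, the movie file output writes the movie in fragments to guard against interruptions such as incoming phone calls: a minimal header is written at the start of the file, fragments are written periodically as recording proceeds, and the complete header is created at the end. If an interruption occurs, the movie is saved up to the end of the last fully written fragment. The default fragment interval is 10 seconds and can be changed through AVCaptureMovieFileOutput's movieFragmentInterval property. When the file is finalized, the header information is placed before the video data, as shown in the figure.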
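The effect of movieFragmentInterval can be estimated with simple arithmetic. The plain-C sketch below is a rough model we assume for illustration, not AVFoundation's exact behavior: after an interruption, only whole fragments are counted as safely on disk, so at most about one fragment interval is lost. recoverableSeconds is a hypothetical helper.

```c
/*
 * Rough model (our assumption, not AVFoundation's exact behavior): after an
 * interruption at elapsedSeconds, only whole fragments of length
 * fragmentIntervalSeconds are counted as safely written.
 */
double recoverableSeconds(double elapsedSeconds, double fragmentIntervalSeconds) {
    if (elapsedSeconds <= 0.0 || fragmentIntervalSeconds <= 0.0) {
        return 0.0; /* nothing recorded yet, or fragment writing disabled */
    }
    /* Truncating a non-negative quotient to an integer counts the whole fragments. */
    long long wholeFragments = (long long)(elapsedSeconds / fragmentIntervalSeconds);
    return (double)wholeFragments * fragmentIntervalSeconds;
}
```

With the default 10-second interval, an interruption 25 seconds into a recording would leave roughly 20 seconds; a shorter interval narrows the potential loss. Setting movieFragmentInterval to kCMTimeInvalid disables fragment writing, which the helper models as a non-positive interval.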

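AVCaptureMovieFileOutput can also specify metadata to be written into the movie file. Its superclass AVCaptureFileOutput offers further practical features, such as limiting the maximum recording duration (maxRecordedDuration), stopping at a given file size (maxRecordedFileSize), and reserving a minimum amount of free disk space (minFreeDiskSpaceLimit). The movie file output's output settings also let you configure the codec, bit rate, color sampling, and many other properties; see its header for details. Usually you leave them unset, and the system chooses defaults based on the connection (such as the H.264 encoder), which you can inspect with outputSettingsForConnection:.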
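When using maxRecordedFileSize, it can help to estimate how long a recording will run before the limit stops it. This plain-C sketch assumes a constant average bit rate, which real encoders do not guarantee, so the result is only a rough estimate; secondsUntilSizeLimit and the example bit rate are illustrative, not values taken from AVFoundation.

```c
#include <stdint.h>

/*
 * Estimate how many seconds of video fit under a file-size limit, assuming a
 * constant average bit rate (real encoders vary, so this is approximate).
 */
double secondsUntilSizeLimit(int64_t maxFileSizeBytes, double averageBitsPerSecond) {
    if (averageBitsPerSecond <= 0.0) {
        return 0.0; /* no meaningful estimate without a positive bit rate */
    }
    return (double)maxFileSizeBytes * 8.0 / averageBitsPerSecond; /* bytes -> bits, then divide by rate */
}
```

For instance, a 100,000,000-byte limit at an assumed 16 Mbit/s average lasts about 50 seconds.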

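Although the file output is highly configurable, its container is always Apple's QuickTime format with the .mov extension. If you need a different format, you have to wait until recording has finished (that is, until the delegate method reports that the file has been written) and then transcode the original video.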

#pragma mark - Video Capture Methods

- (BOOL)isRecording {

return self.movieOutput.isRecording;

}

- (void)startRecording {

if (self.isRecording) {

return;

}

AVCaptureConnection *videoConnection = [self.movieOutput connectionWithMediaType:AVMediaTypeVideo];

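// Setting the video orientation does not physically rotate the pixels; it only applies a corresponding transform matrix when the QuickTime file is written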

if ([videoConnection isVideoOrientationSupported]) {

videoConnection.videoOrientation = [self currentVideoOrientation];

}

// Video stabilization is not visible in the live preview; it only affects the recorded video

if ([videoConnection isVideoStabilizationSupported]) {

videoConnection.enablesVideoStabilizationWhenAvailable = YES;

}

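// By default the camera refocuses quickly on the center of the screen, which causes a "pulsing" effect when the scene switches quickly between near and far subjects; smooth autofocus slows focusing down so such changes look more natural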

AVCaptureDevice *device = [self activeCamera];

if (device.isSmoothAutoFocusSupported) {

NSError *error;

if ([device lockForConfiguration:&error]) {

device.smoothAutoFocusEnabled = YES;

[device unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

self.outputURL = [self uniqueURL];

[self.movieOutput startRecordingToOutputFileURL:self.outputURL recordingDelegate:self];

}

- (NSURL *)uniqueURL {

NSFileManager *fileManager = [NSFileManager defaultManager];

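// temporaryDirectoryWithTemplateString: is a custom NSFileManager category method, not a Foundation API; judging by its name and template, it presumably creates a uniquely named temporary directory

NSString *dirPath = [fileManager temporaryDirectoryWithTemplateString:@"kamera.XXXXXX"];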

if (dirPath) {

NSString *filePath = [dirPath stringByAppendingPathComponent:@"kamera_movie.mov"];

return [NSURL fileURLWithPath:filePath];

}

return nil;

}

- (void)stopRecording {

if ([self isRecording]) {

[self.movieOutput stopRecording];

}

}

- (CMTime)recordedDuration {

return self.movieOutput.recordedDuration;

}

#pragma mark - AVCaptureFileOutputRecordingDelegate

- (void)captureOutput:(AVCaptureFileOutput *)captureOutput

didFinishRecordingToOutputFileAtURL:(NSURL *)outputFileURL

fromConnections:(NSArray *)connections

error:(NSError *)error {

if (error) {

[self.delegate mediaCaptureFailedWithError:error];

} else {

[self writeVideoToPhotosLibrary:[self.outputURL copy]];

}

self.outputURL = nil;

}

- (void)writeVideoToPhotosLibrary:(NSURL *)videoURL {

NSError *error = nil;

__block PHObjectPlaceholder *createdAsset = nil;

[[PHPhotoLibrary sharedPhotoLibrary] performChangesAndWait:^{

createdAsset = [PHAssetCreationRequest creationRequestForAssetFromVideoAtFileURL:videoURL].placeholderForCreatedAsset;

} error:&error];

if (error || !createdAsset) {

[self.delegate assetLibraryWriteFailedWithError:error];

} else {

[self generateThumbnailForVideoAtURL:videoURL];

}

}

- (void)generateThumbnailForVideoAtURL:(NSURL *)videoURL {

dispatch_async(self.videoQueue, ^{

AVAsset *asset = [AVAsset assetWithURL:videoURL];

AVAssetImageGenerator *imageGenerator = [AVAssetImageGenerator assetImageGeneratorWithAsset:asset];

imageGenerator.maximumSize = CGSizeMake(100.0f, 0.0f);

// Account for the video's orientation when generating the thumbnail

imageGenerator.appliesPreferredTrackTransform = YES;

CGImageRef imageRef = [imageGenerator copyCGImageAtTime:kCMTimeZero actualTime:NULL error:nil];

UIImage *image = [UIImage imageWithCGImage:imageRef];

CGImageRelease(imageRef);

dispatch_async(dispatch_get_main_queue(), ^{

[self postThumbnailNotifification:image];

});

});

}

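2 Advanced Capture Features

2.1 Video Zoom

AVCaptureDevice provides a videoZoomFactor property that sets the zoom level of the video. Its activeFormat property returns an AVCaptureDeviceFormat instance whose videoMaxZoomFactor gives the maximum zoom level. The device implements zoom by center-cropping the image captured by the camera sensor. The AVCaptureDeviceFormat's videoZoomFactor...Threshold property indicates the zoom factor beyond which the cropped image has to be upscaled.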
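The center-crop behavior described above can be modeled with a few lines of plain C: at zoom factor z, the visible region is the centered 1/z portion of the full image in each dimension. This is a simplified model under our own assumptions; CropRect and centerCropForZoom are hypothetical names, and the 4032x3024 size in the usage note is only an example.

```c
/* Hypothetical rectangle type standing in for CGRect. */
typedef struct {
    double x;
    double y;
    double width;
    double height;
} CropRect;

/*
 * Simplified zoom-by-center-crop model: at zoomFactor z (>= 1.0), keep the
 * centered 1/z portion of the full sensor image in each dimension.
 */
CropRect centerCropForZoom(double sensorWidth, double sensorHeight, double zoomFactor) {
    if (zoomFactor < 1.0) {
        zoomFactor = 1.0; /* videoZoomFactor values below 1.0 (full field of view) are not valid */
    }
    CropRect r;
    r.width = sensorWidth / zoomFactor;
    r.height = sensorHeight / zoomFactor;
    r.x = (sensorWidth - r.width) / 2.0;   /* center the crop horizontally */
    r.y = (sensorHeight - r.height) / 2.0; /* center the crop vertically */
    return r;
}
```

For example, at 2x a 4032x3024 image is cropped to the 2016x1512 region starting at (1008, 756). Once the cropped region is smaller than the output resolution, it must be scaled up, which is roughly what the threshold above describes.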

const CGFloat THZoomRate = 1.0f;

// KVO Contexts

static const NSString *THRampingVideoZoomContext;

static const NSString *THRampingVideoZoomFactorContext;

@implementation THCameraController

- (void)dealloc {

[self.activeCamera removeObserver:self forKeyPath:@"videoZoomFactor"];

[self.activeCamera removeObserver:self forKeyPath:@"rampingVideoZoom"];

}

- (BOOL)setupSessionInputs:(NSError **)error {

BOOL success = [super setupSessionInputs:error];

if (success) {

[self.activeCamera addObserver:self forKeyPath:@"videoZoomFactor" options:NSKeyValueObservingOptionNew context:&THRampingVideoZoomFactorContext];

[self.activeCamera addObserver:self forKeyPath:@"rampingVideoZoom" options:NSKeyValueObservingOptionNew context:&THRampingVideoZoomContext];

}

return success;

}

- (void)observeValueForKeyPath:(NSString *)keyPath

ofObject:(id)object

change:(NSDictionary *)change

context:(void *)context {

if (context == &THRampingVideoZoomContext) {

[self updateZoomingDelegate];

} else if (context == &THRampingVideoZoomFactorContext) {

if (self.activeCamera.isRampingVideoZoom) {

[self updateZoomingDelegate];

}

} else {

[super observeValueForKeyPath:keyPath ofObject:object change:change context:context];

}

}

- (void)updateZoomingDelegate {

CGFloat curZoomFactor = self.activeCamera.videoZoomFactor;

CGFloat maxZoomFactor = [self maxZoomFactor];

CGFloat value = log(curZoomFactor)/log(maxZoomFactor);

[self.zoomingDelegate rampedZoomToValue:value];

}

- (BOOL)cameraSupportsZoom {

return self.activeCamera.activeFormat.videoMaxZoomFactor > 1.0f;

}

- (CGFloat)maxZoomFactor {

// 4.0 caps the zoom; replace it with another limit if needed

return MIN(self.activeCamera.activeFormat.videoMaxZoomFactor, 4.0f);

}

// Single-step zoom: called when the zoom slider is dragged to a position

- (void)setZoomValue:(CGFloat)zoomValue {

if (!self.activeCamera.isRampingVideoZoom) {

NSError *error;

if ([self.activeCamera lockForConfiguration:&error]) {

CGFloat zoomFactor = pow([self maxZoomFactor], zoomValue);

self.activeCamera.videoZoomFactor = zoomFactor;

[self.activeCamera unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

}

// Gradual zoom: called while the zoom joystick is held

- (void)rampZoomToValue:(CGFloat)zoomValue {

NSError *error;

if ([self.activeCamera lockForConfiguration:&error]) {

CGFloat zoomFactor = pow([self maxZoomFactor], zoomValue);

// The rate is given in powers of two per second: rate = 1 doubles (or halves) the zoom factor every second

[self.activeCamera rampToVideoZoomFactor:zoomFactor withRate:THZoomRate];

[self.activeCamera unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

// Cancel the zoom ramp and keep the current zoom factor: called when the joystick is released

- (void)cancelZoom {

NSError *error;

if ([self.activeCamera lockForConfiguration:&error]) {

[self.activeCamera cancelVideoZoomRamp];

[self.activeCamera unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

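2.2 Face Detection

The iPhone's built-in Camera app detects faces that appear in the frame and focuses on the center of the face. In AVFoundation, face detection is provided by AVCaptureMetadataOutput. It delivers an array of AVMetadataFaceObject instances, each representing one face and containing the face's bounds (in the device coordinate system), its roll angle (the tilt of the head toward the shoulder, in degrees), and its yaw angle (the rotation of the face around the y-axis, in degrees).

Initializing the face metadata output in the camera class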

- (BOOL)setupSessionOutputs:(NSError **)error {

self.metadataOutput = [[AVCaptureMetadataOutput alloc] init];

if ([self.captureSession canAddOutput:self.metadataOutput]) {

[self.captureSession addOutput:self.metadataOutput];

NSArray *metadataObjectTypes = @[AVMetadataObjectTypeFace];

self.metadataOutput.metadataObjectTypes = metadataObjectTypes;

dispatch_queue_t mainQueue = dispatch_get_main_queue();

[self.metadataOutput setMetadataObjectsDelegate:self

queue:mainQueue];

return YES;

} else {

if (error) {

NSDictionary *userInfo = @{NSLocalizedDescriptionKey:

@"Failed to add metadata output."};

*error = [NSError errorWithDomain:THCameraErrorDomain

code:THCameraErrorFailedToAddOutput

userInfo:userInfo];

}

return NO;

}

}

- (void)captureOutput:(AVCaptureOutput *)captureOutput

didOutputMetadataObjects:(NSArray *)metadataObjects

fromConnection:(AVCaptureConnection *)connection {

for (AVMetadataFaceObject *face in metadataObjects) {

NSLog(@"Face detected with ID: %li",(long)face.faceID);

NSLog(@"Face bounds: %@", NSStringFromCGRect(face.bounds));

}

[self.faceDetectionDelegate didDetectFaces:metadataObjects];

}

Visualizing face data in the preview view

@interface THPreviewView ()

@property (strong, nonatomic) CALayer *overlayLayer;

@property (strong, nonatomic) NSMutableDictionary *faceLayers;

@property (nonatomic, readonly) AVCaptureVideoPreviewLayer *previewLayer;

@end

@implementation THPreviewView

+ (Class)layerClass {

return [AVCaptureVideoPreviewLayer class];

}

- (id)initWithFrame:(CGRect)frame {

if (self = [super initWithFrame:frame]) {

[self setupView];

}

return self;

}

- (void)setupView {

self.faceLayers = [NSMutableDictionary dictionary];

// Video preview layer

self.previewLayer.videoGravity = AVLayerVideoGravityResizeAspectFill;

// Container layer that hosts the face layers

self.overlayLayer = [CALayer layer];

self.overlayLayer.frame = self.bounds;

// Give all of its sublayers a perspective transform, with the eye 1000 points from the image along the z-axis

self.overlayLayer.sublayerTransform = CATransform3DMakePerspective(1000);

[self.previewLayer addSublayer:self.overlayLayer];

}

- (AVCaptureSession*)session {

return self.previewLayer.session;

}

- (void)setSession:(AVCaptureSession *)session {

self.previewLayer.session = session;

}

- (AVCaptureVideoPreviewLayer *)previewLayer {

return (AVCaptureVideoPreviewLayer *)self.layer;

}

- (void)didDetectFaces:(NSArray *)faces {

NSArray *transformedFaces = [self transformedFacesFromFaces:faces];

NSMutableArray *lostFaces = [self.faceLayers.allKeys mutableCopy];

// Update the faces that are still being detected

for (AVMetadataFaceObject *face in transformedFaces) {

NSNumber *faceID = @(face.faceID);

[lostFaces removeObject:faceID];

CALayer *layer = [self.faceLayers objectForKey:faceID];

if (!layer) {

layer = [self makeFaceLayer];

[self.overlayLayer addSublayer:layer];

self.faceLayers[faceID] = layer;

}

// Reset the face layer's rotation

layer.transform = CATransform3DIdentity;

// Position the face layer

layer.frame = face.bounds;

// Apply the roll angle (the head tilting toward the shoulder)

if (face.hasRollAngle) {

CATransform3D t = [self transformForRollAngle:face.rollAngle];

// Successive CATransform3D rotations are combined by multiplying the two rotation matrices

layer.transform = CATransform3DConcat(layer.transform, t);

}

// Apply the yaw angle (the face turning around the y-axis)

if (face.hasYawAngle) {

CATransform3D t = [self transformForYawAngle:face.yawAngle];

layer.transform = CATransform3DConcat(layer.transform, t);

}

}

// Remove faces that are no longer detected, from both the dictionary and the view

for (NSNumber *faceID in lostFaces) {

CALayer *layer = [self.faceLayers objectForKey:faceID];

[layer removeFromSuperlayer];

[self.faceLayers removeObjectForKey:faceID];

}

}

// Convert faces from camera (device) coordinates to previewLayer coordinates

- (NSArray *)transformedFacesFromFaces:(NSArray *)faces {

NSMutableArray *transformedFaces = [NSMutableArray array];

for (AVMetadataObject *face in faces) {

AVMetadataObject *transformedFace =

[self.previewLayer transformedMetadataObjectForMetadataObject:face];

[transformedFaces addObject:transformedFace];

}

return transformedFaces;

}

- (CALayer *)makeFaceLayer {

CALayer *layer = [CALayer layer];

layer.borderWidth = 5.0f;

layer.borderColor =

[UIColor colorWithRed:0.188 green:0.517 blue:0.877 alpha:1.000].CGColor;

return layer;

}

@end

Handling face roll and yaw in the preview view

// Rotation around the z-axis

- (CATransform3D)transformForRollAngle:(CGFloat)rollAngleInDegrees {

CGFloat rollAngleInRadians = THDegreesToRadians(rollAngleInDegrees);

return CATransform3DMakeRotation(rollAngleInRadians, 0.0f, 0.0f, 1.0f);

}

// Rotation around the y-axis

- (CATransform3D)transformForYawAngle:(CGFloat)yawAngleInDegrees {

// First apply the rotation around the y-axis caused by the yaw angle

CGFloat yawAngleInRadians = THDegreesToRadians(yawAngleInDegrees);

CATransform3D yawTransform =

CATransform3DMakeRotation(yawAngleInRadians, 0.0f, -1.0f, 0.0f);

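// In this example the interface is locked to portrait. When the device is rotated (for example, turned upside down), the face's angle relative to the camera changes but the screen does not rotate, so the rotation matrix must be corrected for the current device orientation.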

return CATransform3DConcat(yawTransform, [self orientationTransform]);

}

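// Returns the rotation matrix needed for the current device orientation. This example applies it only when handling the yaw angle, but the orientation affects the roll angle as well, so it would be better to apply it once after both the roll and yaw transforms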

- (CATransform3D)orientationTransform {

CGFloat angle = 0.0;

switch ([UIDevice currentDevice].orientation) {

case UIDeviceOrientationPortraitUpsideDown:

angle = M_PI;

break;

case UIDeviceOrientationLandscapeRight:

angle = -M_PI / 2.0f;

break;

case UIDeviceOrientationLandscapeLeft:

angle = M_PI / 2.0f;

break;

default: //UIDeviceOrientationPortrait

angle = 0.0;

break;

}

return CATransform3DMakeRotation(angle, 0.0f, 0.0f, 1.0f);

}

// The roll and yaw angles of AVMetadataFaceObject are in degrees; convert them to radians

static CGFloat THDegreesToRadians(CGFloat degrees) {

return degrees * M_PI / 180;

}

// Creates a perspective CATransform3D (define it before -setupView, which calls it)

static CATransform3D CATransform3DMakePerspective(CGFloat eyePosition) {

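// CATransform3D is a 4x4 matrix. Its first, second, and third columns give the x, y, and z values of the new coordinate system.

// The first three rows of the fourth column (m14, m24, m34) are the perspective factors for x, y, and z. They default to 0, which leaves the image undistorted; both positive and negative values take effect, and larger magnitudes distort the image more.

// The value can be thought of as the viewpoint and is usually computed as -1.0 / distance, where distance is the distance from the eye to the 3D model along that axis.

// It can be negative. Near things look larger and far things smaller: the closer the eye, the more exaggerated the stretching along that axis; as the distance approaches infinity, the image is not stretched at all.

// The element in the fourth row and fourth column (m44) defaults to 1 and plays no role here.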

CATransform3D transform = CATransform3DIdentity;

transform.m34 = -1.0 / eyePosition;

return transform;

}
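To see what m34 = -1 / eyePosition does, the plain-C sketch below projects a single point through a matrix that is the identity except for m34. Core Animation multiplies row vectors by the matrix, so the homogeneous coordinate becomes w = z * m34 + 1 and the projected x and y are divided by w. Projected and projectWithPerspective are hypothetical helpers for illustration only.

```c
/* Hypothetical 2D result of projecting a 3D point. */
typedef struct {
    double x;
    double y;
} Projected;

/*
 * Project (x, y, z) through a matrix that is the identity except for
 * m34 = -1 / eyeDistance (the value CATransform3DMakePerspective sets).
 * With row vectors, w = z * m34 + 1 and the result is (x / w, y / w).
 * Only meaningful for points in front of the eye (z < eyeDistance).
 */
Projected projectWithPerspective(double x, double y, double z, double eyeDistance) {
    double m34 = -1.0 / eyeDistance;
    double w = z * m34 + 1.0; /* positive z (toward the viewer) shrinks w, enlarging the point */
    Projected p = { x / w, y / w };
    return p;
}
```

With eyePosition = 1000, a point at (100, 50) stays put at z = 0, appears at (200, 100) when moved 500 points toward the viewer, and shrinks to (50, 25) at z = -1000. This is why, when a face layer is rotated about the y-axis for the yaw angle, the edge that swings toward the viewer looks larger.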

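By combining the features above with Core Animation and Quartz, you can overlay dynamic elements such as hats, glasses, and mustaches on faces. Apple's SquareCam sample on the Apple Developer Connection website describes this in detail.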

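2.3 Recognizing Machine-Readable Codes (QR Codes, etc.)

AVFoundation can recognize machine-readable codes. They fall into two broad categories, one-dimensional barcodes and two-dimensional codes, each with many subtypes; the types AVFoundation supports are shown in the figure below.

Machine-readable codes are recognized through AVCaptureMetadataOutput.

Initializing the camera and adding the code metadata output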

@implementation THCameraController

- (NSString *)sessionPreset {

// Use a smaller capture preset for recognizing barcodes and QR codes to improve efficiency

return AVCaptureSessionPreset640x480;

}

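// Autofocus works at any distance, but restricting it to the near range (codes are usually scanned close to the camera) improves the recognition success rate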

- (BOOL)setupSessionInputs:(NSError *__autoreleasing *)error {

BOOL success = [super setupSessionInputs:error];

if (success) {

if (self.activeCamera.autoFocusRangeRestrictionSupported) {

if ([self.activeCamera lockForConfiguration:error]) {

self.activeCamera.autoFocusRangeRestriction = AVCaptureAutoFocusRangeRestrictionNear;

[self.activeCamera unlockForConfiguration];

}

}

}

return success;

}

- (BOOL)setupSessionOutputs:(NSError **)error {

self.metadataOutput = [[AVCaptureMetadataOutput alloc] init];

if ([self.captureSession canAddOutput:self.metadataOutput]) {

[self.captureSession addOutput:self.metadataOutput];

[self.metadataOutput setMetadataObjectsDelegate:self queue:dispatch_get_main_queue()];

NSArray *typs = @[AVMetadataObjectTypeQRCode,AVMetadataObjectTypeAztecCode];

self.metadataOutput.metadataObjectTypes = typs;

} else {

// Only write the error if the caller passed a non-NULL pointer

if (error) {

NSDictionary *userInfo = @{NSLocalizedDescriptionKey : @"Failed to add metadata output"};

*error = [NSError errorWithDomain:THCameraErrorDomain code:THCameraErrorFailedToAddOutput userInfo:userInfo];

}

return NO;

}

return YES;

}

- (void)captureOutput:(AVCaptureOutput *)captureOutput

didOutputMetadataObjects:(NSArray *)metadataObjects

fromConnection:(AVCaptureConnection *)connection {

[self.codeDetectionDelegate didDetectCodes:metadataObjects];

}

@end

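The metadata array passed to the delegate consists of AVMetadataMachineReadableCodeObject instances, each representing one detected code. Each has three important properties: stringValue holds the decoded string of the code; bounds is the code's axis-aligned bounding rectangle; and corners is an array of the code's actual corner points, which can be used to draw an outline that follows the code's perspective (3D) distortion. Both bounds and corners are in device coordinates until they are converted with the preview layer's transformedMetadataObjectForMetadataObject:.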
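The difference between corners and bounds can be illustrated in plain C as the axis-aligned rectangle that encloses a perspective-distorted quadrilateral. We assume here that bounds behaves like this enclosing rectangle; treat the sketch as an illustration, not a description of how AVFoundation computes bounds. CornerPoint, BoundingRect, and boundingRectForCorners are hypothetical names.

```c
#include <stddef.h>

/* Hypothetical stand-ins for CGPoint and CGRect. */
typedef struct { double x, y; } CornerPoint;
typedef struct { double x, y, width, height; } BoundingRect;

/* Axis-aligned rectangle enclosing all corner points (all zeros for an empty array). */
BoundingRect boundingRectForCorners(const CornerPoint *corners, size_t count) {
    BoundingRect r = {0.0, 0.0, 0.0, 0.0};
    if (count == 0) {
        return r;
    }
    double minX = corners[0].x, maxX = corners[0].x;
    double minY = corners[0].y, maxY = corners[0].y;
    for (size_t i = 1; i < count; i++) {
        if (corners[i].x < minX) minX = corners[i].x;
        if (corners[i].x > maxX) maxX = corners[i].x;
        if (corners[i].y < minY) minY = corners[i].y;
        if (corners[i].y > maxY) maxY = corners[i].y;
    }
    r.x = minX;
    r.y = minY;
    r.width = maxX - minX;
    r.height = maxY - minY;
    return r;
}
```

A code rotated 45 degrees, with corners at (50, 0), (100, 50), (50, 100), and (0, 50), yields the rectangle (0, 0, 100, 100): a bounds-based outline draws that square, while a corners-based outline traces the diamond itself.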

Initializing the preview view, which is responsible for displaying the image

@implementation THPreviewView

+ (Class)layerClass {

return [AVCaptureVideoPreviewLayer class];

}

- (id)initWithFrame:(CGRect)frame {

if (self = [super initWithFrame:frame]) {

[self setupView];

}

return self;

}

- (void)setupView {

_codeLayers = [NSMutableDictionary dictionaryWithCapacity:5];

self.previewLayer.videoGravity = AVLayerVideoGravityResizeAspect;

}

- (AVCaptureSession*)session {

return self.previewLayer.session;

}

- (void)setSession:(AVCaptureSession *)session {

self.previewLayer.session = session;

}

- (AVCaptureVideoPreviewLayer *)previewLayer {

return (AVCaptureVideoPreviewLayer *)self.layer;

}

- (void)didDetectCodes:(NSArray *)codes {

NSArray *transformedCodes = [self transformedCodesFromCodes:codes];

NSMutableArray *lostCodes = [self.codeLayers.allKeys mutableCopy];

// Update codes that are still present (in real apps the camera is usually stopped after a single successful scan)

for (AVMetadataMachineReadableCodeObject *code in transformedCodes) {

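// The code's stringValue is used as the unique key here, so if two identical codes are in view, the later one overwrites the earlier result; to show both, the dictionary keys need a different scheme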

NSString *stringValue = code.stringValue;

if (stringValue) {

[lostCodes removeObject:stringValue];

} else {

continue;

}

NSArray *layers = self.codeLayers[stringValue];

if (!layers) {

layers = @[[self makeBoundsLayer], [self makeCornersLayer]];

self.codeLayers[stringValue] = layers;

[self.previewLayer addSublayer:layers[0]];

[self.previewLayer addSublayer:layers[1]];

}

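// bounds is the code's axis-aligned bounding rectangle, while corners are the actual vertices of the code pattern, so a layer built from corners follows the code's perspective (3D) distortion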

CAShapeLayer *boundsLayer = layers[0];

boundsLayer.path = [self bezierPathForBounds:code.bounds].CGPath;

CAShapeLayer *cornerLayer = layers[1];

cornerLayer.path = [self bezierPathForCorners:code.corners].CGPath;

}

// Remove codes that are no longer being tracked, along with their layers

for (NSString *stringValue in lostCodes) {

for (CALayer *layer in self.codeLayers[stringValue]) {

[layer removeFromSuperlayer];

}

[self.codeLayers removeObjectForKey:stringValue];

}

}

@end
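The lost-code bookkeeping in didDetectCodes: is a set difference. It starts from every key currently tracked, strikes out each key detected in this frame, and treats whatever remains as gone. The sketch below restates that step in plain C using hypothetical names (find_lost_codes, contains_key). A linear search is adequate for the handful of codes a preview shows. The Objective-C version relies on NSMutableArray and NSMutableDictionary instead.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Returns 1 if `key` appears in keys[0..count). */
static int contains_key(const char *const *keys, size_t count, const char *key) {
    for (size_t i = 0; i < count; i++) {
        if (strcmp(keys[i], key) == 0) return 1;
    }
    return 0;
}

/* Writes every tracked key that is absent from `detected` into `lost` and returns
   how many were written. `lost` must have room for at least tracked_count entries. */
size_t find_lost_codes(const char *const *tracked, size_t tracked_count,
                       const char *const *detected, size_t detected_count,
                       const char **lost) {
    assert(lost != NULL);
    size_t n = 0;
    for (size_t i = 0; i < tracked_count; i++) {
        if (!contains_key(detected, detected_count, tracked[i])) {
            lost[n++] = tracked[i];
        }
    }
    return n;
}
```

For example, tracked keys A, B and C compared with detected keys C, A and D leave only B as lost. D is new, so the caller creates layers for it rather than removing any.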

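The following helper methods also belong inside THPreviewView's @implementation. They use the detected code data to draw outlines and similar overlays.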

// Convert code objects in device coordinates into code objects in view coordinates

- (NSArray *)transformedCodesFromCodes:(NSArray *)codes {

NSMutableArray *transformedCodes = [NSMutableArray arrayWithCapacity:5];

for (AVMetadataObject *code in codes) {

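AVMetadataObject *transformedCode = [self.previewLayer transformedMetadataObjectForMetadataObject:code];

// The transform may return nil, and adding nil to an NSMutableArray throws an exception

if (transformedCode) {

[transformedCodes addObject:transformedCode];

}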

}

return transformedCodes.copy;

}

- (UIBezierPath *)bezierPathForBounds:(CGRect)bounds {

return [UIBezierPath bezierPathWithRect:bounds];

}

- (CAShapeLayer *)makeBoundsLayer {

CAShapeLayer *shapeLayer = [CAShapeLayer layer];

shapeLayer.strokeColor = [UIColor colorWithRed:0.95f green:0.75f blue:0.06f alpha:1.0f].CGColor;

shapeLayer.fillColor = nil;

shapeLayer.lineWidth = 4.0f;

return shapeLayer;

}

- (CAShapeLayer *)makeCornersLayer {

CAShapeLayer *shapeLayer = [CAShapeLayer layer];

shapeLayer.strokeColor = [UIColor colorWithRed:0.12 green:0.67 blue:0.42f alpha:1.0f].CGColor;

shapeLayer.fillColor = [UIColor colorWithRed:0.19f green:0.75f blue:0.48f alpha:1.0f].CGColor;

shapeLayer.lineWidth = 2.0f;

return shapeLayer;

}

- (UIBezierPath *)bezierPathForCorners:(NSArray *)corners {

UIBezierPath *path = [UIBezierPath bezierPath];

for (NSUInteger i = 0; i < corners.count; i++) {

CGPoint point = [self pointForCorner:corners[i]];

if (i == 0) {

[path moveToPoint:point];

} else {

[path addLineToPoint:point];

}

}

[path closePath];

return path;

}

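// Build a CGPoint from a corner dictionary

- (CGPoint)pointForCorner:(NSDictionary *)corner {

CGPoint point = CGPointZero;

// Under ARC, the NSDictionary must be bridged to CFDictionaryRef with __bridge

CGPointMakeWithDictionaryRepresentation((__bridge CFDictionaryRef)corner, &point);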

return point;

}
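bezierPathForCorners: moves to the first corner and draws lines to the remaining corners. It then relies on closePath to draw the final edge back to the first corner. The plain-C sketch below walks the corners the same way and uses a wrap-around index for the closing edge. As an illustration, it sums the shoelace formula to get the area the outline encloses. Point2D and closed_outline_area are hypothetical names. Leaving out the closing edge changes the result, which shows why closePath matters.

```c
#include <assert.h>
#include <stddef.h>

typedef struct { double x, y; } Point2D; /* hypothetical CGPoint stand-in */

/* Visits corner i -> corner i+1 like moveTo/lineTo; the step from the last corner
   back to corner 0 is the edge closePath adds. Returns the enclosed area. */
double closed_outline_area(const Point2D *corners, size_t count) {
    assert(count >= 3);
    double twice_signed_area = 0.0;
    for (size_t i = 0; i < count; i++) {
        const Point2D a = corners[i];
        const Point2D b = corners[(i + 1) % count]; /* i == count - 1 is the closing edge */
        twice_signed_area += a.x * b.y - b.x * a.y;
    }
    double area = twice_signed_area / 2.0;
    return area < 0 ? -area : area; /* the winding direction does not matter */
}
```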

2.4 High-Frame-Rate Capture

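High-frame-rate (FPS) capture makes motion scenes look smoother and enables high-quality slow-motion video. Working with high-frame-rate video involves the following stages:

- Capture: AVFoundation captures at 30 FPS by default, and the iPhone 6s already supports capture at 240 FPS. The framework can also enable the H.264 "dropped P-frames" feature so that high-frame-rate video plays smoothly on older devices.

- Playback: AVPlayer can play video at a variety of rates. AVPlayerItem's audioTimePitchAlgorithm property controls how the audio is processed at those rates.

- Editing: editing is covered in the last article of this series.

- Export: AVFoundation can save high-frame-rate video. The video can be exported as-is or converted, for example to the standard 30 FPS.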
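The link between capture rate and slow motion is simple arithmetic. The plain-C sketch below assumes that every captured frame is shown once at the playback rate; the helper names are hypothetical. Footage captured at 240 FPS and played at the standard 30 FPS runs 8 times slower, so 2 seconds of real action lasts 16 seconds on screen. Converting the file to 30 FPS on export keeps real-time speed instead, because frames are discarded.

```c
#include <assert.h>

/* How many times slower the footage plays when every captured frame is shown
   once at the playback rate. */
double slow_motion_factor(double capture_fps, double playback_fps) {
    assert(capture_fps > 0 && playback_fps > 0);
    return capture_fps / playback_fps;
}

/* On-screen length (seconds) of real_seconds of action captured at capture_fps
   and played back frame-for-frame at playback_fps. */
double playback_seconds(double real_seconds, double capture_fps, double playback_fps) {
    return real_seconds * slow_motion_factor(capture_fps, playback_fps);
}
```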

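High-frame-rate capture is enabled by setting the AVCaptureDevice's activeFormat and frame duration. The device's formats property returns every format the receiver supports, as an array of AVCaptureDeviceFormat objects. Each format describes the chroma subsampling (pixel format), the supported frame-rate ranges (video...rateRanges) and other information. Each AVFrameRateRange object describes a range of frame rates and the corresponding frame durations.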
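Choosing a high-frame-rate format means scanning each format and its frame-rate ranges. The plain-C sketch below models that scan. DeviceFormat and FrameRateRange are hypothetical, simplified stand-ins for AVCaptureDeviceFormat and AVFrameRateRange. The FourCC constants spell '420v' and '420f', the video-range and full-range bi-planar 4:2:0 pixel formats. Only formats with the wanted pixel format are considered, and the range with the highest maximum frame rate wins.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define FOURCC_420V 0x34323076u /* '420v': bi-planar 4:2:0, video range */
#define FOURCC_420F 0x34323066u /* '420f': bi-planar 4:2:0, full range  */

/* Hypothetical, simplified stand-ins for AVFrameRateRange / AVCaptureDeviceFormat. */
typedef struct { double min_fps, max_fps; } FrameRateRange;
typedef struct {
    uint32_t pixel_format;
    const FrameRateRange *ranges;
    size_t range_count;
} DeviceFormat;

/* Stores the indices of the format/range pair with the highest max_fps among formats
   whose pixel format equals `wanted`. Returns 1 if any matched, else 0. */
int find_highest_frame_rate(const DeviceFormat *formats, size_t count, uint32_t wanted,
                            size_t *best_format, size_t *best_range) {
    assert(best_format != NULL && best_range != NULL);
    double best = 0.0;
    int found = 0;
    for (size_t f = 0; f < count; f++) {
        if (formats[f].pixel_format != wanted) continue; /* skip other pixel formats */
        for (size_t r = 0; r < formats[f].range_count; r++) {
            if (formats[f].ranges[r].max_fps > best) {
                best = formats[f].ranges[r].max_fps;
                *best_format = f;
                *best_range = r;
                found = 1;
            }
        }
    }
    return found;
}
```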

Create THQualityOfService, a private helper class contained in the AVCaptureDevice category.

@interface THQualityOfService : NSObject

@property(strong, nonatomic, readonly) AVCaptureDeviceFormat *format;

@property(strong, nonatomic, readonly) AVFrameRateRange *frameRateRange;

@property(assign, nonatomic, readonly) BOOL isHighFrameRate;

+ (instancetype)qosWithFormat:(AVCaptureDeviceFormat *)format frameRateRange:(AVFrameRateRange *)frameRateRange;

@end

@implementation THQualityOfService

+ (instancetype)qosWithFormat:(AVCaptureDeviceFormat *)format frameRateRange:(AVFrameRateRange *)frameRateRange {

return [[self alloc] initWithFormat:format frameRateRange:frameRateRange];

}

- (instancetype)initWithFormat:(AVCaptureDeviceFormat *)format frameRateRange:(AVFrameRateRange *)frameRateRange {

if (self = [super init]) {

_format = format;

_frameRateRange = frameRateRange;

}

return self;

}

- (BOOL)isHighFrameRate {

return self.frameRateRange.maxFrameRate > 30.0f;

}

@end

Complete the AVCaptureDevice category by adding the high-frame-rate logic.

@implementation AVCaptureDevice (THAdditions)

- (BOOL)supportsHighFrameRateCapture {

if (![self hasMediaType:AVMediaTypeVideo]) {

return NO;

}

return [self findHighestQualityOfService].isHighFrameRate;

}

- (THQualityOfService *)findHighestQualityOfService {

AVCaptureDeviceFormat *maxFormat = nil;

AVFrameRateRange *maxFrameRateRange = nil;

for (AVCaptureDeviceFormat *format in self.formats) {

FourCharCode codecType = CMVideoFormatDescriptionGetCodecType(format.formatDescription);

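// formatDescription is a Core Media CMFormatDescriptionRef; for a video format, its codec type is the pixel format the camera captures in. iPhone cameras use 4:2:0 chroma subsampling,

// and the "VideoRange" variant stores luma in the 16-235 range instead of the full 0-255 range. See the header and the chroma-subsampling section in the first article of this series for details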

if (codecType == kCVPixelFormatType_420YpCbCr8BiPlanarVideoRange) {

NSArray *frameRateRanges = format.videoSupportedFrameRateRanges;

for (AVFrameRateRange *range in frameRateRanges) {

if (range.maxFrameRate > maxFrameRateRange.maxFrameRate) {

maxFormat = format;

maxFrameRateRange = range;

}

}

}

}

return [THQualityOfService qosWithFormat:maxFormat frameRateRange:maxFrameRateRange];

}

- (BOOL)enableMaxFrameRateCapture:(NSError **)error {

THQualityOfService *qos = [self findHighestQualityOfService];

if (!qos.isHighFrameRate) {

NSString *message = @"Device does not support high FPS capture";

NSDictionary *userInfo = @{NSLocalizedDescriptionKey : message};

NSUInteger code = THCameraErrorHighFrameRateCaptureNotSupported;

// Callers may pass NULL for error, so check before writing through the pointer

if (error) {

*error = [NSError errorWithDomain:THCameraErrorDomain code:code userInfo:userInfo];

}

return NO;

}

if ([self lockForConfiguration:error]) {

CMTime minFrameDuration = qos.frameRateRange.minFrameDuration;

self.activeFormat = qos.format;

// AVFoundation controls the capture device through the duration of each frame (1/frame rate), not through the frame rate itself

self.activeVideoMinFrameDuration = minFrameDuration;

self.activeVideoMaxFrameDuration = minFrameDuration;

[self unlockForConfiguration];

return YES;

}

return NO;

}

@end
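enableMaxFrameRateCapture: writes the same value to both activeVideoMinFrameDuration and activeVideoMaxFrameDuration. A frame duration is the reciprocal of a frame rate. The minimum duration therefore caps the highest rate, and the maximum duration sets the lowest rate, so equal values pin the rate. The plain-C sketch below shows that arithmetic. RationalTime is a hypothetical stand-in for CMTime, which stores value / timescale seconds; this is how CMTimeMake(1, 240) represents 1/240 s.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical stand-in for CMTime: value / timescale seconds. */
typedef struct { int64_t value; int32_t timescale; } RationalTime;

/* Duration of one frame at an integer frame rate: 1/fps seconds. */
RationalTime frame_duration_for_fps(int32_t fps) {
    assert(fps > 0);
    RationalTime t = { 1, fps };
    return t;
}

/* Frame rate implied by a frame duration: timescale / value. */
double fps_for_frame_duration(RationalTime d) {
    assert(d.value > 0 && d.timescale > 0);
    return (double)d.timescale / (double)d.value;
}

/* The shortest allowed duration gives the highest rate, the longest gives the lowest. */
void allowed_fps_range(RationalTime min_duration, RationalTime max_duration,
                       double *lowest_fps, double *highest_fps) {
    *highest_fps = fps_for_frame_duration(min_duration);
    *lowest_fps = fps_for_frame_duration(max_duration);
}
```

With both durations set to 1/240 s, the lowest and highest allowed rates are both 240 FPS. This is the effect the category method aims for.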

Use the high-frame-rate feature in the camera controller

@implementation THCameraController

- (BOOL)cameraSupportsHighFrameRateCapture {

return [self.activeCamera supportsHighFrameRateCapture];

}

- (BOOL)enableHighFrameRateCapture {

NSError *error;

BOOL enabled = [self.activeCamera enableMaxFrameRateCapture:&error];

if (!enabled) {

[self.delegate deviceConfigurationFailedWithError:error];

}

return enabled;

}

@end

2.5 Processing Captured Video

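AVCaptureMovieFileOutput is a simplified video capture class. When more customization is needed, use AVCaptureVideoDataOutput instead. Combined with APIs from the OpenGL ES and Core Animation frameworks, AVCaptureVideoDataOutput can also composite visual overlays, such as textures, into the final video. Audio (AVCaptureAudioDataOutput) is not handled here. Capturing data with AVCaptureVideoDataOutput involves two important delegate methods: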

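- captureOutput:didOutputSampleBuffer...: called each time a new video frame is captured. The layout of the data in sampleBuffer depends on the videoSettings configured on the AVCaptureVideoDataOutput.

- captureOutput:didDropSampleBuffer...: called when a video frame is dropped because it could not be delivered on time. This typically happens when the previous method spends too long on a single frame, so keep the processing in that method as fast as possible.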
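The per-frame time budget follows directly from the frame rate. Each frame should be handled within 1/FPS seconds, which is about 33.3 ms at 30 FPS and about 4.2 ms at 240 FPS. The plain-C helpers below express that rule of thumb; their names are hypothetical. Whether late frames are actually dropped also depends on AVCaptureVideoDataOutput's alwaysDiscardsLateVideoFrames property, so treat this as a rough check rather than a guarantee.

```c
#include <assert.h>

/* Milliseconds available per frame at the given capture rate. */
double frame_budget_ms(double fps) {
    assert(fps > 0);
    return 1000.0 / fps;
}

/* 1 if a per-frame processing cost fits within the frame budget, else 0. */
int keeps_up(double processing_ms, double fps) {
    return processing_ms <= frame_budget_ms(fps);
}
```

For example, 20 ms of work per frame keeps up at 30 FPS but not at 60 FPS, where the budget is only about 16.7 ms.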

2.5.1 CMSampleBuffer Overview

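A CMSampleBuffer can contain media sample data, a format description and metadata. The pixel data in a CVPixelBuffer is laid out differently depending on the format. Packed formats such as BGRA/RGBA usually store all of a pixel's components contiguously. Bi-planar 4:2:0 formats use two planes, one for the luma data and one for the chroma components.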
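The storage difference between the two layouts can be estimated with a little arithmetic. The plain-C sketch below uses hypothetical helper names and ignores any per-row padding a real CVPixelBuffer may add. Packed BGRA needs 4 bytes per pixel in one plane. Bi-planar 4:2:0 needs a full-resolution luma plane plus a chroma plane that holds one Cb/Cr pair per 2x2 block of pixels, which comes to 1.5 bytes per pixel in total.

```c
#include <assert.h>
#include <stddef.h>

/* Packed BGRA: one plane, 4 interleaved bytes (B, G, R, A) per pixel. */
size_t bgra_frame_bytes(size_t width, size_t height) {
    return width * height * 4;
}

/* Bi-planar 4:2:0: plane 0 is full-resolution luma (1 byte per pixel); plane 1 holds
   interleaved Cb/Cr at half resolution in both directions. Assumes even dimensions. */
void biplanar420_plane_bytes(size_t width, size_t height, size_t *luma, size_t *chroma) {
    assert(width % 2 == 0 && height % 2 == 0);
    *luma = width * height;
    *chroma = (width / 2) * (height / 2) * 2;
}
```

At 640x480, this gives 1,228,800 bytes for BGRA and 307,200 + 153,600 = 460,800 bytes for 4:2:0.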

Get the sample data output by AVCaptureVideoDataOutput

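// Convert the pixel data in a CVPixelBuffer from color to grayscale in place (a simple average of B, G and R, not the standard luma weights)

// Assumes videoSettings requests kCVPixelFormatType_32BGRA, i.e. a single plane with 4 bytes per pixel

- (void)handleSampleBuffer:(CMSampleBufferRef)sampleBuffer {

const size_t BYTES_PER_PIXEL = 4;

// pixelBuffer is not retained by this "Get" call, so it must not be released manually

CVPixelBufferRef pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);

if (!pixelBuffer || CVPixelBufferLockBaseAddress(pixelBuffer, 0) != kCVReturnSuccess) {

return;

}

size_t bufferWidth = CVPixelBufferGetWidth(pixelBuffer);

size_t bufferHeight = CVPixelBufferGetHeight(pixelBuffer);

// Rows may be padded, so advance by bytesPerRow rather than by width * 4

size_t bytesPerRow = CVPixelBufferGetBytesPerRow(pixelBuffer);

unsigned char *baseAddress = (unsigned char *)CVPixelBufferGetBaseAddress(pixelBuffer);

for (size_t row = 0; row < bufferHeight; row++) {

unsigned char *pixel = baseAddress + row * bytesPerRow;

for (size_t column = 0; column < bufferWidth; column++) {

// The unsigned char operands are promoted to int before the addition, so the sum (at most 765) cannot overflow

unsigned char grayPixel = (pixel[0] + pixel[1] + pixel[2]) / 3;

pixel[0] = pixel[1] = pixel[2] = grayPixel;

pixel += BYTES_PER_PIXEL;

}

}

CVPixelBufferUnlockBaseAddress(pixelBuffer, 0);

// The processed image is now available in pixelBuffer

}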
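The same grayscale pass can be written and tested in plain C, independently of Core Video. The sketch below assumes a single-plane BGRA buffer and takes the row stride as a parameter, because real pixel buffers may pad each row; CVPixelBufferGetBytesPerRow reports the actual stride. bgra_to_gray_in_place is a hypothetical name. The sketch keeps the unweighted average rather than a standard luma formula, and it leaves alpha and padding bytes untouched.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* In-place grayscale for a BGRA buffer whose rows are bytes_per_row apart. */
void bgra_to_gray_in_place(uint8_t *base, size_t width, size_t height, size_t bytes_per_row) {
    assert(base != NULL && bytes_per_row >= width * 4);
    for (size_t row = 0; row < height; row++) {
        uint8_t *pixel = base + row * bytes_per_row; /* start of this row, skipping padding */
        for (size_t col = 0; col < width; col++) {
            /* uint8_t operands are promoted to int, so the sum cannot overflow */
            uint8_t gray = (uint8_t)((pixel[0] + pixel[1] + pixel[2]) / 3);
            pixel[0] = pixel[1] = pixel[2] = gray; /* byte 3 (alpha) is left as is */
            pixel += 4;
        }
    }
}
```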

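Get the format description

CMFormatDescriptionRef defines attributes common to all media. Depending on the media type, there are specialized variants, CMVideoFormatDescriptionRef and CMAudioFormatDescriptionRef, and each defines type-specific attributes.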

// Get the image or audio data according to the media type in the CMSampleBuffer's format description

- (void)getMediaData:(CMSampleBufferRef)sampleBuffer {

CMFormatDescriptionRef formatDescription = CMSampleBufferGetFormatDescription(sampleBuffer);

CMMediaType mediaType = CMFormatDescriptionGetMediaType(formatDescription);

if (mediaType == kCMMediaType_Video) {

CVPixelBufferRef pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);

// Process the image data

} else if (mediaType == kCMMediaType_Audio) {

CMBlockBufferRef blockBuffer = CMSampleBufferGetDataBuffer(sampleBuffer);

// Process the audio data

}

}

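Timing information

CMSampleBuffer also records the relative capture time of the current frame as a CMTime. This is the presentation timestamp, which can be read with CMSampleBufferGetPresentationTimeStamp.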
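A CMTime is a rational number of seconds: value divided by timescale. The plain-C sketch below uses RationalTime as a hypothetical stand-in for CMTime. It shows why timestamps with different timescales must be compared by the values they represent rather than by their raw fields. With the real types, CMTimeGetSeconds and CMTimeCompare do this work.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical stand-in for CMTime: value / timescale seconds. */
typedef struct { int64_t value; int32_t timescale; } RationalTime;

double seconds_of(RationalTime t) {
    assert(t.timescale > 0);
    return (double)t.value / (double)t.timescale;
}

/* Interval between two timestamps in seconds; the timescales may differ. */
double interval_seconds(RationalTime earlier, RationalTime later) {
    return seconds_of(later) - seconds_of(earlier);
}

/* Exact equality across timescales by cross-multiplying (no floating point). */
int same_instant(RationalTime a, RationalTime b) {
    return a.value * (int64_t)b.timescale == b.value * (int64_t)a.timescale;
}
```

For example, 1/30 s and 20/600 s are the same instant even though their fields differ.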

Get the metadata

CMSampleBuffer also contains metadata such as Exif (Exchangeable Image File Format) data.

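// Get the Exif attachment, which records a large amount of metadata about the current frame, such as exposure mode, dimensions and white balance

// kCGImagePropertyExifDictionary is declared in the ImageIO framework

- (void)getExifAttachment:(CMSampleBufferRef)sampleBuffer {

// The result is not retained by this "Get" call and is NULL if the buffer has no Exif attachment

CFDictionaryRef exifAttachments = (CFDictionaryRef)CMGetAttachment(sampleBuffer, kCGImagePropertyExifDictionary, NULL);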

}

2.5.2 Using AVCaptureVideoDataOutput

Define the camera controller class

@protocol THTextureDelegate <NSObject>

// Called when a new texture is created. target is the texture target type, and OpenGL ES can use name to look up the corresponding texture

- (void)textureCreatedWithTarget:(GLenum)target name:(GLuint)name;

@end

@interface THCameraController : THBaseCameraController

// context manages OpenGL ES state as well as the resources needed for drawing with OpenGL ES

- (instancetype)initWithContext:(EAGLContext *)context;

@property (weak, nonatomic) id <THTextureDelegate> textureDelegate;

@end

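Capture image data and convert it into textures that OpenGL ES can use

OpenGL ES processes images on the GPU and has long been one of the main options for high-performance video processing on iOS; Apple has deprecated it since iOS 12 in favor of Metal. Its use deserves a separate article. Here, the image data captured by AVFoundation is only converted into textures that OpenGL ES can use, with a GLKViewController as the root view controller. For more background, see the book Learning OpenGL ES for iOS.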

@interface THCameraController ()

@property (weak, nonatomic) EAGLContext *context;

@property (strong, nonatomic) AVCaptureVideoDataOutput *videoDataOutput;

// Bridges Core Video pixel buffers and OpenGL ES textures, reducing the cost of moving data between the CPU and the GPU

@property (nonatomic) CVOpenGLESTextureCacheRef textureCache;

@property (nonatomic) CVOpenGLESTextureRef cameraTexture;

@end

@implementation THCameraController

- (instancetype)initWithContext:(EAGLContext *)context {

if (self = [super init]) {

_context = context;

CVReturn err = CVOpenGLESTextureCacheCreate(kCFAllocatorDefault, NULL, _context, NULL, &_textureCache);

if (err != kCVReturnSuccess) {

NSLog(@"Error creating texture cache. %d", err);

}

}

return self;

}

- (NSString *)sessionPreset {

return AVCaptureSessionPreset640x480;

}

- (BOOL)setupSessionOutputs:(NSError **)error {

self.videoDataOutput = [[AVCaptureVideoDataOutput alloc] init];

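// The camera's native format is a bi-planar 4:2:0 YpCbCr format (such as kCVPixelFormatType_420YpCbCr8BiPlanarVideoRange), but OpenGL commonly works with BGRA. With the default setting,

// the sample data in each CVPixelBuffer is YpCbCr data, whereas requesting kCVPixelFormatType_32BGRA yields BGRA data.

// The conversion, however, costs some camera performance and produces larger samples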

self.videoDataOutput.videoSettings = @{(id)kCVPixelBufferPixelFormatTypeKey : @(kCVPixelFormatType_32BGRA)};

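// The delegate must be called on a serial queue. The main queue (which is serial) is used here, but a custom serial queue also works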

[self.videoDataOutput setSampleBufferDelegate:self queue:dispatch_get_main_queue()];

if ([self.captureSession canAddOutput:self.videoDataOutput]) {

[self.captureSession addOutput:self.videoDataOutput];

return YES;

}

return NO;

}

- (void)captureOutput:(AVCaptureOutput *)captureOutput

didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer

fromConnection:(AVCaptureConnection *)connection {

CVReturn err;

CVImageBufferRef pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);

CMFormatDescriptionRef formatDescription = CMSampleBufferGetFormatDescription(sampleBuffer);

CMVideoDimensions dimensions = CMVideoFormatDescriptionGetDimensions(formatDescription);

// Passing the height for both width and height crops the video horizontally, producing a square texture

err = CVOpenGLESTextureCacheCreateTextureFromImage(kCFAllocatorDefault, _textureCache, pixelBuffer, NULL, GL_TEXTURE_2D, GL_RGBA, dimensions.height, dimensions.height, GL_BGRA, GL_UNSIGNED_BYTE, 0, &_cameraTexture);

if (!err) {

GLenum target = CVOpenGLESTextureGetTarget(_cameraTexture);

GLuint name = CVOpenGLESTextureGetName(_cameraTexture);

[self.textureDelegate textureCreatedWithTarget:target name:name];

} else {

NSLog(@"Error at CVOpenGLESTextureCacheCreateTextureFromImage %d", err);

}

[self cleanupTextures];

}

- (void)cleanupTextures {

if (_cameraTexture) {

CFRelease(_cameraTexture);

_cameraTexture = NULL;

}

CVOpenGLESTextureCacheFlush(_textureCache, 0);

}

@end