This post is mainly based on the following two blog posts, with some details modified.

https://blog.csdn.net/XX_123_1_RJ/article/details/102733175?depth_1-utm_source=distribute.pc_relevant.none-task&utm_source=distribute.pc_relevant.none-task

https://blog.csdn.net/linolzhang/article/details/97833354

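I. Dataset preparation

(Prepare the data for the training, validation, and test sets separately.)

1. Annotate the dataset

Most people annotate their dataset with labelme, which produces one JSON file per annotated image. There are plenty of labelme tutorials online, so I will not repeat them here.

Because the targets in my images are very small, annotating with labelme would not have been precise enough. I therefore annotated the images by hand in Photoshop first, and then wrote code to convert the annotation images into labelme-format JSON files.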

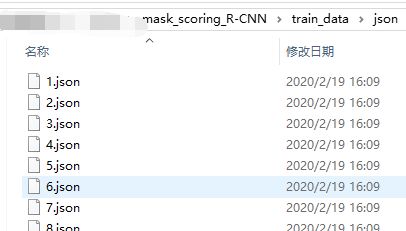

The result is shown in the figure above.
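The conversion script itself is not included in this post. As a rough sketch of the idea only, assuming the hand-drawn annotation has already been loaded as a binary mask (a list of rows of 0/1 values) and that one rectangle per mask is enough, the following builds a labelme-style record. The function names, the label "cla_a", and the file name are hypothetical; only the keys that the COCO converter in the next step reads are filled in, and a real labelme file contains further fields (for example version and imageData) that are omitted here.

```python
import json


def mask_to_rectangle(mask):
    """Return [[x_min, y_min], [x_max, y_max]] of the nonzero pixels in a 2D mask."""
    coords = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not coords:
        raise ValueError("mask contains no foreground pixels")
    xs = [x for x, _ in coords]
    ys = [y for _, y in coords]
    return [[min(xs), min(ys)], [max(xs), max(ys)]]


def make_labelme_record(mask, label, image_path):
    """Build a dict with the labelme fields that the COCO converter reads."""
    return {
        "shapes": [{
            "label": label,
            "points": mask_to_rectangle(mask),  # two opposite corners
            "shape_type": "rectangle",
        }],
        "imagePath": image_path,
        "imageHeight": len(mask),
        "imageWidth": len(mask[0]),
    }


# Tiny hand-made mask standing in for a Photoshop annotation image
mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
record = make_labelme_record(mask, "cla_a", "sample.png")
print(json.dumps(record, indent=2))
```

For real data, the mask would come from the annotation image, and each record would be written to its own JSON file with json.dump.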

2. Convert the JSON files to COCO format

The following code performed the conversion successfully for me.

    # -*- coding:utf-8 -*-
    import glob
    import json
    import os
    import sys

    import numpy as np
    import PIL.Image
    import PIL.ImageDraw  # used by polygons_to_mask; "import PIL.Image" alone does not load it


    class MyEncoder(json.JSONEncoder):
        """JSON encoder that converts numpy scalars and arrays to plain Python types."""

        def default(self, obj):
            if isinstance(obj, np.integer):
                return int(obj)
            elif isinstance(obj, np.floating):
                return float(obj)
            elif isinstance(obj, np.ndarray):
                return obj.tolist()
            else:
                return super(MyEncoder, self).default(obj)


    class labelme2coco(object):
        def __init__(self, labelme_json=[], save_json_path='./tran.json'):
            '''
            :param labelme_json: list of paths to all labelme JSON files
            :param save_json_path: where to save the COCO JSON file
            '''
            self.labelme_json = labelme_json
            self.save_json_path = save_json_path
            self.images = []
            self.categories = []
            self.annotations = []
            self.label = []
            self.annID = 1
            self.height = 0
            self.width = 0

            self.save_json()

        def data_transfer(self):
            for num, json_file in enumerate(self.labelme_json):
                with open(json_file, 'r') as fp:
                    data = json.load(fp)  # load the labelme JSON file
                self.images.append(self.image(data, num))
                for shapes in data['shapes']:
                    label = shapes['label']
                    if label not in self.label:
                        self.categories.append(self.categorie(label))
                        self.label.append(label)
                    points = shapes['points']
                    if len(points) == 2:
                        # Rectangle annotations store only two opposite corners.
                        # Expand them to four corners listed in order around the
                        # rectangle, so the polygon does not cross itself.
                        (x1, y1), (x2, y2) = points
                        points = [[x1, y1], [x2, y1], [x2, y2], [x1, y2]]
                    self.annotations.append(self.annotation(points, label, num))
                    self.annID += 1

        def image(self, data, num):
            # Height and width are read from the JSON fields instead of decoding the image.
            image = {}
            height = data['imageHeight']
            width = data['imageWidth']
            image['height'] = height
            image['width'] = width
            image['id'] = num + 1
            image['file_name'] = data['imagePath'].split('/')[-1]
            self.height = height
            self.width = width
            return image

        def categorie(self, label):
            categorie = {}
            categorie['supercategory'] = 'Cancer'
            categorie['id'] = len(self.label) + 1  # 0 is reserved for the background
            categorie['name'] = label
            return categorie

        def annotation(self, points, label, num):
            annotation = {}
            annotation['segmentation'] = [list(np.asarray(points).flatten())]
            annotation['iscrowd'] = 0
            annotation['image_id'] = num + 1
            annotation['bbox'] = list(map(float, self.getbbox(points)))
            annotation['area'] = annotation['bbox'][2] * annotation['bbox'][3]
            annotation['category_id'] = self.getcatid(label)  # note: the original source used a fixed 1
            annotation['id'] = self.annID
            return annotation

        def getcatid(self, label):
            for categorie in self.categories:
                if label == categorie['name']:
                    return categorie['id']
            return 1

        def getbbox(self, points):
            polygons = points
            mask = self.polygons_to_mask([self.height, self.width], polygons)
            return self.mask2box(mask)

        def mask2box(self, mask):
            '''Compute the bounding box of a mask.
            mask: [h, w] image of 0s and 1s, where 1 marks the object. The box
            follows from the smallest and largest row and column indices of the 1s.
            '''
            index = np.argwhere(mask == 1)
            rows = index[:, 0]
            cols = index[:, 1]
            # top-left corner
            left_top_r = np.min(rows)  # y
            left_top_c = np.min(cols)  # x
            # bottom-right corner
            right_bottom_r = np.max(rows)
            right_bottom_c = np.max(cols)
            # [x1, y1, w, h], which is the COCO bbox format
            return [left_top_c, left_top_r,
                    right_bottom_c - left_top_c, right_bottom_r - left_top_r]

        def polygons_to_mask(self, img_shape, polygons):
            mask = np.zeros(img_shape, dtype=np.uint8)
            mask = PIL.Image.fromarray(mask)
            xy = list(map(tuple, polygons))
            PIL.ImageDraw.Draw(mask).polygon(xy=xy, outline=1, fill=1)
            mask = np.array(mask, dtype=bool)
            return mask

        def data2coco(self):
            data_coco = {}
            data_coco['images'] = self.images
            data_coco['categories'] = self.categories
            data_coco['annotations'] = self.annotations
            return data_coco

        def save_json(self):
            self.data_transfer()
            self.data_coco = self.data2coco()
            # Save the JSON file; indent=4 makes the output easier to read.
            with open(self.save_json_path, 'w') as fp:
                json.dump(self.data_coco, fp, indent=4, cls=MyEncoder)


    if __name__ == '__main__':
        # argv[1]: folder containing the labelme JSON files
        # argv[2]: path of the COCO JSON file to write
        src_folder = os.path.abspath(sys.argv[1])
        labelme_json = glob.glob(src_folder + '/*.json')
        labelme2coco(labelme_json, sys.argv[2])

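This script only ran successfully once all the modules it needs were installed in the Anaconda virtual environment where labelme had been installed, and the script itself must also be run inside that labelme environment.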
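Before training, it can help to check that the generated file is internally consistent. The following is a minimal sketch of such a check, not part of the original workflow: the function name check_coco and the sample data are hypothetical, and it only looks at the fields the converter above writes (images, categories, annotations with bbox in [x, y, w, h] form and polygon segmentation).

```python
def check_coco(coco):
    """Return a list of problems found in a COCO-style dict (empty if none)."""
    problems = []
    image_ids = {img["id"] for img in coco["images"]}
    category_ids = {cat["id"] for cat in coco["categories"]}
    seen = set()
    for ann in coco["annotations"]:
        tag = f"annotation {ann['id']}"
        if ann["id"] in seen:
            problems.append(f"{tag}: duplicate id")
        seen.add(ann["id"])
        if ann["image_id"] not in image_ids:
            problems.append(f"{tag}: unknown image_id {ann['image_id']}")
        if ann["category_id"] not in category_ids:
            problems.append(f"{tag}: unknown category_id {ann['category_id']}")
        _, _, w, h = ann["bbox"]  # COCO bbox is [x, y, width, height]
        if w <= 0 or h <= 0:
            problems.append(f"{tag}: empty bbox")
        for polygon in ann["segmentation"]:
            # a polygon is a flat [x1, y1, x2, y2, ...] list with at least 3 points
            if len(polygon) < 6 or len(polygon) % 2 != 0:
                problems.append(f"{tag}: malformed polygon")
    return problems


# Hand-made sample: the first annotation is valid, the second is deliberately broken
sample = {
    "images": [{"id": 1, "file_name": "a.png", "height": 100, "width": 200}],
    "categories": [{"id": 1, "name": "cla_a", "supercategory": "Cancer"}],
    "annotations": [
        {"id": 1, "image_id": 1, "category_id": 1, "iscrowd": 0,
         "bbox": [10.0, 20.0, 30.0, 40.0], "area": 1200.0,
         "segmentation": [[10, 20, 40, 20, 40, 60, 10, 60]]},
        {"id": 2, "image_id": 7, "category_id": 1, "iscrowd": 0,
         "bbox": [5.0, 5.0, 0.0, 8.0], "area": 0.0,
         "segmentation": [[5, 5, 5, 13]]},
    ],
}
for problem in check_coco(sample):
    print(problem)
```

For a real file, load it with json.load and pass the resulting dict to check_coco.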

II. Environment setup (Linux)

1. Create the PyTorch environment

    conda create --name maskrcnn_benchmark
    source activate maskrcnn_benchmark  # install every package inside this virtual environment
    conda install ipython
    pip install ninja yacs cython matplotlib pyqt5
    conda install pytorch-nightly torchvision=0.2.1 cudatoolkit=9.0

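After the steps above, torch 1.0.1 still has to be installed offline. Because of network restrictions, downloading torch online is difficult, and the domestic mirrors do not carry version 1.0.1. After a long period of trial and error, I found that torch 1.0.1 together with torchvision 0.2.1 and numpy 1.17 is the combination that works. The download link for torch 1.0.1 is http://download.pytorch.org/whl/cu100/torch-1.0.1-cp36-cp36m-linux_x86_64.whl and I recommend downloading it directly with Xunlei (Thunder). Once the download finishes, cd to the directory containing the file and run pip install torch-1.0.1-cp36-cp36m-linux_x86_64.whl. (My Python version is 3.6; adjust the download link to match your own version.)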
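The cp36-cp36m part of the wheel name encodes the Python version, which is what has to change for another interpreter. As a small sketch, assuming Python 3 on 64-bit Linux, the helper below (wheel_filename is a hypothetical name) derives the expected file name from a version number. It does not check whether such a wheel actually exists on the download server.

```python
import sys


def wheel_filename(major, minor, torch_version="1.0.1", platform="linux_x86_64"):
    """Build the wheel file name for a CPython 3 interpreter."""
    py_tag = f"cp{major}{minor}"
    # CPython 3.7 and earlier append "m" to the ABI tag; 3.8 and later do not
    abi_tag = py_tag + ("m" if (major, minor) <= (3, 7) else "")
    return f"torch-{torch_version}-{py_tag}-{abi_tag}-{platform}.whl"


print(wheel_filename(3, 6))                      # the version used in this post
print(wheel_filename(*sys.version_info[:2]))     # the interpreter running this script
```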

2. Install cocoapi and apex

    export INSTALL_DIR=$PWD

    # install pycocotools
    git clone https://github.com/cocodataset/cocoapi.git
    cd cocoapi/PythonAPI
    python setup.py build_ext install

    # install apex
    cd $INSTALL_DIR
    git clone https://github.com/NVIDIA/apex.git
    cd apex
    python setup.py install --cuda_ext --cpp_ext

3. Build the model code

    # install PyTorch Detection
    cd $INSTALL_DIR
    # maskrcnn-benchmark
    # git clone https://github.com/facebookresearch/maskrcnn-benchmark.git
    git clone https://github.com/zjhuang22/maskscoring_rcnn
    cd maskscoring_rcnn
    python setup.py build develop

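III. Preparation before training

1. Prepare the data and the pretrained models

In the downloaded maskscoring_rcnn, create a new datasets directory and place your JSON files and original images in the following structure: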

    datasets
    ├── annotations
    │   ├── coco_train.json
    │   └── coco_test.json
    ├── coco_train    # original images of the training set
    └── coco_test     # original images of the test set

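In addition, the two pretrained models R-101.pkl and R-50.pkl need to be placed in the pretrained_models directory of maskscoring_rcnn. If the server is connected to the internet, they are downloaded automatically before training starts; if it is not, download them manually and put them in pretrained_models. The author also provides Baidu Netdisk links to these models on GitHub.

2. Modify the parameters

(1) Edit the configuration files in the maskscoring_rcnn/configs directory. Choose e2e_ms_rcnn_R_50_FPN_1x.yaml as the training configuration and modify it as follows: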
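A missing folder or misnamed JSON file usually only shows up once training starts, so it can be worth checking the layout first. The sketch below is one way to do that with the standard library; the EXPECTED list mirrors the structure shown above, missing_entries is a hypothetical helper, and the demonstration runs against a temporary directory rather than a real dataset.

```python
from pathlib import Path
import tempfile

# Paths relative to the datasets directory, taken from the structure above
EXPECTED = [
    "annotations/coco_train.json",
    "annotations/coco_test.json",
    "coco_train",
    "coco_test",
]


def missing_entries(datasets_dir, expected=EXPECTED):
    """Return the expected relative paths that do not exist under datasets_dir."""
    root = Path(datasets_dir)
    return [rel for rel in expected if not (root / rel).exists()]


# Demonstration on a throwaway directory that only contains the training part
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp) / "datasets"
    (root / "annotations").mkdir(parents=True)
    (root / "annotations" / "coco_train.json").write_text("{}")
    (root / "coco_train").mkdir()
    demo_missing = missing_entries(root)

print(demo_missing)
```

On a real checkout, calling missing_entries("datasets") from the maskscoring_rcnn directory should return an empty list.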

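    MODEL:
      META_ARCHITECTURE: "GeneralizedRCNN"
      WEIGHT: "catalog://ImageNetPretrained/MSRA/R-50"
      PRETRAINED_MODELS: 'pretrained_models'
    DATASETS:
      TRAIN: ("coco_train_xxx",)  # 1. training and validation sets; the names are arbitrary as long as they match the other configuration files
      TEST: ("coco_val_xxx",)
    # ... (several lines omitted)
    SOLVER:
      BASE_LR: 0.002  # base learning rate; originally 0.02
      WEIGHT_DECAY: 0.0001
      STEPS: (60000, 80000)
      MAX_ITER: 5000  # 2. maximum number of iterations; adjust to the number of images, a smaller value also gives results sooner; originally 90000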
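To choose these values less arbitrarily, it helps to translate them into epochs and to see how the learning rate relates to the batch size. The sketch below is illustrative arithmetic only: the dataset size of 200 images is a made-up example, and the linear scaling rule (learning rate proportional to batch size) is a common heuristic rather than something this post prescribes. The reference values 0.02 and 16 are the original BASE_LR and the default batch size quoted in this post.

```python
def epochs_covered(max_iter, ims_per_batch, num_images):
    """How many passes over the dataset a training run makes."""
    return max_iter * ims_per_batch / num_images


def scaled_lr(ims_per_batch, ref_lr=0.02, ref_batch=16):
    """Linear scaling heuristic: keep lr / batch size constant."""
    return ref_lr * ims_per_batch / ref_batch


def reachable_steps(steps, max_iter):
    """Learning-rate decay steps that are actually reached before training stops."""
    return [s for s in steps if s < max_iter]


# Hypothetical dataset of 200 training images, batch size 1
print(epochs_covered(max_iter=5000, ims_per_batch=1, num_images=200))
print(scaled_lr(ims_per_batch=1))
print(reachable_steps((60000, 80000), max_iter=5000))
```

With MAX_ITER lowered to 5000 and STEPS left at (60000, 80000), the last call returns an empty list, meaning the learning rate is never decayed during the run; whether to lower STEPS as well is a tuning decision.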

(2) Edit the paths_catalog.py file in maskscoring_rcnn/maskrcnn_benchmark/config:

    DATASETS = {
        "coco_2014_train": (
            "coco/train2014",
            "coco/annotations/instances_train2014.json",
        ),
        "coco_2014_val": ("coco/val2014", "coco/annotations/instances_val2014.json"),
        "coco_2014_minival": (
            "coco/val2014",
            "coco/annotations/instances_minival2014.json",
        ),
        "coco_2014_valminusminival": (
            "coco/val2014",
            "coco/annotations/instances_valminusminival2014.json",
        ),
        # Add the path information of your own dataset: two extra lines after the
        # existing entries are enough. The folder and file names must match what
        # you actually placed in the datasets directory.
        "coco_train_xxx": ("coco_mydata_train", "annotations/coco_mydata_train.json"),
        "coco_val_xxx": ("coco_mydata_test", "annotations/coco_mydata_test.json"),
    }
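The names in the YAML file and the entries in the catalog have to line up. The following sketch shows the lookup in simplified form. It assumes, as in maskrcnn-benchmark, that each entry is joined onto a data root called datasets; the resolve and unregistered helpers are hypothetical and are not the repository's own code.

```python
import os

DATA_DIR = "datasets"  # assumption: the data root used by the catalog

DATASETS = {
    "coco_train_xxx": ("coco_mydata_train", "annotations/coco_mydata_train.json"),
    "coco_val_xxx": ("coco_mydata_test", "annotations/coco_mydata_test.json"),
}


def resolve(name, data_dir=DATA_DIR, datasets=DATASETS):
    """Return (image folder, annotation file) for a registered dataset name."""
    if name not in datasets:
        raise KeyError(f"dataset {name!r} is not registered in the catalog")
    img_dir, ann_file = datasets[name]
    return os.path.join(data_dir, img_dir), os.path.join(data_dir, ann_file)


def unregistered(names, datasets=DATASETS):
    """Names used in the YAML config that have no catalog entry."""
    return [n for n in names if n not in datasets]


# Names as they appear under DATASETS.TRAIN / DATASETS.TEST in the YAML file
print(resolve("coco_train_xxx"))
print(unregistered(["coco_train_xxx", "coco_val_xxx", "coco_typo"]))
```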

(3) Edit the defaults.py configuration file in maskscoring_rcnn/maskrcnn_benchmark/config:

    _C.MODEL.ROI_BOX_HEAD.NUM_CLASSES = 3  # 1. number of classes; COCO uses 81 (80 + 1), where the extra 1 is the background
    _C.SOLVER.BASE_LR = 0.005              # 2. learning rate; default 0.001
    _C.SOLVER.CHECKPOINT_PERIOD = 1000     # 3. how often (in iterations) a checkpoint is saved; set as needed
    _C.SOLVER.IMS_PER_BATCH = 1            # 4. training batch size; default 16
    _C.TEST.IMS_PER_BATCH = 1              # 5. test batch size; default 8
    _C.OUTPUT_DIR = "weights/"             # 6. directory where the model is saved (your own folder)
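The value for NUM_CLASSES can be derived from the COCO annotation file instead of being counted by hand. A minimal sketch, with a hypothetical helper name and an inline sample in place of a real file:

```python
def num_classes_with_background(coco):
    """Number of distinct category names plus one for the background class."""
    names = {cat["name"] for cat in coco["categories"]}
    return len(names) + 1


# Inline sample with two categories; a real dict would come from json.load
coco = {
    "categories": [
        {"id": 1, "name": "cla_a", "supercategory": "Cancer"},
        {"id": 2, "name": "cla_b", "supercategory": "Cancer"},
    ]
}
print(num_classes_with_background(coco))
```

Two annotated categories therefore correspond to NUM_CLASSES = 3.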

IV. Start training

From the maskscoring_rcnn directory, run:

    python tools/train_net.py --config-file configs/e2e_ms_rcnn_R_50_FPN_1x.yaml

    python tools/test_net.py --config-file configs/e2e_ms_rcnn_R_50_FPN_1x.yaml

The training log can be found in the models directory.

V. Prediction with the trained model

1. In the corresponding YAML file under maskscoring_rcnn/configs, change the weight path.

    MODEL:
      META_ARCHITECTURE: "GeneralizedRCNN"
      WEIGHT: "weights/model_0005000.pth"  # path to the trained model
      BACKBONE:
        CONV_BODY: "R-50-FPN"
        OUT_CHANNELS: 256
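When several checkpoints have been saved, the one with the highest iteration number is usually the one to point WEIGHT at. The sketch below picks it from a list of file names, assuming the model_<iteration>.pth naming seen in the path above; latest_checkpoint is a hypothetical helper and the file list is made up.

```python
import re

# Matches names such as model_0005000.pth and captures the iteration number
CHECKPOINT_RE = re.compile(r"^model_(\d+)\.pth$")


def latest_checkpoint(filenames):
    """Return the checkpoint name with the largest iteration, or None if there is none."""
    best = None
    for name in filenames:
        match = CHECKPOINT_RE.match(name)
        if match:
            iteration = int(match.group(1))
            if best is None or iteration > best[0]:
                best = (iteration, name)
    return best[1] if best else None


# Made-up directory listing
names = ["model_0001000.pth", "model_0005000.pth", "log.txt", "model_0003000.pth"]
print(latest_checkpoint(names))
```

For a real run, pass os.listdir("weights") (or whichever OUTPUT_DIR was configured) instead of the made-up list.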

2. Edit predictor.py under maskscoring_rcnn/demo to add the class information. This file does not exist in the original demo directory; copy it over from the demo directory of maskrcnn-benchmark.

    class COCODemo(object):
        # COCO categories for pretty print
        CATEGORIES = [
            "__background",
            "cla_a",  # change the class names to match your own dataset
            "cla_b",
            "cla_c",
        ]
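The predicted label ids index into this list, with 0 being the background, so the list should contain one entry per class plus the background. The sketch below illustrates the lookup with plain Python values; label_name and describe are hypothetical helpers, and the labels and scores are made-up numbers rather than model output.

```python
CATEGORIES = ["__background", "cla_a", "cla_b", "cla_c"]


def label_name(label_id, categories=CATEGORIES):
    """Map a predicted label id to its class name, guarding against bad ids."""
    if not 0 <= label_id < len(categories):
        return f"unknown({label_id})"
    return categories[label_id]


def describe(labels, scores, categories=CATEGORIES):
    """Format each detection as 'name: score'."""
    return [f"{label_name(l, categories)}: {s:.2f}" for l, s in zip(labels, scores)]


# Made-up detections: label ids and confidence scores
print(describe([1, 3, 7], [0.91, 0.5, 0.66]))
```

An "unknown" entry in the output is a sign that CATEGORIES and NUM_CLASSES do not agree.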

3. Create predict.py under maskscoring_rcnn/demo for prediction.

    #!/usr/bin/env python
    # coding=UTF-8
    import os

    import cv2

    from maskrcnn_benchmark.config import cfg
    from predictor import COCODemo

    # 1. The modified configuration file
    config_file = "configs/e2e_ms_rcnn_R_50_FPN_1x.yaml"

    # 2. Configuration
    cfg.merge_from_file(config_file)               # merge the configuration file
    cfg.merge_from_list(["MODEL.MASK_ON", True])   # enable mask output
    cfg.merge_from_list(["MODEL.DEVICE", "cuda"])  # or run on the CPU: ["MODEL.DEVICE", "cpu"]

    coco_demo = COCODemo(
        cfg,
        min_image_size=800,
        confidence_threshold=0.5,  # 3. confidence threshold
    )

    if __name__ == '__main__':
        in_folder = './datasets/test_images/'
        out_folder = './datasets/test_images_out/'

        if not os.path.exists(out_folder):
            os.makedirs(out_folder)

        for file_name in os.listdir(in_folder):
            if not file_name.endswith(('jpg', 'png')):
                continue

            # load file
            img_path = os.path.join(in_folder, file_name)
            image = cv2.imread(img_path)

            # Method 1: get the rendered OpenCV image directly
            # predictions = coco_demo.run_on_opencv_image(image)
            # save_path = os.path.join(out_folder, file_name)
            # cv2.imwrite(save_path, predictions)

            # Method 2: get the raw predictions
            predictions = coco_demo.compute_prediction(image)
            top_predictions = coco_demo.select_top_predictions(predictions)

            # draw; masks use top_predictions as well, so that only detections
            # above the confidence threshold are rendered
            img = coco_demo.overlay_boxes(image, top_predictions)
            img = coco_demo.overlay_mask(img, top_predictions)
            img = coco_demo.overlay_class_names(img, top_predictions)
            save_path = os.path.join(out_folder, file_name)
            cv2.imwrite(save_path, img)

            # print results
            boxes = top_predictions.bbox.numpy()
            labels = top_predictions.get_field("labels").numpy()
            scores = top_predictions.get_field("scores").numpy()
            masks = top_predictions.get_field("mask").numpy()

            for i in range(len(boxes)):
                print('box:', i, ' label:', labels[i])
                x1, y1, x2, y2 = [int(round(float(x))) for x in boxes[i]]
                print('x1,y1,x2,y2:', x1, y1, x2, y2)

4. Run the program.

    python demo/predict.py

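While it runs, errors may be reported about files that cannot be found or libraries that cannot be imported. In that case, copy the corresponding files over from the matching folder of maskrcnn-benchmark. For the detailed steps, see: https://www.cnblogs.com/littleLittleTiger/p/12582747.html

A screenshot of a successful run is shown below.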