Environment preparation

The Kubernetes and Ceph environments used in this post are described here:

https://blog.51cto.com/leejia/2495558

https://blog.51cto.com/leejia/2499684

Introduction to ECK

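Elastic Cloud on Kubernetes (ECK) is an orchestration product built on the Kubernetes Operator pattern. It lets users provision, manage, and run Elasticsearch clusters on Kubernetes. The vision of ECK is to provide a SaaS-like experience for Elastic products and solutions on Kubernetes.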

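ECK is built on the Kubernetes Operator pattern and must be installed inside the Kubernetes cluster. It handles deployment, and it focuses even more on simplifying all of the day-2 operations: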

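- Managing and monitoring multiple clusters
- Upgrading to new versions with ease
- Scaling cluster capacity up or down
- Changing cluster configuration
- Dynamically scaling local storage
- Backups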

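Kubernetes currently leads the container orchestration field. By releasing ECK, the Elastic community makes it easier to run Elasticsearch in the cloud. This also contributes to the cloud-native ecosystem and keeps pace with the industry.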

Deploying ECK

Deploy ECK and check that the operator logs look normal:

```
# kubectl apply -f https://download.elastic.co/downloads/eck/1.1.2/all-in-one.yaml
# kubectl -n elastic-system logs -f statefulset.apps/elastic-operator
```
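Instead of waiting a fixed few minutes, you can also check readiness from a script. The sketch below is an illustration and not part of ECK. wait_for_operator wraps kubectl rollout status for the elastic-operator StatefulSet, and its 300s timeout is an arbitrary choice. pods_all_ready is a hypothetical helper that reads kubectl get pods --no-headers output and succeeds only when every pod is Running with all of its containers ready.

```shell
# Hypothetical helpers, meant to be sourced into an interactive shell.

# Blocks until the elastic-operator StatefulSet has finished rolling out.
# The 300s timeout is an arbitrary choice for this sketch.
wait_for_operator() {
  kubectl -n elastic-system rollout status statefulset/elastic-operator --timeout=300s
}

# Reads `kubectl get pods --no-headers` output on stdin. Succeeds only if
# there is at least one pod and every pod is Running with READY equal to N/N.
pods_all_ready() {
  awk '
    {
      n++
      split($2, ready, "/")
      if ($3 != "Running" || ready[1] != ready[2]) bad = 1
    }
    END { if (n == 0 || bad) exit 1; exit 0 }
  '
}
```

For example: kubectl get pods -n elastic-system --no-headers | pods_all_ready && echo "operator ready".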

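After a few minutes, check that elastic-operator is running properly. ECK consists of a single pod, elastic-operator:

```
# kubectl get pods -n elastic-system
NAME                 READY   STATUS    RESTARTS   AGE
elastic-operator-0   1/1     Running   1          2m55s
```

Deploying an Elasticsearch cluster backed by Ceph persistent storage with ECK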

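For this test we deploy the cluster with one master node and one data node. For production, three or more master nodes are recommended. The manifest below configures three things for each instance: the JVM heap size, the memory available to the container, and the host's virtual memory setting (vm.max_map_count). Adjust them to suit your cluster: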

```
# vim es.yaml
apiVersion: elasticsearch.k8s.elastic.co/v1
kind: Elasticsearch
metadata:
  name: quickstart
spec:
  version: 7.7.1
  nodeSets:
  - name: master-nodes
    count: 1
    config:
      node.master: true
      node.data: false
    podTemplate:
      spec:
        initContainers:
        - name: sysctl
          securityContext:
            privileged: true
          command: ['sh', '-c', 'sysctl -w vm.max_map_count=262144']
        containers:
        - name: elasticsearch
          env:
          - name: ES_JAVA_OPTS
            value: -Xms1g -Xmx1g
          resources:
            requests:
              memory: 2Gi
            limits:
              memory: 2Gi
    volumeClaimTemplates:
    - metadata:
        name: elasticsearch-data
      spec:
        accessModes:
        - ReadWriteOnce
        resources:
          requests:
            storage: 5Gi
        storageClassName: rook-ceph-block
  - name: data-nodes
    count: 1
    config:
      node.master: false
      node.data: true
    podTemplate:
      spec:
        initContainers:
        - name: sysctl
          securityContext:
            privileged: true
          command: ['sh', '-c', 'sysctl -w vm.max_map_count=262144']
        containers:
        - name: elasticsearch
          env:
          - name: ES_JAVA_OPTS
            value: -Xms1g -Xmx1g
          resources:
            requests:
              memory: 2Gi
            limits:
              memory: 2Gi
    volumeClaimTemplates:
    - metadata:
        name: elasticsearch-data
      spec:
        accessModes:
        - ReadWriteOnce
        resources:
          requests:
            storage: 10Gi
        storageClassName: rook-ceph-block
```
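The manifest gives each Elasticsearch container a 2Gi memory limit and a 1g heap, which is half of the container memory. This follows Elastic's general guidance to keep the heap at no more than 50% of available memory. The init container raises vm.max_map_count to 262144, the minimum Elasticsearch expects on production hosts. As a sketch with hypothetical helper names, the two rules can be written as:

```shell
# Hypothetical helpers illustrating the sizing rules used in es.yaml.

# Prints ES_JAVA_OPTS for a container memory limit given in MiB,
# using half of the limit as both the initial and the maximum heap.
heap_opts_for_limit_mi() {
  local limit_mi=$1
  local heap_mi=$(( limit_mi / 2 ))
  printf -- '-Xms%dm -Xmx%dm\n' "$heap_mi" "$heap_mi"
}

# Succeeds if the given vm.max_map_count value is at least 262144.
max_map_count_ok() {
  [ "$1" -ge 262144 ]
}
```

A 2Gi limit is 2048 MiB, so heap_opts_for_limit_mi 2048 yields the same 1 GiB heap as the manifest. On a node, max_map_count_ok "$(sysctl -n vm.max_map_count)" reports whether the init container's setting is in effect.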

```
# kubectl apply -f es.yaml
```

After a while, check the status of the Elasticsearch cluster:

```
# kubectl get pods
quickstart-es-data-nodes-0     1/1   Running   0     54s
quickstart-es-master-nodes-0   1/1   Running   0     54s

# kubectl get elasticsearch
NAME         HEALTH   NODES   VERSION   PHASE   AGE
quickstart   green    2       7.7.1     Ready   73s
```

Check the PV status. The requested PVs have been created and bound successfully:

```
# kubectl get pv
pvc-512cc739-3654-41f4-8339-49a44a093ecf   10Gi   RWO   Retain   Bound   default/elasticsearch-data-quickstart-es-data-nodes-0     rook-ceph-block   9m5s
pvc-eff8e0fd-f669-448a-8b9f-05b2d7e06220   5Gi    RWO   Retain   Bound   default/elasticsearch-data-quickstart-es-master-nodes-0   rook-ceph-block   9m5s
```

By default, the cluster has basic authentication enabled. The user name is elastic, and the password can be read from a Secret. HTTPS with a self-signed certificate is also enabled by default. Elasticsearch can be reached through its Service:

```
# kubectl get services
NAME                         TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)    AGE
quickstart-es-data-nodes     ClusterIP   None             <none>        <none>     4m10s
quickstart-es-http           ClusterIP   10.107.201.126   <none>        9200/TCP   4m11s
quickstart-es-master-nodes   ClusterIP   None             <none>        <none>     4m10s
quickstart-es-transport      ClusterIP   None             <none>        9300/TCP   4m11s

# kubectl get secret quickstart-es-elastic-user -o=jsonpath='{.data.elastic}' | base64 --decode; echo
```
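The password lookup and the HTTP Service address can be wrapped in small helpers. This is a sketch under stated assumptions:

- The function names are hypothetical.
- The helpers rely on the ECK naming convention visible above: NAME-es-http for the HTTP Service and NAME-es-elastic-user for the Secret.
- The .svc DNS name only resolves from inside the cluster. From a node, use the ClusterIP, as the curl command that follows does.
- Passing -k skips verification of the self-signed certificate, which is only acceptable for testing.

```shell
# Hypothetical helpers; they assume ECK's naming convention for a cluster NAME:
#   NAME-es-http          HTTP Service on port 9200
#   NAME-es-elastic-user  Secret holding the elastic user's password

# Prints the in-cluster HTTPS URL of the cluster's HTTP Service.
es_http_url() {
  local name=$1 namespace=${2:-default}
  printf 'https://%s-es-http.%s.svc:9200\n' "$name" "$namespace"
}

# Decodes a base64 string such as the Secret's .data.elastic field.
decode_b64() {
  printf '%s' "$1" | base64 --decode
}

# Reads and decodes the elastic user's password from the Secret.
es_elastic_password() {
  local name=$1 namespace=${2:-default}
  decode_b64 "$(kubectl -n "$namespace" get secret "${name}-es-elastic-user" \
    -o=jsonpath='{.data.elastic}')"
}

# Sends a GET request to PATH (default /) as the elastic user.
# -k skips verification of the self-signed certificate: testing only.
es_get() {
  local name=$1 namespace=${2:-default} path=${3:-/}
  curl -sk -u "elastic:$(es_elastic_password "$name" "$namespace")" \
    "$(es_http_url "$name" "$namespace")${path}"
}
```

From a pod inside the cluster, es_get quickstart default /_cluster/health would query cluster health through the Service name rather than a ClusterIP.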

```
# curl https://10.107.201.126:9200 -u 'elastic:J1fO9bu88j8pYK8rIu91a73o' -k
{
  "name" : "quickstart-es-data-nodes-0",
  "cluster_name" : "quickstart",
  "cluster_uuid" : "AQxFX8NiTNa40mOPapzNXQ",
  "version" : {
    "number" : "7.7.1",
    "build_flavor" : "default",
    "build_type" : "docker",
    "build_hash" : "ad56dce891c901a492bb1ee393f12dfff473a423",
    "build_date" : "2020-05-28T16:30:01.040088Z",
    "build_snapshot" : false,
    "lucene_version" : "8.5.1",
    "minimum_wire_compatibility_version" : "6.8.0",
    "minimum_index_compatibility_version" : "6.0.0-beta1"
  },
  "tagline" : "You Know, for Search"
}
```

To add one data node without downtime, set count under the data-nodes nodeSet in es.yaml to 2, then re-apply es.yaml:

```
# kubectl apply -f es.yaml
# kubectl get pods
quickstart-es-data-nodes-0     1/1   Running   0     24m
quickstart-es-data-nodes-1     1/1   Running   0     8m22s
quickstart-es-master-nodes-0   1/1   Running   0     24m

# kubectl get elasticsearch
NAME         HEALTH   NODES   VERSION   PHASE   AGE
quickstart   green    3       7.7.1     Ready   25m
```

To remove one data node without downtime, set count under the data-nodes nodeSet in es.yaml back to 1, then re-apply es.yaml. The data on the node being removed is migrated automatically.
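Editing es.yaml keeps the file as the source of truth. For a one-off change, the same scaling step can be sketched with kubectl patch and a JSON Patch, followed by a check of the HEALTH and NODES columns. The helper names are hypothetical. Index 1 assumes data-nodes is the second entry in spec.nodeSets, as in es.yaml above. A count changed this way is not reflected in es.yaml.

```shell
# Hypothetical helpers for scaling a nodeSet and checking the result.

# Prints a JSON Patch that sets spec.nodeSets[INDEX].count to COUNT.
nodeset_count_patch() {
  local index=$1 count=$2
  printf '[{"op":"replace","path":"/spec/nodeSets/%d/count","value":%d}]\n' "$index" "$count"
}

# Applies the patch to the Elasticsearch resource NAME.
scale_nodeset() {
  local name=$1 index=$2 count=$3
  kubectl patch elasticsearch "$name" --type=json -p "$(nodeset_count_patch "$index" "$count")"
}

# Reads `kubectl get elasticsearch --no-headers` output on stdin and succeeds
# if resource NAME reports HEALTH green with exactly NODES nodes.
es_is_green_with() {
  local name=$1 nodes=$2
  awk -v n="$name" -v want="$nodes" '
    $1 == n { found = 1; ok = ($2 == "green" && $3 == want) }
    END { exit !(found && ok) }
  '
}
```

For example, scale_nodeset quickstart 1 2 adds a data node. Afterwards, kubectl get elasticsearch --no-headers | es_is_green_with quickstart 3 succeeds once the cluster is back to green with three nodes.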

Integrating Kibana

By default, Kibana is also served over HTTPS with a self-signed certificate. We disable that here and deploy Kibana with ECK:

```
# vim kibana.yaml
apiVersion: kibana.k8s.elastic.co/v1
kind: Kibana
metadata:
  name: quickstart
spec:
  version: 7.7.1
  count: 1
  elasticsearchRef:
    name: quickstart
  http:
    tls:
      selfSignedCertificate:
        disabled: true
```
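Once kibana.yaml is applied, you can reach Kibana through a local port-forward before setting up any external proxy. ECK exposes Kibana through a Service named NAME-kb-http; here that is quickstart-kb-http, the same Service the TransportServer targets. With the self-signed certificate disabled, this Service serves plain HTTP on port 5601. A sketch with hypothetical helper names:

```shell
# Hypothetical helpers for reaching Kibana without an ingress.

# Prints the name of the Kibana HTTP Service ECK creates for Kibana NAME.
kb_service() {
  printf '%s-kb-http\n' "$1"
}

# Forwards LOCAL_PORT (default 5601) to port 5601 of the Kibana Service.
# Runs in the foreground until interrupted with Ctrl-C.
kb_port_forward() {
  local name=$1 local_port=${2:-5601}
  kubectl port-forward "service/$(kb_service "$name")" "${local_port}:5601"
}
```

After running kb_port_forward quickstart, Kibana should be reachable at http://localhost:5601.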

```
# kubectl apply -f kibana.yaml
# kubectl get pods
NAME                             READY   STATUS    RESTARTS   AGE
quickstart-es-data-nodes-0       1/1     Running   0          31m
quickstart-es-data-nodes-1       1/1     Running   1          15m
quickstart-es-master-nodes-0     1/1     Running   0          31m
quickstart-kb-6558457759-2rd7l   1/1     Running   1          4m3s

# kubectl get kibana
NAME         HEALTH   NODES   VERSION   AGE
quickstart   green    1       7.7.1     4m27s
```

Add a layer-4 (TCP) proxy for Kibana in the NGINX ingress controller to expose it outside the cluster:

```
# vim tsp-kibana.yaml
apiVersion: k8s.nginx.org/v1alpha1
kind: GlobalConfiguration
metadata:
  name: nginx-configuration
  namespace: nginx-ingress
spec:
  listeners:
  - name: kibana-tcp
    port: 5601
    protocol: TCP
---
apiVersion: k8s.nginx.org/v1alpha1
kind: TransportServer
metadata:
  name: kibana-tcp
spec:
  listener:
    name: kibana-tcp
    protocol: TCP
  upstreams:
  - name: kibana-app
    service: quickstart-kb-http
    port: 5601
  action:
    pass: kibana-app
```

```
# kubectl apply -f tsp-kibana.yaml
```

Log in to Kibana as the elastic user. Retrieve the password as follows:

```
# kubectl get secret quickstart-es-elastic-user -o=jsonpath='{.data.elastic}' | base64 --decode; echo
```

Deleting ECK resources

Delete Elasticsearch, Kibana, and ECK itself:

```
# kubectl get namespaces --no-headers -o custom-columns=:metadata.name \
  | xargs -n1 kubectl delete elastic --all -n
# kubectl delete -f https://download.elastic.co/downloads/eck/1.1.2/all-in-one.yaml
```

Integrating cerebro

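First install helm, the package manager for Kubernetes applications. Helm packages the YAML files of Kubernetes-native applications and lets you customize some of an application's metadata at deploy time. It distributes applications on Kubernetes in the form of charts. Together, helm and charts provide: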

- Application packaging
- Version management
- Dependency checking
- Application distribution

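```
# wget https://get.helm.sh/helm-v3.2.3-linux-amd64.tar.gz
# tar -zxvf helm-v3.2.3-linux-amd64.tar.gz
# mv linux-amd64/helm /usr/local/bin/helm
# helm repo add stable https://kubernetes-charts.storage.googleapis.com
```

The stable repository URL above was retired in November 2020. Its archived charts are now served from https://charts.helm.sh/stable.

Install cerebro with helm: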

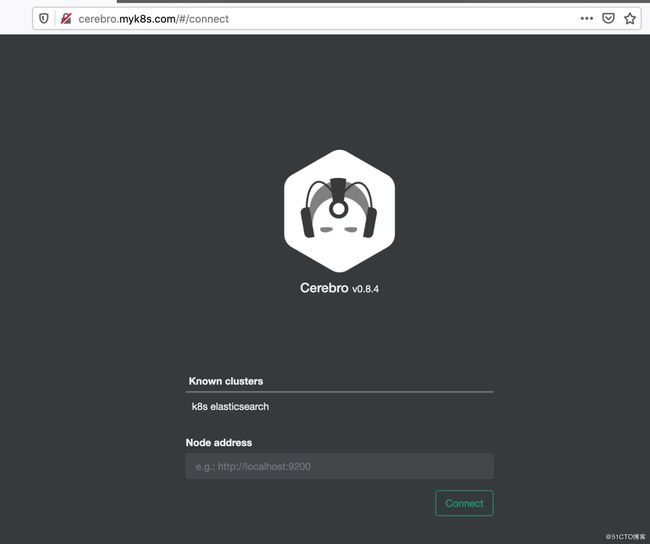

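```
# helm install stable/cerebro --version 1.1.4 --generate-name
```

Check the status of cerebro:

```
# kubectl get pods|grep cerebro
cerebro-1591777586-7fd87f7d48-hmlp7   1/1     Running   0          11m
```

The Elasticsearch cluster deployed by ECK serves HTTPS with a self-signed certificate by default. Configure cerebro to skip certificate verification, then restart cerebro. Alternatively, you can add the CA certificate behind the self-signed certificate to cerebro so that cerebro trusts it.

1. Export cerebro's ConfigMap:

```
# kubectl get configmap cerebro-1591777586 -o yaml > cerebro.yaml
```

2. In the ConfigMap, replace cerebro's hosts settings with the following (quickstart-es-http is the name of the Elasticsearch Service):

```
play.ws.ssl.loose.acceptAnyCertificate = true
hosts = [
  {
    host = "https://quickstart-es-http.default.svc:9200"
    name = "k8s elasticsearch"
  }
]
```

3. Apply the cerebro ConfigMap and restart the cerebro pod: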

```
# kubectl apply -f cerebro.yaml
# kubectl get pods|grep cerebro
cerebro-1591777586-7fd87f7d48-hmlp7   1/1     Running   0          11m
# kubectl get pod cerebro-1591777586-7fd87f7d48-hmlp7 -o yaml | kubectl replace --force -f -
```

First confirm cerebro's Service, then configure an Ingress to add a layer-7 (HTTP) proxy for cerebro:

```
# kubectl get services
NAME                 TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)   AGE
cerebro-1591777586   ClusterIP   10.111.107.171   <none>        80/TCP    19m

# vim cerebro-ingress.yaml
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: cerebro-ingress
spec:
  rules:
  - host: cerebro.myk8s.com
    http:
      paths:
      - path: /
        backend:
          serviceName: cerebro-1591777586
          servicePort: 80
```
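The manifest above uses networking.k8s.io/v1beta1, which was removed in Kubernetes 1.22. On clusters that serve networking.k8s.io/v1 (1.19 and later), the equivalent Ingress can be sketched as follows. render_cerebro_ingress is a hypothetical helper, and pathType: Prefix is an assumption chosen to match the catch-all path /.

```shell
# Hypothetical helper: prints a networking.k8s.io/v1 Ingress that routes
# HOST to SERVICE:PORT. Pipe the output to `kubectl apply -f -`.
render_cerebro_ingress() {
  local host=$1 service=$2 port=${3:-80}
  cat <<EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: cerebro-ingress
spec:
  rules:
  - host: ${host}
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: ${service}
            port:
              number: ${port}
EOF
}
```

For example: render_cerebro_ingress cerebro.myk8s.com cerebro-1591777586 | kubectl apply -f -.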

```
# kubectl apply -f cerebro-ingress.yaml
```

On your local PC, add the host binding "172.18.2.175 cerebro.myk8s.com" to /etc/hosts, then open http://cerebro.myk8s.com in a browser.
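Editing /etc/hosts by hand is fine for a one-off test. If you script this step, a hypothetical helper like the one below appends the mapping only when the host name is not already present, so re-running it does not create duplicate lines. It must run with permission to write the file, for example as root.

```shell
# Hypothetical helper: appends "IP HOST" to FILE unless HOST is already
# mapped there (comment lines are ignored).
add_host_entry() {
  local file=$1 ip=$2 host=$3
  if awk -v h="$host" '
       $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
       END { exit !found }
     ' "$file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$host" >> "$file"
}
```

In a root shell: add_host_entry /etc/hosts 172.18.2.175 cerebro.myk8s.com.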

Deleting cerebro

```
# helm list
NAME                 NAMESPACE   REVISION   UPDATED                                   STATUS     CHART           APP VERSION
cerebro-1591777586   default     1          2020-06-10 16:26:30.419723417 +0800 CST   deployed   cerebro-1.1.4   0.8.4
# helm delete cerebro-1591777586
```

References

https://www.elastic.co/guide/en/cloud-on-k8s/current/k8s-deploy-kibana.html

https://www.elastic.co/guide/en/cloud-on-k8s/current/k8s-kibana-http-configuration.html

https://www.elastic.co/guide/en/cloud-on-k8s/current/k8s-quickstart.html

https://hub.helm.sh/charts/stable/cerebro

https://www.elastic.co/cn/blog/introducing-elastic-cloud-on-kubernetes-the-elasticsearch-operator-and-beyond

https://helm.sh/docs/intro/install/