过欠拟合

1.定义

- 一类是模型无法得到较低的训练误差,我们将这一现象称作欠拟合(underfitting);

- 另一类是模型的训练误差远小于它在测试数据集上的误差,我们称该现象为过拟合(overfitting)。 在实践中,我们要尽可能同时应对欠拟合和过拟合。虽然有很多因素可能导致这两种拟合问题,在这里我们重点讨论两个因素:模型复杂度和训练数据集大小。

2.code实现

import torch

import numpy as np

import sys

sys.path.append("/home/kesci/input")

import d2lzh1981 as d2l

print(torch.__version__)

n_train,n_test,true_w,true_b = 100,100,[1.2,-3.4,5.6],5

features = torch.randn((n_train + n_test),1)

poly_features = torch.cat((features,torch.pow(features,2),torch.pow(features,3)),1)

labels = (true_w[0]*poly_features[:,0] + true_w[1] * poly_features[:,1] + true_w[2]*poly_features[:,2] + true_b)

labels += torch.tensor(np.random.normal(0,0.01,size=labels.size()),dtype = torch.float)

features,poly_features[:2],labels[:2]

def semilogy(x_vals,y_vals,x_label,y_label,x2_vals= None,y2_vals = None,legend = None,figsize = (3.5,2.5)):

d2l.plt.xlabel(x_label)

d2l.plt.ylabel(y_label)

d2l.plt.semilogy(x_vals,y_vals)

if x2_vals and y2_vals:

d2l.plt.semilogy(x2_vals,y_vals,linestyle=':')

d2l.plt.legend(legend)

num_epochs,loss = 100,torch.nn.MSLoss()

def fit_and_plot(train_features,test_features,train_labels,test_labels):

net = torch.nn.Linear(train_features.shape[-1],1)

batch_size = min(10,train_labels.shape[0])

dataset = torch.utils.data.TensorDataset(train_features,train_labels)

train_iter = torch.untils.data.DataLoader(dataset,batch_size,shuffle=True)

optimizer = torch.optim.SGD(net.parameters(),lr=0.01)

train_ls,test_ls = [],[]

for _ in range(num_epochs):

for X,y in train_iter:

l = loss(net(X),y.view(-1,1))

optimizer.zero_grad()

l.backward()

optimizer.step()

train_labels = train_labels.view(-1,1)

test_labels = test_labels.view(-1,1)

train_ls.append(loss(net(train_features),train_labels).item())

test_ls.append(loss(net(test_features), test_labels).item())

print('final epoch: train loss', train_ls[-1], 'test loss', test_ls[-1])

semilogy(range(1, num_epochs + 1), train_ls, 'epochs', 'loss',

range(1, num_epochs + 1), test_ls, ['train', 'test'])

print('weight:', net.weight.data,

'\nbias:', net.bias.data)

fit_and_plot(poly_features[:n_train, :], poly_features[n_train:, :], labels[:n_train], labels[n_train:])

fit_and_plot(features[:n_train, :], features[n_train:, :], labels[:n_train], labels[n_train:])

fit_and_plot(poly_features[0:2, :], poly_features[n_train:, :], labels[0:2], labels[n_train:])

n_train,n_test,num_inputs = 20,100,200

true_w,true_b = torch.ones(num_inputs,1)* 0.01,0.05

features = torch.randn((n_train+ n_test,num_inputs))

labels = torch.matmul(features,true_w) + true_b

labels += torch.tensor(np.random.normal(0,0.01,size=labels.size()),dtype=torch.float)

train_features,test_features = features[:n_train,:],features[n_train:,:]

train_labels,test_labels = labels[:n_train],labels[n_train:]

def init_params():

w = torch.randn((num_inputs,1),requires_grad=True)

b = torch.zeros(1,requires_grad = True)

return [w,b]

def l2_penalty(w):

return (w**2).sum() / 2

batch_size,num_epochs,lr = 1,100,0.003

net,loss = d2l.linreg,d2l.squared_loss

dataset = torch.untils.data.TensorDaraset(train_features,train_labels)

train_iter = torch.utils.data.DataLoader(dataset,batch_size,shuffle=True)

def fit_and_plot(lambd):

w,b = init_params()

train_ls,test_ls = [],[]

for _ in range(num_epochs):

for X,y in train_iter:

l = loss(net(X,w,b),y) + lambd *l2_penalty(w)

l = l.sum()

if w.grad is not None:

w.grad.data.zero_()

b.grad.data.zero_()

l.backward()

d2l.sgd([w,b],lr,batch_size)

train_ls.append(loss(net(train_features,w,b),train_labels).mean().item())

test_ls.append(loss(net(test_features,w,b),test_labels).mean().item())

d2l.semilogy(range(1, num_epochs + 1), train_ls, 'epochs', 'loss',

range(1, num_epochs + 1), test_ls, ['train', 'test'])

print('L2 norm of w:',w.norm().item())

fit_and_plot(lambd = 0)

fit_and_plot(lambd = 3)

def fit_and_plot_pytorch(wd):

net = nn.Linear(num_inputs,1)

nn.init.normal_(net.weight,mean =0,std = 1)

nn.init.normal_(net.bias,mean=0,std =1)

optimizer_w = torch.optim.SGD(params = [net.weight],lr = lr,weight_decay=wd)

optimizer_b = torch.optim.SGD(params=[net.bias],lr= lr)

train_ls,test_ls = [],[]

for _ in range(num_epochs):

for X,y in train_iter:

l = loss(net(X),y).mean()

optimizer_w.zero_grad()

optimizer_b.zero_grad()

l.backward()

optimizer_w.step()

optimizer_b.step()

train_ls.append(loss(net(train_features),train_labels).mean().item())

test_ls.append(loss(net(test_features),test_labels).mean().item())

d2l.semilogy(range(1,num_epochs + 1),train_ls,'epochs','loss',

range(1,num_epochs + 1),test_ls,['train','test'])

print('L2 norm of w:',net.weight.data.norm().item())

fit_and_plot_pytorch(0)

fit_and_plot_pytorch(3)

def dropout(X,drop_prob):

X = X.float()

assert 0<= drop_prob <= 1

keep_prob = 1 - drop_prob

if keep_prob == 0:

return torch.zeros_like(X)

mask = (torch.rand(X.shape) < keep_prob).float()

return mask** X / keep_prob

X = torch.arange(16).view(2,8)

dropout(X,0)

dropout(X,0.5)

dropout(X,1.0)

num_inputs, num_outputs, num_hiddens1, num_hiddens2 = 784, 10, 256, 256

W1 = torch.tensor(np.random.normal(0, 0.01, size=(num_inputs, num_hiddens1)), dtype=torch.float, requires_grad=True)

b1 = torch.zeros(num_hiddens1, requires_grad=True)

W2 = torch.tensor(np.random.normal(0, 0.01, size=(num_hiddens1, num_hiddens2)), dtype=torch.float, requires_grad=True)

b2 = torch.zeros(num_hiddens2, requires_grad=True)

W3 = torch.tensor(np.random.normal(0, 0.01, size=(num_hiddens2, num_outputs)), dtype=torch.float, requires_grad=True)

b3 = torch.zeros(num_outputs, requires_grad=True)

params = [W1, b1, W2, b2, W3, b3]

drop_prob1,drop_prob2 = 0.2,0.5

def net(X,is_training = True):

X = X.view(-1,num_inputs)

H1 = (torch.matmul(X,W1)+ b1).relu()

if is_training:

H1 = dropout(H1,drop_prob1)

H2 = (torch.matmul(H1,W2) + b2).relu()

if is_training:

H2 = dropout(H2,drop_prob2)

return torch.matmul(H2,W3)+ b3

def evaluate_accuracy(data_iter,net):

acc_sum,n = 0.0,0

for X,y in data_iter:

if isinstance(net,torch.nn.Module):

net.eval()

acc_sum += (net(X).argmax(dim = 1) == y).float().sum().item()

net.train()

else:

if ('is_training' in net.__code__.co_varnames):

acc_sum += (net(X,is_training =False).argmax(dim =1) == y).float().sum().item()

else:

acc_sum += (net(X).argmax(dim=1) == y).float().sum().item()

n += y.shape[0]

return acc_sum / n

num_epochs,lr,batch_size = 5,100,256

loss = torch.nn.CrossEntropyLoss()

train_iter,test_iter = d2l.load_data_fashion_mnist(batch_size,root = '/home/kesci/input/FashionMNIST2065')

d2l.train_ch3(net,

train_iter,

test_iter,

loss,

num_epochs,

batch_size,

params,

lr)

net = nn.Sequential(

d2l.FlattenLayer(),

nn.Linear(num_inputs,num_hiddens1),

nn.ReLU(),

nn.Dropout(drop_prob1),

nn.Linear(num_hiddens1,num_hiddens2),

nn.ReLU(),

nn.Dropout(drop_prob2),

nn.Linear(num_hiddens2,10)

)

for param in net.parameters():

nn.init.normal_(param, mean=0, std=0.01)

optimizer = torch.optim.SGD(net.parameters(), lr=0.5)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size, None, None, optimizer)

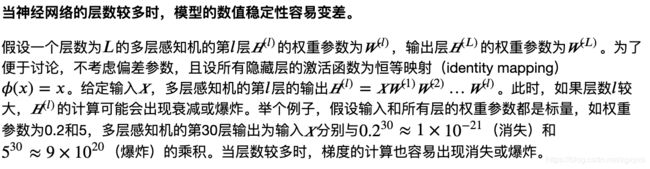

梯度爆炸与梯度消失

1.产生原因

2.code实现

import torch

import torch.nn as nn

import numpy as np

import pandas as pd

import sys

sys.path.append('/home/kesci/input')

import d2lzh1981 as d2l

print(torch.__version__)

torch.set_default_tensor_type(torch.FloatTensor)

test_data = pd.read_csv("/home/kesci/input/houseprices2807/house-prices-advanced-regression-techniques/test.csv")

train_data = pd.read_csv("/home/kesci/input/houseprices2807/house-prices-advanced-regression-techniques/train.csv")

all_features = pd.concat((train_data.iloc[:,1:-1],test_data.iloc[:,1:]))

numeric_features = all_features.dtypes[all_features.dtypes != 'object'].index

all_features[numeric_features] = all_features[numeric_features].apply(lambda x:(x-x.mean()) / (x.std()))

all_features[numeric_features] = all_features[numeric_features].fillna(0)

all_features = pd.get_dummies(all_features,dummy_na =True)

all_features.shape

n_train = train_data.shape[0]

train_features = torch.tensor(all_features[:n_train].values, dtype=torch.float)

test_features = torch.tensor(all_features[n_train:].values, dtype=torch.float)

train_labels = torch.tensor(train_data.SalePrice.values, dtype=torch.float).view(-1, 1)

loss = torch.nn.MSELoss()

def get_net(feature_num):

net = nn.Linear(feature_num,1)

for param in net.parameters():

nn.init.normal_(param,mean=0,std=0.01)

return net

def log_rmse(net,features,labels):

with torch.no_grad():

clipped_preds = torch.max(net(features),torch.tensor(1.0))

rmse = torch.sqrt(2*loss(clipped_preds.log(),labels.log()).mean())

return rmse.item()

def train(net,train_features,train_labels,test_features,test_labels,num_epochs,learning_rate,weight_decay,batch_size):

train_ls,test_ls = [],[]

dataset = torch.utils.data.TensorDataset(train_features,train_labels)

train_iter = torch.utils.data.DataLoader(dataset,batch_size,shuffle = True)

optimizer = torch.optim.Adam(params = net.parameters(),lr = learning_rate,weight_decay = weight_decay)

net = net.float()

for _ in range(num_epochs):

for X,y in train_iter:

l = loss(net(X.float()),y.float())

optimizer.zero_grad()

l.backward()

optimizer.step()

train_ls.append(log_rmse(net,train_features,train_labels))

if test_labels is not None:

test_ls.append(log_rmse(net,test_features,test_labels))

return train_ls,test_ls

def get_k_fold_data(k,i,X,y):

assert k >1

fold_size = X.shape[0] // k

X_train,y_train = None,None

for j in range(k):

idx = slice(j * fold_size,(j+1)* fold_size)

X_part,y_part = X[idx,:],y[idx]

if j ==i:

X_valid,y_valid = X_part,y_part

elif X_train is None:

X_train,y_train = X_part,y_part

else:

X_train = torch.cat((X_train,X_part),dim =0)

y_train = torch.cat((y_train,y_part),dim =0)

return X_train,y_train,X_valid,y_valid

def k_fold(k,X_train,y_train,num_epochs,learning_rate,weight_decay,batch_size):

train_l_sum,valid_l_sum = 0,0

for i in range(k):

data = get_k_fold_data(k,i,X_train,y_train)

net = get_net(X_train.shape[1])

train_ls,valid_ls = train(net,*data,num_epochs,learning_rate,weight_decay,batch_size)

train_l_sum += train_ls[-1]

valid_l_sum += valid_ls[-1]

if i == 0:

d2l.semilogy(range(1, num_epochs + 1), train_ls, 'epochs', 'rmse',\

range(1, num_epochs + 1), valid_ls,\

['train', 'valid'])

print('fold %d, train rmse %f, valid rmse %f' % (i, train_ls[-1], valid_ls[-1]))

return train_l_sum / k,valid_l_sum /k

k, num_epochs, lr, weight_decay, batch_size = 5, 100, 5, 0, 64

train_l, valid_l = k_fold(k, train_features, train_labels, num_epochs, lr, weight_decay, batch_size)

print('%d-fold validation: avg train rmse %f, avg valid rmse %f' % (k, train_l, valid_l))

def train_and_pred(train_features,test_features,train_labels,test_data,num_epochs,lr,weight_decay,batch_size):

net = get_net(train_features.shape[1])

train_ls,_ = train(net,train_features,train_labels,None,None,num_epochs,lr,weight_decay,batch_size)

d2l.semilog(range(1,num_epochs+1),train_ls,'epochs','rmse')

print('train rmse %f'%train_ls[-1])

preds = net(test_features).detach().numpy()

test_data['SalePrice'] = pd.Series(preds.reshape(1,-1)[0])

submission = pd.concat([test_data['Id'],test_data['SalePrice']],axis=1)

submission.to_csv('./submission.csv', index=False)

train_and_pred(train_features, test_features, train_labels, test_data, num_epochs, lr, weight_decay, batch_size)