TensorFlow 2.0 Keras DenseNet121 Series: Code Implementation

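Contents

- Transfer learning
- Dense block structure analysis
- Custom implementation
- Importing libraries
- Convolution group (1x1 + 3x3)
- Dense Block
- Transition Block
- Building the DenseNet121 network
- References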

For an introduction to the network, see: blog post

Several ways to build deep learning models with Keras: blog post

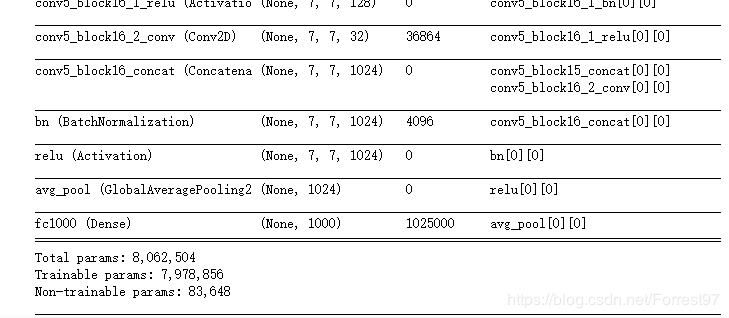

Transfer learning

import tensorflow as tf
from tensorflow import keras

# Load DenseNet121 with ImageNet weights and print its layer-by-layer summary
base_model = keras.applications.DenseNet121(weights='imagenet')
base_model.summary()

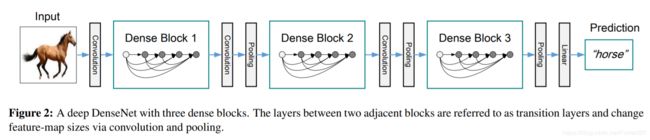
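The pretrained model can also be reused as a frozen feature extractor with a new classification head. The sketch below shows one common pattern and is not taken from the original post: the helper name `build_transfer_model`, the `num_classes` argument, the layer name `new_fc`, and the choice to freeze the entire base are all illustrative assumptions.

```python
from tensorflow import keras


def build_transfer_model(num_classes, weights='imagenet'):
    """Hypothetical helper: frozen DenseNet121 base plus a new softmax head."""
    base = keras.applications.DenseNet121(
        include_top=False,           # drop the 1000-class ImageNet classifier
        weights=weights,             # pass None to skip downloading weights
        input_shape=(224, 224, 3),
        pooling='avg',               # global average pooling -> (None, 1024)
    )
    base.trainable = False           # assumption: only the new head is trained
    outputs = keras.layers.Dense(
        num_classes, activation='softmax', name='new_fc')(base.output)
    return keras.Model(base.input, outputs, name='densenet121_transfer')


# Example usage (downloads the ImageNet weights on first call):
# model = build_transfer_model(num_classes=5)
# model.summary()
```

Freezing the base keeps the ImageNet features intact; unfreezing some of the later blocks for fine-tuning is a separate design choice that this sketch does not cover.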

Dense block structure analysis

The dense block is the key component of the whole network.

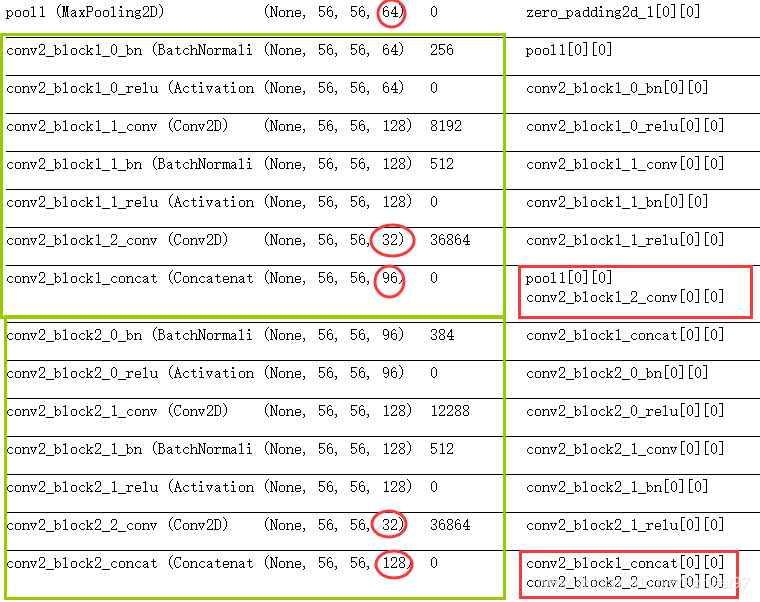

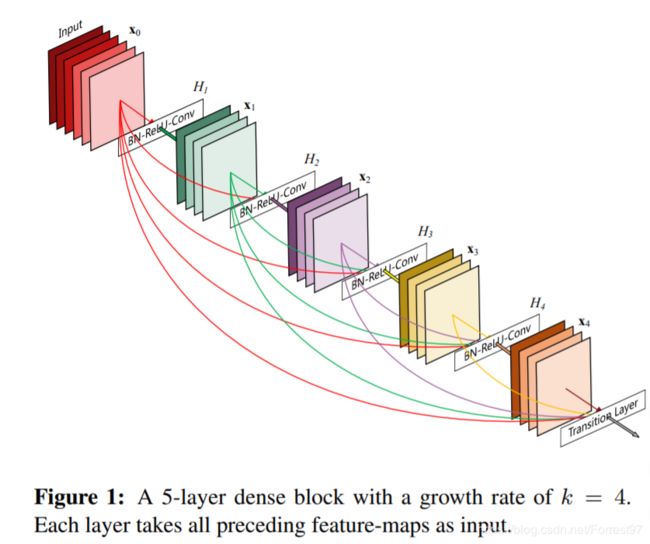

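Take the first Dense Block as an example; the diagram is shown below. Each convolution group (the 1x1 and 3x3 pair in the first table) ends in a convolution that always outputs the same number of feature maps, and that output is concatenated with the feature maps passed on to the following convolution groups.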

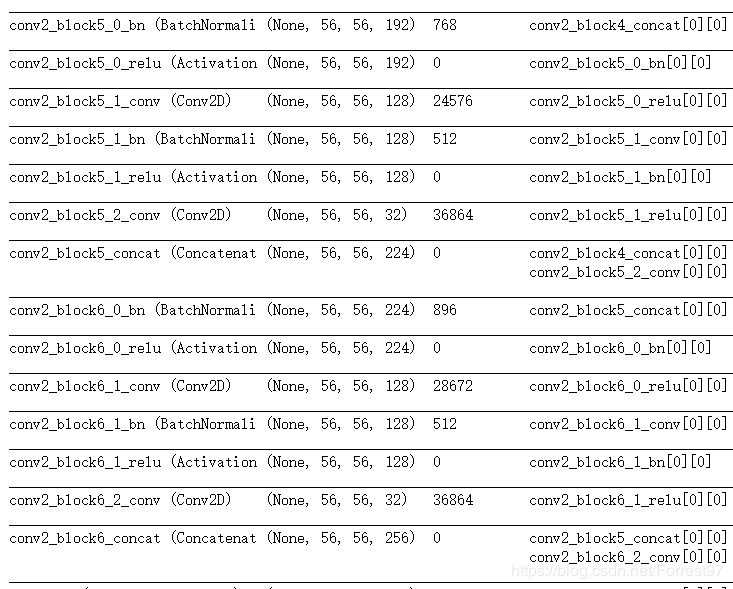

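We can also compare the summary printed in the transfer-learning step against the stacking pattern in the diagram.

Below is the complete structure of the first block of DenseNet121, which contains 6 convolution groups.

A green box marks one convolution group and a red box marks a concatenation of feature maps. Every convolution group ends with the same number of feature maps, 32, which are then concatenated with the accumulated output of the preceding groups, so the number of feature maps in the network keeps growing.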
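The growth described above is simple arithmetic and can be checked by hand. The following is a minimal sketch; the helper name `dense_block_channels` is hypothetical, and the 64 input channels are the output of the network's initial 7x7 convolution.

```python
def dense_block_channels(in_channels, num_groups, growth_rate=32):
    """Channel count after each concatenation inside one dense block."""
    channels = []
    current = in_channels
    for _ in range(num_groups):
        current += growth_rate   # each group appends growth_rate new maps
        channels.append(current)
    return channels


first_block = dense_block_channels(in_channels=64, num_groups=6)
print(first_block)  # [96, 128, 160, 192, 224, 256]
```

These values should match the channel dimension of the six concatenation layers of the first block in the model summary.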

Custom implementation

Importing libraries

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers, models, Sequential, backend
from tensorflow.keras.layers import Conv2D, MaxPooling2D, Dense, Flatten, Dropout, BatchNormalization, Activation, GlobalAveragePooling2D
from tensorflow.keras.layers import Concatenate, Lambda, Input, ZeroPadding2D, AveragePooling2D

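Convolution group (1x1 + 3x3)

First define the smallest unit of the network, a convolution group: a 1x1 convolution followed by a 3x3 convolution.

Each convolution is applied as BN + ReLU + Conv2D.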

def conv_block(x, nb_filter, dropout_rate=None, name=None):
    # The 1x1 bottleneck outputs 4x as many channels as the final 3x3 convolution
    inter_channel = nb_filter*4
    # 1x1 convolution
    x = BatchNormalization(epsilon=1.1e-5, axis=3, name=name+'_bn1')(x)
    x = Activation('relu', name=name+'_relu1')(x)
    x = Conv2D(inter_channel, 1, 1, name=name+'_conv1', use_bias=False)(x)
    if dropout_rate:
        x = Dropout(dropout_rate)(x)
    # 3x3 convolution (explicit zero padding keeps the spatial size unchanged)
    x = BatchNormalization(epsilon=1.1e-5, axis=3, name=name+'_bn2')(x)
    x = Activation('relu', name=name+'_relu2')(x)
    x = ZeroPadding2D((1, 1), name=name+'_zeropadding2')(x)
    x = Conv2D(nb_filter, 3, 1, name=name+'_conv2', use_bias=False)(x)
    if dropout_rate:
        x = Dropout(dropout_rate)(x)
    return x
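To cross-check a convolution group against the model summary, its parameter counts can be worked out by hand. This is a rough sketch under two assumptions that follow from the layer settings above: the convolutions have no bias, and each BatchNormalization layer stores four values per channel (gamma, beta, moving mean, moving variance). The helper name `conv_block_params` is hypothetical.

```python
def conv_block_params(in_channels, growth_rate=32):
    """Per-layer parameter counts for one BN-ReLU-Conv(1x1) + BN-ReLU-Conv(3x3) group."""
    inter_channel = 4 * growth_rate
    return {
        'bn1': 4 * in_channels,                        # gamma, beta, mean, variance
        'conv1': 1 * 1 * in_channels * inter_channel,  # 1x1 kernel, no bias
        'bn2': 4 * inter_channel,
        'conv2': 3 * 3 * inter_channel * growth_rate,  # 3x3 kernel, no bias
    }


params = conv_block_params(in_channels=64)
print(params)                # {'bn1': 256, 'conv1': 8192, 'bn2': 512, 'conv2': 36864}
print(sum(params.values()))  # 45824
```

With 64 input channels this corresponds to the first convolution group of the first dense block.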

Dense Block

A Dense Block is made up of N convolution groups.

def dense_block(x, stage, nb_layers, nb_filter, growth_rate, dropout_rate=None,
                grow_nb_filters=True, name=None):
    concat_feat = x  # running concatenation of all feature maps so far
    for i in range(nb_layers):
        branch = i+1
        # Changed from the reference code: every convolution group uses the same growth_rate (32)
        x = conv_block(concat_feat, growth_rate, dropout_rate, name=name+str(stage)+'_block'+str(branch))
        concat_feat = Concatenate(axis=3, name=name+str(stage)+'_block'+str(branch))([concat_feat, x])
        if grow_nb_filters:
            nb_filter += growth_rate
    return concat_feat, nb_filter

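Transition Block

The transition block sits between consecutive dense blocks and is mainly used to reduce the number of feature maps. Its 2x2 average pooling also halves the spatial resolution.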

def transition_block(x, stage, nb_filter, compression=1.0, dropout_rate=None, name=None):
    x = BatchNormalization(epsilon=1.1e-5, axis=3, name=name+str(stage)+'_bn')(x)
    x = Activation('relu', name=name+str(stage)+'_relu')(x)
    # 1x1 convolution shrinks the channel count by the compression factor
    x = Conv2D(int(nb_filter*compression), 1, 1, name=name+str(stage)+'_conv', use_bias=False)(x)
    if dropout_rate:
        x = Dropout(dropout_rate)(x)
    # 2x2 average pooling with stride 2 halves height and width
    x = AveragePooling2D((2,2), strides=(2,2), name=name+str(stage)+'_pooling2d')(x)
    return x
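The effect of the transition blocks on the channel count can be traced across the whole network. The sketch below assumes the DenseNet-121 block sizes (6, 12, 24, 16), 64 channels after the initial convolution, a growth rate of 32, and a compression of 0.5; the helper name `trace_channels` is hypothetical.

```python
def trace_channels(nb_layers=(6, 12, 24, 16), nb_filter=64,
                   growth_rate=32, compression=0.5):
    """Return (channels after dense block, channels after transition) per stage."""
    trace = []
    for i, n in enumerate(nb_layers):
        nb_filter += n * growth_rate              # dense block adds n * growth_rate maps
        if i < len(nb_layers) - 1:
            reduced = int(nb_filter * compression)
            trace.append((nb_filter, reduced))
            nb_filter = reduced
        else:
            trace.append((nb_filter, None))       # no transition after the last block
    return trace


print(trace_channels())
# [(256, 128), (512, 256), (1024, 512), (1024, None)]
```

The last dense block ends with 1024 feature maps, which is the width of the vector fed to the final classifier after global average pooling.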

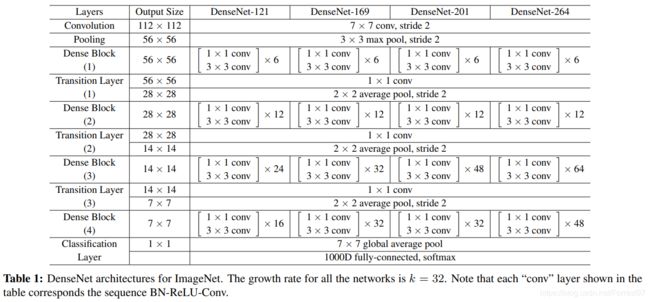

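Building the DenseNet121 network

Build the DenseNet121 network using the block counts from Table 1. Following the reference implementation used for transfer learning, set the reduction parameter to 0.5, so that each transition block cuts the number of feature maps in half.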

def DenseNet(nb_dense_block=4, growth_rate=32, nb_filter=64, reduction=0.0, dropout_rate=0.0, weight_decay=1e-4, classes=1000, weights_path=None):
    # Note: weight_decay and weights_path are accepted but not used below
    compression = 1.0-reduction
    nb_layers = [6, 12, 24, 16]  # for DenseNet-121
    img_input = Input(shape=(224,224,3))
    # initial convolution
    x = ZeroPadding2D((3, 3), name='conv1_zeropadding')(img_input)
    x = Conv2D(nb_filter, 7, 2, name='conv1', use_bias=False)(x)
    x = BatchNormalization(epsilon=1.1e-5, axis=3, name='conv1_bn')(x)
    x = Activation('relu', name='relu1')(x)
    x = ZeroPadding2D((1, 1), name='pool1_zeropadding')(x)
    x = MaxPooling2D((3, 3), strides=(2, 2), name='pool1')(x)
    # dense blocks, each followed by a transition block
    for block_id in range(nb_dense_block-1):
        stage = block_id+2  # start from 2
        x, nb_filter = dense_block(x, stage, nb_layers[block_id], nb_filter, growth_rate,
                                   dropout_rate=dropout_rate, name='Dense')
        x = transition_block(x, stage, nb_filter, compression=compression, dropout_rate=dropout_rate, name='Trans')
        nb_filter = int(nb_filter*compression)  # keep the channel count an integer
    # the last dense block has no transition block after it
    final_stage = stage + 1
    x, nb_filter = dense_block(x, final_stage, nb_layers[-1], nb_filter, growth_rate,
                               dropout_rate=dropout_rate, name='Dense')
    # top layer
    x = BatchNormalization(name='final_conv_bn')(x)
    x = Activation('relu', name='final_act')(x)
    x = GlobalAveragePooling2D(name='final_pooling')(x)
    x = Dense(classes, activation='softmax', name='fc')(x)
    model = models.Model(img_input, x, name='DenseNet121')
    return model

def main():
    # reduction=0.5 halves the feature maps in every transition block
    model = DenseNet(reduction=.5)
    model.summary()

if __name__=='__main__':
    main()
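The spatial sizes printed by `model.summary()` can be verified with the standard output-size formula for a convolution or pooling layer with explicit zero padding, `(size + 2*padding - kernel) // stride + 1`. The sketch below applies it to the initial convolution, the max pooling, and the three 2x2 average-pooling steps; the helper name `conv_out_size` is hypothetical.

```python
def conv_out_size(size, kernel, stride, padding):
    """Output length along one axis for a conv/pool layer with explicit zero padding."""
    return (size + 2 * padding - kernel) // stride + 1


size = 224
size = conv_out_size(size, kernel=7, stride=2, padding=3)  # conv1 -> 112
size = conv_out_size(size, kernel=3, stride=2, padding=1)  # pool1 -> 56

sizes = [size]
for _ in range(3):  # one 2x2 average pooling per transition block
    size = conv_out_size(size, kernel=2, stride=2, padding=0)
    sizes.append(size)

print(sizes)  # [56, 28, 14, 7]
```

The same formula shows why the 3x3 convolution inside each convolution group preserves the feature-map size: `conv_out_size(56, kernel=3, stride=1, padding=1)` is again 56.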

References

The network has several more hyperparameter settings; see the original repositories below for details.

https://github.com/titu1994/DenseNet

https://github.com/flyyufelix/DenseNet-Keras