Installing Spark and the sbt and Maven Build Tools

I. Preparation

- Hadoop and a Java JDK must be installed first. This guide combines Hadoop (pseudo-distributed mode) with Spark (local mode).

- Versions used for Spark, sbt, and Maven: spark-2.4.5-bin-without-hadoop.tgz, sbt-1.3.8.tgz, and maven-3.6.3.

https://pan.baidu.com/s/129rn9DrjVSzGi2SksTkefw (extraction code: ebbb)

II. Installing Spark in Local Mode

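- Change to the directory that contains the Spark archive (mine is ~/Documents/Personal File/BigData), extract the archive into /usr/local/, rename the extracted directory to spark, and set its ownership.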

cd ~/Documents/Personal\ File/BigData

sudo tar -zxf ./spark-2.4.5-bin-without-hadoop.tgz -C /usr/local/

cd /usr/local

sudo mv ./spark-2.4.5-bin-without-hadoop/ ./spark

sudo chown -R hadoop:hadoop ./spark

- Create the configuration file spark-env.sh from its template:

cd /usr/local/spark

cp ./conf/spark-env.sh.template ./conf/spark-env.sh

vim ./conf/spark-env.sh

Add the following line to the file:

export SPARK_DIST_CLASSPATH=$(/usr/local/hadoop/bin/hadoop classpath)
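Because the Spark package used here is the without-hadoop build, Spark relies on SPARK_DIST_CLASSPATH to find the Hadoop jars, and the export line above fills that variable from the output of hadoop classpath. Before starting Spark it can be worth confirming that this command really prints something. The following is a minimal sketch of such a check; the function name check_hadoop_classpath is made up for illustration, and the default path only assumes that Hadoop lives in /usr/local/hadoop as in the export line.

```shell
# Print the classpath that spark-env.sh would export, or fail with a message.
# check_hadoop_classpath is a hypothetical helper, not a Hadoop or Spark command.
# $1: path to the hadoop launcher (assumed default: /usr/local/hadoop/bin/hadoop).
check_hadoop_classpath() {
    hadoop_bin="${1:-/usr/local/hadoop/bin/hadoop}"
    if [ ! -x "$hadoop_bin" ]; then
        echo "hadoop launcher not found or not executable: $hadoop_bin" >&2
        return 1
    fi
    # Same call that spark-env.sh makes inside $(...)
    classpath="$("$hadoop_bin" classpath)" || return 1
    if [ -z "$classpath" ]; then
        echo "hadoop classpath printed nothing" >&2
        return 1
    fi
    echo "$classpath"
}
```

Calling check_hadoop_classpath with no arguments should print a long colon-separated list of Hadoop directories. An error message instead suggests fixing the Hadoop installation before going further.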

III. Programming in the Spark Shell

- Start the shell:

cd /usr/local/spark

bin/spark-shell

- A simple programming test

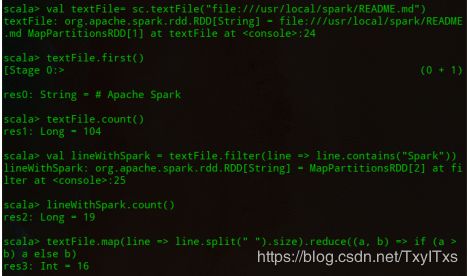

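The Spark shell creates a SparkContext named sc at startup. An RDD can be created by loading either a local file or an HDFS file; the URI prefix (file:/// or hdfs://) indicates which of the two is meant.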

val textFile = sc.textFile("file:///usr/local/spark/README.md")

// Get the first line of the RDD textFile

textFile.first()

// Count all items in the RDD textFile

textFile.count()

// Select the lines that contain "Spark" and return a new RDD

val lineWithSpark = textFile.filter(line => line.contains("Spark"))

// Count the lines in the new RDD

lineWithSpark.count()

// Find the largest number of words on any single line

textFile.map(line => line.split(" ").size).reduce((a, b) => if (a > b) a else b)

- Exit the Spark shell:

:quit

IV. Standalone Applications in Scala

Programs written in Scala are compiled and packaged with sbt, programs written in Java are packaged with Maven, and programs written in Python can be submitted directly with spark-submit.

- Install sbt

sudo mkdir /usr/local/sbt

sudo tar -zxvf ~/Documents/Personal\ File/BigData/sbt-1.3.8.tgz -C /usr/local

cd /usr/local/sbt

sudo chown -R hadoop /usr/local/sbt

cp ./bin/sbt-launch.jar ./ # copy sbt-launch.jar from bin/ into the sbt install directory

- Create the sbt launcher script:

vim /usr/local/sbt/sbt

# Add the following content:

#!/bin/bash

SBT_OPTS="-Xms512M -Xmx1536M -Xss1M -XX:+CMSClassUnloadingEnabled -XX:MaxPermSize=256M"

java $SBT_OPTS -jar `dirname $0`/sbt-launch.jar "$@"

- Make the script executable:

chmod u+x /usr/local/sbt/sbt
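The two steps above (editing the launcher in vim and then marking it executable) can also be done without an editor. The following is a minimal sketch under that assumption: write_sbt_launcher is a hypothetical helper name, and the script body is the same as above except that the dirname call is quoted so that install paths containing spaces would still work.

```shell
# Write the sbt launcher script into an install directory and make it executable.
# write_sbt_launcher is a hypothetical helper, not part of sbt.
# $1: sbt install directory (assumed default: /usr/local/sbt, as used above).
write_sbt_launcher() {
    sbt_dir="${1:-/usr/local/sbt}"
    # The quoted 'EOF' keeps $SBT_OPTS, $0 and $@ literal in the written file.
    cat > "$sbt_dir/sbt" <<'EOF'
#!/bin/bash
SBT_OPTS="-Xms512M -Xmx1536M -Xss1M -XX:+CMSClassUnloadingEnabled -XX:MaxPermSize=256M"
java $SBT_OPTS -jar "$(dirname "$0")/sbt-launch.jar" "$@"
EOF
    chmod u+x "$sbt_dir/sbt"
}
```

Running write_sbt_launcher with no arguments targets /usr/local/sbt, which the current user must be able to write to (the chown step above takes care of that).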

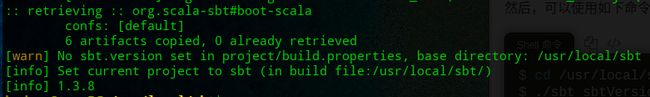

- Check the sbt version. The first run takes a long time because sbt has to download its own dependencies, and it may fail.

cd /usr/local/sbt

./sbt sbtVersion
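Since the first run may fail, one option is simply to run the command again. The following is a minimal sketch of a generic retry wrapper; retry is a hypothetical helper name, and whether repeating the command helps depends on why it failed (the sketch assumes a transient download problem).

```shell
# Run a command up to N times and stop at the first success.
# retry is a hypothetical helper, not part of sbt.
# $1: maximum number of attempts; remaining arguments: the command to run.
retry() {
    attempts="$1"; shift
    i=1
    while [ "$i" -le "$attempts" ]; do
        if "$@"; then
            return 0
        fi
        echo "attempt $i of $attempts failed" >&2
        i=$((i + 1))
    done
    return 1
}
```

For example, from /usr/local/sbt: retry 3 ./sbt sbtVersion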

- Writing and packaging a Scala application

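Create a directory to serve as the application root, then create the directory structure inside it:

# Change to a working directory

cd ~/Documents/Personal\ File/BigData

# Create the root directory and its structure

mkdir ./sparkapp # create the application root directory

mkdir -p ./sparkapp/src/main/scala # create the required folder structure

# Create the source file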

vim ./sparkapp/src/main/scala/SimpleApp.scala

Write the following code:

/* SimpleApp.scala */

import org.apache.spark.SparkContext

import org.apache.spark.SparkContext._

import org.apache.spark.SparkConf

object SimpleApp {

def main(args: Array[String]) {

val logFile = "file:///usr/local/spark/README.md" // Should be some file on your system

val conf = new SparkConf().setAppName("Simple Application")

val sc = new SparkContext(conf)

val logData = sc.textFile(logFile, 2).cache()

val numAs = logData.filter(line => line.contains("a")).count()

val numBs = logData.filter(line => line.contains("b")).count()

println("Lines with a: %s, Lines with b: %s".format(numAs, numBs))

}

}

- Compile and package

cd ~/Documents/Personal\ File/BigData/sparkapp

vim simple.sbt

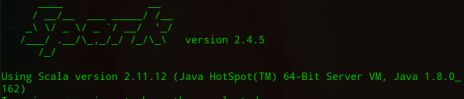

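Add the following content. scalaVersion specifies the Scala version, and the spark-core dependency specifies the Spark version. Both version numbers are shown in the banner that spark-shell prints at startup.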

name := "Simple Project"

version := "1.0"

scalaVersion := "2.11.12"

libraryDependencies += "org.apache.spark" %% "spark-core" % "2.4.5"
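The name, version, and scalaVersion settings also determine the file name of the jar that sbt package produces. The following is a rough sketch of that naming rule, simplified to the cases that matter here (lowercasing the name and replacing spaces with hyphens); sbt_jar_path is a hypothetical helper for illustration, not an sbt command.

```shell
# Approximate the jar path that sbt derives from name, version and scalaVersion.
# sbt_jar_path is a hypothetical helper; the name normalization is simplified.
# $1: project name, $2: project version, $3: full Scala version.
sbt_jar_path() {
    name="$1"; version="$2"; scala_version="$3"
    # "Simple Project" -> "simple-project"
    normalized="$(printf '%s' "$name" | tr '[:upper:]' '[:lower:]' | tr ' ' '-')"
    # "2.11.12" -> "2.11" (the Scala binary version)
    binary="$(printf '%s' "$scala_version" | cut -d. -f1,2)"
    echo "target/scala-$binary/${normalized}_$binary-$version.jar"
}
```

With the settings above, sbt_jar_path "Simple Project" 1.0 2.11.12 prints target/scala-2.11/simple-project_2.11-1.0.jar, relative to the application root.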

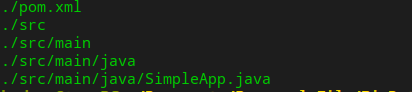

Package the application with sbt. To make sure sbt can run properly, first check the file structure with the following commands:

cd ~/Documents/Personal\ File/BigData/sparkapp

find .
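The output of find . should include ./simple.sbt and ./src/main/scala/SimpleApp.scala, the two files created in the earlier steps. As an alternative to reading the listing by eye, the following minimal sketch reports any expected file that is missing; check_layout is a hypothetical helper name, not an sbt command.

```shell
# Report every expected file that is missing under a project root.
# check_layout is a hypothetical helper, not an sbt or Maven command.
# $1: project root; remaining arguments: file paths relative to that root.
check_layout() {
    root="$1"; shift
    missing=0
    for rel in "$@"; do
        if [ ! -f "$root/$rel" ]; then
            echo "missing: $rel" >&2
            missing=1
        fi
    done
    # Exit status 0 only when every listed file exists.
    return "$missing"
}
```

From the application root: check_layout . simple.sbt src/main/scala/SimpleApp.scala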

Run the packaging command. The resulting jar is written to ~/Documents/Personal\ File/BigData/sparkapp/target/scala-2.11/simple-project_2.11-1.0.jar.

/usr/local/sbt/sbt package

Then run the packaged application with spark-submit:

/usr/local/spark/bin/spark-submit --class "SimpleApp" ~/Documents/Personal\ File/BigData/sparkapp/target/scala-2.11/simple-project_2.11-1.0.jar
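spark-submit prints a large amount of log output, and with Spark's default logging configuration that output goes to stderr, so the single result line is easy to miss. The following is a minimal sketch of one way to keep only that line; show_result_line is a hypothetical helper name, and the grep pattern assumes the "Lines with a:" text printed by SimpleApp.

```shell
# Run a command, merge stderr into stdout, and keep only the application's result line.
# show_result_line is a hypothetical helper, not part of Spark.
# All arguments: the command to run (for example a full spark-submit invocation).
show_result_line() {
    "$@" 2>&1 | grep "Lines with a:"
}
```

For example: show_result_line /usr/local/spark/bin/spark-submit --class "SimpleApp" ~/Documents/Personal\ File/BigData/sparkapp/target/scala-2.11/simple-project_2.11-1.0.jar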


V. Standalone Applications in Java

- Install Maven, the Java build and packaging tool:

cd ~/Documents/Personal\ File/BigData

sudo unzip apache-maven-3.6.3-bin.zip -d /usr/local

cd /usr/local

sudo mv apache-maven-3.6.3/ ./maven

sudo chown -R hadoop ./maven

- Java application code

Create the application root directory:

cd ~/Documents/Personal\ File/BigData

mkdir -p ./sparkapp2/src/main/java

Create the source file SimpleApp.java under ./sparkapp2/src/main/java:

/*** SimpleApp.java ***/

import org.apache.spark.api.java.*;

import org.apache.spark.api.java.function.Function;

import org.apache.spark.SparkConf;

public class SimpleApp {

public static void main(String[] args) {

String logFile = "file:///usr/local/spark/README.md"; // Should be some file on your system

SparkConf conf=new SparkConf().setMaster("local").setAppName("SimpleApp");

JavaSparkContext sc=new JavaSparkContext(conf);

JavaRDD<String> logData = sc.textFile(logFile).cache();

long numAs = logData.filter(new Function<String, Boolean>() {

public Boolean call(String s) { return s.contains("a"); }

}).count();

long numBs = logData.filter(new Function<String, Boolean>() {

public Boolean call(String s) { return s.contains("b"); }

}).count();

System.out.println("Lines with a: " + numAs + ", lines with b: " + numBs);

}

}

Create the file pom.xml in the ./sparkapp2 directory:

cd ~/Documents/Personal\ File/BigData/sparkapp2

vim pom.xml

<project>

<groupId>cn.edu.xmu</groupId>

<artifactId>simple-project</artifactId>

<modelVersion>4.0.0</modelVersion>

<name>Simple Project</name>

<packaging>jar</packaging>

<version>1.0</version>

<repositories>

<repository>

<id>jboss</id>

<name>JBoss Repository</name>

<url>http://repository.jboss.com/maven2/</url>

</repository>

</repositories>

<dependencies>

<dependency> <!-- Spark dependency -->

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>2.4.5</version>

</dependency>

</dependencies>

</project>

- Package the Java program with Maven

To make sure Maven can run properly, check the file structure with find:

cd ~/Documents/Personal\ File/BigData/sparkapp2

find .

# Packaging command

/usr/local/maven/bin/mvn package

- Run the program with spark-submit:

/usr/local/spark/bin/spark-submit --class "SimpleApp" ./target/simple-project-1.0.jar