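Installing HDP 2.6.X with Ambari

Note: this walkthrough uses three servers to install the full Hadoop ecosystem stack.

Part 1: Installation

Part 2: Problems and solutions

Part 1

Check the fully qualified hostname on each server and correct it in /etc/hostname if needed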

hostname -f

vi /etc/hostname

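Install wget

yum install wget

Download the Ambari repo file

wget -nv http://public-repo-1.hortonworks.com/ambari/centos7/2.x/updates/2.6.1.5/ambari.repo -O /etc/yum.repos.d/ambari.repo

Refresh the yum cache

yum clean all && yum makecache

Install ambari-server

yum install ambari-server

Run setup

ambari-server setup

Setup asks you to choose a JDK. Our machines had no JDK installed, so we took the default first option and let Ambari download one. If you have downloaded a JDK yourself, choose the third option and point it at your own directory. I have heard that Java now requires a paid license, so if the first option does not work, fall back to your own JDK. Accept the defaults for everything else, and skip the database configuration for now; it can be done later.

Start the server (stop and status are used the same way)

ambari-server start

Then open the web UI

http://X.X.X.X:8080 (username/password: admin/admin)

Install ambari-agent on all nodes

yum install ambari-agent

Two changes are needed in /etc/ambari-agent/conf/ambari-agent.ini.

First, so that the three machines can reach each other through Ambari, set hostname under [server] to the Ambari server's hostname (I used my own host's hostname here).

Second, because of an SSL problem with this Python version, the protocol version has to be set explicitly. Add the following line under [security]: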

force_https_protocol=PROTOCOL_TLSv1_2
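
The two edits to ambari-agent.ini can also be scripted. The following is a minimal sketch, not something Ambari ships: the helper name set_ini_key, the abbreviated sample file contents and the hostname ambari-server.example.com are all assumptions made for illustration. On a real node the target would be /etc/ambari-agent/conf/ambari-agent.ini, and it is worth backing the file up first.

```shell
#!/bin/sh
# set_ini_key FILE SECTION KEY VALUE  (hypothetical helper, not part of Ambari)
# Replaces KEY inside [SECTION] if it exists, otherwise appends it to that
# section; creates the section at the end of the file if it is missing.
set_ini_key() {
    ini_file=$1
    ini_tmp=$(mktemp) || return 1
    awk -v section="$2" -v key="$3" -v value="$4" '
        function emit() { print key "=" value; written = 1 }
        /^\[.*\]$/ {
            # Leaving the target section without having seen the key: add it.
            if (in_section && !written) emit()
            in_section = ($0 == "[" section "]")
            print
            next
        }
        in_section && index($0, "=") > 0 {
            name = $0
            sub(/[ \t]*=.*$/, "", name)   # strip "=value"
            sub(/^[ \t]+/, "", name)      # strip leading blanks
            if (name == key) {
                if (!written) emit()
                next
            }
        }
        { print }
        END {
            if (!written) {
                if (!in_section) print "[" section "]"
                emit()
            }
        }
    ' "$ini_file" > "$ini_tmp" || { rm -f "$ini_tmp"; return 1; }
    cat "$ini_tmp" > "$ini_file"   # overwrite in place, keeping file permissions
    rm -f "$ini_tmp"
}

# Demo on a scratch file with abbreviated, assumed contents.
demo_ini=$(mktemp)
printf '%s\n' '[server]' 'hostname=localhost' 'url_port=8440' '' \
    '[security]' 'ssl_verify_cert=0' > "$demo_ini"
set_ini_key "$demo_ini" server hostname ambari-server.example.com
set_ini_key "$demo_ini" security force_https_protocol PROTOCOL_TLSv1_2
cat "$demo_ini"
rm -f "$demo_ini"
```

Running the same two calls on every node keeps the agents consistent; the agent has to be restarted afterwards for the change to take effect.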

Start ambari-agent

ambari-agent start

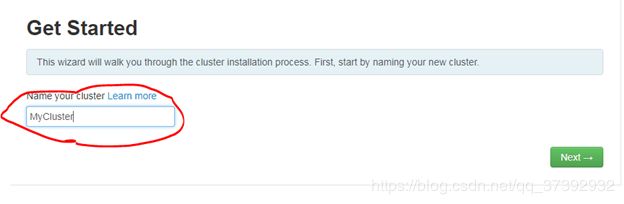

Open the Ambari web UI

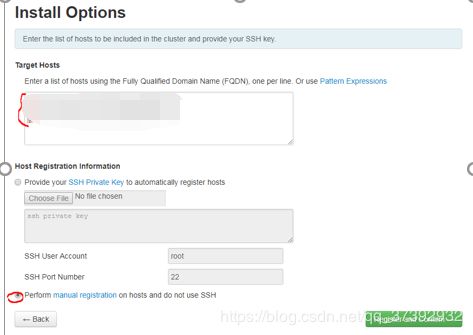

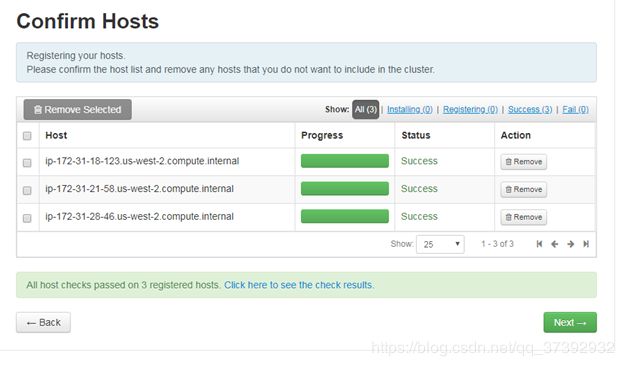

Enter the hostnames of your servers

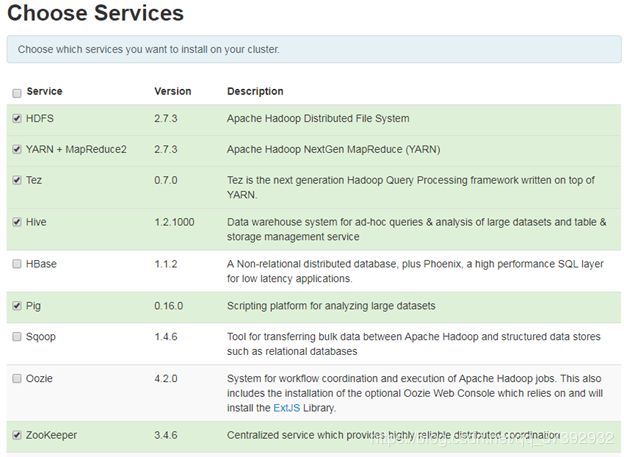

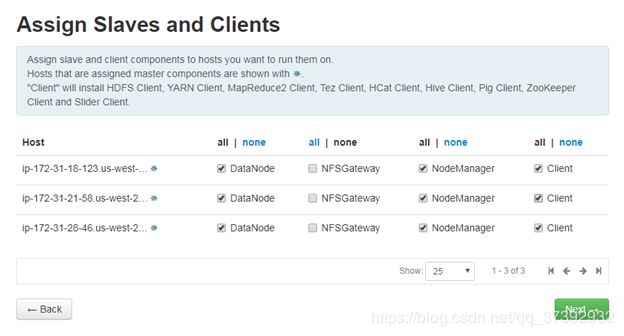

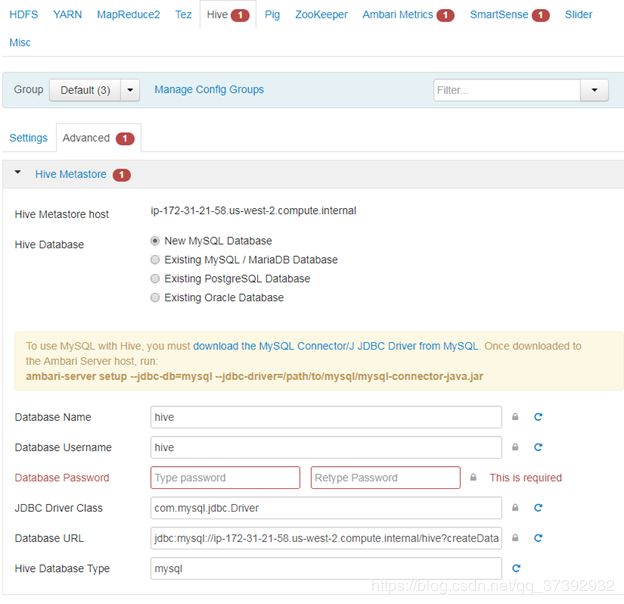

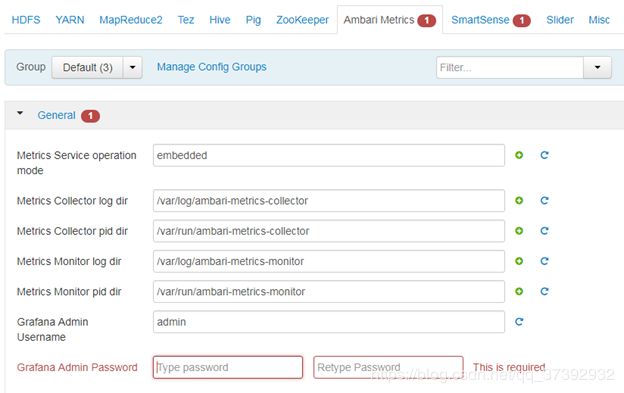

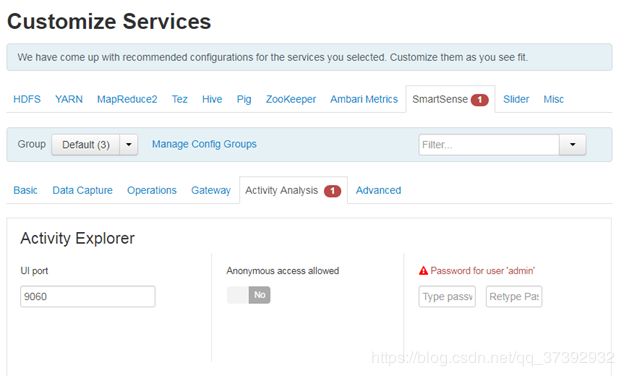

Assign resources sensibly across the nodes

Wait for the installation to finish

Part 2: Problems and solutions

This part draws on several blog posts from around the web as well as some fixes of my own.

Problem 1:

ERROR: Exiting with exit code -1.

REASON: Before starting Ambari Server, you must copy the MySQL JDBC driver JAR file to /usr/share/java and set property "server.jdbc.driver.path=[path/to/custom_jdbc_driver]" in ambari.properties.

Solution:

Put the MySQL driver JAR in /usr/share/java

Add the following line to /etc/ambari-server/conf/ambari.properties

server.jdbc.driver.path=/usr/share/java/mysql-connector-java.jar
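
Before restarting the server it can help to confirm that both halves of the fix are in place. The sketch below is a hypothetical pre-flight check (the name check_jdbc_driver and its exit codes are my own, not an Ambari command): it reads server.jdbc.driver.path from a properties file and verifies that the JAR it points to exists. The demo runs against scratch files rather than the real /etc/ambari-server/conf/ambari.properties.

```shell
#!/bin/sh
# check_jdbc_driver PROPERTIES_FILE  (hypothetical helper, not an Ambari command)
# Prints the configured driver path and returns 0 only when the property is
# set and the JAR exists. Returns 1 if the property is missing, 2 if the
# file it names is missing.
check_jdbc_driver() {
    props=$1
    # If the property appears more than once, use the last assignment.
    jar=$(sed -n 's/^[[:space:]]*server\.jdbc\.driver\.path[[:space:]]*=[[:space:]]*//p' "$props" | tail -n 1)
    if [ -z "$jar" ]; then
        echo "server.jdbc.driver.path is not set in $props" >&2
        return 1
    fi
    if [ ! -f "$jar" ]; then
        echo "driver JAR not found: $jar" >&2
        return 2
    fi
    echo "$jar"
}

# Demo with scratch files standing in for the real locations.
demo_dir=$(mktemp -d)
: > "$demo_dir/mysql-connector-java.jar"
echo "server.jdbc.driver.path=$demo_dir/mysql-connector-java.jar" > "$demo_dir/ambari.properties"
check_jdbc_driver "$demo_dir/ambari.properties"
rm -rf "$demo_dir"
```

On the Ambari server host the call would be check_jdbc_driver /etc/ambari-server/conf/ambari.properties.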

Problem 2:

Error: Package: ambari-server-2.6.0.0-267.x86_64 (ambari-2.6.0.0)

Requires: postgresql-server >= 8.1

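You could try using --skip-broken to work around the problem

You could try running: rpm -Va --nofiles --nodigest

Solution: install the PostgreSQL packages that ambari-server requires, in dependency order (the RPMs below are el6 builds; use the ones that match your OS release):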

[ambari@jesgoo-hz16 software]$ sudo rpm -ivh postgresql-libs-8.4.18-1.el6_4.x86_64.rpm

[ambari@jesgoo-hz16 software]$ sudo rpm -ivh postgresql-8.4.18-1.el6_4.x86_64.rpm

[ambari@jesgoo-hz16 software]$ sudo rpm -ivh postgresql-server-8.4.18-1.el6_4.x86_64.rpm

Problem 3:

You will need to run those service checks from ambari server host manually and once those service checks are successful then only you should proceed for the upgrade.

Solution: run the service check for each service from the Ambari UI:

Ambari UI --> HDFS --> "Service Actions" (drop down from right corner) --> Run Service check

Ambari UI --> OOZIE --> "Service Actions" (drop down from right corner) --> Run Service check

Ambari UI --> ZOOKEEPER --> "Service Actions" (drop down from right corner) --> Run Service check

Ambari UI --> HIVE --> "Service Actions" (drop down from right corner) --> Run Service check

Problem 4:

The following Kafka properties should be set properly: inter.broker.protocol.version and log.message.format.version

Solution: set both properties in the Kafka configuration, for example:

inter.broker.protocol.version = 0.10.1

log.message.format.version = 0.10.1

Problem 5

- SSLError: Failed to connect. Please check openssl versions

- Upgrade openssl (this should not cause much trouble)

- Switch the JDK to the Oracle JDK rather than the Sun JDK

- Edit the Python configuration file

vi /etc/python/cert-verification.cfg

[https]

verify=disable

- Edit the Ambari agent configuration file

vim /etc/ambari-agent/conf/ambari-agent.ini

[security]

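force_https_protocol=PROTOCOL_TLSv1_2

Problem 6

Related errors:

1. After Ambari starts, the HBase service is healthy at first, but one or two nodes then go down from time to time. The log on a failed node looks like this: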

2016-12-12 10:47:03,487 WARN [regionserver/slave1.sardoop.com/192.168.0.37:16020] wal.ProtobufLogWriter: Failed to write trailer, non-fatal, continuing...

org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.hdfs.server.namenode.LeaseExpiredException): No lease on /apps/hbase/data/oldWALs/Node1%2C16020%2C1481510498265.default.1481510529594 (inode 25442): File is not open for writing. Holder DFSClient_NONMAPREDUCE_1762492244_1 does not have any open files.

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkLease(FSNamesystem.java:3536)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getAdditionalDatanode(FSNamesystem.java:3436)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.getAdditionalDatanode(NameNodeRpcServer.java:877)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.getAdditionalDatanode(ClientNamenodeProtocolServerSideTranslatorPB.java:523)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:640)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:982)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2313)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2309)

Solution:

In Ambari, under the HBase service, change two places in the hbase-env.sh configuration.

Before:

export HBASE_REGIONSERVER_OPTS="$HBASE_REGIONSERVER_OPTS -Xmn{{regionserver_xmn_size}} -XX:CMSInitiatingOccupancyFraction=70 -Xms{{regionserver_heapsize}} -Xmx{{regionserver_heapsize}} $JDK_DEPENDED_OPTS"

After:

export HBASE_REGIONSERVER_OPTS="$HBASE_REGIONSERVER_OPTS -XX:MaxTenuringThreshold=3 -XX:SurvivorRatio=8 -XX:+UseG1GC -XX:MaxGCPauseMillis=50 -XX:InitiatingHeapOccupancyPercent=75 -XX:NewRatio=39 -Xms{{regionserver_heapsize}} -Xmx{{regionserver_heapsize}} $JDK_DEPENDED_OPTS"

Before:

export HBASE_OPTS="$HBASE_OPTS -XX:+UseConcMarkSweepGC -XX:ErrorFile={{log_dir}}/hs_err_pid%p.log -Djava.io.tmpdir={{java_io_tmpdir}}"

After:

export HBASE_OPTS="$HBASE_OPTS -XX:ErrorFile={{log_dir}}/hs_err_pid%p.log -Djava.io.tmpdir={{java_io_tmpdir}}"

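2. HDFS permission problems

Once the cluster has been installed through Ambari you will naturally start using it. Whether you browse directories in the web UI or work with files and directories on the command line, you will often hit an error like this:

Permission denied: user=dr.who, access=READ_EXECUTE, inode="/tmp/hive":ambari-qa:hdfs:drwx-wx-wx

The inode= part lists, in order, the HDFS directory (/tmp/hive), the directory owner (ambari-qa) and the owner's group (hdfs), followed by the permission bits.

There are two ways to fix it:

① Change the directory permissions

sudo -u hdfs hdfs dfs -chmod [-R] 755 /user/hdfs    # -u hdfs is the user that runs the command; here it is the directory owner

This breaks the original permission design, so I do not recommend it.

② Run the command as the matching user, for example

sudo -u hdfs hdfs dfs -ls /user/hive    # that is, pick the user according to the directory's permissions

Note that if you want to upload files, it is best to switch user first with su someuser and then run the command. That way paths resolve correctly; otherwise you may get path-not-found errors.

There is also a third approach, adding a superuser group. If you are interested, see "HDFS Permissions: Overcoming The "Permission Denied" AccessControlException".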
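
To make the second approach concrete, here is a small sketch that pulls the path and owner out of a "Permission denied" message and prints a listing command to run as that owner. The helper name suggest_hdfs_user is hypothetical, and the parsing assumes the message has exactly the inode="path":owner:group:mode shape shown above. It only prints the command; it does not run it.

```shell
#!/bin/sh
# suggest_hdfs_user "MESSAGE"  (hypothetical helper)
# Extracts the path and owner from an HDFS "Permission denied" message and
# prints a listing command that runs as the directory owner.
suggest_hdfs_user() {
    msg=$1
    # Expected shape: inode="<path>":<owner>:<group>:<mode>
    hdfs_path=$(printf '%s\n' "$msg" | sed -n 's/.*inode="\([^"]*\)".*/\1/p')
    rest=$(printf '%s\n' "$msg" | sed -n 's/.*inode="[^"]*":\(.*\)$/\1/p')
    owner=${rest%%:*}   # first colon-separated field after the path
    if [ -z "$hdfs_path" ] || [ -z "$owner" ]; then
        echo "could not parse message" >&2
        return 1
    fi
    echo "sudo -u $owner hdfs dfs -ls $hdfs_path"
}

suggest_hdfs_user 'Permission denied: user=dr.who, access=READ_EXECUTE, inode="/tmp/hive":ambari-qa:hdfs:drwx-wx-wx'
# prints: sudo -u ambari-qa hdfs dfs -ls /tmp/hive
```

Whether the owner or the hdfs superuser is the right account depends on what you are trying to do; the point is to read the owner from the message instead of loosening permissions.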

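Problem 7

Remember to change your system language (for example to an English locale such as en_US.UTF-8); this version of Python handles Chinese very poorly.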

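Problem 8

Ambari fails to install Oozie

Cause: the failing command is /usr/bin/yum -d 0 -e 0 -y install extjs. Clearly extjs is not available in the repo. It should have been added when Ambari was installed, but I had removed Ambari from this server, which led to this error.

Solution:

Option 1 (not supported):

Copy extjs-2.2-1.noarch.rpm from another node into the designated directory on this server.

Option 2:

See https://www.cnblogs.com/julyme/p/5642486.html

Through Ambari, remove all components from this server, then remove ambari-agent, and add the host again.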

Problem 9

When compiling ambari-server, the RPM packaging step fails with:

[ERROR] Failed to execute goal org.codehaus.mojo:rpm-maven-plugin:2.1.4:rpm (default-cli) on project ambari-server: RPM build execution returned: '1' executing '/bin/sh -c cd '/home/ambari/apache-ambari-2.6.1-src/ambari-server/target/rpm/ambari-server/SPECS' && 'rpmbuild' '-bb' '--target' 'x86_64-redhat-linux' '--buildroot' '/home/ambari/apache-ambari-2.6.1-src/ambari-server/target/rpm/ambari-server/buildroot' '--define' '_topdir /home/ambari/apache-ambari-2.6.1-src/ambari-server/target/rpm/ambari-server' '--define' '_build_name_fmt %%{ARCH}/%%{NAME}-%%{VERSION}-%%{RELEASE}.%%{ARCH}.rpm' '--define' '_builddir %{_topdir}/BUILD' '--define' '_rpmdir %{_topdir}/RPMS' '--define' '_sourcedir %{_topdir}/SOURCES' '--define' '_specdir %{_topdir}/SPECS' '--define' '_srcrpmdir %{_topdir}/SRPMS' 'ambari-server.spec'' -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoExecutionException

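Before this error, Maven's INFO output reported that /usr/bin/python2.6 could not be found. Checking the directory confirmed that the file did not exist: Python 2.7 was installed, so there was only a python2.7 file. Creating a symbolic link from python2.6 to python2.7 fixed the problem.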
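
The symlink step can be sketched as a small guarded helper. The function name link_python26 is hypothetical, and the assumption is that python2.7 sits in the same directory where the build expects python2.6 (here /usr/bin). It refuses to touch an existing python2.6.

```shell
#!/bin/sh
# link_python26 [BIN_DIR]  (hypothetical helper; BIN_DIR defaults to /usr/bin)
# Creates BIN_DIR/python2.6 as a symlink to BIN_DIR/python2.7 unless a
# python2.6 entry is already there.
link_python26() {
    bin_dir=${1:-/usr/bin}
    if [ -e "$bin_dir/python2.6" ] || [ -L "$bin_dir/python2.6" ]; then
        echo "$bin_dir/python2.6 already exists, leaving it alone"
        return 0
    fi
    if [ ! -x "$bin_dir/python2.7" ]; then
        echo "$bin_dir/python2.7 not found" >&2
        return 1
    fi
    ln -s "$bin_dir/python2.7" "$bin_dir/python2.6"
}

# On the build host this would be run as root:
#   link_python26 /usr/bin
```

This only satisfies the path lookup in the build; it does not make Python 2.7 behave like 2.6.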

Problem 10

2016-11-25 09:26:59,930 - Package['hdp-select'] {'retry_on_repo_unavailability': False, 'retry_count': 5}

2016-11-25 09:26:59,942 - Skipping installation of existing package hdp-select

2016-11-25 09:27:00,081 - Package['spark_2_5_0_0_1245'] {'retry_on_repo_unavailability': False, 'retry_count': 5}

2016-11-25 09:27:00,164 - Skipping installation of existing package spark_2_5_0_0_1245

2016-11-25 09:27:00,166 - Package['spark_2_5_0_0_1245-python'] {'retry_on_repo_unavailability': False, 'retry_count': 5}

2016-11-25 09:27:00,177 - Skipping installation of existing package spark_2_5_0_0_1245-python

2016-11-25 09:27:00,179 - Package['livy_2_5_0_0_1245'] {'retry_on_repo_unavailability': False, 'retry_count': 5}

2016-11-25 09:27:00,196 - Skipping installation of existing package livy_2_5_0_0_1245

2016-11-25 09:27:00,201 - Using hadoop conf dir: /usr/hdp/current/hadoop-client/conf

2016-11-25 09:27:00,203 - call['ambari-python-wrap /usr/bin/hdp-select status spark-client'] {'timeout': 20}

2016-11-25 09:27:00,225 - call returned (0, 'spark-client - 2.5.0.0-1245')

2016-11-25 09:27:00,228 - Directory['/var/run/spark'] {'owner': 'spark', 'create_parents': True, 'group': 'hadoop', 'mode': 0775}

2016-11-25 09:27:00,229 - Directory['/var/log/spark'] {'owner': 'spark', 'group': 'hadoop', 'create_parents': True, 'mode': 0775}

2016-11-25 09:27:00,229 - PropertiesFile['/usr/hdp/current/spark-client/conf/spark-defaults.conf'] {'owner': 'spark', 'key_value_delimiter': ' ', 'group': 'spark', 'mode': 0644, 'properties': ...}

2016-11-25 09:27:00,236 - Generating properties file: /usr/hdp/current/spark-client/conf/spark-defaults.conf

2016-11-25 09:27:00,237 - File['/usr/hdp/current/spark-client/conf/spark-defaults.conf'] {'owner': 'spark', 'content': InlineTemplate(...), 'group': 'spark', 'mode': 0644}

Command failed after 1 tries

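Solution: check that the repositories are available, then install the packages by hand:

yum repolist

yum install spark_2_5_0_0_1245 hdp-select

Problem 11

Installation timeouts. If none of the fixes above help, the likely cause is high latency when fetching packages from overseas. In that case, edit the setting shown in the screenshot: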

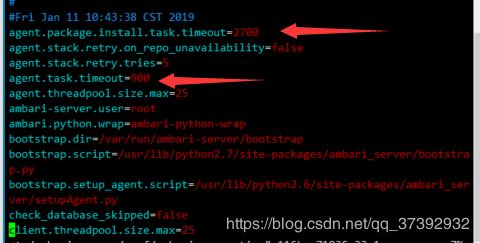

vim /etc/ambari-server/conf/ambari.properties

Or simply wait it out.

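Problem 12

If the problems above still cannot be resolved, as a last resort select only the services that are strictly required during installation. Things like Hive can be installed at leisure once the cluster is up.

Finally, a few forum and installation-manual links: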

https://community.hortonworks.com/search.html?f=&type=question+OR+repo+OR+supportkb+OR+kbentry+OR+idea&redirect=search%2Fsearch&sort=relevance&q=Command+aborted.+Reason%3A+%27Stage+timeout%27

https://docs.hortonworks.com/HDPDocuments/Ambari-2.6.1.5/bk_ambari-installation/bk_ambari-installation.pdf

The command history and the installation document are on my GitHub. You can browse them there, or email me and I will send them to you.