Deep Learning Notes - Hyperparameter tuning - 2.1.1 - Initialization

Improving Deep Neural Networks-Hyperparameter tuning, Regularization and Optimization:https://www.coursera.org/learn/deep-neural-network

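For the next while I will keep posting deep learning material.

To be clear up front: I did not write this code. It comes from the Coursera course; I only reorganized it, shortened it considerably, and added explanations.

This chapter is the initialization part of Practical aspects of Deep Learning, in the course Hyperparameter tuning, Regularization and Optimization.

That part of the course covers not only random initialization but also regularization and gradient checking; this post is the first of the three.

The full table of contents is linked from here.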

The code for this chapter is split into several parts:

0. Module setup

1. Neural Network model (algorithm module)

2. Zero initialization

3. Random initialization

4. He initialization
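The three initialization schemes in this list differ only in how the weight matrices are scaled. The sketch below illustrates that under some assumptions: `init_weights` is a hypothetical helper that is not part of the course code, and it draws from `np.random.default_rng` instead of the global seed used later in this post, so its numbers will not match the full script.

```python
import numpy as np

def init_weights(layers_dims, method="he", seed=3):
    """Hypothetical helper: build W/b for each layer under one scheme."""
    rng = np.random.default_rng(seed)
    params = {}
    for l in range(1, len(layers_dims)):
        n_in, n_out = layers_dims[l - 1], layers_dims[l]
        W = rng.standard_normal((n_out, n_in))  # base draw, N(0, 1)
        if method == "zeros":
            W = np.zeros((n_out, n_in))         # every weight is 0
        elif method == "random":
            W = W * 10                          # large random weights
        elif method == "he":
            W = W * np.sqrt(2.0 / n_in)         # He scaling: sqrt(2 / fan_in)
        else:
            raise ValueError("unknown method: " + method)
        params["W" + str(l)] = W
        params["b" + str(l)] = np.zeros((n_out, 1))  # biases start at 0
    return params

he_params = init_weights([2, 10, 5, 1], method="he")
print({name: value.shape for name, value in he_params.items()})
```

Because all three branches start from the same base draw, the "random" and "he" weights here differ only by a constant factor per layer.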

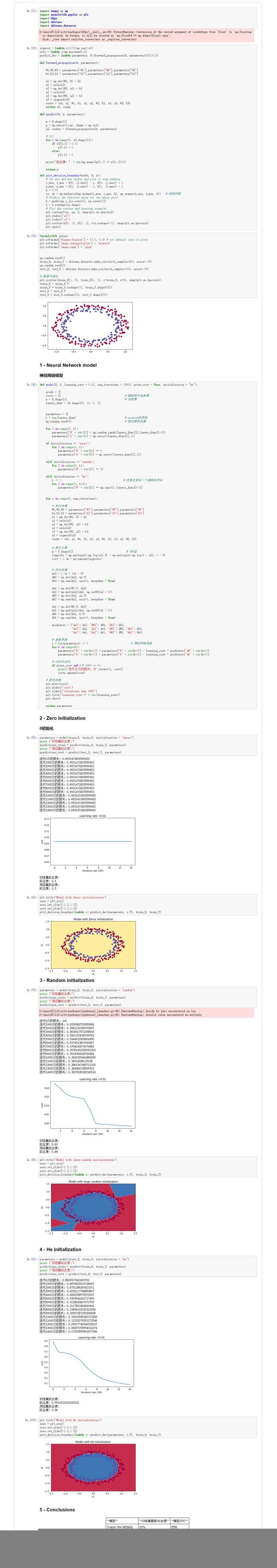

Let's first look at the overall result.

0. Result plot

The code has been shortened and uploaded here:

https://download.csdn.net/download/qq_42731466/10946787

1. Code

import numpy as np
import matplotlib.pyplot as plt
import sklearn
import sklearn.datasets

# 0. Module setup
sigmoid = lambda x: 1/(1+np.exp(-x))
relu = lambda x: np.maximum(0, x)
# Boolean prediction (probability > 0.5), used when drawing the decision boundary
predict_dec = lambda parameters, X: (forward_propagation(X, parameters)[0] > 0.5)

def forward_propagation(X, parameters):
    # Forward pass: LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID
    W1, W2, W3 = parameters["W1"], parameters["W2"], parameters["W3"]
    b1, b2, b3 = parameters["b1"], parameters["b2"], parameters["b3"]
    z1 = np.dot(W1, X) + b1
    a1 = relu(z1)
    z2 = np.dot(W2, a1) + b2
    a2 = relu(z2)
    z3 = np.dot(W3, a2) + b3
    a3 = sigmoid(z3)
    cache = (z1, a1, W1, b1, z2, a2, W2, b2, z3, a3, W3, b3)
    return a3, cache

def predict(X, y, parameters):
    m = X.shape[1]
    p = np.zeros((1, m), dtype=int)
    a3, caches = forward_propagation(X, parameters)
    # Threshold the output probabilities at 0.5 to get 0/1 predictions
    for i in range(0, a3.shape[1]):
        if a3[0, i] > 0.5:
            p[0, i] = 1
        else:
            p[0, i] = 0
    print("Accuracy: " + str(np.mean((p[0, :] == y[0, :]))))
    return p

def plot_decision_boundary(model, X, y):
    # Set min and max values and give it some padding
    x_min, x_max = X[0, :].min() - 1, X[0, :].max() + 1
    y_min, y_max = X[1, :].min() - 1, X[1, :].max() + 1
    h = 0.01
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))  # build the grid
    # Predict the function value for the whole grid
    Z = model(np.c_[xx.ravel(), yy.ravel()])
    Z = Z.reshape(xx.shape)
    # Plot the contour and training examples
    plt.contourf(xx, yy, Z, cmap=plt.cm.Spectral)
    plt.ylabel('x2')
    plt.xlabel('x1')
    plt.scatter(X[0, :], X[1, :], c=y.reshape(-1), cmap=plt.cm.Spectral)
    plt.show()

plt.rcParams['figure.figsize'] = (7.0, 4.0)  # set default size of plots
plt.rcParams['image.interpolation'] = 'nearest'
plt.rcParams['image.cmap'] = 'gray'

np.random.seed(1)
train_X, train_Y = sklearn.datasets.make_circles(n_samples=300, noise=.05)
np.random.seed(2)
test_X, test_Y = sklearn.datasets.make_circles(n_samples=100, noise=.05)

# Visualize the training data
plt.scatter(train_X[:, 0], train_X[:, 1], c=train_Y, s=40, cmap=plt.cm.Spectral)
plt.show()

# Reshape to (features, examples) for X and (1, examples) for Y
train_X = train_X.T
train_Y = train_Y.reshape((1, train_Y.shape[0]))
test_X = test_X.T
test_Y = test_Y.reshape((1, test_Y.shape[0]))

# 1. Neural Network model (algorithm module)
def model(X, Y, learning_rate=0.01, num_iterations=15000, print_cost=True, initialization="he"):
    grads = {}
    costs = []  # track the cost during training
    m = X.shape[1]  # number of training examples
    layers_dims = [X.shape[0], 10, 5, 1]
    parameters = {}
    L = len(layers_dims)  # number of layers, counting the input layer
    np.random.seed(3)  # fix the seed so every run draws the same values

    # Start from standard-normal weights and zero biases, then rescale per scheme
    for l in range(1, L):
        parameters['W' + str(l)] = np.random.randn(layers_dims[l], layers_dims[l-1])
        parameters['b' + str(l)] = np.zeros((layers_dims[l], 1))
    if initialization == "zeros":
        for l in range(1, L):
            parameters['W' + str(l)] *= 0
            parameters['b' + str(l)] = np.zeros((layers_dims[l], 1))
    elif initialization == "random":
        for l in range(1, L):
            parameters['W' + str(l)] *= 10
    elif initialization == "he":
        L -= 1  # note the subtle change: L now counts only layers with weights, so the loop runs to L+1
        for l in range(1, L+1):
            parameters['W' + str(l)] *= np.sqrt(2./layers_dims[l-1])

    for i in range(0, num_iterations):
        # Forward propagation
        W1, W2, W3 = parameters["W1"], parameters["W2"], parameters["W3"]
        b1, b2, b3 = parameters["b1"], parameters["b2"], parameters["b3"]
        z1 = np.dot(W1, X) + b1
        a1 = relu(z1)
        z2 = np.dot(W2, a1) + b2
        a2 = relu(z2)
        z3 = np.dot(W3, a2) + b3
        a3 = sigmoid(z3)
        cache = (z1, a1, W1, b1, z2, a2, W2, b2, z3, a3, W3, b3)

        # Cost (cross-entropy); nansum skips the NaN terms produced when a3 saturates at 0 or 1
        m = Y.shape[1]  # number of labels (same as the number of examples)
        logprobs = np.multiply(-np.log(a3), Y) + np.multiply(-np.log(1 - a3), 1 - Y)
        cost = 1./m * np.nansum(logprobs)

        # Backward propagation
        dz3 = 1./m * (a3 - Y)
        dW3 = np.dot(dz3, a2.T)
        db3 = np.sum(dz3, axis=1, keepdims=True)
        da2 = np.dot(W3.T, dz3)
        dz2 = np.multiply(da2, np.int64(a2 > 0))
        dW2 = np.dot(dz2, a1.T)
        db2 = np.sum(dz2, axis=1, keepdims=True)
        da1 = np.dot(W2.T, dz2)
        dz1 = np.multiply(da1, np.int64(a1 > 0))
        dW1 = np.dot(dz1, X.T)
        db1 = np.sum(dz1, axis=1, keepdims=True)
        gradients = {"dz3": dz3, "dW3": dW3, "db3": db3,
                     "da2": da2, "dz2": dz2, "dW2": dW2, "db2": db2,
                     "da1": da1, "dz1": dz1, "dW1": dW1, "db1": db1}

        # Parameter update (plain gradient descent)
        L = len(parameters) // 2  # number of layers with weights
        for k in range(L):
            parameters["W" + str(k+1)] = parameters["W" + str(k+1)] - learning_rate * gradients["dW" + str(k+1)]
            parameters["b" + str(k+1)] = parameters["b" + str(k+1)] - learning_rate * gradients["db" + str(k+1)]

        # Print and record the cost every 1000 iterations
        if print_cost and i % 1000 == 0:
            print("Cost after iteration {}: {}".format(i, cost))
            costs.append(cost)

    # Plot the cost curve
    plt.plot(costs)
    plt.ylabel('cost')
    plt.xlabel('iterations (per 1000)')
    plt.title("Learning rate =" + str(learning_rate))
    plt.show()
    return parameters

# 2. Zero initialization: run gradient descent from all-zero weights
parameters = model(train_X, train_Y, initialization="zeros")
print("Training set accuracy:")
predictions_train = predict(train_X, train_Y, parameters)
print("Test set accuracy:")
predictions_test = predict(test_X, test_Y, parameters)

# Plot the decision boundary
plt.title("Model with Zeros initialization")
axes = plt.gca()
axes.set_xlim([-1.5, 1.5])
axes.set_ylim([-1.5, 1.5])
plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)
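With every weight at zero, the ReLU activations a1 and a2 are zero as well, so each weight gradient in the backward pass is a product with a zero matrix and the weights never move. The sketch below checks this for a single step. It is a minimal illustration on made-up data (the 8 random samples and labels are assumptions, not the circles dataset), using the same forward and backward formulas as `model`.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.standard_normal((2, 8))       # 8 made-up 2-D samples (illustrative only)
Y = rng.integers(0, 2, size=(1, 8))   # made-up binary labels

# All-zero parameters for a 2-10-5-1 network
W1, W2, W3 = np.zeros((10, 2)), np.zeros((5, 10)), np.zeros((1, 5))
b1, b2, b3 = np.zeros((10, 1)), np.zeros((5, 1)), np.zeros((1, 1))

# Forward pass: RELU, RELU, SIGMOID
a1 = np.maximum(0, W1 @ X + b1)
a2 = np.maximum(0, W2 @ a1 + b2)
a3 = 1 / (1 + np.exp(-(W3 @ a2 + b3)))

# Backward pass, same formulas as in model()
dz3 = (a3 - Y) / X.shape[1]
dW3 = dz3 @ a2.T
db3 = dz3.sum(axis=1, keepdims=True)
dz2 = (W3.T @ dz3) * (a2 > 0)
dW2 = dz2 @ a1.T
dz1 = (W2.T @ dz2) * (a1 > 0)
dW1 = dz1 @ X.T

print("largest |dW1|, |dW2|, |dW3|:", np.abs(dW1).max(), np.abs(dW2).max(), np.abs(dW3).max())
print("outputs:", a3)
```

All three weight gradients are exactly zero and every output is sigmoid(0) = 0.5, whatever the input. Only b3 can receive a nonzero gradient, so the network can at best learn one constant prediction for all points.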

# 3. Random initialization (standard-normal weights scaled by 10)
parameters = model(train_X, train_Y, initialization="random")
print("Training set accuracy:")
predictions_train = predict(train_X, train_Y, parameters)
print("Test set accuracy:")
predictions_test = predict(test_X, test_Y, parameters)

plt.title("Model with large random initialization")
axes = plt.gca()
axes.set_xlim([-1.5, 1.5])
axes.set_ylim([-1.5, 1.5])
plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)
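Large weights tend to push z3 far from zero, where the sigmoid rounds to exactly 0 or 1 in floating point and the cross-entropy terms stop being finite. The sketch below reproduces this in isolation with the same cost expression as `model`; the value z = 40 and the two labels are made up for illustration.

```python
import numpy as np

sigmoid = lambda x: 1 / (1 + np.exp(-x))

z = np.array([40.0, 40.0])   # hypothetical pre-activations, far into saturation
y = np.array([1.0, 0.0])     # one correct label, one wrong label
a = sigmoid(z)               # rounds to exactly 1.0 in float64

# Same cross-entropy expression as in model(); silence the log(0) warnings
with np.errstate(divide="ignore", invalid="ignore"):
    logprobs = -np.log(a) * y + -np.log(1 - a) * (1 - y)

print("a        =", a)
print("logprobs =", logprobs)
print("nansum   =", np.nansum(logprobs))
```

The confidently correct sample yields inf * 0 = NaN, which `np.nansum` drops, while the confidently wrong sample yields inf and makes the whole cost infinite. This is one plausible reason the cost is computed with `np.nansum` rather than `np.sum`.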

# 4. He initialization
parameters = model(train_X, train_Y, initialization="he")
print("Training set accuracy:")
predictions_train = predict(train_X, train_Y, parameters)
print("Test set accuracy:")
predictions_test = predict(test_X, test_Y, parameters)

plt.title("Model with He initialization")
axes = plt.gca()
axes.set_xlim([-1.5, 1.5])
axes.set_ylim([-1.5, 1.5])
plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)
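The sqrt(2 / fan_in) factor in He initialization is chosen so that the scale of ReLU activations stays roughly constant from layer to layer, whereas a fixed factor of 10 makes it grow at every layer. The sketch below compares the two. It rests on assumptions that differ from the model in this post: a hypothetical helper `final_rms`, five layers of width 100, no biases, and standard-normal inputs.

```python
import numpy as np

def final_rms(scale_fn, n_layers=5, width=100, n_samples=1000, seed=0):
    """Hypothetical helper: push random inputs through ReLU layers
    and return the root-mean-square of the last layer's activations."""
    rng = np.random.default_rng(seed)
    a = rng.standard_normal((width, n_samples))
    for _ in range(n_layers):
        W = rng.standard_normal((width, width)) * scale_fn(width)
        a = np.maximum(0, W @ a)   # ReLU layer without bias
    return np.sqrt(np.mean(a ** 2))

rms_he = final_rms(lambda n_in: np.sqrt(2.0 / n_in))  # He scaling
rms_big = final_rms(lambda n_in: 10.0)                # the "* 10" scaling
print("He scaling :", rms_he)
print("x10 scaling:", rms_big)
```

With He scaling the final activations stay on the same order of magnitude as the inputs, while the x10 scaling inflates them by many orders of magnitude, which is what drives the sigmoid output into saturation.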