Monitoring Kubernetes Gracefully with Prometheus Operator

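What Is Prometheus-Operator

Prometheus-Operator is software that makes it easier to integrate Prometheus with Kubernetes. With it you can deploy Prometheus into a Kubernetes cluster with very little effort and get monitoring of the cluster itself. It also lets users configure and manage Prometheus instances through simple declarative configuration; the Operator responds to that configuration by creating, configuring, and managing the Prometheus monitoring instances.

The core idea of the Operator is to separate the deployment of Prometheus from the configuration of what it monitors. Once the two are separated, dynamic configuration becomes easy. After deploying Prometheus with the Operator you no longer need to manage the Prometheus Server by hand: to add a monitoring target or an alerting rule, you only write the corresponding ServiceMonitor or PrometheusRule resource, and Prometheus does not need to be restarted. The Operator watches for configuration changes and turns them into the corresponding Prometheus configuration file. The Operator itself also deploys and manages the Prometheus Server.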

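Architecture of Prometheus-Operator

The official Prometheus-Operator architecture diagram is best read from the bottom up. The Operator deploys and manages the Prometheus Server, and it also watches Prometheus. What does "watch" mean here? Service1 through Service5 in the diagram are ordinary Kubernetes Services. Kubernetes has many resource kinds, such as Service, Deployment, ServiceMonitor, and ConfigMap, so both Service and ServiceMonitor in the diagram are Kubernetes resources. A ServiceMonitor uses a labelSelector to match a class of Services (in practice, one ServiceMonitor usually corresponds to one Service), and Prometheus in turn uses a labelSelector to match multiple ServiceMonitors.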

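- Operator: The Operator is the main controller of the whole system. It runs in the Kubernetes cluster as a Deployment and deploys and manages Prometheus based on custom resources declared through Custom Resource Definitions (CRDs). It watches these custom resources for changes and reacts accordingly.

- Prometheus: The Operator watches the Prometheus custom resources in the cluster (Prometheus is itself a CRD) and creates a matching StatefulSet in the monitoring namespace (as given by .metadata.namespace). The StatefulSet mounts a Secret named prometheus-k8s as a volume at /etc/prometheus/config. The Secret's data contains:

  - configmaps.json, which names the ConfigMaps that hold the rule files

  - prometheus.yaml, the main configuration file

- ServiceMonitor: ServiceMonitor is a Kubernetes custom resource (CRD). The Operator watches ServiceMonitor resources, dynamically generates the scrape targets in the Prometheus configuration from them, and makes the changes take effect right away. It does so by writing the generated jobs into the prometheus.yaml field of the prometheus-k8s Secret's data. The prometheus-config-reloader sidecar container in the Prometheus pod detects that the files under the mount path have changed and sends an HTTP POST request to /-/reload to reload the configuration. A ServiceMonitor selects Services by label, and the Prometheus server scrapes metrics from the Services it selects.

- Service: This is simply the Kubernetes Service resource. Here it refers specifically to the Service of a Prometheus exporter, for example the Service of a mysql-exporter deployed on Kubernetes.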

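Consider monitoring a MySQL service the traditional way. First you install mysql-exporter, which collects MySQL metrics and exposes a port for Prometheus to scrape. Then you edit prometheus.yaml on the Prometheus Server and add a job for mysql-exporter under scrape_configs, with its address, port, and so on. Finally you restart Prometheus, and the MySQL monitoring is in place.

Now suppose Prometheus was deployed with Prometheus-Operator and we want to add MySQL monitoring. The first step is the same as before: deploy a mysql-exporter to collect MySQL metrics. Then write a ServiceMonitor that selects the mysql-exporter Service through a labelSelector. In this deployment Prometheus is configured by default to select ServiceMonitors labeled prometheus: kube-prometheus, so adding that label to the ServiceMonitor is enough for Prometheus to pick it up. Those two steps complete the MySQL monitoring, with no edits to the Prometheus configuration file and no restart. When the Operator sees the ServiceMonitor change, it regenerates the Prometheus configuration and makes it take effect.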
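The two selection steps described here (Prometheus picks ServiceMonitors by label, and a ServiceMonitor picks Services by label) can be sketched in a few lines of Python. This is an illustration of Kubernetes-style label selector semantics only, not the Operator's code; the function name `matches` and the dictionaries are hypothetical, and the label values are taken from this article.

```python
from typing import Dict


def matches(selector: dict, labels: Dict[str, str]) -> bool:
    """Return True if `labels` satisfies a Kubernetes-style label selector.

    Every matchLabels pair and every matchExpressions entry must hold
    (logical AND). An empty selector matches everything.
    """
    for key, value in selector.get("matchLabels", {}).items():
        if labels.get(key) != value:
            return False
    for expr in selector.get("matchExpressions", []):
        key, op = expr["key"], expr["operator"]
        values = expr.get("values", [])
        if op == "In" and labels.get(key) not in values:
            return False
        if op == "NotIn" and labels.get(key) in values:
            return False
        if op == "Exists" and key not in labels:
            return False
        if op == "DoesNotExist" and key in labels:
            return False
    return True


# Labels of the mysql-exporter Service, as used in this article.
service_labels = {"app": "prometheus-mysql-exporter", "release": "me-release"}

# Hypothetical in-memory stand-ins for the ServiceMonitor and the
# selector that Prometheus applies to ServiceMonitors.
service_monitor = {
    "labels": {"prometheus": "kube-prometheus"},
    "selector": {"matchLabels": {"app": "prometheus-mysql-exporter"}},
}
prometheus_selector = {"matchLabels": {"prometheus": "kube-prometheus"}}

monitor_selected = matches(prometheus_selector, service_monitor["labels"])
service_selected = matches(service_monitor["selector"], service_labels)
print(monitor_selected, service_selected)
```

Both checks have to succeed for the exporter to end up as a scrape target: if the ServiceMonitor lacks the label Prometheus selects on, it is ignored even when its own selector matches the Service.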

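How to Write a ServiceMonitor

ServiceMonitor is a Kubernetes custom resource, so it has to follow the ServiceMonitor specification. This section shows how to write one by dynamically adding MySQL monitoring.

Prerequisites:

- A Kubernetes cluster is up and running

- Prometheus has been deployed with prometheus-operator

- MySQL is installed in the Kubernetes cluster

With the prerequisites in place, open the Prometheus server UI. Under Targets you can see monitoring for Prometheus and for Kubernetes itself, but nothing for MySQL yet.

Next, add the MySQL exporter, prometheus-mysql-exporter, to Kubernetes. Here it is installed with Helm, following the steps on GitHub: set datasource in values.yaml to the address of the MySQL instance running in Kubernetes, then run helm install --name me-release -f values.yaml stable/prometheus-mysql-exporter. Afterwards, run kubectl get service to see the Service of the mysql-exporter that was just started: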

root@k8s1:/usr/local/src/charts# kubectl get service
NAME                                   TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)             AGE
alertmanager-operated                  ClusterIP   None             <none>        9093/TCP,6783/TCP   4d
grafana-grafana                        NodePort    10.106.170.105   <none>        80:32324/TCP        4d
kube-prometheus                        NodePort    10.111.168.249   <none>        9090:30900/TCP      22h
kube-prometheus-alertmanager           NodePort    10.101.224.156   <none>        9093:30903/TCP      22h
kube-prometheus-exporter-kube-state    ClusterIP   10.107.60.242    <none>        80/TCP              22h
kube-prometheus-exporter-node          ClusterIP   10.111.96.180    <none>        9100/TCP            22h
me-release-prometheus-mysql-exporter   ClusterIP   10.109.252.44    <none>        9104/TCP            50s
prometheus-operated                    ClusterIP   None             <none>        9090/TCP            4d

me-release-prometheus-mysql-exporter is listed, so the Service is up. Now run kubectl describe service me-release-prometheus-mysql-exporter to inspect it:

root@k8s1:/usr/local/src/charts# kubectl describe service me-release-prometheus-mysql-exporter
Name:              me-release-prometheus-mysql-exporter
Namespace:         monitoring
Labels:            app=prometheus-mysql-exporter
                   chart=prometheus-mysql-exporter-0.1.0
                   heritage=Tiller
                   release=me-release
Annotations:       <none>
Selector:          app=prometheus-mysql-exporter,release=me-release
Type:              ClusterIP
IP:                10.109.252.44
Port:              mysql-exporter  9104/TCP
TargetPort:        9104/TCP
Endpoints:         10.244.4.15:9104
Session Affinity:  None
Events:            <none>

The output shows that the Service's port inside the cluster is Port: mysql-exporter 9104/TCP.

Next, write the ServiceMonitor file. Run vim servicemonitor.yaml and enter:

apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor  # the resource kind is ServiceMonitor
metadata:
  labels:
    prometheus: kube-prometheus  # by default Prometheus discovers ServiceMonitors through the label prometheus: kube-prometheus; with this label set, Prometheus can find this ServiceMonitor
  name: prometheus-exporter-mysql
spec:
  jobLabel: app  # the value of the Service label named here is used as the job name of the target in Prometheus; if omitted, the Service name is used
  selector:
    matchLabels:  # labels of the Services this ServiceMonitor matches. With matchLabels, a Service matches only if it carries all of the labels listed below. matchExpressions allows set-based rules (for example In with several accepted values), and every expression must hold as well
      app: prometheus-mysql-exporter  # the mysql-exporter Service shown earlier carries the label app: prometheus-mysql-exporter, so this matches it
  namespaceSelector:
    any: true  # match Services in all namespaces; to select Services from specific namespaces only, use matchNames: [] instead
    # matchNames: []
  endpoints:
  - port: mysql-exporter  # the Service output above shows the metrics port as Port: mysql-exporter 9104/TCP, so the port name mysql-exporter goes here
    interval: 30s  # scrape every 30s
    # path: /metrics  HTTP path to scrape for metrics; defaults to /metrics
    honorLabels: true

Save and exit, then run kubectl create -f servicemonitor.yaml. Once it succeeds, run kubectl get serviceMonitor to check that the new ServiceMonitor is listed:

root@k8s1:~/mysql-exporter# kubectl create -f servicemonitor.yaml

servicemonitor.monitoring.coreos.com/prometheus-exporter-mysql created

root@k8s1:~/mysql-exporter# kubectl get serviceMonitor

NAME AGE

grafana 4d

kafka-release 5h

kafka-release-exporter 5h

kube-prometheus 23h

kube-prometheus-alertmanager 23h

kube-prometheus-exporter-coredns 23h

kube-prometheus-exporter-kube-controller-manager 23h

kube-prometheus-exporter-kube-etcd 23h

kube-prometheus-exporter-kube-scheduler 23h

kube-prometheus-exporter-kube-state 23h

kube-prometheus-exporter-kubelets 23h

kube-prometheus-exporter-kubernetes 23h

kube-prometheus-exporter-node 23h

prometheus-exporter-mysql 13s

prometheus-operator 4d

root@k8s1:~/mysql-exporter#

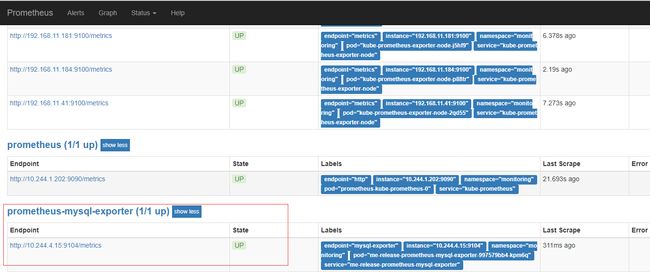

prometheus-exporter-mysql now appears in the list, so it was created successfully. After about a minute, check Targets in the Prometheus UI: the MySQL monitoring has been added.
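The same check can be scripted against the Prometheus HTTP API, whose `/api/v1/targets` endpoint lists active targets. The sketch below is a minimal example under stated assumptions: the helper names are hypothetical, the sample payload is hand-written in the shape of that endpoint's response (it is not real output), and the job name assumes jobLabel: app resolves to the Service's app label as in this article.

```python
import json
from typing import List, Tuple
from urllib.request import urlopen


def fetch_targets(base_url: str) -> dict:
    """Fetch /api/v1/targets from a Prometheus server (not called below)."""
    with urlopen(f"{base_url}/api/v1/targets") as resp:
        return json.load(resp)


def target_health(payload: dict, job: str) -> List[Tuple[str, str]]:
    """Return (scrapeUrl, health) pairs for active targets of one job."""
    targets = payload.get("data", {}).get("activeTargets", [])
    return [
        (t.get("scrapeUrl", ""), t.get("health", "unknown"))
        for t in targets
        if t.get("labels", {}).get("job") == job
    ]


# Hand-written sample shaped like the API response; values are illustrative.
sample = {
    "status": "success",
    "data": {
        "activeTargets": [
            {
                "labels": {"job": "prometheus-mysql-exporter"},
                "scrapeUrl": "http://10.244.4.15:9104/metrics",
                "health": "up",
            },
            {
                "labels": {"job": "node-exporter"},
                "scrapeUrl": "http://10.244.1.2:9100/metrics",
                "health": "up",
            },
        ]
    },
}

mysql_targets = target_health(sample, "prometheus-mysql-exporter")
print(mysql_targets)
```

Against a live server, `target_health(fetch_targets(base_url), job)` would do the same, where `base_url` is whatever address exposes Prometheus in your cluster.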

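As mentioned above, Prometheus selects ServiceMonitors through the label prometheus: kube-prometheus, a default that comes from the chart. You can also set serviceMonitorsSelector in values.yaml to select ServiceMonitors by your own rules; how to configure serviceMonitorsSelector is covered in the configuration section later.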

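How to Add Alerting Rules Dynamically

Once a monitoring target has been added dynamically, you usually want alerting rules for it. Under the prometheus-operator architecture, alerting rules are configured dynamically with another custom resource (CRD), PrometheusRule. PrometheusRule and ServiceMonitor are both custom resources: ServiceMonitor adds monitoring targets dynamically, while PrometheusRule adds alerting rules dynamically. The following again uses MySQL as the example to show how to use a PrometheusRule resource.

Run vim mysql-rule.yaml and enter the following: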

apiVersion: monitoring.coreos.com/v1  # same as for ServiceMonitor
kind: PrometheusRule  # the resource kind is PrometheusRule, which is also a custom resource (CRD)
metadata:
  labels:
    app: "prometheus-rule-mysql"
    prometheus: kube-prometheus  # as with ServiceMonitor, ruleSelector by default picks PrometheusRule resources labeled prometheus: kube-prometheus
  name: prometheus-rule-mysql
spec:
  groups:  # alerting rules, written in the same syntax as ordinary Prometheus alerting rules
  - name: mysql.rules
    rules:
    - alert: TooManyErrorFromMysql
      expr: sum(irate(mysql_global_status_connection_errors_total[1m])) > 10
      labels:
        severity: critical
      annotations:
        description: MySQL is producing too many errors.
        summary: TooManyErrorFromMysql
    - alert: TooManySlowQueriesFromMysql
      expr: increase(mysql_global_status_slow_queries[1m]) > 10
      labels:
        severity: critical
      annotations:
        description: MySQL produced {{ $value }} slow queries in the last minute.
        summary: TooManySlowQueriesFromMysql

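Prometheus selects PrometheusRule resources through ruleSelector, which by default also matches the label prometheus: kube-prometheus. Both ruleSelector and serviceMonitorsSelector are configurable; how to configure them is covered in the configuration section later.

After saving the file, run kubectl create -f mysql-rule.yaml. Once it succeeds, kubectl get prometheusRule lists the newly created PrometheusRule resource prometheus-rule-mysql: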

root@k8s1:~/mysql-exporter# kubectl create -f mysql-rule.yaml

prometheusrule.monitoring.coreos.com/prometheus-rule-mysql created

root@k8s1:~/mysql-exporter# kubectl get prometheusRule

NAME AGE

kube-prometheus 1h

kube-prometheus-alertmanager 1h

kube-prometheus-exporter-kube-controller-manager 1h

kube-prometheus-exporter-kube-etcd 1h

kube-prometheus-exporter-kube-scheduler 1h

kube-prometheus-exporter-kube-state 1h

kube-prometheus-exporter-kubelets 1h

kube-prometheus-exporter-kubernetes 1h

kube-prometheus-exporter-node 1h

kube-prometheus-rules 1h

prometheus-rule-mysql 8s

After about a minute, the newly added mysql.rules group shows up in the Prometheus web UI.
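Whether the rule group was loaded can also be checked through the Prometheus HTTP API, assuming the server version in use exposes `/api/v1/rules`. The sketch below only parses a payload of that shape; the helper name is hypothetical and the sample is hand-written from the rule names in this article, not captured from a server.

```python
from typing import List


def alert_names(payload: dict, group_name: str) -> List[str]:
    """List the alerting rules found in one rule group of a rules payload."""
    names = []
    for group in payload.get("data", {}).get("groups", []):
        if group.get("name") != group_name:
            continue
        for rule in group.get("rules", []):
            # Recording rules have type "recording" and are skipped here.
            if rule.get("type") == "alerting":
                names.append(rule["name"])
    return names


# Hand-written sample shaped like an /api/v1/rules response.
sample = {
    "status": "success",
    "data": {
        "groups": [
            {
                "name": "mysql.rules",
                "rules": [
                    {
                        "type": "alerting",
                        "name": "TooManyErrorFromMysql",
                        "query": "sum(irate(mysql_global_status_connection_errors_total[1m])) > 10",
                    },
                    {
                        "type": "alerting",
                        "name": "TooManySlowQueriesFromMysql",
                        "query": "increase(mysql_global_status_slow_queries[1m]) > 10",
                    },
                ],
            }
        ]
    },
}

loaded = alert_names(sample, "mysql.rules")
print(loaded)
```

An empty result for mysql.rules on a live server would suggest that the PrometheusRule was not selected, for example because its labels do not match the rule selector.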

How to Update the Alertmanager Configuration Dynamically

How It Works

When the Operator deploys Alertmanager it creates a StatefulSet. Run kubectl get statefulset --all-namespaces to find it; its name is alertmanager-kube-prometheus.

root@k8s1:~/prometheus-operator/helm/alertmanager/templates# kubectl get statefulset --all-namespaces

NAMESPACE NAME DESIRED CURRENT AGE

elk elastic-release-elasticsearch-data 2 2 8d

elk elastic-release-elasticsearch-master 3 3 8d

elk logstash-release 1 1 9d

kafka kafka-release 3 3 1d

kafka zookeeper-release 3 3 12d

kube-system mongodb-release-arbiter 1 1 16d

kube-system mongodb-release-primary 1 1 16d

kube-system mongodb-release-secondary 1 1 16d

kube-system my-release-mysqlha 3 3 15d

monitoring alertmanager-kube-prometheus 1 1 9h

monitoring prometheus-kube-prometheus 1 1 9h

Then run kubectl describe statefulset alertmanager-kube-prometheus -n monitoring to see the StatefulSet in detail:

root@k8s1:~/prometheus-operator/helm/alertmanager/templates# kubectl describe statefulset alertmanager-kube-prometheus -n monitoring

Name: alertmanager-kube-prometheus

Namespace: monitoring

CreationTimestamp: Wed, 05 Sep 2018 09:46:08 +0800

Selector: alertmanager=kube-prometheus,app=alertmanager

Labels: alertmanager=kube-prometheus

app=alertmanager

chart=alertmanager-0.1.6

heritage=Tiller

release=kube-prometheus

Annotations:

Replicas: 1 desired | 1 total

Update Strategy: RollingUpdate

Pods Status: 1 Running / 0 Waiting / 0 Succeeded / 0 Failed

Pod Template:

Labels: alertmanager=kube-prometheus

app=alertmanager

Containers:

alertmanager:

Image: intellif.io/prometheus-operator/alertmanager:v0.15.1

Ports: 9093/TCP, 6783/TCP

Host Ports: 0/TCP, 0/TCP

Args:

--config.file=/etc/alertmanager/config/alertmanager.yaml

--cluster.listen-address=$(POD_IP):6783

--storage.path=/alertmanager

--web.listen-address=:9093

--web.external-url=http://192.168.11.178:30903

--web.route-prefix=/

--cluster.peer=alertmanager-kube-prometheus-0.alertmanager-operated.monitoring.svc:6783

Requests:

memory: 200Mi

Liveness: http-get http://:web/api/v1/status delay=0s timeout=3s period=10s #success=1 #failure=10

Readiness: http-get http://:web/api/v1/status delay=3s timeout=3s period=5s #success=1 #failure=10

Environment:

POD_IP: (v1:status.podIP)

Mounts:

/alertmanager from alertmanager-kube-prometheus-db (rw)

/etc/alertmanager/config from config-volume (rw) # directory where the Secret is mounted

config-reloader:

Image: intellif.io/prometheus-operator/configmap-reload:v0.0.1

Port:

Host Port:

Args:

-webhook-url=http://localhost:9093/-/reload

-volume-dir=/etc/alertmanager/config

Limits:

cpu: 5m

memory: 10Mi

Environment:

Mounts:

/etc/alertmanager/config from config-volume (ro)

Volumes:

config-volume:

Type: Secret (a volume populated by a Secret)

SecretName: alertmanager-kube-prometheus

Optional: false

alertmanager-kube-prometheus-db:

Type: EmptyDir (a temporary directory that shares a pod's lifetime)

Medium:

Volume Claims:

Events:

The StatefulSet mounts a Secret named alertmanager-kube-prometheus into the alertmanager container, where it provides /etc/alertmanager/config/alertmanager.yaml. Under Volumes: in the output above, the config-volume: entry has Type: Secret, meaning that a Secret is mounted, and the Secret's name is alertmanager-kube-prometheus. Inspect it with kubectl describe secrets alertmanager-kube-prometheus -n monitoring:

root@k8s1:~# kubectl describe secrets alertmanager-kube-prometheus -n monitoring

Name: alertmanager-kube-prometheus

Namespace: monitoring

Labels: alertmanager=kube-prometheus

app=alertmanager

chart=alertmanager-0.1.6

heritage=Tiller

release=kube-prometheus

Annotations:

Type: Opaque

Data

====

alertmanager.yaml: 567 bytes

The Secret's Data section has a key named alertmanager.yaml whose value is 567 bytes long. That value is exactly the content of /etc/alertmanager/config/alertmanager.yaml inside the alertmanager container, because the StatefulSet mounts the Secret at /etc/alertmanager/config. Run kubectl edit secrets -n monitoring alertmanager-kube-prometheus to see the Secret's content:

apiVersion: v1

data:

alertmanager.yaml: Z2xvYmFsOgogIHJlc29sdmVfdGltZW91dDogNW0KICBzbXRwX2F1dGhfcGFzc3dvcmQ6ICoqKioKICBzbXRwX2F1dGhfdXNlcm5hbWU6IGlub3JpX3lpbmprQDE2My5jb20KICBzbXRwX2Zyb206IGlub3JpX3lpbmprMUAxNjMuY29tCiAgc210cF9yZXF1aXJlX3RsczogZmFsc2UKICBzbXRwX3NtYXJ0aG9zdDogc210cC4xNjMuY29tOjI1CnJlY2VpdmVyczoKLSBlbWFpbF9jb25maWdzOgogIC0gaGVhZGVyczoKICAgICAgU3ViamVjdDogJ1tFUlJPUl0gcHJvbWV0aGV1cy4uLi4uLi4uLi4uLicKICAgIHRvOiB4eHh4QHFxLmNvbQogIG5hbWU6IHRlYW0tWC1tYWlscwotIG5hbWU6ICJudWxsIgpyb3V0ZToKICBncm91cF9ieToKICAtIGFsZXJ0bmFtZQogIC0gY2x1c3RlcgogIC0gc2VydmljZQogIGdyb3VwX2ludGVydmFsOiA1bQogIGdyb3VwX3dhaXQ6IDYwcwogIHJlY2VpdmVyOiB0ZWFtLVgtbWFpbHMKICByZXBlYXRfaW50ZXJ2YWw6IDI0aAogIHJvdXRlczoKICAtIG1hdGNoOgogICAgICBhbGVydG5hbWU6IERlYWRNYW5zU3dpdGNoCiAgICByZWNlaXZlcjogIm51bGwi

kind: Secret

metadata:

creationTimestamp: 2018-09-05T01:46:08Z

labels:

alertmanager: kube-prometheus

app: alertmanager

chart: alertmanager-0.1.6

heritage: Tiller

release: kube-prometheus

name: alertmanager-kube-prometheus

namespace: monitoring

resourceVersion: "5820063"

selfLink: /api/v1/namespaces/monitoring/secrets/alertmanager-kube-prometheus

uid: 75a589e8-b0ad-11e8-8746-005056bf1d6e

type: Opaque

The value of the alertmanager.yaml key under data: is a base64-encoded string. Copying it out and base64-decoding it gives:

global:
  resolve_timeout: 5m
  smtp_auth_password: xxxxxx
  smtp_auth_username: [email protected]
  smtp_from: [email protected]
  smtp_require_tls: false
  smtp_smarthost: smtp.163.com:25
receivers:
- email_configs:
  - headers:
      Subject: '[ERROR] prometheus............'
    to: [email protected]
  name: team-X-mails
- name: "null"
route:
  group_by:
  - alertname
  - cluster
  - service
  group_interval: 5m
  group_wait: 60s
  receiver: team-X-mails
  repeat_interval: 24h
  routes:
  - match:
      alertname: DeadMansSwitch
    receiver: "null"

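This is simply the Alertmanager configuration. As noted above, it is mounted into the alertmanager container as /etc/alertmanager/config/alertmanager.yaml. To check, run kubectl exec -it alertmanager-kube-prometheus-0 -n monitoring sh to enter the container (your pod name may differ; kubectl get pods --all-namespaces lists all pods), change to /etc/alertmanager/config, and run ls. The directory contains a file named alertmanager.yaml whose content is the base64-decoded text shown above. Changing the value of the alertmanager.yaml field in the data of the alertmanager-kube-prometheus Secret is therefore equivalent to changing that file. The rest is simple: the alertmanager pod has a second container, config-reloader, which watches the /etc/alertmanager/config directory. When a file in that directory changes, config-reloader sends a POST request to http://localhost:9093/-/reload, Alertmanager reloads the configuration file from that directory, and the configuration is updated dynamically.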
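The watch-and-reload loop can be sketched as follows. This is a simplified illustration, not the config-reloader's implementation: it compares content hashes on demand where a real reloader would react to filesystem notifications, all function names are hypothetical, and the demo records the reload instead of sending it so that it runs without an Alertmanager.

```python
import hashlib
import os
import tempfile
from typing import Callable, Dict
from urllib.request import Request, urlopen


def snapshot(directory: str) -> Dict[str, str]:
    """Map each regular file in `directory` to the SHA-256 of its contents."""
    digests = {}
    for name in sorted(os.listdir(directory)):
        path = os.path.join(directory, name)
        if os.path.isfile(path):
            with open(path, "rb") as fh:
                digests[name] = hashlib.sha256(fh.read()).hexdigest()
    return digests


def check_and_reload(directory: str, last: Dict[str, str],
                     reload: Callable[[], None]) -> Dict[str, str]:
    """Call `reload` if the directory content differs from `last`."""
    current = snapshot(directory)
    if current != last:
        reload()
    return current


def post_reload(url: str = "http://localhost:9093/-/reload") -> int:
    """Send the reload POST (not called in the demo below)."""
    with urlopen(Request(url, data=b"", method="POST")) as resp:
        return resp.status


# Demo on a temporary directory; the reload is only recorded.
reloads = []
with tempfile.TemporaryDirectory() as config_dir:
    path = os.path.join(config_dir, "alertmanager.yaml")
    with open(path, "w") as fh:
        fh.write("route:\n  receiver: team-X-mails\n")
    state = snapshot(config_dir)

    # Nothing changed yet, so no reload is triggered.
    state = check_and_reload(config_dir, state, lambda: reloads.append("POST /-/reload"))

    # Simulate the Secret update reaching the mounted file.
    with open(path, "w") as fh:
        fh.write("route:\n  receiver: 'null'\n")
    state = check_and_reload(config_dir, state, lambda: reloads.append("POST /-/reload"))

print(reloads)
```

In a pod, `check_and_reload` would run in a loop with `post_reload` as the callback; the point of the pattern is that Alertmanager itself never has to be restarted.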

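How to Do It

With the mechanism understood, the task is clear: to reconfigure Alertmanager dynamically, update the value of the alertmanager.yaml field in the data of the Secret named alertmanager-kube-prometheus (your Secret may have a different name, but it will follow the alertmanager-{*} pattern). There are two ways to update the Secret: kubectl edit secret and kubectl patch secret. Either way you have to supply the base64-encoded string, which here is produced with the Linux base64 command: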

First edit the decoded file from above, for example changing smtp_from to send from a different mailbox, and save it. Then run base64 file > test.txt to base64-encode the configuration into test.txt, open test.txt, and copy the encoded string. For the first method, run kubectl edit secrets -n monitoring alertmanager-kube-prometheus, set the value of alertmanager.yaml under data to the copied string, then save and exit. For the second method, run kubectl patch secret alertmanager-kube-prometheus -n monitoring -p '{"data":{"alertmanager.yaml":"paste the base64-encoded configuration string here"}}' to apply the update. The -p argument takes a JSON string; put the copied base64 string in the indicated position.
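The decode, edit, re-encode, and patch steps can also be done programmatically, which avoids copy-and-paste mistakes. The sketch below is one way to build the JSON for kubectl patch, under stated assumptions: the helper names are hypothetical, and the tiny configuration and the example.com addresses are placeholders rather than values from this article.

```python
import base64
import json


def decode_secret_value(encoded: str) -> str:
    """Decode one base64 value from a Secret's data map."""
    return base64.b64decode(encoded).decode("utf-8")


def build_patch(config_text: str, key: str = "alertmanager.yaml") -> str:
    """Return the JSON patch body that sets data[key] to the encoded config."""
    encoded = base64.b64encode(config_text.encode("utf-8")).decode("ascii")
    return json.dumps({"data": {key: encoded}})


# Placeholder configuration standing in for the real alertmanager.yaml.
original = "global:\n  smtp_from: old@example.com\n"
stored = base64.b64encode(original.encode("utf-8")).decode("ascii")

# Decode what the Secret holds, change smtp_from, and build the patch.
updated = decode_secret_value(stored).replace("old@example.com", "new@example.com")
patch = build_patch(updated)
print(patch)
```

The printed string is what goes after -p in kubectl patch secret alertmanager-kube-prometheus -n monitoring. Python's `b64encode` emits a single line, whereas GNU base64 wraps its output at 76 columns by default, so on the command line `base64 -w 0` is the equivalent.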

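After the update, open the Alertmanager UI at http://192.168.11.178:30903/#/status to confirm that the new configuration has taken effect.

With the steps above we have dynamic discovery of monitoring targets, dynamic addition of alerting rules, and dynamic configuration of alert delivery (email). In other words, essentially all of the configuration can now be changed dynamically.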

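Prometheus-Operator Configuration

In most cases, configuring Prometheus only requires editing values.yaml under kube-prometheus, which covers both alertmanager and prometheus. Most options in that file are documented by the comments next to them. This section covers the options mentioned earlier plus a few other commonly used ones; to save space, simple or rarely used options are replaced with an ellipsis: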

...
alertmanager:  # all alertmanager settings live under this key
  config:  # the Alertmanager config, same as a conventional Alertmanager configuration
    global:
      resolve_timeout: 5m
    route:
      group_by: ['job']
      group_wait: 30s
      group_interval: 5m
      repeat_interval: 12h
      receiver: 'null'
      routes:
      - match:
          alertname: DeadMansSwitch
        receiver: 'null'
    receivers:
    - name: 'null'
  ## External URL; after an alert email is sent, the Alertmanager UI can be reached through this URL
  externalUrl: "http://192.168.11.178:30903"
  ...
  ## Node labels for Alertmanager pod assignment; this selector decides which nodes Alertmanager is deployed on
  ## Ref: https://kubernetes.io/docs/user-guide/node-selection/
  ##
  nodeSelector: {}
  ...
  ## List of Secrets in the same namespace as the AlertManager
  ## object, which shall be mounted into the AlertManager Pods.
  ## Ref: https://github.com/coreos/prometheus-operator/blob/master/Documentation/api.md#alertmanagerspec
  ##
  secrets: []
  service:
    ...
    ## Port to expose on each node
    ## Only used if service.type is 'NodePort'
    ##
    nodePort: 30903  # the exposed port
    ## Service type
    ##
    type: NodePort  # deploy the Alertmanager Service as NodePort, so the service can be reached through a node IP

prometheus:  # all prometheus settings are written here
  ## Alertmanagers to which alerts will be sent
  ## Ref: https://github.com/coreos/prometheus-operator/blob/master/Documentation/api.md#alertmanagerendpoints
  ##
  alertingEndpoints: []
  # Alertmanager addresses. If left empty, the Alertmanager deployed alongside is used; see line 40 of helm/prometheus/templates/prometheus.yaml,
  # on GitHub: https://github.com/coreos/prometheus-operator/blob/master/helm/prometheus/templates/prometheus.yaml#L40
  #   - name: ""
  #     namespace: ""
  #     port: 9093
  #     scheme: http
  ...
  ## External URL at which Prometheus will be reachable
  ## As above, the externally reachable address
  externalUrl: ""
  ## List of Secrets in the same namespace as the Prometheus
  ## object, which shall be mounted into the Prometheus Pods.
  ## Ref: https://github.com/coreos/prometheus-operator/blob/master/Documentation/api.md#prometheusspec
  ##
  secrets: []
  ## How long to retain metrics
  ## Retention period of the Prometheus time series database
  retention: 24h
  ## Namespaces to be selected for PrometheusRules discovery.
  ## If unspecified, only the same namespace as the Prometheus object is in is used.
  ## Namespaces from which PrometheusRules are selected; with any: true, PrometheusRule resources are looked up in all namespaces
  ruleNamespaceSelector: {}
  ## any: true
  ## or
  ##
  ## Rules PrometheusRule CRD selector
  ## Ref: https://github.com/coreos/prometheus-operator/blob/master/Documentation/design.md
  ##
  ## 1. If `matchLabels` is used, `rules.additionalLabels` must contain all the labels from
  ## `matchLabels` in order to be matched by Prometheus
  ## 2. If `matchExpressions` is used `rules.additionalLabels` must contain at least one label
  ## from `matchExpressions` in order to be matched by Prometheus
  ## Ref: https://kubernetes.io/docs/concepts/overview/working-with-objects/labels
  ## This is the rule selector mentioned earlier. If left empty, it defaults to prometheus: {{.Values.prometheusLabelValue}},
  ## where prometheusLabelValue is defined in helm/prometheus/values.yaml and defaults to .Release.Name, i.e. the release name (kube-prometheus).
  ## The default label can be changed by editing prometheusLabelValue in helm/prometheus/values.yaml, or custom labels can be defined here.
  ## Once labels are defined here the default no longer applies: for example, after defining comment: prometheus here, the default prometheus: kube-prometheus stops working and Prometheus selects PrometheusRule resources by the new label
  rulesSelector: {}
  # rulesSelector: {
  #   matchExpressions: [{key: prometheus, operator: In, values: [example-rules, example-rules-2]}]
  # }
  ### OR
  # rulesSelector: {
  #   matchLabels: [{role: example-rules}]
  # }
  ## Prometheus alerting & recording rules
  ## Ref: https://prometheus.io/docs/querying/rules/
  ## Ref: https://prometheus.io/docs/alerting/rules/
  ##
  rules:  # PrometheusRule resources can be configured here; usually they are not, and a separate PrometheusRule is written instead so that rules can be configured dynamically
    specifiedInValues: true
    ## What additional rules to be added to the PrometheusRule CRD
    ## You can use this together with `rulesSelector`
    additionalLabels: {}
    # prometheus: example-rules
    # application: etcd
    value: {}
  service:
    ## Port to expose on each node
    ## Only used if service.type is 'NodePort'
    ##
    nodePort: 30900  # as above, the exposed port
    ## Service type
    ##
    type: NodePort  # as above, exposes the Prometheus Service outside the cluster
  ## Service monitors selector
  ## Ref: https://github.com/coreos/prometheus-operator/blob/master/Documentation/design.md
  ## As above, Prometheus by default selects ServiceMonitor resources labeled prometheus: kube-prometheus. Custom labels can be configured here; once they are, the default no longer applies and Prometheus selects ServiceMonitors by the labels defined in the new serviceMonitorsSelector
  serviceMonitorsSelector: {}
  # matchLabels:
  # - comment: prometheus
  # - release: kube-prometheus
  ## ServiceMonitor CRDs to create & be scraped by the Prometheus instance.
  ## Ref: https://github.com/coreos/prometheus-operator/blob/master/Documentation/service-monitor.md
  ##
  serviceMonitors: []  # ServiceMonitor resources can be configured here, but usually are not; see the section How to Write a ServiceMonitor
