基于pytorch的DCGAN人脸数据生成

%matplotlib inline

DCGAN Tutorial

Author: Nathan Inkawhich __

Introduction

This tutorial will give an introduction to DCGANs through an example. We

will train a generative adversarial network (GAN) to generate new

celebrities after showing it pictures of many real celebrities. Most of

the code here is from the dcgan implementation in

pytorch/examples __, and this

document will give a thorough explanation of the implementation and shed

light on how and why this model works. But don’t worry, no prior

knowledge of GANs is required, but it may require a first-timer to spend

some time reasoning about what is actually happening under the hood.

Also, for the sake of time it will help to have a GPU, or two. Lets

start from the beginning.

Generative Adversarial Networks

What is a GAN?

GANs are a framework for teaching a DL model to capture the training

data’s distribution so we can generate new data from that same

distribution. GANs were invented by Ian Goodfellow in 2014 and first

described in the paper `Generative Adversarial

Nets `__.

They are made of two distinct models, a *generator* and a

*discriminator*. The job of the generator is to spawn ‘fake’ images that

look like the training images. The job of the discriminator is to look

at an image and output whether or not it is a real training image or a

fake image from the generator. During training, the generator is

constantly trying to outsmart the discriminator by generating better and

better fakes, while the discriminator is working to become a better

detective and correctly classify the real and fake images. The

equilibrium of this game is when the generator is generating perfect

fakes that look as if they came directly from the training data, and the

discriminator is left to always guess at 50% confidence that the

generator output is real or fake.

Now, lets define some notation to be used throughout tutorial starting

with the discriminator. Let $x$ be data representing an image.

$D(x)$ is the discriminator network which outputs the (scalar)

probability that $x$ came from training data rather than the

generator. Here, since we are dealing with images the input to

$D(x)$ is an image of CHW size 3x64x64. Intuitively, $D(x)$

should be HIGH when $x$ comes from training data and LOW when

$x$ comes from the generator. $D(x)$ can also be thought of

as a traditional binary classifier.

For the generator’s notation, let $z$ be a latent space vector

sampled from a standard normal distribution. $G(z)$ represents the

generator function which maps the latent vector $z$ to data-space.

The goal of $G$ is to estimate the distribution that the training

data comes from ($p_{data}$) so it can generate fake samples from

that estimated distribution ($p_g$).

So, $D(G(z))$ is the probability (scalar) that the output of the

generator $G$ is a real image. As described in `Goodfellow’s

paper `__,

$D$ and $G$ play a minimax game in which $D$ tries to

maximize the probability it correctly classifies reals and fakes

($logD(x)$), and $G$ tries to minimize the probability that

$D$ will predict its outputs are fake ($log(1-D(G(x)))$).

From the paper, the GAN loss function is

\begin{align}\underset{G}{\text{min}} \underset{D}{\text{max}}V(D,G) = \mathbb{E}_{x\sim p_{data}(x)}\big[logD(x)\big] + \mathbb{E}_{z\sim p_{z}(z)}\big[log(1-D(G(z)))\big]\end{align}

In theory, the solution to this minimax game is where

$p_g = p_{data}$, and the discriminator guesses randomly if the

inputs are real or fake. However, the convergence theory of GANs is

still being actively researched and in reality models do not always

train to this point.

What is a DCGAN?

A DCGAN is a direct extension of the GAN described above, except that it

explicitly uses convolutional and convolutional-transpose layers in the

discriminator and generator, respectively. It was first described by

Radford et. al. in the paper Unsupervised Representation Learning With Deep Convolutional Generative Adversarial Networks . The discriminator

is made up of strided

convolution

layers, batch norm __

layers, and

LeakyReLU __

activations. The input is a 3x64x64 input image and the output is a

scalar probability that the input is from the real data distribution.

The generator is comprised of

convolutional-transpose __

layers, batch norm layers, and

ReLU __ activations. The

input is a latent vector, z z z, that is drawn from a standard

normal distribution and the output is a 3x64x64 RGB image. The strided

conv-transpose layers allow the latent vector to be transformed into a

volume with the same shape as an image. In the paper, the authors also

give some tips about how to setup the optimizers, how to calculate the

loss functions, and how to initialize the model weights, all of which

will be explained in the coming sections.

from __future__ import print_function

#%matplotlib inline

import argparse

import os

import random

import torch

import torch.nn as nn

import torch.nn.parallel

import torch.backends.cudnn as cudnn

import torch.optim as optim

import torch.utils.data

import torchvision.datasets as dset

import torchvision.transforms as transforms

import torchvision.utils as vutils

import numpy as np

import matplotlib.pyplot as plt

import matplotlib.animation as animation

from IPython.display import HTML

# Set random seed for reproducibility

manualSeed = 999

#manualSeed = random.randint(1, 10000) # use if you want new results

print("Random Seed: ", manualSeed)

random.seed(manualSeed)

torch.manual_seed(manualSeed)

Random Seed: 999

Inputs

Let’s define some inputs for the run:

- dataroot - the path to the root of the dataset folder. We will

talk more about the dataset in the next section - workers - the number of worker threads for loading the data with

the DataLoader - batch_size - the batch size used in training. The DCGAN paper

uses a batch size of 128 - image_size - the spatial size of the images used for training.

This implementation defaults to 64x64. If another size is desired,

the structures of D and G must be changed. See

here__ for more

details - nc - number of color channels in the input images. For color

images this is 3 - nz - length of latent vector

- ngf - relates to the depth of feature maps carried through the

generator - ndf - sets the depth of feature maps propagated through the

discriminator - num_epochs - number of training epochs to run. Training for

longer will probably lead to better results but will also take much

longer - lr - learning rate for training. As described in the DCGAN paper,

this number should be 0.0002 - beta1 - beta1 hyperparameter for Adam optimizers. As described in

paper, this number should be 0.5 - ngpu - number of GPUs available. If this is 0, code will run in

CPU mode. If this number is greater than 0 it will run on that number

of GPUs

# Root directory for dataset

dataroot = "data/celeba"

# Number of workers for dataloader

workers = 2

# Batch size during training

batch_size = 128

# Spatial size of training images. All images will be resized to this

# size using a transformer.

image_size = 64

# Number of channels in the training images. For color images this is 3

nc = 3

# Size of z latent vector (i.e. size of generator input)

nz = 100

# Size of feature maps in generator

ngf = 64

# Size of feature maps in discriminator

ndf = 64

# Number of training epochs

num_epochs = 5

# Learning rate for optimizers

lr = 0.0002

# Beta1 hyperparam for Adam optimizers

beta1 = 0.5

# Number of GPUs available. Use 0 for CPU mode.

ngpu = 1

Data

In this tutorial we will use the Celeb-A Faces dataset __ which can

be downloaded at the linked site, or in Google Drive __.

The dataset will download as a file named img_align_celeba.zip. Once

downloaded, create a directory named celeba and extract the zip file

into that directory. Then, set the dataroot input for this notebook to

the celeba directory you just created. The resulting directory

structure should be:

::

/path/to/celeba

-> img_align_celeba

-> 188242.jpg

-> 173822.jpg

-> 284702.jpg

-> 537394.jpg

…

This is an important step because we will be using the ImageFolder

dataset class, which requires there to be subdirectories in the

dataset’s root folder. Now, we can create the dataset, create the

dataloader, set the device to run on, and finally visualize some of the

training data.

# We can use an image folder dataset the way we have it setup.

# Create the dataset

dataset = dset.ImageFolder(root=dataroot,

transform=transforms.Compose([

transforms.Resize(image_size),

transforms.CenterCrop(image_size),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)),

]))

# Create the dataloader

dataloader = torch.utils.data.DataLoader(dataset, batch_size=batch_size,

shuffle=True, num_workers=workers)

# Decide which device we want to run on

device = torch.device("cuda:0" if (torch.cuda.is_available() and ngpu > 0) else "cpu")

# Plot some training images

real_batch = next(iter(dataloader))

plt.figure(figsize=(8,8))

plt.axis("off")

plt.title("Training Images")

plt.imshow(np.transpose(vutils.make_grid(real_batch[0].to(device)[:64], padding=2, normalize=True).cpu(),(1,2,0)))

Implementation

With our input parameters set and the dataset prepared, we can now get

into the implementation. We will start with the weigth initialization

strategy, then talk about the generator, discriminator, loss functions,

and training loop in detail.

Weight Initialization

From the DCGAN paper, the authors specify that all model weights shall

be randomly initialized from a Normal distribution with mean=0,

stdev=0.02. The ``weights_init`` function takes an initialized model as

input and reinitializes all convolutional, convolutional-transpose, and

batch normalization layers to meet this criteria. This function is

applied to the models immediately after initialization.

```python

# custom weights initialization called on netG and netD

def weights_init(m):

classname = m.__class__.__name__

if classname.find('Conv') != -1:

nn.init.normal_(m.weight.data, 0.0, 0.02)

elif classname.find('BatchNorm') != -1:

nn.init.normal_(m.weight.data, 1.0, 0.02)

nn.init.constant_(m.bias.data, 0)

```

Generator

~~~~~~~~~

The generator, $G$, is designed to map the latent space vector

($z$) to data-space. Since our data are images, converting

$z$ to data-space means ultimately creating a RGB image with the

same size as the training images (i.e. 3x64x64). In practice, this is

accomplished through a series of strided two dimensional convolutional

transpose layers, each paired with a 2d batch norm layer and a relu

activation. The output of the generator is fed through a tanh function

to return it to the input data range of $[-1,1]$. It is worth

noting the existence of the batch norm functions after the

conv-transpose layers, as this is a critical contribution of the DCGAN

paper. These layers help with the flow of gradients during training. An

image of the generator from the DCGAN paper is shown below.

.. figure:: /_static/img/dcgan_generator.png

:alt: dcgan_generator

Notice, the how the inputs we set in the input section (*nz*, *ngf*, and

*nc*) influence the generator architecture in code. *nz* is the length

of the z input vector, *ngf* relates to the size of the feature maps

that are propagated through the generator, and *nc* is the number of

channels in the output image (set to 3 for RGB images). Below is the

code for the generator.

```python

# Generator Code

class Generator(nn.Module):

def __init__(self, ngpu):

super(Generator, self).__init__()

self.ngpu = ngpu

self.main = nn.Sequential(

# input is Z, going into a convolution

nn.ConvTranspose2d( nz, ngf * 8, 4, 1, 0, bias=False),

nn.BatchNorm2d(ngf * 8),

nn.ReLU(True),

# state size. (ngf*8) x 4 x 4

nn.ConvTranspose2d(ngf * 8, ngf * 4, 4, 2, 1, bias=False),

nn.BatchNorm2d(ngf * 4),

nn.ReLU(True),

# state size. (ngf*4) x 8 x 8

nn.ConvTranspose2d( ngf * 4, ngf * 2, 4, 2, 1, bias=False),

nn.BatchNorm2d(ngf * 2),

nn.ReLU(True),

# state size. (ngf*2) x 16 x 16

nn.ConvTranspose2d( ngf * 2, ngf, 4, 2, 1, bias=False),

nn.BatchNorm2d(ngf),

nn.ReLU(True),

# state size. (ngf) x 32 x 32

nn.ConvTranspose2d( ngf, nc, 4, 2, 1, bias=False),

nn.Tanh()

# state size. (nc) x 64 x 64

)

def forward(self, input):

return self.main(input)

```

Now, we can instantiate the generator and apply the ``weights_init``

function. Check out the printed model to see how the generator object is

structured.

```python

# Create the generator

netG = Generator(ngpu).to(device)

# Handle multi-gpu if desired

if (device.type == 'cuda') and (ngpu > 1):

netG = nn.DataParallel(netG, list(range(ngpu)))

# Apply the weights_init function to randomly initialize all weights

# to mean=0, stdev=0.2.

netG.apply(weights_init)

# Print the model

print(netG)

```

Generator(

(main): Sequential(

(0): ConvTranspose2d(100, 512, kernel_size=(4, 4), stride=(1, 1), bias=False)

(1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace=True)

(3): ConvTranspose2d(512, 256, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(4): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(5): ReLU(inplace=True)

(6): ConvTranspose2d(256, 128, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(7): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(8): ReLU(inplace=True)

(9): ConvTranspose2d(128, 64, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(10): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(11): ReLU(inplace=True)

(12): ConvTranspose2d(64, 3, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(13): Tanh()

)

)

Discriminator

~~~~~~~~~~~~~

As mentioned, the discriminator, $D$, is a binary classification

network that takes an image as input and outputs a scalar probability

that the input image is real (as opposed to fake). Here, $D$ takes

a 3x64x64 input image, processes it through a series of Conv2d,

BatchNorm2d, and LeakyReLU layers, and outputs the final probability

through a Sigmoid activation function. This architecture can be extended

with more layers if necessary for the problem, but there is significance

to the use of the strided convolution, BatchNorm, and LeakyReLUs. The

DCGAN paper mentions it is a good practice to use strided convolution

rather than pooling to downsample because it lets the network learn its

own pooling function. Also batch norm and leaky relu functions promote

healthy gradient flow which is critical for the learning process of both

$G$ and $D$.

Discriminator Code

```python

class Discriminator(nn.Module):

def __init__(self, ngpu):

super(Discriminator, self).__init__()

self.ngpu = ngpu

self.main = nn.Sequential(

# input is (nc) x 64 x 64

nn.Conv2d(nc, ndf, 4, 2, 1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

# state size. (ndf) x 32 x 32

nn.Conv2d(ndf, ndf * 2, 4, 2, 1, bias=False),

nn.BatchNorm2d(ndf * 2),

nn.LeakyReLU(0.2, inplace=True),

# state size. (ndf*2) x 16 x 16

nn.Conv2d(ndf * 2, ndf * 4, 4, 2, 1, bias=False),

nn.BatchNorm2d(ndf * 4),

nn.LeakyReLU(0.2, inplace=True),

# state size. (ndf*4) x 8 x 8

nn.Conv2d(ndf * 4, ndf * 8, 4, 2, 1, bias=False),

nn.BatchNorm2d(ndf * 8),

nn.LeakyReLU(0.2, inplace=True),

# state size. (ndf*8) x 4 x 4

nn.Conv2d(ndf * 8, 1, 4, 1, 0, bias=False),

nn.Sigmoid()

)

def forward(self, input):

return self.main(input)

```

Now, as with the generator, we can create the discriminator, apply the

``weights_init`` function, and print the model’s structure.

```python

# Create the Discriminator

netD = Discriminator(ngpu).to(device)

# Handle multi-gpu if desired

if (device.type == 'cuda') and (ngpu > 1):

netD = nn.DataParallel(netD, list(range(ngpu)))

# Apply the weights_init function to randomly initialize all weights

# to mean=0, stdev=0.2.

netD.apply(weights_init)

# Print the model

print(netD)

```

Discriminator(

(main): Sequential(

(0): Conv2d(3, 64, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(1): LeakyReLU(negative_slope=0.2, inplace=True)

(2): Conv2d(64, 128, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(3): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(4): LeakyReLU(negative_slope=0.2, inplace=True)

(5): Conv2d(128, 256, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(6): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(7): LeakyReLU(negative_slope=0.2, inplace=True)

(8): Conv2d(256, 512, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(9): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(10): LeakyReLU(negative_slope=0.2, inplace=True)

(11): Conv2d(512, 1, kernel_size=(4, 4), stride=(1, 1), bias=False)

(12): Sigmoid()

)

)

Loss Functions and Optimizers

With D D D and G G G setup, we can specify how they learn

through the loss functions and optimizers. We will use the Binary Cross

Entropy loss

(BCELoss __)

function which is defined in PyTorch as:

\begin{align}\ell(x, y) = L = {l_1,\dots,l_N}^\top, \quad l_n = - \left[ y_n \cdot \log x_n + (1 - y_n) \cdot \log (1 - x_n) \right]\end{align}

Notice how this function provides the calculation of both log components

in the objective function (i.e. l o g ( D ( x ) ) log(D(x)) log(D(x)) and

l o g ( 1 − D ( G ( z ) ) ) log(1-D(G(z))) log(1−D(G(z)))). We can specify what part of the BCE equation to

use with the y y y input. This is accomplished in the training loop

which is coming up soon, but it is important to understand how we can

choose which component we wish to calculate just by changing y y y

(i.e. GT labels).

Next, we define our real label as 1 and the fake label as 0. These

labels will be used when calculating the losses of D D D and

G G G, and this is also the convention used in the original GAN

paper. Finally, we set up two separate optimizers, one for D D D and

one for G G G. As specified in the DCGAN paper, both are Adam

optimizers with learning rate 0.0002 and Beta1 = 0.5. For keeping track

of the generator’s learning progression, we will generate a fixed batch

of latent vectors that are drawn from a Gaussian distribution

(i.e. fixed_noise) . In the training loop, we will periodically input

this fixed_noise into G G G, and over the iterations we will see

images form out of the noise.

# Initialize BCELoss function

criterion = nn.BCELoss()

# Create batch of latent vectors that we will use to visualize

# the progression of the generator

fixed_noise = torch.randn(64, nz, 1, 1, device=device)

# Establish convention for real and fake labels during training

real_label = 1

fake_label = 0

# Setup Adam optimizers for both G and D

optimizerD = optim.Adam(netD.parameters(), lr=lr, betas=(beta1, 0.999))

optimizerG = optim.Adam(netG.parameters(), lr=lr, betas=(beta1, 0.999))

Training

Finally, now that we have all of the parts of the GAN framework defined,

we can train it. Be mindful that training GANs is somewhat of an art

form, as incorrect hyperparameter settings lead to mode collapse with

little explanation of what went wrong. Here, we will closely follow

Algorithm 1 from Goodfellow’s paper, while abiding by some of the best

practices shown in `ganhacks `__.

Namely, we will “construct different mini-batches for real and fake”

images, and also adjust G’s objective function to maximize

$logD(G(z))$. Training is split up into two main parts. Part 1

updates the Discriminator and Part 2 updates the Generator.

**Part 1 - Train the Discriminator**

Recall, the goal of training the discriminator is to maximize the

probability of correctly classifying a given input as real or fake. In

terms of Goodfellow, we wish to “update the discriminator by ascending

its stochastic gradient”. Practically, we want to maximize

$log(D(x)) + log(1-D(G(z)))$. Due to the separate mini-batch

suggestion from ganhacks, we will calculate this in two steps. First, we

will construct a batch of real samples from the training set, forward

pass through $D$, calculate the loss ($log(D(x))$), then

calculate the gradients in a backward pass. Secondly, we will construct

a batch of fake samples with the current generator, forward pass this

batch through $D$, calculate the loss ($log(1-D(G(z)))$),

and *accumulate* the gradients with a backward pass. Now, with the

gradients accumulated from both the all-real and all-fake batches, we

call a step of the Discriminator’s optimizer.

**Part 2 - Train the Generator**

As stated in the original paper, we want to train the Generator by

minimizing $log(1-D(G(z)))$ in an effort to generate better fakes.

As mentioned, this was shown by Goodfellow to not provide sufficient

gradients, especially early in the learning process. As a fix, we

instead wish to maximize $log(D(G(z)))$. In the code we accomplish

this by: classifying the Generator output from Part 1 with the

Discriminator, computing G’s loss *using real labels as GT*, computing

G’s gradients in a backward pass, and finally updating G’s parameters

with an optimizer step. It may seem counter-intuitive to use the real

labels as GT labels for the loss function, but this allows us to use the

$log(x)$ part of the BCELoss (rather than the $log(1-x)$

part) which is exactly what we want.

Finally, we will do some statistic reporting and at the end of each

epoch we will push our fixed_noise batch through the generator to

visually track the progress of G’s training. The training statistics

reported are:

- **Loss_D** - discriminator loss calculated as the sum of losses for

the all real and all fake batches ($log(D(x)) + log(D(G(z)))$).

- **Loss_G** - generator loss calculated as $log(D(G(z)))$

- **D(x)** - the average output (across the batch) of the discriminator

for the all real batch. This should start close to 1 then

theoretically converge to 0.5 when G gets better. Think about why

this is.

- **D(G(z))** - average discriminator outputs for the all fake batch.

The first number is before D is updated and the second number is

after D is updated. These numbers should start near 0 and converge to

0.5 as G gets better. Think about why this is.

**Note:** This step might take a while, depending on how many epochs you

run and if you removed some data from the dataset.

# Training Loop

# Lists to keep track of progress

img_list = []

G_losses = []

D_losses = []

iters = 0

print("Starting Training Loop...")

# For each epoch

for epoch in range(num_epochs):

# For each batch in the dataloader

for i, data in enumerate(dataloader, 0):

############################

# (1) Update D network: maximize log(D(x)) + log(1 - D(G(z)))

###########################

## Train with all-real batch

netD.zero_grad()

# Format batch

real_cpu = data[0].to(device)

b_size = real_cpu.size(0)

label = torch.full((b_size,), real_label, device=device)

# Forward pass real batch through D

output = netD(real_cpu).view(-1)

# Calculate loss on all-real batch

errD_real = criterion(output, label)

# Calculate gradients for D in backward pass

errD_real.backward()

D_x = output.mean().item()

## Train with all-fake batch

# Generate batch of latent vectors

noise = torch.randn(b_size, nz, 1, 1, device=device)

# Generate fake image batch with G

fake = netG(noise)

label.fill_(fake_label)

# Classify all fake batch with D

output = netD(fake.detach()).view(-1)

# Calculate D's loss on the all-fake batch

errD_fake = criterion(output, label)

# Calculate the gradients for this batch

errD_fake.backward()

D_G_z1 = output.mean().item()

# Add the gradients from the all-real and all-fake batches

errD = errD_real + errD_fake

# Update D

optimizerD.step()

############################

# (2) Update G network: maximize log(D(G(z)))

###########################

netG.zero_grad()

label.fill_(real_label) # fake labels are real for generator cost

# Since we just updated D, perform another forward pass of all-fake batch through D

output = netD(fake).view(-1)

# Calculate G's loss based on this output

errG = criterion(output, label)

# Calculate gradients for G

errG.backward()

D_G_z2 = output.mean().item()

# Update G

optimizerG.step()

# Output training stats

if i % 50 == 0:

print('[%d/%d][%d/%d]\tLoss_D: %.4f\tLoss_G: %.4f\tD(x): %.4f\tD(G(z)): %.4f / %.4f'

% (epoch, num_epochs, i, len(dataloader),

errD.item(), errG.item(), D_x, D_G_z1, D_G_z2))

# Save Losses for plotting later

G_losses.append(errG.item())

D_losses.append(errD.item())

# Check how the generator is doing by saving G's output on fixed_noise

if (iters % 500 == 0) or ((epoch == num_epochs-1) and (i == len(dataloader)-1)):

with torch.no_grad():

fake = netG(fixed_noise).detach().cpu()

img_list.append(vutils.make_grid(fake, padding=2, normalize=True))

iters += 1

Starting Training Loop...

/opt/conda/conda-bld/pytorch_1587428091666/work/aten/src/ATen/native/TensorFactories.cpp:361: UserWarning: Deprecation warning: In a future PyTorch release torch.full will no longer return tensors of floating dtype by default. Instead, a bool fill_value will return a tensor of torch.bool dtype, and an integral fill_value will return a tensor of torch.long dtype. Set the optional `dtype` or `out` arguments to suppress this warning.

[0/5][0/1567] Loss_D: 2.1659 Loss_G: 4.8325 D(x): 0.5253 D(G(z)): 0.6765 / 0.0149

[0/5][50/1567] Loss_D: 0.0725 Loss_G: 12.9152 D(x): 0.9461 D(G(z)): 0.0012 / 0.0000

[0/5][100/1567] Loss_D: 0.4784 Loss_G: 9.7521 D(x): 0.9363 D(G(z)): 0.2498 / 0.0002

[0/5][150/1567] Loss_D: 0.9729 Loss_G: 4.8527 D(x): 0.6332 D(G(z)): 0.0643 / 0.0203

[0/5][200/1567] Loss_D: 1.5795 Loss_G: 3.1628 D(x): 0.3932 D(G(z)): 0.0064 / 0.0651

[0/5][250/1567] Loss_D: 0.2961 Loss_G: 3.6781 D(x): 0.9172 D(G(z)): 0.1499 / 0.0599

[0/5][300/1567] Loss_D: 0.8111 Loss_G: 7.3380 D(x): 0.8501 D(G(z)): 0.3902 / 0.0023

[0/5][350/1567] Loss_D: 0.3878 Loss_G: 4.9385 D(x): 0.8003 D(G(z)): 0.0799 / 0.0122

[0/5][400/1567] Loss_D: 0.3620 Loss_G: 5.3288 D(x): 0.8519 D(G(z)): 0.1378 / 0.0121

[0/5][450/1567] Loss_D: 0.2965 Loss_G: 4.2682 D(x): 0.8896 D(G(z)): 0.1294 / 0.0216

[0/5][500/1567] Loss_D: 0.5334 Loss_G: 2.9145 D(x): 0.7111 D(G(z)): 0.0755 / 0.0788

[0/5][550/1567] Loss_D: 0.2940 Loss_G: 4.9582 D(x): 0.9230 D(G(z)): 0.1405 / 0.0191

[0/5][600/1567] Loss_D: 0.3305 Loss_G: 5.1357 D(x): 0.8063 D(G(z)): 0.0506 / 0.0157

[0/5][650/1567] Loss_D: 0.3909 Loss_G: 4.6094 D(x): 0.8939 D(G(z)): 0.1885 / 0.0254

[0/5][700/1567] Loss_D: 0.8562 Loss_G: 7.3044 D(x): 0.5562 D(G(z)): 0.0026 / 0.0026

[0/5][750/1567] Loss_D: 0.5373 Loss_G: 7.7618 D(x): 0.9740 D(G(z)): 0.3496 / 0.0013

[0/5][800/1567] Loss_D: 0.7745 Loss_G: 3.5268 D(x): 0.5872 D(G(z)): 0.0334 / 0.0570

[0/5][850/1567] Loss_D: 0.6165 Loss_G: 5.0756 D(x): 0.9660 D(G(z)): 0.3899 / 0.0138

[0/5][900/1567] Loss_D: 0.3157 Loss_G: 3.4448 D(x): 0.8157 D(G(z)): 0.0528 / 0.0527

[0/5][950/1567] Loss_D: 0.4068 Loss_G: 5.2599 D(x): 0.8900 D(G(z)): 0.2190 / 0.0079

[0/5][1000/1567] Loss_D: 0.4471 Loss_G: 4.9560 D(x): 0.9502 D(G(z)): 0.2832 / 0.0165

[0/5][1050/1567] Loss_D: 0.2992 Loss_G: 2.8899 D(x): 0.8659 D(G(z)): 0.1113 / 0.0815

[0/5][1100/1567] Loss_D: 0.6775 Loss_G: 4.1491 D(x): 0.7165 D(G(z)): 0.2003 / 0.0361

[0/5][1150/1567] Loss_D: 0.4975 Loss_G: 3.7420 D(x): 0.7965 D(G(z)): 0.1760 / 0.0368

[0/5][1200/1567] Loss_D: 0.5209 Loss_G: 3.9092 D(x): 0.7924 D(G(z)): 0.1702 / 0.0407

[0/5][1250/1567] Loss_D: 0.7658 Loss_G: 5.8661 D(x): 0.9198 D(G(z)): 0.4341 / 0.0051

[0/5][1300/1567] Loss_D: 0.6182 Loss_G: 2.3502 D(x): 0.6498 D(G(z)): 0.0416 / 0.1523

[0/5][1350/1567] Loss_D: 1.2259 Loss_G: 5.9051 D(x): 0.8689 D(G(z)): 0.5534 / 0.0116

[0/5][1400/1567] Loss_D: 0.9445 Loss_G: 2.0345 D(x): 0.4958 D(G(z)): 0.0150 / 0.2204

[0/5][1450/1567] Loss_D: 0.5105 Loss_G: 3.1081 D(x): 0.6893 D(G(z)): 0.0470 / 0.0914

[0/5][1500/1567] Loss_D: 0.6236 Loss_G: 2.9756 D(x): 0.7099 D(G(z)): 0.1615 / 0.0785

[0/5][1550/1567] Loss_D: 0.7406 Loss_G: 6.7447 D(x): 0.9440 D(G(z)): 0.4425 / 0.0019

[1/5][0/1567] Loss_D: 1.0153 Loss_G: 8.2250 D(x): 0.9623 D(G(z)): 0.5492 / 0.0008

[1/5][50/1567] Loss_D: 0.5226 Loss_G: 5.2949 D(x): 0.9142 D(G(z)): 0.3052 / 0.0095

[1/5][100/1567] Loss_D: 4.2235 Loss_G: 10.9526 D(x): 0.9861 D(G(z)): 0.9360 / 0.0001

[1/5][150/1567] Loss_D: 0.5008 Loss_G: 4.6801 D(x): 0.9049 D(G(z)): 0.2883 / 0.0173

[1/5][200/1567] Loss_D: 0.4509 Loss_G: 5.3929 D(x): 0.8955 D(G(z)): 0.2458 / 0.0079

[1/5][250/1567] Loss_D: 0.4885 Loss_G: 2.7514 D(x): 0.7086 D(G(z)): 0.0474 / 0.0915

[1/5][300/1567] Loss_D: 0.5356 Loss_G: 2.6585 D(x): 0.7270 D(G(z)): 0.1288 / 0.1004

[1/5][350/1567] Loss_D: 0.8461 Loss_G: 0.9142 D(x): 0.5377 D(G(z)): 0.0342 / 0.4816

[1/5][400/1567] Loss_D: 0.4752 Loss_G: 2.4987 D(x): 0.7422 D(G(z)): 0.0819 / 0.1183

[1/5][450/1567] Loss_D: 0.4779 Loss_G: 3.2457 D(x): 0.7237 D(G(z)): 0.0604 / 0.0788

[1/5][500/1567] Loss_D: 0.3773 Loss_G: 3.4385 D(x): 0.8374 D(G(z)): 0.1532 / 0.0463

[1/5][550/1567] Loss_D: 0.5182 Loss_G: 3.9504 D(x): 0.6710 D(G(z)): 0.0175 / 0.0360

[1/5][600/1567] Loss_D: 0.3414 Loss_G: 3.3448 D(x): 0.7842 D(G(z)): 0.0488 / 0.0585

[1/5][650/1567] Loss_D: 0.4459 Loss_G: 3.4768 D(x): 0.8273 D(G(z)): 0.1939 / 0.0443

[1/5][700/1567] Loss_D: 1.3107 Loss_G: 0.8703 D(x): 0.3642 D(G(z)): 0.0310 / 0.4835

[1/5][750/1567] Loss_D: 0.4869 Loss_G: 3.3692 D(x): 0.8624 D(G(z)): 0.2562 / 0.0461

[1/5][800/1567] Loss_D: 0.5295 Loss_G: 3.6113 D(x): 0.6611 D(G(z)): 0.0210 / 0.0438

[1/5][850/1567] Loss_D: 0.4660 Loss_G: 2.9037 D(x): 0.7429 D(G(z)): 0.1077 / 0.0790

[1/5][900/1567] Loss_D: 0.6548 Loss_G: 3.9572 D(x): 0.8837 D(G(z)): 0.3717 / 0.0275

[1/5][950/1567] Loss_D: 1.7882 Loss_G: 1.0133 D(x): 0.2506 D(G(z)): 0.0065 / 0.4587

[1/5][1000/1567] Loss_D: 0.4816 Loss_G: 3.2230 D(x): 0.7838 D(G(z)): 0.1672 / 0.0636

[1/5][1050/1567] Loss_D: 0.6412 Loss_G: 2.2602 D(x): 0.8479 D(G(z)): 0.3017 / 0.1601

[1/5][1100/1567] Loss_D: 1.3924 Loss_G: 6.9759 D(x): 0.9619 D(G(z)): 0.6918 / 0.0021

[1/5][1150/1567] Loss_D: 0.6000 Loss_G: 2.7910 D(x): 0.6660 D(G(z)): 0.0650 / 0.0931

[1/5][1200/1567] Loss_D: 0.2818 Loss_G: 3.1817 D(x): 0.9106 D(G(z)): 0.1567 / 0.0585

[1/5][1250/1567] Loss_D: 1.2826 Loss_G: 5.8496 D(x): 0.9246 D(G(z)): 0.6465 / 0.0058

[1/5][1300/1567] Loss_D: 0.4234 Loss_G: 3.0132 D(x): 0.8583 D(G(z)): 0.2126 / 0.0735

[1/5][1350/1567] Loss_D: 0.3174 Loss_G: 3.0332 D(x): 0.9053 D(G(z)): 0.1781 / 0.0714

[1/5][1400/1567] Loss_D: 0.7698 Loss_G: 1.4693 D(x): 0.5879 D(G(z)): 0.1052 / 0.3039

[1/5][1450/1567] Loss_D: 0.6258 Loss_G: 0.6535 D(x): 0.6344 D(G(z)): 0.0806 / 0.6098

[1/5][1500/1567] Loss_D: 0.6141 Loss_G: 4.1689 D(x): 0.9008 D(G(z)): 0.3616 / 0.0211

[1/5][1550/1567] Loss_D: 0.4335 Loss_G: 2.7944 D(x): 0.7994 D(G(z)): 0.1607 / 0.0807

[2/5][0/1567] Loss_D: 1.1596 Loss_G: 5.1119 D(x): 0.9492 D(G(z)): 0.6028 / 0.0114

[2/5][50/1567] Loss_D: 0.4913 Loss_G: 3.5129 D(x): 0.7922 D(G(z)): 0.1916 / 0.0443

[2/5][100/1567] Loss_D: 0.5414 Loss_G: 2.7824 D(x): 0.8043 D(G(z)): 0.2332 / 0.0845

[2/5][150/1567] Loss_D: 0.4686 Loss_G: 1.9839 D(x): 0.7234 D(G(z)): 0.0878 / 0.1734

[2/5][200/1567] Loss_D: 0.8191 Loss_G: 3.1437 D(x): 0.7462 D(G(z)): 0.3407 / 0.0605

[2/5][250/1567] Loss_D: 0.5718 Loss_G: 2.8979 D(x): 0.6697 D(G(z)): 0.0961 / 0.0892

[2/5][300/1567] Loss_D: 0.4787 Loss_G: 2.9174 D(x): 0.7941 D(G(z)): 0.1887 / 0.0726

[2/5][350/1567] Loss_D: 0.7060 Loss_G: 0.9351 D(x): 0.5818 D(G(z)): 0.0757 / 0.4493

[2/5][400/1567] Loss_D: 0.4998 Loss_G: 2.4127 D(x): 0.7822 D(G(z)): 0.1959 / 0.1159

[2/5][450/1567] Loss_D: 0.5721 Loss_G: 2.2826 D(x): 0.7614 D(G(z)): 0.2250 / 0.1320

[2/5][500/1567] Loss_D: 1.0770 Loss_G: 3.5311 D(x): 0.8497 D(G(z)): 0.5341 / 0.0453

[2/5][550/1567] Loss_D: 0.9587 Loss_G: 2.0706 D(x): 0.6015 D(G(z)): 0.2855 / 0.1619

[2/5][600/1567] Loss_D: 0.9088 Loss_G: 0.9746 D(x): 0.5216 D(G(z)): 0.1243 / 0.4250

[2/5][650/1567] Loss_D: 0.5082 Loss_G: 3.1175 D(x): 0.8732 D(G(z)): 0.2840 / 0.0571

[2/5][700/1567] Loss_D: 2.3873 Loss_G: 3.1888 D(x): 0.9324 D(G(z)): 0.8345 / 0.0715

[2/5][750/1567] Loss_D: 0.6265 Loss_G: 2.2966 D(x): 0.6355 D(G(z)): 0.1003 / 0.1327

[2/5][800/1567] Loss_D: 0.5677 Loss_G: 1.8367 D(x): 0.7000 D(G(z)): 0.1488 / 0.1967

[2/5][850/1567] Loss_D: 0.5061 Loss_G: 2.1574 D(x): 0.7548 D(G(z)): 0.1600 / 0.1491

[2/5][900/1567] Loss_D: 0.5903 Loss_G: 2.7046 D(x): 0.7996 D(G(z)): 0.2734 / 0.0932

[2/5][950/1567] Loss_D: 1.2207 Loss_G: 0.9973 D(x): 0.3934 D(G(z)): 0.0880 / 0.4310

[2/5][1000/1567] Loss_D: 0.6456 Loss_G: 2.2442 D(x): 0.6200 D(G(z)): 0.0875 / 0.1431

[2/5][1050/1567] Loss_D: 1.0886 Loss_G: 0.7832 D(x): 0.4076 D(G(z)): 0.0440 / 0.5071

[2/5][1100/1567] Loss_D: 0.4480 Loss_G: 2.3673 D(x): 0.8514 D(G(z)): 0.2267 / 0.1308

[2/5][1150/1567] Loss_D: 0.5068 Loss_G: 2.0385 D(x): 0.7478 D(G(z)): 0.1643 / 0.1567

[2/5][1200/1567] Loss_D: 0.5830 Loss_G: 3.5261 D(x): 0.8727 D(G(z)): 0.3260 / 0.0396

[2/5][1250/1567] Loss_D: 0.5778 Loss_G: 1.4222 D(x): 0.6517 D(G(z)): 0.0862 / 0.2932

[2/5][1300/1567] Loss_D: 0.6409 Loss_G: 3.1975 D(x): 0.8424 D(G(z)): 0.3423 / 0.0548

[2/5][1350/1567] Loss_D: 0.5136 Loss_G: 2.9020 D(x): 0.8700 D(G(z)): 0.2737 / 0.0769

[2/5][1400/1567] Loss_D: 0.6284 Loss_G: 3.0575 D(x): 0.7967 D(G(z)): 0.2912 / 0.0604

[2/5][1450/1567] Loss_D: 1.4433 Loss_G: 0.6274 D(x): 0.3156 D(G(z)): 0.0563 / 0.5881

[2/5][1500/1567] Loss_D: 1.1383 Loss_G: 1.5920 D(x): 0.4010 D(G(z)): 0.0324 / 0.2603

[2/5][1550/1567] Loss_D: 1.0544 Loss_G: 3.7734 D(x): 0.9199 D(G(z)): 0.5679 / 0.0348

[3/5][0/1567] Loss_D: 0.6129 Loss_G: 2.3798 D(x): 0.7758 D(G(z)): 0.2622 / 0.1178

[3/5][50/1567] Loss_D: 1.0150 Loss_G: 1.1159 D(x): 0.4285 D(G(z)): 0.0279 / 0.3954

[3/5][100/1567] Loss_D: 0.7960 Loss_G: 2.9441 D(x): 0.6488 D(G(z)): 0.2342 / 0.0811

[3/5][150/1567] Loss_D: 0.7036 Loss_G: 1.0664 D(x): 0.6019 D(G(z)): 0.1089 / 0.3974

[3/5][200/1567] Loss_D: 0.3800 Loss_G: 2.8119 D(x): 0.8059 D(G(z)): 0.1250 / 0.0813

[3/5][250/1567] Loss_D: 0.5637 Loss_G: 1.4856 D(x): 0.6783 D(G(z)): 0.1155 / 0.2617

[3/5][300/1567] Loss_D: 0.5941 Loss_G: 2.8845 D(x): 0.8076 D(G(z)): 0.2765 / 0.0768

[3/5][350/1567] Loss_D: 0.6790 Loss_G: 1.3125 D(x): 0.6115 D(G(z)): 0.1025 / 0.3247

[3/5][400/1567] Loss_D: 1.3248 Loss_G: 4.6937 D(x): 0.9559 D(G(z)): 0.6608 / 0.0153

[3/5][450/1567] Loss_D: 0.6089 Loss_G: 2.5706 D(x): 0.6176 D(G(z)): 0.0490 / 0.1085

[3/5][500/1567] Loss_D: 0.6205 Loss_G: 1.8407 D(x): 0.6519 D(G(z)): 0.1072 / 0.1874

[3/5][550/1567] Loss_D: 1.8101 Loss_G: 0.7733 D(x): 0.2254 D(G(z)): 0.0249 / 0.5126

[3/5][600/1567] Loss_D: 0.4991 Loss_G: 3.2251 D(x): 0.8315 D(G(z)): 0.2383 / 0.0529

[3/5][650/1567] Loss_D: 1.0529 Loss_G: 3.0567 D(x): 0.8478 D(G(z)): 0.5228 / 0.0689

[3/5][700/1567] Loss_D: 0.9430 Loss_G: 1.2749 D(x): 0.5018 D(G(z)): 0.1144 / 0.3263

[3/5][750/1567] Loss_D: 0.6954 Loss_G: 1.8310 D(x): 0.6068 D(G(z)): 0.1138 / 0.2061

[3/5][800/1567] Loss_D: 1.0832 Loss_G: 4.3888 D(x): 0.9131 D(G(z)): 0.5860 / 0.0163

[3/5][850/1567] Loss_D: 0.5538 Loss_G: 3.0146 D(x): 0.8925 D(G(z)): 0.3258 / 0.0663

[3/5][900/1567] Loss_D: 0.5791 Loss_G: 1.9395 D(x): 0.7682 D(G(z)): 0.2320 / 0.1699

[3/5][950/1567] Loss_D: 0.8190 Loss_G: 0.8678 D(x): 0.5284 D(G(z)): 0.0756 / 0.4602

[3/5][1000/1567] Loss_D: 0.9801 Loss_G: 2.8098 D(x): 0.7046 D(G(z)): 0.3957 / 0.0805

[3/5][1050/1567] Loss_D: 0.9347 Loss_G: 2.7753 D(x): 0.7093 D(G(z)): 0.3738 / 0.0884

[3/5][1100/1567] Loss_D: 0.4949 Loss_G: 2.7010 D(x): 0.8318 D(G(z)): 0.2408 / 0.0833

[3/5][1150/1567] Loss_D: 0.4089 Loss_G: 2.5705 D(x): 0.8685 D(G(z)): 0.2168 / 0.0967

[3/5][1200/1567] Loss_D: 0.6158 Loss_G: 2.4766 D(x): 0.7951 D(G(z)): 0.2862 / 0.1067

[3/5][1250/1567] Loss_D: 0.6034 Loss_G: 1.8273 D(x): 0.7414 D(G(z)): 0.2230 / 0.2029

[3/5][1300/1567] Loss_D: 0.4567 Loss_G: 2.1674 D(x): 0.7194 D(G(z)): 0.0813 / 0.1543

[3/5][1350/1567] Loss_D: 0.6020 Loss_G: 1.9374 D(x): 0.6356 D(G(z)): 0.0930 / 0.1885

[3/5][1400/1567] Loss_D: 0.7482 Loss_G: 1.0558 D(x): 0.5842 D(G(z)): 0.1053 / 0.3856

[3/5][1450/1567] Loss_D: 0.6773 Loss_G: 2.5691 D(x): 0.8046 D(G(z)): 0.3226 / 0.0981

[3/5][1500/1567] Loss_D: 0.5394 Loss_G: 2.1660 D(x): 0.7662 D(G(z)): 0.2061 / 0.1421

[3/5][1550/1567] Loss_D: 0.5775 Loss_G: 2.9399 D(x): 0.8530 D(G(z)): 0.3050 / 0.0661

[4/5][0/1567] Loss_D: 0.7733 Loss_G: 2.4629 D(x): 0.7513 D(G(z)): 0.3245 / 0.1104

[4/5][50/1567] Loss_D: 0.8922 Loss_G: 1.1718 D(x): 0.4907 D(G(z)): 0.0476 / 0.3564

[4/5][100/1567] Loss_D: 0.6547 Loss_G: 3.8219 D(x): 0.8484 D(G(z)): 0.3490 / 0.0313

[4/5][150/1567] Loss_D: 0.5065 Loss_G: 2.6143 D(x): 0.8220 D(G(z)): 0.2389 / 0.0935

[4/5][200/1567] Loss_D: 1.3935 Loss_G: 0.4975 D(x): 0.3265 D(G(z)): 0.0518 / 0.6491

[4/5][250/1567] Loss_D: 0.5922 Loss_G: 3.9062 D(x): 0.9168 D(G(z)): 0.3544 / 0.0295

[4/5][300/1567] Loss_D: 0.5837 Loss_G: 1.6458 D(x): 0.6617 D(G(z)): 0.1093 / 0.2295

[4/5][350/1567] Loss_D: 0.5270 Loss_G: 2.0888 D(x): 0.7810 D(G(z)): 0.2142 / 0.1562

[4/5][400/1567] Loss_D: 0.8648 Loss_G: 3.4855 D(x): 0.9003 D(G(z)): 0.4771 / 0.0455

[4/5][450/1567] Loss_D: 0.5237 Loss_G: 2.2375 D(x): 0.8001 D(G(z)): 0.2354 / 0.1309

[4/5][500/1567] Loss_D: 1.0253 Loss_G: 3.3720 D(x): 0.6948 D(G(z)): 0.4164 / 0.0454

[4/5][550/1567] Loss_D: 0.4881 Loss_G: 2.4536 D(x): 0.7618 D(G(z)): 0.1535 / 0.1103

[4/5][600/1567] Loss_D: 0.5000 Loss_G: 2.6980 D(x): 0.7179 D(G(z)): 0.1211 / 0.0951

[4/5][650/1567] Loss_D: 1.0231 Loss_G: 0.8356 D(x): 0.4700 D(G(z)): 0.1121 / 0.4843

[4/5][700/1567] Loss_D: 0.8009 Loss_G: 3.7583 D(x): 0.8391 D(G(z)): 0.4220 / 0.0356

[4/5][750/1567] Loss_D: 0.7859 Loss_G: 1.0725 D(x): 0.5619 D(G(z)): 0.1009 / 0.3875

[4/5][800/1567] Loss_D: 1.5281 Loss_G: 0.8125 D(x): 0.2933 D(G(z)): 0.0452 / 0.4956

[4/5][850/1567] Loss_D: 0.4022 Loss_G: 3.3176 D(x): 0.9354 D(G(z)): 0.2640 / 0.0477

[4/5][900/1567] Loss_D: 0.5842 Loss_G: 1.7742 D(x): 0.6247 D(G(z)): 0.0504 / 0.2097

[4/5][950/1567] Loss_D: 0.5860 Loss_G: 2.0877 D(x): 0.7251 D(G(z)): 0.1948 / 0.1582

[4/5][1000/1567] Loss_D: 0.6735 Loss_G: 4.2387 D(x): 0.9475 D(G(z)): 0.4155 / 0.0213

[4/5][1050/1567] Loss_D: 0.6130 Loss_G: 2.6651 D(x): 0.8604 D(G(z)): 0.3337 / 0.0914

[4/5][1100/1567] Loss_D: 0.3737 Loss_G: 2.5814 D(x): 0.7820 D(G(z)): 0.0964 / 0.1008

[4/5][1150/1567] Loss_D: 0.5412 Loss_G: 3.2119 D(x): 0.8726 D(G(z)): 0.3015 / 0.0533

[4/5][1200/1567] Loss_D: 1.0635 Loss_G: 4.1522 D(x): 0.9437 D(G(z)): 0.5822 / 0.0267

[4/5][1250/1567] Loss_D: 0.6148 Loss_G: 2.7950 D(x): 0.8066 D(G(z)): 0.2866 / 0.0791

[4/5][1300/1567] Loss_D: 0.4972 Loss_G: 2.4557 D(x): 0.8161 D(G(z)): 0.2245 / 0.1036

[4/5][1350/1567] Loss_D: 0.6969 Loss_G: 3.0373 D(x): 0.8472 D(G(z)): 0.3712 / 0.0606

[4/5][1400/1567] Loss_D: 0.9324 Loss_G: 1.1816 D(x): 0.4720 D(G(z)): 0.0429 / 0.3532

[4/5][1450/1567] Loss_D: 0.4000 Loss_G: 2.9336 D(x): 0.8075 D(G(z)): 0.1475 / 0.0696

[4/5][1500/1567] Loss_D: 0.5277 Loss_G: 2.8440 D(x): 0.8159 D(G(z)): 0.2360 / 0.0770

[4/5][1550/1567] Loss_D: 0.5729 Loss_G: 3.9015 D(x): 0.8743 D(G(z)): 0.3178 / 0.0308

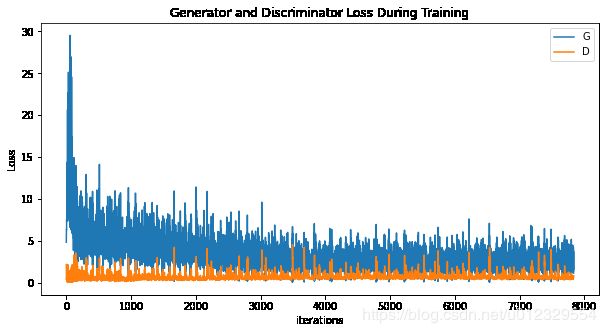

Results

Finally, lets check out how we did. Here, we will look at three

different results. First, we will see how D and G’s losses changed

during training. Second, we will visualize G’s output on the fixed_noise

batch for every epoch. And third, we will look at a batch of real data

next to a batch of fake data from G.

Loss versus training iteration

Below is a plot of D & G’s losses versus training iterations.

plt.figure(figsize=(10,5))

plt.title("Generator and Discriminator Loss During Training")

plt.plot(G_losses,label="G")

plt.plot(D_losses,label="D")

plt.xlabel("iterations")

plt.ylabel("Loss")

plt.legend()

plt.show()

Visualization of G’s progression

Remember how we saved the generator’s output on the fixed_noise batch

after every epoch of training. Now, we can visualize the training

progression of G with an animation. Press the play button to start the

animation.

#%%capture

fig = plt.figure(figsize=(8,8))

plt.axis("off")

ims = [[plt.imshow(np.transpose(i,(1,2,0)), animated=True)] for i in img_list]

ani = animation.ArtistAnimation(fig, ims, interval=1000, repeat_delay=1000, blit=True)

HTML(ani.to_jshtml())

# Grab a batch of real images from the dataloader

real_batch = next(iter(dataloader))

# Plot the real images

plt.figure(figsize=(15,15))

plt.subplot(1,2,1)

plt.axis("off")

plt.title("Real Images")

plt.imshow(np.transpose(vutils.make_grid(real_batch[0].to(device)[:64], padding=5, normalize=True).cpu(),(1,2,0)))

# Plot the fake images from the last epoch

plt.subplot(1,2,2)

plt.axis("off")

plt.title("Fake Images")

plt.imshow(np.transpose(img_list[-1],(1,2,0)))

plt.show()