TensorBoard: Introduction and Workflow

1. TensorBoard Introduction and Workflow

1.1 Introduction to TensorBoard

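TensorBoard runs in a separate process from the TensorFlow program. It automatically reads the latest TensorFlow log (event) files and displays the most recent state of the running TensorFlow program.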

1.2 TensorBoard workflow

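- Add summary nodes: `tf.summary.scalar/image/histogram()`, etc.

- Merge the summary nodes: `merged = tf.summary.merge_all()`

- Run the merged node to get the summary result: `summary = sess.run(merged)`

- Instantiate the log writer: `summary_writer = tf.summary.FileWriter(logdir, graph=sess.graph)`. Passing `graph` at construction also writes the current computation graph to the log.

- Call the writer's `add_summary(summary, global_step=i)` method to write the summaries to the event file.

- Call the writer's `close()` method to flush everything to disk and close the file. Otherwise, pending events are flushed only periodically (every 120 s by default).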
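The steps above can be combined into a minimal sketch. It assumes TensorFlow 2.x (or 1.15), with the 1.x graph-mode API reached through `tf.compat.v1` (aliased `tf1`) after disabling eager execution. On plain TF 1.x, the same calls are available as `tf.summary.*` and `tf.Session`. The placeholder `step_in`, the toy quadratic "loss", and the temporary log directory are hypothetical stand-ins, not part of the original program.

```python
import os
import tempfile

import tensorflow as tf

# Assumption: the TF 1.x graph-mode API is used through tf.compat.v1.
tf1 = tf.compat.v1
tf1.disable_eager_execution()

logdir = tempfile.mkdtemp()  # hypothetical log directory for this sketch

with tf1.Graph().as_default():
    step_in = tf1.placeholder(tf.float32, shape=[], name="step_in")
    loss = tf.square(10.0 - step_in)  # toy quantity standing in for a real loss

    # Add a summary node, then merge every summary node in the default graph.
    tf1.summary.scalar("toy_loss", loss)
    merged = tf1.summary.merge_all()

    with tf1.Session() as sess:
        # Create the writer; passing sess.graph also logs the computation graph.
        writer = tf1.summary.FileWriter(logdir, graph=sess.graph)
        for i in range(5):
            # Run the merged op to get a serialized Summary protobuf.
            summary = sess.run(merged, feed_dict={step_in: float(i)})
            # Write it, using i as the x-axis value (global_step).
            writer.add_summary(summary, global_step=i)
        # Flush pending events to disk and close the file.
        writer.close()

print(os.listdir(logdir))  # a single events.out.tfevents.* file
```

Pointing `tensorboard --logdir` at the printed directory would show the five `toy_loss` points in the Scalars tab.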

2. Types of TensorFlow visualization

2.1 Visualizing the computation graph: add_graph()

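```python
import tensorflow as tf  # TensorFlow 1.x API

logdir = 'logs'  # directory for the event files

# ...create a graph... (a toy graph as an example)
a = tf.constant(2.0, name='a')
b = tf.constant(3.0, name='b')
c = tf.add(a, b, name='c')

# Launch the graph in a session.
sess = tf.Session()

# Create a summary writer and add the graph to the event file.
writer = tf.summary.FileWriter(logdir, sess.graph)

# close() flushes pending events to disk; otherwise they are flushed every 120 s by default.
writer.close()
```

2.2 Visualizing monitored metrics: add_summary()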

I. SCALAR

tf.summary.scalar(name, tensor, collections=None, family=None)

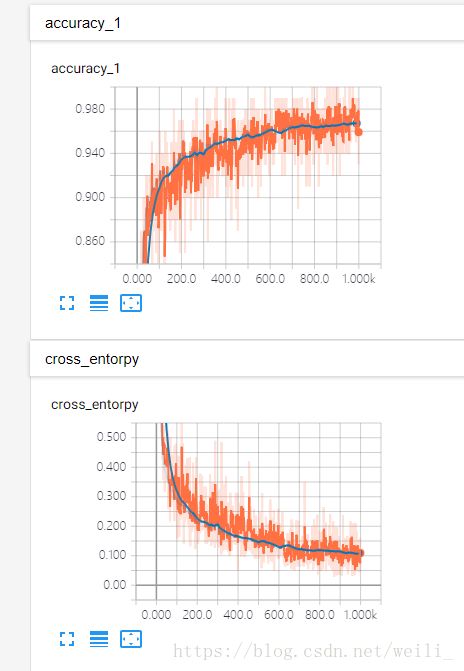

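Plots how values change with the training iteration: accuracy (val acc), loss (train/test loss), learning rate, and the statistics (mean, std, max/min) of each layer's weights and biases.

Parameters:

- name: the name of this op. TensorBoard also uses it, prefixed by any enclosing name scope, as the tag of the plotted chart.

- tensor: the variable to monitor; a real numeric Tensor containing a single value.

Returns:

- A scalar Tensor of type string, which contains a Summary protobuf.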
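The sketch below makes the "scalar string Tensor containing a Summary protobuf" point concrete. It evaluates a scalar summary and parses the resulting bytes, using the same `tf.compat.v1` assumption as above. The constant learning rate and the `train` scope are hypothetical values. The sketch also shows that an enclosing name scope becomes part of the tag.

```python
import tensorflow as tf

tf1 = tf.compat.v1  # assumption: TF 1.x graph-mode API via tf.compat.v1
tf1.disable_eager_execution()

with tf1.Graph().as_default():
    lr = tf.constant(0.001, name="lr")  # hypothetical learning-rate value
    with tf1.name_scope("train"):
        lr_summary = tf1.summary.scalar("learning_rate", lr)

    # The op's output is a scalar (0-D) tensor of dtype string.
    print(lr_summary.dtype, lr_summary.shape)

    with tf1.Session() as sess:
        serialized = sess.run(lr_summary)  # bytes of a serialized Summary protobuf

summary = tf1.Summary.FromString(serialized)
value = summary.value[0]
print(value.tag, value.simple_value)  # the tag includes the "train/" name scope
```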

II. IMAGE

tf.summary.image(name, tensor, max_outputs=3, collections=None, family=None)

Visualizes the training/test images or feature maps used in the current iteration.

Parameters:

- name: the name of this op; TensorBoard also uses it as the tag of the displayed images.

- tensor: A 4-D uint8 or float32 Tensor of shape [batch_size, height, width, channels], where channels is 1, 3, or 4.

- max_outputs: Max number of batch elements to generate images for.

Returns:

- A scalar Tensor of type string, which contains a Summary protobuf.

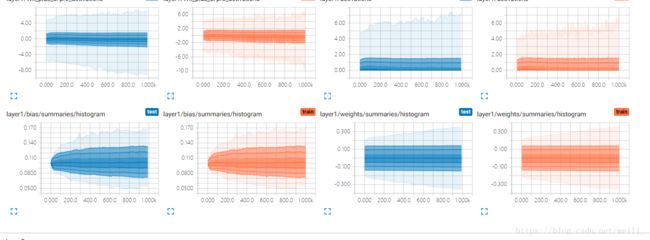

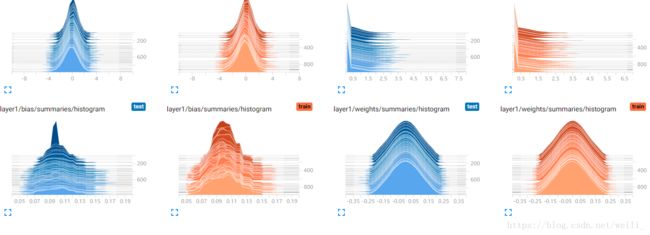
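Here is a minimal sketch of `tf.summary.image`, again assuming `tf.compat.v1`. Random numbers stand in for flattened 28x28 MNIST images; they are hypothetical data. The sketch reshapes them to `[batch_size, height, width, channels]` and shows that `max_outputs` caps how many batch elements are encoded.

```python
import numpy as np
import tensorflow as tf

tf1 = tf.compat.v1  # assumption: TF 1.x graph-mode API via tf.compat.v1
tf1.disable_eager_execution()

# Hypothetical stand-in for 5 flattened 28x28 grayscale images with values in [0, 1).
batch = np.random.RandomState(0).rand(5, 784).astype(np.float32)

with tf1.Graph().as_default():
    x = tf1.placeholder(tf.float32, shape=[None, 784], name="x-input")
    # tf.summary.image expects [batch_size, height, width, channels].
    images = tf.reshape(x, [-1, 28, 28, 1])
    image_summary = tf1.summary.image("input", images, max_outputs=3)

    with tf1.Session() as sess:
        serialized = sess.run(image_summary, feed_dict={x: batch})

summary = tf1.Summary.FromString(serialized)
# Only max_outputs=3 of the 5 batch elements are encoded as images.
for v in summary.value:
    print(v.tag, v.image.height, v.image.width)
```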

III. HISTOGRAM

tf.summary.histogram(name, values, collections=None, family=None)

Visualizes the distribution of a tensor's values.

Parameters:

- name: the name of this op; TensorBoard also uses it as the tag of the histogram chart.

- values: A real numeric Tensor of any shape; the values used to build the histogram.

Returns:

- A scalar Tensor of type string, which contains a Summary protobuf.
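The sketch below builds a histogram summary for a variable and reads back the `HistogramProto` fields that TensorBoard plots, such as the number of values and their min/max. It uses the same `tf.compat.v1` assumption; the 100x50 weight matrix is hypothetical.

```python
import tensorflow as tf

tf1 = tf.compat.v1  # assumption: TF 1.x graph-mode API via tf.compat.v1
tf1.disable_eager_execution()

with tf1.Graph().as_default():
    # Hypothetical 100x50 weight matrix, initialized like weight_variable() in the MNIST program.
    weights = tf.Variable(tf1.truncated_normal([100, 50], stddev=0.1), name="weights")
    hist = tf1.summary.histogram("weights_histogram", weights)

    with tf1.Session() as sess:
        sess.run(tf1.global_variables_initializer())
        serialized, w = sess.run([hist, weights])

histo = tf1.Summary.FromString(serialized).value[0].histo
print(histo.num, histo.min, histo.max)  # count and range of the logged values
print(len(histo.bucket_limit))          # number of histogram buckets
```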

IV. MERGE_ALL

tf.summary.merge_all(key=tf.GraphKeys.SUMMARIES)

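- Merges all summaries collected in the default graph.

- A program usually defines many summary ops, and running each one individually is tedious. TensorFlow therefore provides this function to gather all of them into one op, e.g. `merged = tf.summary.merge_all()`.

- The merged op does not run by itself. It must be run explicitly (`summary = sess.run(merged)`) to obtain the summary result.

- Finally, call the log writer's `add_summary(summary, global_step=i)` method to write the summaries to the event file.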

2.3 Visualizing multiple runs (events): add_event()

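- If subdirectories of `logdir` contain data from different runs (multiple event files), TensorBoard displays the data of all runs together (mainly scalars). This lets you compare the model under different parameter settings and tune the parameters for the best result.

- In the figures below, the upper curve is the loss for the 200-iteration run and the lower one is for the 400-iteration run; the program is listed at the end.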


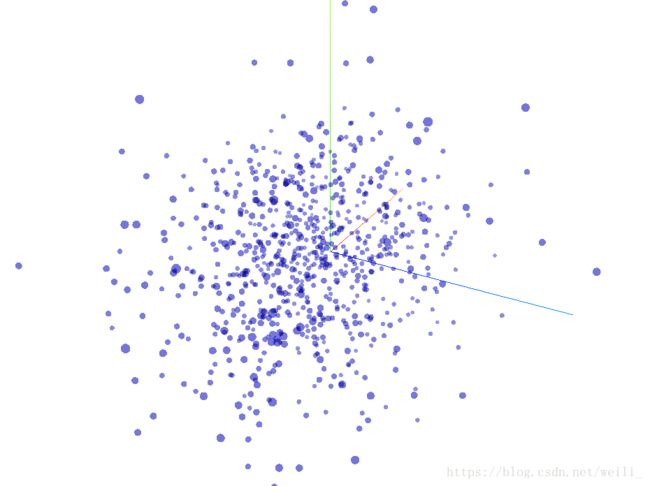

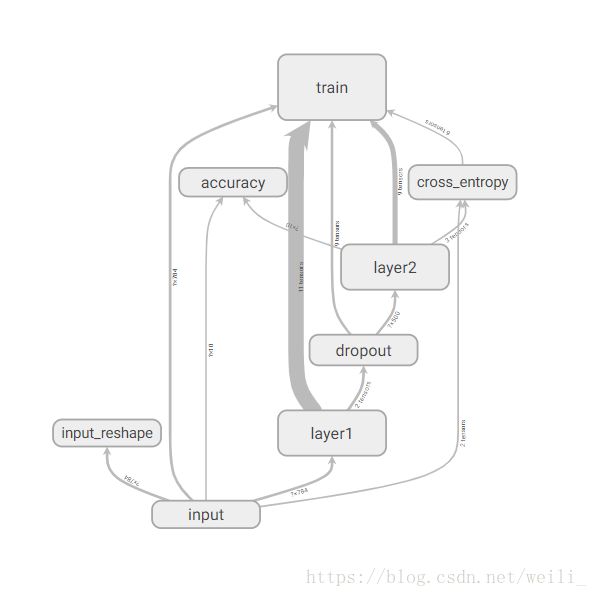
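The comparison works because each run writes to its own subdirectory and TensorBoard is pointed at the parent directory. Below is a minimal sketch under the same `tf.compat.v1` assumption. The run names `steps_200` and `steps_400` and the decaying "loss" are made-up illustration data, not the article's MNIST results.

```python
import os
import tempfile

import tensorflow as tf

tf1 = tf.compat.v1  # assumption: TF 1.x graph-mode API via tf.compat.v1
tf1.disable_eager_execution()

logdir = tempfile.mkdtemp()  # parent directory to pass to --logdir


def write_run(run_name, num_steps):
    """Log a made-up decaying 'loss' curve into logdir/run_name."""
    with tf1.Graph().as_default():
        step = tf1.placeholder(tf.float32, shape=[])
        loss = 1.0 / (1.0 + step)
        tf1.summary.scalar("loss", loss)
        merged = tf1.summary.merge_all()
        with tf1.Session() as sess:
            run_dir = os.path.join(logdir, run_name)  # one subdirectory per run
            writer = tf1.summary.FileWriter(run_dir, sess.graph)
            for i in range(0, num_steps, 10):
                writer.add_summary(sess.run(merged, {step: i}), global_step=i)
            writer.close()


write_run("steps_200", 200)
write_run("steps_400", 400)
print(logdir, sorted(os.listdir(logdir)))
```

Running `tensorboard --logdir=<printed directory>` would then list `steps_200` and `steps_400` as two runs, overlaid in the same `loss` chart.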

3. Organizing the computation graph with name scopes

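- Name scopes give the graph visualization a hierarchy, so the overall structure of the network is not drowned in details.

- All nodes in the same name scope are collapsed into a single node, so only the nodes of the top-level scopes appear on the TensorBoard graph until you expand them.

- Name scopes are created with `tf.name_scope()` or `tf.variable_scope()`; see the program at the end for details.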
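The sketch below shows one practical difference between the two scope functions. It uses the same `tf.compat.v1` assumption, and the layer and variable names are hypothetical. `tf.name_scope` prefixes ops and `tf.Variable` names but is ignored by `tf.get_variable`. `tf.variable_scope` also prefixes variables created with `tf.get_variable`.

```python
import tempfile

import tensorflow as tf

tf1 = tf.compat.v1  # assumption: TF 1.x graph-mode API via tf.compat.v1
tf1.disable_eager_execution()

with tf1.Graph().as_default() as graph:
    with tf1.name_scope("layer1"):
        w = tf1.get_variable("w", shape=[2, 2])  # NOT prefixed by name_scope
        b = tf.Variable(tf.zeros([2]), name="b")  # prefixed: layer1/b
        out = tf.matmul(tf.ones([1, 2]), w) + b  # ops prefixed: layer1/...
    with tf1.variable_scope("layer2"):
        w2 = tf1.get_variable("w", shape=[2, 2])  # prefixed: layer2/w

    # Write the graph so the collapsed layer1/layer2 nodes can be inspected in TensorBoard.
    tf1.summary.FileWriter(tempfile.mkdtemp(), graph=graph).close()

print(w.name, b.name, out.op.name, w2.name)
```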


4. Writing all logs to files: tf.summary.FileWriter()


tf.summary.FileWriter(logdir, graph=None, flush_secs=120, max_queue=10)

- Writes event logs (graph, scalar/image/histogram summaries, events) to files in the specified directory.

Constructor parameters:

- logdir: the directory the events are written to.

- graph: passing `sess.graph` at construction is equivalent to calling `add_graph()`, which enables graph visualization.

- flush_secs: How often, in seconds, to flush the added summaries and events to disk.

- max_queue: Maximum number of summaries or events pending to be written to disk before one of the 'add' calls blocks.

Other commonly used methods:

- add_event(event): Adds an event to the event file.

- add_graph(graph, global_step=None): Adds a Graph to the event file. Most users pass a graph in the constructor instead.

- add_summary(summary, global_step=None): Adds a Summary protocol buffer to the event file. Be sure to pass global_step; it becomes the step (x-axis) value in TensorBoard.

- close(): Flushes the event file to disk and closes the file.

- flush(): Flushes the event file to disk.

- add_meta_graph(meta_graph_def, global_step=None)

- add_run_metadata(run_metadata, tag, global_step=None)
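The sketch below exercises the writer methods listed above, assuming `tf.compat.v1`. The tag `val_acc`, its values, and the `flush_secs`/`max_queue` settings are arbitrary illustration choices. It shows that `add_summary` accepts a `Summary` protobuf built directly in Python. It then reads the event file back with `tf.compat.v1.train.summary_iterator` to confirm which `global_step` each value was recorded at.

```python
import os
import tempfile

import tensorflow as tf

tf1 = tf.compat.v1  # assumption: TF 1.x graph-mode API via tf.compat.v1
tf1.disable_eager_execution()

logdir = tempfile.mkdtemp()

with tf1.Graph().as_default() as graph:
    tf.constant(1.0, name="c")  # a trivial node so the graph is not empty
    writer = tf1.summary.FileWriter(logdir, flush_secs=30, max_queue=5)
    writer.add_graph(graph)  # like passing graph= to the constructor
    for step in range(3):
        # add_summary accepts a Summary protobuf; here one is built from a Python float.
        s = tf1.Summary(value=[tf1.Summary.Value(tag="val_acc", simple_value=0.5 + 0.1 * step)])
        writer.add_summary(s, global_step=step)
    writer.flush()  # write pending events to disk now, without waiting for flush_secs
    writer.close()

event_file = [f for f in os.listdir(logdir) if f.startswith("events.out.tfevents")][0]
steps = [e.step for e in tf1.train.summary_iterator(os.path.join(logdir, event_file))
         if e.HasField("summary")]
print(steps)  # → [0, 1, 2]
```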

5. Launching TensorBoard to display all logged charts

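5.1 Launching from cmd on Windows

- Run your program to generate event files in the specified directory (`logs`).

- In the directory that contains `logs`, hold Shift, right-click, and choose "Open command window here".

- In cmd, start TensorBoard with `tensorboard --logdir=logs` (in my case: `E:\python code>tensorboard --logdir=mnist_with_summaries`). Note: the log directory does not need quotes unless its path contains spaces. When `logs` contains multiple event files, TensorBoard draws comparison charts for the scalars, but the graph view shows only the latest result.

- Copy the URL that TensorBoard prints (`http://H929R0B7MP1Q9TP:6006` in my case; yours will likely differ) into a browser. I prefer Google Chrome, because the other browsers I tried did not seem to work.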

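5.2 Launching from bash on Ubuntu

- Run your program (`python my_program.py`) to generate event files in the specified directory (`logs`).

- In bash, start TensorBoard with `tensorboard --logdir=logs --port=8888`. Note: the log directory does not need quotes, and the port must be configured on the router in advance.

- Copy the printed URL (`http://ubuntu16:8888`; replace `ubuntu16` with the server's external IP address) into your local browser.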

6. Implementing MNIST handwritten digit recognition with TF (visualized with TensorBoard)

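- The multi-run loss comparison and the network graph were already shown above and are not repeated here.

- The training process and the final fitting results are shown at the very bottom.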

```python
# -*- coding: utf-8 -*-
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import os

import tensorflow as tf  # TensorFlow 1.x API
from tensorflow.examples.tutorials.mnist import input_data

max_step = 1000
learning_rate = 0.001
dropout = 0.9  # keep probability passed to tf.nn.dropout during training
data_dir = 'E:\\python code'
log_dir = 'E:\\python code\\mnist_with_summaries'

mnist = input_data.read_data_sets(data_dir, one_hot=True)

# Build the session config first so that it is actually applied to the session.
config = tf.ConfigProto(allow_soft_placement=True)
config.gpu_options.per_process_gpu_memory_fraction = 0.7
config.gpu_options.allow_growth = True
sess = tf.InteractiveSession(config=config)

# tf.name_scope groups nodes: everything below is saved as input/xxx
with tf.name_scope('input'):
    x = tf.placeholder(dtype=tf.float32, shape=[None, 784], name='x-input')
    y_ = tf.placeholder(dtype=tf.float32, shape=[None, 10], name='y-input')

with tf.name_scope('input_reshape'):
    image_shaped_input = tf.reshape(x, [-1, 28, 28, 1])
    tf.summary.image('input', image_shaped_input, 10)


def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)


def variable_summaries(var):
    """Attach mean/stddev/max/min scalars and a histogram to a tensor."""
    with tf.name_scope('summaries'):
        mean = tf.reduce_mean(var)
        tf.summary.scalar('mean', mean)
        with tf.name_scope('stddev'):
            stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
        tf.summary.scalar('stddev', stddev)
        tf.summary.scalar('max', tf.reduce_max(var))
        tf.summary.scalar('min', tf.reduce_min(var))
        tf.summary.histogram('histogram', var)


def nn_layer(input_tensor, input_dim, output_dim, layer_name, act=tf.nn.relu):
    """Fully connected layer with summaries for weights, biases and activations."""
    with tf.name_scope(layer_name):
        with tf.name_scope('weights'):
            weights = weight_variable([input_dim, output_dim])
            variable_summaries(weights)
        with tf.name_scope('bias'):
            biases = bias_variable([output_dim])
            variable_summaries(biases)
        with tf.name_scope('Wx_plus_b'):
            preactivate = tf.matmul(input_tensor, weights) + biases
            tf.summary.histogram('pre_activations', preactivate)
        activations = act(preactivate, name='activation')
        tf.summary.histogram('activations', activations)
        return activations


hiddens = nn_layer(x, 784, 500, 'layer1')

with tf.name_scope('dropout'):
    keep_prob = tf.placeholder(tf.float32)
    tf.summary.scalar('keep_prob', keep_prob)
    dropped = tf.nn.dropout(hiddens, keep_prob)

# tf.identity is an identity mapping (no activation), so layer2 outputs raw logits
y = nn_layer(dropped, 500, 10, 'layer2', act=tf.identity)

with tf.name_scope('cross_entropy'):
    diff = tf.nn.softmax_cross_entropy_with_logits_v2(logits=y, labels=y_)
    with tf.name_scope('total'):
        cross_entropy = tf.reduce_mean(diff)
tf.summary.scalar('cross_entropy', cross_entropy)

with tf.name_scope('train'):
    train_step = tf.train.AdamOptimizer(learning_rate).minimize(cross_entropy)

with tf.name_scope('accuracy'):
    with tf.name_scope('correct_prediction'):
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
    with tf.name_scope('accuracy'):
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
tf.summary.scalar('accuracy', accuracy)

# Merge all the summaries defined above
merged = tf.summary.merge_all()

# Two writers in separate subdirectories store the training and test logs.
# sess.graph is added to the training writer so that TensorBoard's Graphs tab
# shows the whole computation graph.
train_writer = tf.summary.FileWriter(os.path.join(log_dir, 'train'), sess.graph)
test_writer = tf.summary.FileWriter(os.path.join(log_dir, 'test'))
tf.global_variables_initializer().run()


def feed_dict(train):
    """Training: a batch of 100 with dropout; test: the full test set, no dropout."""
    if train:
        xs, ys = mnist.train.next_batch(100)
        k = dropout
    else:
        xs, ys = mnist.test.images, mnist.test.labels
        k = 1.0
    return {x: xs, y_: ys, keep_prob: k}


# Saver for model checkpoints
saver = tf.train.Saver()

for i in range(max_step):
    if i % 10 == 0:
        # Every 10 steps: record test-set summaries and accuracy
        summary, acc = sess.run([merged, accuracy], feed_dict=feed_dict(False))
        test_writer.add_summary(summary, i)
        print('Accuracy at step %s: %s' % (i, acc))
    else:
        if i % 100 == 99:
            # Every 100 steps: also record run metadata (compute time, memory)
            run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
            run_metadata = tf.RunMetadata()
            summary, _ = sess.run([merged, train_step], feed_dict=feed_dict(True),
                                  options=run_options, run_metadata=run_metadata)
            train_writer.add_run_metadata(run_metadata, 'step%03d' % i)
            train_writer.add_summary(summary, i)
            saver.save(sess, os.path.join(log_dir, 'model.ckpt'), i)
            print('Adding run metadata for', i)
        else:
            summary, _ = sess.run([merged, train_step], feed_dict=feed_dict(True))
            train_writer.add_summary(summary, i)

train_writer.close()
test_writer.close()
```

The visualization results: