Training on Your Own Dataset with the MaskRCNN-Benchmark Framework

Contents

- 1. Dataset preparation

- 2. Configuration files

- 3. Training and testing

- 3.1 Errors and fixes

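Facebook AI Research has open-sourced MaskRCNN-Benchmark, a reference implementation of Faster R-CNN and Mask R-CNN in PyTorch 1.0. Compared with Detectron and mmdetection, MaskRCNN-Benchmark reaches comparable accuracy while training faster and using less GPU memory.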

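Highlights:

- PyTorch 1.0: RPN, Faster R-CNN, and Mask R-CNN implementations that match or exceed Detectron's accuracy.

- Very fast: trains about twice as fast as Detectron and about 1.3 times as fast as mmdetection.

- Memory efficient: uses roughly 500 MB less GPU memory than mmdetection during training.

- Multi-GPU training and inference.

- Batched inference: can run inference on multiple images per batch per GPU.

- CPU support for inference: the model can run on the CPU at inference time.

- Pre-trained models are provided for almost all reference Mask R-CNN and Faster R-CNN configurations with the 1x schedule.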

Base environment:

OS: Ubuntu 16.04

Python environment: Anaconda3 | Python 3.6.5 | CUDA

$ conda create --name maskrcnn_benchmark

$ source activate maskrcnn_benchmark

$ conda install ipython

$ pip install ninja yacs cython matplotlib tqdm opencv-python

# follow PyTorch installation in https://pytorch.org/get-started/locally/

# we give the instructions for CUDA 9.0

$ conda install -c pytorch pytorch-nightly torchvision cudatoolkit=9.0

# install pycocotools

$ cd ~/github

$ git clone https://github.com/cocodataset/cocoapi.git

$ cd cocoapi/PythonAPI

$ python setup.py build_ext install

# install apex

$ cd ~/github

$ git clone https://github.com/NVIDIA/apex.git

$ cd apex

$ python setup.py install --cuda_ext --cpp_ext

# install PyTorch Detection

$ cd ~/github

$ git clone https://github.com/facebookresearch/maskrcnn-benchmark.git

$ cd maskrcnn-benchmark

$ python setup.py build develop

For errors encountered during these steps, see the end of this article.

Build output when installing apex:

(maskrcnn_benchmark) xxx@xxx:~/github/apex$ python setup.py install --cuda_ext --cpp_ext

torch.__version__ = 1.0.0

setup.py:43: UserWarning: Option --pyprof not specified. Not installing PyProf dependencies!

warnings.warn("Option --pyprof not specified. Not installing PyProf dependencies!")

Compiling cuda extensions with

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2017 NVIDIA Corporation

Built on Fri_Sep__1_21:08:03_CDT_2017

Cuda compilation tools, release 9.0, V9.0.176

from /usr/local/cuda-9.0/bin

...

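1. Dataset preparation

For downloading the COCO dataset, see https://blog.csdn.net/qq_41847324/article/details/86224628; that post is very detailed.

A custom dataset is prepared as follows.

The COCO dataset currently has three annotation types:

object instances,

object keypoints,

image captions.

Annotations are stored in JSON files. For details, see the article "COCO数据集的标注格式" (the COCO dataset annotation format). I only use object detection and do not need segmentation, so the format is relatively simple.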

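{
  "info": {..},  # overall description of the dataset; for your own data an empty dict is fine
  "licenses": [license],  # may hold several license entries; for your own data an empty list is fine
  "images": [
    {
      "file_name": "xx",  # file path; it is joined with the dataset root directory to form the full image path
      "height": xx,  # image height
      "width": xx,  # image width
      "id": xx,  # unique id of the image; numbering from 0 is fine
    },
    ...
  ],
  "annotations": [
    {
      "segmentation": [],  # used for segmentation; I only do object detection, so it is left empty
      "area": xx,  # area of the region; for a box this is width * height
      "image_id": xx,  # an image may have several annotations; this matches an id in images
      "bbox": [x, y, w, h],  # locates the box: top-left x and y, then width and height
      "category_id": xx,  # category id (matches an id in categories)
      "id": xx,  # unique id of this annotation; numbering from 0 is fine
    },
    ...
  ],
  "categories": [
    {
      "supercategory": xx,  # parent category name, e.g. vehicle, which contains car, truck, etc.
      "id": xx,  # category id, numbered from 1; 0 is reserved for the background
      "name": xx,  # name of this sub-category
    },
    ...
  ],
}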

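Following the annotation format above, generate one JSON annotation file for the training set and one for the validation set. You can keep the default COCO file names: instances_train2014.json and instances_val2014.json. For the dataset directory layout, see the datasets directory in the overall directory structure below.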
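The format above can be produced with a few lines of Python. The following is a minimal sketch, not the script used for this article: the helper name `make_coco_dict`, the sample file names, and the class names are hypothetical, and the `iscrowd` field is an assumption added here because it is a standard COCO annotation field that COCO-style loaders commonly read.

```python
import json


def make_coco_dict(images, boxes, category_names):
    """Build a detection-only COCO-style dict (hypothetical helper).

    images: list of (file_name, width, height)
    boxes: list of (image_index, category_name, x, y, w, h)
    category_names: class names; ids are assigned from 1 (0 is background)
    """
    categories = [
        {"supercategory": "none", "id": i, "name": name}
        for i, name in enumerate(category_names, start=1)
    ]
    name_to_id = {c["name"]: c["id"] for c in categories}
    image_entries = [
        {"file_name": file_name, "width": width, "height": height, "id": idx}
        for idx, (file_name, width, height) in enumerate(images)
    ]
    annotations = []
    for ann_id, (image_index, name, x, y, w, h) in enumerate(boxes):
        annotations.append({
            "segmentation": [],       # unused for pure detection
            "area": w * h,            # box area
            "iscrowd": 0,             # assumption: standard COCO field
            "image_id": image_index,  # must match an id in "images"
            "bbox": [x, y, w, h],
            "category_id": name_to_id[name],
            "id": ann_id,
        })
    return {
        "info": {},
        "licenses": [],
        "images": image_entries,
        "annotations": annotations,
        "categories": categories,
    }


coco = make_coco_dict(
    images=[("0001.jpg", 640, 480), ("0002.jpg", 640, 480)],
    boxes=[(0, "car", 10, 20, 50, 40), (1, "truck", 0, 0, 100, 80)],
    category_names=["car", "truck"],
)
text = json.dumps(coco, indent=2)  # write this string to the annotation file
```

Run it once for the training images and once for the validation images, and save each result under the annotations directory.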

Dataset validation: https://www.zhihu.com/search?q=maskrcnn%20benchmark&utm_content=search_suggestion&type=content
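A quick sanity check of the annotation file can catch many problems before training starts. The sketch below is only an illustration: the function name `check_coco_dict` and the particular checks (dangling ids, duplicate ids, boxes outside the image) are illustrative choices, not taken from the linked page.

```python
def check_coco_dict(coco):
    """Return a list of human-readable problems found in a COCO-style dict."""
    problems = []
    images = {img["id"]: img for img in coco["images"]}
    if len(images) != len(coco["images"]):
        problems.append("duplicate image ids")
    category_ids = {cat["id"] for cat in coco["categories"]}
    seen_annotation_ids = set()
    for ann in coco["annotations"]:
        if ann["id"] in seen_annotation_ids:
            problems.append("duplicate annotation id {}".format(ann["id"]))
        seen_annotation_ids.add(ann["id"])
        img = images.get(ann["image_id"])
        if img is None:
            problems.append("annotation {}: unknown image_id".format(ann["id"]))
            continue
        if ann["category_id"] not in category_ids:
            problems.append("annotation {}: unknown category_id".format(ann["id"]))
        x, y, w, h = ann["bbox"]
        if w <= 0 or h <= 0:
            problems.append("annotation {}: empty box".format(ann["id"]))
        elif x < 0 or y < 0 or x + w > img["width"] or y + h > img["height"]:
            problems.append("annotation {}: box outside image".format(ann["id"]))
    return problems


sample = {
    "images": [{"file_name": "0001.jpg", "width": 640, "height": 480, "id": 0}],
    "categories": [{"supercategory": "vehicle", "id": 1, "name": "car"}],
    "annotations": [
        {"id": 0, "image_id": 0, "category_id": 1, "bbox": [10, 20, 50, 40]},
        {"id": 1, "image_id": 0, "category_id": 3, "bbox": [600, 400, 100, 100]},
        {"id": 2, "image_id": 7, "category_id": 1, "bbox": [0, 0, 10, 10]},
    ],
}
problems = check_coco_dict(sample)
for line in problems:
    print(line)
```

In this sample, annotation 1 is reported twice (unknown category and box outside the image) and annotation 2 once (unknown image).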

(maskrcnn_benchmark)xxx@xxx~$ tree -L 3
.
├── configs
│   ├── e2e_faster_rcnn_R_101_FPN_1x.yaml  # Faster R-CNN model config used for training and validation
│   ├── e2e_mask_rcnn_R_101_FPN_1x.yaml  # Mask R-CNN model config used for training and validation
│   └── quick_schedules
├── CONTRIBUTING.md
├── datasets
│   └── coco
│       ├── annotations
│       │   ├── instances_train2014.json  # training set annotation file
│       │   └── instances_val2014.json  # validation set annotation file
│       ├── train2014  # training images
│       └── val2014  # validation images
├── maskrcnn_benchmark
│   ├── config
│   │   ├── defaults.py  # default configuration of maskrcnn_benchmark; read at startup and merged with the model config from configs
│   │   ├── __init__.py
│   │   ├── paths_catalog.py  # paths of the training and test sets are configured here
│   │   └── __pycache__
│   ├── csrc
│   ├── data
│   │   ├── build.py  # where the datasets are built
│   │   ├── datasets  # coco.py in this directory provides the access interface to COCO-style data
│   │   └── transforms
│   ├── engine
│   │   ├── inference.py  # validation engine
│   │   └── trainer.py  # training engine
│   ├── __init__.py
│   ├── layers
│   │   ├── batch_norm.py
│   │   ├── __init__.py
│   │   ├── misc.py
│   │   ├── nms.py
│   │   ├── __pycache__
│   │   ├── roi_align.py
│   │   ├── roi_pool.py
│   │   ├── smooth_l1_loss.py
│   │   └── _utils.py
│   ├── modeling
│   │   ├── backbone
│   │   ├── balanced_positive_negative_sampler.py
│   │   ├── box_coder.py
│   │   ├── detector
│   │   ├── __init__.py
│   │   ├── matcher.py
│   │   ├── poolers.py
│   │   ├── __pycache__
│   │   ├── roi_heads
│   │   ├── rpn
│   │   └── utils.py
│   ├── solver
│   │   ├── build.py
│   │   ├── __init__.py
│   │   ├── lr_scheduler.py  # the learning-rate schedule is defined here
│   │   └── __pycache__
│   ├── structures
│   │   ├── bounding_box.py
│   │   ├── boxlist_ops.py
│   │   ├── image_list.py
│   │   ├── __init__.py
│   │   ├── __pycache__
│   │   └── segmentation_mask.py
│   └── utils
│       ├── c2_model_loading.py
│       ├── checkpoint.py  # checkpointing
│       ├── __init__.py
│       ├── logger.py  # logging setup
│       ├── model_zoo.py
│       ├── __pycache__
│       └── README.md
├── output  # output directory I configured myself
├── tools
│   ├── test_net.py  # validation entry point
│   └── train_net.py  # training entry point
└── TROUBLESHOOTING.md

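2. Configuration files

Three files are involved:

- Model configuration file (e.g. configs/e2e_mask_rcnn_R_101_FPN_1x.yaml)

- Data path configuration file (maskrcnn_benchmark/config/paths_catalog.py)

- MaskRCNN-Benchmark framework configuration file (maskrcnn_benchmark/config/defaults.py)

The model configuration file is passed with the --config-file argument when training starts. The configs directory ships YAML configuration files for Mask R-CNN and Faster R-CNN with different backbones. Here I use e2e_mask_rcnn_R_101_FPN_1x.yaml, i.e. the Mask R-CNN detector with a ResNet-101-FPN backbone. The configuration is as follows (adjust it for your own dataset):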

MODEL:
  META_ARCHITECTURE: "GeneralizedRCNN"
  WEIGHT: "catalog://ImageNetPretrained/MSRA/R-101"  # if empty, training starts without pretrained weights
  BACKBONE:
    CONV_BODY: "R-101-FPN"
    OUT_CHANNELS: 256
  RPN:
    USE_FPN: True  # whether to use FPN (feature pyramid network); if True, proposals are extracted from several feature maps
    ANCHOR_STRIDE: (4, 8, 16, 32, 64)  # anchor strides
    PRE_NMS_TOP_N_TRAIN: 2000  # number of proposals kept before NMS during training
    PRE_NMS_TOP_N_TEST: 1000  # number of proposals kept before NMS during testing
    POST_NMS_TOP_N_TEST: 1000
    FPN_POST_NMS_TOP_N_TEST: 1000
  ROI_HEADS:
    USE_FPN: True
  ROI_BOX_HEAD:
    POOLER_RESOLUTION: 7
    POOLER_SCALES: (0.25, 0.125, 0.0625, 0.03125)
    POOLER_SAMPLING_RATIO: 2
    FEATURE_EXTRACTOR: "FPN2MLPFeatureExtractor"
    PREDICTOR: "FPNPredictor"
  ROI_MASK_HEAD:
    POOLER_SCALES: (0.25, 0.125, 0.0625, 0.03125)
    FEATURE_EXTRACTOR: "MaskRCNNFPNFeatureExtractor"
    PREDICTOR: "MaskRCNNC4Predictor"
    POOLER_RESOLUTION: 14
    POOLER_SAMPLING_RATIO: 2
    RESOLUTION: 28
    SHARE_BOX_FEATURE_EXTRACTOR: False
  MASK_ON: False  # True in the shipped config; I set it to False because I do not use the segmentation (mask) branch
DATASETS:
  TRAIN: ("coco_2014_train",)  # note the training and test set names here;
  TEST: ("coco_2014_val",)  # they must match the keys of DATASETS in paths_catalog.py
DATALOADER:
  SIZE_DIVISIBILITY: 32
SOLVER:
  BASE_LR: 0.01  # initial learning rate; there are several schedule strategies, and the framework defines its own
  WEIGHT_DECAY: 0.0001
  # STEPS lists the iterations at which the learning rate is decayed. Taking my dataset as an example:
  # I have 149898 images and plan to decay every 4 epochs, so with one image per iteration
  # (IMS_PER_BATCH = 1) the milestones are 149898 * 4 and 149898 * 8.
  STEPS: (599592, 1199184)
  MAX_ITER: 1300000  # maximum number of iterations
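The STEPS values above follow from simple arithmetic on the dataset size. As a small sketch (the function name `decay_steps` is hypothetical, and rounding a partial batch up to a full iteration is an assumption), they can be computed like this:

```python
import math


def decay_steps(num_images, ims_per_batch, decay_epochs):
    """Convert epoch milestones into iteration milestones for SOLVER.STEPS."""
    # Assumption: a final partial batch still counts as one iteration.
    iters_per_epoch = math.ceil(num_images / ims_per_batch)
    return tuple(iters_per_epoch * epoch for epoch in decay_epochs)


# 149898 images, one image per batch, decay after epochs 4 and 8.
steps = decay_steps(149898, 1, (4, 8))
print(steps)  # (599592, 1199184)
```

If IMS_PER_BATCH is increased, the milestones and MAX_ITER shrink proportionally, so they should be recomputed whenever the batch size changes.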

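After reading the model configuration file, look at the framework's default configuration file (defaults.py) and you will notice that many parameters appear in both. Reading the code shows that the parameters from the model configuration file are merged into defaults.py: as the name suggests, defaults.py supplies default values, and whenever the model configuration file sets a parameter, the value from the model configuration file wins.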
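The override rule can be illustrated with plain dictionaries. This is only a sketch of the semantics described above, not the framework's own code (the framework uses yacs CfgNode objects); the function name `merge_config` is hypothetical, and rejecting keys that have no default is an assumption of this sketch.

```python
import copy


def merge_config(defaults, overrides):
    """Return defaults with every value in overrides applied on top."""
    merged = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if key not in merged:
            # Assumption: keys without a default are treated as mistakes.
            raise KeyError("unknown config key: {}".format(key))
        if isinstance(value, dict) and isinstance(merged[key], dict):
            merged[key] = merge_config(merged[key], value)  # recurse into sections
        else:
            merged[key] = value  # the model configuration wins
    return merged


defaults = {"SOLVER": {"BASE_LR": 0.02, "WEIGHT_DECAY": 0.0005, "MOMENTUM": 0.9}}
model_cfg = {"SOLVER": {"BASE_LR": 0.01, "WEIGHT_DECAY": 0.0001}}
cfg = merge_config(defaults, model_cfg)
print(cfg)
```

Here BASE_LR and WEIGHT_DECAY take the values from the model configuration, while MOMENTUM keeps its default.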

import os

from yacs.config import CfgNode as CN

_C = CN()

_C.MODEL = CN()

_C.MODEL.RPN_ONLY = False

_C.MODEL.MASK_ON = False

_C.MODEL.DEVICE = "cuda"

_C.MODEL.META_ARCHITECTURE = "GeneralizedRCNN"

_C.MODEL.WEIGHT = ""

_C.INPUT = CN()

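# Check the image sizes in your training set first and adjust these values accordingly; otherwise you may run out of memory.

_C.INPUT.MIN_SIZE_TRAIN = 800 # size of the shorter image side during training

_C.INPUT.MAX_SIZE_TRAIN = 1333 # maximum size of the longer image side during training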

_C.INPUT.MIN_SIZE_TEST = 800

_C.INPUT.MAX_SIZE_TEST = 1333

_C.INPUT.PIXEL_MEAN = [102.9801, 115.9465, 122.7717]

_C.INPUT.PIXEL_STD = [1., 1., 1.]

_C.INPUT.TO_BGR255 = True

_C.DATASETS = CN()

_C.DATASETS.TRAIN = () # set in the model configuration file

_C.DATASETS.TEST = ()

_C.DATALOADER = CN()

_C.DATALOADER.NUM_WORKERS = 4 # number of data-loading workers

_C.DATALOADER.SIZE_DIVISIBILITY = 0

_C.DATALOADER.ASPECT_RATIO_GROUPING = True

_C.MODEL.BACKBONE = CN()

_C.MODEL.BACKBONE.CONV_BODY = "R-50-C4"

_C.MODEL.BACKBONE.FREEZE_CONV_BODY_AT = 2

_C.MODEL.BACKBONE.OUT_CHANNELS = 256 * 4

_C.MODEL.RPN = CN()

_C.MODEL.RPN.USE_FPN = False

_C.MODEL.RPN.ANCHOR_SIZES = (32, 64, 128, 256, 512)

_C.MODEL.RPN.ANCHOR_STRIDE = (16,)

_C.MODEL.RPN.ASPECT_RATIOS = (0.5, 1.0, 2.0)

_C.MODEL.RPN.STRADDLE_THRESH = 0

_C.MODEL.RPN.FG_IOU_THRESHOLD = 0.7

_C.MODEL.RPN.BG_IOU_THRESHOLD = 0.3

_C.MODEL.RPN.BATCH_SIZE_PER_IMAGE = 256

_C.MODEL.RPN.POSITIVE_FRACTION = 0.5

_C.MODEL.RPN.PRE_NMS_TOP_N_TRAIN = 12000

_C.MODEL.RPN.PRE_NMS_TOP_N_TEST = 6000

_C.MODEL.RPN.POST_NMS_TOP_N_TRAIN = 2000

_C.MODEL.RPN.POST_NMS_TOP_N_TEST = 1000

_C.MODEL.RPN.NMS_THRESH = 0.7

_C.MODEL.RPN.MIN_SIZE = 0

_C.MODEL.RPN.FPN_POST_NMS_TOP_N_TRAIN = 2000

_C.MODEL.RPN.FPN_POST_NMS_TOP_N_TEST = 2000

_C.MODEL.ROI_HEADS = CN()

_C.MODEL.ROI_HEADS.USE_FPN = False

_C.MODEL.ROI_HEADS.FG_IOU_THRESHOLD = 0.5

_C.MODEL.ROI_HEADS.BG_IOU_THRESHOLD = 0.5

_C.MODEL.ROI_HEADS.BBOX_REG_WEIGHTS = (10., 10., 5., 5.)

_C.MODEL.ROI_HEADS.BATCH_SIZE_PER_IMAGE = 512

_C.MODEL.ROI_HEADS.POSITIVE_FRACTION = 0.25

_C.MODEL.ROI_HEADS.SCORE_THRESH = 0.05

_C.MODEL.ROI_HEADS.NMS = 0.5

_C.MODEL.ROI_HEADS.DETECTIONS_PER_IMG = 100

_C.MODEL.ROI_BOX_HEAD = CN()

_C.MODEL.ROI_BOX_HEAD.FEATURE_EXTRACTOR = "ResNet50Conv5ROIFeatureExtractor"

_C.MODEL.ROI_BOX_HEAD.PREDICTOR = "FastRCNNPredictor"

_C.MODEL.ROI_BOX_HEAD.POOLER_RESOLUTION = 14

_C.MODEL.ROI_BOX_HEAD.POOLER_SAMPLING_RATIO = 0

_C.MODEL.ROI_BOX_HEAD.POOLER_SCALES = (1.0 / 16,)

# Number of classes. The default is 81 because COCO has 80 classes plus 1 for the background; my dataset has only 4 classes, so 5 including the background.

_C.MODEL.ROI_BOX_HEAD.NUM_CLASSES = 5

_C.MODEL.ROI_BOX_HEAD.MLP_HEAD_DIM = 1024

_C.MODEL.ROI_MASK_HEAD = CN()

_C.MODEL.ROI_MASK_HEAD.FEATURE_EXTRACTOR = "ResNet50Conv5ROIFeatureExtractor"

_C.MODEL.ROI_MASK_HEAD.PREDICTOR = "MaskRCNNC4Predictor"

_C.MODEL.ROI_MASK_HEAD.POOLER_RESOLUTION = 14

_C.MODEL.ROI_MASK_HEAD.POOLER_SAMPLING_RATIO = 0

_C.MODEL.ROI_MASK_HEAD.POOLER_SCALES = (1.0 / 16,)

_C.MODEL.ROI_MASK_HEAD.MLP_HEAD_DIM = 1024

_C.MODEL.ROI_MASK_HEAD.CONV_LAYERS = (256, 256, 256, 256)

_C.MODEL.ROI_MASK_HEAD.RESOLUTION = 14

_C.MODEL.ROI_MASK_HEAD.SHARE_BOX_FEATURE_EXTRACTOR = True

_C.MODEL.RESNETS = CN()

_C.MODEL.RESNETS.NUM_GROUPS = 1

_C.MODEL.RESNETS.WIDTH_PER_GROUP = 64

_C.MODEL.RESNETS.STRIDE_IN_1X1 = True

_C.MODEL.RESNETS.TRANS_FUNC = "BottleneckWithFixedBatchNorm"

_C.MODEL.RESNETS.STEM_FUNC = "StemWithFixedBatchNorm"

_C.MODEL.RESNETS.RES5_DILATION = 1

_C.MODEL.RESNETS.RES2_OUT_CHANNELS = 256

_C.MODEL.RESNETS.STEM_OUT_CHANNELS = 64

_C.SOLVER = CN()

_C.SOLVER.MAX_ITER = 40000 # maximum number of iterations

_C.SOLVER.BASE_LR = 0.02 # initial learning rate; usually set in the model configuration file

_C.SOLVER.BIAS_LR_FACTOR = 2

_C.SOLVER.MOMENTUM = 0.9

_C.SOLVER.WEIGHT_DECAY = 0.0005

_C.SOLVER.WEIGHT_DECAY_BIAS = 0

_C.SOLVER.GAMMA = 0.1

_C.SOLVER.STEPS = (30000,)

_C.SOLVER.WARMUP_FACTOR = 1.0 / 3

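_C.SOLVER.WARMUP_ITERS = 500 # number of warm-up iterations; the learning rate is kept lower while the iteration count is below this value

_C.SOLVER.WARMUP_METHOD = "constant" # warm-up strategy: either 'constant' or 'linear'

_C.SOLVER.CHECKPOINT_PERIOD = 2000 # interval, in iterations, between checkpoints

_C.SOLVER.IMS_PER_BATCH = 1 # number of images per batch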

_C.TEST = CN()

_C.TEST.EXPECTED_RESULTS = []

_C.TEST.EXPECTED_RESULTS_SIGMA_TOL = 4

_C.TEST.IMS_PER_BATCH = 1

_C.OUTPUT_DIR = "output" # output directory, mainly for checkpoints and inference results

_C.PATHS_CATALOG = os.path.join(os.path.dirname(__file__), "paths_catalog.py")
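To see how the SOLVER settings above interact, here is a standalone sketch of a multi-step schedule with warm-up. It is written from the parameter descriptions in this listing and is not copied from lr_scheduler.py; the function name `learning_rate` is hypothetical, and the exact interpolation used for the 'linear' method is an assumption.

```python
from bisect import bisect_right


def learning_rate(iteration, base_lr=0.02, steps=(30000,), gamma=0.1,
                  warmup_factor=1.0 / 3, warmup_iters=500,
                  warmup_method="constant"):
    """Learning rate at a given iteration for a warm-up + multi-step schedule."""
    factor = 1.0
    if iteration < warmup_iters:
        if warmup_method == "constant":
            factor = warmup_factor
        elif warmup_method == "linear":
            # Assumption: ramp linearly from warmup_factor up to 1.
            alpha = iteration / warmup_iters
            factor = warmup_factor * (1 - alpha) + alpha
        else:
            raise ValueError("unknown warmup method: {}".format(warmup_method))
    # Multiply by gamma once for every milestone already reached.
    return base_lr * factor * gamma ** bisect_right(steps, iteration)


for it in (0, 499, 500, 29999, 30000):
    print(it, learning_rate(it))
```

With the defaults shown, the rate stays at one third of BASE_LR for the first 500 iterations, then at BASE_LR until iteration 30000, and is multiplied by GAMMA from then on.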

In paths_catalog.py, the most important piece is the DatasetCatalog class.

import os


class DatasetCatalog(object):
    DATA_DIR = "datasets"
    DATASETS = {
        "coco_2014_train": (
            "coco/train2014",  # root directory of this dataset; it is joined with the file_name of each entry in images to get the full image path
            "coco/annotations/instances_train2014.json",  # path to the annotation file
        ),
        "coco_2014_val": (
            "coco/val2014",  # same as above
            "coco/annotations/instances_val2014.json",  # same as above
        ),
    }

    @staticmethod
    def get(name):
        if "coco" in name:  # e.g. "coco_2014_train"
            data_dir = DatasetCatalog.DATA_DIR
            attrs = DatasetCatalog.DATASETS[name]
            args = dict(
                root=os.path.join(data_dir, attrs[0]),
                ann_file=os.path.join(data_dir, attrs[1]),
            )
            return dict(
                factory="COCODataset",
                args=args,
            )
        raise RuntimeError("Dataset not available: {}".format(name))
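If you prefer not to reuse the coco_2014_* names, the same lookup logic works with your own entries. The sketch below is a standalone imitation of the class above so that it runs without the framework: the class name `MiniCatalog`, the dataset names, and the directory names are all hypothetical. Note that with the `"coco" in name` check shown above, a custom name has to contain "coco" to be found.

```python
import os


class MiniCatalog(object):
    """Standalone imitation of DatasetCatalog with hypothetical entries."""

    DATA_DIR = "datasets"
    DATASETS = {
        "coco_mydata_train": (
            "mydata/train",                            # image root
            "mydata/annotations/instances_train.json"  # annotation file
        ),
        "coco_mydata_val": (
            "mydata/val",
            "mydata/annotations/instances_val.json",
        ),
    }

    @staticmethod
    def get(name):
        if "coco" in name:
            root, ann_file = MiniCatalog.DATASETS[name]
            return dict(
                factory="COCODataset",
                args=dict(
                    root=os.path.join(MiniCatalog.DATA_DIR, root),
                    ann_file=os.path.join(MiniCatalog.DATA_DIR, ann_file),
                ),
            )
        raise RuntimeError("Dataset not available: {}".format(name))


entry = MiniCatalog.get("coco_mydata_train")
# The image path is the root joined with file_name from the annotation file.
image_path = os.path.join(entry["args"]["root"], "0001.jpg")
print(entry["args"]["ann_file"], image_path)
```

The names used as keys here are the ones that would then go into DATASETS.TRAIN and DATASETS.TEST in the model configuration file.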

3. Training and testing

# Enter the maskrcnn-benchmark directory and activate the maskrcnn_benchmark virtual environment

(base) xxx@xxx:~/github/maskrcnn-benchmark$ source activate maskrcnn_benchmark

(maskrcnn_benchmark) xxx@xxx:~/github/maskrcnn-benchmark$

============= Train the model =============

# Specify the model configuration file and run the training script

(maskrcnn_benchmark) xxx@xxx:~/github/maskrcnn-benchmark$ python tools/train_net.py \

--config-file configs/e2e_mask_rcnn_R_101_FPN_1x.yaml

============= Test the model =============

# Specify the model configuration file and run the test script

(maskrcnn_benchmark) xxx@xxx:~/github/maskrcnn-benchmark$ python tools/test_net.py \

--config-file configs/e2e_mask_rcnn_R_101_FPN_1x.yaml

3.1 Errors and fixes

Running python setup.py build_ext install fails with the error below; running pip install cython fixes it.

gcc: error: pycocotools/_mask.c: No such file or directory

error: command 'gcc' failed with exit status 1

Error when starting training:

from maskrcnn_benchmark import _C

ImportError: /home/xxx/github/maskrcnn-benchmark/maskrcnn_benchmark/_C.cpython-37m-x86_64-linux-gnu.so: undefined symbol: _ZN3c1019UndefinedTensorImpl10_singletonE

Fix (if the error persists after downgrading PyTorch, also try rebuilding):

- Downgrade PyTorch by running

conda install pytorch=1.0.0 -c soumith

pytorch-1.0.0 pairs with torchvision-0.2.1.

- Delete the build folder under maskrcnn-benchmark and rebuild.

rm -rf build

python setup.py build develop

Offline download link for pytorch-1.0.0 (Ubuntu 16.04):

https://conda.anaconda.org/soumith/linux-64/pytorch-1.0.0-py3.7_cuda9.0.176_cudnn7.4.1_1.tar.bz2

Error when starting training:

Reference: http://www.sohu.com/a/332756215_473283

AttributeError: module 'torch' has no attribute 'bool'

Fix:

Modify the following three files:

maskrcnn-benchmark/maskrcnn_benchmark/structures/segmentation_mask.py

maskrcnn-benchmark/maskrcnn_benchmark/modeling/balanced_positive_negative_sampler.py

maskrcnn-benchmark/maskrcnn_benchmark/modeling/rpn/inference.py

In each of them, replace torch.bool with torch.uint8.

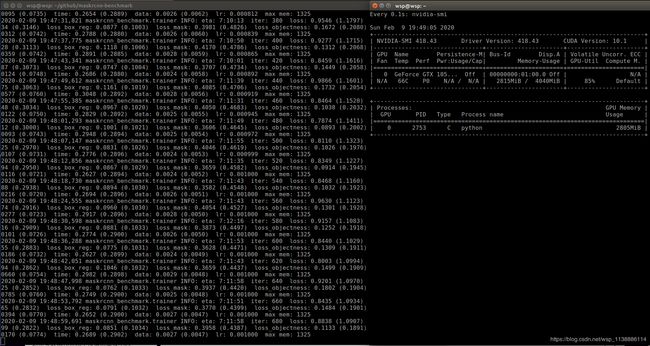
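Instead of editing the three files by hand, the replacement can be scripted. The following is a minimal sketch: the helper name `replace_in_files` is hypothetical, and it does a plain text substitution, so review the resulting diff before training. The demo runs on a temporary file rather than on the real sources.

```python
import tempfile
from pathlib import Path


def replace_in_files(paths, old="torch.bool", new="torch.uint8"):
    """Replace old with new in each file; return {path: occurrences replaced}."""
    counts = {}
    for path in map(Path, paths):
        text = path.read_text(encoding="utf-8")
        count = text.count(old)
        if count:
            path.write_text(text.replace(old, new), encoding="utf-8")
        counts[str(path)] = count
    return counts


# Demo on a throwaway file; for the real fix, pass the three files listed above.
with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp) / "demo.py"
    demo.write_text("mask = x.to(torch.bool)\n", encoding="utf-8")
    counts = replace_in_files([demo])
    patched = demo.read_text(encoding="utf-8")

print(patched)
```

Keeping a copy of the original files, or working in a git checkout, makes it easy to undo the change if PyTorch is upgraded later.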

Start training: python tools/train_net.py --config-file "configs/e2e_mask_rcnn_R_50_FPN_1x.yaml"

References and acknowledgements

https://yq.aliyun.com/articles/701361

https://blog.csdn.net/ChuiGeDaQiQiu/article/details/83868512

Dataset: https://zhuanlan.zhihu.com/p/29393415

Training on your own dataset: https://www.cnblogs.com/yanghailin/p/11214526.html

Training Mask Scoring RCNN on your own data: https://blog.csdn.net/linolzhang/article/details/97833354

Pre-trained models: https://www.cnblogs.com/houyong/p/10266228.html

MaskRCNN Benchmark configuration issues: https://blog.csdn.net/hankexin1314/article/details/102800908

Data type changes: https://www.cnblogs.com/jiading/p/12055904.html