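- Linux C multi-user network chat room

HWY_猿

network programming, multi-user network chat room, Linux network chat room, chat room, Linux C multi-user network chat room

After several days and nights of hard work, I finally finished this chat room. It is nothing fancy, but it is the result of working from morning to night these past few days, so I am writing this post to record it. I make no promises beyond the fact that it works; the complete code is at the end. If this post is reposted, please credit the source. I. Features: this multi-user network chat room, written in C for Linux, has the following basic features (or requirements): (1) client/server model over IPv4 TCP; (2) clients log in with an account and password, and must register if they have no account; (3) each time the server accepts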

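- Installing a specific cmake version from source on Ubuntu and CentOS

你若盛开,清风自来!

ubuntu, centos, linux

URL: Index of /files/v3.23. Errors with the usual installation: 1. Copy the package cmake-3.12.4-Linux-x86_64.tar.gz to the target directory. 2. Extract it: tar -zxvf cmake-3.12.4-Linux-x86_64.tar.gz 3. Enter the extracted folder: cd cmake-3.12.4-Linux-x86_64.tar.gz 4. Running the following command fails: bash: ./bootstrap: No such fil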

- A very practical one-click inspection script for Linux

我科绝伦(Huanhuan Zhou)

linux, chrome, operations

[root@localhost ~]# chmod +x system_check.sh [root@localhost ~]# ./system_check.sh [root@localhost ~]# cat /root/check_log/check-20250227.txt Script contents: #!/bin/bash # @Author: zhh # beseem CentOS6.X CentOS7.X # date: 20250224 # Check

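- [Linux automation in practice] Replacing text in a Linux shell script

忙碌的菠萝

Linux automation in practice, linux, automation, operations

In a Linux shell script, you can use the sed command to replace text. Here is a basic example that finds the text old_text in the file example.txt and replaces it with new_text: sed -i 's/old_text/new_text/g' example.txt Explanation: sed: short for stream editor, used to process text data. -i: edit the file in place. s: the substitute operation. old_text: the text to be replaced. new_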
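As a rough companion to the sed one-liner in the excerpt above, here is a minimal Python sketch of the same in-place replacement. It is an illustration under assumptions, not part of the original post: the helper name `replace_in_file` is hypothetical, and unlike sed it treats `old_text` as a literal string rather than a regular expression.

```python
from pathlib import Path
import tempfile


def replace_in_file(path, old_text, new_text):
    """Replace every literal occurrence of old_text in the file, in place.

    Returns the number of occurrences replaced. Hypothetical helper:
    sed interprets its pattern as a regex, this function does not.
    """
    p = Path(path)
    content = p.read_text(encoding="utf-8")
    count = content.count(old_text)
    if count:
        p.write_text(content.replace(old_text, new_text), encoding="utf-8")
    return count


if __name__ == "__main__":
    # Demonstrate on a throwaway file instead of a real example.txt.
    with tempfile.TemporaryDirectory() as tmp:
        example = Path(tmp) / "example.txt"
        example.write_text("old_text here, old_text there\n", encoding="utf-8")
        print(replace_in_file(example, "old_text", "new_text"))
        print(example.read_text(encoding="utf-8"), end="")
```

Reading the whole file into memory is fine for small configuration files; for very large files a streaming approach would be a better fit.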

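- Fixing the inability to switch to a Chinese input method in Qt 5.6 on Linux

糯米藕片

experience sharing, qt, linux, programming languages

Note: Qt 5.6.1 requires building the 1.0.6 source. Grant permissions with chmod 777, copy the .so to two locations, and restart Qt Creator. sudo cp libfcitxplatforminputcontextplugin.so /home/shen/Qt5.6.1/Tools/QtCreator/lib/Qt/plugins/platforminputcontexts sudo cp libfcitxplatforminputcontextpl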

- Llama.cpp 服务器安装指南(使用 Docker,GPU 专用)

田猿笔记

AI高级应用llama服务器dockerllama.cpp

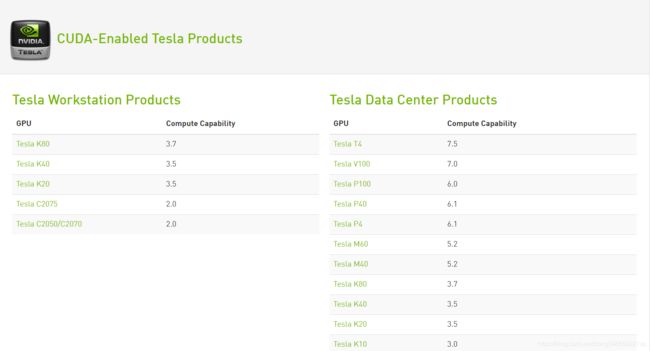

前置条件在开始之前,请确保你的系统满足以下要求:操作系统:Ubuntu20.04/22.04(或支持Docker的Linux系统)。硬件:NVIDIAGPU(例如RTX4090)。内存:16GB+系统内存,GPU需12GB+显存(RTX4090有24GB)。存储:15GB+可用空间(用于源码、镜像和模型文件)。网络:需要互联网连接以下载源码和依赖。软件:已安装并运行Docker。已安装NVIDIA

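- Driver development series 39 - Linux Graphics 3D drawing flow (2) - Setting up the rendering pipeline

黑不溜秋的

GPU driver column, driver development

1. Overview: Intel's Iris driver is a Gallium driver in Mesa, used mainly for Intel Gen8+ GPUs (Broadwell and newer architectures). It interacts with the i915 kernel DRM driver and provides 3D acceleration through Vulkan (ANV), OpenGL (Iris Gallium), or OpenCL (Clover). In the Iris driver, GPU pipeline setup involves several parts, including compiling and uploading shaders, setting render targets, binding buffers, configuring fixed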

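- Linux driver development: USB driver development

DS小龙哥

Linux system programming and driver development, linux, USB driver, embedded

1. Introduction to USB 1.1 What is USB? USB is a serial bus standard for connecting computer systems to external devices, and also a technical specification for input/output interfaces. It is widely used in information and communication products such as personal computers and mobile devices. USB is short for Universal Serial Bus. It first appeared in 1995, alongside the rise of Pentium machines. USB only became widespread after Microsoft added USB support in Windows 98, and USB devices have multiplied since: digital cameras, webcams, scanners, joysticks, printers, keyboards, mice, and so on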

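- Freeing an occupied port on Linux: two ways to close a port

爱吃面的喵

freeing an occupied port on Linux

1. Close the port by killing the process. Every open port is held by a process, so killing that process closes the port. Step 1: use the command netstat -anp | grep <port> to find the process occupying the port. Step 2: kill it with kill -9 PID. 2. Open or close the port by starting or stopping the service. Because every port has a corresponding service, closing the port only requires stopping that service. On Linux, services that start automatically at boot are generally stored in two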

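- Linux commands for checking port usage

酒酿小圆子~

linux, operations, server

Contents: 1. lsof -i:port 2. The netstat command 2.1 netstat -tunlp 2.2 netstat -anp. 1. lsof -i:port shows what is using a given port. For example, to check port 5000: sudo lsof -i:5000 Note: it is best to use sudo for administrator privileges; without them, the relevant process may not be detected. (Not every process can be detected: information about processes owned by other users is not shown. To see all the information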
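When lsof and netstat are not at hand, a quick programmatic check is possible. The following is a minimal Python sketch, not taken from the original post; the function name `is_port_in_use` is hypothetical. It only reports whether something accepts TCP connections on the port, and unlike `lsof -i:port` it cannot tell you which process owns it.

```python
import socket


def is_port_in_use(port, host="127.0.0.1"):
    """Return True if a TCP connection to (host, port) succeeds.

    Hypothetical helper: detects listening TCP sockets only, and does not
    identify the owning process the way lsof or netstat -anp would.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.settimeout(1.0)
        # connect_ex returns 0 on success and an errno value otherwise.
        return s.connect_ex((host, port)) == 0


if __name__ == "__main__":
    print(is_port_in_use(5000))
```

Attempting a connection needs no special privileges, which sidesteps the sudo caveat mentioned in the excerpt, at the cost of giving far less detail.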

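- Linux Device Drivers, 3rd edition (part 1)

xiaozi63

linux, kernel, drivers

Chapter 1: An introduction to device drivers. A driver is the software layer between upper-level applications and the underlying hardware. Separating mechanism from policy is one of the best ideas in Linux: mechanism is what capabilities need to be provided, and policy is how those capabilities are used. Different environments usually need to use the hardware in different ways, so a driver should be as policy-free as possible. Driver design has to weigh several factors: offering the user as many options as possible; the time spent writing the driver; keeping the driver's operations as fast as possible; and keeping the program as simple as possible. Kernel overview: process management: responsible for creating and destroying processes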

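- The most versatile cross-platform engine: the ShiVa 3D engine

pizi0475

graphics and imaging, other articles, graphics engine, game engine, engine, cross-platform, scripting, server, ssl, soap

The ShiVa 3D engine is the most versatile cross-platform engine. It runs in web browsers and also supports Windows, Mac, Linux, Wii, iPhone, iPad, Android, WebOS, and the Airplay SDK. The engine supports SSL-secured plugin extensions, such as the PhysX engine, the FMOD sound library, ARToolkit, and the Scaleform HUD engine. Classic Geometry: classic graphics processing supports polygon meshes, including: - static meshes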

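- How to troubleshoot port usage on Linux

程序猿000001号

linux, operations, server

How to troubleshoot port usage on Linux. On a Linux system, when a network service fails to start or responds abnormally, the cause may be that a particular port is already occupied by another process. In that case you need to investigate port usage to resolve the problem. This article introduces several common command-line tools and methods to help you quickly locate and resolve port conflicts. 1. Using the netstat command: netstat is a network statistics tool that can display network connections, routing tables, interface statistics, and more. To check port usage, you can use the following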

- Several ways to check port usage on Linux

liu_caihong

linux, server, networking

Several ways to check port usage on Linux. Overview: the test environment is CentOS 7.9; this article briefly gives a few examples of checking ports. I. Checking local port usage 1. netstat # install netstat yum -y install net-tools # check port usage netstat -npl | grep "port"

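- Developing a program with C++ and GCC on Linux for stable, efficient data migration between multiple schemas of a single database across different PostgreSQL instances

weixin_30777913

c++, database, postgresql

Design a C++ program, built with GCC and running on Linux, that connects to two different PostgreSQL instances at the same time. Each instance has a database whose multiple schemas have identical table structures. Copy the data of all tables in the multiple schemas of one database in one instance to the multiple schemas of a database in the other instance, using the following fast, efficient methods. Add exception handling and support retrying a set number of times at fixed intervals. For each table, the copy status and record count, start and end timestamps, run time, and each batch's run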

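- [spug] Usage

勤不了一点

CI/CD, python, django, operations, devops

Contents: Introduction; Download and installation; Initial configuration; Startup and logs; Version updates; Login and usage; Workbench; Host management; Batch execution; Configuration center; Application releases; System management; Monitoring and alerting; Usage problems. Introduction: Manual deployment | Spug. An upgraded version of walle; lightweight and agentless; host management; batch execution on hosts; online host terminal; online file upload and download; application release and deployment; online scheduled tasks; configuration center; monitoring and alerting. If any of my tests are wrong, please point it out. Download and installation. Test environment: Python 3.7.8, CentOS Linux release 7.4.1708 (Core), sp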

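- nginx: inline preview and forced download

勤不了一点

nginx, operations

The environment is as follows: nginx version: nginx/1.14.1; nginx version: nginx/1.16.1; Chrome: 102.0.5005.63 (official build) (64-bit); CentOS Linux release 7.5.1804 (Core). Set any file type to preview inline or download directly. Take .log and .txt files as examples: under nginx's default configuration, .txt opens inline, while .log triggers a popup, that is, a download. Using nginx,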

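- Linux: finding out how a process was started

勤不了一点

system, linux, operations, server

Contents: For a systemd-managed service, how do you quickly find the corresponding service? What is a CGroup? Finding the systemd service for a process. Method 1: inspect the /proc//cgroup file. Method 2: use the ps command with the --cgroup option. Method 3: systemd-cgls. About system.slice and user.slice. Method 4: inspect the file. Finding processes that are not system services. Step 1: determine whether the process is a system service. Step 2: determine the service's directory and find the startup script. Step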

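- Installing nginx (just download and unzip, no installer needed)

当归1024

nginx, operations

nginx is a high-performance HTTP and reverse-proxy web server that also provides IMAP/POP3/SMTP services. nginx is written in C, has a small memory footprint, stable performance, strong concurrency, and a rich feature set. It can be compiled and run on most Unix and Linux systems, and there is a Windows port. 1. nginx download page: nginx: download 2. Installing and starting on Windows: nginx is portable and needs no installation; after unzipping, double-click nginx.exe to start the service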

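- The Linux disk command df -h explained

小毛驴850

linux, server, operations

df -h is a commonly used Linux command that shows file system disk usage in an easy-to-read form. Here is a detailed explanation of df -h: -h: display disk space sizes in a human-readable format, for example in GB, MB, or KB instead of bytes. Running df -h produces output like the following: Filesystem Size Used Avail Use% Mounted on /dev/sda1 20G 10G 10G 50% / tmpfs 2.0G 0 2.0G 0% /dev/s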
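To make the "human-readable" idea behind `-h` concrete, here is a small Python sketch built on the standard library's `shutil.disk_usage`. It is an approximation for illustration, not how df itself is implemented: the helper names `human_readable` and `disk_summary` are hypothetical, it reports one path rather than every mounted file system, and its percentage is used/total, which can differ slightly from df's Use% column.

```python
import shutil


def human_readable(num_bytes):
    """Format a byte count with binary units, roughly in the style of df -h."""
    size = float(num_bytes)
    for unit in ("B", "K", "M", "G", "T"):
        # Stop at the first unit where the value fits, or at the largest unit.
        if size < 1024 or unit == "T":
            return f"{size:.1f}{unit}"
        size /= 1024


def disk_summary(path="/"):
    """Return size, used, available, and use% for the file system holding path."""
    usage = shutil.disk_usage(path)
    percent = usage.used / usage.total * 100 if usage.total else 0.0
    return {
        "size": human_readable(usage.total),
        "used": human_readable(usage.used),
        "avail": human_readable(usage.free),
        "use_percent": f"{percent:.0f}%",
    }


if __name__ == "__main__":
    print(disk_summary("."))
```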

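- Fixing No module named 'typing'

qq_40375355

linux, python

ImportError: No module named 'typing': how to fix it. 1. Problem description: after upgrading pip to the latest version on Linux, running pip fails with "No module named 'typing'". 2. Cause: the default Python on this Linux system is 2.7, and pip 21 no longer supports Python 2.7, hence the error. 3. Solution: the fix commonly found online is python -m pip uninstall pip. Run that command; if it reports the following error: 'pip' is a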

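- OpenCV, open-source machine vision software

视觉人机器视觉

miscellany, opencv, open source, artificial intelligence

OpenCV (Open Source Computer Vision Library) is an open-source computer vision and machine learning software library, widely used in real-time image processing, video analysis, object detection, face recognition, and other areas. It was started at Intel's research labs in 1999 and is now one of the most popular tools in computer vision, supporting multiple programming languages (such as C++, Python, and Java) and operating systems (Windows, Linux, macOS, Android, iOS). Core features: image processing: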

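- The complete collection of Kali Linux information-gathering tools

weixin_30359021

001: 0trace, tcptraceroute, traceroute. Description: when enumerating network paths, traditional ICMP-based probing tools are often blocked, so the results are incomplete. TCP-based probing, by contrast, has a higher success rate, and probing over an already established, legitimate TCP session has a further advantage and can even reach the target's internal network. There is no silver bullet, but combining several techniques collects more complete information about the target in preparation for later penetration testing. 002: Acccheck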

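- Deploying the Milvus vector database

一方有点方

milvus

Official documentation: Milvus vector database documentation; Run Milvus in Docker (Linux) | Milvus Documentation. Following the documentation, deployment is fairly simple; here are the problems I ran into. I. Deploying with Docker Compose 1. The images cannot be pulled (docker.io is blocked); only the following images are available: image: quay.io/core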

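- Kali Linux information-gathering tools

dechen6073

http://www.freebuf.com/column/150118.html Most penetration testers probably want to be the 007 of cyberspace, but my own goal is to be Q! Anyone who has seen the 007 films will remember Q, who has little screen time but is always there (he has since retired because of his age). He invented many marvelous weapons for 007 and always rescued the hero at the critical moment. Compared with Q, though, I am ashamed, because so far I have not invented anything that compares with his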

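- RK3568 platform development series (kernel): the Linux kernel boot process

内核笔记

RK3568, linux

Contents: 1. Linux kernel boot flow diagram 2. Self-decompression stage 3. Kernel entry point 4. Assembly stage 5. C function stage 6. Kernel startup context 7. Running the first application, the init program. Reflect, share, and grow, so that you and others all benefit! 1. Linux kernel boot flow diagram. Self-decompression: Bootlo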

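- Linux kernel code, annotated in detail: inet_create

薇儿安蓝

linux, networking

/* linux-5.10.x\net\ipv4\af_inet.c * Its main job is to allocate and initialize a new network socket and add it to the system's network socket table. Summary: Socket creation: first, sock_create() is called to create a new socket instance; it returns a pointer to a struct socket representing the created socket. Socket type and protocol setup: based on the specified protocol type, the function sets the socket's type and protocol family. Common protocol families include IPv4 (A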

- Linux kernel: net_proto_family

星空探索

Linux Kernel networking implementation, Linux Kernel

static const struct net_proto_family inet_family_ops = { .family = PF_INET, .create = inet_create, .owner = THIS_MODULE, }; (void)sock_register(&inet_family_ops); /** * sock_register - add a socket protocol handler * @ops: descri

- A leisurely tour of the Linux network stack, part 2: net_device and its initialization and registration (version 4.19)

天麓

networking, linux, device, driver, linux kernel, linux network protocols

Code flow: static int __init net_dev_init(void) { BUG_ON(!dev_boot_phase); dev_proc_init(); => int __init dev_proc_init(void) { int ret = register_pernet_subsys(&dev_proc_ops); ==> static struct pernet_operations __net_initdata

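- (All in one post) Using an SD card to port the official U-Boot and kernel and build a rootfs for the 野火 EBF6UL/LL-pro board

又摆有菜

embedded hardware, arm development, linux

0. Notes up front: 1. My PC runs Linux, and all the following steps are done on Linux, not Windows. (If you are on Windows, you can install a virtual machine to get around this. This post does not cover installing a virtual machine on Windows.) 2. The only thing done on Windows is using the serial terminal software MobaXterm. (For this, first install the CH340 USB-to-serial driver.) 1. About the EBF6UL/LL-pro: this is a 野火 development board using NXP's i.MX6ULL chip. Other details are not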

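- Common dataset error codes in the web reporting tool FineReport, with explanations

老A不折腾

web reports, finereport, code, visualization tools

When building reports with FineReport, many people panic when a preview fails. There is no need: once you understand the error code and its meaning, you can quickly eliminate the error. Here I share FineReport's dataset error codes and their explanations. If anything is inaccurate, please correct me.

NS-war-remote=Error code\:1117 Compressed deployment does not support remote design

NS_LayerReport_MultiDs=Error code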

- Java's WeakReference and WeakHashMap

bylijinnan

java, weak references

First, a look at WeakReference

An example from the wiki article on weak references:

public class ReferenceTest {

public static void main(String[] args) throws InterruptedException {

WeakReference r = new Wea

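- Linux (hostname): mapping host names to IP addresses

eksliang

linux, hostname

1. What is a host name?

Whether on a LAN or on the Internet, every host has an IP address that distinguishes it from other hosts; in other words, the IP address is the host's house number. But IP addresses are hard to remember, so domain names were introduced. Domain names exist only on the public network (the Internet). Each domain name corresponds to an IP address, but one IP address can correspond to multiple domain names. Domain names look like linuxsir.org;

What is a host name used for?

Answer: on a LAN, every machine has a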

- Common Oracle tips

18289753290

Common Oracle tips: (1) Copy a table's structure and data: create table temp_clientloginUser as select distinct userid from tbusrtloginlog (2) Copy only the data, if the table structures are identical: insert into mytable select *

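- com.mchange.v2.resourcepool.TimeoutException when using the c3p0 database connection pool

酷的飞上天空

exception

A production environment uses the c3p0 database connection pool and provides interface services to external callers. Recently, as the access load increased, the following has been appearing frequently in the backend Tomcat logs: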

com.mchange.v2.resourcepool.TimeoutException: A client timed out while waiting to acquire a resource from com.mchange.v2.resourcepool.BasicResou

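- How an IT systems analyst can learn big data

蓝儿唯美

big data

I am an IT systems analyst working on a big data project. What do you need to understand before diving into such a project? The best way to learn big data is to start by understanding how information systems work, especially databases and infrastructure. Before starting, you also need to know the big data tools, such as Cloudera, Hadoop, Spark, Hive, Pig, Flume, Sqoop, and Mesos. A systems analyst needs to understand how to organize, manage, and protect data. There are dozens of data management products on the market for managing data. Your big data database may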

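- Learning Spring: an introduction

a-john

spring

Spring is an open-source framework created to address the complexity of enterprise application development. Spring uses plain JavaBeans to do things that previously only EJB could do. Spring's usefulness is not limited to server-side development, however: in terms of simplicity, testability, and loose coupling, any Java application can benefit from Spring. Its main features are dependency injection, AOP, persistence, transactions, Spring MVC, and Acegi Security

To reduce the complexity of Java development,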

- An XML file of custom colors

aijuans

xml

<?xml version="1.0" encoding="utf-8"?> <resources> <color name="white">#FFFFFF</color> <color name="black">#000000</color> &

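- What does operations actually do?

aoyouzi

What does operations actually do?

Source: 夏叔叔 (WeChat ID: woshixiashushu); feel free to follow! I have not written anything for a long time. Lately, with the launch of the 爱狗团 product and a steady stream of interviews, I keep being asked one question. Q: What does operations at 爱狗团 mainly do? A: Get users having fun together. Why is it about getting users to play along? What exactly is operations? What does operations actually do? Let us first answer a simpler question: what do internet companies evaluate operations on? Taking 爱狗团 as an example, the vast majority of mobile internet companies evaluate the operations department in three areas: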

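- Object-oriented JavaScript: classes and objects

百合不是茶

js, object-oriented, functions, creating classes and objects

I have been working with JS for a few months, but some of its object-oriented concepts are still fuzzy to me. JS is an object-oriented language, but unlike Java it has no class, so it is not a strictly object-oriented language. In Java web development, JS is about as important as Java itself. JSON is now the mainstream format for returning data on the web, and JSON's syntax resembles the way JS classes and properties are created.

Below are some techniques for creating classes and objects in JS.

1. Classes and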

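- web.xml: configuring administered resource objects with resource-env-ref

bijian1013

java, web.xml, servlet

The resource-env-ref element declares a servlet's reference to an administered object associated with a resource in the servlet's environment.

<resource-env-ref>

<resource-env-ref-name>resource name</resource-env-ref-name>

<resource-env-ref-type>the resource class returned when the resource is looked up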

- Create a composite component with a custom namespace

sunjing

https://weblogs.java.net/blog/mriem/archive/2013/11/22/jsf-tip-45-create-composite-component-custom-namespace

When you developed a composite component the namespace you would be seeing would

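- [MongoDB study notes 12] Mongo replica set server roles: the Arbiter

bit1129

mongodb

1. Why do replica sets include the Arbiter role? Answering this question means looking at two things: the conditions under which a replica set survives, and the characteristics of the Arbiter server. What is an Arbiter? An arbiter does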

not have a copy of data set and

cannot become a primary. Replica sets may have arbiters to add a

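- JavaScript development notes

白糖_

JavaScript

Getting elements inside an iframe

We usually use the form window.frames["frameId"].document.getElementById("divId").innerHTML to get elements inside an iframe. This works in IE, Safari, and Chrome, but not in Firefox. In fact, jQuery's contents method provides, for if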

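- alert stops working after the Chrome web browser has been open for a while

bozch

Web, chrome, alert not working

While developing today, I suddenly found that alert would no longer pop up in Chrome, which was very strange.

I tried other browsers and found no problems at all.

At first I thought some mechanism in Chrome was causing it, so I tried all kinds of code to get the alert to appear. It still did not show.

If customers got no prompt while using a product developed this way, the experience would be disastrous. With no other options left, I closed the browser and restarted it.

That fixed it, which is just bizarre. Could it be that Chro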

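- The Beauty of Programming: scheduling meetings efficiently, the graph coloring problem, greedy algorithm

bylijinnan

The Beauty of Programming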

import java.util.ArrayList;

import java.util.Collections;

import java.util.List;

import java.util.Random;

public class GraphColoringProblem {

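/** The Beauty of Programming: scheduling meetings efficiently, the graph coloring problem, greedy algorithm

* Suppose many classrooms are to be used for a group of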
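The Java listing above is cut off, so the following is only an independent Python sketch of the general technique the title names, greedy graph coloring, and not a reconstruction of the author's code. It assumes each meeting is a node, an edge joins two meetings that cannot share a slot, and each color stands for one slot or room. The function name `greedy_coloring`, the input format, and the largest-degree-first ordering are all choices made for this illustration; greedy coloring is a heuristic and is not guaranteed to use the minimum number of colors.

```python
def greedy_coloring(conflicts):
    """Assign each node the smallest color not used by its colored neighbors.

    conflicts: dict mapping each node to the set of nodes it conflicts with
    (assumed symmetric). Returns a dict mapping node -> color index.
    """
    colors = {}
    # Visit high-degree nodes first; ties are broken by node name so the
    # result is deterministic. This ordering is a common heuristic only.
    order = sorted(conflicts, key=lambda n: (-len(conflicts[n]), n))
    for node in order:
        used = {colors[nb] for nb in conflicts[node] if nb in colors}
        color = 0
        while color in used:
            color += 1
        colors[node] = color
    return colors


if __name__ == "__main__":
    # Hypothetical meetings: A, B, C all clash with each other; D clashes with A.
    meetings = {
        "A": {"B", "C", "D"},
        "B": {"A", "C"},
        "C": {"A", "B"},
        "D": {"A"},
    }
    print(greedy_coloring(meetings))
```

The number of distinct colors in the result is the number of slots or rooms this heuristic needs for the given conflicts.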

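- Machine learning concepts and development tools

chenbowen00

algorithms, matlab, machine learning

Basic concepts:

Machine learning (ML) is an interdisciplinary field drawing on probability theory, statistics, approximation theory, convex analysis, computational complexity theory, and other disciplines. It studies how computers can simulate or implement human learning behavior in order to acquire new knowledge or skills and to reorganize existing knowledge structures so as to keep improving their own performance.

It is the core of artificial intelligence and the fundamental way to make computers intelligent. Its applications span every area of artificial intelligence, and it relies mainly on induction and synthesis rather than deduction.

Development tools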

M

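- [Cosmic economics] On the possibility of establishing permanent settlements in space

comsci

economics

As everyone knows, real estate on Earth is rather expensive, and land deeds often change their wording at the will of a new government........

So, until the Earth Parliament has the power to exercise law and authority in space, our friendly alliance of the outer solar system could consider building fairly large settlements at certain gravitational balance points of the Earth-Moon system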

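- Oracle 11g Database Control certificate error

daizj

oracle, certificate error, oracle 11G installation

Oracle 11g Database Control certificate error

After installing Oracle 11g on Windows 7, opening Database Control brings up the EM management page, which reports a certificate error. Clicking "Continue to this website" just stays on the certificate error page.

Solution:

The cause is the update KB2661254, which restricts the use of certificates with RSA keys shorter than 1024 bits. For details, see Microsoft's official announcement: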

- Java I/O: deleting files by extension with FilenameFilter

游其是你

FilenameFilter

In Java, you can filter files by implementing the FilenameFilter interface and overriding its accept(File dir, String name) method.

In this example, we show how to list all ".txt" files under the path "c:\\folder" and delete them.

- A simple look at C arrays, with a simple sorting example for one-dimensional arrays and a simple two-dimensional array example

dcj3sjt126com

c, array

# include <stdio.h>

int main(void)

{

int a[5] = {1, 2, 3, 4, 5};

// a is the name of the array; 5 is the number of elements, and the five elements are referred to as a[0], a[1] ... a[4]

int i;

for (i=0; i<5; ++i)

printf("%d\n",

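- PRIMARY, INDEX, and UNIQUE belong to one category. PRIMARY is the primary key: unique and not null. INDEX is an ordinary index. UNIQUE is a unique index

dcj3sjt126com

primary

PRIMARY, INDEX, and UNIQUE belong to one category. PRIMARY is the primary key: unique and not null. INDEX is an ordinary index. UNIQUE is a unique index: duplicates are not allowed. FULLTEXT is a full-text index, used to search for text within an article. For example, suppose you are building a membership card system for a shopping mall. The system has a member table with the following fields: member ID INT, member name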
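The difference between the three index kinds can be tried out quickly. The sketch below uses Python's built-in `sqlite3` module with an in-memory database, which is an assumption made for convenience: the excerpt's keywords suggest MySQL, and SQLite's syntax and behavior differ in places. The table and column names (`member`, `member_id`, `member_name`, `id_card`) are hypothetical stand-ins for the truncated field list.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# PRIMARY KEY: unique, and for an INTEGER PRIMARY KEY in SQLite never NULL.
conn.execute(
    """CREATE TABLE member (
           member_id   INTEGER PRIMARY KEY,
           member_name TEXT NOT NULL,
           id_card     TEXT NOT NULL
       )"""
)
# Ordinary index: speeds up lookups, duplicates are allowed.
conn.execute("CREATE INDEX idx_member_name ON member (member_name)")
# Unique index: speeds up lookups and rejects duplicate values.
conn.execute("CREATE UNIQUE INDEX idx_member_id_card ON member (id_card)")

conn.execute("INSERT INTO member VALUES (1, 'Alice', 'X100')")
conn.execute("INSERT INTO member VALUES (2, 'Alice', 'X200')")  # same name is fine


def try_insert(row):
    """Insert a row; return False if a uniqueness constraint rejects it."""
    try:
        conn.execute("INSERT INTO member VALUES (?, ?, ?)", row)
        return True
    except sqlite3.IntegrityError:
        return False


if __name__ == "__main__":
    print(try_insert((1, "Bob", "X300")))  # duplicate primary key
    print(try_insert((3, "Bob", "X100")))  # duplicate unique-index value
    print(try_insert((3, "Bob", "X300")))  # no conflict
```

FULLTEXT indexes are left out because SQLite handles full-text search through a separate extension rather than an index keyword.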

- Java collection helper classes: Collections and Arrays

shuizhaosi888

Collections, Arrays, HashCode

Arrays, Collections

1) Converting between arrays and collections

public static <T> List<T> asList(T... a) {

return new ArrayList<>(a);

}

a)Arrays.asL

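- Spring Security (10): logging out

234390216

logout, Spring Security, logging out, logout-url, LogoutFilter

To implement logout, we define a logout element under the http element; Spring Security then automatically adds LogoutFilter, the filter that handles logout, to the FilterChain. When the http element's auto-config attribute is set to true, the logout definition is configured automatically, and the default logout URL is "/j_spring_secu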

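- Learning front-end development through source code: Backbone, part 3, Model

逐行分析JS源代码

backbone, source code analysis, js, learning

Backbone analysis, part 3: Model

Overview: Model provides data storage, keeping data in JSON form in the Model's attributes,

but its key strength is a powerful, easy-to-use set of methods for storing, reading, deleting, and modifying data, with corresponding events fired for the different operations.

For example, every modification or addition triggers change, which is very useful for updating views when the data model changes, and the Model is also linked to collection.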

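- SpringMVC source code summary (7): HttpMessageConverter in mvc:annotation-driven

乒乓狂魔

springMVC

This article mainly covers the whole registration process for HttpMessageConverter, including custom HttpMessageConverters, and then looks at some HttpMessageConverters in detail.

Introduction to the HttpMessageConverter interface: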

public interface HttpMessageConverter<T> {

/**

* Indicate

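- Distributed systems fundamentals and algorithm theory

bluky999

algorithms, zookeeper, distributed systems, consistent hashing, paxos

Distributed systems fundamentals and algorithm theory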

BY

[email protected]

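Permanent link to this article: http://nodex.iteye.com/blog/2103218

In the age of big data, whether you work on storage, search, data analysis, or the product or service itself, serving internet and mobile internet users means a distributed environment is unavoidable. Here I collect introductions to some fundamentals and algorithm theory of distributed systems, and while rounding out my own knowledge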

- .gitignore for Android Studio, and fixing a .gitignore that has no effect

bell0901

android, gitignore

A collection of .gitignore templates on GitHub, with all kinds of .gitignore files: https://github.com/github/gitignore

The .gitignore I use for Android Studio projects, with additions to the android.gitignore from GitHub

# OSX files // on macOS .DS_Store

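- 10 steps to becoming a senior programmer

tomcat_oracle

programming

What

A software engineer's career goes through several stages: junior, intermediate, and finally senior. This article covers 10 steps to help you become a senior software engineer.

Why

Earn more! Your salary rises as your skills improve.

Advance your career. Once you are a senior software engineer, you can move toward positions such as architect, team lead, or CTO.

Take on bigger challenges. As you grow, your influence grows too.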

- Installing MongoDB on Linux

xtuhcy

mongodb, linux

1. Check the Linux version:

lsb_release -a

LSB Version: :base-4.0-amd64:base-4.0-noarch:core-4.0-amd64:core-4.0-noarch:graphics-4.0-amd64:graphics-4.0-noarch:printing-4.0-amd64:printing-4.0-noa