Renaming Images Downloaded with Scrapy

Source code download: http://download.csdn.net/download/adam_zs/10167921

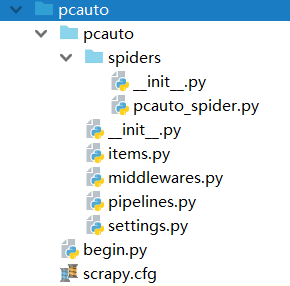

1. Project structure and the downloaded images

2. Code walkthrough

pipelines.py

    from scrapy.http import Request
    from scrapy.pipelines.images import ImagesPipeline


    class DownImagePipeline(ImagesPipeline):
        """Image download pipeline that saves each file under a custom name."""

        def get_media_requests(self, item, info):
            # enumerate() gives every URL its own position, even if a URL repeats
            # (list.index() would always return the first match).
            for index, image_url in enumerate(item['image_urls']):
                yield Request(image_url, meta={'item': item, 'index': index})

        def file_path(self, request, response=None, info=None, *, item=None):
            # Newer Scrapy versions may pass item as a keyword argument;
            # otherwise use the item passed through meta above.
            if item is None:
                item = request.meta['item']
            index = request.meta['index']
            # Keep the file extension from the URL, e.g. "jpg"
            extension = request.url.split('/')[-1].split('.')[-1]
            car_name = item['car_name'][index] + '.' + extension
            return 'full/{0}/{1}'.format(item['country'][0], car_name)
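
The path-building step in `file_path` can be tried outside Scrapy. Below is a minimal sketch, not taken from the original project: `build_image_path` is a hypothetical helper, the sample URL is made up, and the extension is read with `urlparse` and `posixpath.splitext` rather than the `split`-based lookup used in the pipeline, so that a query string in the URL does not end up in the file name.

```python
import posixpath
from urllib.parse import urlparse


def build_image_path(country, car_name, url):
    """Return 'full/<country>/<car_name><ext>' for an image URL (hypothetical helper)."""
    # Look only at the path part of the URL, so "?v=2" and similar are ignored.
    ext = posixpath.splitext(urlparse(url).path)[1]  # includes the dot, e.g. ".jpg"
    return 'full/{0}/{1}{2}'.format(country, car_name, ext)


# Made-up sample values standing in for the scraped country and logo name
print(build_image_path('Germany', 'BMW', 'http://example.com/logos/bmw.jpg'))
# prints: full/Germany/BMW.jpg
```

The returned path is relative: Scrapy stores the file under the directory configured as `IMAGES_STORE`.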

pcauto_spider.py

    # -*- coding: utf-8 -*-
    import scrapy

    from pcauto.items import PcautoImage


    class PcautoSpider(scrapy.Spider):
        name = "pcauto"
        allowed_domains = ["pcauto.com.cn"]
        start_urls = [
            'http://www.pcauto.com.cn/zt/chebiao/guochan/',
            'http://www.pcauto.com.cn/zt/chebiao/riben/',
            'http://www.pcauto.com.cn/zt/chebiao/deguo/',
            'http://www.pcauto.com.cn/zt/chebiao/faguo/',
            'http://www.pcauto.com.cn/zt/chebiao/yidali/',
            'http://www.pcauto.com.cn/zt/chebiao/yingguo/',
            'http://www.pcauto.com.cn/zt/chebiao/meiguo/',
            'http://www.pcauto.com.cn/zt/chebiao/hanguo/',
            'http://www.pcauto.com.cn/zt/chebiao/qita/',
        ]

        def parse(self, response):
            item = PcautoImage()
            srcs = response.xpath('//div[@class="dPic"]/i[@class="iPic"]/a/img/@src').extract()
            car_name = response.xpath('//div[@class="dTxt"]/i[@class="iTit"]/a//text()').extract()
            country = response.xpath('//div[@class="th"]/span/a//text()').extract()
            item['image_urls'] = srcs
            item['car_name'] = car_name
            item['country'] = country
            yield item
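
The pipeline looks up `item['car_name'][index]` for each image, so the spider's `image_urls` and `car_name` lists have to line up one-to-one. The following is a minimal sketch, not part of the original project, of a check that could run before the item is yielded. `pair_logos` is a hypothetical helper name and the sample data is made up.

```python
def pair_logos(image_urls, car_names):
    """Pair each image URL with the name at the same position.

    Raises ValueError when the two lists differ in length, because an
    index-based lookup would then fail or attach the wrong name to a file.
    """
    if len(image_urls) != len(car_names):
        raise ValueError(
            'got {0} image URLs but {1} names'.format(len(image_urls), len(car_names))
        )
    return list(zip(image_urls, car_names))


# Made-up sample data standing in for the XPath results
pairs = pair_logos(
    ['http://example.com/logo/a.jpg', 'http://example.com/logo/b.png'],
    ['Alpha', 'Beta'],
)
print(pairs)
```

A mismatch usually means one of the XPath expressions matched extra or missing nodes on that page, which is easier to diagnose in the spider than inside the pipeline.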

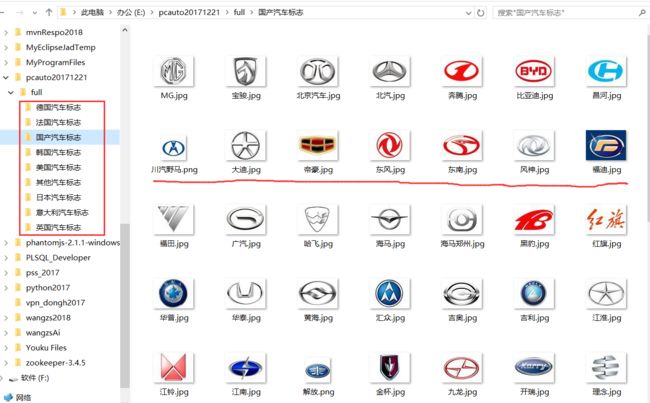

3. Running the project

Run begin.py in PyCharm:

    from scrapy import cmdline

    # Equivalent to running "scrapy crawl pcauto" from the project root
    cmdline.execute("scrapy crawl pcauto".split())
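
For the crawl to save any files, the custom pipeline also has to be enabled in the project's settings.py, which is not shown in this post. Below is a minimal sketch under stated assumptions: the project package is named `pcauto` (as the spider's import suggests), the pipeline class lives in `pcauto/pipelines.py`, and the `images` output directory is an arbitrary choice.

```python
# settings.py (sketch; the module path and directory name are assumptions)

# Enable the custom image pipeline; the number sets its order among pipelines.
ITEM_PIPELINES = {
    'pcauto.pipelines.DownImagePipeline': 1,
}

# Root directory for downloaded images; paths returned by file_path()
# are resolved relative to it.
IMAGES_STORE = 'images'
```

With these values, a logo would be saved as `images/full/<country>/<car name>.<extension>`. Scrapy's image pipeline also requires the Pillow library to be installed.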