PaddlePaddle AI Course Takeaways: Opening the Door to a New World!

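I recently took part in the free training camp "Baidu Deep Learning 7-Day Check-in, Session 6: Python Beginner to Pro" (百度深度学习7日打卡第六期:Python小白逆袭大神). Every day of the course has a live stream taught by a team from the Chinese Academy of Sciences, plus a daily homework assignment, and the camp as a whole gives you hands-on experience with Baidu's open AI platforms: AI Studio, PaddlePaddle, and EasyDL.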

The course also offers plenty of generous prizes, which made me even more motivated to check in every day!

With the course introduced, here are some practical takeaways from my learning:

Day1 - Overview of Artificial Intelligence and Getting-Started Basics

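Artificial intelligence (AI): a new technical science that studies and develops the theories, methods, technologies, and application systems used to simulate, extend, and expand human intelligence.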

Machine learning: one way of achieving artificial intelligence.

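Deep learning: a technique for implementing machine learning. Artificial neural networks are an important family of machine learning algorithms, and the "deep" in deep learning refers to the many layers stacked in such a network.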
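To make "many layers" concrete, here is a minimal sketch in plain Python (not PaddlePaddle): a network is treated as a list of fully connected layers applied one after another, so its depth is simply the length of that list. The names `dense`, `forward`, and `example_layers` are hypothetical, the weights are made-up numbers rather than a trained model, and ReLU is applied after every layer only to keep the sketch short.

```python
def dense(x, layer):
    """One fully connected layer followed by a ReLU activation.

    layer is (weights, biases); weights[k] holds the incoming weights of neuron k.
    """
    weights, biases = layer
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, biases)]


def forward(x, layers):
    """Feed the input through each layer in turn; more layers means a deeper network."""
    for layer in layers:
        x = dense(x, layer)
    return x


# Hypothetical hand-picked weights for a 3-layer network: 2 -> 2 -> 2 -> 1 neurons
example_layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 1.0], [-1.0, 1.0]], [0.0, 1.0]),
    ([[2.0, -1.0]], [0.5]),
]

if __name__ == "__main__":
    print("depth:", len(example_layers))               # depth: 3
    print("output:", forward([3.0, 1.0], example_layers))  # output: [7.5]
```

Adding another `(weights, biases)` pair to the list makes the network one layer deeper without changing `forward` at all, which is the structural idea behind "deep".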

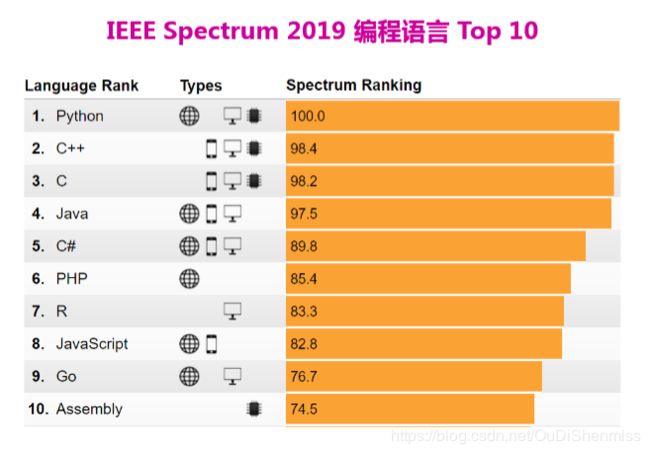

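Doing AI research is hard: it requires a foundation in mathematics, programming, and machine learning. Applying AI, however, is much easier. Python's biggest strength is that it is close to natural language: it is easy to read and understand, and programming in it is simple and direct, which makes it especially well suited to beginners. AI and Python have helped each other grow: AI algorithms have driven Python's development, and Python has made those algorithms easier to implement. With the development of NumPy, SciPy, Matplotlib, and many other libraries, Python has become increasingly well suited to scientific computing.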

Homework

Homework 1: Print the 9×9 multiplication table (pay attention to the formatting)

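```python
def table():
    # Row j lists the products i*j for i = 1..j
    for j in range(1, 10):
        for i in range(1, j + 1):
            # Tab-separate the entries; end="" keeps them on the same line
            print("%d*%d=%d\t" % (i, j, i * j), end="")
        # Finish the row and move to the next line
        print("")


if __name__ == '__main__':
    table()
```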
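Because the exercise stresses formatting, it can help to build the table as a string instead of printing it piece by piece, so the result can be checked or reused. This is a minimal sketch; `table_string` is a hypothetical name, and it uses the same `"%d*%d=%d\t"` entry format as `table()`, so printing its return value gives the same lines.

```python
def table_string(n=9):
    """Return the n x n multiplication table as text instead of printing it.

    Row j holds the entries "i*j=product" for i = 1..j, each followed by a tab,
    matching the entry format used by table().
    """
    lines = []
    for j in range(1, n + 1):
        lines.append("".join("%d*%d=%d\t" % (i, j, i * j) for i in range(1, j + 1)))
    return "\n".join(lines)


if __name__ == "__main__":
    print(table_string())
```

Separating "build the text" from "print the text" also makes it easy to change `n` or write the table to a file.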

```
1*1=1	
1*2=2	2*2=4	
1*3=3	2*3=6	3*3=9	
1*4=4	2*4=8	3*4=12	4*4=16	
1*5=5	2*5=10	3*5=15	4*5=20	5*5=25	
1*6=6	2*6=12	3*6=18	4*6=24	5*6=30	6*6=36	
1*7=7	2*7=14	3*7=21	4*7=28	5*7=35	6*7=42	7*7=49	
1*8=8	2*8=16	3*8=24	4*8=32	5*8=40	6*8=48	7*8=56	8*8=64	
1*9=9	2*9=18	3*9=27	4*9=36	5*9=45	6*9=54	7*9=63	8*9=72	9*9=81	
```

Homework 2: Find files with a specific name

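Walk through the files under the "Day1-homework" directory, find the files whose names contain "2020", save them in the list `result`, and print them one per line as a sequence number followed by the file name.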

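```python
# Import the os module
import os

# Directory to search
path = "Day1-homework"

# Substring to look for in file names
filename = "2020"

# List that stores the matching file paths
result = []


def findfiles():
    # os.walk yields (dirpath, dirnames, filenames) for every directory in the tree
    for dirpath, dirnames, filenames in os.walk(path):
        for file in filenames:
            # Match on the file name itself, not on the directory part of the path
            if filename in file:
                result.append(os.path.join(dirpath, file))
    # Print each match with a 1-based sequence number
    for i, name in enumerate(result):
        print(i + 1, name)


if __name__ == '__main__':
    findfiles()
```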
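A slightly more reusable variant takes the directory and keyword as parameters and returns the matches instead of appending to a global list. This is a sketch, not the course's reference solution: `find_files` is a hypothetical name, it compares the keyword against the file name only (so a directory named, say, `2020dir` does not make every file inside it match), and it sorts the result because `os.walk` does not promise any particular traversal order.

```python
import os


def find_files(root, keyword):
    """Return the sorted paths of files under root whose file name contains keyword."""
    matches = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            # Only the base name is checked; directory names are ignored
            if keyword in name:
                matches.append(os.path.join(dirpath, name))
    # Sorting makes the numbering reproducible across machines and file systems
    return sorted(matches)


if __name__ == "__main__":
    for i, found in enumerate(find_files("Day1-homework", "2020"), start=1):
        print(i, found)
```

Returning a list rather than printing inside the function lets the same search feed other steps, such as copying or renaming the matched files.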

```
1 Day1-homework/18/182020.doc
2 Day1-homework/26/26/new2020.txt
3 Day1-homework/4/22/04:22:2020.txt
```

Day2 - Deep Learning Practice Platform and Advanced Python

The Day 2 lessons covered advanced Python syntax, Linux commands, the Vim editor, and the basics of web scraping.

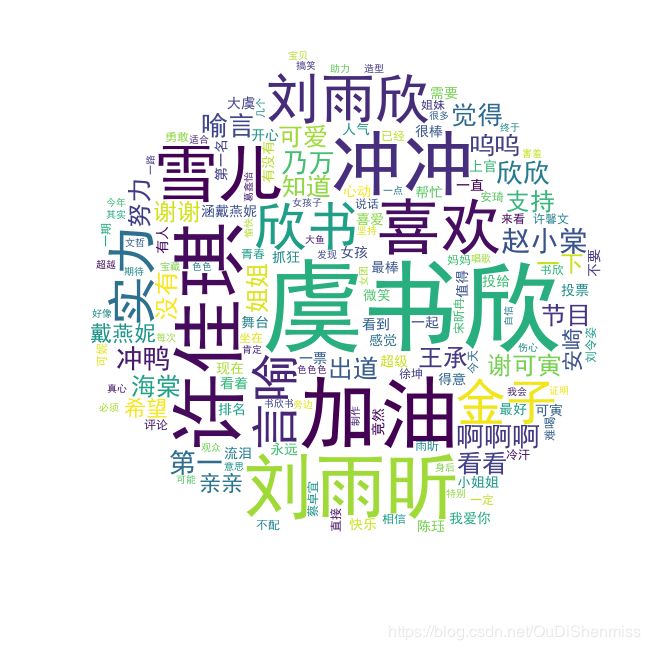

Homework: Scraping contestant information for Youth With You 2 (《青春有你2》)
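The scraper below fetches the Baidu Baike page with `requests` and parses it with BeautifulSoup, and its last visible comment refers to elements with the class `table-view log-set-param`. To show the parsing idea on its own, here is a stdlib-only sketch that swaps BeautifulSoup for Python's built-in `html.parser`: it collects the cell text of every row inside `<table>` elements that carry all the requested classes. `TableRowParser`, `extract_rows`, and the sample HTML are hypothetical and made up for illustration, and the sketch assumes well-formed, non-nested tables with explicit closing tags.

```python
from html.parser import HTMLParser


class TableRowParser(HTMLParser):
    """Collect cell text row by row from <table> elements with the given classes."""

    def __init__(self, css_class):
        super().__init__()
        self.required = set(css_class.split())  # every class that must be present
        self.in_table = False
        self.rows = []
        self.row = None   # cells of the <tr> currently being read
        self.cell = None  # text fragments of the <td>/<th> currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "table":
            classes = set((dict(attrs).get("class") or "").split())
            if self.required <= classes:
                self.in_table = True
        elif self.in_table and tag == "tr":
            self.row = []
        elif self.row is not None and tag in ("td", "th"):
            self.cell = []

    def handle_data(self, data):
        # Text inside nested tags such as <a> still belongs to the current cell
        if self.cell is not None:
            self.cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self.cell is not None:
            self.row.append("".join(self.cell).strip())
            self.cell = None
        elif tag == "tr" and self.row is not None:
            self.rows.append(self.row)
            self.row = None
        elif tag == "table":
            self.in_table = False


def extract_rows(html, css_class):
    """Return a list of rows (lists of cell strings) from matching tables."""
    parser = TableRowParser(css_class)
    parser.feed(html)
    parser.close()
    return parser.rows


# Made-up sample HTML, for illustration only
SAMPLE = """
<table class="other"><tr><td>skip me</td></tr></table>
<table class="table-view log-set-param">
  <tr><th>Name</th><th>Hometown</th></tr>
  <tr><td>Player A</td><td><a href="#">City X</a></td></tr>
</table>
"""

if __name__ == "__main__":
    for row in extract_rows(SAMPLE, "table-view log-set-param"):
        print(row)
```

BeautifulSoup handles messy real-world HTML far more robustly than this sketch, which is why the homework relies on it; the sketch only makes the "find the table by class, then walk its rows" step visible.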

```python
# 1. Crawl the information of all contestants in Youth With You 2 (《青春有你2》) from Baidu Baike and return the page data
import json
import re
import requests
import datetime
from bs4 import BeautifulSoup
import os

# Get today's date formatted as YYYYMMDD (e.g. 20200420); used later for file names
today = datetime.date.today().strftime('%Y%m%d')

def crawl_wiki_data():
    """
    Crawl the contestant information of Youth With You 2 (《青春有你2》) from Baidu Baike and return the HTML
    """
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'
    }
    url='https://baike.baidu.com/item/青春有你第二季'

    try:
        response = requests.get(url,headers=headers)
        print(response.status_code)

        # Passing a document (a plain string also works) to the BeautifulSoup constructor yields a document object
        soup = BeautifulSoup(response.text,'lxml')

        # What is returned are the elements whose class is "table-view log-set-param"
```