For single-node deployment and how FastDFS works, see the previous article:

https://www.cnblogs.com/you-men/p/12863555.html

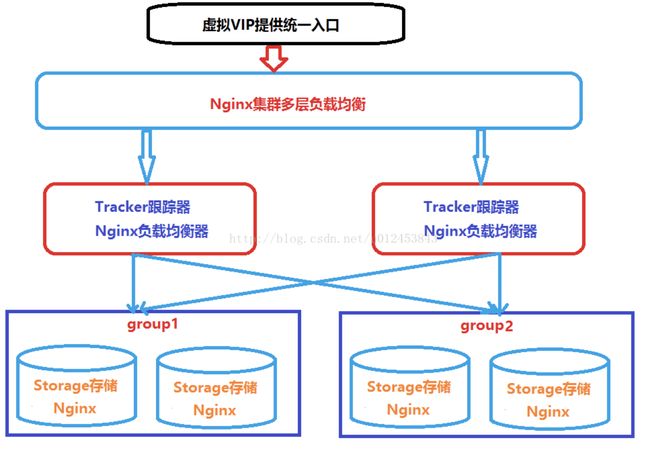

Environment

[Fastdfs-Server]

OS = CentOS 7.3

Software =

fastdfs-nginx-module_v1.16.tar.gz

FastDFS_v5.05.tar.gz

libfastcommon-master.zip

nginx-1.8.0.tar.gz

ngx_cache_purge-2.3.tar.gz

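| Node | IP | Software | Hardware | Network | Notes |
|---|---|---|---|---|---|
| Tracker-233 | 192.168.43.233 | all packages in the list above | 2C4G | NAT, internal | Test environment |
| Tracker2-234 | 192.168.43.234 | all packages in the list above | 2C4G | NAT, internal | Test environment |
| Group1-60 | 192.168.43.60 | all packages in the list above | 2C4G | NAT, internal | Test environment |
| Group1-24 | 192.168.43.24 | all packages in the list above | 2C4G | NAT, internal | Test environment |
| Group2-97 | 192.168.43.97 | all packages in the list above | 2C4G | NAT, internal | Test environment |
| Group2-128 | 192.168.43.128 | all packages in the list above | 2C4G | NAT, internal | Test environment |
| Nginx1-220 | 192.168.43.220 | all packages in the list above | 2C4G | NAT, internal | Test environment |
| Nginx2-53 | 192.168.43.53 | all packages in the list above | 2C4G | NAT, internal | Test environment |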

Install tools and dependencies

On all machines

yum -y install unzip gcc gcc-c++ perl make libstdc++-devel cmake

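Install the tracker

Unpack, build and install libfastcommon

# Install libfastcommon: FastDFS 5.x dropped its dependency on libevent and depends on libfastcommon instead.

wget -O libfastcommon-master.zip https://github.com/happyfish100/libfastcommon/archive/master.zip

unzip libfastcommon-master.zip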

cd libfastcommon-master/

./make.sh

./make.sh install

Download and install FastDFS

mkdir -p /usr/local/fast

tar xvf FastDFS_v5.05.tar.gz -C /usr/local/fast/

cd /usr/local/fast/FastDFS/

./make.sh && ./make.sh install

# Create symlinks

ln -s /usr/lib64/libfastcommon.so /usr/local/lib/libfastcommon.so

ln -s /usr/lib64/libfastcommon.so /usr/lib/libfastcommon.so

ln -s /usr/lib64/libfdfsclient.so /usr/local/lib/libfdfsclient.so

ln -s /usr/lib64/libfdfsclient.so /usr/lib/libfdfsclient.so

Fix the FastDFS service scripts

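The FastDFS service scripts point to /usr/local/bin as the binary directory, but the binaries were actually installed under /usr/bin/. The path therefore has to be fixed in two service scripts, /etc/init.d/fdfs_trackerd and /etc/init.d/fdfs_storaged. Open each one (for example vim /etc/init.d/fdfs_storaged) and enter the global substitution command:

:%s+/usr/local/bin+/usr/bin

then press Enter to apply the replacement.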
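If you prefer not to edit the scripts interactively, the same substitution can be applied with sed. This is a minimal sketch assuming GNU sed as shipped with CentOS 7; the function name fix_fdfs_init_paths is hypothetical.

```shell
#!/usr/bin/env bash
# Non-interactive equivalent of the vim command :%s+/usr/local/bin+/usr/bin.
# Uses /g so that every occurrence on a line is replaced, not only the first.
# fix_fdfs_init_paths is a hypothetical helper, not part of FastDFS.
fix_fdfs_init_paths() {
  local f
  for f in "$@"; do
    if [ ! -f "$f" ]; then
      echo "not found: $f" >&2
      return 1
    fi
    sed -i 's#/usr/local/bin#/usr/bin#g' "$f"
  done
}

# On a FastDFS node:
# fix_fdfs_init_paths /etc/init.d/fdfs_trackerd /etc/init.d/fdfs_storaged
```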

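Edit tracker.conf

Change two settings. First, base_path: change the default path to /fastdfs/tracker. Second, store_lookup: the default is 2 (load balancing, which picks the group with the most free space); set it to 0 (round robin) so that the upload test later alternates between groups. Switch it back to 2 in the end. A value of 1 means uploads always go to one fixed group, and only in that case does the store_group setting (e.g. store_group=group2) take effect; with 0 or 2, store_group is ignored.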

mkdir -p /fastdfs/tracker

cd /etc/fdfs

cp tracker.conf.sample tracker.conf

vim /etc/fdfs/tracker.conf

base_path=/fastdfs/tracker

store_lookup=0

# Then copy this configuration to the other tracker
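The two tracker.conf edits can also be scripted, which makes it easy to produce an identical file for the second tracker. A sketch with a hypothetical set_conf helper; it assumes plain key=value lines as in tracker.conf.sample and values without # or & characters (both are special in the sed expression used). The scp target assumes the commands run on 192.168.43.233.

```shell
# Set "key=value" in a FastDFS-style config file (hypothetical helper).
# Replaces an existing "key=..." line, or appends the pair if the key is absent.
set_conf() {
  local file=$1 key=$2 value=$3
  if grep -q "^${key}[[:space:]]*=" "$file"; then
    sed -i "s#^${key}[[:space:]]*=.*#${key}=${value}#" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# On the first tracker:
# set_conf /etc/fdfs/tracker.conf base_path /fastdfs/tracker
# set_conf /etc/fdfs/tracker.conf store_lookup 0
# scp /etc/fdfs/tracker.conf root@192.168.43.234:/etc/fdfs/tracker.conf
# (also run mkdir -p /fastdfs/tracker on the second tracker)
```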

Start the tracker

# Start the tracker

fdfs_trackerd /etc/fdfs/tracker.conf start

or

service fdfs_trackerd start

# Verify the port

lsof -i:22122

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

fdfs_trac 15222 root 5u IPv4 49284 0t0 TCP *:22122 (LISTEN)

fdfs_trac 15222 root 18u IPv4 49304 0t0 TCP tracker1:22122->192.168.43.60:63056 (ESTABLISHED)

Deploy the storage nodes

Configure storage.conf

mkdir -p /fastdfs/storage

cp /etc/fdfs/storage.conf.sample /etc/fdfs/storage.conf

vim /etc/fdfs/storage.conf

base_path=/fastdfs/storage

store_path0=/fastdfs/storage

store_path_count=1

disabled=false

tracker_server=192.168.43.234:22122

tracker_server=192.168.43.233:22122

group_name=group1

# group1 nodes can use this file as-is; on group2 nodes change group_name to group2

Start the storage

service fdfs_storaged start

# Verify the port

lsof -i:23000

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

fdfs_stor 14105 root 5u IPv4 44719 0t0 TCP *:inovaport1 (LISTEN)

fdfs_stor 14105 root 20u IPv4 45629 0t0 TCP group1:inovaport1->192.168.43.60:44823 (ESTABLISHED)

fdfs_monitor /etc/fdfs/storage.conf |grep ACTIVE

[2020-07-03 20:35:09] DEBUG - base_path=/fastdfs/storage, connect_timeout=30, network_timeout=60, tracker_server_count=2, anti_steal_token=0, anti_steal_secret_key length=0, use_connection_pool=0, g_connection_pool_max_idle_time=3600s, use_storage_id=0, storage server id count: 0

ip_addr = 192.168.43.24 ACTIVE

ip_addr = 192.168.43.60 (group1) ACTIVE

ip_addr = 192.168.43.128 ACTIVE

ip_addr = 192.168.43.97 ACTIVE
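Since this environment has four storage servers, a quick health check is to count the ACTIVE entries. A sketch with a hypothetical function name; it relies only on the "ip_addr = ... ACTIVE" lines shown above, and the expected count of 4 is specific to this setup.

```shell
# Read fdfs_monitor output on stdin and check that at least $1 storage
# servers report ACTIVE. Prints the count; returns non-zero if too few.
# check_active_storages is a hypothetical helper.
check_active_storages() {
  local expected=$1 active
  active=$(grep -c 'ip_addr = .*ACTIVE')
  echo "ACTIVE storage servers: ${active} (expected ${expected})"
  [ "$active" -ge "$expected" ]
}

# fdfs_monitor /etc/fdfs/storage.conf | check_active_storages 4
```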

Verify the service

# To check that everything started, upload an image from /root/

# Edit the client upload configuration

vim /etc/fdfs/client.conf

base_path=/fastdfs/tracker

tracker_server=192.168.43.233:22122

tracker_server=192.168.43.234:22122

fdfs_upload_file /etc/fdfs/client.conf /root/1.png

group2/M00/00/00/wKgrgF7_KL-AKIsEAAztU10n3gA362.png

# The file can be found in the following directory on the storage node

ls /fastdfs/storage/data/00/00/

wKgrgF7_KL-AKIsEAAztU10n3gA362.png

# Alternatively, use fdfs_test

fdfs_test /etc/fdfs/client.conf upload /tmp/test.jpg

example file url: http://192.168.171.140/group1/M00/00/00/wKirjF64N2CAZZODAAGgIaqSzTc877_big.jpg

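# The example file URL at the end means the upload succeeded

# M00 is the storage directory (store_path0): a server with a single disk has only M00; with several disks there are M01, M02, and so on.

# 00/00 are the two directory levels on that disk; each level has 256 directories named 00 to FF, so two levels give 256*256 = 65536 directories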
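Putting these pieces together, a file ID maps directly onto a path on the storage node's disk. A sketch assuming the single store path /fastdfs/storage configured above (store_path0, i.e. M00); the function name is hypothetical.

```shell
# Convert a FastDFS file ID (groupN/M00/XX/YY/name) into the file's path on a
# storage node whose store_path0 is $2 (default /fastdfs/storage).
# Only M00 is handled, matching the single-disk setup in this article.
# fileid_to_path is a hypothetical helper.
fileid_to_path() {
  local id=$1 store_path0=${2:-/fastdfs/storage}
  local rest=${id#*/}          # strip "groupN/"  -> M00/00/00/name
  local mdir=${rest%%/*}       # "M00"
  if [ "$mdir" != "M00" ]; then
    echo "unsupported store path index: $mdir" >&2
    return 1
  fi
  printf '%s/data/%s\n' "$store_path0" "${rest#*/}"
}

# fileid_to_path group2/M00/00/00/wKgrgF7_KL-AKIsEAAztU10n3gA362.png
# -> /fastdfs/storage/data/00/00/wKgrgF7_KL-AKIsEAAztU10n3gA362.png
```

The result matches the ls output shown earlier for the same upload.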

Configure Nginx on the storage nodes

# Install build tools and dependencies

yum install gcc-c++ pcre pcre-devel zlib zlib-devel openssl openssl-devel

# Download the nginx source package

wget http://nginx.org/download/nginx-1.14.0.tar.gz

# Unpack it

tar xvf nginx-1.14.0.tar.gz

# Deploy the FastDFS nginx module

tar xvf fastdfs-nginx-module_v1.16.tar.gz -C /usr/local/fast/

# Edit the module's config file

# (remove "local" from the include paths)

vim /usr/local/fast/fastdfs-nginx-module/src/config

CORE_INCS="$CORE_INCS /usr/include/fastdfs /usr/include/fastcommon/"

# Build nginx from its own source directory, adding the FastDFS module

cd nginx-1.14.0/

./configure --add-module=/usr/local/fast/fastdfs-nginx-module/src/

make && make install

cp /usr/local/fast/fastdfs-nginx-module/src/mod_fastdfs.conf /etc/fdfs/

vim /etc/fdfs/mod_fastdfs.conf

connect_timeout=12

tracker_server=192.168.43.233:22122

tracker_server=192.168.43.234:22122

url_have_group_name = true

# URLs include the group name (e.g. /group1/M00/...)

store_path0=/fastdfs/storage

group_name=group1

# On group2 nodes, change this to group_name=group2

group_count = 2

[group1]

group_name=group1

storage_server_port=23000

store_path_count=1

store_path0=/fastdfs/storage

[group2]

group_name=group2

storage_server_port=23000

store_path_count=1

store_path0=/fastdfs/storage

cp /usr/local/fast/FastDFS/conf/http.conf /etc/fdfs

cp /usr/local/fast/FastDFS/conf/mime.types /etc/fdfs/

ln -s /fastdfs/storage/data/ /fastdfs/storage/data/M00

vim /usr/local/nginx/conf/nginx.conf

# inside the http { } block
server {
    listen 8888;
    server_name localhost;

    location ~/group([0-9])/M00 {
        ngx_fastdfs_module;
    }
}
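The regex in location ~/group([0-9])/M00 is case-sensitive and unanchored, and ([0-9]) matches exactly one digit. The sketch below mimics that test with Bash's =~ operator (POSIX ERE, which behaves the same as nginx's PCRE for this simple pattern) to show which URIs reach ngx_fastdfs_module. One consequence: a hypothetical group10 would not match, so a pattern like [0-9]+ would be needed beyond group9. The function name is hypothetical.

```shell
# Print the group name if URI $1 would match nginx's "location ~/group([0-9])/M00";
# return non-zero otherwise. matches_fdfs_location is a hypothetical helper.
matches_fdfs_location() {
  local re='/group([0-9])/M00'
  if [[ $1 =~ $re ]]; then
    echo "group${BASH_REMATCH[1]}"
  else
    return 1
  fi
}

# matches_fdfs_location /group1/M00/00/00/wKgrGF7_LISASYwWAAztU10n3gA811.png   # prints group1
```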

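# nginx built from source has no systemd unit; start it directly (use -s reload if it is already running)
/usr/local/nginx/sbin/nginx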

# Upload a file again

fdfs_upload_file /etc/fdfs/client.conf /root/1.png

group1/M00/00/00/wKgrGF7_LISASYwWAAztU10n3gA811.png

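# Open it in a browser: http://<storage-node-ip>:8888/group1/M00/00/00/wKgrGF7_LISASYwWAAztU10n3gA811.png

Configure a reverse proxy on the trackers

We install nginx on both trackers so that the service is exposed to clients through a single, unified address.

Install nginx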

tar xvf ngx_cache_purge-2.3.tar.gz -C /usr/local/fast

cd /usr/local/nginx-1.8.0/

./configure --add-module=/usr/local/fast/ngx_cache_purge-2.3

make && make install

Configure nginx

mkdir -p /fastdfs/cache/nginx/proxy_cache

mkdir -p /fastdfs/cache/nginx/proxy_cache/tmp

cat /usr/local/nginx/conf/nginx.conf

worker_processes 1;

events {

worker_connections 1024;

}

http {

include mime.types;

default_type application/octet-stream;

sendfile on;

tcp_nopush on;

keepalive_timeout 65;

# Proxy buffer and cache settings

server_names_hash_bucket_size 128;

client_header_buffer_size 32k;

large_client_header_buffers 4 32k;

client_max_body_size 300m;

proxy_redirect off;

proxy_set_header Host $http_host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_connect_timeout 90;

proxy_send_timeout 90;

proxy_read_timeout 90;

proxy_buffer_size 16k;

proxy_buffers 4 64k;

proxy_busy_buffers_size 128k;

proxy_temp_file_write_size 128k;

proxy_cache_path /fastdfs/cache/nginx/proxy_cache levels=1:2

keys_zone=http-cache:200m max_size=1g inactive=30d;

proxy_temp_path /fastdfs/cache/nginx/proxy_cache/tmp;

# group1 storage servers

upstream fdfs_group1 {

server 192.168.43.60:8888 weight=1 max_fails=2 fail_timeout=30s;

server 192.168.43.24:8888 weight=1 max_fails=2 fail_timeout=30s;

}

upstream fdfs_group2 {

server 192.168.43.97:8888 weight=1 max_fails=2 fail_timeout=30s;

server 192.168.43.128:8888 weight=1 max_fails=2 fail_timeout=30s;

}

server {

listen 80;

server_name localhost;

location /group1/M00 {

proxy_next_upstream http_502 http_504 error timeout invalid_header;

proxy_cache http-cache;

proxy_cache_valid 200 304 12h;

proxy_cache_key $uri$is_args$args;

proxy_pass http://fdfs_group1;

expires 30d;

}

location /group2/M00 {

proxy_next_upstream http_502 http_504 error timeout invalid_header;

proxy_cache http-cache;

proxy_cache_valid 200 304 12h;

proxy_cache_key $uri$is_args$args;

proxy_pass http://fdfs_group2;

expires 30d;

}

location ~/purge(/.*) {

allow 127.0.0.1;

allow 192.168.43.0/24;

deny all;

proxy_cache_purge http-cache $1$is_args$args;

}

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root html;

}

location / {

root html;

index index.html index.htm;

}

}

}
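With levels=1:2, nginx stores each cached response in a file named after the MD5 of the cache key (here $uri$is_args$args), placed under a first-level directory named by the last hex digit and a second-level directory named by the two digits before it, as described in the nginx proxy_cache_path documentation. A small sketch for locating a cached file on disk; the function name is hypothetical.

```shell
# Locate the on-disk cache file nginx uses for a given cache key.
# With "levels=1:2", the file is named md5(key) and nested under
# <last hex digit>/<the two hex digits before it>/.
# nginx_cache_file is a hypothetical helper.
nginx_cache_file() {
  local cache_root=$1 key=$2 hash
  hash=$(printf '%s' "$key" | md5sum | cut -d' ' -f1)
  printf '%s/%s/%s/%s\n' "$cache_root" "${hash: -1}" "${hash: -3:2}" "$hash"
}

# For a request without a query string the key is just $uri:
# nginx_cache_file /fastdfs/cache/nginx/proxy_cache /group1/M00/00/00/wKgrGF7_LISASYwWAAztU10n3gA811.png
```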

Start and verify the service

/usr/local/nginx/sbin/nginx

lsof -i:80

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

nginx 18152 root 6u IPv4 64547 0t0 TCP *:http (LISTEN)

nginx 18153 nobody 6u IPv4 64547 0t0 TCP *:http (LISTEN)
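The location ~/purge(/.*) block captures everything after /purge and uses it, plus any query string, as the key to evict. Because the cache key is $uri$is_args$args, requesting /purge followed by the original file path removes that file's cached copy. A small sketch with a hypothetical purge_url helper; per the allow rules, the request must come from 127.0.0.1 or 192.168.43.0/24, and since each tracker keeps its own cache, both should be purged.

```shell
# Build the ngx_cache_purge URL for a FastDFS file ID on one tracker-side nginx.
# purge_url is a hypothetical helper, not part of FastDFS or nginx.
purge_url() {
  local host=$1 fileid=${2#/}   # accept "group1/..." or "/group1/..."
  printf 'http://%s/purge/%s\n' "$host" "$fileid"
}

# Each tracker has its own proxy cache, so purge on both:
# for t in 192.168.43.233 192.168.43.234; do
#   curl -s "$(purge_url "$t" group1/M00/00/00/wKgrGF7_LISASYwWAAztU10n3gA811.png)"
# done
```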

# Upload files to test load balancing (store_lookup=0, so the groups alternate)

[root@tracker1 ~]# fdfs_upload_file /etc/fdfs/client.conf /root/1.png

group2/M00/00/00/wKgrYV7_LtKAVzpbAAztU10n3gA079.png

[root@tracker1 ~]# fdfs_upload_file /etc/fdfs/client.conf /root/1.png

group1/M00/00/00/wKgrPF7_LtOACpZhAAztU10n3gA410.png

[root@tracker1 ~]# fdfs_upload_file /etc/fdfs/client.conf /root/1.png

group2/M00/00/00/wKgrgF7_LtWAQfW7AAztU10n3gA673.png

[root@tracker1 ~]# fdfs_upload_file /etc/fdfs/client.conf /root/1.png

group1/M00/00/00/wKgrGF7_LtWAaML9AAztU10n3gA712.png
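The round-robin alternation is visible in the group prefixes. To also see which storage server in each group received an upload, the file name can be decoded: in FastDFS the first characters of the name are a base64 encoding (with - and _ in place of + and /) of the source storage server's IPv4 address, when use_storage_id=0 as in the fdfs_monitor output above. A sketch under that assumption; the function name is hypothetical.

```shell
# Print "<group> <source storage IP>" for each FastDFS file ID read from stdin.
# Assumes the first 8 name characters are base64 ('-' and '_' alphabet) of
# 6 bytes whose first 4 are the source storage server's IPv4 address.
# fdfs_source_ip is a hypothetical helper.
fdfs_source_ip() {
  local id group name ip
  while read -r id; do
    [ -n "$id" ] || continue
    group=${id%%/*}
    name=${id##*/}
    ip=$(printf '%s' "${name:0:8}" | tr -- '-_' '+/' | base64 -d \
         | od -An -tu1 -N4 | awk '{printf "%s.%s.%s.%s", $1, $2, $3, $4}')
    printf '%s %s\n' "$group" "$ip"
  done
}

# printf '%s\n' group2/M00/00/00/wKgrYV7_LtKAVzpbAAztU10n3gA079.png | fdfs_source_ip
# -> group2 192.168.43.97
```

Applied to the four uploads above, this shows both servers in each group being used, consistent with the fdfs_group1 and fdfs_group2 upstreams in the tracker nginx configuration.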

Configure the front-end Nginx reverse proxy

Install nginx

rpm -ivh nginx-1.16.0-1.el7.ngx.x86_64.rpm

Configure nginx

Add the fastdfs_tracker load-balancing upstream

nginx.conf

cat /etc/nginx/nginx.conf

user nginx;

worker_processes 1;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

events {

worker_connections 1024;

}

http {

upstream fastdfs_tracker {

server 192.168.43.234:80 weight=1 max_fails=2 fail_timeout=30s;

server 192.168.43.233:80 weight=1 max_fails=2 fail_timeout=30s;

}

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

#tcp_nopush on;

keepalive_timeout 65;

#gzip on;

include /etc/nginx/conf.d/*.conf;

}

Add a location that matches request paths beginning with /fastdfs

default.conf

cat /etc/nginx/conf.d/default.conf

server {

listen 80;

server_name localhost;

location / {

root /usr/share/nginx/html;

index index.html index.htm;

}

location /fastdfs/ {

root html;

index index.html index.htm;

proxy_pass http://fastdfs_tracker/;

proxy_set_header Host $http_host;

proxy_set_header Cookie $http_cookie;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

client_max_body_size 300m;

}

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root /usr/share/nginx/html;

}

}
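Because proxy_pass http://fastdfs_tracker/; carries a URI part (the trailing /), nginx replaces the part of the request URI that matched the location prefix with that /. The sketch below emulates this substitution with a hypothetical upstream_uri helper, showing that a /fastdfs/ prefix maps cleanly onto the trackers' /groupN/M00 locations, while /fastdfs (no trailing slash) produces a double slash in the URI sent upstream.

```shell
# Emulate nginx's URI rewrite for "location PREFIX { proxy_pass http://upstream/; }":
# the matched PREFIX is replaced by "/" and the rest of the URI is appended.
# upstream_uri is a hypothetical helper; it assumes $2 starts with $1.
upstream_uri() {
  local prefix=$1 uri=$2
  printf '/%s\n' "${uri#"$prefix"}"
}

# upstream_uri /fastdfs/ /fastdfs/group1/M00/00/00/a.png   # -> /group1/M00/00/00/a.png
# upstream_uri /fastdfs  /fastdfs/group1/M00/00/00/a.png   # -> //group1/M00/00/00/a.png
```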

Start and verify nginx

systemctl start nginx

fdfs_upload_file /etc/fdfs/client.conf /root/1.png

group2/M00/00/00/wKgrYV7_MB-AQE_wAAztU10n3gA850.png

Configure keepalived for high availability

Install keepalived

yum -y install keepalived

Configure keepalived

Master configuration

cat keepalived.conf

global_defs {

router_id nginx_master

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

interval 5

}

vrrp_instance VI_1 {

state BACKUP

nopreempt

interface ens32

virtual_router_id 50

priority 80

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.43.251

}

track_script {

check_nginx

}

}

Backup configuration

cat keepalived.conf

global_defs {

router_id nginx_slave

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

interval 5

}

vrrp_instance VI_1 {

state BACKUP

nopreempt

interface ens32

virtual_router_id 50

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.43.251

}

track_script {

check_nginx

}

}

check_nginx.sh

cat /etc/keepalived/check_nginx.sh

#!/bin/bash

curl -fsI http://localhost &>/dev/null

if [ $? -ne 0 ];then

systemctl stop keepalived

fi

chmod +x /etc/keepalived/check_nginx.sh

Start and verify high availability

# On both nginx nodes

systemctl start keepalived

[root@nginx2 ~]# ip a

1: lo: mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: ens32: mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:4b:e9:6e brd ff:ff:ff:ff:ff:ff

inet 192.168.43.53/24 brd 192.168.43.255 scope global dynamic ens32

valid_lft 2647sec preferred_lft 2647sec

inet 192.168.43.251/32 scope global ens32

valid_lft forever preferred_lft forever

systemctl stop nginx

# On the other machine, the VIP has moved over automatically

[root@nginx1 ~]# ip a

1: lo: mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: ens32: mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:17:4a:03 brd ff:ff:ff:ff:ff:ff

inet 192.168.43.220/24 brd 192.168.43.255 scope global dynamic ens32

valid_lft 2882sec preferred_lft 2882sec

inet 192.168.43.251/32 scope global ens32

valid_lft forever preferred_lft forever

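Access the file in a browser through the VIP, e.g. http://192.168.43.251/fastdfs/group2/M00/00/00/wKgrYV7_MB-AQE_wAAztU10n3gA850.png

As shown, even with one nginx node down, the service remains available.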