【从零开始学Mask RCNN】四,RPN锚框生成和Proposal生成

1. Mask RCNN Anchor框 生成

Mask RCNN的锚框生成和SSD的锚框生成策略类似(SSD的锚框生成策略见:【资源分享】从零开始学习SSD教程) ,都遵循以下规则:

- Anchor的中心点的个数等于特征图像素个数

- Anchor的生成是围绕中心点的

- Anchor框的坐标最终需要归一化到0-1之间,即相对输入图像的大小

我们知道Faster RCNN只是在一个特征图上铺设Anchor,而Mask RCNN引入了FPN之后使用了多层特征,这样和SSD类似都是在多个特征图上铺设Anchor,不过SSD的Anchor铺设策略相对较为复杂,每一个尺度的特征都有不同的Anchor策略设计,如下图所示:

Mask RCNN相对于SSD的Anchor生成策略有以下不同之处:

- SSD的不同特征图的Anchor长宽比设置不同,而Mask RCNN的不同特征图的Anchor比例则完全相同

- Mask RCNN每一层设置固定个数的Anchor数(3个)

- SSD中心点为每个像素偏移0.5步长;Mask RCNN中心点直接选为像素位置

另外,Anchor的基本生成方式和SSD一样,即Anchor框的长度等于 h h h乘 a n c h o r − r a t i o s \sqrt{anchor-ratios} anchor−ratios,Anchor框的宽度等于 w w w除以 a n c h o r − r a t i o s \sqrt{anchor-ratios} anchor−ratios,其中 h , w h,w h,w的初始值是给定的参考尺寸。具体设置如下:

anchor_sizes=[(21., 45.),

(45., 99.),

(99., 153.),

(153., 207.),

(207., 261.),

(261., 315.)]

anchor_ratios=[[2, .5],

[2, .5, 3, 1./3],

[2, .5, 3, 1./3],

[2, .5, 3, 1./3],

[2, .5],

[2, .5]]

在mrcnn/config,py中相关的设置如下:

self.config.BACKBONE_STRIDES = [4, 8, 16, 32, 64] # 特征层的下采样倍数,中心点计算使用

self.config.RPN_ANCHOR_RATIOS = [0.5, 1, 2] # 特征层锚框生成参数

self.config.RPN_ANCHOR_SCALES = [32, 64, 128, 256, 512] # 特征层锚框感受野

Anchor框的生成函数为model.py中的get_anchors函数,参数为image_shape,只需要保证里面有[h,w]即可,也可以是[h,w,c],代码如下:

# 锚框生成函数

def get_anchors(self, image_shape):

"""Returns anchor pyramid for the given image size."""

backbone_shapes = compute_backbone_shapes(self.config, image_shape)

# 如果图片的尺寸相同就用缓存的Anchor信息回复

# Cache anchors and reuse if image shape is the same

if not hasattr(self, "_anchor_cache"):

self._anchor_cache = {}

# 如果对应尺寸图像的Anchors区域没有被缓存,那么就产生Anchors

if not tuple(image_shape) in self._anchor_cache:

# 产生Anchor区域

a = utils.generate_pyramid_anchors(

self.config.RPN_ANCHOR_SCALES,

self.config.RPN_ANCHOR_RATIOS,

backbone_shapes,

self.config.BACKBONE_STRIDES,

self.config.RPN_ANCHOR_STRIDE)

# Keep a copy of the latest anchors in pixel coordinates because

# it's used in inspect_model notebooks.

# TODO: Remove this after the notebook are refactored to not use it

self.anchors = a

# Normalize coordinates

self._anchor_cache[tuple(image_shape)] = utils.norm_boxes(a, image_shape[:2])

return self._anchor_cache[tuple(image_shape)]

这个函数首先调用compute_backbone_shapes计算各个特征层的shape:

def compute_backbone_shapes(config, image_shape):

""" 计算骨干网络的每个阶段特征图的长宽

Returns:

[N, (height, width)]. N代表阶段数

"""

# 可调用返回 True,否则返回 False。

if callable(config.BACKBONE):

return config.COMPUTE_BACKBONE_SHAPE(image_shape)

# 当前只支持ResNet

assert config.BACKBONE in ["resnet50", "resnet101"]

return np.array(

[[int(math.ceil(image_shape[0] / stride)),

int(math.ceil(image_shape[1] / stride))]

for stride in config.BACKBONE_STRIDES])

然后再调用utils.generate_pyramid_anchors函数生成所有的Anchor框,这个函数在mrcnn/utils.py中,代码如下:

def generate_pyramid_anchors(scales, ratios, feature_shapes, feature_strides,

anchor_stride):

"""在特征金字塔的不同层级产生anchors,每个尺度都

和金字塔的层级有关,但是长宽比例都是相同的

返回:

anchors: [N, (y1, x1, y2, x2)]. 所有产生的Anchors放在一个数组中.

按照scales给定的顺序进行排序. 所以scale[0]是第一个,然后scale[1]第二个,等等.

"""

# Anchors

# [anchor_count, (y1, x1, y2, x2)]

anchors = []

for i in range(len(scales)):

anchors.append(generate_anchors(scales[i], ratios, feature_shapes[i],

feature_strides[i], anchor_stride))

return np.concatenate(anchors, axis=0)

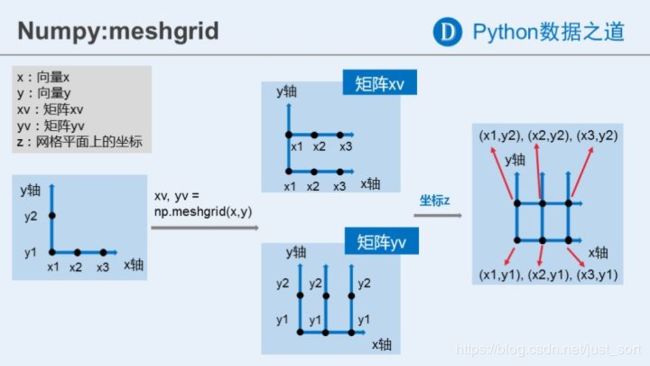

这里又调用了utils.generate_anchors函数来生成每一个特征层的Anchors,这个函数大量应用了np.meshgrid函数,这个函数是用来生成网格坐标,一个很好的说明图如下:

虽然xy双坐标比较常用,但实际上输入可以是任意多的数组,输出数组数目等于输出数组数目,且彼此间shape一致。

如果输入数组不是一维的,会拉伸为1维进行计算。

输出维度是:[len(x2), len(x1), len(x3)……]

例子如下:

utils.generate_anchors函数解释如下:

############################################################

# Anchors

############################################################

def generate_anchors(scales, ratios, shape, feature_stride, anchor_stride):

"""

scales: 1D array of anchor sizes in pixels. Example: [32, 64, 128]

ratios: 1D array of anchor ratios of width/height. Example: [0.5, 1, 2]

shape: [height, width] spatial shape of the feature map over which

to generate anchors.

feature_stride: Stride of the feature map relative to the image in pixels.

anchor_stride: Stride of anchors on the feature map. For example, if the

value is 2 then generate anchors for every other feature map pixel.

"""

# Get all combinations of scales and ratios

scales, ratios = np.meshgrid(np.array(scales), np.array(ratios))

scales = scales.flatten()

ratios = ratios.flatten()

# Enumerate heights and widths from scales and ratios

heights = scales / np.sqrt(ratios)

widths = scales * np.sqrt(ratios)

# Enumerate shifts in feature space

shifts_y = np.arange(0, shape[0], anchor_stride) * feature_stride

shifts_x = np.arange(0, shape[1], anchor_stride) * feature_stride

shifts_x, shifts_y = np.meshgrid(shifts_x, shifts_y)

# Enumerate combinations of shifts, widths, and heights

box_widths, box_centers_x = np.meshgrid(widths, shifts_x)

box_heights, box_centers_y = np.meshgrid(heights, shifts_y)

# Reshape to get a list of (y, x) and a list of (h, w)

box_centers = np.stack(

[box_centers_y, box_centers_x], axis=2).reshape([-1, 2])

box_sizes = np.stack([box_heights, box_widths], axis=2).reshape([-1, 2])

# Convert to corner coordinates (y1, x1, y2, x2)

# 框体信息是相对于原图的, [N, (y1, x1, y2, x2)]

boxes = np.concatenate([box_centers - 0.5 * box_sizes,

box_centers + 0.5 * box_sizes], axis=1)

return boxes

最后再回到model.py中的get_anchors函数,再调用utils.norm_boxes进行Anchor坐标的归一化,即utils.norm_boxes函数,代码如下:

# 坐标归一化

def norm_boxes(boxes, shape):

"""Converts boxes from pixel coordinates to normalized coordinates.

boxes: [N, (y1, x1, y2, x2)] in pixel coordinates

shape: [..., (height, width)] in pixels

Note: In pixel coordinates (y2, x2) is outside the box. But in normalized

coordinates it's inside the box.

Returns:

[N, (y1, x1, y2, x2)] in normalized coordinates

"""

h, w = shape

scale = np.array([h - 1, w - 1, h - 1, w - 1])

shift = np.array([0, 0, 1, 1])

return np.divide((boxes - shift), scale).astype(np.float32)

2. RPN锚框生成

在第三个推文中我们获得了rpn的每个特征图信息即:

rpn_feature_maps = [P2, P3, P4, P5, P6]

然后在每个特征图上产生Anchor框,不过这里是分训练还是测试,训练的话使用刚刚介绍的方法来产生Anchor,如果是测试的话则使用定义好的Anchor,代码如下:

# Anchors

if mode == "training":

anchors = self.get_anchors(config.IMAGE_SHAPE)

# Duplicate across the batch dimension because Keras requires it

# TODO: can this be optimized to avoid duplicating the anchors?

anchors = np.broadcast_to(anchors, (config.BATCH_SIZE,) + anchors.shape)

# A hack to get around Keras's bad support for constants

anchors = KL.Lambda(lambda x: tf.Variable(anchors), name="anchors")(input_image)

else:

anchors = input_anchors

然后基于RPN的各个特征图和定义好的Anchor框信息我们生成每个Anchor框的前景/背景得分信息及每个Anchor框的坐标修正信息。 接前文主函数,我们初始化rpn model class的对象,并应用于各层特征:

# RPN Model, 返回的是keras的Module对象, 注意keras中的Module对象是可call的

rpn = build_rpn_model(config.RPN_ANCHOR_STRIDE,

len(config.RPN_ANCHOR_RATIOS), config.TOP_DOWN_PYRAMID_SIZE)

# Loop through pyramid layers

layer_outputs = [] # list of lists

# 保存各pyramid特征经过RPN之后的结果

for p in rpn_feature_maps:

layer_outputs.append(rpn([p]))

其中build_rpn_model的代码实现如下:

def build_rpn_model(anchor_stride, anchors_per_location, depth):

"""建立RPN网络的Keras模型。它包装了RPN图,可以共享权重。

anchors_per_location: 特征图中每个像素的Anchor个数。

anchor_stride: 控制Anchor框的密度,通常为1或者2。

depth: 骨干网络特征图的深度。

返回一个Keras的Model对象。当被调用时,model的输出是 :

rpn_class_logits: [batch, H * W * anchors_per_location, 2] Anchor classifier logits (before softmax)

rpn_probs: [batch, H * W * anchors_per_location, 2] Anchor classifier probabilities.

rpn_bbox: [batch, H * W * anchors_per_location, (dy, dx, log(dh), log(dw))] Deltas to be

applied to anchors.

"""

input_feature_map = KL.Input(shape=[None, None, depth],

name="input_rpn_feature_map")

outputs = rpn_graph(input_feature_map, anchors_per_location, anchor_stride)

return KM.Model([input_feature_map], outputs, name="rpn_model")

它接收一个输入特征图,然后调用rpn_graph这个函数完成RPN模块的实现,这个函数的代码解析如下:

############################################################

# Region Proposal Network (RPN)

############################################################

def rpn_graph(feature_map, anchors_per_location, anchor_stride):

"""建立RPN网络的计算图。

feature_map: 骨干特征 [batch, height, width, depth]

anchors_per_location: 特征图中每个像素的Anchor个数

anchor_stride: 控制Anchors的密度,比如设置成1或者2

Returns:

rpn_class_logits: [batch, H * W * anchors_per_location, 2] Anchor classifier logits (before softmax)

rpn_probs: [batch, H * W * anchors_per_location, 2] Anchor classifier probabilities.

rpn_bbox: [batch, H * W * anchors_per_location, (dy, dx, log(dh), log(dw))] Deltas to be

applied to anchors.

"""

# TODO: check if stride of 2 causes alignment issues if the feature map

# is not even.

# Shared convolutional base of the RPN

shared = KL.Conv2D(512, (3, 3), padding='same', activation='relu',

strides=anchor_stride,

name='rpn_conv_shared')(feature_map)

# Anchor的分数

# Anchor Score. [batch, height, width, anchors per location * 2].

x = KL.Conv2D(2 * anchors_per_location, (1, 1), padding='valid',

activation='linear', name='rpn_class_raw')(shared)

# Reshape to [batch, anchors, 2]

rpn_class_logits = KL.Lambda(

lambda t: tf.reshape(t, [tf.shape(t)[0], -1, 2]))(x)

# Softmax on last dimension of BG/FG.

rpn_probs = KL.Activation(

"softmax", name="rpn_class_xxx")(rpn_class_logits)

# Bounding box refinement. [batch, H, W, anchors per location * depth]

# where depth is [x, y, log(w), log(h)]

x = KL.Conv2D(anchors_per_location * 4, (1, 1), padding="valid",

activation='linear', name='rpn_bbox_pred')(shared)

# Reshape to [batch, anchors, 4]

rpn_bbox = KL.Lambda(lambda t: tf.reshape(t, [tf.shape(t)[0], -1, 4]))(x)

return [rpn_class_logits, rpn_probs, rpn_bbox]

紧接着前文的主函数,我们需要将获取的list形式的各层Anchor框进行拼接和重组,代码如下:

# Concatenate layer outputs

# Convert from list of lists of level outputs to list of lists

# of outputs across levels.

# e.g. [[a1, b1, c1], [a2, b2, c2]] => [[a1, a2], [b1, b2], [c1, c2]]

output_names = ["rpn_class_logits", "rpn_class", "rpn_bbox"]

outputs = list(zip(*layer_outputs))

outputs = [KL.Concatenate(axis=1, name=n)(list(o))

for o, n in zip(outputs, output_names)]

# [batch, num_anchors, 2/4]

# 其中num_anchors指的是全部特征层上的anchors总数

rpn_class_logits, rpn_class, rpn_bbox = outputs

这段代码的目的就是将原始的 [ ( l o g i t s 2 , c l a s s 2 , b b o x 2 ) , ( l o g i t s 3 , c l a s s 3 , b b o x 3 ) , … … 6 ] [(logits2, class2, bbox2), (logits3, class3, bbox3), ……6] [(logits2,class2,bbox2),(logits3,class3,bbox3),……6],这种排布的返回值转换为 [ ( l o g i t s 2 , l o g i t s 3 … … 6 ) , ( c l a s s 2 , c l a s s 3 … … 6 ) , ( b b o x 2 , b b o x 3 … … 6 ) ] [(logits2,logits3……6), (class2,class3……6), (bbox2,bbox3……6)] [(logits2,logits3……6),(class2,class3……6),(bbox2,bbox3……6)]这种形式(通过zip函数转换),然后将每个小list中的tensor按照第一维度(即anchors维度)拼接,得到三个tensor,每个tensor表明batch中图片对应 5 5 5个特征层的全部anchors的分类回归信息,即:[batch, anchors, 2分类结果 或者 (dy, dx, log(dh), log(dw))]。

3. Proposal生成

3.1 初始化Proposal Layer

现在我们已经获取了所有的Anchor框的信息,这一步的目的是从中挑选指定个数的可能包含目标的Anchor框作为候选区域,即在上一步的二分类中的前景类别得分更高的框,同时由于Anchor框是比较密集并且重叠严重,所以这里还会执行一个NMS来去重。这部分的代码如下,其中,proposal_count是一个整数,用于指定生成proposal数目,不足时会生成坐标为[0,0,0,0]的空值进行补全:

# 产生候选框

# Proposals are [batch, N, (y1, x1, y2, x2)] in normalized coordinates

# and zero padded.

# POST_NMS_ROIS_INFERENCE = 1000

# POST_NMS_ROIS_TRAINING = 2000

proposal_count = config.POST_NMS_ROIS_TRAINING if mode == "training"\

else config.POST_NMS_ROIS_INFERENCE

# [IMAGES_PER_GPU, num_rois, (y1, x1, y2, x2)]

# IMAGES_PER_GPU取代了batch,之后说的batch都是IMAGES_PER_GPU

rpn_rois = ProposalLayer(

proposal_count=proposal_count,

nms_threshold=config.RPN_NMS_THRESHOLD, #0.7

name="ROI",

config=config)([rpn_class, rpn_bbox, anchors])

接下来我们跟进ProposalLayer,在初始部分获取[rpn_class, rpn_bbox, anchors]三个张量作为参数,然后获取前景分数最大的PRE_NMS_LIMITPRE_NMS_LIMIT这么多个候选框,这部分的代码如下:

# 在初始部分获取[rpn_class, rpn_bbox, anchors]三个张量作为参数,

class ProposalLayer(KE.Layer):

"""接收Anchor框的分数并选择一个子集传入到第二个阶段.

根据Anchor框分数和非最大抑制进行过滤,以消除一些重叠框.

并且将bbox调整值应用于Anchor上。

Inputs:

rpn_probs: [batch, num_anchors, (bg prob, fg prob)]

rpn_bbox: [batch, num_anchors, (dy, dx, log(dh), log(dw))]

anchors: [batch, num_anchors, (y1, x1, y2, x2)] anchors in normalized coordinates

Returns:

Proposals in normalized coordinates [batch, rois, (y1, x1, y2, x2)]

"""

def __init__(self, proposal_count, nms_threshold, config=None, **kwargs):

super(ProposalLayer, self).__init__(**kwargs)

self.config = config

self.proposal_count = proposal_count

self.nms_threshold = nms_threshold

def call(self, inputs):

# Box Scores. Use the foreground class confidence. [Batch, num_rois, 1]

# 获得Anchor框的前景分数

scores = inputs[0][:, :, 1]

# Box deltas [batch, num_rois, 4]

# Box deltas. 记录坐标修正信息:(dy, dx, log(dh), log(dw)). [batch, num_rois, 4]

deltas = inputs[1]

deltas = deltas * np.reshape(self.config.RPN_BBOX_STD_DEV, [1, 1, 4]) # [ 0.1 0.1 0.2 0.2]

# Anchors 记录坐标信息:(y1, x1, y2, x2). [batch, num_rois, 4]

anchors = inputs[2]

# Improve performance by trimming to top anchors by score

# and doing the rest on the smaller subset.

# 然后我们获取前景得分最大的n个候选框,

pre_nms_limit = tf.minimum(self.config.PRE_NMS_LIMIT, tf.shape(anchors)[1])

# 输入矩阵时输出每一行的top k. [batch, top_k]

ix = tf.nn.top_k(scores, pre_nms_limit, sorted=True,

name="top_anchors").indices

3.2 获取Top K Anchor框

注意上面的代码结尾只是获取了要保留的Anchor框的索引,接下来还需要对三个输入分别进行提取,接上面代码:

# 提取top k锚框,同时对三个输入进行了提取

# batch_slice函数:

# # 将batch特征拆分为单张

# # 然后提取指定的张数

# # 使用单张特征处理函数处理,并合并(此时返回的第一维不是输入时的batch,而是上步指定的张数)

scores = utils.batch_slice([scores, ix], lambda x, y: tf.gather(x, y),

self.config.IMAGES_PER_GPU)

deltas = utils.batch_slice([deltas, ix], lambda x, y: tf.gather(x, y),

self.config.IMAGES_PER_GPU)

pre_nms_anchors = utils.batch_slice([anchors, ix], lambda a, x: tf.gather(a, x),

self.config.IMAGES_PER_GPU,

names=["pre_nms_anchors"])

这里主要使用了一个utils.batch_slice函数,这个函数把只支持batch=1的函数进行了扩展,由于tf.gather函数只能进行一维数组的切片,而scores的维度现在是[batch,num_rois],相对的ix也是二维即[batch,top_k],因此这里要将两者应用tf.gather函数切片后将结果重新拼接,代码如下(mrnn/utils.py):

# ## Batch Slicing

# Some custom layers support a batch size of 1 only, and require a lot of work

# to support batches greater than 1. This function slices an input tensor

# across the batch dimension and feeds batches of size 1. Effectively,

# an easy way to support batches > 1 quickly with little code modification.

# In the long run, it's more efficient to modify the code to support large

# batches and getting rid of this function. Consider this a temporary solution

def batch_slice(inputs, graph_fn, batch_size, names=None):

"""Splits inputs into slices and feeds each slice to a copy of the given

computation graph and then combines the results. It allows you to run a

graph on a batch of inputs even if the graph is written to support one

instance only.

inputs: list of tensors. All must have the same first dimension length

graph_fn: A function that returns a TF tensor that's part of a graph.

batch_size: number of slices to divide the data into.

names: If provided, assigns names to the resulting tensors.

"""

if not isinstance(inputs, list):

inputs = [inputs]

outputs = []

for i in range(batch_size):

inputs_slice = [x[i] for x in inputs]

output_slice = graph_fn(*inputs_slice)

if not isinstance(output_slice, (tuple, list)):

output_slice = [output_slice]

outputs.append(output_slice)

# 使用tf.while_loop实现循环体代码如下:

# import tensorflow as tf

# i = 0

# outputs = []

#

# def cond(index):

# return index < batch_size # 返回bool值

#

# def body(index):

# index += 1

# inputs_slice = [x[i] for x in inputs]

# output_slice = graph_fn(*inputs_slice)

# if not isinstance(output_slice, (tuple, list)):

# output_slice = [output_slice]

# outputs.append(output_slice)

# return index # 返回cond需要的判断参数进行下一次判断

#

# tf.while_loop(cond, body, [i])

# Change outputs from a list of slices where each is

# a list of outputs to a list of outputs and each has

# a list of slices

# 下面示意中假设每次graph_fn返回两个tensor

# [[tensor11, tensor12], [tensor21, tensor22], ……]

# ——> [(tensor11, tensor21, ……), (tensor12, tensor22, ……)] zip返回的是多个tuple

outputs = list(zip(*outputs))

if names is None:

names = [None] * len(outputs)

# 一般来讲就是batch维度合并回去(上面的for循环实际是将batch拆分了)

result = [tf.stack(o, axis=0, name=n)

for o, n in zip(outputs, names)]

if len(result) == 1:

result = result[0]

return result

3.3 Anchor框坐标修正

我们在第二节中利用RPN网络获取了全部Anchor框的坐标回归结果,即rpn_bbox: [batch, anchors, (dy, dx, log(dh), log(dw))],然后我们在上一个小节(3.2)获得了top k Anchor框的坐标信息以及top k的Anchor框回归偏移量,现在将其合并,即利用RPN的回归结果修正top k Anchor框的坐标。代码如下:

# Anchor框修正

# Apply deltas to anchors to get refined anchors.

# 现在的N代表Top K的K

# [batch, N, (y1, x1, y2, x2)]

boxes = utils.batch_slice([pre_nms_anchors, deltas],

lambda x, y: apply_box_deltas_graph(x, y),

self.config.IMAGES_PER_GPU,

names=["refined_anchors"])

可以看到这里主要调用了apply_box_deltas_graph这个函数结合上面介绍的batch_slice函数完成了RPN回归结果修正,我们来看一下apply_box_deltas_graph这个函数的代码实现,如下:

############################################################

# Proposal Layer

############################################################

def apply_box_deltas_graph(boxes, deltas):

"""Applies the given deltas to the given boxes.

boxes: [N, (y1, x1, y2, x2)] boxes to update

deltas: [N, (dy, dx, log(dh), log(dw))] refinements to apply

"""

# Convert to y, x, h, w

height = boxes[:, 2] - boxes[:, 0]

width = boxes[:, 3] - boxes[:, 1]

center_y = boxes[:, 0] + 0.5 * height

center_x = boxes[:, 1] + 0.5 * width

# Apply deltas

center_y += deltas[:, 0] * height

center_x += deltas[:, 1] * width

height *= tf.exp(deltas[:, 2])

width *= tf.exp(deltas[:, 3])

# Convert back to y1, x1, y2, x2

y1 = center_y - 0.5 * height

x1 = center_x - 0.5 * width

y2 = y1 + height

x2 = x1 + width

result = tf.stack([y1, x1, y2, x2], axis=1, name="apply_box_deltas_out")

return result

从这个代码可以推出,RPN回归出的4个坐标值的意义土如下:

d y = ( y n − y o ) / h o dy=(y_n-y_o)/h_o dy=(yn−yo)/ho

d x = ( x n − x o ) / w o dx=(x_n-x_o)/w_o dx=(xn−xo)/wo

d h = h n / h o dh=h_n/h_o dh=hn/ho

d w = w n / w o dw=w_n/w_o dw=wn/wo

此外,这里的Anchor框坐标实际上是在一个归一化的图上的(即所有的Anchor框位于一个长宽均为1的虚拟画布上),上一步执行了修正之后不再能够保证这一点,因此这里还需要切除Anchor框越界的部分,即只保留Anchor框和 [ 0 , 0 , 1 , 1 ] [0,0,1,1] [0,0,1,1]画布的交集。代码如下:

# Clip to image boundaries. Since we're in normalized coordinates,

# clip to 0..1 range. [batch, N, (y1, x1, y2, x2)]

window = np.array([0, 0, 1, 1], dtype=np.float32)

boxes = utils.batch_slice(boxes,

lambda x: clip_boxes_graph(x, window),

self.config.IMAGES_PER_GPU,

names=["refined_anchors_clipped"])

其中clip_boxes_graph这个函数实现了保留交集的功能,代码如下:

# 保留2个框的交集部分

def clip_boxes_graph(boxes, window):

"""

boxes: [N, (y1, x1, y2, x2)]

window: [4] in the form y1, x1, y2, x2

"""

# Split

wy1, wx1, wy2, wx2 = tf.split(window, 4)

y1, x1, y2, x2 = tf.split(boxes, 4, axis=1)

# Clip

y1 = tf.maximum(tf.minimum(y1, wy2), wy1)

x1 = tf.maximum(tf.minimum(x1, wx2), wx1)

y2 = tf.maximum(tf.minimum(y2, wy2), wy1)

x2 = tf.maximum(tf.minimum(x2, wx2), wx1)

clipped = tf.concat([y1, x1, y2, x2], axis=1, name="clipped_boxes")

clipped.set_shape((clipped.shape[0], 4))

return clipped

3.4 非极大值抑制(NMS)

最后再进行非极大值抑制,以保证不会出现重叠度非常大的候选框,代码如下:

# Non-max suppression

def nms(boxes, scores):

# 非极大值抑制子函数

# :param boxes: [top_k, (y1, x1, y2, x2)]

# :param scores: [top_k]

# :return:

# self.proposal_count为最大返回数目

indices = tf.image.non_max_suppression(

boxes, scores, self.proposal_count,

self.nms_threshold, name="rpn_non_max_suppression")

proposals = tf.gather(boxes, indices)

# Pad if needed, 一旦返回数目不足, 填充(0,0,0,0)直到数目达标

padding = tf.maximum(self.proposal_count - tf.shape(proposals)[0], 0)

# 在后面添加全0行

proposals = tf.pad(proposals, [(0, padding), (0, 0)])

return proposals

proposals = utils.batch_slice([boxes, scores], nms,

self.config.IMAGES_PER_GPU)

# [IMAGES_PER_GPU, proposal_count, (y1, x1, y2, x2)]

return proposals

4. 小节

到这里,我们就将Mask RCNN的Anchor框生成和Proposal生成讲完了,下一节我将讲解获取RPN区域后Mask RCNN是如何处理这些候选框的(ROI Align)。

5. 参考

- https://www.cnblogs.com/hellcat/p/9811301.html

欢迎关注GiantPandaCV, 在这里你将看到独家的深度学习分享,坚持原创,每天分享我们学习到的新鲜知识。( • ̀ω•́ )✧

有对文章相关的问题,或者想要加入交流群,欢迎添加BBuf微信:

![]()

为了方便读者获取资料以及我们公众号的作者发布一些Github工程的更新,我们成立了一个QQ群,二维码如下,感兴趣可以加入。

![]()