Kinect Real-Time Matting Program

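A depth image can identify which pixels belong to a person, and the BodyIndex frame also derives its per-pixel body labels from the depth data. We can therefore combine the color frame and the BodyIndex frame to build a matting program. The color frame has a resolution of 1920×1080, while the BodyIndex frame is 512×424. For each color pixel we look up the corresponding BodyIndex value: if the pixel belongs to a person it is displayed, otherwise it is blacked out, so the displayed color image contains only the person.

To map color-frame pixels onto depth-frame pixels I use the CoordinateMapper class provided by the Kinect v2 SDK. The mapping quality is mediocre, though: the edges come out rough. If you want clean edges, you need to write your own functions to process the depth data. The source code is included in Microsoft's Kinect v2 SDK, which you can download.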
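The masking rule described above can be sketched without the Kinect SDK. This is a minimal illustration, not the SDK's code: `MattingSketch`, `MappedPoint`, and `ApplyBodyMask` are hypothetical names, and `MappedPoint` only stands in for the SDK's `DepthSpacePoint`. It assumes, as the full program does, that a BodyIndex value of 0xff means "no person" and that color pixels with no depth match carry negative infinity.

```csharp
using System;

// Hypothetical stand-in for the SDK's DepthSpacePoint (assumption, for illustration only).
public struct MappedPoint
{
    public float X;
    public float Y;
}

public static class MattingSketch
{
    // BodyIndex value meaning "this depth pixel belongs to no tracked person".
    public const byte NoBody = 0xff;

    // Sets every color pixel to 0 unless it maps to a depth pixel that belongs to a person.
    // colorPixels:  one 32-bit BGRA value per color pixel.
    // colorToDepth: for each color pixel, its coordinates in depth space.
    // bodyIndex:    one byte per depth pixel, depthWidth * depthHeight entries.
    public static void ApplyBodyMask(uint[] colorPixels, MappedPoint[] colorToDepth,
                                     byte[] bodyIndex, int depthWidth, int depthHeight)
    {
        for (int i = 0; i < colorPixels.Length; ++i)
        {
            float x = colorToDepth[i].X;
            float y = colorToDepth[i].Y;
            bool isBody = false;

            if (!float.IsNegativeInfinity(x) && !float.IsNegativeInfinity(y))
            {
                // Add 0.5 and truncate, which rounds non-negative coordinates to the nearest pixel.
                int depthX = (int)(x + 0.5f);
                int depthY = (int)(y + 0.5f);

                if (depthX >= 0 && depthX < depthWidth && depthY >= 0 && depthY < depthHeight)
                {
                    isBody = bodyIndex[depthY * depthWidth + depthX] != NoBody;
                }
            }

            if (!isBody)
            {
                colorPixels[i] = 0; // black out the background
            }
        }
    }
}
```

Keeping the rule in a pure function like this makes it possible to check the logic on small arrays before wiring it to live sensor frames.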

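My code follows. I won't post the XAML: just add a background image and an Image control (named body_pose in the code) to display the live image. Because the frame handler contains an unsafe block, the project must be built with unsafe code allowed.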

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.Threading.Tasks;

using System.Windows;

using System.Windows.Controls;

using System.Windows.Data;

using System.Windows.Documents;

using System.Windows.Input;

using System.Windows.Media;

using System.Windows.Media.Imaging;

using System.Windows.Navigation;

using System.Windows.Shapes;

using Microsoft.Kinect;

namespace 抠图

{

/// <summary>

/// Interaction logic for MainWindow.xaml

/// </summary>

public partial class MainWindow : Window

{

// Fields for the body-index matting

private KinectSensor kinect1 = null;

private MultiSourceFrameReader multiframe = null;

private uint bitmapbackbufer = 0;

private DepthSpacePoint[] colormappertodepthponters = null;

private readonly int byteperpixel = (PixelFormats.Bgr32.BitsPerPixel+7)/8;// size of one bitmap pixel in bytes

private WriteableBitmap colorbitmap = null;// bitmap that is displayed

public MainWindow()

{

InitializeComponent();

this.kinect1 = KinectSensor.GetDefault();// get the default sensor

// multi-source frame reader for the color, body-index, and depth streams

this.multiframe = this.kinect1.OpenMultiSourceFrameReader(FrameSourceTypes.Color | FrameSourceTypes.BodyIndex | FrameSourceTypes.Depth);

// register the frame-arrived event; the data is processed in the handler

this.multiframe.MultiSourceFrameArrived += mulsourceframe_MultiSourceFrameArrived;

FrameDescription depthdescription = this.kinect1.DepthFrameSource.FrameDescription;// frame description: width, height, and so on

FrameDescription colordescription = this.kinect1.ColorFrameSource.CreateFrameDescription(ColorImageFormat.Bgra);

int depthwidth = depthdescription.Width; int depthheight = depthdescription.Height;

int colorwidth = colordescription.Width; int colorheight = colordescription.Height;

// sized to the color frame because the array holds one depth-space point per color pixel

this.colormappertodepthponters = new DepthSpacePoint[colorwidth*colorheight];

this.colorbitmap = new WriteableBitmap(colorwidth, colorheight, 96.0, 96.0, PixelFormats.Bgra32, null);// initialize the bitmap

// size in bytes of the color bitmap's back buffer

this.bitmapbackbufer = (uint)((this.colorbitmap.BackBufferStride*(this.colorbitmap.PixelHeight-1))+(this.colorbitmap.PixelWidth*byteperpixel));

this.body_pose.Source = this.colorbitmap;

this.kinect1.Open();

}

private void mulsourceframe_MultiSourceFrameArrived(object sender, MultiSourceFrameArrivedEventArgs e)

{// process the data; the depth data drives the decision, so the depth frame is acquired as well

int depthWidth = 0;

int depthHeight = 0;

DepthFrame depthFrame = null;

ColorFrame colorFrame = null;

BodyIndexFrame bodyIndexFrame = null;

bool isBitmapLocked = false;

MultiSourceFrame multiSourceFrame = e.FrameReference.AcquireFrame();

// return if the frame is null

if (multiSourceFrame == null)

{

return;

}

try

{

depthFrame = multiSourceFrame.DepthFrameReference.AcquireFrame();

colorFrame = multiSourceFrame.ColorFrameReference.AcquireFrame();

bodyIndexFrame = multiSourceFrame.BodyIndexFrameReference.AcquireFrame();

if ((depthFrame == null) || (colorFrame == null) || (bodyIndexFrame == null))

{

return;

}

// description of the depth frame

FrameDescription depthFrameDescription = depthFrame.FrameDescription;

depthWidth = depthFrameDescription.Width;

depthHeight = depthFrameDescription.Height;

// use the depth frame data to map the whole frame from color space to depth space

using (KinectBuffer depthFrameData = depthFrame.LockImageBuffer())

{

this.kinect1.CoordinateMapper.MapColorFrameToDepthSpaceUsingIntPtr(

depthFrameData.UnderlyingBuffer,

depthFrameData.Size,

this.colormappertodepthponters);// colormappertodepthponters now holds, for every color pixel, its position in depth space, so the body-index data can be used to mark the color data

}

// we're done with the DepthFrame

depthFrame.Dispose();

depthFrame = null;

// Lock the bitmap for writing

this.colorbitmap.Lock();

isBitmapLocked = true;

// convert the raw format to the required format and copy the data to the supplied memory location

colorFrame.CopyConvertedFrameDataToIntPtr(this.colorbitmap.BackBuffer, this.bitmapbackbufer, ColorImageFormat.Bgra);

// We're done with the ColorFrame

colorFrame.Dispose();

colorFrame = null;

using (KinectBuffer bodyIndexData = bodyIndexFrame.LockImageBuffer())

{

unsafe

{

byte* bodyIndexDataPointer = (byte*)bodyIndexData.UnderlyingBuffer;

int colorMappedToDepthPointCount = this.colormappertodepthponters.Length;

fixed (DepthSpacePoint* colorMappedToDepthPointsPointer = this.colormappertodepthponters)

{

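// pointer to the bitmap's back buffer, which now holds the color frame data

uint* bitmapPixelsPointer = (uint*)this.colorbitmap.BackBuffer;

// loop over every color pixel: if its mapped depth pixel belongs to a person, keep the color pixel, otherwise set it to zero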

for (int colorIndex = 0; colorIndex < colorMappedToDepthPointCount; ++colorIndex)

{

float colorMappedToDepthX = colorMappedToDepthPointsPointer[colorIndex].X;

float colorMappedToDepthY = colorMappedToDepthPointsPointer[colorIndex].Y;

if (!float.IsNegativeInfinity(colorMappedToDepthX) &&

!float.IsNegativeInfinity(colorMappedToDepthY))

{

// make sure the color pixel maps to a valid point in depth space

int depthX = (int)(colorMappedToDepthX + 0.5f);

int depthY = (int)(colorMappedToDepthY + 0.5f);

if ((depthX >= 0) && (depthX < depthWidth) && (depthY >= 0) && (depthY < depthHeight))

{

int depthIndex = (depthY * depthWidth) + depthX;

// if the pixel belongs to a person, keep it and skip to the next iteration

if (bodyIndexDataPointer[depthIndex] != 0xff)

{

continue;

}

}

}

// otherwise set this pixel of the color image to zero

bitmapPixelsPointer[colorIndex] = 0;

}

}

this.colorbitmap.AddDirtyRect(new Int32Rect(0, 0, this.colorbitmap.PixelWidth, this.colorbitmap.PixelHeight));

}

}

}

finally

{

if (isBitmapLocked)

{

this.colorbitmap.Unlock();

}

if (depthFrame != null)

{

depthFrame.Dispose();

}

if (colorFrame != null)

{

colorFrame.Dispose();

}

if (bodyIndexFrame != null)

{

bodyIndexFrame.Dispose();

}

}

}

private void Windows_closing(object sender, System.ComponentModel.CancelEventArgs e)

{

if (this.multiframe != null)

{

this.multiframe.Dispose();

this.multiframe = null;

}

if (this.kinect1 != null)

{

this.kinect1.Close();

this.kinect1 = null;

}

}

}

}
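The buffer size that the constructor computes and later passes to CopyConvertedFrameDataToIntPtr is stride × (height − 1) + width × bytes per pixel. The helper below is a hypothetical standalone restatement of that expression (`BufferSizeSketch` and `BackBufferSize` are illustrative names, not part of the SDK or of WPF), handy for checking the arithmetic by hand.

```csharp
using System;

public static class BufferSizeSketch
{
    // Bytes needed to hold pixelHeight rows when every row but the last
    // occupies a full stride and the last row occupies only its pixels.
    public static uint BackBufferSize(int stride, int pixelWidth, int pixelHeight, int bytesPerPixel)
    {
        return (uint)(stride * (pixelHeight - 1) + pixelWidth * bytesPerPixel);
    }
}
```

Assuming a 1920×1080 bitmap at 4 bytes per pixel with no row padding (a stride of 7680 bytes), `BackBufferSize(7680, 1920, 1080, 4)` gives 8,294,400 bytes, which equals 1920 × 1080 × 4.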

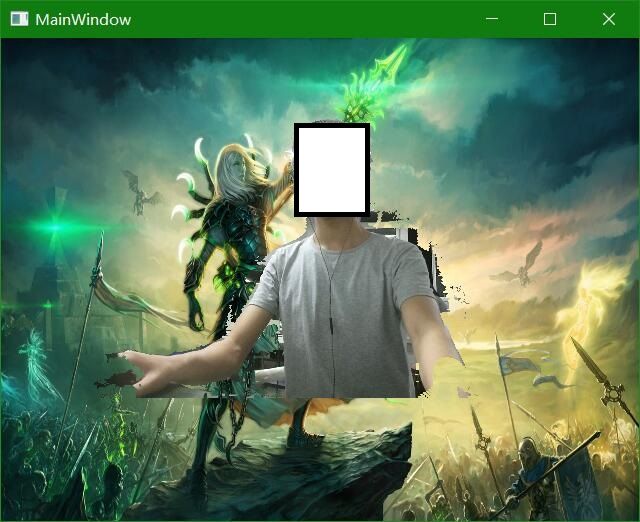

总之,实时抠图程序思想就是这样,效果还可以慢慢去改,不过有框架改的时候好很多,就是根据深度数据判断,标定彩色数据,然后根据标定的彩色数据显示即可。具体的运行效果如下:
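One simple starting point for refining the edges, offered here as an assumption rather than as the author's or the SDK's method, is to erode the BodyIndex mask by one pixel before using it, so that uncertain boundary pixels are treated as background. `MaskCleanupSketch` and `Erode` are hypothetical names, and 0xff is again taken to mean "no person".

```csharp
using System;

public static class MaskCleanupSketch
{
    // BodyIndex value meaning "this depth pixel belongs to no tracked person".
    public const byte NoBody = 0xff;

    // Returns a copy of the body-index mask in which a pixel stays "body" only if
    // it and its four direct neighbors (left, right, up, down) are all "body".
    // Pixels on the image border are treated as having background neighbors.
    public static byte[] Erode(byte[] bodyIndex, int width, int height)
    {
        var result = new byte[bodyIndex.Length];

        for (int y = 0; y < height; ++y)
        {
            for (int x = 0; x < width; ++x)
            {
                int i = y * width + x;

                // Short-circuit evaluation keeps every neighbor access in bounds.
                bool keep = bodyIndex[i] != NoBody
                    && x > 0 && bodyIndex[i - 1] != NoBody
                    && x < width - 1 && bodyIndex[i + 1] != NoBody
                    && y > 0 && bodyIndex[i - width] != NoBody
                    && y < height - 1 && bodyIndex[i + width] != NoBody;

                result[i] = keep ? bodyIndex[i] : NoBody;
            }
        }

        return result;
    }
}
```

Erosion trims the outline rather than smoothing it, so it trades a slightly thinner silhouette for fewer stray background pixels around the person; a real refinement would likely combine it with other filtering.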