spark集群启动后WorkerUI界面看不到Workers解决

前话

我有三台机分别是:

192.168.238.129 master

192.168.238.130 slave2

192.168.238.131 slave1spark 版本是2.0.2,hosts文件已经配置上面参数

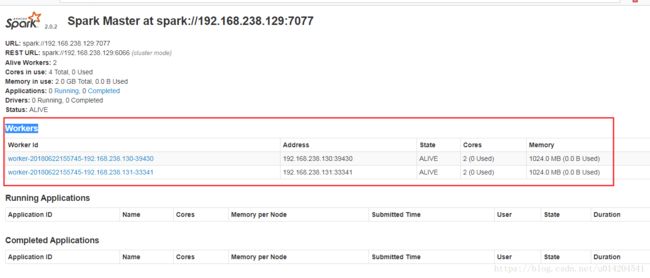

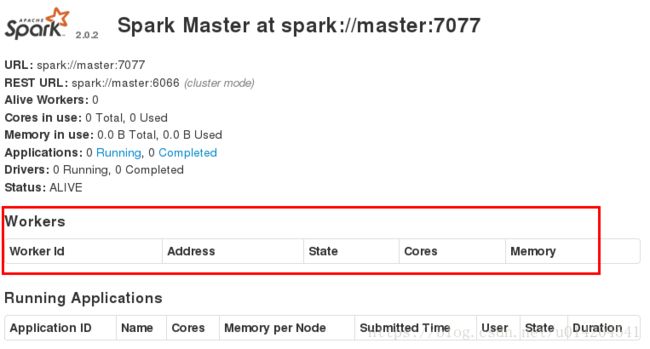

最近在搭spark集群的时候,成功启动集群,但是访问master的WorkerUI界面却看不到子节点,也就是worker id那里为空的,如图:

解决这个问题,关键是改spark的conf下面的spark-env.sh文件:

注意点就是,下面的master的相关配置必须是ip,之前填master,能启动,但是界面看不到worker。

master配置:

export JAVA_HOME=/opt/jdk1.7.0_80

export SCALA_HOME=/opt/scala-2.10.7

export HADOOP_HOME=/usr/local/hadoop-2.7.6

export SPARK_MASTER_IP=192.168.238.129

export SPARK_MASTER_PORT=7077

export SPARK_MASTER_HOST=192.168.238.129

export SPARK_LOCAL_IP=192.168.238.129

export SPARK_HOME=/opt/spark-2.0.2-bin-hadoop2.7

export HADOOP_CONF_DIR=/usr/local/hadoop-2.7.6/etc/hadoop

export SPARK_DIST_CLASSPATH=$(/usr/local/hadoop-2.7.6/bin/hadoop classpath)

export SPARK_EXECUTOR_MEMORY=1G

export SPARK_WORKER_CORES=2slave1的配置

export JAVA_HOME=/opt/jdk1.7.0_80

export SCALA_HOME=/opt/scala-2.10.7

export HADOOP_HOME=/usr/local/hadoop-2.7.6

export SPARK_MASTER_IP=192.168.238.129

export SPARK_MASTER_PORT=7077

export SPARK_MASTER_HOST=192.168.238.129

export SPARK_LOCAL_IP=slave1

export SPARK_HOME=/opt/spark-2.0.2-bin-hadoop2.7

export HADOOP_CONF_DIR=/usr/local/hadoop-2.7.6/etc/hadoop

export SPARK_DIST_CLASSPATH=$(/usr/local/hadoop-2.7.6/bin/hadoop classpath)

export SPARK_EXECUTOR_MEMORY=1G

export SPARK_WORKER_CORES=2slave2的配置

export JAVA_HOME=/opt/jdk1.7.0_80

export SCALA_HOME=/opt/scala-2.10.7

export HADOOP_HOME=/usr/local/hadoop-2.7.6

export SPARK_MASTER_IP=master

export SPARK_MASTER_PORT=7077

export SPARK_MASTER_HOST=master

export SPARK_LOCAL_IP=slave2

export SPARK_HOME=/opt/spark-2.0.2-bin-hadoop2.7

export HADOOP_CONF_DIR=/usr/local/hadoop-2.7.6/etc/hadoop

export SPARK_DIST_CLASSPATH=$(/usr/local/hadoop-2.7.6/bin/hadoop classpath)

export SPARK_EXECUTOR_MEMORY=1G

export SPARK_WORKER_CORES=2从上面配置会发现主要是master的必须用ip,其他的可用可不用ip

成功启动日志:

[hadoop@master spark-2.0.2-bin-hadoop2.7]$ ./sbin/start-all.sh

starting org.apache.spark.deploy.master.Master, logging to /opt/spark-2.0.2-bin-hadoop2.7/logs/spark-hadoop-org.apache.spark.deploy.master.Master-1-master.out

slave2: starting org.apache.spark.deploy.worker.Worker, logging to /opt/spark-2.0.2-bin-hadoop2.7/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-slave2.out

slave1: starting org.apache.spark.deploy.worker.Worker, logging to /opt/spark-2.0.2-bin-hadoop2.7/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-slave1.out失败启动日志:

失败的日志会记录在logs,命令里已经指出是哪个log了。可以自己去看log找出原因

[hadoop@master spark-2.0.2-bin-hadoop2.7]$ ./sbin/start-all.sh

starting org.apache.spark.deploy.master.Master, logging to /opt/spark-2.0.2-bin-hadoop2.7/logs/spark-hadoop-org.apache.spark.deploy.master.Master-1-master.out

slave1: starting org.apache.spark.deploy.worker.Worker, logging to /opt/spark-2.0.2-bin-hadoop2.7/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-slave1.out

slave2: starting org.apache.spark.deploy.worker.Worker, logging to /opt/spark-2.0.2-bin-hadoop2.7/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-slave2.out

slave1: failed to launch org.apache.spark.deploy.worker.Worker:

slave1: full log in /opt/spark-2.0.2-bin-hadoop2.7/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-slave1.out

slave2: failed to launch org.apache.spark.deploy.worker.Worker:

slave2: full log in /opt/spark-2.0.2-bin-hadoop2.7/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-slave2.out

缺点,显示的是ip,不是其他主机的名字

后话

下面内容只作为讨论用。

当改了master的配置不用ip,直接填写master的时候,如下面的配置,发现master能成功启动,但是slave节点都是报错的,说连不上master,但是直接ping也能通,不知道是什么问题?报错信息,可用在spark目录下的logs文件里看到

export SPARK_MASTER_IP=master

export SPARK_MASTER_PORT=7077

export SPARK_MASTER_HOST=master报错日志如下:

18/06/22 16:33:18 WARN worker.Worker: Failed to connect to master master:7077

org.apache.spark.SparkException: Exception thrown in awaitResult

at org.apache.spark.rpc.RpcTimeout$$anonfun$1.applyOrElse(RpcTimeout.scala:77)

at org.apache.spark.rpc.RpcTimeout$$anonfun$1.applyOrElse(RpcTimeout.scala:75)

at scala.runtime.AbstractPartialFunction.apply(AbstractPartialFunction.scala:36)

at org.apache.spark.rpc.RpcTimeout$$anonfun$addMessageIfTimeout$1.applyOrElse(RpcTimeout.scala:59)

at org.apache.spark.rpc.RpcTimeout$$anonfun$addMessageIfTimeout$1.applyOrElse(RpcTimeout.scala:59)

at scala.PartialFunction$OrElse.apply(PartialFunction.scala:167)

at org.apache.spark.rpc.RpcTimeout.awaitResult(RpcTimeout.scala:83)

at org.apache.spark.rpc.RpcEnv.setupEndpointRefByURI(RpcEnv.scala:88)

at org.apache.spark.rpc.RpcEnv.setupEndpointRef(RpcEnv.scala:96)

at org.apache.spark.deploy.worker.Worker$$anonfun$org$apache$spark$deploy$worker$Worker$$tryRegisterAllMasters$1$$anon$1.run(Worker.scala:216)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:471)

at java.util.concurrent.FutureTask.run(FutureTask.java:262)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.io.IOException: Failed to connect to master/192.168.238.129:7077

at org.apache.spark.network.client.TransportClientFactory.createClient(TransportClientFactory.java:228)

at org.apache.spark.network.client.TransportClientFactory.createClient(TransportClientFactory.java:179)

at org.apache.spark.rpc.netty.NettyRpcEnv.createClient(NettyRpcEnv.scala:197)

at org.apache.spark.rpc.netty.Outbox$$anon$1.call(Outbox.scala:191)

at org.apache.spark.rpc.netty.Outbox$$anon$1.call(Outbox.scala:187)

... 4 more

Caused by: java.net.ConnectException: 拒绝连接: master/192.168.238.129:7077

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:744)

at io.netty.channel.socket.nio.NioSocketChannel.doFinishConnect(NioSocketChannel.java:224)

at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe.finishConnect(AbstractNioChannel.java:289)

at io.netty.channel.nio.NioEventLoop.processSelectedKey(NioEventLoop.java:528)

at io.netty.channel.nio.NioEventLoop.processSelectedKeysOptimized(NioEventLoop.java:468)

at io.netty.channel.nio.NioEventLoop.processSelectedKeys(NioEventLoop.java:382)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:354)

at io.netty.util.concurrent.SingleThreadEventExecutor$2.run(SingleThreadEventExecutor.java:111)

... 1 more

18/06/22 16:33:25 INFO worker.Worker: Retrying connection to master (attempt # 1)

18/06/22 16:33:25 INFO worker.Worker: Connecting to master master:7077...

18/06/22 16:33:25 WARN worker.Worker: Failed to connect to master master:7077

org.apache.spark.SparkException: Exception thrown in awaitResult

at org.apache.spark.rpc.RpcTimeout$$anonfun$1.applyOrElse(RpcTimeout.scala:77)

at org.apache.spark.rpc.RpcTimeout$$anonfun$1.applyOrElse(RpcTimeout.scala:75)

at scala.runtime.AbstractPartialFunction.apply(AbstractPartialFunction.scala:36)

at org.apache.spark.rpc.RpcTimeout$$anonfun$addMessageIfTimeout$1.applyOrElse(RpcTimeout.scala:59)

at org.apache.spark.rpc.RpcTimeout$$anonfun$addMessageIfTimeout$1.applyOrElse(RpcTimeout.scala:59)

at scala.PartialFunction$OrElse.apply(PartialFunction.scala:167)

at org.apache.spark.rpc.RpcTimeout.awaitResult(RpcTimeout.scala:83)

at org.apache.spark.rpc.RpcEnv.setupEndpointRefByURI(RpcEnv.scala:88)

at org.apache.spark.rpc.RpcEnv.setupEndpointRef(RpcEnv.scala:96)

at org.apache.spark.deploy.worker.Worker$$anonfun$org$apache$spark$deploy$worker$Worker$$tryRegisterAllMasters$1$$anon$1.run(Worker.scala:216)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:471)

at java.util.concurrent.FutureTask.run(FutureTask.java:262)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.io.IOException: Failed to connect to master/192.168.238.129:7077

at org.apache.spark.network.client.TransportClientFactory.createClient(TransportClientFactory.java:228)

at org.apache.spark.network.client.TransportClientFactory.createClient(TransportClientFactory.java:179)

at org.apache.spark.rpc.netty.NettyRpcEnv.createClient(NettyRpcEnv.scala:197)

at org.apache.spark.rpc.netty.Outbox$$anon$1.call(Outbox.scala:191)

at org.apache.spark.rpc.netty.Outbox$$anon$1.call(Outbox.scala:187)

... 4 more但是查看进程又是启动的

[hadoop@slave2 conf]$ jps

7514 Worker

7583 Jps

4485 DataNode

[hadoop@slave2 conf]$