Faster R-CNN Source Code Walkthrough (TensorFlow Version)

Reference blogs:

http://blog.csdn.net/u013010889/article/details/78574879

http://blog.csdn.net/hjimce/article/details/73382553

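Code link

Algorithm Overview

Feature extraction + Region proposal network + Classification and regression:

Image link

Data Generation (imdb, roidb)

- datasets/imdb.py: defines the generic image database class imdb.

- datasets/factory.py: uses lambda expressions as a factory to register the dataset classes you need. Everything below uses the voc_2007_trainval dataset as the example, for which the pascal_voc class is defined by inheriting from imdb.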

    # Using the VOC datasets as an example: following the imdb naming scheme,
    # register one constructor per dataset name via pascal_voc()
    for year in ['2007', '2012']:
        for split in ['train', 'val', 'trainval', 'test']:
            name = 'voc_{}_{}'.format(year, split)  # e.g. year='2007', split='trainval'
            __sets[name] = (lambda split=split, year=year: pascal_voc(split, year))

    def get_imdb(name):
        """Get an imdb (image database) by name."""
        if name not in __sets:
            raise KeyError('Unknown dataset: {}'.format(name))
        return __sets[name]()

- datasets/pascal_voc.py: defines the pascal_voc class (inherits from imdb). This is where you define member variables and member functions to match your own dataset. Some of the important ones are listed below:

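Some member variables:

    self._data_path = os.path.join(self._devkit_path, 'VOC' + self._year)  # dataset path
    self._classes = ('__background__',  # always index 0; training class labels, including the background class
                     'person')
    # Default to roidb handler
    self._roidb_handler = self.gt_roidb  # region of interest (RoI) database
    self._salt = str(uuid.uuid4())  # ?? (appears to be a random suffix that keeps result file names unique)
    self._comp_id = 'comp4'  # ?? (appears to be the PASCAL VOC competition id used in result file names)

Some member functions: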

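- gt_roidb(): calls _load_pascal_annotation() and returns the RoI database. On the first run it saves a cache file; on later runs it loads the database from that cache.

- _load_pascal_annotation(): loads the annotation of an image from the VOC XML files, including bbox coordinates, classes, the overlap matrix, and bbox areas.

- model.train_val.get_training_roidb(imdb): returns the roidb (RoI database) used to train the model. It mainly calls two functions:

    imdb.append_flipped_images()   # member function of imdb; horizontally flips the training set (data augmentation)
    rdl_roidb.prepare_roidb(imdb)  # defined in roidb.py, described next

- roi_data_layer.roidb.prepare_roidb(imdb): by default each roidb entry of an imdb has four keys: boxes, gt_overlaps, gt_classes, and flipped. This function extends each entry to make training easier. The added keys are 'image' (the image path), 'width' and 'height' (the image size), and 'max_overlaps' and 'max_classes' (the maximum overlap of each box and the class it belongs to).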
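To make the 'max_overlaps' and 'max_classes' fields concrete, here is a minimal NumPy sketch of how they can be derived from gt_overlaps. The helper name `add_max_overlap_fields` and the toy entry are my own illustration, and I assume a dense overlap matrix here (the real roidb may store it in a sparse format).

```python
import numpy as np

def add_max_overlap_fields(entry):
    """Add 'max_overlaps' and 'max_classes' to one roidb-style dict (hypothetical helper)."""
    # gt_overlaps has shape (num_boxes, num_classes); assumed dense in this sketch
    overlaps = np.asarray(entry["gt_overlaps"], dtype=np.float32)
    entry["max_overlaps"] = overlaps.max(axis=1)     # best overlap of each box
    entry["max_classes"] = overlaps.argmax(axis=1)   # class achieving that overlap
    return entry

# Toy entry with two ground-truth boxes and three classes (index 0 is background)
entry = {
    "boxes": np.array([[10, 20, 110, 220], [30, 40, 90, 100]]),
    "gt_classes": np.array([1, 2]),
    "gt_overlaps": np.array([[0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]),
    "flipped": False,
}
entry = add_max_overlap_fields(entry)
print(entry["max_overlaps"], entry["max_classes"])
```

For ground-truth boxes the maximum overlap is 1 and the arg-max class equals gt_classes, which is a handy sanity check on the data.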

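Summary: at this point the two database structures, imdb and roidb, have been generated. They record the image paths, the class labels, and the annotations of the dataset.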
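One detail of datasets/factory.py that is easy to overlook is the `lambda split=split, year=year: ...` form. The sketch below, with a hypothetical stand-in class `FakeDataset` and two hypothetical registries, shows why the default arguments are needed: without them every lambda would see the loop variables as they are when the lambda is called, not as they were when it was registered.

```python
class FakeDataset:
    """Hypothetical stand-in for pascal_voc; it only records its arguments."""
    def __init__(self, split, year):
        self.name = "voc_{}_{}".format(year, split)

registry_bound = {}  # lambdas with default arguments, as in factory.py
registry_late = {}   # lambdas without default arguments, for comparison

for year in ["2007", "2012"]:
    for split in ["train", "val", "trainval", "test"]:
        name = "voc_{}_{}".format(year, split)
        # Default arguments freeze the current loop values inside the lambda
        registry_bound[name] = (lambda split=split, year=year: FakeDataset(split, year))
        # Without defaults, split/year are looked up only when the lambda runs
        registry_late[name] = (lambda: FakeDataset(split, year))

print(registry_bound["voc_2007_trainval"]().name)  # voc_2007_trainval
print(registry_late["voc_2007_trainval"]().name)   # voc_2012_test (last loop values)
```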

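The network consists of three parts: the feature extraction network (VGG16, ResNet, MobileNet, etc.), the RPN, and the classification and regression network. The choice of feature network is flexible; the structure of each model is defined in the nets folder, and that code is not covered in detail here. The rest of this post focuses on the RPN and the classification/regression network. The network is built by the _build_network() function in network.py: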

    def _build_network(self, is_training=True):
        # select initializers
        if cfg.TRAIN.TRUNCATED:
            initializer = tf.truncated_normal_initializer(mean=0.0, stddev=0.01)
            initializer_bbox = tf.truncated_normal_initializer(mean=0.0, stddev=0.001)
        else:
            initializer = tf.random_normal_initializer(mean=0.0, stddev=0.01)
            initializer_bbox = tf.random_normal_initializer(mean=0.0, stddev=0.001)

        net_conv = self._image_to_head(is_training)
        with tf.variable_scope(self._scope, self._scope):
            # generate the anchors
            self._anchor_component()
            # RPN
            rois = self._region_proposal(net_conv, is_training, initializer)
            # RoI pooling
            if cfg.POOLING_MODE == 'crop':
                pool5 = self._crop_pool_layer(net_conv, rois, "pool5")
            else:
                raise NotImplementedError

        fc7 = self._head_to_tail(pool5, is_training)
        with tf.variable_scope(self._scope, self._scope):
            # classification / regression network
            cls_prob, bbox_pred = self._region_classification(fc7, is_training,
                                                              initializer, initializer_bbox)

        self._score_summaries.update(self._predictions)

        return rois, cls_prob, bbox_pred

RPN

Image link

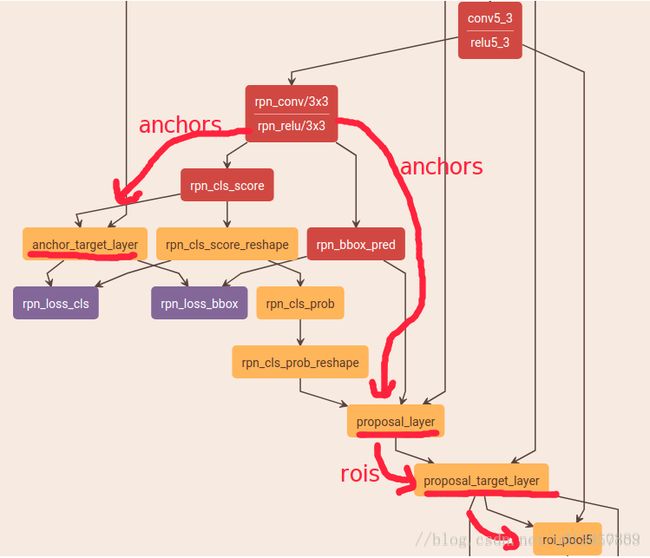

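This part covers how the RPN is built: anchors are first generated on top of the Conv5_3 feature map, and then the class and position of each anchor are predicted.

self._anchor_component() mainly calls layer_utils/generate_anchors.py to generate the anchors.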
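To make the anchor generation concrete, here is a minimal NumPy sketch of the idea: build 9 base anchors around a 16x16 reference window (3 ratios x 3 scales), then shift them to every feature-map location. The function names and the 38x50 feature-map size are my own choices for illustration, and the box arithmetic uses the "+1 pixel" convention, which is an assumption about the original code.

```python
import numpy as np

def _whctrs(box):
    """Width, height and center of a box given as (x1, y1, x2, y2)."""
    w = box[2] - box[0] + 1
    h = box[3] - box[1] + 1
    return w, h, box[0] + 0.5 * (w - 1), box[1] + 0.5 * (h - 1)

def _mkanchors(ws, hs, x_ctr, y_ctr):
    """Boxes of the given widths/heights around one shared center."""
    ws = np.asarray(ws, dtype=np.float64)[:, np.newaxis]
    hs = np.asarray(hs, dtype=np.float64)[:, np.newaxis]
    return np.hstack((x_ctr - 0.5 * (ws - 1), y_ctr - 0.5 * (hs - 1),
                      x_ctr + 0.5 * (ws - 1), y_ctr + 0.5 * (hs - 1)))

def generate_base_anchors(base_size=16, ratios=(0.5, 1, 2), scales=(8, 16, 32)):
    ratios = np.asarray(ratios, dtype=np.float64)
    scales = np.asarray(scales, dtype=np.float64)
    base = np.array([0, 0, base_size - 1, base_size - 1], dtype=np.float64)
    # Step 1: vary the aspect ratio while keeping the area roughly constant
    w, h, x_ctr, y_ctr = _whctrs(base)
    ws = np.round(np.sqrt(w * h / ratios))
    hs = np.round(ws * ratios)
    ratio_anchors = _mkanchors(ws, hs, x_ctr, y_ctr)
    # Step 2: enlarge every ratio anchor by each scale
    out = []
    for box in ratio_anchors:
        w, h, x_ctr, y_ctr = _whctrs(box)
        out.append(_mkanchors(w * scales, h * scales, x_ctr, y_ctr))
    return np.vstack(out)

def shift_anchors(base_anchors, feat_h, feat_w, feat_stride=16):
    """Copy the base anchors to every feature-map cell (stride is in image pixels)."""
    shift_x, shift_y = np.meshgrid(np.arange(feat_w) * feat_stride,
                                   np.arange(feat_h) * feat_stride)
    shifts = np.stack([shift_x.ravel(), shift_y.ravel(),
                       shift_x.ravel(), shift_y.ravel()], axis=1)
    return (base_anchors[np.newaxis, :, :] + shifts[:, np.newaxis, :]).reshape(-1, 4)

base_anchors = generate_base_anchors()
all_anchors = shift_anchors(base_anchors, feat_h=38, feat_w=50)
print(base_anchors[0])    # [-84. -40.  99.  55.]
print(all_anchors.shape)  # (17100, 4), i.e. 38 * 50 * 9 anchors
```

With the 0-based reference window (0, 0, 15, 15), every coordinate in this sketch comes out exactly one lower than in the array quoted in this post; my guess is that the quoted values follow a 1-based convention, but treat that as an assumption.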

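For an input image of size (W, H), the feature extraction module produces a feature map of size (W/16, H/16), denoted (w, h) (in the VGG16 architecture the product of all strides is 16; see the paper for the details). Each location of the feature map then generates k anchors. The paper uses 3 ratios, [0.5, 1, 2], and 3 scales, [8, 16, 32], so one feature map produces w*h*9 anchors in total.

    # array([[ -83.,  -39.,  100.,   56.],
    #        [-175.,  -87.,  192.,  104.],
    #        [-359., -183.,  376.,  200.],
    #        [ -55.,  -55.,   72.,   72.],
    #        [-119., -119.,  136.,  136.],
    #        [-247., -247.,  264.,  264.],
    #        [ -35.,  -79.,   52.,   96.],
    #        [ -79., -167.,   96.,  184.],
    #        [-167., -343.,  184.,  360.]])

The result above is obtained with base_size=16 (the side length of the reference window, which matches the feature stride of 16), i.e. the 9 anchors are generated from the reference window (0, 0, 15, 15). Note: when anchors with different ratios are generated, the anchor area stays the same (up to rounding); only the aspect ratio changes.

self._region_proposal() first predicts the foreground/background scores of the anchors and their coordinates. It consists of two layers: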

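First layer: a 3*3 convolution.

    rpn = slim.conv2d(net_conv, 512, [3, 3], trainable=is_training,
                      weights_initializer=initializer, scope="rpn_conv/3x3")

Second layer: two branches, both using 1*1 kernels. The first branch outputs a feature map of shape (height, width, 9*2), used to decide whether each anchor contains an object; the second branch outputs a feature map of shape (height, width, 9*4), used to obtain the bbox coordinates.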

    # shape = (1, ?, ?, 18), where batch size = 1
    rpn_cls_score = slim.conv2d(rpn, self._num_anchors * 2, [1, 1], trainable=is_training,
                                weights_initializer=initializer,
                                padding='VALID', activation_fn=None, scope='rpn_cls_score')
    # change it so that the score has 2 as its channel size
    # shape = (1, ?, ?, 2)
    rpn_cls_score_reshape = self._reshape_layer(rpn_cls_score, 2, 'rpn_cls_score_reshape')
    # shape = (1, ?, ?, 2)
    rpn_cls_prob_reshape = self._softmax_layer(rpn_cls_score_reshape, "rpn_cls_prob_reshape")
    # shape = (?,)
    rpn_cls_pred = tf.argmax(tf.reshape(rpn_cls_score_reshape, [-1, 2]), axis=1, name="rpn_cls_pred")
    # shape = (1, ?, ?, 18)
    rpn_cls_prob = self._reshape_layer(rpn_cls_prob_reshape, self._num_anchors * 2, "rpn_cls_prob")
    # shape = (1, ?, ?, 36)
    rpn_bbox_pred = slim.conv2d(rpn, self._num_anchors * 4, [1, 1], trainable=is_training,
                                weights_initializer=initializer,
                                padding='VALID', activation_fn=None, scope='rpn_bbox_pred')

Open question: how exactly are the two reshapes carried out, and why are they needed?
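A tentative answer to the reshape question, as a NumPy sketch. My reading is that the softmax has to run over exactly two values (background, foreground) per anchor, so the 18 channels are regrouped into pairs, passed through the softmax, and then reshaped back. The function `reshape_layer` below is my own re-implementation under the assumption that the regrouping goes through a Caffe-style (N, C, H, W) layout; under that assumption channel a is paired with channel a + 9.

```python
import numpy as np

def reshape_layer(x, num_dim):
    """(1, H, W, C) -> (1, H * C // num_dim, W, num_dim), via an NCHW view (assumed layout)."""
    n, h, w, c = x.shape
    to_caffe = x.transpose(0, 3, 1, 2)              # (1, C, H, W)
    reshaped = to_caffe.reshape(1, num_dim, -1, w)  # (1, num_dim, C // num_dim * H, W)
    return reshaped.transpose(0, 2, 3, 1)           # channels last again

def softmax_last_axis(x):
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)

num_anchors, H, W = 9, 4, 5
scores = np.random.RandomState(0).randn(1, H, W, num_anchors * 2)

pairs = reshape_layer(scores, 2)                    # (1, 9 * H, W, 2): one (bg, fg) pair per anchor
probs = softmax_last_axis(pairs)                    # softmax over the 2 classes only
probs_back = reshape_layer(probs, num_anchors * 2)  # (1, H, W, 18) again

print(pairs.shape, probs_back.shape)
```

Applying a softmax directly on the 18-channel tensor would normalize across all anchors at a location, which is not what the 2-class RPN loss wants; the pair of reshapes avoids that.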

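_anchor_target_layer() inside _region_proposal() calls the function in anchor_target_layer.py to produce the labels needed to train the RPN. Training the RPN requires two losses: softmax_cross_entropy for classification (2 classes, foreground/background) and smooth_l1_loss for bbox regression. The function assigns a label to every anchor (1: foreground, 0: background, -1: ignored), i.e. rpn_labels, based on the overlap between the anchors and the gt boxes (rpn_cls_score seems to be passed in only for the spatial size of the feature map), and computes the offsets between each anchor and its gt bbox, i.e. rpn_bbox_targets. What rpn_bbox_inside_weights and rpn_bbox_outside_weights are for is still unclear to me.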

    def _anchor_target_layer(self, rpn_cls_score, name):
        rpn_labels, rpn_bbox_targets, rpn_bbox_inside_weights, rpn_bbox_outside_weights = tf.py_func(
            anchor_target_layer,
            [rpn_cls_score, self._gt_boxes, self._im_info, self._feat_stride, self._anchors, self._num_anchors],
            [tf.float32, tf.float32, tf.float32, tf.float32])
        # (part of the code is omitted here)
        self._anchor_targets['rpn_labels'] = rpn_labels
        self._anchor_targets['rpn_bbox_targets'] = rpn_bbox_targets
        self._anchor_targets['rpn_bbox_inside_weights'] = rpn_bbox_inside_weights
        self._anchor_targets['rpn_bbox_outside_weights'] = rpn_bbox_outside_weights
        self._score_summaries.update(self._anchor_targets)
        return rpn_labels

Strategy for generating positive and negative samples:

- Keep only the anchors that lie inside the image.

- For each gt_box, the anchor with the highest IoU is marked as a positive sample.

- For each anchor, if its IoU with any gt_box is above 0.7 it is a positive sample; if its IoU with every gt_box is below 0.3 it is a negative sample.

- All other anchors are ignored.

- If there are too many positive or negative samples, they are subsampled; the sampling is controlled by RPN_BATCHSIZE, RPN_FG_FRACTION, and related settings.
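The labeling rules above can be sketched in a few lines of NumPy. This is a simplified illustration rather than the repository's code: the function names are mine, the IoU uses the "+1 pixel" convention as an assumption, and the inside-image filter and the final subsampling step are left out.

```python
import numpy as np

def iou_matrix(anchors, gt_boxes):
    """Pairwise IoU between anchors (N, 4) and gt boxes (K, 4), boxes as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = [anchors[:, i][:, None] for i in range(4)]
    gx1, gy1, gx2, gy2 = [gt_boxes[:, i][None, :] for i in range(4)]
    iw = np.maximum(np.minimum(ax2, gx2) - np.maximum(ax1, gx1) + 1, 0)
    ih = np.maximum(np.minimum(ay2, gy2) - np.maximum(ay1, gy1) + 1, 0)
    inter = iw * ih
    area_a = (ax2 - ax1 + 1) * (ay2 - ay1 + 1)
    area_g = (gx2 - gx1 + 1) * (gy2 - gy1 + 1)
    return inter / (area_a + area_g - inter)

def label_anchors(anchors, gt_boxes, pos_thresh=0.7, neg_thresh=0.3):
    """Return labels per anchor: 1 = foreground, 0 = background, -1 = ignored."""
    overlaps = iou_matrix(anchors, gt_boxes)
    labels = np.full(len(anchors), -1, dtype=np.int64)
    max_per_anchor = overlaps.max(axis=1)
    # Background: low overlap with every gt box
    labels[max_per_anchor < neg_thresh] = 0
    # Foreground: the best anchor(s) for each gt box ...
    best_per_gt = overlaps.max(axis=0)
    labels[np.where(overlaps == best_per_gt)[0]] = 1
    # ... plus every anchor whose best overlap passes the positive threshold
    labels[max_per_anchor >= pos_thresh] = 1
    return labels

anchors = np.array([[0, 0, 9, 9], [0, 0, 9, 14], [20, 20, 29, 29], [100, 100, 109, 109]], dtype=np.float64)
gt_boxes = np.array([[0, 0, 9, 9], [22, 22, 31, 31]], dtype=np.float64)
labels = label_anchors(anchors, gt_boxes)
print(labels)  # [ 1 -1  1  0]
```

In the toy example the third anchor has an IoU of only about 0.47 with its gt box, but it is still positive because it is the best match for that box; the second anchor (IoU about 0.67) falls between the two thresholds and is ignored.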

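_proposal_layer() inside _region_proposal() calls the proposal_layer() function. Its job is to generate proposals and filter them (NMS, etc.). The main flow can be summarized in four steps:

- Generate proposals via the coordinate transform: proposals = bbox_transform_inv(anchors, rpn_bbox_pred)

- Sort the proposals by foreground probability in descending order and keep the top RPN_PRE_NMS_TOP_N proposals.

- Apply NMS to the remaining proposals with a threshold of 0.7.

- Of the proposals that survive NMS, keep RPN_POST_NMS_TOP_N, which gives the final rois and the corresponding rpn_scores.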
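The filtering steps above (top-N before NMS, NMS, top-N after NMS) can be sketched as follows. This is a plain NumPy illustration with my own function names `nms` and `filter_proposals`; the real code may dispatch to an optimized NMS, and the box convention (x1, y1, x2, y2 with "+1 pixel" areas) is an assumption.

```python
import numpy as np

def nms(boxes, scores, thresh):
    """Greedy non-maximum suppression; returns indices of the kept boxes."""
    x1, y1, x2, y2 = boxes[:, 0], boxes[:, 1], boxes[:, 2], boxes[:, 3]
    areas = (x2 - x1 + 1) * (y2 - y1 + 1)
    order = scores.argsort()[::-1]  # highest score first
    keep = []
    while order.size > 0:
        i = order[0]
        keep.append(int(i))
        rest = order[1:]
        # Overlap of the current best box with all remaining boxes
        w = np.maximum(0.0, np.minimum(x2[i], x2[rest]) - np.maximum(x1[i], x1[rest]) + 1)
        h = np.maximum(0.0, np.minimum(y2[i], y2[rest]) - np.maximum(y1[i], y1[rest]) + 1)
        inter = w * h
        iou = inter / (areas[i] + areas[rest] - inter)
        order = rest[iou <= thresh]  # drop boxes that overlap too much
    return keep

def filter_proposals(proposals, fg_scores, pre_nms_top_n, post_nms_top_n, nms_thresh=0.7):
    order = fg_scores.argsort()[::-1][:pre_nms_top_n]          # sort and keep top N before NMS
    proposals, fg_scores = proposals[order], fg_scores[order]
    keep = nms(proposals, fg_scores, nms_thresh)[:post_nms_top_n]  # NMS, then keep top N
    return proposals[keep], fg_scores[keep]

proposals = np.array([[0, 0, 99, 99], [5, 5, 104, 104],
                      [200, 200, 299, 299], [0, 0, 49, 49]], dtype=np.float64)
fg_scores = np.array([0.9, 0.8, 0.7, 0.6])
rois, roi_scores = filter_proposals(proposals, fg_scores, pre_nms_top_n=4, post_nms_top_n=2)
print(rois)
print(roi_scores)
```

In the toy data the second box overlaps the first with an IoU of about 0.82, so it is suppressed; the first and third boxes are the two that remain.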

_proposal_target_layer() inside _region_proposal() assigns an object class to each proposal from the previous step and computes the coordinate offsets between the proposal and its gt_bbox, which are needed to train the subsequent Fast R-CNN classification and regression network. (Note: this step does not exist at test time, because there is no ground truth.)

    def _proposal_target_layer(self, rois, roi_scores, name):
        rois, roi_scores, labels, bbox_targets, bbox_inside_weights, bbox_outside_weights = tf.py_func(
            proposal_target_layer,
            [rois, roi_scores, self._gt_boxes, self._num_classes],
            [tf.float32, tf.float32, tf.float32, tf.float32, tf.float32, tf.float32])
        self._proposal_targets['rois'] = rois
        self._proposal_targets['labels'] = tf.to_int32(labels, name="to_int32")
        self._proposal_targets['bbox_targets'] = bbox_targets
        self._proposal_targets['bbox_inside_weights'] = bbox_inside_weights
        self._proposal_targets['bbox_outside_weights'] = bbox_outside_weights
        return rois, roi_scores

It mainly calls the proposal_target_layer() function, whose main steps are:

- Determine the number of RoIs per image and the number of foreground RoIs (fg_rois).

- Sample from all rpn_rois, and obtain the class label of each sampled RoI and its bbox regression target (bbox_targets), i.e. the offset between the ground-truth box and the RoI.

    labels, rois, roi_scores, bbox_targets, bbox_inside_weights = _sample_rois(
        all_rois, all_scores, gt_boxes, fg_rois_per_image, rois_per_image, _num_classes)

_sample_rois computes the overlap matrix between the rois and the gt_bboxes. For each roi, the label of the gt_bbox with the largest overlap becomes the class label of that roi, and foreground and background rois are selected according to TRAIN.FG_THRESH and TRAIN.BG_THRESH_HI/LO.
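The regression targets and the inverse transform used by the proposal layer are two sides of the same parameterization (dx, dy, dw, dh). The sketch below shows both directions with a round-trip check. It is a simplified version under stated assumptions: the function names mirror bbox_transform / bbox_transform_inv, but I define widths as x2 - x1 (without the "+1" used in many implementations) so that the round trip is exact, and I skip any normalization of the targets by means and standard deviations.

```python
import numpy as np

def bbox_transform(ex_rois, gt_rois):
    """Targets (dx, dy, dw, dh) that move each ex_roi onto its gt_roi."""
    ex_w = ex_rois[:, 2] - ex_rois[:, 0]
    ex_h = ex_rois[:, 3] - ex_rois[:, 1]
    ex_cx = ex_rois[:, 0] + 0.5 * ex_w
    ex_cy = ex_rois[:, 1] + 0.5 * ex_h
    gt_w = gt_rois[:, 2] - gt_rois[:, 0]
    gt_h = gt_rois[:, 3] - gt_rois[:, 1]
    gt_cx = gt_rois[:, 0] + 0.5 * gt_w
    gt_cy = gt_rois[:, 1] + 0.5 * gt_h
    dx = (gt_cx - ex_cx) / ex_w   # center shift, relative to the box size
    dy = (gt_cy - ex_cy) / ex_h
    dw = np.log(gt_w / ex_w)      # log-scale change of width and height
    dh = np.log(gt_h / ex_h)
    return np.stack([dx, dy, dw, dh], axis=1)

def bbox_transform_inv(boxes, deltas):
    """Apply (dx, dy, dw, dh) to boxes and return the resulting (x1, y1, x2, y2)."""
    w = boxes[:, 2] - boxes[:, 0]
    h = boxes[:, 3] - boxes[:, 1]
    cx = boxes[:, 0] + 0.5 * w
    cy = boxes[:, 1] + 0.5 * h
    pred_cx = deltas[:, 0] * w + cx
    pred_cy = deltas[:, 1] * h + cy
    pred_w = np.exp(deltas[:, 2]) * w
    pred_h = np.exp(deltas[:, 3]) * h
    return np.stack([pred_cx - 0.5 * pred_w, pred_cy - 0.5 * pred_h,
                     pred_cx + 0.5 * pred_w, pred_cy + 0.5 * pred_h], axis=1)

rois = np.array([[0, 0, 10, 10], [20, 30, 60, 50]], dtype=np.float64)
gts = np.array([[2, 1, 12, 13], [18, 28, 70, 60]], dtype=np.float64)
targets = bbox_transform(rois, gts)
recovered = bbox_transform_inv(rois, targets)
print(targets)
print(recovered)  # should match gts up to floating-point error
```

For the RPN the "boxes" are anchors and the deltas are rpn_bbox_pred; for the second stage the boxes are the sampled RoIs and the deltas are bbox_targets.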

Summary:

The RPN does three main things:

- Predict the class of each anchor (foreground/background) and its position.

    self._predictions["rpn_cls_score"] = rpn_cls_score
    self._predictions["rpn_cls_score_reshape"] = rpn_cls_score_reshape
    self._predictions["rpn_cls_prob"] = rpn_cls_prob
    self._predictions["rpn_cls_pred"] = rpn_cls_pred
    self._predictions["rpn_bbox_pred"] = rpn_bbox_pred
    self._predictions["rois"] = rois

- Generate the label information for training the RPN (anchor target layer).

    self._anchor_targets['rpn_labels'] = rpn_labels
    self._anchor_targets['rpn_bbox_targets'] = rpn_bbox_targets
    self._anchor_targets['rpn_bbox_inside_weights'] = rpn_bbox_inside_weights
    self._anchor_targets['rpn_bbox_outside_weights'] = rpn_bbox_outside_weights

- Generate the RoIs for training the classification and regression network (proposal layer) and the corresponding label information (proposal target layer).

    self._proposal_targets['rois'] = rois
    self._proposal_targets['labels'] = tf.to_int32(labels, name="to_int32")
    self._proposal_targets['bbox_targets'] = bbox_targets
    self._proposal_targets['bbox_inside_weights'] = bbox_inside_weights
    self._proposal_targets['bbox_outside_weights'] = bbox_outside_weights

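RoI Pooling

The FC layers need a fixed-size input. The original R-CNN simply resized each input image region to the same size. Faster R-CNN places no requirement on the size of the input image, so after the proposal layer and the proposal target layer we end up with many RoIs of different sizes. Faster R-CNN uses an RoI pooling layer (see the SPPNet paper for the principle) to sample the feature maps of differently sized RoIs to a common size before feeding them to the FC layers. This version of the code does not implement an RoI pooling layer; instead it resizes the feature map of each RoI to a common size and then applies max pooling.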

    # no RoI pooling layer is implemented
    pool5 = self._crop_pool_layer(net_conv, rois, "pool5")

    def _crop_pool_layer(self, bottom, rois, name):
        with tf.variable_scope(name) as scope:
            batch_ids = tf.squeeze(tf.slice(rois, [0, 0], [-1, 1], name="batch_id"), [1])
            # get the normalized bbox coordinates (normalized by the size of the original image)
            bottom_shape = tf.shape(bottom)
            height = (tf.to_float(bottom_shape[1]) - 1.) * np.float32(self._feat_stride[0])
            width = (tf.to_float(bottom_shape[2]) - 1.) * np.float32(self._feat_stride[0])
            x1 = tf.slice(rois, [0, 1], [-1, 1], name="x1") / width
            y1 = tf.slice(rois, [0, 2], [-1, 1], name="y1") / height
            x2 = tf.slice(rois, [0, 3], [-1, 1], name="x2") / width
            y2 = tf.slice(rois, [0, 4], [-1, 1], name="y2") / height
            # Won't be back-propagated to rois anyway, but to save time
            bboxes = tf.stop_gradient(tf.concat([y1, x1, y2, x2], axis=1))
            pre_pool_size = cfg.POOLING_SIZE * 2
            # crop the feature map and resize the crops to the same size
            crops = tf.image.crop_and_resize(bottom, bboxes, tf.to_int32(batch_ids),
                                             [pre_pool_size, pre_pool_size], name="crops")
        # apply a standard max pooling
        return slim.max_pool2d(crops, [2, 2], padding='SAME')

I should say that this feels like a bit of a shortcut to me. How does it differ from standard RoI pooling, or are the two essentially equivalent?

RoIs: in Fast R-CNN these are the output of Selective Search; in Faster R-CNN they are the output of the RPN, a set of rectangular candidate boxes, each of shape 1x5x1x1 (4 coordinates + an index). Note that the coordinates are expressed relative to the original image (the very first input of the network), not relative to the feature map. The flow of the RoI pooling layer and its code (C++) are given below.

- Coordinate mapping: map the RoI coordinates onto the feature map.

    int roi_start_w = round(rois_flat[index_roi + 1] * spatial_scale);  // spatial_scale = 1/16
    int roi_start_h = round(rois_flat[index_roi + 2] * spatial_scale);
    int roi_end_w = round(rois_flat[index_roi + 3] * spatial_scale);
    int roi_end_h = round(rois_flat[index_roi + 4] * spatial_scale);

- Apply max pooling or average pooling over the RoI region on the feature map.

    // Determine the pooling window. Because RoIs differ in size, the window size adapts accordingly.
    float bin_size_h = (float)(roi_height) / (float)(pooled_height);  // 9/7
    float bin_size_w = (float)(roi_width) / (float)(pooled_width);    // 7/7=1
    for (ph = 0; ph < pooled_height; ++ph) {
      for (pw = 0; pw < pooled_width; ++pw) {
        int hstart = (floor((float)(ph) * bin_size_h));
        int wstart = (floor((float)(pw) * bin_size_w));
        int hend = (ceil((float)(ph + 1) * bin_size_h));
        int wend = (ceil((float)(pw + 1) * bin_size_w));
        hstart = fminf(fmaxf(hstart + roi_start_h, 0), data_height);
        hend = fminf(fmaxf(hend + roi_start_h, 0), data_height);
        wstart = fminf(fmaxf(wstart + roi_start_w, 0), data_width);
        wend = fminf(fmaxf(wend + roi_start_w, 0), data_width);
        // max/average pooling: this part of the code is omitted
      }
    }
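To see the C++ logic above end to end, here is a small NumPy port for a single-channel feature map, including the max pooling step that was omitted. It is a sketch under a few assumptions of mine: the function name `roi_max_pool` is hypothetical, roi_height / roi_width are taken as end - start + 1 (clamped to at least 1), and the bin boundaries use exact integer floor/ceil division instead of float arithmetic.

```python
import math
import numpy as np

def roi_max_pool(feature, roi, pooled_h=7, pooled_w=7, spatial_scale=1.0 / 16):
    """feature: (H, W) map; roi: (x1, y1, x2, y2) in original-image coordinates."""
    data_h, data_w = feature.shape
    # 1. Map the RoI onto the feature map (round half up, like C round() for values >= 0)
    start_w, start_h, end_w, end_h = [int(math.floor(v * spatial_scale + 0.5)) for v in roi]
    roi_h = max(end_h - start_h + 1, 1)  # assumed definition of roi_height
    roi_w = max(end_w - start_w + 1, 1)  # assumed definition of roi_width
    out = np.zeros((pooled_h, pooled_w), dtype=feature.dtype)
    # 2. Split the RoI into pooled_h x pooled_w bins and take the max of each bin
    for ph in range(pooled_h):
        for pw in range(pooled_w):
            hstart = (ph * roi_h) // pooled_h             # floor(ph * bin_size_h)
            hend = -((-(ph + 1) * roi_h) // pooled_h)     # ceil((ph + 1) * bin_size_h)
            wstart = (pw * roi_w) // pooled_w
            wend = -((-(pw + 1) * roi_w) // pooled_w)
            # Shift to the RoI origin and clip to the feature map
            hstart = min(max(hstart + start_h, 0), data_h)
            hend = min(max(hend + start_h, 0), data_h)
            wstart = min(max(wstart + start_w, 0), data_w)
            wend = min(max(wend + start_w, 0), data_w)
            if hend > hstart and wend > wstart:           # skip empty bins
                out[ph, pw] = feature[hstart:hend, wstart:wend].max()
    return out

feature = np.arange(20 * 20, dtype=np.float64).reshape(20, 20)
# This RoI maps to a 9 x 7 (height x width) region on the feature map, as in the comments above
pooled = roi_max_pool(feature, roi=(32, 48, 128, 176))
print(pooled.shape)                # (7, 7)
print(pooled[0, 0], pooled[6, 6])  # 82.0 228.0
```

Because the bins here are quantized to whole feature-map cells, while crop_and_resize samples a regular grid with bilinear interpolation before the 2x2 max pooling, the two approaches serve the same purpose but are, as far as I can tell, not numerically equivalent.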

Classification and Regression

Straight to the code:

    def _region_classification(self, fc7, is_training, initializer, initializer_bbox):
        # classification
        cls_score = slim.fully_connected(fc7, self._num_classes,
                                         weights_initializer=initializer,
                                         trainable=is_training,
                                         activation_fn=None, scope='cls_score')
        cls_prob = self._softmax_layer(cls_score, "cls_prob")
        cls_pred = tf.argmax(cls_score, axis=1, name="cls_pred")
        # regression
        bbox_pred = slim.fully_connected(fc7, self._num_classes * 4,
                                         weights_initializer=initializer_bbox,
                                         trainable=is_training,
                                         activation_fn=None, scope='bbox_pred')
        self._predictions["cls_score"] = cls_score
        self._predictions["cls_pred"] = cls_pred
        self._predictions["cls_prob"] = cls_prob
        self._predictions["bbox_pred"] = bbox_pred
        return cls_prob, bbox_pred

Summary: at this point the data preparation and the whole Faster R-CNN network are in place. To train the network, we still need to build the loss functions.

Loss

The loss has 4 parts: RPN class loss, RPN bbox loss, RCNN class loss, and RCNN bbox loss.

    # RPN, class loss
    rpn_cls_score = tf.reshape(self._predictions['rpn_cls_score_reshape'], [-1, 2])
    rpn_label = tf.reshape(self._anchor_targets['rpn_labels'], [-1])
    rpn_select = tf.where(tf.not_equal(rpn_label, -1))
    rpn_cls_score = tf.reshape(tf.gather(rpn_cls_score, rpn_select), [-1, 2])
    rpn_label = tf.reshape(tf.gather(rpn_label, rpn_select), [-1])
    rpn_cross_entropy = tf.reduce_mean(
        tf.nn.sparse_softmax_cross_entropy_with_logits(logits=rpn_cls_score, labels=rpn_label))
    # RPN, bbox loss
    rpn_bbox_pred = self._predictions['rpn_bbox_pred']
    rpn_bbox_targets = self._anchor_targets['rpn_bbox_targets']
    rpn_bbox_inside_weights = self._anchor_targets['rpn_bbox_inside_weights']
    rpn_bbox_outside_weights = self._anchor_targets['rpn_bbox_outside_weights']
    rpn_loss_box = self._smooth_l1_loss(rpn_bbox_pred, rpn_bbox_targets, rpn_bbox_inside_weights,
                                        rpn_bbox_outside_weights, sigma=sigma_rpn, dim=[1, 2, 3])
    # RCNN, class loss
    cls_score = self._predictions["cls_score"]
    label = tf.reshape(self._proposal_targets["labels"], [-1])
    cross_entropy = tf.reduce_mean(
        tf.nn.sparse_softmax_cross_entropy_with_logits(
            logits=tf.reshape(cls_score, [-1, self._num_classes]), labels=label))
    # RCNN, bbox loss
    bbox_pred = self._predictions['bbox_pred']
    bbox_targets = self._proposal_targets['bbox_targets']
    bbox_inside_weights = self._proposal_targets['bbox_inside_weights']
    bbox_outside_weights = self._proposal_targets['bbox_outside_weights']
    loss_box = self._smooth_l1_loss(bbox_pred, bbox_targets, bbox_inside_weights, bbox_outside_weights)

Both classification losses use softmax_cross_entropy; both regression losses use smooth_L1_loss.
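Since _smooth_l1_loss itself is not shown, here is a NumPy sketch of how such a loss is commonly written, which also suggests what the two weight tensors might be for. This is my own reconstruction, not the repository's code: I assume the inside weights mask out entries that should not contribute (for example background samples) and the outside weights rescale the per-element loss.

```python
import numpy as np

def smooth_l1_loss(pred, targets, inside_w, outside_w, sigma=1.0, dim=(1,)):
    """Smooth L1 with inside/outside weights; a sketch under the assumptions stated above."""
    sigma_2 = sigma ** 2
    diff = inside_w * (pred - targets)            # inside weights mask the raw difference
    abs_diff = np.abs(diff)
    quadratic = abs_diff < 1.0 / sigma_2          # small errors use the quadratic branch
    per_elem = np.where(quadratic,
                        0.5 * sigma_2 * diff ** 2,
                        abs_diff - 0.5 / sigma_2)
    weighted = outside_w * per_elem               # outside weights rescale each element
    return weighted.sum(axis=dim).mean()          # sum over box coordinates, mean over samples

pred = np.array([[0.5, 0.0, 0.0, 0.0], [2.0, 0.0, 0.0, 0.0]])
targets = np.zeros_like(pred)
ones = np.ones_like(pred)

loss_all = smooth_l1_loss(pred, targets, ones, ones)
# Pretend the second sample is background: zero inside weights remove it from the loss
inside_fg_only = np.array([[1.0, 1.0, 1.0, 1.0], [0.0, 0.0, 0.0, 0.0]])
loss_fg = smooth_l1_loss(pred, targets, inside_fg_only, ones)
print(loss_all, loss_fg)  # 0.8125 0.0625
```

The quadratic branch gives 0.5 * 0.5^2 = 0.125 for the first sample and the linear branch gives 2 - 0.5 = 1.5 for the second, so the mean over both samples is 0.8125.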

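Model Training

- The paper uses a 4-step alternating training strategy.

- Step 1: initialize the RPN from a model pre-trained on ImageNet, then fine-tune the RPN.

- Step 2: use the RPN trained in step 1 to generate RoIs, then train Fast R-CNN separately. In this step the Fast R-CNN parameters are also initialized from the ImageNet-pre-trained model. The two networks are trained completely separately and share no layers.

- Step 3: use the network parameters of the Fast R-CNN trained in step 2 to re-initialize the shared convolutional layers of the RPN. (Note: this step only fine-tunes the RPN-specific layers; the front part, i.e. the convolutional layers shared with Fast R-CNN, is kept fixed once trained.)

- Step 4: keep the shared layers fixed, and use the bboxes generated by the RPN fine-tuned in step 3 to fine-tune the non-shared layers of Fast R-CNN.

The code discussed in this post uses the approximate joint training strategy instead.

- Idea: add the RPN loss and the Fast R-CNN loss together with some weighting, then train the whole network with SGD.

- **Problem:** the layers after the RPN cannot back-propagate through the bbox coordinates produced by the RPN, i.e. the RoI error with respect to the proposal coordinates does not flow back into the RPN, so this can only be called approximate joint training.

    loss = cross_entropy + loss_box + rpn_cross_entropy + rpn_loss_box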

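Training/Testing Pipeline

This part will be added later.

Questions:

- Why is the image rescaled to M x N?

- The RPN has two convolutional layers, 3x3 and 1x1. Why is it set up this way?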