Andrew Ng Deep Learning assignment2_2

Logistic Regression with a Neural Network mindset

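Next we complete the first programming assignment: building a logistic regression classifier that recognizes cats. The assignment shows how to approach the task with a neural network mindset, so it also hones intuition for deep learning.

We will build the general architecture of a learning algorithm, including:

- Initializing parameters
- Computing the cost function and its gradient
- Using an optimization algorithm (gradient descent)

The three functions above are then gathered, in the right order, into a main model function.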

1 - Packages

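First, get to know the packages used in this assignment and import them:

- numpy is the fundamental package for scientific computing with Python.
- h5py is a common package for interacting with datasets stored in H5 files.
- matplotlib is a well-known plotting library for Python.
- PIL and scipy are used here to test the model with your own picture at the end.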

import numpy as np
import matplotlib.pyplot as plt
import h5py
import scipy
from PIL import Image
from scipy import ndimage
from lr_utils import load_dataset

%matplotlib inline

2 - Overview of the Problem set

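Problem statement: you are given a dataset ("data.h5") containing:

- a training set of m_train images labeled as cat (y = 1) or non-cat (y = 0)
- a test set of m_test images labeled as cat or non-cat
- images of shape (num_px, num_px, 3), where 3 is the number of channels (RGB). Each image is therefore square (height = num_px, width = num_px).

Below we build a simple image-recognition algorithm that can correctly classify pictures as cat or non-cat.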

# Loading the data (cat/non-cat)
train_set_x_orig, train_set_y, test_set_x_orig, test_set_y, classes = load_dataset()
# The "_orig" suffix marks the raw images, which still have to be preprocessed

# Example of a picture
index = 5
plt.imshow(train_set_x_orig[index])
print ("y = " + str(train_set_y[:, index]) + ", it's a '" + classes[np.squeeze(train_set_y[:, index])].decode("utf-8") + "' picture.")

Many software bugs in deep learning come from matrix/vector dimensions that don't fit. If you keep your matrix/vector dimensions straight, you will go a long way toward eliminating many bugs.
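As a small illustration of the dimension issue, the sketch below contrasts a rank-1 numpy array with an explicit column vector. The array names are made up for this example and are not part of the assignment.

```python
import numpy as np

# A rank-1 array has shape (5,): it is neither a row nor a column vector.
a = np.arange(5)
print(a.shape)            # (5,)
print(a.T.shape)          # (5,) -- transposing changes nothing

# Reshaping to an explicit column vector makes the intent unambiguous.
col = a.reshape(5, 1)
print(col.shape)          # (5, 1)
print(col.T.shape)        # (1, 5)

# Cheap guards: assert the shape you expect before using an array.
assert col.shape == (5, 1)
assert np.dot(col.T, col).shape == (1, 1)   # inner product as a 1x1 matrix
assert np.dot(col, col.T).shape == (5, 5)   # outer product
```

Asserting shapes this way is the same habit the graded functions in this assignment rely on.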

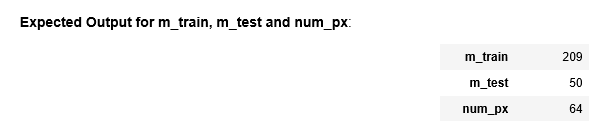

Exercise: find the values of:

- m_train (number of training examples)
- m_test (number of test examples)
- num_px (= height = width of a training image)

### START CODE HERE ### (≈ 3 lines of code)

m_train = train_set_x_orig.shape[0] #预处理训练集的第一维度(行数)

m_test = test_set_x_orig.shape[0] #预处理测试集的第一维度(行数)

num_px = train_set_x_orig.shape[1] #预处理训练集的第二维度(列数)

### END CODE HERE ###

print ("Number of training examples: m_train = " + str(m_train))

print ("Number of testing examples: m_test = " + str(m_test))

print ("Height/Width of each image: num_px = " + str(num_px))

print ("Each image is of size: (" + str(num_px) + ", " + str(num_px) + ", 3)")

print ("train_set_x shape: " + str(train_set_x_orig.shape))

print ("train_set_y shape: " + str(train_set_y.shape))

print ("test_set_x shape: " + str(test_set_x_orig.shape))

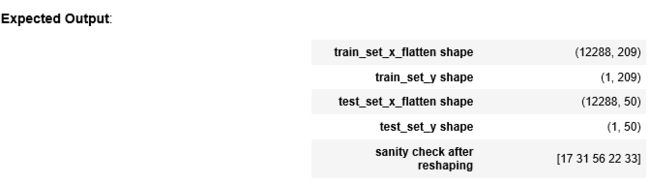

print ("test_set_y shape: " + str(test_set_y.shape))exercise:重塑训练和测试数据集,以便将大小(num_px,num_px,3)的图像展平为单个矢量形状(num_px*num_px*3,1),转置后每列代表一个完整的图片,一共有m_train列。

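Formula: a matrix X of shape (a, b, c, d) is flattened to shape (b*c*d, a) with X_flatten = X.reshape(X.shape[0], -1).T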
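The reshape-then-transpose trick can be checked on a small synthetic array. The sketch below uses made-up data (4 tiny "images") rather than the cat dataset, and also shows a reshape that yields the right shape but the wrong contents.

```python
import numpy as np

# Hypothetical stand-in for the image set: 4 "images" of 2x2 pixels, 3 channels.
m, num_px = 4, 2
X_orig = np.arange(m * num_px * num_px * 3).reshape(m, num_px, num_px, 3)

# Keep the first axis (examples), flatten the rest, then transpose.
X_flatten = X_orig.reshape(X_orig.shape[0], -1).T
print(X_flatten.shape)    # (12, 4)

# Column i holds exactly the pixels of image i.
for i in range(m):
    assert np.array_equal(X_flatten[:, i], X_orig[i].ravel())

# A tempting but wrong alternative: same shape, scrambled contents.
X_wrong = X_orig.reshape(-1, m)
assert X_wrong.shape == X_flatten.shape
assert not np.array_equal(X_wrong, X_flatten)
```

The second check is the reason for flattening per example first: reshaping straight to (features, m) mixes pixels from different images into the same column.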

# Reshape the training and test examples

### START CODE HERE ### (≈ 2 lines of code)
train_set_x_flatten = train_set_x_orig.reshape(train_set_x_orig.shape[0], -1).T
test_set_x_flatten = test_set_x_orig.reshape(test_set_x_orig.shape[0], -1).T
### END CODE HERE ###

print ("train_set_x_flatten shape: " + str(train_set_x_flatten.shape))
print ("train_set_y shape: " + str(train_set_y.shape))
print ("test_set_x_flatten shape: " + str(test_set_x_flatten.shape))
print ("test_set_y shape: " + str(test_set_y.shape))
print ("sanity check after reshaping: " + str(train_set_x_flatten[0:5,0]))

To represent a color image, the red, green and blue channels (RGB) must be specified for each pixel, so a pixel value is actually a vector of three numbers, each ranging from 0 to 255.

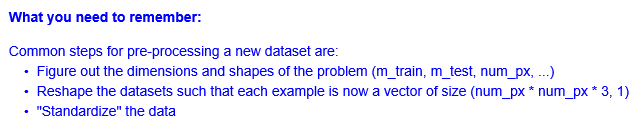

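A common preprocessing step in machine learning is to center and standardize the dataset: subtract the mean of the whole numpy array from each example, then divide each example by the standard deviation of the whole numpy array. For image datasets it is simpler and more convenient, and works almost as well, to divide every row of the dataset by 255 (the maximum value of a pixel channel).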
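The two preprocessing options can be compared side by side. This is a minimal sketch on randomly generated pixel values (not the cat dataset); the variable names are chosen for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical flattened image data: 12 features x 5 examples, uint8 pixels.
X = rng.integers(0, 256, size=(12, 5)).astype(np.uint8)

# Option 1: center and standardize with the mean and std of the whole array.
X_std = (X - X.mean()) / X.std()

# Option 2 (used in this assignment): scale pixel values into [0, 1].
X_scaled = X / 255.

print(X_std.mean(), X_std.std())          # approximately 0 and 1
print(X_scaled.min(), X_scaled.max())     # both within [0, 1]
```

Dividing by 255 needs no statistics from the training set, which is part of why it is convenient for image data.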

train_set_x = train_set_x_flatten/255.
test_set_x = test_set_x_flatten/255.

3 - General Architecture of the learning algorithm

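We build logistic regression using a neural network mindset.

The workflow consists of the following key steps:

- Initialize the parameters of the model
- Learn the parameters of the model by minimizing the cost
- Use the learned parameters to make predictions (on the test set)
- Analyze the results and draw conclusions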

4 - Building the parts of our algorithm

4.1 - Helper functions

Exercise: compute the sigmoid function.

# GRADED FUNCTION: sigmoid

def sigmoid(z):
    """
    Compute the sigmoid of z

    Arguments:
    z -- A scalar or numpy array of any size.

    Return:
    s -- sigmoid(z)
    """

    ### START CODE HERE ### (≈ 1 line of code)
    s = 1 / (1 + np.exp(-z))
    ### END CODE HERE ###

    return s

print ("sigmoid([0, 2]) = " + str(sigmoid(np.array([0,2]))))

4.2 - Initializing parameters

Exercise: implement parameter initialization, setting w to a vector of zeros and b to 0.

# GRADED FUNCTION: initialize_with_zeros

def initialize_with_zeros(dim):
    """
    This function creates a vector of zeros of shape (dim, 1) for w and initializes b to 0.

    Argument:
    dim -- size of the w vector we want (or number of parameters in this case)

    Returns:
    w -- initialized vector of shape (dim, 1)
    b -- initialized scalar (corresponds to the bias)
    """

    ### START CODE HERE ### (≈ 1 line of code)
    w = np.zeros((dim, 1))
    b = 0
    ### END CODE HERE ###

    # An assert statement checks that a condition is True;
    # if the condition is False it raises an AssertionError.
    assert(w.shape == (dim, 1))
    assert(isinstance(b, float) or isinstance(b, int))

    return w, b

dim = 2
w, b = initialize_with_zeros(dim)
print ("w = " + str(w))
print ("b = " + str(b))

For image inputs, w will be of shape (num_px × num_px × 3, 1).

4.3 - Forward and Backward propagation

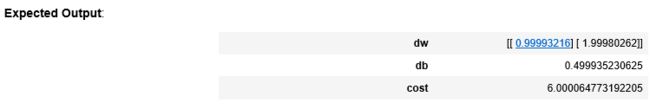

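Exercise: implement a function propagate() that computes the cost function and its gradient.

Formulas:

$$A = \sigma(w^T X + b) = (a^{(1)}, a^{(2)}, \dots, a^{(m)})$$

$$J = -\frac{1}{m}\sum_{i=1}^{m}\left[y^{(i)}\log a^{(i)} + (1-y^{(i)})\log\left(1-a^{(i)}\right)\right]$$

$$\frac{\partial J}{\partial w} = \frac{1}{m}X(A-Y)^T$$

$$\frac{\partial J}{\partial b} = \frac{1}{m}\sum_{i=1}^{m}\left(a^{(i)}-y^{(i)}\right)$$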
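One way to gain confidence in the gradient expressions used by propagate() is a numerical gradient check. The sketch below is not part of the assignment: `cost_and_grads` and `numerical_grads` are hypothetical helper names, the data is random, and the check compares the analytic gradients against central finite differences.

```python
import numpy as np

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

def cost_and_grads(w, b, X, Y):
    """Cost and analytic gradients of logistic regression (mirrors propagate())."""
    m = X.shape[1]
    A = sigmoid(np.dot(w.T, X) + b)
    cost = -np.sum(Y * np.log(A) + (1 - Y) * np.log(1 - A)) / m
    dw = np.dot(X, (A - Y).T) / m
    db = np.sum(A - Y) / m
    return cost, dw, db

def numerical_grads(w, b, X, Y, eps=1e-6):
    """Central finite differences: (J(theta + eps) - J(theta - eps)) / (2 * eps)."""
    dw = np.zeros_like(w, dtype=float)
    for j in range(w.shape[0]):
        step = np.zeros_like(w, dtype=float)
        step[j, 0] = eps
        dw[j, 0] = (cost_and_grads(w + step, b, X, Y)[0]
                    - cost_and_grads(w - step, b, X, Y)[0]) / (2 * eps)
    db = (cost_and_grads(w, b + eps, X, Y)[0]
          - cost_and_grads(w, b - eps, X, Y)[0]) / (2 * eps)
    return dw, db

# Small made-up problem: 3 features, 4 examples.
rng = np.random.default_rng(1)
w = rng.normal(size=(3, 1)) * 0.1
b = 0.2
X = rng.normal(size=(3, 4))
Y = np.array([[1, 0, 1, 0]])

_, dw, db = cost_and_grads(w, b, X, Y)
dw_num, db_num = numerical_grads(w, b, X, Y)
print(np.max(np.abs(dw - dw_num)), abs(db - db_num))   # both should be tiny
```

If the two gradients disagree by more than a small tolerance, the usual suspects are a missing 1/m factor or a transposed matrix product.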

# GRADED FUNCTION: propagate

def propagate(w, b, X, Y):
    """
    Implement the cost function and its gradient for the propagation explained above

    Arguments:
    w -- weights, a numpy array of size (num_px * num_px * 3, 1)
    b -- bias, a scalar
    X -- data of size (num_px * num_px * 3, number of examples)
    Y -- true "label" vector (containing 0 if non-cat, 1 if cat) of size (1, number of examples)

    Return:
    cost -- negative log-likelihood cost for logistic regression
    dw -- gradient of the loss with respect to w, thus same shape as w
    db -- gradient of the loss with respect to b, thus same shape as b

    Tips:
    - Write your code step by step for the propagation. np.log(), np.dot()
    """

    m = X.shape[1]

    # FORWARD PROPAGATION (FROM X TO COST)
    ### START CODE HERE ### (≈ 2 lines of code)
    A = sigmoid(np.dot(w.T, X) + b)                                    # compute activation
    cost = -1 / m * np.sum(Y * np.log(A) + (1 - Y) * np.log(1 - A))    # compute cost
    ### END CODE HERE ###

    # BACKWARD PROPAGATION (TO FIND GRAD)
    ### START CODE HERE ### (≈ 2 lines of code)
    dw = 1 / m * np.dot(X, (A - Y).T)
    db = 1 / m * np.sum(A - Y)
    ### END CODE HERE ###

    assert(dw.shape == w.shape)
    assert(db.dtype == float)
    cost = np.squeeze(cost)
    assert(cost.shape == ())

    grads = {"dw": dw,
             "db": db}

    return grads, cost

w, b, X, Y = np.array([[1],[2]]), 2, np.array([[1,2],[3,4]]), np.array([[1,0]])
grads, cost = propagate(w, b, X, Y)
print ("dw = " + str(grads["dw"]))
print ("db = " + str(grads["db"]))
print ("cost = " + str(cost))

d) Optimization

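Exercise: implement the optimization function, which learns w and b by minimizing the cost J.

Formula: for a parameter $\theta$, the update rule is $\theta = \theta - \alpha \, d\theta$, where $\alpha$ is the learning rate.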
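The gradient descent update can be seen in isolation on a one-dimensional toy problem. The quadratic objective and the learning rate below are arbitrary choices for illustration, not values from the assignment.

```python
# Minimize J(theta) = (theta - 3)^2, whose gradient is dJ/dtheta = 2 * (theta - 3).
theta = 0.0
learning_rate = 0.1   # alpha; an arbitrary choice for this toy problem

for i in range(100):
    dtheta = 2 * (theta - 3)                   # gradient at the current theta
    theta = theta - learning_rate * dtheta     # the update rule

print(theta)   # close to the minimizer 3
```

Each step shrinks the distance to the minimizer by a constant factor here; with a learning rate that is too large the same loop would diverge instead.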

# GRADED FUNCTION: optimize

def optimize(w, b, X, Y, num_iterations, learning_rate, print_cost = False):
    """
    This function optimizes w and b by running a gradient descent algorithm

    Arguments:
    w -- weights, a numpy array of size (num_px * num_px * 3, 1)
    b -- bias, a scalar
    X -- data of shape (num_px * num_px * 3, number of examples)
    Y -- true "label" vector (containing 0 if non-cat, 1 if cat), of shape (1, number of examples)
    num_iterations -- number of iterations of the optimization loop
    learning_rate -- learning rate of the gradient descent update rule
    print_cost -- True to print the loss every 100 steps

    Returns:
    params -- dictionary containing the weights w and bias b
    grads -- dictionary containing the gradients of the weights and bias with respect to the cost function
    costs -- list of all the costs computed during the optimization, this will be used to plot the learning curve.

    Tips:
    You basically need to write down two steps and iterate through them:
        1) Calculate the cost and the gradient for the current parameters. Use propagate().
        2) Update the parameters using gradient descent rule for w and b.
    """

    costs = []

    for i in range(num_iterations):

        # Cost and gradient calculation (≈ 1-4 lines of code)
        ### START CODE HERE ###
        grads, cost = propagate(w, b, X, Y)
        ### END CODE HERE ###

        # Retrieve derivatives from grads
        dw = grads["dw"]
        db = grads["db"]

        # update rule (≈ 2 lines of code)
        ### START CODE HERE ###
        w = w - learning_rate * dw
        b = b - learning_rate * db
        ### END CODE HERE ###

        # Record the cost every 100 iterations (when i is divisible by 100)
        if i % 100 == 0:
            costs.append(cost)

        # Print the cost every 100 iterations
        if print_cost and i % 100 == 0:
            print ("Cost after iteration %i: %f" %(i, cost))

    params = {"w": w,
              "b": b}

    grads = {"dw": dw,
             "db": db}

    return params, grads, costs

params, grads, costs = optimize(w, b, X, Y, num_iterations= 100, learning_rate = 0.009, print_cost = False)

print ("w = " + str(params["w"]))
print ("b = " + str(params["b"]))
print ("dw = " + str(grads["dw"]))
print ("db = " + str(grads["db"]))
print(costs)

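Exercise: with w and b optimized, we use them to implement the hypothesis (prediction) function.

It takes two steps:

- Compute $\hat{Y} = A = \sigma(w^T X + b)$
- Convert each entry of A to 0 (if the activation is <= 0.5) or 1 (if the activation is > 0.5)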
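The thresholding step can also be written without a Python loop. The sketch below is a hypothetical variant (`predict_vectorized` is not a name from the assignment) that applies the same 0.5 threshold to the whole activation matrix at once, using made-up parameters and inputs.

```python
import numpy as np

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

def predict_vectorized(w, b, X):
    """Hypothetical loop-free variant: step 1 computes A, step 2 thresholds at 0.5."""
    A = sigmoid(np.dot(w.T, X) + b)      # shape (1, m)
    return (A > 0.5).astype(float)       # 1.0 where A > 0.5, else 0.0

# Made-up parameters and inputs: 2 features, 3 examples.
w = np.array([[0.5], [-1.0]])
b = 0.0
X = np.array([[2.0, 0.0, -1.0],
              [0.0, 1.0,  1.0]])
print(predict_vectorized(w, b, X))       # [[1. 0. 0.]]
```

Here the pre-activations are 1.0, -1.0 and -1.5, so only the first example crosses the threshold.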

# GRADED FUNCTION: predict

def predict(w, b, X):
    '''
    Predict whether the label is 0 or 1 using learned logistic regression parameters (w, b)

    Arguments:
    w -- weights, a numpy array of size (num_px * num_px * 3, 1)
    b -- bias, a scalar
    X -- data of size (num_px * num_px * 3, number of examples)

    Returns:
    Y_prediction -- a numpy array (vector) containing all predictions (0/1) for the examples in X
    '''

    m = X.shape[1]
    Y_prediction = np.zeros((1,m))
    w = w.reshape(X.shape[0], 1)

    # Compute vector "A" predicting the probabilities of a cat being present in the picture
    ### START CODE HERE ### (≈ 1 line of code)
    A = sigmoid(np.dot(w.T, X) + b)
    ### END CODE HERE ###

    for i in range(A.shape[1]):

        # Convert probabilities A[0,i] to actual predictions p[0,i]
        ### START CODE HERE ### (≈ 4 lines of code)
        if A[0, i] <= 0.5:
            Y_prediction[0, i] = 0
        else:
            Y_prediction[0, i] = 1
        ### END CODE HERE ###

    assert(Y_prediction.shape == (1, m))

    return Y_prediction

print ("predictions = " + str(predict(w, b, X)))
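To show how initialization, propagation, optimization and prediction fit together in a single main function, here is a compact, self-contained sketch. It is an assumption about how the pieces could be combined, not the assignment's graded solution: `model_sketch` is a hypothetical name, the hyperparameters are arbitrary, and the data is synthetic rather than the cat dataset.

```python
import numpy as np

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

def model_sketch(X_train, Y_train, X_test, num_iterations=2000, learning_rate=0.5):
    """Hypothetical main function: initialize, optimize, then predict."""
    n, m = X_train.shape
    w, b = np.zeros((n, 1)), 0.0                      # initialize parameters

    for _ in range(num_iterations):                   # gradient descent loop
        A = sigmoid(np.dot(w.T, X_train) + b)         # forward propagation
        dw = np.dot(X_train, (A - Y_train).T) / m     # backward propagation
        db = np.sum(A - Y_train) / m
        w = w - learning_rate * dw                    # parameter update
        b = b - learning_rate * db

    def predict(X):
        # Threshold the activations at 0.5 to get 0/1 labels.
        return (sigmoid(np.dot(w.T, X) + b) > 0.5).astype(float)

    return predict(X_train), predict(X_test), w, b

# Synthetic, linearly separable data: the label is 1 when x0 + x1 > 0.
rng = np.random.default_rng(0)
X_train = rng.normal(size=(2, 200))
Y_train = (X_train.sum(axis=0, keepdims=True) > 0).astype(float)
X_test = rng.normal(size=(2, 50))
Y_test = (X_test.sum(axis=0, keepdims=True) > 0).astype(float)

Y_hat_train, Y_hat_test, w, b = model_sketch(X_train, Y_train, X_test)
train_acc = 100 - np.mean(np.abs(Y_hat_train - Y_train)) * 100
test_acc = 100 - np.mean(np.abs(Y_hat_test - Y_test)) * 100
print("train accuracy: {} %".format(train_acc))
print("test accuracy: {} %".format(test_acc))
```

On the real images the inputs would be train_set_x and test_set_x with their label vectors, and the iteration count and learning rate would need tuning.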