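BeautifulSoup and Scraping Douban Reviews

Understanding BS4

BS4 parses an HTML document into a tree of Python objects, each of which is one of four types:

Tag: a tag (element) object

NavigableString: the text content inside a tag

BeautifulSoup: the document object as a whole

Comment: a special kind of NavigableString that holds an HTML comment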
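To make the four types concrete, here is a minimal sketch that parses a one-line document and prints the type of each piece. The markup is a hypothetical sample, not the floating.html file used in the rest of this post.

```python
from bs4 import BeautifulSoup
from bs4.element import Comment, NavigableString, Tag

# Hypothetical sample markup, not the floating.html used below.
html = '<p id="greeting">hello<!-- a note --></p>'
soup = BeautifulSoup(html, 'html.parser')

p = soup.p
text, note = p.contents  # the text node, then the comment node

print(type(soup).__name__)   # BeautifulSoup
print(type(p).__name__)      # Tag
print(type(text).__name__)   # NavigableString
print(type(note).__name__)   # Comment
print(isinstance(note, NavigableString))  # True: Comment subclasses NavigableString
```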

floating.html:

Title

cooffee1

文章标题

hello

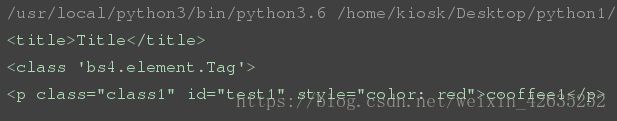

Getting a tag

from bs4 import BeautifulSoup

# Build the soup object
soup = BeautifulSoup(open('floating.html'), 'html.parser')
# Accessing a tag by name returns the first matching tag
print(soup.title)
print(type(soup.title))
print(soup.p)

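Getting a tag's attributes

- By subscript: index the tag with the attribute name, as you would a dictionary.

href = a['href']

- Through the attrs property: attrs is a dictionary of all the tag's attributes.

href = a.attrs['href']

from bs4 import BeautifulSoup

soup = BeautifulSoup(open('floating.html'), 'html.parser')
# All attributes of the first <p> tag, as a dictionary
print(soup.p.attrs)
# Get the value of a specific attribute
print(soup.p['id'])
print(soup.p['class'])
print(soup.p['style'])
# Modify an attribute
soup.p['id'] = 'mychangeid'
print(soup.p['id'])
print(type(soup.p))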

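Getting a tag's text content

The string, strings and stripped_strings properties and the get_text method:

- string: returns the single string directly inside a tag. If the tag has more than one child (for example, several strings or nested tags), string is None.

- strings: yields every string among the tag's descendants. It is a generator.

- stripped_strings: like strings, but strips surrounding whitespace and skips whitespace-only strings. It is a generator.

- get_text: concatenates every descendant string and returns one ordinary string rather than a list.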

from bs4 import BeautifulSoup

soup = BeautifulSoup(open('floating.html'), 'html.parser')
# Get the text content
print(soup.title.text, type(soup.title.text))
print(soup.title.string, type(soup.title.string))
# name is the tag's name, here 'title'
print(soup.title.name)
print(soup.head.title.string)
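The differences between string, strings, stripped_strings and get_text are easiest to see on a tag with more than one child. The following is a small self-contained sketch; the markup is made up for illustration and is not floating.html.

```python
from bs4 import BeautifulSoup

# Hypothetical markup: <div> has two children, <b> has exactly one string.
html = '<div><b>bold</b>\n  plain text </div>'
soup = BeautifulSoup(html, 'html.parser')
div = soup.div

print(div.b.string)                # bold
print(div.string)                  # None, because <div> has more than one child
print(list(div.strings))           # every descendant string, whitespace kept
print(list(div.stripped_strings))  # ['bold', 'plain text']
print(repr(div.get_text()))        # one str, whitespace kept
```

When a tag may contain nested markup, get_text or stripped_strings is usually the safer choice, since string silently returns None.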

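Object-oriented matching (find and find_all)

Using find_all:

- The first argument is the tag name. To filter on attributes as well, pass each attribute name and its value as a keyword argument, or put all the attributes and their values in a dictionary and pass it as the attrs argument.

- To cap the number of results, pass the limit argument.

The difference between find and find_all:

- find: returns as soon as the first matching tag is found, so it returns a single element (or None if nothing matches).

- find_all: returns every matching tag, as a list.

Filter conditions for find and find_all:

- Keyword arguments: the attribute name is the keyword, and the attribute value is its value.

- attrs argument: put the attribute conditions in a dictionary and pass it as attrs.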
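The filters described above can be sketched on a small inline document. The markup and the id and class values are hypothetical, chosen only to show keyword arguments, attrs, limit, and the find/find_all difference side by side.

```python
from bs4 import BeautifulSoup

# Hypothetical markup for illustration only.
html = '''
<p id="test1" class="class1">first</p>
<p id="test2" class="class1">second</p>
<p id="test3" class="class2">third</p>
'''
soup = BeautifulSoup(html, 'html.parser')

# Keyword-argument filter; class is a Python keyword, so bs4 spells it class_
by_keyword = soup.find_all('p', class_='class1')
# The same filter expressed as an attrs dictionary
by_attrs = soup.find_all('p', attrs={'class': 'class1'})
# limit stops the search after the given number of matches
limited = soup.find_all('p', limit=2)
# find returns only the first match, or None when nothing matches
first = soup.find('p', id='test2')
missing = soup.find('p', id='nope')

print([p['id'] for p in by_keyword])  # ['test1', 'test2']
print([p['id'] for p in by_attrs])    # ['test1', 'test2']
print(len(limited))                   # 2
print(first.get_text(), missing)      # second None
```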

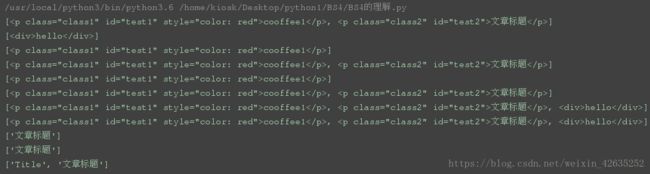

from bs4 import BeautifulSoup
import re

soup = BeautifulSoup(open('floating.html'), 'html.parser')
# Find every tag with the given name
res1 = soup.find_all('p')
print(res1)
# Find tags by name with a regular expression (names that start with 'd')
res2 = soup.find_all(re.compile(r'^d+'))
print(res2)
# # Compiling a regular expression once speeds up repeated searches
# pattern = r'd.+'
# pattern = re.compile(pattern)
# print(re.findall(pattern, 'dog hello d'))
# Narrow the search by attribute
print(soup.find_all('p', id='test1'))
print(soup.find_all('p', id=re.compile(r'test\d{1}')))
print(soup.find_all('p', class_='class1'))
print(soup.find_all('p', class_=re.compile(r'class\d{1}')))
# Find several kinds of tag at once
print(soup.find_all(['p', 'div']))
print(soup.find_all([re.compile('^d'), re.compile('p')]))
# Match on text content (newer bs4 releases name this argument string)
print(soup.find_all(text='文章标题'))
print(soup.find_all(text=re.compile('标题')))
print(soup.find_all(text=[re.compile('标题'), 'Title']))

CSS matching

- Select by tag name:

p{ background-color: pink; }

- Select by class name: put a dot before the class name.

.line{ background-color: pink; }

- Select by id: put a # before the id.

#box{ background-color: pink; }

- Select descendants: separate the ancestor and the descendant with a space.

#box p{ background-color: pink; }

- Select direct children: put a > between the parent and the child.

#box > p{ background-color: pink; }

- Select by attribute: write the tag name, then the attribute name and value in square brackets.

input[name='username']{ background-color: pink; }

- To filter by tag name as well as by class or id, put the tag name in front of the class or id.

div#line{ background-color: pink; }

div.line{ background-color: pink; }

To use CSS selectors in BeautifulSoup, call soup.select() and pass it the selector as a string.
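As a rough illustration of how these selector forms behave with select(), the sketch below runs several of them against a small inline document. The markup is hypothetical; select_one is the single-result counterpart of select.

```python
from bs4 import BeautifulSoup

# Hypothetical markup for illustration only.
html = '''
<div id="box">
  <p class="line">direct child</p>
  <section><p>nested descendant</p></section>
  <input name="username">
</div>
'''
soup = BeautifulSoup(html, 'html.parser')

descendants = soup.select('#box p')      # every <p> anywhere inside #box
children = soup.select('#box > p')       # only <p> tags directly under #box
by_class = soup.select('p.line')         # tag name combined with a class
by_attr = soup.select("input[name='username']")
first_p = soup.select_one('#box p')      # first match only, or None

print(len(descendants), len(children))   # 2 1
print(by_class[0].get_text())            # direct child
print(by_attr[0]['name'])                # username
print(first_p.get_text())                # direct child
```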

from bs4 import BeautifulSoup

soup = BeautifulSoup(open('floating.html'), 'html.parser')
# Common CSS selectors: tag selector (div), class selector (.class1), id selector (#idname), attribute selector (p[type="text"])
# Tag selector (div)
res1 = soup.select('p')
print(res1)
# Class selector (.class1)
res2 = soup.select('.class2')
print(res2)
# id selector (#idname)
res3 = soup.select("#test1")
print(res3)
# Attribute selector (p[type="text"])
print(soup.select("p[id='test1']"))
# p[class] matches every <p> that has a class attribute; the attribute name is not quoted
print(soup.select("p[class]"))

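contents and children

Both return the direct children of a tag, strings included. The difference is that contents returns a list, whereas children returns an iterator.

from bs4 import BeautifulSoup

soup = BeautifulSoup(open('floating.html'), 'html.parser')
# select() returns a list of tags, and a list has no contents or children,
# so take a single tag with select_one()
res1 = soup.select_one('p')
print(type(res1.contents))
print(type(res1.children))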

Scraping Douban movie reviews and analysing them (word cloud)

Getting the ids and titles of the movies now showing on Douban

import requests
from bs4 import BeautifulSoup

url = "https://movie.douban.com/cinema/nowplaying/xian/"
# Fetch the page
response = requests.get(url)
content = response.text
# Parse the page to extract each movie's id and title
soup = BeautifulSoup(content, 'html5lib')
# First find the li tag that holds each movie's information
nowplaying_movie_list = soup.find_all('li', class_='list-item')
# Holds the information for every movie
movies_info = []
# Walk through the li tags and pull out the fields we need
for item in nowplaying_movie_list:
    nowplaying_movie_dict = {}
    # Read the title and id from the tag's attributes;
    # item['data-title'] is the value of the li tag's data-title attribute
    nowplaying_movie_dict['title'] = item['data-title']
    nowplaying_movie_dict['id'] = item['id']
    nowplaying_movie_dict['actors'] = item['data-actors']
    nowplaying_movie_dict['director'] = item['data-director']
    # Append the dictionary ({'title': ..., 'id': ..., ...}) to the list
    movies_info.append(nowplaying_movie_dict)
for items in movies_info:
    print(items)

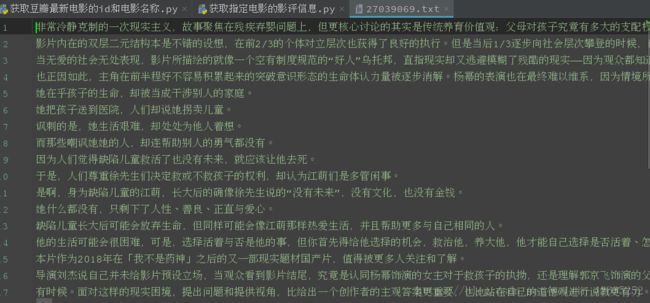

Getting the reviews for a given movie

import threading
import requests
from bs4 import BeautifulSoup

# Scrape one page of reviews
def getOnePageComment(id, pageNum):
    # Each page holds 20 reviews, so the page number determines start
    start = (pageNum - 1) * 20
    url = "https://movie.douban.com/subject/%s/comments?start=" \
          "%s&limit=20&sort=new_score&status=P" % (id, start)
    # Download the review page
    content = requests.get(url).text
    # Parse the page with bs4
    soup = BeautifulSoup(content, 'html5lib')
    # Inspecting the page shows that every review sits in a span tag with class short
    commentsList = soup.find_all('span', class_='short')
    pageComments = ""
    # Walk through the span tags and collect each review's text in pageComments
    for commentTag in commentsList:
        pageComments += commentTag.text
    print("%s page" % (pageNum))
    global comments
    comments += pageComments

# Scrape the first 10 pages of reviews for one movie
id = '27039069'
comments = ''
threads = []
# One loop iteration per page; change the range to fetch more or fewer pages
for pageNum in range(1, 11):
    # Fetch each page in its own thread
    t = threading.Thread(target=getOnePageComment, args=(id, pageNum))
    threads.append(t)
    t.start()
# Wait for every worker thread to finish before the main thread continues
_ = [thread.join() for thread in threads]
print("Finished")
with open("%s.txt" % (id), 'w') as f:
    f.write(comments)

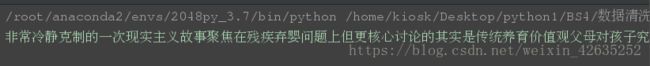
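The threaded version above appends each page to a shared global in whatever order the threads happen to finish. As an alternative sketch (not the approach used in this post), concurrent.futures from the standard library can run the same per-page work and hand the results back in page order. Here fetch_page is a hypothetical stand-in for the real download and parsing step, so the example runs without network access; page_start and fetch_all are likewise names made up for this sketch.

```python
from concurrent.futures import ThreadPoolExecutor

def page_start(page_num, page_size=20):
    """Offset of the first review on a 1-based page."""
    return (page_num - 1) * page_size

def fetch_page(page_num):
    # Hypothetical stand-in for the real download and parsing step:
    # it only reports which offset it would request.
    return "start=%d;" % page_start(page_num)

def fetch_all(pages, fetch=fetch_page, workers=5):
    # map() yields results in the order of `pages`, whichever thread finishes first
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(fetch, pages))

results = fetch_all(range(1, 4))
print(results)  # ['start=0;', 'start=20;', 'start=40;']
comments = ''.join(results)
```

Returning each page's text from the worker, instead of mutating a global, also removes the need for the global statement.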

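Data cleaning

The complete analysis process:

Data acquisition: fetch the data with a crawler (urllib or requests to fetch the page content, plus re or bs4 to parse it)

Data cleaning: process the text into a consistent format

import re

# 1. Clean the scraped reviews: drop commas, full stops, emoji and other symbols, keeping only Chinese or English text
with open('27039069.txt') as f:
    comments = f.read()
pattern = re.compile(r'([\u4e00-\u9fa5]+|[a-zA-Z]+)')
# Extract every run of Chinese characters or English letters with the regular expression
deal_comments = re.findall(pattern, comments)
newComments = ''
for item in deal_comments:
    newComments += item
print(newComments)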
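To see what the cleaning pattern keeps and drops, here is a small sketch that applies the same regular expression to a made-up review string (the sample text is hypothetical).

```python
import re

# Same pattern as above: runs of CJK characters or runs of ASCII letters
pattern = re.compile(r'([\u4e00-\u9fa5]+|[a-zA-Z]+)')

sample = '太好看了!!! 10/10, would watch again :)'
pieces = pattern.findall(sample)
print(pieces)            # ['太好看了', 'would', 'watch', 'again']
print(''.join(pieces))   # 太好看了wouldwatchagain
print(' '.join(pieces))  # 太好看了 would watch again
```

Joining with an empty string, as the cleaning code does, runs adjacent English words together. If the reviews contain much English, joining with a space may segment better later on.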

Word cloud analysis of the movie reviews

import jieba
import wordcloud
import numpy as np
# Python 2 used the Image package for images; on Python 3 do not install Image, install pillow instead
from PIL import Image

# 2). If '词组1' or '词组2' is segmented incorrectly, tell jieba to keep it as one word
# jieba.suggest_freq(('词组1'),True)
# jieba.suggest_freq(('词组2'),True)
# Or load a user dictionary file that lists every such phrase
#jieba.load_userdict('./doc/newWord')
# Segment the Chinese text; lcut returns a list, cut returns a generator
result = jieba.lcut(open('27039069.txt').read())
# Open the mask image
imageobj = Image.open('img/mao.jpg')
cloud_mask = np.array(imageobj)
# Draw the word cloud
wc = wordcloud.WordCloud(
    mask=cloud_mask,
    background_color='black',
    font_path='./font/msyh.ttf',  # a font with Chinese glyphs, needed for Chinese text
    min_font_size=5,  # smallest font size in the image
    max_font_size=50,  # largest font size in the image
    width=500,  # width of the generated image
)
wc.generate(','.join(result))
wc.to_file('./img/douban.png')
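A word cloud draws frequent words larger, so it can help to look at the word counts before rendering. The sketch below counts tokens with the standard library only; the token list is a hypothetical stand-in for the output of jieba.lcut(), and the stop-word set is an assumption made for illustration.

```python
from collections import Counter

# Hypothetical token list standing in for the output of jieba.lcut()
tokens = ['电影', '好看', '的', '电影', '剧情', '好看', '电影', '了']
# Hypothetical stop words: very common words that carry little meaning
stop_words = {'的', '了'}

# Keep tokens that are not stop words and are longer than one character
counts = Counter(t for t in tokens if t not in stop_words and len(t) > 1)
print(counts.most_common(2))  # [('电影', 3), ('好看', 2)]
```

A dictionary of counts like this can also be passed to WordCloud.generate_from_frequencies in place of generate, which skips the library's own tokenisation.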

Complete code:

Douban review analysis:

# 1). Fetch the first 10 pages of reviews for every movie now showing on Douban
# 2). Clean the data
# 3). Turn each movie's reviews into a word cloud and save it as a png named after the movie: <movie title>.png
import threading
import re
import numpy as np
import jieba
import requests
from PIL import Image
from bs4 import BeautifulSoup
import wordcloud

def get_info_movie(url):
    user_agent = 'Mozilla/5.0 (iPad; CPU OS 5_0 like Mac OS X) AppleWebKit/534.46 (KHTML, like Gecko) Version/5.1 Mobile/9A334 Safari/7534.48.3'
    headers = {
        'User-Agent': user_agent
    }
    proxy = {'https': '113.200.56.13:8010',
             'http': '112.95.188.237:9797'}
    response = requests.get(url, proxies=proxy, headers=headers)
    content = response.text
    soup = BeautifulSoup(content, 'html5lib')
    nowplaying_movie_list = soup.find_all('li', class_='list-item')
    movies_info = []
    for item in nowplaying_movie_list:
        nowplaying_movie_dict = {}
        nowplaying_movie_dict['title'] = item['data-title']
        nowplaying_movie_dict['id'] = item['id']
        nowplaying_movie_dict['actors'] = item['data-actors']
        nowplaying_movie_dict['director'] = item['data-director']
        movies_info.append(nowplaying_movie_dict)
    return movies_info

def getOnePageComment(id, pageNum, commentLi):
    start = (pageNum - 1) * 20
    user_agent = 'Mozilla/5.0 (iPad; CPU OS 5_0 like Mac OS X) AppleWebKit/534.46 (KHTML, like Gecko) Version/5.1 Mobile/9A334 Safari/7534.48.3'
    headers = {
        'User-Agent': user_agent
    }
    proxy = {'https': '113.200.56.13:8010',
             'http': '112.95.188.237:9797'}
    url = "https://movie.douban.com/subject/%s/comments?start=" \
          "%s&limit=20&sort=new_score&status=P" % (id, start)
    content = requests.get(url, proxies=proxy, headers=headers).text
    soup = BeautifulSoup(content, 'html5lib')
    commentsList = soup.find_all('span', class_='short')
    pageComments = ""
    for commentTag in commentsList:
        pageComments += commentTag.text
    # Each thread appends its page to the shared list instead of using a global
    commentLi.append(pageComments)

def getMorePageComment(id, title):
    threads = []
    commentLi = []
    for pageNum in range(1, 11):
        t = threading.Thread(target=getOnePageComment, args=(id, pageNum, commentLi))
        threads.append(t)
        t.start()
    _ = [thread.join() for thread in threads]
    with open("./doc/%s.txt" % (title), 'w') as f:
        for comment in commentLi:
            f.write(comment)

def Clear_data(title):
    with open('./doc/%s.txt' % (title)) as f:
        comments = f.read()
    pattern = re.compile(r'([\u4e00-\u9fa5]+|[a-zA-Z]+)')
    deal_comments = re.findall(pattern, comments)
    newComments = ''
    for item in deal_comments:
        newComments += item
    # Overwrite the raw file with the cleaned text
    with open('./doc/%s.txt' % (title), 'w') as f:
        f.write(newComments)

def Word_Analysis(title):
    result = jieba.lcut(open('./doc/%s.txt' % (title)).read())
    imageobj = Image.open('img/glass.jpg')
    cloud_mask = np.array(imageobj)
    wc = wordcloud.WordCloud(
        mask=cloud_mask,
        background_color='snow',
        font_path='./font/msyh.ttf',
        min_font_size=5,
        max_font_size=50,
        width=500,
    )
    wc.generate(','.join(result))
    wc.to_file('./img/%s.png' % (title))

url = "https://movie.douban.com/cinema/nowplaying/xian/"
movie_info = get_info_movie(url)
for movie_dict in movie_info:
    getMorePageComment(movie_dict['id'], movie_dict['title'])
    Clear_data(movie_dict['title'])
    try:
        Word_Analysis(movie_dict['title'])
    except ValueError as e:
        # Raised, for example, when a movie has no review text to draw
        print(e)

Scraping imooc (慕课网)

Requirement: scrape the names and descriptions of all Python courses on imooc, then analyse and present them as a word cloud.

URL: https://www.imooc.com/search/course?words=python

import jieba
import re
import requests
import random
from PIL import Image
from bs4 import BeautifulSoup
import wordcloud
import numpy as np

def get_one_page(url):
    user_agent = 'Mozilla/5.0 (X11; Linux x86_64; rv:38.0) Gecko/20100101 Firefox/38.0'
    headers = {
        'User-Agent': user_agent
    }
    # Pool of proxies; one is picked at random for each request
    proxy = [{'https': '183.129.207.80:21776',
              'http': '123.117.32.229:9000'
              },
             {'https': '114.116.10.21:3128',
              'http': '2110.40.13.5:80'
              },
             {'https': '58.251.228.104:9797',
              'http': '222.221.11.119:3128'
              }
             ]
    response = requests.get(url, proxies=random.choice(proxy), headers=headers)
    content = response.text
    soup = BeautifulSoup(content, 'html5lib')
    python_title_list = soup.find_all('a', class_='course-detail-title')
    title_string = ''
    for title in python_title_list:
        title_string += title.text.strip()
    return title_string

def save_text(title_string):
    with open('title.txt', 'w') as f:
        f.write(title_string)

def Clear_data():
    with open('title.txt') as f:
        comments = f.read()
    pattern = re.compile(r'([\u4e00-\u9fa5]+|[a-zA-Z]+)')
    deal_comments = re.findall(pattern, comments)
    newComments = ''
    for item in deal_comments:
        newComments += item
    with open('title.txt', 'w') as f:
        f.write(newComments)

def word_Analysis():
    result = jieba.lcut(open('title.txt').read())
    imageobj = Image.open('img/glass.jpg')
    cloud_mask = np.array(imageobj)
    wc = wordcloud.WordCloud(mask=cloud_mask, background_color='snow', font_path='./font/msyh.ttf', min_font_size=5,
                             max_font_size=50, width=500, )
    wc.generate(','.join(result))
    wc.to_file('./img/python.png')

if __name__ == '__main__':
    title_name_string = ''
    # Fetch result pages 1 and 2
    for i in range(1, 3):
        url = 'https://www.imooc.com/search/course?words=python&page=%s' % (i)
        title_name_string += get_one_page(url)
    save_text(title_name_string)
    Clear_data()
    word_Analysis()

Scraping today's top 10 Baidu hot news items

import random
import requests
from bs4 import BeautifulSoup

url = 'http://top.baidu.com/buzz?b=341&fr=topbuzz_b341'
user_agent = 'Mozilla/5.0 (X11; Linux x86_64; rv:38.0) Gecko/20100101 Firefox/38.0'
headers = {
    'User-Agent': user_agent
}
# Pool of proxies; one is picked at random for the request
proxy = [{'https': '183.129.207.80:21776',
          'http': '123.117.32.229:9000'
          },
         {'https': '114.116.10.21:3128',
          'http': '2110.40.13.5:80'
          },
         {'https': '58.251.228.104:9797',
          'http': '222.221.11.119:3128'
          }
         ]
response = requests.get(url, proxies=random.choice(proxy), headers=headers)
# Set the encoding used to decode the response
response.encoding = 'gb18030'
content = response.text
soup = BeautifulSoup(content, 'html5lib')
# Match any tag whose class is list-title and keep the first 10
new_title_list = soup.find_all(class_='list-title')[:10]
for items in new_title_list:
    print(items.text)