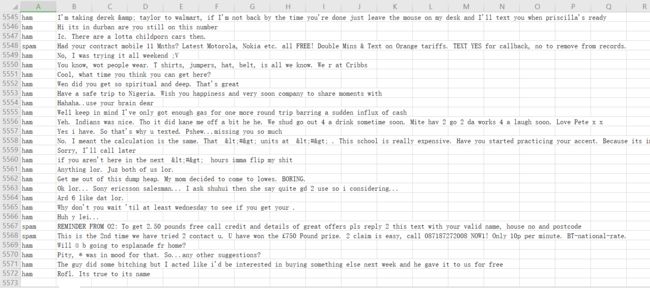

1. Reading the data
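The raw file is read with Python's `csv` module using a tab delimiter. Below is a minimal sketch of that step. It assumes each line has the form `label<TAB>message`. The sample rows and the helper name `read_labeled_messages` are hypothetical.

```python
import csv
import io

# Hypothetical sample in the assumed "label<TAB>message" layout
SAMPLE = 'ham\tSee you at 5 pm\nspam\t"Free" prize, reply WIN now\n'


def read_labeled_messages(fh):
    """Return (labels, messages) from a tab-separated label/message stream."""
    labels, messages = [], []
    # QUOTE_NONE keeps '"' as an ordinary character instead of a field quote
    for row in csv.reader(fh, delimiter='\t', quoting=csv.QUOTE_NONE):
        if len(row) < 2:  # skip blank or malformed lines
            continue
        labels.append(row[0])
        messages.append(row[1])
    return labels, messages


labels, messages = read_labeled_messages(io.StringIO(SAMPLE))
print(labels)       # ['ham', 'spam']
print(messages[1])  # "Free" prize, reply WIN now
```

`quoting=csv.QUOTE_NONE` matters for free-text messages. With the default setting, a message that starts with a double quote is parsed as a quoted field. An unbalanced quote can then swallow the following lines.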

2. Data preprocessing

```python
import csv
import re

import nltk
import pandas as pd
from nltk.corpus import stopwords
from nltk.stem import WordNetLemmatizer

# Needs the NLTK data for the sentence/word tokenizers, 'stopwords', 'wordnet'
# and the POS tagger (download once with nltk.download; names vary by version).


def getLb(tag):
    """Map a Penn Treebank POS tag to the WordNet POS used by the lemmatizer."""
    if tag.startswith("J"):
        return nltk.corpus.wordnet.ADJ
    elif tag.startswith("V"):
        return nltk.corpus.wordnet.VERB
    elif tag.startswith("N"):
        return nltk.corpus.wordnet.NOUN
    elif tag.startswith("R"):
        return nltk.corpus.wordnet.ADV
    return ""


STOPS = set(stopwords.words('english'))  # a set makes membership tests fast
LEMMATIZER = WordNetLemmatizer()


def preprocessing(data):
    punctuation = '!,;:?"\''
    # Remove punctuation and convert to lower case
    data = re.sub(r'[{}]+'.format(punctuation), '', data).strip().lower()
    tokens = []
    for sentence in nltk.sent_tokenize(data, "english"):  # split the text into sentences
        tokens.extend(nltk.word_tokenize(sentence))       # split each sentence into words
    tokens = [t for t in tokens if t not in STOPS]        # remove stopwords
    words = []
    for word, tag in nltk.pos_tag(tokens):                # part-of-speech tagging
        pos = getLb(tag)
        # Lemmatize with the WordNet POS when one is known, else keep the word as is
        words.append(LEMMATIZER.lemmatize(word, pos) if pos else word)
    return ' '.join(words)


if __name__ == "__main__":
    sms_data = []
    sms_label = []
    # Each line: label<TAB>message; QUOTE_NONE keeps '"' as literal text
    with open('./_5', 'r', encoding='utf-8') as sms:
        csv_reader = csv.reader(sms, delimiter='\t', quoting=csv.QUOTE_NONE)
        for line in csv_reader:
            sms_label.append(line[0])
            sms_data.append(preprocessing(line[1]))
    # 类别 = label, 特征 = preprocessed text (the features)
    result = pd.DataFrame({"类别": sms_label, "特征": sms_data})
    result.to_csv("./result.csv")
```
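The punctuation and stopword steps can be seen in isolation without downloading any NLTK data. The reduced sketch below reuses the same punctuation regex. As an assumption for illustration, it splits on whitespace instead of calling `nltk.word_tokenize`. It also uses a tiny hand-picked stopword set in place of NLTK's English list. POS tagging and lemmatization are left out.

```python
import re

PUNCTUATION = '!,;:?"\''  # same characters as in preprocessing()
# Toy stopword set for illustration only (NLTK's English list is much longer)
TOY_STOPWORDS = {"a", "have", "now", "the", "to", "you"}


def clean_tokens(text):
    """Strip punctuation, lower-case, split on whitespace and drop stopwords."""
    text = re.sub(r'[{}]+'.format(PUNCTUATION), '', text).strip().lower()
    return [word for word in text.split() if word not in TOY_STOPWORDS]


print(clean_tokens('WINNER!! You have won a prize, call now?'))
# ['winner', 'won', 'prize', 'call']
```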

3. Data splitting: training and test sets

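```python
from sklearn.model_selection import train_test_split

# General form: data = features, target = labels.
# stratify=target keeps the class proportions the same in both splits.
x_train, x_test, y_train, y_test = train_test_split(data, target, test_size=0.2, random_state=0, stratify=target)
```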
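To see what `stratify` does, here is a small sketch on hypothetical toy data: 50 messages, 20% of them spam. With stratification, both splits keep exactly that spam ratio.

```python
from collections import Counter

from sklearn.model_selection import train_test_split

# Hypothetical toy data: 50 messages, 10 of them spam (20%)
texts = [f"message {i}" for i in range(50)]
labels = ["spam"] * 10 + ["ham"] * 40

x_train, x_test, y_train, y_test = train_test_split(
    texts, labels, test_size=0.2, random_state=0, stratify=labels)

print(Counter(y_train))  # Counter({'ham': 32, 'spam': 8})
print(Counter(y_test))   # Counter({'ham': 8, 'spam': 2})
```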

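```python
import pandas as pd
from sklearn.model_selection import train_test_split

data = pd.read_csv("./分类/垃圾邮件分类/result.csv")
# Column 0 is the index written by to_csv, column 1 is 类别 (label),
# column 2 is 特征 (preprocessed text)
x_train, x_test, y_train, y_test = train_test_split(
    data.iloc[:, 2], data.iloc[:, 1], test_size=0.2, random_state=0)
```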
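Why `iloc[:, 2]` and `iloc[:, 1]`? `DataFrame.to_csv` writes the row index by default, so reading the file back produces an extra first column. The in-memory sketch below uses hypothetical toy rows to show that layout. It also shows that an empty preprocessed message is read back as NaN.

```python
import io

import pandas as pd

# Hypothetical two-column result, shaped like the preprocessing output
df = pd.DataFrame({"类别": ["ham", "spam", "ham"],
                   "特征": ["see 5 pm", "free prize reply win", ""]})
buf = io.StringIO()
df.to_csv(buf)   # index=True by default, so the row index becomes column 0
buf.seek(0)
back = pd.read_csv(buf)

print(list(back.columns))            # ['Unnamed: 0', '类别', '特征']
print(back.iloc[:, 1].tolist())      # ['ham', 'spam', 'ham'] (the labels)
print(back.iloc[:, 2].isna().sum())  # 1: the empty text came back as NaN
```

Passing `index=False` to `to_csv`, or `index_col=0` to `read_csv`, avoids the extra column. The positional indices would then shift down by one.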

4. Text feature extraction

sklearn.feature_extraction.text.CountVectorizer

https://scikit-learn.org/stable/modules/generated/sklearn.feature_extraction.text.CountVectorizer.html?highlight=sklearn%20feature_extraction%20text%20tfidfvectorizer

sklearn.feature_extraction.text.TfidfVectorizer

https://scikit-learn.org/stable/modules/generated/sklearn.feature_extraction.text.TfidfVectorizer.html?highlight=sklearn%20feature_extraction%20text%20tfidfvectorizer#sklearn.feature_extraction.text.TfidfVectorizer

```python
from sklearn.feature_extraction.text import TfidfVectorizer

tfidf2 = TfidfVectorizer()
```
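Below is a plain-Python re-implementation of what `TfidfVectorizer()` computes with its default settings. Per scikit-learn's documentation, those defaults are:

- lower-casing
- the token pattern `(?u)\b\w\w+\b`
- raw term counts
- smoothed IDF `ln((1 + n) / (1 + df)) + 1`
- L2 normalization of each row

The three example messages are hypothetical.

```python
import math
import re
from collections import Counter

TOKEN_PATTERN = re.compile(r"(?u)\b\w\w+\b")  # tokens of two or more word characters


def tfidf_matrix(docs):
    """Return (vocabulary, rows) mimicking TfidfVectorizer's default weighting."""
    tokenized = [TOKEN_PATTERN.findall(doc.lower()) for doc in docs]
    vocab = sorted({tok for toks in tokenized for tok in toks})
    n_docs = len(docs)
    # Document frequency: number of documents containing each term
    df = {term: sum(term in toks for toks in tokenized) for term in vocab}
    # Smoothed inverse document frequency
    idf = {term: math.log((1 + n_docs) / (1 + df[term])) + 1 for term in vocab}
    rows = []
    for toks in tokenized:
        counts = Counter(toks)
        weights = [counts[term] * idf[term] for term in vocab]
        norm = math.sqrt(sum(w * w for w in weights)) or 1.0  # avoid division by zero
        rows.append([w / norm for w in weights])
    return vocab, rows


docs = ["free prize call now", "call me later", "free free prize"]
vocab, rows = tfidf_matrix(docs)
print(vocab)  # ['call', 'free', 'later', 'me', 'now', 'prize']
for row in rows:
    print([round(w, 3) for w in row])
```

In the first message, 'now' and 'call' each occur once. Yet 'now' (found in one message) gets a larger weight than 'call' (found in two). That IDF weighting is what separates TF-IDF from plain counts.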

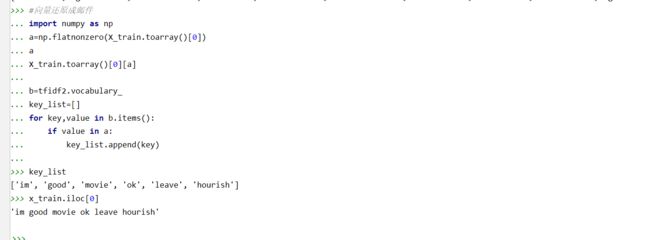

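```python
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer

tfidf2 = TfidfVectorizer()
# astype('U') turns every entry into a string (empty messages read back as NaN become 'nan')
X_train = tfidf2.fit_transform(x_train.values.astype('U'))  # learn vocabulary and IDF on the training set only
X_text = tfidf2.transform(x_test.values.astype('U'))        # test-set TF-IDF matrix, reusing the fitted vocabulary

# Relationship between messages and vectors
print(tfidf2.vocabulary_)      # word -> column index
print(X_train.toarray())       # dense TF-IDF matrix, one row per message
print(X_train.shape)           # (number of messages, vocabulary size)

# Map a vector back to the words of its message
row0 = X_train[0].toarray().ravel()   # TF-IDF vector of the first training message
a = np.flatnonzero(row0)              # column indices of its non-zero entries
print(a)
print(row0[a])                        # the corresponding TF-IDF weights
b = tfidf2.vocabulary_
key_list = [key for key, value in b.items() if value in a]
print(key_list)                       # words present in the first training message
print(x_train.iloc[0])                # the preprocessed message itself, for comparison
```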

5. Model selection

```python
from sklearn.naive_bayes import GaussianNB
from sklearn.naive_bayes import MultinomialNB
```

Why choose this model?

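The multinomial naive Bayes classifier is suited to classification with discrete features, such as the word counts used in text classification. In practice it also works with fractional TF-IDF weights. GaussianNB, by contrast, assumes each feature is normally distributed and only accepts dense input. That makes it a poor fit for a large, sparse TF-IDF matrix.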
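The sketch below illustrates this choice on hypothetical toy messages. `MultinomialNB` trains directly on a sparse word-count matrix. `GaussianNB` rejects the same sparse input and would need `.toarray()` first.

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.naive_bayes import GaussianNB, MultinomialNB

# Hypothetical toy training messages and labels
train_texts = ["free prize call now", "win free cash now",
               "see you at lunch", "are we still meeting today"]
train_labels = ["spam", "spam", "ham", "ham"]

vec = CountVectorizer()
X_counts = vec.fit_transform(train_texts)   # sparse matrix of word counts

mnb = MultinomialNB()                        # models per-class word-count distributions
mnb.fit(X_counts, train_labels)
print(mnb.predict(vec.transform(["free cash prize", "see you today"])))  # ['spam' 'ham']

# GaussianNB requires dense, continuous features and refuses the sparse matrix
try:
    GaussianNB().fit(X_counts, train_labels)
    gaussian_accepts_sparse = True
except TypeError:
    gaussian_accepts_sparse = False
print(gaussian_accepts_sparse)  # False
```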

```python
from sklearn.naive_bayes import MultinomialNB

mnb = MultinomialNB()
mnb.fit(X_train, y_train)     # train on the TF-IDF training matrix
y_mnb = mnb.predict(X_text)   # predicted labels for the test set
```

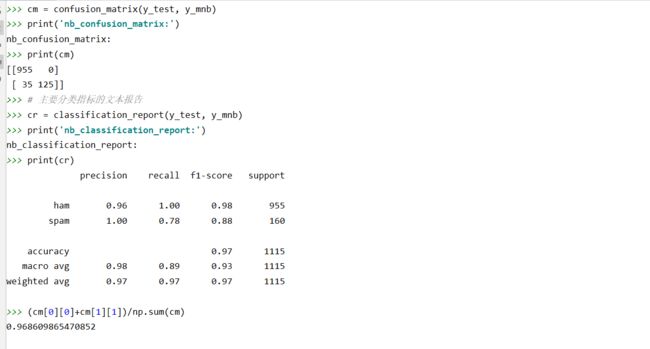

6. Model evaluation: confusion matrix and classification report

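```python
from sklearn.metrics import confusion_matrix

# y_predict: the model's predictions on the test set.
# Store the result under a new name so the confusion_matrix function is not overwritten.
cm = confusion_matrix(y_test, y_predict)
```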

What the confusion matrix means

```python
from sklearn.metrics import classification_report
```

What accuracy, precision, recall and the F-score each mean

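For a binary problem, the confusion matrix is a 2 × 2 table that counts four kinds of records:

- positive records classified correctly (true positives)
- positive records classified incorrectly (false negatives)
- negative records classified correctly (true negatives)
- negative records classified incorrectly (false positives)

In scikit-learn's `confusion_matrix`, rows are the true classes and columns are the predicted classes, both in sorted label order.

Actual positive, predicted positive: True Positive (TP)

Actual positive, predicted negative: False Negative (FN), a Type II error in statistics

Actual negative, predicted positive: False Positive (FP), a Type I error in statistics

Actual negative, predicted negative: True Negative (TN)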
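The four counts can be tallied directly from the true and predicted labels. Below is a minimal sketch that assumes 'spam' is the positive class. The label lists and the helper `confusion_counts` are hypothetical.

```python
def confusion_counts(y_true, y_pred, positive="spam"):
    """Return (TP, FN, FP, TN) for a binary problem with the given positive label."""
    tp = fn = fp = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth == positive and pred == positive:
            tp += 1          # positive, predicted positive
        elif truth == positive:
            fn += 1          # positive, predicted negative (Type II error)
        elif pred == positive:
            fp += 1          # negative, predicted positive (Type I error)
        else:
            tn += 1          # negative, predicted negative
    return tp, fn, fp, tn


y_true = ["ham", "ham", "spam", "spam", "ham", "spam"]
y_pred = ["ham", "spam", "spam", "ham", "ham", "spam"]
print(confusion_counts(y_true, y_pred))  # (2, 1, 1, 2)
```

scikit-learn's `confusion_matrix(y_true, y_pred, labels=["ham", "spam"])` arranges the same counts as `[[TN, FP], [FN, TP]]`.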

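Accuracy = (TP + TN) / (TP + FP + TN + FN): the proportion of all samples that are predicted correctly.

Precision = TP / (TP + FP): among the samples predicted positive, the proportion that are truly positive (are the positive predictions accurate?).

Recall = TP / (TP + FN): among the truly positive samples, the proportion predicted positive (are all positives found? This measures the ability to detect positive samples).

F-score (F1) = 2 * Precision * Recall / (Precision + Recall): the harmonic mean of precision and recall.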
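The four formulas can be applied directly to a set of confusion-matrix counts. In the sketch below, the counts (a test set of 100 messages, 12 of them spam) are hypothetical.

```python
def metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall and F1 from the four confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return accuracy, precision, recall, f1


# Hypothetical test set of 100 messages: 12 spam, 88 ham
acc, prec, rec, f1 = metrics(tp=8, fp=2, fn=4, tn=86)
print(f"accuracy={acc:.3f} precision={prec:.3f} recall={rec:.3f} f1={f1:.3f}")
# accuracy=0.940 precision=0.800 recall=0.667 f1=0.727
```

Accuracy stays high (0.94) with imbalanced classes like these, even though a third of the spam is missed. Recall and F1 expose that weakness.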

```python
import numpy as np
from sklearn.metrics import classification_report, confusion_matrix

# Confusion matrix
cm = confusion_matrix(y_test, y_mnb)
print('nb_confusion_matrix:')
print(cm)
# Text report of the main classification metrics
cr = classification_report(y_test, y_mnb)
print('nb_classification_report:')
print(cr)
# Accuracy by hand: correct predictions (the diagonal) / all predictions
print((cm[0][0] + cm[1][1]) / np.sum(cm))
```
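The hand-computed accuracy can be cross-checked against scikit-learn's `accuracy_score`. In addition, `classification_report(..., output_dict=True)` returns the per-class numbers as a dictionary instead of text. Below is a sketch on hypothetical labels.

```python
import numpy as np
from sklearn.metrics import accuracy_score, classification_report, confusion_matrix

# Hypothetical true and predicted labels
y_true = ["ham", "ham", "spam", "spam", "ham", "spam"]
y_pred = ["ham", "spam", "spam", "ham", "ham", "spam"]

cm = confusion_matrix(y_true, y_pred, labels=["ham", "spam"])
print(cm)                        # rows = true label, columns = predicted label
tn, fp, fn, tp = cm.ravel()      # valid unpacking when 'spam' is the second (positive) label

manual_accuracy = np.trace(cm) / cm.sum()   # diagonal = correct predictions
print(np.isclose(manual_accuracy, accuracy_score(y_true, y_pred)))  # True

# output_dict=True returns nested dicts keyed by class label
report = classification_report(y_true, y_pred, output_dict=True)
print(report["spam"]["precision"], report["spam"]["recall"])
```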

7. Comparison and summary

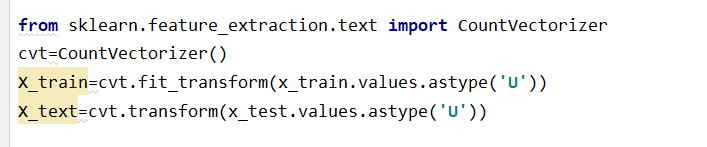

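How does CountVectorizer compare with TfidfVectorizer as the text feature generator?

CountVectorizer only counts how often each word occurs in the message itself. TfidfVectorizer also takes the other messages into account, down-weighting words that are common across them. In this comparison, CountVectorizer produced the higher accuracy. Even so, the TfidfVectorizer result is considered the more reliable of the two.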
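The comparison can be run with everything else held fixed, as in the sketch below. The same `MultinomialNB` classifier is trained once on raw counts and once on TF-IDF weights, using `make_pipeline`. The first two print lines only show how the two representations differ for a single message. The toy data is hypothetical, so its scores say nothing about the real dataset.

```python
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer
from sklearn.metrics import accuracy_score
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import make_pipeline

# Raw counts vs TF-IDF weights for the same one-message corpus
print(CountVectorizer().fit_transform(["free free prize"]).toarray())           # [[2 1]]
print(TfidfVectorizer().fit_transform(["free free prize"]).toarray().round(3))  # [[0.894 0.447]]

# Hypothetical toy data standing in for x_train, x_test, y_train, y_test
train_texts = ["free prize call now", "win free cash now",
               "see you at lunch", "are we still meeting today"]
train_labels = ["spam", "spam", "ham", "ham"]
test_texts = ["free cash now", "lunch today"]
test_labels = ["spam", "ham"]

scores = {}
for vectorizer in (CountVectorizer(), TfidfVectorizer()):
    model = make_pipeline(vectorizer, MultinomialNB())  # same classifier, different features
    model.fit(train_texts, train_labels)
    scores[type(vectorizer).__name__] = accuracy_score(test_labels, model.predict(test_texts))
print(scores)
```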