kubeadm实现k8s集群环境部署与配置

文章目录

- 个人环境

- (1)Centos 7.4

- (2)K8S三件套(v1.15.1)

- (3)Docker(19.03.9)

- (4)Fannel(v0.11.0)

- (5)Keepalived(v1.3.5)

- 一、部署环境

- 1.主机列表

- 2.其他准备

- 二、Keepalived安装

- 1.安装(在所有master节点上安装)

- 2.配置

- 3.启动keepalived并设置开机启动:

- 三、配置master节点

- 1.master初始化

- 2.加载环境变量

- 四、安装Fannel网络

- 五、备master节点加入集群

- 1.配置密钥登录

- 2.master01分发证书

- 3.备master节点移动证书至指定目录

- 4.备master节点加入集群

- 5.备master节点加载环境变量

- 六、node节点加入集群

- 1.加入集群

- 2.集群节点查看

- 七、测试集群高可用

- 1.测试master节点高可用

- 2.测试node节点高可用

- 八、Dashboard搭建

- 1.下载yaml

- 2. 配置yaml

- 3. 部署访问

- 九、对接私有仓库(可选)

- 1.修改daemon.json

- 2.创建认证secret

- 3.测试私有仓库

- 十、问题总结

- 1.初始化master节点失败或者初始化错节点(我就是初始化错成node节点了):

- 2.flanne文件下载失败

- 3.节点状态NotReady

- 4.直接在主master节点安装Dashboard(优化)

个人环境

(1)Centos 7.4

(2)K8S三件套(v1.15.1)

(3)Docker(19.03.9)

(4)Fannel(v0.11.0)

(5)Keepalived(v1.3.5)

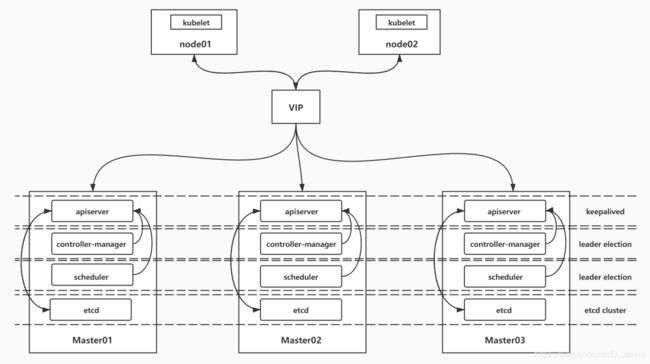

k8s集群的高可用实际是k8s各核心组件的高可用,这里使用主备模式,架构如下:

(1)apiserver 通过keepalived实现高可用,当某个节点故障时触发keepalived的vip 转移;

(2)controller-manager k8s内部通过选举方式产生领导者(由–leader-elect 选型控制,默认为true),同一时刻集群内只有一个controller-manager组件运行;

(3)scheduler k8s内部通过选举方式产生领导者(由–leader-elect 选型控制,默认为true),同一时刻集群内只有一个scheduler组件运行;

(4)etcd 通过运行kubeadm方式自动创建集群来实现高可用,部署的节点数为奇数,3节点方式最多容忍一台机器宕机。

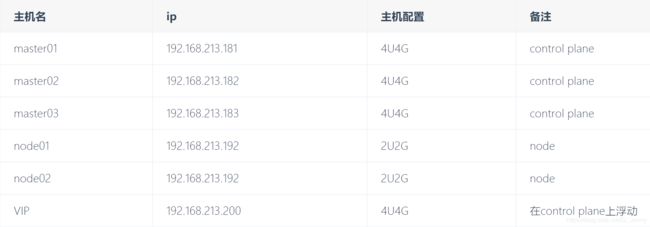

一、部署环境

1.主机列表

2.其他准备

(1)系统初始化,docker安装,k8s(kubelet、kubeadm和kubectl)安装省略(每个节点都部署)

(2)kubelet 运行在集群所有节点上,用于启动Pod和容器

(3)kubeadm 用于初始化集群,启动集群

(4)kubectl 用于和集群通信,部署和管理应用,查看各种资源,创建、删除和更新各种组件

(5)启动kubelet并设置开机启动:

systemctl enable kubelet

systemctl start kubelet

二、Keepalived安装

1.安装(在所有master节点上安装)

[root@master01 ~]# yum install keepalived -y

2.配置

master01:

[root@master01 ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id master01 #修改此处

}

vrrp_instance VI_1 {

state MASTER #修改此处

interface ens33

virtual_router_id 50

priority 150 #修改此处

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.213.200 #修改此处

}

}

master02:

[root@master02 ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id master02

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 50

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.213.200

}

}

master03:

[root@master03 ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id master03

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 50

priority 50

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.213.200

}

}

3.启动keepalived并设置开机启动:

[root@master01 ~]# systemctl start keepalived

[root@master01 ~]# systemctl enable keepalived

三、配置master节点

1.master初始化

[root@master01 ~]# cat kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta2

kind: ClusterConfiguration

kubernetesVersion: v1.15.1

apiServer:

certSANs: ##填写所有kube-apiserver节点的hostname、IP、VIP

- master01

- master02

- master03

- node01

- node02

- 192.168.213.181

- 192.168.213.182

- 192.168.213.183

- 192.168.213.191

- 192.168.213.192

- 192.168.213.200

controlPlaneEndpoint: "192.168.213.200:6443"

networking:

podSubnet: "10.244.0.0/16"

[root@master01 ~]# kubeadm init --config=kubeadm-config.yaml|tee kubeadim-init.log

记录kubeadm join的输出,后面需要这个命令将备master节点和node节点加入集群中

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.213.200:6443 --token ebx4uz.9y3twsnoj9yoscoo \

--discovery-token-ca-cert-hash sha256:1bc280548259dd8f1ac53d75e918a8ec99c234b13f4fe18a71435bbbe8cb26f3

2.加载环境变量

[root@master01 ~]# echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

[root@master01 ~]# source .bash_profile

四、安装Fannel网络

[root@master01 ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/2140ac876ef134e0ed5af15c65e414cf26827915/Documentation/kube-flannel.yml

五、备master节点加入集群

1.配置密钥登录

配置master01到master02、master03免密登录

#创建秘钥

[root@master01 ~]# ssh-keygen -t rsa

#将秘钥同步至master02,master03

[root@master01 ~]# ssh-copy-id -i /root/.ssh/id_rsa.pub [email protected]

[root@master01 ~]# ssh-copy-id -i /root/.ssh/id_rsa.pub [email protected]

#免密登陆测试

[root@master01 ~]# ssh master02

[root@master01 ~]# ssh 192.168.213.183

2.master01分发证书

在master01上运行脚本cert-main-master.sh,将证书分发至master02和master03

[root@master01 ~]# cat cert-main-master.sh

USER=root # customizable

CONTROL_PLANE_IPS="192.168.213.182 192.168.213.183"

for host in ${CONTROL_PLANE_IPS}; do

scp /etc/kubernetes/pki/ca.crt "${USER}"@$host:

scp /etc/kubernetes/pki/ca.key "${USER}"@$host:

scp /etc/kubernetes/pki/sa.key "${USER}"@$host:

scp /etc/kubernetes/pki/sa.pub "${USER}"@$host:

scp /etc/kubernetes/pki/front-proxy-ca.crt "${USER}"@$host:

scp /etc/kubernetes/pki/front-proxy-ca.key "${USER}"@$host:

scp /etc/kubernetes/pki/etcd/ca.crt "${USER}"@$host:etcd-ca.crt

# Quote this line if you are using external etcd

scp /etc/kubernetes/pki/etcd/ca.key "${USER}"@$host:etcd-ca.key

done

[root@master01 ~]# ./cert-main-master.sh

3.备master节点移动证书至指定目录

在master02,master03上运行脚本cert-other-master.sh,将证书移至指定目录

[root@master02 ~]# cat cert-other-master.sh

USER=root # customizable

mkdir -p /etc/kubernetes/pki/etcd

mv /${USER}/ca.crt /etc/kubernetes/pki/

mv /${USER}/ca.key /etc/kubernetes/pki/

mv /${USER}/sa.pub /etc/kubernetes/pki/

mv /${USER}/sa.key /etc/kubernetes/pki/

mv /${USER}/front-proxy-ca.crt /etc/kubernetes/pki/

mv /${USER}/front-proxy-ca.key /etc/kubernetes/pki/

mv /${USER}/etcd-ca.crt /etc/kubernetes/pki/etcd/ca.crt

# Quote this line if you are using external etcd

mv /${USER}/etcd-ca.key /etc/kubernetes/pki/etcd/ca.key

[root@master02 ~]# ./cert-other-master.sh

4.备master节点加入集群

在master02和master03节点上运行加入集群的命令

kubeadm join 192.168.213.200:6443 --token ebx4uz.9y3twsnoj9yoscoo \

--discovery-token-ca-cert-hash sha256:1bc280548259dd8f1ac53d75e918a8ec99c234b13f4fe18a71435bbbe8cb26f3

5.备master节点加载环境变量

此步骤是为了在备master节点上也能执行kubectl命令

scp master01:/etc/kubernetes/admin.conf /etc/kubernetes/

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source .bash_profile

六、node节点加入集群

1.加入集群

在node节点运行初始化master生成的加入集群的命令

kubeadm join 192.168.213.200:6443 --token ebx4uz.9y3twsnoj9yoscoo \

--discovery-token-ca-cert-hash sha256:1bc280548259dd8f1ac53d75e918a8ec99c234b13f4fe18a71435bbbe8cb26f3

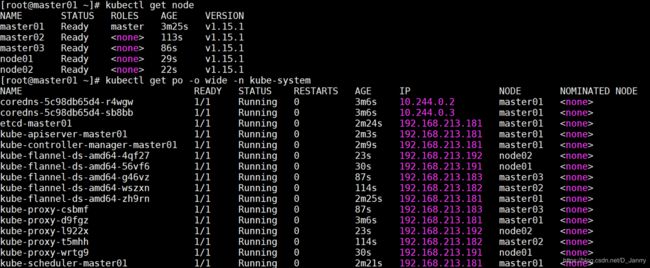

2.集群节点查看

[root@master01 ~]# kubectl get nodes

[root@master01 ~]# kubectl get pod -o wide -n kube-system

可以看到,所有control plane节点处于ready状态,所有的系统组件也正常。

七、测试集群高可用

1.测试master节点高可用

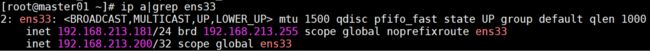

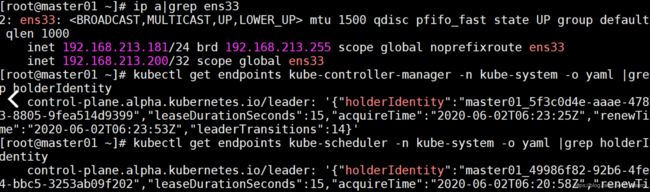

通过ip查看apiserver所在节点,通过leader-elect查看scheduler和controller-manager所在节点

[root@master01 ~]# ip a|grep ens33

[root@master01 ~]# kubectl get endpoints kube-scheduler -n kube-system -o yaml |grep holderIdentity

[root@master01 ~]# kubectl get endpoints kube-controller-manager -n kube-system -o yaml |grep holderIdentity

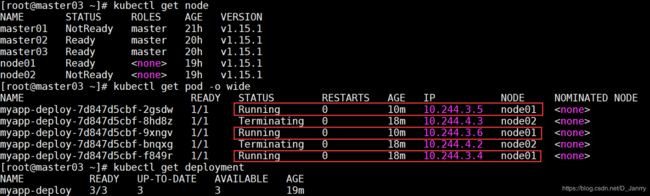

关闭master01,模拟宕机,master01状态为NotReady

[root@master01 ~]# init 0

VIP飘到了master02,controller-manager和scheduler也发生了迁移

当重启master01,根据keepalived的priority值,VIP飘到了master01、controller-manager和scheduler也发生了迁移。

2.测试node节点高可用

K8S 的pod-eviction在某些场景下如节点 NotReady,资源不足时,会把 pod 驱逐至其它节点

(1)Kube-controller-manager 周期性检查节点状态,每当节点状态为 NotReady,并且超出 pod-eviction-timeout 时间后,就把该节点上的 pod 全部驱逐到其它节点,其中具体驱逐速度还受驱逐速度参数,集群大小等的影响。最常用的 2 个参数如下:

pod-eviction-timeout:NotReady 状态节点超过该时间后,执行驱逐,默认 5 min

node-eviction-rate:驱逐速度,默认为 0.1 pod/秒

kube-controller-manager服务

cat /usr/lib/systemd/system/kube-controller-manager.service

cat /etc/kubernetes/controller-manage

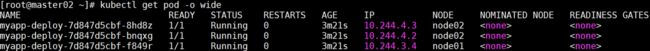

(2)创建pod,维持副本数3

[root@master02 ~]# cat myapp_deploy.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: myapp-deploy

namespace: default

spec:

replicas: 3

selector:

matchLabels:

app: myapp

release: stabel

template:

metadata:

labels:

app: myapp

release: stabel

env: test

spec:

containers:

- name: myapp

image: library/nginx

imagePullPolicy: IfNotPresent

ports:

- name: http

containerPort: 80

可以看到pod分布在node01和node02节点上

关闭node02,模拟宕机,node02状态为NotReady

可以看到 NotReady 状态节点超过指定时间后,pod被驱逐到 Ready 的节点上,deployment维持运行3个副本

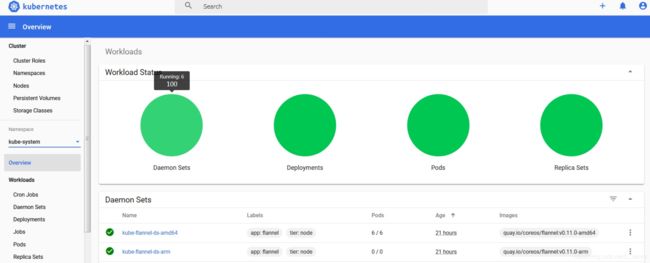

八、Dashboard搭建

master主节点配置,缺点也有,文章最后介绍。

1.下载yaml

[root@master01 ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta8/aio/deploy/recommended.yaml

2. 配置yaml

2.1 修改镜像地址

[root@master01 ~]# sed -i 's/kubernetesui/registry.cn-hangzhou.aliyuncs.com\/loong576/g' recommended.yaml

由于默认的镜像仓库网络访问不通,故改成阿里镜像

2.2 外网访问

[root@master01 ~]# sed -i '/targetPort: 8443/a\ \ \ \ \ \ nodePort: 30001\n\ \ type: NodePort' recommended.yaml

配置NodePort,外部通过https://NodeIp:NodePort 访问Dashboard,此时端口为30001

2.3 新增管理员帐号

[root@master01 ~]# cat >> recommended.yaml << EOF

---

# ------------------- dashboard-admin ------------------- #

apiVersion: v1

kind: ServiceAccount

metadata:

name: dashboard-admin

namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRoleBinding

metadata:

name: dashboard-admin

subjects:

- kind: ServiceAccount

name: dashboard-admin

namespace: kubernetes-dashboard

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin

创建超级管理员的账号用于登录Dashboard

3. 部署访问

3.1 部署Dashboard

[root@master01 ~]# kubectl apply -f recommended.yaml

3.2 状态查看

[root@master01 ~]# kubectl get all -n kubernetes-dashboard

3.3 令牌查看

[root@master01 ~]# kubectl describe secrets -n kubernetes-dashboard dashboard-admin

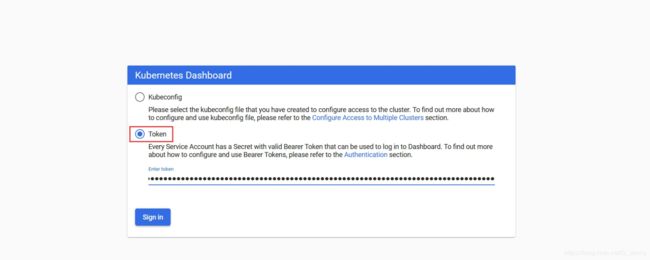

eyJhbGciOiJSUzI1NiIsImtpZCI6Ikd0NHZ5X3RHZW5pNDR6WEdldmlQUWlFM3IxbGM3aEIwWW1IRUdZU1ZKdWMifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tNms1ZjYiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiZjk1NDE0ODEtMTUyZS00YWUxLTg2OGUtN2JmMWU5NTg3MzNjIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmVybmV0ZXMtZGFzaGJvYXJkOmRhc2hib2FyZC1hZG1pbiJ9.LAe7N8Q6XR3d0W8w-r3ylOKOQHyMg5UDfGOdUkko_tqzUKUtxWQHRBQkowGYg9wDn-nU9E-rkdV9coPnsnEGjRSekWLIDkSVBPcjvEd0CVRxLcRxP6AaysRescHz689rfoujyVhB4JUfw1RFp085g7yiLbaoLP6kWZjpxtUhFu-MKh1NOp7w4rT66oFKFR-_5UbU3FoetAFBmHuZ935i5afs8WbNzIkM6u9YDIztMY3RYLm9Zs4KxgpAmqUmBSlXFZNW2qg6hxBqDijW_1bc0V7qJNt_GXzPs2Jm1trZR6UU1C2NAJVmYBu9dcHYtTCgxxkWKwR0Qd2bApEUIJ5Wug

3.4 访问

请使用浏览器访问:https://VIP:30001,通过令牌方式登录:

Dashboard提供了可以实现集群管理、服务发现和负载均衡、日志视图等功能。

九、对接私有仓库(可选)

私有仓库配置省略,在所有节点上执行以下步骤

1.修改daemon.json

[root@master01 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.213.181 master01

192.168.213.182 master02

192.168.213.183 master03

192.168.213.191 node01

192.168.213.192 node02

192.168.213.129 hub.janrry.com

[root@master01 ~]# cat /etc/docker/daemon.json

{

"registry-mirrors": ["https://sopn42m9.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"insecure-registries": ["https://hub.janrry.com"]

}

[root@master01 ~]# systemctl daemon-reload

[root@master01 ~]# systemctl restart docker

2.创建认证secret

使用Kuberctl创建docker register认证secret

[root@master01 ~]# kubectl create secret docker-registry myregistrykey --docker-server=https://reg.zhao.com --docker-username=admin --docker-password=Harbor12345 --docker-email=""

secret/myregistrykey created

[root@master02 ~]# kubectl get secrets

NAME TYPE DATA AGE

default-token-6mrjd kubernetes.io/service-account-token 3 18h

myregistrykey kubernetes.io/dockerconfigjson 1 19s

在创建Pod的时通过imagePullSecret引用myregistrykey

imagePullSecrets:

- name: myregistrykey

3.测试私有仓库

[root@master02 ~]# cat test_sc.yaml

apiVersion: v1

kind: Pod

metadata:

name: foo

spec:

containers:

- name: foo

image: reg.zhao.com/zhao/myapp:v1.0

imagePullSecrets:

- name: myregistrykey

十、问题总结

1.初始化master节点失败或者初始化错节点(我就是初始化错成node节点了):

可执行kubeadm reset后重新初始化

[root@master01 ~]# kubeadm reset

[root@master01 ~]# cd /etc/kubernetes/

[root@master01 ~]# rm -rf ./*

#非root用户还须执行rm -rf $HOME/.kube/config

2.flanne文件下载失败

方法一:可以直接下载kube-flannel.yml文件,然后再执行apply

方法二:配置域名解析

在https://site.ip138.com查询服务器IP

[root@master01 ~]# echo "151.101.76.133 raw.Githubusercontent.com" >>/etc/hosts

3.节点状态NotReady

在出问题的节点机器上执行journalctl -f -u kubelet查看kubelet的输出日志信息如下:

Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is not ready: cni config uninitialized

出现这个错误的原因是网络插件没有准备好,在节点上执行命令 docker images|grep flannel 查看flannel镜像是否已经成功拉取,这个花费的时间可能会很长

如果很长时间仍然没有拉下来flannel镜像,可以使用如下方法解决:

docker save把主节点上的flannel镜像保存为压缩文件(或在官方仓库https://github.com/coreos/flannel/releases下载镜像传到主机上,要注意版本对应),在节点机器上执行docker load加载镜像

[root@master02 ~]# docker save -o my_flannel.tar quay.io/coreos/flannel:v0.11.0-amd64

[root@master02 ~]# scp my_flannel.tar node01:/root

[root@node01 ~]# docker load < my_flannel.tar

4.直接在主master节点安装Dashboard(优化)

其实,把dashboard放在主节点就是个小儿科错误,我们配置高可用的目的是什么?不就是怕单点故障嘛?那放在这里节点故障,不就玩完了。所以,还是重新开一台机器作为客户端:

4.1 配置kubernetes源

[root@client ~]# cat < /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

[root@client ~]# yum clean all

[root@client ~]# yum -y makecache

4.2 安装kubectl

[root@client ~]# yum install -y kubectl-1.15.1 #版本要和之前的相同

4.3 安装并加载bash-completion

[root@client ~]# yum -y install bash-completion

[root@client ~]# source /etc/profile.d/bash_completion.sh

4.4 把主master节点的/etc/kubernetes/admin.conf拷贝到该节点。

[root@client ~]# mkdir -p /etc/kubernetes

[root@client ~]# scp 192.168.213.181:/etc/kubernetes/admin.conf /etc/kubernetes/

[root@client ~]# echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

[root@client ~]# source .bash_profile

4.5 加载环境变量

[root@master01 ~]# echo "source <(kubectl completion bash)" >> ~/.bash_profile

[root@master01 ~]# source .bash_profile

4.6 kubectl测试

[root@client ~]# kubectl get nodes

[root@client ~]# kubectl get cs

[root@client ~]# kubectl get po -o wide -n kube-system

这样Dashboard就可以在这个节点上安装了,接着重复第八步即可搞定。

由于太累,写的不太仔细,可能会有错误,日后发现会及时改正。大家可以试试,其实不难,核心思想懂了,你就是屏幕前最亮的那个仔,祝各位好运