K-Nearest Neighbor (KNN) Classification Algorithm

1. Overview

1.1 Cover and Hart proposed the original nearest neighbor algorithm in 1967

1.2 It is a classification algorithm

1.3 It belongs to instance-based learning, also known as lazy learning

2. Example

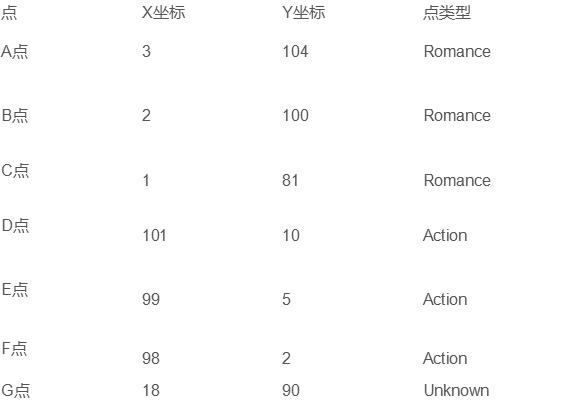

Which genre does the unknown movie belong to?

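3. Algorithm in Detail

3.1 Steps

To determine the class of an unknown instance, use all instances of known class as reference points

Choose the parameter K

Compute the distance between the unknown instance and every known instance

Select the K nearest known instances

Apply majority voting: assign the unknown instance to the class that is most common among its K nearest neighbors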
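
The steps above can be sketched in a few lines of Python. This is only a minimal illustration, separate from the implementations in section 6; the function name `knn_classify` and the toy data are hypothetical, and `math.dist` assumes Python 3.8 or later.

```python
import math
from collections import Counter

def knn_classify(known, query, k):
    """Classify `query` by majority vote among its k nearest known instances.

    `known` is a list of (features, label) pairs (an illustrative layout).
    """
    # Compute the distance from the query to every known instance
    scored = [(math.dist(features, query), label) for features, label in known]
    # Keep the k closest instances
    nearest = sorted(scored, key=lambda pair: pair[0])[:k]
    # Majority vote over the labels of those k instances
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical toy data with two features per instance
known = [((1.0, 1.0), "A"), ((1.2, 0.8), "A"), ((0.9, 1.1), "A"),
         ((5.0, 5.0), "B"), ((5.2, 4.9), "B"), ((4.8, 5.1), "B")]
print(knn_classify(known, (1.1, 1.0), k=3))  # prints A
```

Ties between classes are not handled specially in this sketch; choosing an odd K for two-class problems is one common way to avoid them.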

3.2 Details

On the choice of K

On how distance is measured:

3.2.1 Definition of Euclidean distance
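
For reference, the standard definition for two points x = (x_1, ..., x_n) and y = (y_1, ..., y_n) in n-dimensional space can be written as:

```latex
d(x, y) = \sqrt{\sum_{i=1}^{n} (x_i - y_i)^2}
```

With n = 2 this reduces to the square root of (x_1 - x_2)^2 + (y_1 - y_2)^2, which is the form computed by `ComputerEuclideanDistance` in this section.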

Other distance measures: cosine similarity, correlation, Manhattan distance
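
As a rough sketch of these alternatives, the helpers below compute each measure for plain Python sequences. The function names are hypothetical. Note that cosine similarity and correlation measure similarity rather than distance, so one common convention (assumed here, not stated in the original) is to use 1 minus the value when a distance is needed.

```python
import math

def manhattan_distance(a, b):
    """Sum of the absolute coordinate differences."""
    return sum(abs(x - y) for x, y in zip(a, b))

def cosine_similarity(a, b):
    """Cosine of the angle between a and b (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)  # undefined for all-zero vectors

def pearson_correlation(a, b):
    """Cosine similarity of the mean-centered vectors."""
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    return cosine_similarity([x - mean_a for x in a],
                             [y - mean_b for y in b])

# Same two points as in the Euclidean distance example
print(manhattan_distance((3, 104), (18, 90)))  # prints 29
print(cosine_similarity((3, 104), (18, 90)))
```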

# -*- coding:utf-8 -*-
# Compute the Euclidean distance between the two points a and g
import math


def ComputerEuclideanDistance(x1, y1, x2, y2):
    # Square root of the sum of the squared coordinate differences
    d = math.sqrt(math.pow((x1 - x2), 2) + math.pow((y1 - y2), 2))
    return d


d_ag = ComputerEuclideanDistance(3, 104, 18, 90)
print("d_ag:", d_ag)

3.3 Example

4. Advantages and Disadvantages

4.1 Advantages

Simple

Easy to understand

Easy to implement

Robust to noisy data when K is chosen appropriately
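
To illustrate the last point, the sketch below places a single mislabeled instance right next to the query. With K = 1 the noisy instance decides the result; with K = 3 it is outvoted. The data and the helper `predict` are hypothetical, and `math.dist` assumes Python 3.8 or later.

```python
import math
from collections import Counter

def predict(known, query, k):
    """Majority vote among the k known instances closest to `query`."""
    nearest = sorted(known, key=lambda item: math.dist(item[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

known = [((1.0, 1.0), "A"), ((1.2, 1.1), "A"), ((0.8, 0.9), "A"),
         ((1.05, 1.0), "B"),  # noisy instance: labeled B but inside cluster A
         ((5.0, 5.0), "B"), ((5.1, 4.8), "B")]
query = (1.06, 1.0)

print(predict(known, query, k=1))  # prints B (follows the noisy instance)
print(predict(known, query, k=3))  # prints A (noise is outvoted)
```

A larger K is not automatically better: if K grows too large, instances from distant classes start to take part in the vote.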

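4.2 Disadvantages

Requires a lot of memory to store all known instances

High computational cost: the instance to be classified must be compared against every known instance

Sensitive to imbalanced class distributions. When one class has far more instances than the others and dominates, a new unknown instance is easily assigned to that dominant class simply because its instances are so numerous, even though the unknown instance is not actually close to them (see point Y in the figure above).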

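5. An Improved Version

Take distance into account by weighting each neighbor's vote according to its distance

For example, use a weight of 1/d, where d is the distance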
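
A minimal sketch of this idea follows, compared against plain majority voting. The function names, the toy data, and the small constant `eps` (added to avoid division by zero when d = 0) are assumptions made for illustration.

```python
import math
from collections import Counter, defaultdict

def k_nearest(known, query, k):
    """Return the k closest (distance, label) pairs."""
    scored = [(math.dist(features, query), label) for features, label in known]
    return sorted(scored, key=lambda pair: pair[0])[:k]

def majority_vote(known, query, k):
    """Plain KNN: every neighbor counts as one vote."""
    votes = Counter(label for _, label in k_nearest(known, query, k))
    return votes.most_common(1)[0][0]

def weighted_vote(known, query, k, eps=1e-9):
    """Distance-weighted KNN: each neighbor contributes 1/d to its class."""
    scores = defaultdict(float)
    for d, label in k_nearest(known, query, k):
        scores[label] += 1.0 / (d + eps)  # eps is an assumption, guards d == 0
    return max(scores, key=scores.get)

# Hypothetical imbalanced data: class B has more instances but is far away
known = [((1.0, 1.0), "A"), ((1.1, 1.0), "A"),
         ((3.0, 3.0), "B"), ((3.2, 3.1), "B"), ((3.1, 2.9), "B")]
query = (1.2, 1.1)

print(majority_vote(known, query, k=5))  # prints B (the larger class wins)
print(weighted_vote(known, query, k=5))  # prints A (the closer class wins)
```

In this example the weighting also softens the class imbalance problem described in section 4.2, because distant instances of the dominant class contribute very little to the vote.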

6. Applying KNN

Online documentation: https://scikit-learn.org/stable/modules/neighbors.html

6.1 Dataset: Iris

150 instances

Features: sepal length, sepal width, petal length, petal width

Classes: Iris setosa, Iris versicolor, Iris virginica

6.2 Using Python's machine learning library sklearn: SKLearn Example.py

Implementation that calls the library directly

# -*- coding:utf-8 -*-
from sklearn import neighbors
from sklearn import datasets

# All parameters are left at their defaults here

# Create the classifier
knn = neighbors.KNeighborsClassifier()

# Load the data
iris = datasets.load_iris()

# Inspect the dataset
print(iris)

# Fit the model on the feature matrix and the class labels
knn.fit(iris.data, iris.target)

# Predict the class of a test sample
predictedLabel = knn.predict([[0.1, 0.2, 0.3, 0.4]])

# Show the prediction
print(predictedLabel)

Custom implementation

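# -*- coding:utf-8 -*-
import csv
import random
import math
import operator


# Load the dataset and randomly split it into a training set and a test set
def loadDataset(filename, split, trainingSet, testSet):
    with open(filename, 'rt') as csvfile:
        lines = csv.reader(csvfile)
        # Skip blank lines so that every row has four features and a label
        dataset = [row for row in lines if row]
    for x in range(len(dataset)):
        # Convert the four feature columns from strings to floats
        for y in range(4):
            dataset[x][y] = float(dataset[x][y])
        # Each row goes to the training set with probability `split`
        if random.random() < split:
            trainingSet.append(dataset[x])
        else:
            testSet.append(dataset[x])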

# Compute the Euclidean distance over the first `length` dimensions
def euclideanDistance(instance1, instance2, length):
    distance = 0
    for x in range(length):
        distance += pow(instance1[x] - instance2[x], 2)
    return math.sqrt(distance)

# Return the K nearest neighbors of testInstance
def getNeighbors(trainingset, testInstance, k):
    distances = []  # (training instance, distance) pairs
    length = len(testInstance) - 1  # the last column is the class label
    for x in range(len(trainingset)):
        dist = euclideanDistance(testInstance, trainingset[x], length)
        distances.append((trainingset[x], dist))
    distances.sort(key=operator.itemgetter(1))  # sort by distance, ascending
    neighbors = []
    for x in range(k):  # keep the first K instances
        neighbors.append(distances[x][0])
    return neighbors

# Count the class labels of the K nearest neighbors and return the most common one
def getResponse(neighbors):
    classVotes = {}
    for x in range(len(neighbors)):
        Response = neighbors[x][-1]  # the class label is the last column
        if Response in classVotes:
            classVotes[Response] += 1
        else:
            classVotes[Response] = 1
    # Sort the classes by vote count, highest first
    sortedVotes = sorted(classVotes.items(), key=operator.itemgetter(1), reverse=True)
    return sortedVotes[0][0]

# Compute the prediction accuracy as a percentage
def getAccuracy(testset, predictions):
    correct = 0
    for x in range(len(testset)):
        if testset[x][-1] == predictions[x]:
            correct += 1
    return (correct / float(len(testset))) * 100.0

def main():
    # prepare data
    trainingSet = []
    testSet = []
    split = 0.67
    loadDataset(r"irisdata.txt", split, trainingSet, testSet)
    print("trainingSet:" + repr(len(trainingSet)))
    print("testSet:" + repr(len(testSet)))
    # generate predictions
    predictions = []
    k = 3
    for x in range(len(testSet)):
        neighbors = getNeighbors(trainingSet, testSet[x], k)
        result = getResponse(neighbors)
        predictions.append(result)
        print("predicted = " + repr(result) + ", actual = " + repr(testSet[x][-1]))
    accuracy = getAccuracy(testSet, predictions)
    print("accuracy:" + repr(accuracy) + "%")


if __name__ == "__main__":
    main()

Test dataset

5.1,3.5,1.4,0.2,Iris-setosa

4.9,3.0,1.4,0.2,Iris-setosa

4.7,3.2,1.3,0.2,Iris-setosa

4.6,3.1,1.5,0.2,Iris-setosa

5.0,3.6,1.4,0.2,Iris-setosa

5.4,3.9,1.7,0.4,Iris-setosa

4.6,3.4,1.4,0.3,Iris-setosa

5.0,3.4,1.5,0.2,Iris-setosa

4.4,2.9,1.4,0.2,Iris-setosa

4.9,3.1,1.5,0.1,Iris-setosa

5.4,3.7,1.5,0.2,Iris-setosa

4.8,3.4,1.6,0.2,Iris-setosa

4.8,3.0,1.4,0.1,Iris-setosa

4.3,3.0,1.1,0.1,Iris-setosa

5.8,4.0,1.2,0.2,Iris-setosa

5.7,4.4,1.5,0.4,Iris-setosa

5.4,3.9,1.3,0.4,Iris-setosa

5.1,3.5,1.4,0.3,Iris-setosa

5.7,3.8,1.7,0.3,Iris-setosa

5.1,3.8,1.5,0.3,Iris-setosa

5.4,3.4,1.7,0.2,Iris-setosa

5.1,3.7,1.5,0.4,Iris-setosa

4.6,3.6,1.0,0.2,Iris-setosa

5.1,3.3,1.7,0.5,Iris-setosa

4.8,3.4,1.9,0.2,Iris-setosa

5.0,3.0,1.6,0.2,Iris-setosa

5.0,3.4,1.6,0.4,Iris-setosa

5.2,3.5,1.5,0.2,Iris-setosa

5.2,3.4,1.4,0.2,Iris-setosa

4.7,3.2,1.6,0.2,Iris-setosa

4.8,3.1,1.6,0.2,Iris-setosa

5.4,3.4,1.5,0.4,Iris-setosa

5.2,4.1,1.5,0.1,Iris-setosa

5.5,4.2,1.4,0.2,Iris-setosa

4.9,3.1,1.5,0.1,Iris-setosa

5.0,3.2,1.2,0.2,Iris-setosa

5.5,3.5,1.3,0.2,Iris-setosa

4.9,3.1,1.5,0.1,Iris-setosa

4.4,3.0,1.3,0.2,Iris-setosa

5.1,3.4,1.5,0.2,Iris-setosa

5.0,3.5,1.3,0.3,Iris-setosa

4.5,2.3,1.3,0.3,Iris-setosa

4.4,3.2,1.3,0.2,Iris-setosa

5.0,3.5,1.6,0.6,Iris-setosa

5.1,3.8,1.9,0.4,Iris-setosa

4.8,3.0,1.4,0.3,Iris-setosa

5.1,3.8,1.6,0.2,Iris-setosa

4.6,3.2,1.4,0.2,Iris-setosa

5.3,3.7,1.5,0.2,Iris-setosa

5.0,3.3,1.4,0.2,Iris-setosa

7.0,3.2,4.7,1.4,Iris-versicolor

6.4,3.2,4.5,1.5,Iris-versicolor

6.9,3.1,4.9,1.5,Iris-versicolor

5.5,2.3,4.0,1.3,Iris-versicolor

6.5,2.8,4.6,1.5,Iris-versicolor

5.7,2.8,4.5,1.3,Iris-versicolor

6.3,3.3,4.7,1.6,Iris-versicolor

4.9,2.4,3.3,1.0,Iris-versicolor

6.6,2.9,4.6,1.3,Iris-versicolor

5.2,2.7,3.9,1.4,Iris-versicolor

5.0,2.0,3.5,1.0,Iris-versicolor

5.9,3.0,4.2,1.5,Iris-versicolor

6.0,2.2,4.0,1.0,Iris-versicolor

6.1,2.9,4.7,1.4,Iris-versicolor

5.6,2.9,3.6,1.3,Iris-versicolor

6.7,3.1,4.4,1.4,Iris-versicolor

5.6,3.0,4.5,1.5,Iris-versicolor

5.8,2.7,4.1,1.0,Iris-versicolor

6.2,2.2,4.5,1.5,Iris-versicolor

5.6,2.5,3.9,1.1,Iris-versicolor

5.9,3.2,4.8,1.8,Iris-versicolor

6.1,2.8,4.0,1.3,Iris-versicolor

6.3,2.5,4.9,1.5,Iris-versicolor

6.1,2.8,4.7,1.2,Iris-versicolor

6.4,2.9,4.3,1.3,Iris-versicolor

6.6,3.0,4.4,1.4,Iris-versicolor

6.8,2.8,4.8,1.4,Iris-versicolor

6.7,3.0,5.0,1.7,Iris-versicolor

6.0,2.9,4.5,1.5,Iris-versicolor

5.7,2.6,3.5,1.0,Iris-versicolor

5.5,2.4,3.8,1.1,Iris-versicolor

5.5,2.4,3.7,1.0,Iris-versicolor

5.8,2.7,3.9,1.2,Iris-versicolor

6.0,2.7,5.1,1.6,Iris-versicolor

5.4,3.0,4.5,1.5,Iris-versicolor

6.0,3.4,4.5,1.6,Iris-versicolor

6.7,3.1,4.7,1.5,Iris-versicolor

6.3,2.3,4.4,1.3,Iris-versicolor

5.6,3.0,4.1,1.3,Iris-versicolor

5.5,2.5,4.0,1.3,Iris-versicolor

5.5,2.6,4.4,1.2,Iris-versicolor

6.1,3.0,4.6,1.4,Iris-versicolor

5.8,2.6,4.0,1.2,Iris-versicolor

5.0,2.3,3.3,1.0,Iris-versicolor

5.6,2.7,4.2,1.3,Iris-versicolor

5.7,3.0,4.2,1.2,Iris-versicolor

5.7,2.9,4.2,1.3,Iris-versicolor

6.2,2.9,4.3,1.3,Iris-versicolor

5.1,2.5,3.0,1.1,Iris-versicolor

5.7,2.8,4.1,1.3,Iris-versicolor

6.3,3.3,6.0,2.5,Iris-virginica

5.8,2.7,5.1,1.9,Iris-virginica

7.1,3.0,5.9,2.1,Iris-virginica

6.3,2.9,5.6,1.8,Iris-virginica

6.5,3.0,5.8,2.2,Iris-virginica

7.6,3.0,6.6,2.1,Iris-virginica

4.9,2.5,4.5,1.7,Iris-virginica

7.3,2.9,6.3,1.8,Iris-virginica

6.7,2.5,5.8,1.8,Iris-virginica

7.2,3.6,6.1,2.5,Iris-virginica

6.5,3.2,5.1,2.0,Iris-virginica

6.4,2.7,5.3,1.9,Iris-virginica

6.8,3.0,5.5,2.1,Iris-virginica

5.7,2.5,5.0,2.0,Iris-virginica

5.8,2.8,5.1,2.4,Iris-virginica

6.4,3.2,5.3,2.3,Iris-virginica

6.5,3.0,5.5,1.8,Iris-virginica

7.7,3.8,6.7,2.2,Iris-virginica

7.7,2.6,6.9,2.3,Iris-virginica

6.0,2.2,5.0,1.5,Iris-virginica

6.9,3.2,5.7,2.3,Iris-virginica

5.6,2.8,4.9,2.0,Iris-virginica

7.7,2.8,6.7,2.0,Iris-virginica

6.3,2.7,4.9,1.8,Iris-virginica

6.7,3.3,5.7,2.1,Iris-virginica

7.2,3.2,6.0,1.8,Iris-virginica

6.2,2.8,4.8,1.8,Iris-virginica

6.1,3.0,4.9,1.8,Iris-virginica

6.4,2.8,5.6,2.1,Iris-virginica

7.2,3.0,5.8,1.6,Iris-virginica

7.4,2.8,6.1,1.9,Iris-virginica

7.9,3.8,6.4,2.0,Iris-virginica

6.4,2.8,5.6,2.2,Iris-virginica

6.3,2.8,5.1,1.5,Iris-virginica

6.1,2.6,5.6,1.4,Iris-virginica

7.7,3.0,6.1,2.3,Iris-virginica

6.3,3.4,5.6,2.4,Iris-virginica

6.4,3.1,5.5,1.8,Iris-virginica

6.0,3.0,4.8,1.8,Iris-virginica

6.9,3.1,5.4,2.1,Iris-virginica

6.7,3.1,5.6,2.4,Iris-virginica

6.9,3.1,5.1,2.3,Iris-virginica

5.8,2.7,5.1,1.9,Iris-virginica

6.8,3.2,5.9,2.3,Iris-virginica

6.7,3.3,5.7,2.5,Iris-virginica

6.7,3.0,5.2,2.3,Iris-virginica

6.3,2.5,5.0,1.9,Iris-virginica

6.5,3.0,5.2,2.0,Iris-virginica

6.2,3.4,5.4,2.3,Iris-virginica

5.9,3.0,5.1,1.8,Iris-virginica