Deploying a Fully Distributed Hadoop Cluster with HBase, ZooKeeper, and Hive

This section covers cloning the virtual machines.

The single-node Hadoop installation is covered in a separate guide.

Set up passwordless SSH login

ssh-copy-id -i .ssh/id_rsa.pub -p22 [email protected]

Log in remotely

ssh -p 22 [email protected]

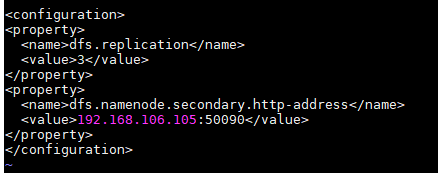

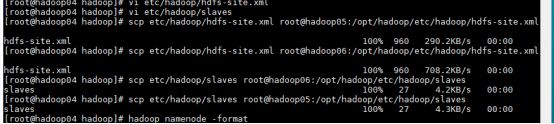

vi /hadoop/hdfs-site.xml

vi etc/hadoop/slaves:

hadoop04

hadoop05

hadoop06

Then copy the configuration to the other two nodes.
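The copy step can be scripted. What follows is a minimal sketch, not the author's procedure: it assumes Hadoop lives under /opt/hadoop on every node, that hadoop05 and hadoop06 are "the other two" nodes, and that passwordless SSH already works. The function name push_conf and the DRY_RUN switch are illustrative; with DRY_RUN=1 (the default here) the script only prints the scp commands it would run.

```shell
# Sketch: push the edited Hadoop config directory to the other nodes.
# Assumptions (not from the original): the /opt/hadoop install path and the
# target host names. DRY_RUN=1 only prints the scp commands.
HADOOP_CONF_DIR="${HADOOP_CONF_DIR:-/opt/hadoop/etc/hadoop}"
DRY_RUN="${DRY_RUN:-1}"

push_conf() {
    # $@: hosts that should receive a copy of the config directory
    for host in "$@"; do
        target="$host:$(dirname "$HADOOP_CONF_DIR")/"
        if [ "$DRY_RUN" = "1" ]; then
            echo "scp -r $HADOOP_CONF_DIR $target"
        else
            scp -r "$HADOOP_CONF_DIR" "$target"
        fi
    done
}

push_conf hadoop05 hadoop06
```

Setting DRY_RUN=0 performs the copy for real; the dry run is worth checking first because scp overwrites files on the target without asking.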

Format HDFS

hadoop namenode -format

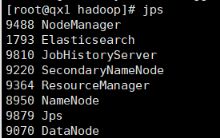

Start Hadoop

start-all.sh (use jps to check the running processes)
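Reading jps output by eye gets tedious across several nodes. The sketch below reports which daemons are absent. The daemon list is an assumption for a Hadoop 2.x node that runs every role; on a real cluster the master normally shows NameNode, SecondaryNameNode, and ResourceManager, while the workers show DataNode and NodeManager, so EXPECTED_DAEMONS should be trimmed per node. The function name missing_daemons is illustrative.

```shell
# Sketch: report which expected Hadoop daemons are absent from jps output.
# The daemon list is an assumption for a Hadoop 2.x node running every role.
EXPECTED_DAEMONS="NameNode DataNode SecondaryNameNode ResourceManager NodeManager"

missing_daemons() {
    # Reads jps output ("<pid> <name>" per line) on stdin.
    jps_output=$(cat)
    for daemon in $EXPECTED_DAEMONS; do
        # Compare against the whole second field, so that NameNode
        # does not match SecondaryNameNode.
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$daemon"
        fi
    done
}

# Usage on a live node: jps | missing_daemons
```

An empty result means every daemon in the list was found.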

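Install ZooKeeper

Edit zookeeper/conf/zoo.cfg (the file gets this name when it is renamed after editing)

Each server entry in the configuration gives the hostname of one ZooKeeper server.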

# The number of milliseconds of each tick

tickTime=2000

maxClientCnxns=0

# The number of ticks that the initial

# synchronization phase can take

initLimit=50

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

dataDir=/opt/hadoop/zookeeperdata

# the port at which the clients will connect

clientPort=2181

server.1=hadoop01:2888:3888

server.2=hadoop02:2888:3888

server.3=hadoop03:2888:3888

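Create the data directory

On each node, create the directory configured as dataDir (/opt/hadoop/zookeeperdata) and add a myid file to it. The file contains the server number of that node; in the example above, the myid file on hadoop01 contains: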

1
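Writing the myid file by hand on every node is easy to get wrong, so the number can instead be read from the server.N lines of zoo.cfg. This is a minimal sketch: the function name myid_for_host is illustrative, and the path /opt/zookeeper/conf/zoo.cfg in the closing comment is an assumption based on the directories used elsewhere in this guide.

```shell
# Sketch: derive the myid value for a host from the server.N lines of zoo.cfg.
# The function name and the demo file are illustrative, not part of ZooKeeper.
myid_for_host() {
    # $1: path to zoo.cfg, $2: host name to look up
    sed -n "s/^server\.\([0-9][0-9]*\)=$2:.*/\1/p" "$1"
}

# Demo against a throwaway copy of the server lines from the example above.
demo_cfg=$(mktemp)
printf 'server.1=hadoop01:2888:3888\nserver.2=hadoop02:2888:3888\nserver.3=hadoop03:2888:3888\n' > "$demo_cfg"
myid_for_host "$demo_cfg" hadoop02   # prints 2
rm -f "$demo_cfg"

# On a real node (paths are assumptions, adjust to your layout):
#   mkdir -p /opt/hadoop/zookeeperdata
#   myid_for_host /opt/zookeeper/conf/zoo.cfg "$(hostname)" > /opt/hadoop/zookeeperdata/myid
```

If the host name is not listed in zoo.cfg the function prints nothing, which is worth checking for before writing the file.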

Start ZooKeeper

Run zkServer.sh start on every ZooKeeper node:

cd /opt/zookeeper

./bin/zkServer.sh start
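Once all three nodes are started, zkServer.sh status shows whether the ensemble has formed: one node should report itself as leader and the others as followers. The helper below is a small sketch (the name zk_mode is illustrative) that pulls the role out of the status output.

```shell
# Sketch: extract the role a node reports in `zkServer.sh status` output.
zk_mode() {
    # Reads status output on stdin and prints the text after "Mode: ", if any.
    sed -n 's/^Mode: *//p'
}

# Usage on a ZooKeeper node:
#   /opt/zookeeper/bin/zkServer.sh status 2>&1 | zk_mode
```

No output means the status command did not report a mode, which usually indicates that the server is not running or cannot reach a quorum.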

A time zone error may occur at this point; see the companion guide on configuring an NTP time server on Linux.

Install HBase

Edit hbase/conf/hbase-site.xml

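<configuration>
  <property>
    <name>hbase.rootdir</name>
    <value>hdfs://hadoop01:9000/hbase</value>
    <description>The directory shared by region servers.</description>
  </property>
  <property>
    <name>hbase.cluster.distributed</name>
    <value>true</value>
  </property>
  <property>
    <name>hbase.master.port</name>
    <value>60000</value>
  </property>
  <property>
    <name>hbase.zookeeper.quorum</name>
    <value>hadoop01,hadoop02,hadoop03</value>
  </property>
  <property>
    <name>hbase.regionserver.handler.count</name>
    <value>300</value>
  </property>
  <property>
    <name>hbase.hstore.blockingStoreFiles</name>
    <value>70</value>
  </property>
  <property>
    <name>zookeeper.session.timeout</name>
    <value>60000</value>
  </property>
  <property>
    <name>hbase.regionserver.restart.on.zk.expire</name>
    <value>true</value>
    <description>Zookeeper session expired will force regionserver exit. Enable this will make the regionserver restart.</description>
  </property>
  <property>
    <name>hbase.replication</name>
    <value>false</value>
  </property>
  <property>
    <name>hfile.block.cache.size</name>
    <value>0.4</value>
  </property>
  <property>
    <name>hbase.regionserver.global.memstore.upperLimit</name>
    <value>0.35</value>
  </property>
  <property>
    <name>hbase.hregion.memstore.block.multiplier</name>
    <value>8</value>
  </property>
  <property>
    <name>hbase.server.thread.wakefrequency</name>
    <value>100</value>
  </property>
  <property>
    <name>hbase.master.distributed.log.splitting</name>
    <value>false</value>
  </property>
  <property>
    <name>hbase.regionserver.hlog.splitlog.writer.threads</name>
    <value>3</value>
  </property>
  <property>
    <name>hbase.hstore.blockingStoreFiles</name>
    <value>20</value>
  </property>
  <property>
    <name>hbase.hregion.memstore.flush.size</name>
    <value>134217728</value>
  </property>
  <property>
    <name>hbase.hregion.memstore.mslab.enabled</name>
    <value>true</value>
  </property>
</configuration>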

The two settings that must be changed to match your own cluster are hbase.rootdir and hbase.zookeeper.quorum.

Edit hbase/conf/hbase-env.sh

export HBASE_OFFHEAPSIZE=1G

export HBASE_HEAPSIZE=4000

export JAVA_HOME=/opt/j2sdk1.6.29

export HBASE_OPTS="-Xmx4g -Xms4g -Xmn128m -XX:+UseParNewGC -XX:+UseConcMarkSweepGC -XX:CMSInitiatingOccupancyFraction=70 -verbose:gc -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -Xloggc:$HBASE_HOME/logs/gc-$(hostname)-hbase.log"

export HBASE_MANAGES_ZK=false

export HBASE_CLASSPATH=/opt/hadoop/etc/hadoop

Edit hbase/conf/log4j.properties

Change the following settings:

hbase.root.logger=WARN,console

log4j.logger.org.apache.hadoop.hbase=WARN

Add every DataNode host to conf/regionservers

Add the following lines:

hadoop01

hadoop02

hadoop03

Start HBase

cd /opt/hbase

bin/start-hbase.sh

Configure Hive

Change directory: cd /opt/hive/conf

First rename the template: mv hive-env.sh.template hive-env.sh

Configure hive-env.sh

export HADOOP_HOME=/opt/hadoop

export HIVE_CONF_DIR=/opt/hive/conf

export HIVE_AUX_JARS_PATH=/opt/hive/lib

export JAVA_HOME=/opt/java8

Create hive-site.xml: vi hive-site.xml

<?xml version="1.0" encoding="UTF-8" standalone="no"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
<!-- configuration -->
<property>
  <name>hive.metastore.warehouse.dir</name>
  <value>/opt/hive/warehouse</value>
</property>
<property>
  <name>hive.metastore.local</name>
  <value>true</value>
</property>
<!-- For a remote MySQL database, enter the remote IP address or hostname here -->
<property>

Save and exit.

Place mysql-connector-java-5.1.0-bin in the software directory.

Move mysql-connector-java-5.1.0-bin into /opt/hive/lib: mv mysql-connector-java-5.1.0-bin /opt/hive/lib

Change directory: cd /opt/hive

hadoop fs -ls / (list the files in the HDFS root directory)

hadoop fs -mkdir -p /usr/hive/warehouse

hadoop fs -mkdir -p /opt/hive/warehouse

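hadoop fs -chmod 777 /opt/hive/warehouse (grant permissions on the directory)

hadoop fs -chmod -R 777 /opt/hive (grant permissions on the directory recursively)

Initialize the metastore schema in MySQL: schematool -dbType mysql -initSchema

Start Hive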

cd /opt/hive

bin/hive --service hiveserver

Startup order:

start-all.sh

Start ZooKeeper

cd /opt/zookeeper

./bin/zkServer.sh start

Start HBase

cd /opt/hbase

bin/start-hbase.sh

Start Hive

cd /opt/hive

bin/hive --service hiveserver

P.S. Shut the services down in the reverse order.
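The ordering rule can be written down as a small script. This is a sketch only: the start commands are the ones used in this guide, written with full paths, while the stop commands (stop-all.sh, zkServer.sh stop, stop-hbase.sh) are the standard counterparts and do not appear in the original. Hive has no entry in the stop list because the Hive service started above is a foreground process; ending that process comes first when shutting down. The helper name reverse_lines is illustrative.

```shell
# Sketch: print the start sequence and the matching shutdown sequence.
# Stop commands are assumed counterparts, not taken from the original guide.
START_STEPS='start-all.sh
/opt/zookeeper/bin/zkServer.sh start
/opt/hbase/bin/start-hbase.sh
/opt/hive/bin/hive --service hiveserver'

# Listed in the same order as the start steps; reversed when printed.
STOP_STEPS='stop-all.sh
/opt/zookeeper/bin/zkServer.sh stop
/opt/hbase/bin/stop-hbase.sh'

reverse_lines() {
    # Print stdin with the line order reversed (portable stand-in for tac).
    awk '{ line[NR] = $0 } END { for (i = NR; i >= 1; i--) print line[i] }'
}

echo "Startup order:"
printf '%s\n' "$START_STEPS"
echo "Shutdown order (after ending the Hive process):"
printf '%s\n' "$STOP_STEPS" | reverse_lines
```

The script only prints the commands. Note that zkServer.sh has to be run on every ZooKeeper node, both when starting and when stopping.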