爬虫:抓取猫眼电影排行榜top100

简介: 使用requests 及正则表达式完成 ( python3)

import requests

import re

from requests.exceptions import RequestException ## 用来处理异常

import json

from multiprocessing import Pool ##多线程使用

headers = {

'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_4) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36'

}

def get_one_page(url):

try:

response = requests.get(url, headers=headers)

if response.status_code == 200:

return response.text

return None

except RequestException: ##RequestException 是所有异常的总称

return None

def parse_one_page(html):

pattern = re.compile('.*?board-index.*?">(\d+).*?data-src="(.*?)".*?name">

+ '.*?>(.*?).*?star">(.*?).*?releasetime">(.*?)'

+'.*?integer">(.*?).*?fraction">(.*?).*?',re.S)

items = re.findall(pattern,html)

print(items)

return items

def write_to_file2(item):

with open('result_table.txt','a') as f:

f.write(item[0] + '\t' + item[1] + '\t' +item[2] + '\t' +item[3].strip().split(':')[1] \

+ '\t' + item[4].split(':')[1] + '\t' + str(item[5]+item[6]) + '\n')

def main(offset):

url = 'http://maoyan.com/board/4?offset=' + str(offset) ##网址有防护机制,需要加一个headers.

##这个url是top100的链接,http://maoyan.com/board/4?offset=10 则表示这是第十页

html = get_one_page(url)

items = parse_one_page(html)

for item in items:

write_to_file2(item)

if __name__ == '__main__':

##使用多线程方法:

pool =Pool()

pool.map(main,[i*10 for i in range(10)]) ##速度很快

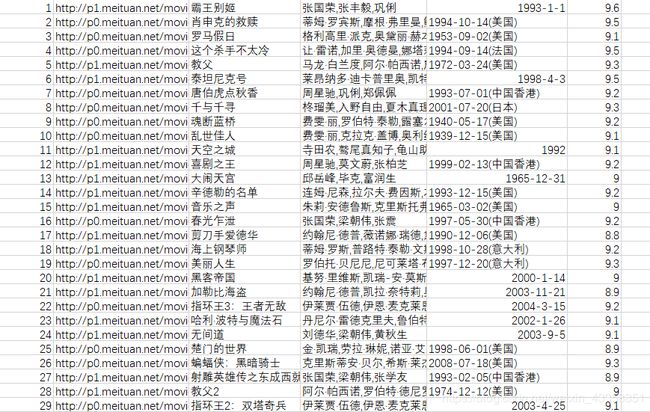

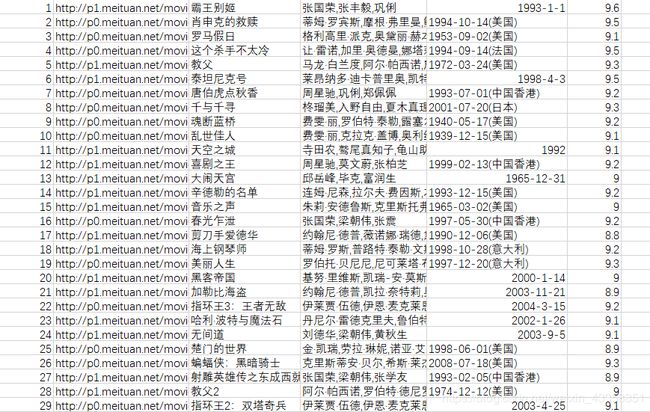

结果展示: