Flink笔记

一、Flink简介

1 重要特点:

1.1 事件驱动型(Event-driven)

比较典型的就是以 kafka 为代表的消息队列几乎都是事件驱动型应用。

1.2 流与批

批处理的特点是有界、持久、大量,非常适合需要访问全套记录才能完成的计

算工作,一般用于离线统计。

流处理的特点是无界、实时, 无需针对整个数据集执行操作,而是对通过系统

传输的每个数据项执行操作,一般用于实时统计。

1.3 分层API

Flink 几大模块

Flink Table & SQL(还没开发完)

Flink Gelly(图计算)

Flink CEP(复杂事件处理)

二、快速上手

2.1 搭建 maven 工程

2.1.1 pom 文件

4.0.0

com.atguigu.flink

FlinkTutorial

1.0-SNAPSHOT

org.apache.flink

flink-scala_2.11

1.7.2

org.apache.flink

flink-streaming-scala_2.11

1.7.2

net.alchim31.maven

scala-maven-plugin

3.4.6

testCompile

org.apache.maven.plugins

maven-assembly-plugin

3.0.0

jar-with-dependencies

make-assembly

package

single

2.1.2 添加 scala 框架 和 scala 文件夹

2.2 批处理 wordcount

object WordCount {

def main(args: Array[String]): Unit = {

// 创建执行环境

val env = ExecutionEnvironment.getExecutionEnvironment

// 从文件中读取数据

val inputPath = "D:\\Projects\\BigData\\TestWC1\\src\\main\\resources\\hello.txt"

val inputDS: DataSet[String] = env.readTextFile(inputPath)

val wordCountDS: AggregateDataSet[(String, Int)] = inputDS.flatMap(_.split("

")).map((_, 1)).groupBy(0).sum(1)

// 打印输出

wordCountDS.print()

}

}

2.3 流处理 wordcount

object StreamWordCount {

def main(args: Array[String]): Unit = {

// 从外部命令中获取参数

val params: ParameterTool = ParameterTool.fromArgs(args)

val host: String = params.get("host")

val port: Int = params.getInt("port")

// 创建流处理环境

val env = StreamExecutionEnvironment.getExecutionEnvironment

// 接收 socket文本流

val textDstream: DataStream[String] = env.socketTextStream(host, port)

// flatMap和 Map需要引用的隐式转换

import org.apache.flink.api.scala._

val dataStream: DataStream[(String, Int)] =

textDstream.flatMap(_.split("\\s")).filter(_.nonEmpty).map((_, 1)).keyBy(0).sum(1)

dataStream.print().setParallelism(1)

// 启动 executor ,执行任务

env.execute("Socket stream word count")

}

}

三、Flink 部署

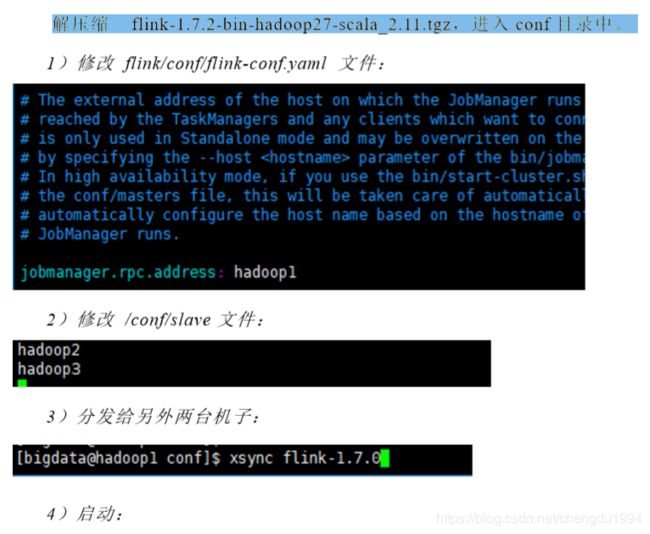

3.1 Standalone 模式

3.1.1 安装

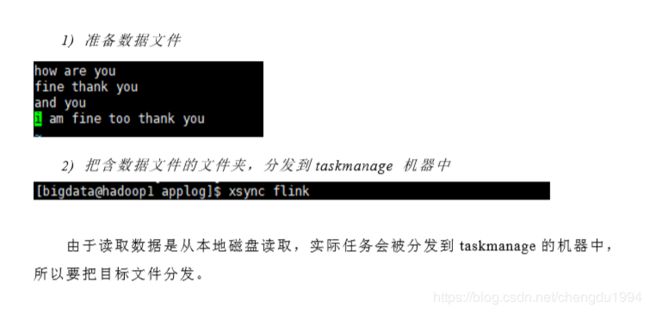

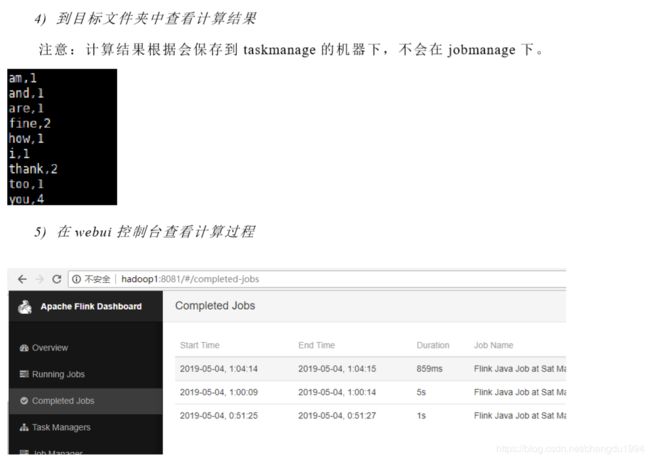

3.1.2 提交任务

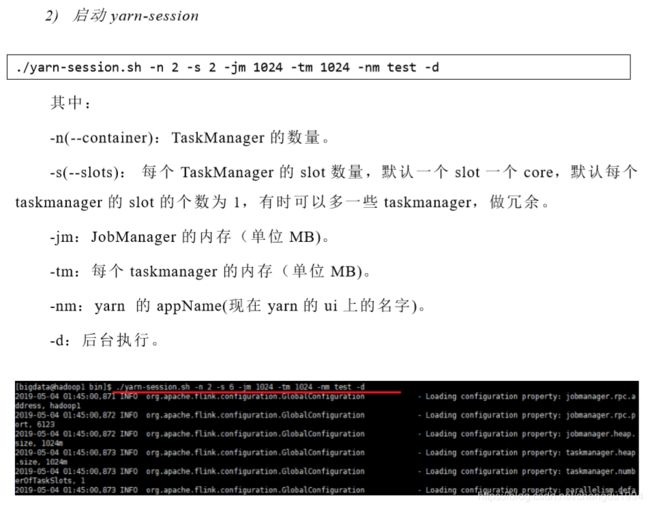

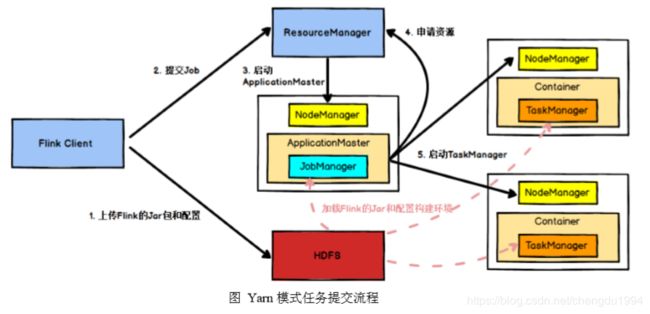

3.2 Yarn 模式

3.3 Kubernetes 部署

容器化部署时目前业界很流行的一项技术,基于 Docker 镜像运行能够让用户更加方便地对应用进行管理和运维。容器管理工具中最为流行的就是 Kubernetes(k8s),而 Flink 也在最近的版本中支持了 k8s 部署模式。

1 )

搭建Kubernetes

集群(略)

2 )

配置各组件的yaml文件

在 k8s 上构建 Flink Session Cluster,需要将 Flink 集群的组件对应的 docker 镜像分别在 k8s 上启动,包括 JobManager、TaskManager、JobManagerService 三个镜像服务。每个镜像服务都可以从中央镜像仓库中获取。

3 )启动

Flink Session Cluster

// 启动jobmanager-service 服务

kubectl create -f jobmanager-service.yaml

// 启动jobmanager-deployment服务

kubectl create -f jobmanager-deployment.yaml

// 启动taskmanager-deployment服务

kubectl create -f taskmanager-deployment.yaml

4 ) 访问Flink UI页面

集群启动后,就可以通过 JobManagerServicers 中配置的 WebUI 端口,用浏览器

输入以下 url 来访问 Flink UI 页面了:

http://{JobManagerHost:Port}/api/v1/namespaces/default/services/flink-jobmanage

r:ui/proxy

四、 Flink 运行架构

4.1 Flink 运行时的组件

Flink 运行时架构主要包括四个不同的组件,它们会在运行流处理应用程序时协同工作:

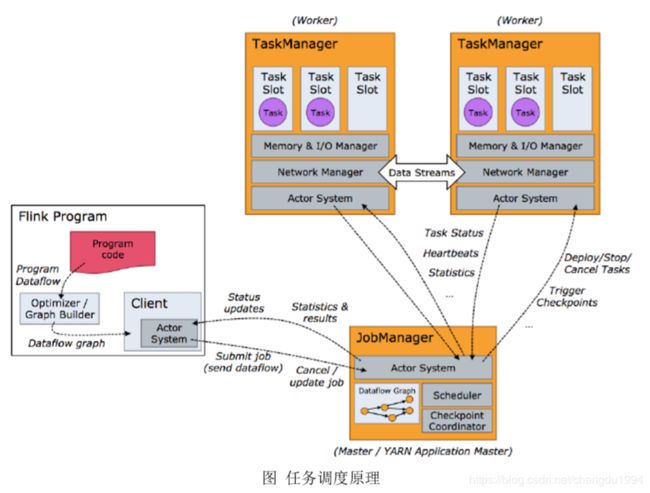

作业管理器(JobManager)、资源管理器(ResourceManager)、任务管理器(TaskManager),以及分发器(Dispatcher)。因为 Flink 是用 Java 和 Scala 实现的,所以所有组件都会运行在Java 虚拟机上。

4.2 任务提交流程

4.3 任务调度原理

4.3.1 TaskManger 与 Slots

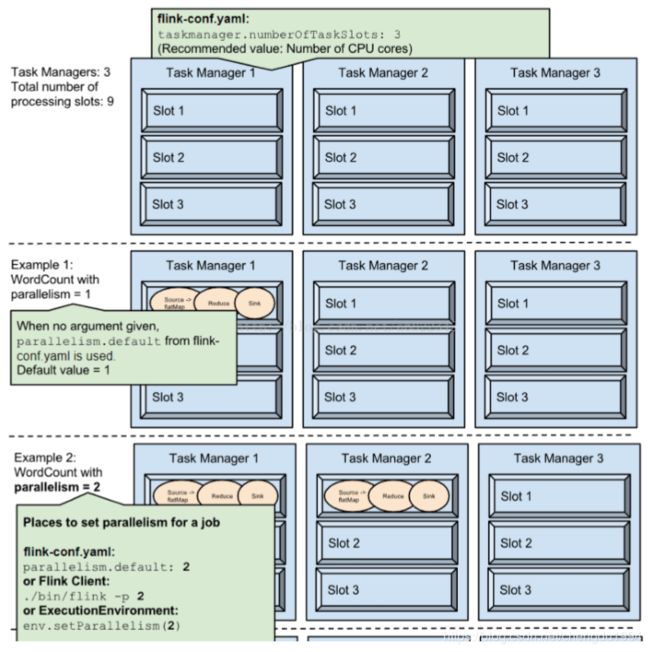

Flink 每一个 worker(TaskManager)是一个 JVM 进程,它可能会在独立的线程上执行一个或多个 subtask。为了控制 worker 接收多少 task,worker 通过 task slot 控制(一个 worker 至少有一个 task slot)。

Task Slot 是静态概念,指 TaskManager 具有的并发执行能力,通过参数 taskmanager.numberOfTaskSlots 进行配置;

并行度 parallelism 是动态概念,指TaskManager 运行程序时实际使用的并发能力,通过参数 parallelism.default进行配置。

4.3.2 程序与数据流(DataFlow)

所有的 Flink 程序都是由三部分组成的: Source 、Transformation 和 Sink

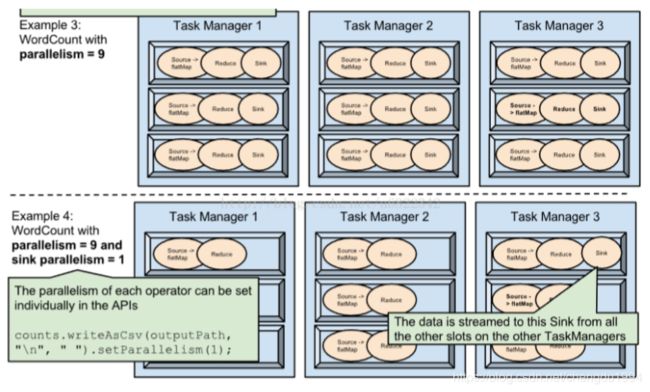

4.3.3 执行图(ExecutionGraph)

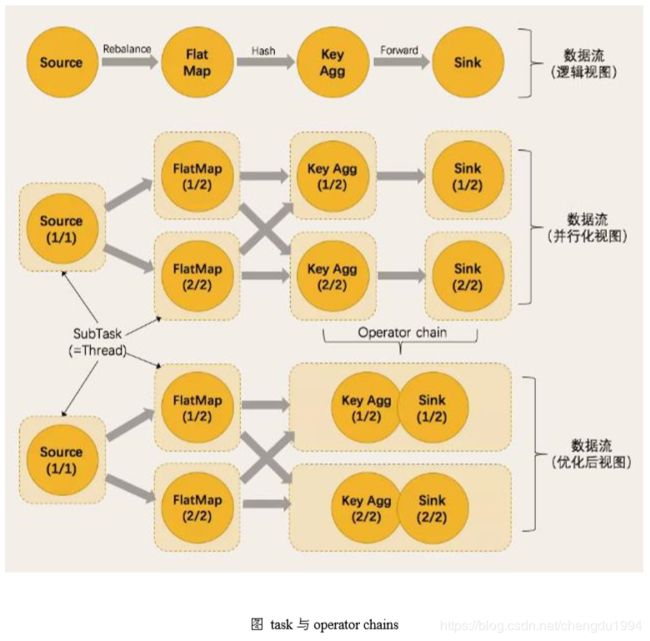

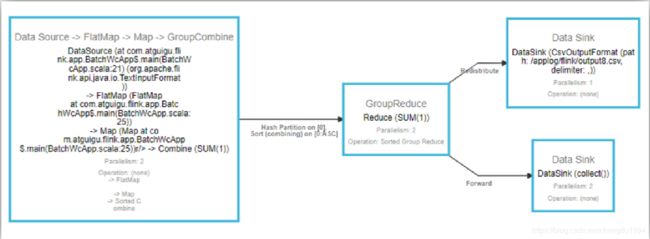

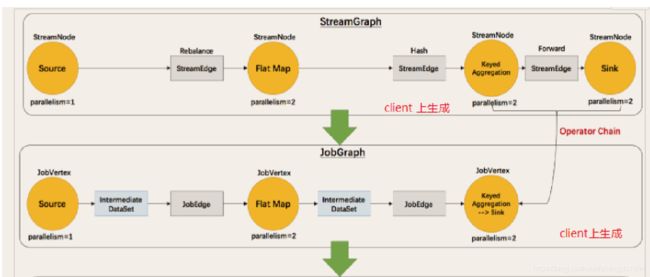

为了执行一个流处理程序,Flink 需要将逻辑流图转换为物理数据流图(也叫执行图)。

Flink 中的执行图可以分成四层:StreamGraph -> JobGraph -> ExecutionGraph -> 物理执行图。

4.3.4 并行度(Parallelism)

一个特定算子的子任务(subtask)的个数被称之为其并行度(parallelism)。

一个流程序的并行度,是其所有算子中最大的并行度。一个程序中,不同的算子可以具有不同的并行度。

Stream 在算子之间传输数据形式是 one-to-one(forwarding)模式(窄依赖 )和redistributing 的模式(宽依赖 )。

One-to-one:map、fliter、flatMap

Redistributing:keyBy() 基于 hashCode 重分区、broadcast 和 rebalance会随机重新分区。

4.3.5 任务链(Operator Chains)

五、Flink 流处理 API

5.1 Environment

5.1.1 getExecutionEnvironment

val env: ExecutionEnvironment = ExecutionEnvironment. getExecutionEnvironment //scala

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment(); //java

5.1.2 createLocalEnvironment

返回本地执行环境,需要在调用时指定默认的并行度。

val env = StreamExecutionEnvironment.createLocalEnvironment(1) //scala

LocalStreamEnvironment localEnvironment = StreamExecutionEnvironment.createLocalEnvironment(1);//java

5.1.3 createRemoteEnvironment

返回集群执行环境,将 Jar 提交到远程服务器。在调用时指定 JobManager 的 IP 和端口号,并指定要在集群中运行的 Jar 包

//scala

val env = ExecutionEnvironment.createRemoteEnvironment("jobmanage-hostname",6123,"YOURPATH//wordcount.jar")

//java

ExecutionEnvironment remoteEnvironment = ExecutionEnvironment.createRemoteEnvironment("jobmanage-hostname", 6123, "YOURPATH//wordcount.jar");

5.2 Source

5.2.1 从集合读取数据

//scala

// 定义样例类,传感器 id ,时间戳,温度

case class SensorReading(id: String, timestamp: Long, temperature: Double)

object Sensor {

def main(args: Array[String]): Unit = {

val env = StreamExecutionEnvironment.getExecutionEnvironment

val stream1 = env.fromCollection(List(

SensorReading("sensor_1", 1547718199, 35.80018327300259),

SensorReading("sensor_6", 1547718201, 15.402984393403084),

SensorReading("sensor_7", 1547718202, 6.720945201171228),

SensorReading("sensor_10", 1547718205, 38.101067604893444)

))

stream1.print("stream1:").setParallelism(1)

env.execute()

}

}

5.2.2 从文件读取数据

val stream2 = env.readTextFile("YOUR_FILE_PATH") //scala

5.2.3 kafka

<-- pom.xml引入kafka连接器 -->

<! -- https://mvnrepository.com/artifact/org.apache.flink/flink - connector - kafka - 0.11 -- >

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka-0.11_2.11</artifactId>

<version>1.7.2</version>

</dependency>

//scala

val properties = new Properties()

properties.setProperty("bootstrap.servers", "localhost:9092")

properties.setProperty("group.id", "consumer-group")

properties.setProperty("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer")

properties.setProperty("value.deserializer","org.apache.kafka.common.serialization.StringDeserializer)

properties.setProperty("auto.offset.reset", "latest")

val stream3 = env.addSource(new FlinkKafkaConsumer011[String]("sensor", new SimpleStringSchema(), properties))

//java

public class StreamKafkaSource {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.setStateBackend(new FsStateBackend("hdfs://xxxx"));

env.setParallelism(1);

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

env.enableCheckpointing(1000);

env.getCheckpointConfig().setCheckpointingMode(CheckpointingMode.EXACTLY_ONCE);

env.getCheckpointConfig().setCheckpointTimeout(60000);

env.getCheckpointConfig().enableExternalizedCheckpoints(CheckpointConfig.ExternalizedCheckpointCleanup.RETAIN_ON_CANCELLATION);

String topic = "topic01";

Properties properties = new Properties();

properties.setProperty("bootstrap.servers","hadoop110:9092");

properties.setProperty("group.id","con1");

DataStreamSource<String> inputStream = env.addSource(new FlinkKafkaConsumer011<>(topic, new SimpleStringSchema(), properties));

inputStream.print();

env.execute("kafkaSource");

}

}

5.3 Transform

5.3.1 map

val streamMap = stream.map { x => x * 2 } //scala

SingleOutputStreamOperator<Long> StreamMap = inputStream.map(new MapFunction<String, Long>() {

@Override

public Long map(String s) throws Exception {

return Long.parseLong(s) * 2;

}

});

5.3.2 flatMap

List(“a b”, “c d”).flatMap(line ⇒ line.split(" "))

结果是 List(a, b, c, d)。

val streamFlatMap = stream.flatMap{x => x.split(",")}

inputStream.flatMap(new FlatMapFunction<String, String[]>() {

@Override

public void flatMap(String s, Collector<String[]> collector) throws Exception {

String[] strings = s.split(",");

collector.collect(strings);

}

});

5.3.3 Filter

val streamFilter = stream.filter{ x => x == 1 }

inputStream.filter(new FilterFunction<String>() {

@Override

public boolean filter(String s) throws Exception {

long sI = Integer.parseInt(s);

return sI == 1;

}

});

5.3.4 KeyBy

DataStream → KeyedStream:逻辑地将一个流拆分成不相交的分区,每个分区包含具有相同 key 的元素,在内部以 hash 的形式实现的。

5.3.5 滚动聚合算子(Rolling Aggregation)

这些算子针对 KeyedStream 的每一个支流做聚合。 如 sum() min() max() minBy() maxBy()

5.3.6 Reduce

KeyedStream → DataStream:一个分组数据流的聚合操作,合并当前的元素和上次聚合的结果,产生一个新的值,返回的流中包含每一次聚合的结果,而不是只返回最后一次聚合的最终结果。

//scala

case class SensorReading(id:String,timestamp:Long,temperature:Double)

val stream2 = env.readTextFile("YOUR_PATH\\sensor.txt")

.map( data => {

val dataArray = data.split(",")

SensorReading(dataArray(0).trim, dataArray(1).trim.toLong, dataArray(2).trim.toDouble)

})

.keyBy("id")

.reduce( (x, y) => SensorReading(x.id, x.timestamp + 1, y.temperature) )

@Data

@NoArgsConstructor

@AllArgsConstructor

class Teacher implements Serializable {

private static final long serialVersionUID = 2002025547840257105L;

private String id;

private Long timestamp;

private Double temperature;

public static void main(String[] args) {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

DataStreamSource<String> stringDataStreamSource = env.readTextFile("YOUR_PATH\\\\sensor.txt");

SingleOutputStreamOperator<Teacher> reduceStream = stringDataStreamSource.map(new MapFunction<String, Teacher>() {

@Override

public Teacher map(String s) throws Exception {

String[] dataArrays = s.split(",");

new Teacher(dataArrays[0].toString().trim(), Long.parseLong(dataArrays[1]), Double.parseDouble(dataArrays[2]));

return null;

}

}).keyBy(0)

.reduce(new ReduceFunction<Teacher>() {

@Override

public Teacher reduce(Teacher t0, Teacher t1) throws Exception {

return new Teacher(t0.id,t0.timestamp,t0.temperature+t1.temperature);

}

});

}

}

5.3.7 Split 和 Select

//分流操作 scala

//1.spilt

val splitStream = dataStream.split(

data => {

if (data.temperature > 30) Seq("high")

else Seq("low")

}

)

//2.select

val highTempStrem = splitStream.select("high")

val lowTempStream = splitStream.select("low")

val allTempStream = splitStream.select("high","low")

//split分流 java

SplitStream<Teacher> splitStream = teacherStream.split(new OutputSelector<Teacher>() {

@Override

public Iterable<String> select(Teacher teacher) {

ArrayList<String> output = new ArrayList<String>();

if (teacher.temperature > 30) {

output.add("high");

} else {

output.add("low");

}

return output;

}

});

//select

DataStream<Teacher> highStream = splitStream.select("high");

DataStream<Teacher> lowStream = splitStream.select("low");

DataStream<Teacher> allStream = splitStream.select("low","higth");

5.3.8 Connect 和 CoMap

//scala

val warning = high.map( sensorData => (sensorData.id,

sensorData.temperature) )

val connected = warning.connect(low)

val coMap = connected.map(

warningData => (warningData._1, warningData._2, "warning"),

lowData => (lowData.id, "healthy")

)

//java

//connect

ConnectedStreams<Teacher, Teacher> connectedStreams = highStream.connect(lowStream);

//CoMap

SingleOutputStreamOperator<Tuple2<String, Double>> coMapStream = connectedStreams.map(new CoMapFunction<Teacher, Teacher, Tuple2<String, Double>>() {

@Override

public Tuple2<String, Double> map1(Teacher teacher) throws Exception {

Tuple2<String, Double> t1 = new Tuple2<>();

t1.setFields(teacher.id, teacher.temperature + 1);

return t1;

}

@Override

public Tuple2<String, Double> map2(Teacher teacher) throws Exception {

Tuple2<String, Double> t2 = new Tuple2<>();

t2.setFields(teacher.id, teacher.temperature + 1);

return t2;

}

});

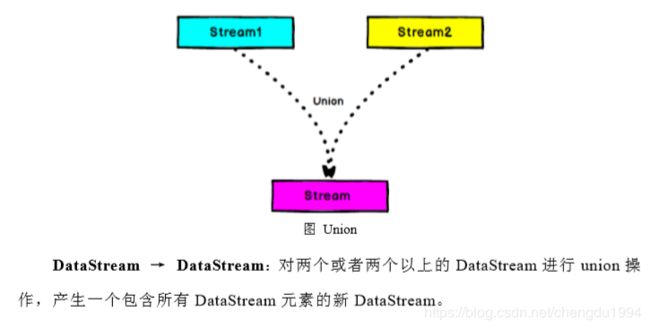

5.3.9 Union

Connect 与 Union 区别

//union scala

val unionStream = highTempStrem.union(lowTempStream)

//RichMapFunction

val mapStream = unionStream.map(new RichMapFunction[Teacher, String] {

override def map(in: Teacher): String = {

var stringBuffer: StringBuilder = new StringBuilder()

stringBuffer.append(in.id).append("==").append(in.temperature.toString).toString()

}

})

DataStreamSource<String> stream1 = env.socketTextStream("hadoop01", 9090);

DataStreamSource<String> stream2 = env.socketTextStream("hadoop02", 9091);

DataStream<String> unionStream = stream1.union(stream2);

SingleOutputStreamOperator<String> mapStream = unionStream.map(new MapFunction<String, String>() {

@Override

public String map(String s) throws Exception {

return s;

}

});

5.4 支持的数据类型

- 基础数据类型

- Java 和 Scala 元组(Tuples)

- Scala 样例类(case classes)

- Java 简单对象(POJOs)

- 其它(Arrays, Lists, Maps, Enums, 等等)

5.5 实现 UDF 函数——更细粒度的控制流

5.5.1 函数类(Function Classes)

Flink 暴露了所有 udf 函数的接口(实现方式为接口或者抽象类)。例如MapFunction, FilterFunction, ProcessFunction 等。

实现FilterFunction接口

val flinkTweets = tweets.filter(new FlinkFilter)

class FilterFilter extends FilterFunction[String] {

override def filter(value: String): Boolean = {

value.contains("flink")

}

}

将函数实现成匿名类

val flinkTweets = tweets.filter(

new RichFilterFunction[String] {

override def filter(value: String): Boolean = {

value.contains("flink")

}

}

)

过滤条件当作参数

val tweets: DataStream[String] = ...

val flinkTweets = tweets.filter(new KeywordFilter("flink"))

class KeywordFilter(keyWord: String) extends FilterFunction[String] {

override def filter(value: String): Boolean = {

value.contains(keyWord)

}

}

匿名函数(Lambda Functions)

val tweets: DataStream[String] = ...

val flinkTweets = tweets.filter(_.contains("flink"))

5.5.2 富函数(Rich Functions)

“富函数”是 DataStream API 的一个函数类接口,所有 Flink 函数类都有其 Rich 版本。它与常规函数不同在于可以获取运行环境的上下文,拥有一些生命周期方法,实现更复杂功能。

RichMapFunction

RichFlatMapFunction

RichFilterFunction

…

Rich Function 有一个生命周期的概念。典型的生命周期方法有:

open()方法是 rich function 的初始化方法,当一个算子如 map 或者 filter被调用之前 open()会被调用。

close()方法是生命周期中最后一个调用的方法,做一些清理工作。

getRuntimeContext()方法提供了函数的 RuntimeContext 一些信息,例如函数执行的并行度,任务的名字,以及 state 状态

class MyFlatMap extends RichFlatMapFunction[Int, (Int, Int)] {

var subTaskIndex = 0

override def open(configuration: Configuration): Unit = {

subTaskIndex = getRuntimeContext.getIndexOfThisSubtask

// 以下可以 做一些初始化工作 , 例如建立一个和 HDFS的连接

}

override def flatMap(in: Int, out: Collector[(Int, Int)]): Unit = {

if (in % 2 == subTaskIndex) {

out.collect((subTaskIndex, in))

}

}

override def close(): Unit = {

// 以下做一些 清理工作, 例如 断开和 HDFS的连接。

}

}

5.6 Sink

stream.addSink(new MySink(xxxx))

5.6.1 Kafka

<! -- https://mvnrepository.com/artifact/org.apache.flink/flink - connector - kafka - 0.11 -- > <dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka-0.11_2.11</artifactId>

<version>1.7.2</version>

</dependency>

//scala

object KafkaSinkTest {

def main(args: Array[String]): Unit = {

val env = StreamExecutionEnvironment.getExecutionEnvironment

env.setParallelism(1)

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime)

val properties = new Properties()

properties.setProperty("bootstrap.servers","localhost:9092")

properties.setProperty("group.id","consumer-group")

properties.setProperty("key.deserializer","org.apache.kafka.common.serialization.StringDeserializer")

properties.setProperty("value.deserializer","org.apache.kafka.common.serialization.StringDeserializer")

properties.setProperty("auto.offset.reset","latest")

val inputStream = env.addSource(new FlinkKafkaConsumer011[String]("topic0101",SimpleStringSchema,properties))

val outputStream = inputStream.map(

data => {

val strings = data.split(",")

Teacher(strings(0).trim, strings(1).trim.toLong, strings(2).trim.toDouble).toString

})

outputStream.addSink(new FlinkKafkaProducer011[String]("topic",new SimpleStringSchema(),properties))

env.execute("kafkaSink")

}

}

/**

1. KafkaSink java

*/

public class StreamKafKaSink {

public static void main(String[] args) {

//获取Flink的运行环境

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.setParallelism(1);

//checkpoint

env.enableCheckpointing(1000);

env.getCheckpointConfig().setCheckpointingMode(CheckpointingMode.EXACTLY_ONCE);

env.getCheckpointConfig().setMinPauseBetweenCheckpoints(500);

env.getCheckpointConfig().setCheckpointTimeout(60000);

env.getCheckpointConfig().setMaxConcurrentCheckpoints(1);

env.getCheckpointConfig().enableExternalizedCheckpoints(CheckpointConfig.ExternalizedCheckpointCleanup.RETAIN_ON_CANCELLATION);

//statebackend

env.setStateBackend(new FsStateBackend("hdfs://hadoop100:9000/flink/checkpoints",true));

DataStream<String> inputStream = env.socketTextStream("hadpoop01", 9001, ',',5);

String brokerList = "hadoop110:9092";

String topic = "t1";

Properties properties = new Properties();

properties.setProperty("bootstrap.servers",brokerList);

//第一种解决方案,设置FlinkKafkaProducer011里面的事务超时时间

//设置事务超时时间

properties.setProperty("transaction.timeout.ms",60000*15+"");

//第二种解决方案,设置kafka的最大事务超时时间

FlinkKafkaProducer011<String> myProducer = new FlinkKafkaProducer011<>(brokerList, topic, new SimpleStringSchema());

//使用仅一次语义的kafkaProducer

FlinkKafkaProducer011<String> myProducer2 = new FlinkKafkaProducer011<>(topic, new KeyedSerializationSchemaWrapper<String>(new SimpleStringSchema())

, properties, FlinkKafkaProducer011.Semantic.EXACTLY_ONCE);

inputStream.addSink(myProducer2);

env.execute("kafkaSink");

}

}

六、Flink 中的 Window

6.1 Window

-

滚动窗口(Tumbling Windows)

将数据依据固定的窗口长度对数据进行切片。

特点:时间对齐,窗口长度固定,没有重叠 -

滑动窗口

是固定窗口的更广义的一种形式,滑动窗口由固定的窗口长度和滑动间隔组成。

特点:时间对齐,窗口长度固定,可以有重叠。 -

会话窗口(Session Windows)

由一系列事件组合一个指定时间长度的 timeout 间隙组成,类似于 web 应用的session,也就是一段时间没有接收到新数据就会生成新的窗口。

特点:时间无对齐。

6.2 Window API

6.2.1 TimeWindow

TimeWindow 将指定时间范围内的所有数据组成一个 window,一次对一个window 里的所有数据进行计算。

- 滚动窗口

val minTempPerWindow = dataStream

.map(r => (r.id, r.temperature))

.keyBy(_._1)

.timeWindow(Time.seconds(15))

.reduce((r1, r2) => (r1._1, r1._2.min(r2._2)))

- 滑动窗口(SlidingEventTimeWindows)

val minTempPerWindow: DataStream[(String, Double)] = dataStream

.map(r => (r.id, r.temperature))

.keyBy(_._1)

.timeWindow(Time.seconds(15), Time.seconds(5))

.reduce((r1, r2) => (r1._1, r1._2.min(r2._2)))

// .window(EventTimeSessionWindows.withGap(Time.minutes(10))

6.2.2 CountWindow

- 滚动窗口

val minTempPerWindow: DataStream[(String, Double)] = dataStream

.map(r => (r.id, r.temperature))

.keyBy(_._1)

.countWindow(5)

.reduce((r1, r2) => (r1._1, r1._2.max(r2._2)))

- 滑动窗口

val keyedStream: KeyedStream[(String, Int), Tuple] = dataStream

.map(r => (r.id, r.temperature))

.keyBy(0)

// 每当某一个 key的个数达到 2的时候 , 触发计算,计算最近该 key最近 10个元素的内容

val windowedStream: WindowedStream[(String, Int), Tuple, GlobalWindow] = keyedStream

.countWindow(10,2)

val sumDstream: DataStream[(String, Int)] = windowedStream.sum(1)

6.2.3 window function

window function 定义了要对窗口中收集的数据做的计算操作,主要可以分为两类:

- 增量聚合函数(incremental aggregation functions)

每条数据到来就进行计算,保持一个简单的状态。典型的增量聚合函数有

ReduceFunction, AggregateFunction。 - 全窗口函数(full window functions)

先把窗口所有数据收集起来,等到计算的时候会遍历所有数据。

ProcessWindowFunction 就是一个全窗口函数

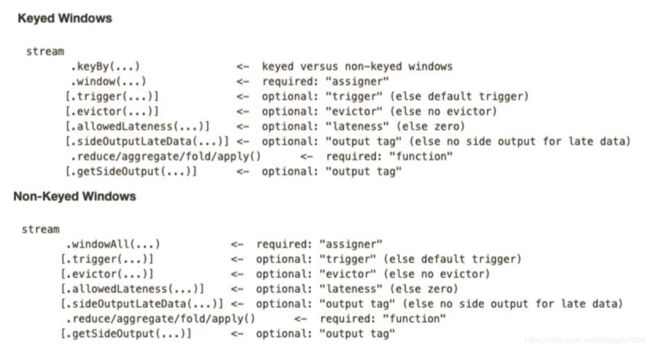

6.2.4 其它可选 API

- trigger() —— 触发器

定义 window 什么时候关闭,触发计算并输出结果 - evitor() —— 移除器

定义移除某些数据的逻辑 - allowedLateness() —— 允许处理迟到的数据

- sideOutputLateData() —— 将迟到的数据放入侧输出流

- getSideOutput() —— 获取侧输出流

七、时间语义与 Wartermark

7.1 Flink 中的时间语义

Event Time:是事件创建的时间。它通常由事件中的时间戳描述,例如采集的日志数据中,每一条日志都会记录自己的生成时间,Flink 通过时间戳分配器访问事件时间戳。

Ingestion Time:是数据进入 Flink 的时间。

Processing Time:是每一个执行基于时间操作的算子的本地系统时间,与机器相关,默认的时间属性就是 Processing Time。

7.2 EventTime 的引入

val env = StreamExecutionEnvironment.getExecutionEnvironment

// 从调用时刻开始给 env创建的每一个 stream追加时间特征

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime)

7.3 Watermark

- Watermark 是一种衡量 Event Time 进展的机制。

- Watermark 是用于处理乱序事件的,而正确的处理乱序事件,通常用Watermark 机制结合 window 来实现。

- 数据流中的 Watermark 用于表示 timestamp 小于 Watermark 的数据,都已经到达了,因此,window 的执行也是由 Watermark 触发的。

- Watermark 可以理解成一个延迟触发机制,我们可以设置 Watermark 的延时时长t,每次系统会校验已经到达的数据中最大的 maxEventTime,然后认定 eventTime小于 maxEventTime - t 的所有数据都已经到达,如果有窗口的停止时间等于maxEventTime – t,那么这个窗口被触发执行

7.3.1 Watermark 的引入

- 方法1

val dataStream = inputStream.map(

data => {

val dataArrays = data.split(",")

Teacher(dataArrays(0).trim, dataArrays(1).trim.toLong, dataArrays(2).trim.toDouble)

}

//从Teacher中提取时间戳并设置watermarks方法1

).assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor[Teacher](Time.seconds(3)) {

override def extractTimestamp(element: Teacher): Long = element.timestamp * 1000L

})

- 方法2

val resultStream = inputStream.map(

data => { //包装Teacher

val dataArrays = data.split(",")

Teacher(dataArrays(0).trim, dataArrays(1).trim.toLong, dataArrays(2).trim.toDouble)

}

).assignTimestampsAndWatermarks(new MyAssigners()) //获取时间戳和设置watermarks方法2

//方法2 周期性watermarks

class MyAssigners() extends AssignerWithPeriodicWatermarks[Teacher]{

//定义固定延迟为5秒

val bound:Long = 5*1000L

//定义当前收到的最大时间戳

var maxTs:Long = Long.MinValue

override def getCurrentWatermark: Watermark = {

new Watermark(maxTs-bound)

}

override def extractTimestamp(element: Teacher, previousElementTimestamp: Long): Long = {

maxTs = maxTs.max(element.timestamp*1000L)

element.timestamp*1000L

}

}

- 方法3

val resultStream = inputStream.map(

data => { //包装Teacher

val dataArrays = data.split(",")

Teacher(dataArrays(0).trim, dataArrays(1).trim.toLong, dataArrays(2).trim.toDouble)

}

).assignTimestampsAndWatermarks(new MyAssigners()) //获取时间戳和设置watermarks方法3

//方法2-2 不定时watermarks

class MyAssigners2() extends AssignerWithPunctuatedWatermarks[Teacher]{

val bound:Long = 5*1000L

override def checkAndGetNextWatermark(lastElement: Teacher, extractedTimestamp: Long): Watermark = {

if(lastElement.id == "teacher01"){

new Watermark(extractedTimestamp-bound)

}else{

null

}

}

override def extractTimestamp(element: Teacher, previousElementTimestamp: Long): Long = {

element.timestamp*1000L

}

}

八、ProcessFunction API(底层 API)

DataStream API 提供了一系列的 Low-Level 转换算子。可以访问时间戳、watermark 以及注册定时事件。还可以输出特定的一些事件,例如超时事件等。Process Function 用来构建事件驱动的应用以及实现自定义的业务逻辑(使用之前的window 函数和转换算子无法实现)。

Flink 提供了 8 个 Process Function:

- ProcessFunction

- KeyedProcessFunction

- CoProcessFunction

- ProcessJoinFunction

- BroadcastProcessFunction

- KeyedBroadcastProcessFunction

- ProcessWindowFunction

- ProcessAllWindowFunction

8.1 KeyedProcessFunction

KeyedProcessFunction 用来操作 KeyedStream。

KeyedProcessFunction 会处理流的每一个元素,输出为 0 个、1 个或者多个元素。所有的 Process Function 都继承自RichFunction 接口,所以都有 open()、close()和 getRuntimeContext()等方法。

KeyedProcessFunction[KEY, IN, OUT]还额外提供了两个方法:

- processElement(v: IN, ctx: Context, out: Collector[OUT]), 流中的每一个元素都会调用这个方法,调用结果将会放在 Collector 数据类型中输出。Context可以访问元素的时间戳,元素的 key,以及 TimerService 时间服务。Context还可以将结果输出到别的流(side outputs)。

- onTimer(timestamp: Long, ctx: OnTimerContext, out: Collector[OUT])是一个回调函数。当之前注册的定时器触发时调用。参数 timestamp 为定时器所设定的触发的时间戳。Collector 为输出结果的集合。OnTimerContext 和processElement 的 Context 参数一样,提供了上下文的一些信息,例如定时器触发的时间信息(事件时间或者处理时间)。

8.2 TimerService 和 定时器(Timers)

Context 和 OnTimerContext 所持有的 TimerService 对象拥有以下方法:

- currentProcessingTime(): Long 返回当前处理时间

- currentWatermark(): Long 返回当前 watermark 的时间戳

- registerProcessingTimeTimer(timestamp: Long): Unit 会注册当前 key 的processing time 的定时器。当 processing time 到达定时时间时,触发 timer。

- registerEventTimeTimer(timestamp: Long): Unit 会注册当前 key 的 event time 定时器。当水位线大于等于定时器注册的时间时,触发定时器执行回调函数。

- deleteProcessingTimeTimer(timestamp: Long): Unit 删除之前注册处理时间定时器。如果没有这个时间戳的定时器,则不执行。

- deleteEventTimeTimer(timestamp: Long): Unit 删除之前注册的事件时间定时器,如果没有此时间戳的定时器,则不执行。 当定时器 timer 触发时,会执行回调函数 onTimer()。注意定时器 timer 只能在keyed streams 上面使用。

8.3 侧输出流(SideOutput)

除了 split 算子,可以将一条流分成多条流,这些流的数据类型也都相同。process function 的 side outputs 功能可以产生多条流,并且这些流的数据类型可以不一样。一个 side output 可以定义为 OutputTag[X]对象,X 是输出流的数据类型。process function 可以通过 Context 对象发射一个事件到一个或者多个 side outputs。

val monitoredReadings: DataStream[SensorReading] = readings .process(new FreezingMonitor)

class FreezingMonitor extends ProcessFunction[SensorReading, SensorReading] {

// 定义一个侧输出标签

lazy val freezingAlarmOutput: OutputTag[String] = new OutputTag[String]("freezing-alarms")

override def processElement(r: SensorReading,

ctx: ProcessFunction[SensorReading, SensorReading]#Context,

out: Collector[SensorReading]): Unit = {

// 温度在 32F以下 时,输出警告信息

if (r.temperature < 32.0) {

ctx.output(freezingAlarmOutput, s"Freezing Alarm for ${r.id}")

}

// 所有数据直接常规输出到主流

out.collect(r)

}

}

monitoredReadings .getSideOutput(new OutputTag[String]("freezing-alarms")) .print()

8.4 CoProcessFunction

对于两条输入流,DataStream API 提供了 CoProcessFunction 这样的 low-level操作。CoProcessFunction 提供了操作每一个输入流的方法: processElement1()和processElement2()。

类似于 ProcessFunction,这两种方法都通过 Context 对象来调用。这个 Context对象可以访问事件数据,定时器时间戳,TimerService,以及 side outputs。

CoProcessFunction 也提供了 onTimer()回调函数。

九、 状态编程和容错机制

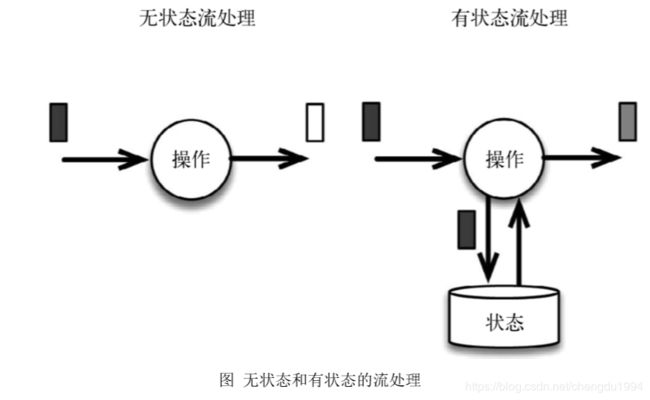

有状态的计算则会基于多个事件输出结果。以下是一些例子。

-

所有类型的窗口。例如,计算过去一小时的平均温度,就是有状态的计算。

-

所有用于复杂事件处理的状态机。例如,在一分钟内收到两个相差 20 度以上的温度读数,则发出警告,这是有状态的计算。

9.1 有状态的算子和应用程序

Flink 内置的很多算子,数据源 source,数据存储 sink 都是有状态的,流中的数据都是 buffer records,会保存一定的元素或者元数据。例如: ProcessWindowFunction会缓存输入流的数据,ProcessFunction 会保存设置的定时器信息等等。

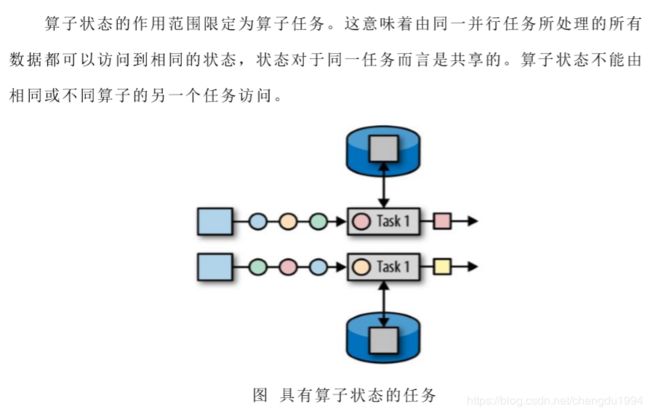

在 Flink 中,状态始终与特定算子相关联。总的来说,有两种类型的状态:

- 算子状态(operator state)

- 键控状态(keyed state)

9.3.1 算子状态(operator state)

- 列表状态(List state)

将状态表示为一组数据的列表。 - 联合列表状态(Union list state)

也将状态表示为数据的列表。它与常规列表状态的区别在于,在发生故障时,或者从保存点(savepoint)启动应用程序时如何恢复。 - 广播状态(Broadcast state)

如果一个算子有多项任务,而它的每项任务状态又都相同,那么这种特殊情况最适合应用广播状态。

9.3.2 键控状态(keyed state)

键控状态是根据输入数据流中定义的键(key)来维护和访问。Flink 为每个键值维护一个状态实例,并将具有相同键的所有数据都分区到同一个算子任务中,这个任务会维护和处理这个 key 对应的状态。当任务处理一条数据时,它会自动将状态的访问范围限定为当前数据的 key。因此具有相同 key 的所有数据都会访问相同的状态。Keyed State 很类似于一个分布式的 key-value map 数据结构,只能用于 KeyedStream(keyBy 算子处理之后)。

Flink 的 Keyed State 支持以下数据类型:

- ValueState[T]保存单个的值,值的类型为 T。

- get 操作: ValueState.value()

- set 操作: ValueState.update(value: T)

- ListState[T]保存一个列表,列表里的元素的数据类型为 T。基本操作如下:

- ListState.add(value: T)

- ListState.addAll(values: java.util.List[T])

- ListState.get()返回 Iterable[T]

- ListState.update(values: java.util.List[T])

- MapState[K, V]保存 Key-Value 对。

- MapState.get(key: K)

- MapState.put(key: K, value: V)

- MapState.contains(key: K)

- MapState.remove(key: K)

- ReducingState[T]

- AggregatingState[I, O]

State.clear()是清空操作。

val sensorData: DataStream[SensorReading] = ...

val keyedData: KeyedStream[SensorReading, String] = sensorData.keyBy(_.id)

val alerts: DataStream[(String, Double, Double)] = keyedData

.flatMap(new TemperatureAlertFunction(1.7))

class TemperatureAlertFunction(val threshold: Double) extends RichFlatMapFunction[SensorReading, (String, Double, Double)] {

private var lastTempState: ValueState[Double] = _

override def open(parameters: Configuration): Unit = {

val lastTempDescriptor = new ValueStateDescriptor[Double]("lastTemp", classOf[Double])

lastTempState = getRuntimeContext.getState[Double](lastTempDescriptor)

}

override def flatMap(reading: SensorReading, out: Collector[(String, Double, Double)]): Unit = {

val lastTemp = lastTempState.value()

val tempDiff = (reading.temperature - lastTemp).abs

if (tempDiff > threshold) {

out.collect((reading.id, reading.temperature, tempDiff))

}

this.lastTempState.update(reading.temperature)

}

}

val alerts: DataStream[(String, Double, Double)] = keyedSensorData

.flatMapWithState[(String, Double, Double), Double] {

case (in: SensorReading, None) =>

(List.empty, Some(in.temperature))

case (r: SensorReading, lastTemp: Some[Double]) =>

val tempDiff = (r.temperature - lastTemp.get).abs

if (tempDiff > 1.7) {

(List((r.id, r.temperature, tempDiff)), Some(r.temperature))

} else {

(List.empty, Some(r.temperature))

}

}

9.2 状态一致性

9.2.1 一致性级别

- at-most-once: 这其实是没有正确性保障的委婉说法——故障发生之后,计数结果可能丢失。同样的还有 udp。

- at-least-once: 这表示计数结果可能大于正确值,但绝不会小于正确值。也就是说,计数程序在发生故障后可能多算,但是绝不会少算。

- exactly-once: 这指的是系统保证在发生故障后得到的计数结果与正确值一致

Flink 的一个重大价值在于,它既保证了 exactly-once,也具有低延迟和高吞吐的处理能力。

9.2.2 端到端(end-to-end)状态一致性

9.3 检查点(checkpoint)

9.3.1 Flink 的检查点算法

待更新

9.3.2 Flink+Kafka 如何实现端到端的 exactly-once 语义

待更新

9.4 选择一个状态后端(state backend)

- MemoryStateBackend

- FsStateBackend

- RocksDBStateBackend

RocksDBStateBackend 的依赖

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-statebackend-rocksdb_2.11</artifactId>

<version>1.7.2</version>

</dependency>

设置状态后端为 FsStateBackend

val env = StreamExecutionEnvironment.getExecutionEnvironment

val checkpointPath: String = ???

val backend = new RocksDBStateBackend(checkpointPath)

env.setStateBackend(backend)

env.setStateBackend(new FsStateBackend("file:///tmp/checkpoints"))

env.enableCheckpointing(1000)

// 配置重启策略

env.setRestartStrategy(RestartStrategies.fixedDelayRestart(60, Time.of(10, TimeUnit.SECONDS)))

十、Table API 与 SQL

10.1 需要引入的 pom 依赖

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table_2.11</artifactId>

<version>1.7.2</version>

</dependency>

10.2 了解 TableAPI

def main(args: Array[String]): Unit = { val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

val myKafkaConsumer: FlinkKafkaConsumer011[String] = MyKafkaUtil.getConsumer("ECOMMERCE")

val dstream: DataStream[String] = env.addSource(myKafkaConsumer)

val tableEnv: StreamTableEnvironment = TableEnvironment.getTableEnvironment(env)

val ecommerceLogDstream: DataStream[EcommerceLog] = dstream.map{ jsonString => JSON.parseObject(jsonString,classOf[EcommerceLog]) }

val ecommerceLogTable: Table = tableEnv.fromDataStream(ecommerceLogDstream)

val table: Table = ecommerceLogTable.select("mid,ch").filter("ch ='appstore'")

val midchDataStream: DataStream[(String, String)] = table.toAppendStream[(String,String)]

midchDataStream.print()

env.execute() }

10.2.1 动态表

如果流中的数据类型是 case class 可以直接根据 case class 的结构生成 table

tableEnv.fromDataStream(ecommerceLogDstream)

或者根据字段顺序单独命名

tableEnv.fromDataStream(ecommerceLogDstream,’mid,’uid .......)

最后的动态表可以转换为流进行输出

table.toAppendStream[(String,String)]

10.2.2 字段

用一个单引放到字段前面来标识字段名, 如 ‘name , ‘mid ,’amount 等

10.3 TableAPI 的窗口聚合操作

10.3.1 TableAPI 例子

// 每 10秒中渠道为 appstore的个数

def main(args: Array[String]): Unit = { //sparkcontext

val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

// 时间特性改为 eventTime

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime)

val myKafkaConsumer: FlinkKafkaConsumer011[String] = MyKafkaUtil.getConsumer("ECOMMERCE")

val dstream: DataStream[String] = env.addSource(myKafkaConsumer)

val ecommerceLogDstream: DataStream[EcommerceLog] = dstream.map{ jsonString =>JSON.parseObject(jsonString,classOf[EcommerceLog]) }

// 告知 watermark 和 eventTime 如何提取

val ecommerceLogWithEventTimeDStream: DataStream[EcommerceLog] = ecommerceLogDstream.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor[EcommerceLog](Time.seconds(0L)) {

override def extractTimestamp(element: EcommerceLog): Long = {

element.ts

}

}).setParallelism(1)

val tableEnv: StreamTableEnvironment = TableEnvironment.getTableEnvironment(env)

// 把数据流转化成 Table

//'ts.rowtime 指定表里的时间字段EventTime

//‘ts.procTime 指定表里的时间字段ProcessTime

val ecommerceTable: Table = tableEnv.fromDataStream(ecommerceLogWithEventTimeDStream , 'mid,'uid,'appid,'area,'os,'ch,'logType,'vs,'logDate,'logHour,'logHourMinut e,'ts.rowtime)

// 通过 table api 进行操作

// 每 10 秒

统计一次各个渠道的个数 table api 解决

//1 groupby 2 要用 window 3 用 eventtime 来确定开窗时间

val resultTable: Table = ecommerceTable

.window(Tumble over 10000.millis on 'ts as 'tt) //'ts as 'tt ,tt为当前滚动窗口的别名

.groupBy('ch,'tt )

.select( 'ch, 'ch.count)

// 把 Table 转化成数据流

val resultDstream: DataStream[(Boolean, (String, Long))] = resultSQLTable.toRetractStream[(String,Long)]

resultDstream.filter(_._1).print()

env.execute()

}

10.3.2 关于 group by

- 如果了使用 groupby,table 转换为流的时候只能用 toRetractDstream

val rDstream: DataStream[(Boolean, (String, Long))] = table .toRetractStream[(String,Long)]

- toRetractDstream 得到的第一个 boolean 型字段标识 true 就是最新的数据(Insert),false 表示过期老数据(Delete)

val rDstream: DataStream[(Boolean, (String, Long))] = table .toRetractStream[(String,Long)]

rDstream.filter(_._1).print()

- 如果使用的 api 包括时间窗口,那么窗口的字段必须出现在 groupBy 中。

val table: Table = ecommerceLogTable

.filter("ch ='appstore'")

.window(Tumble over 10000.millis on 'ts as 'tt)

.groupBy('ch ,'tt)

.select("ch,ch.count ")

10.3.3 关于时间窗口

- 用到时间窗口,必须提前声明时间字段,如果是 processTime 直接在创建动态表时进行追加就可以。

val ecommerceLogTable: Table = tableEnv

.fromDataStream( ecommerceLogWithEtDstream,

'mid,'uid,'appid,'area,'os,'ch,'logType,'vs,'logDate,'logHour,'logHourMinute,'ps.proctime)

- 如果是 EventTime 要在创建动态表时声明

val ecommerceLogTable: Table = tableEnv

.fromDataStream(ecommerceLogWithEtDstream,

'mid,'uid,'appid,'area,'os,'ch,'logType,'vs,'logDate,'logHour,'logHourMinute,'ts.rowtime)

- 滚动窗口可以使用 Tumble over 10000.millis on 来表示

val table: Table = ecommerceLogTable.filter("ch ='appstore'")

.window(Tumble over 10000.millis on 'ts as 'tt)

.groupBy('ch ,'tt)

.select("ch,ch.count ")

10.4 SQL 如何编写

def main(args: Array[String]): Unit = {

//sparkcontext

val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

// 时间特性改为 eventTime

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime)

val myKafkaConsumer: FlinkKafkaConsumer011[String] = MyKafkaUtil.getConsumer("ECOMMERCE")

val dstream: DataStream[String] = env.addSource(myKafkaConsumer)

val ecommerceLogDstream: DataStream[EcommerceLog] = dstream.map{ jsonString =>JSON.parseObject(jsonString,classOf[EcommerceLog]) }

// 告知 watermark 和 eventTime 如何提取

val ecommerceLogWithEventTimeDStream: DataStream[EcommerceLog] = ecommerceLogDstream.assignTimestampsAndWatermarks(

new BoundedOutOfOrdernessTimestampExtractor[EcommerceLog](Time.seconds(0L)) {

override def extractTimestamp(element: EcommerceLog): Long = {

element.ts

}

}).setParallelism(1)

//SparkSession

val tableEnv: StreamTableEnvironment = TableEnvironment.getTableEnvironment(env)

// 把数据流转化成 Table

val ecommerceTable: Table = tableEnv.fromDataStream(ecommerceLogWithEventTimeDStream , 'mid,'uid,'appid,'area,'os,'ch,'logType,'vs,'logDate,'logHour,'logHourMinu te,'ts.rowtime)

// 通过 table api 进行操作

// 每 10 秒统计一次各个渠道的个数 table api 解决

//1 groupby 2 要用 window 3 用 eventtime 来确定开窗时间

val resultTable: Table = ecommerceTable.window(Tumble over 10000.millis on 'ts as 'tt).groupBy('ch,'tt ).select( 'ch, 'ch.count)

// 通过 sql 进行操作

val resultSQLTable : Table = tableEnv.sqlQuery( "select ch ,count(ch) from "+ecommerceTable+" group by ch ,Tumble(ts,interval '10' SECOND )")

// 把 Table转化成数据流

val appstoreDStream: DataStream[(String, String, Long)] = appstoreTable.toAppendStream[(String,String,Long)]

val resultDstream: DataStream[(Boolean, (String, Long))] = resultSQLTable.toRetractStream[(String,Long)]

resultDstream.filter(_._1).print()

env.execute()

}

十一、 Flink CEP

11.1 复杂事件处理 CEP

一个或多个由简单事件构成的事件流通过一定的规则匹配,然后输出用户想得到的数据,满足规则的复杂事件。

特征:

- 目标:从有序的简单事件流中发现一些高阶特征

- 输入:一个或多个由简单事件构成的事件流

- 处理:识别简单事件之间的内在联系,多个符合一定规则的简单事件构成复杂事件

- 输出:满足规则的复杂事件

CEP 用于分析低延迟、频繁产生的不同来源的事件流。

CEP 可以帮助在复杂的、不相关的事件流中找出有意义的模式和复杂的关系,以接近实时或准实时的获得通知并阻止一些行为。

CEP 支持在流上进行模式匹配,根据模式的条件不同,分为连续的条件或不连续的条件;模式的条件允许有时间的限制,当在条件范围内没有达到满足的条件时,会导致模式匹配超时。

功能: - 输入的流数据,尽快产生结果

- 在 2 个 event 流上,基于时间进行聚合类的计算

- 提供实时/准实时的警告和通知

- 在多样的数据源中产生关联并分析模式

- 高吞吐、低延迟的处理

市场上有多种 CEP 的解决方案,例如 Spark、Samza、Beam 等,但他们都没有提供专门的 library 支持。但是 Flink 提供了专门的 CEP library。

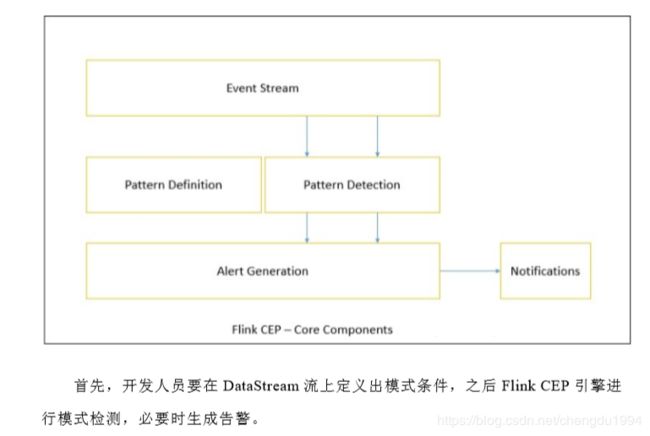

11.2 Flink CEP

Flink 为 CEP 提供了专门的 Flink CEP library,它包含如下组件:

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-cep_${scala.binary.version}</artifactId>

<version>${flink.version}</version>

</dependency>

Event Streams

以登陆事件流为例:

case class LoginEvent(userId: String, ip: String, eventType: String, eventTime: String)

val env = StreamExecutionEnvironment.getExecutionEnvironment

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime)

env.setParallelism(1)

val loginEventStream = env.fromCollection(List(

LoginEvent("1", "192.168.0.1", "fail", "1558430842"),

LoginEvent("1", "192.168.0.2", "fail", "1558430843"),

LoginEvent("1", "192.168.0.3", "fail", "1558430844"),

LoginEvent("2", "192.168.10.10", "success", "1558430845")

)).assignAscendingTimestamps(_.eventTime.toLong)

Pattern API

每个 Pattern 都应该包含几个步骤,或者叫做 state。从一个 state 到另一个 state,通常我们需要定义一些条件,例如下列的代码

val loginFailPattern = Pattern.begin[LoginEvent]("begin")

.where(_.eventType.equals("fail"))

.next("next")

.where(_.eventType.equals("fail"))

.within(Time.seconds(10)

每个 state 都应该有一个标示:例如.beginLoginEvent中的"begin"

每个 state 都需要有一个唯一的名字,而且需要一个 filter 来过滤条件,这个过滤条件定义事件需要符合的条件,例如:

.where(_.eventType.equals(“fail”))

我们也可以通过 subtype 来限制 event 的子类型:

start.subtype(SubEvent.class).where(…);

事实上,你可以多次调用 subtype 和 where 方法;而且如果 where 条件是不相关的,你可以通过 or 来指定一个单独的 filter 函数:

pattern.where(…).or(…);

之后,我们可以在此条件基础上,通过 next 或者 followedBy 方法切换到下一个

state,next 的意思是说上一步符合条件的元素之后紧挨着的元素;

而 followedBy 并不要求一定是挨着的元素。这两者分别称为严格近邻和非严格近邻。

val strictNext = start.next("middle")

val nonStrictNext = start.followedBy("middle")

最后,我们可以将所有的 Pattern 的条件限定在一定的时间范围内:

这个时间可以是 Processing Time,也可以是 Event Time

next.within(Time.seconds(10))

Pattern 检测

通过一个 input DataStream 以及刚刚我们定义的 Pattern,我们可以创建一个PatternStream:

val input = ...

val pattern = ...

val patternStream = CEP.pattern(input, pattern)

val patternStream = CEP.pattern(loginEventStream.keyBy(_.userId), loginFailPattern)

一旦获得 PatternStream,我们就可以通过 select 或 flatSelect,从一个 Map 序列

找到我们需要的警告信息。

select

select 方法需要实现一个 PatternSelectFunction,通过 select 方法来输出需要的警告。它接受一个 Map 对,包含 string/event,其中 key 为 state 的名字,event 则为真实的 Event。

val loginFailDataStream = patternStream

.select((pattern: Map[String, Iterable[LoginEvent]]) => {

val first = pattern.getOrElse("begin", null).iterator.next()

val second = pattern.getOrElse("next", null).iterator.next()

Warning(first.userId, first.eventTime, second.eventTime, "warning")

})

flatSelect

通过实现 PatternFlatSelectFunction,实现与 select 相似的功能。唯一的区别就是 flatSelect 方法可以返回多条记录,它通过一个 Collector[OUT]类型的参数来将要输出的数据传递到下游。

超时事件的处理

通过 within 方法,我们的 parttern 规则将匹配的事件限定在一定的窗口范围内。当有超过窗口时间之后到达的 event,我们可以通过在 select 或 flatSelect 中,实现PatternTimeoutFunction 和 PatternFlatTimeoutFunction 来处理这种情况。

val patternStream: PatternStream[Event] = CEP.pattern(input, pattern)

val outputTag = OutputTag[String]("side-output")

val result: SingleOutputStreamOperator[ComplexEvent] = patternStream.select (outputTag){

(pattern: Map[String, Iterable[Event]], timestamp: Long) => TimeoutEvent ()

} {

pattern: Map[String, Iterable[Event]] => ComplexEvent()

}

val timeoutResult: DataStream<TimeoutEvent> = result.getSideOutput(outputTa g)