Neural Network Learning: CNN (Part 5)

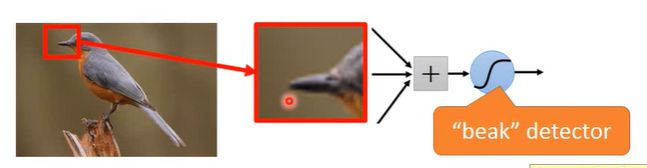

Why Use a CNN

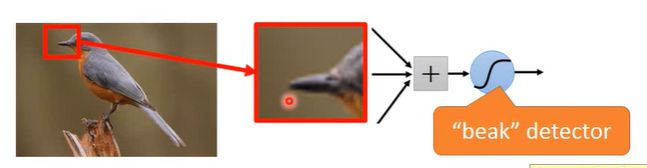

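- To detect the bird's beak in the image above, the network does not need to look at the whole image; looking at the small region that contains the beak is enough.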

- Subsampling the pixels will not change the object

- The same feature can appear in different regions of an image.

The CNN Pipeline

Computation Steps

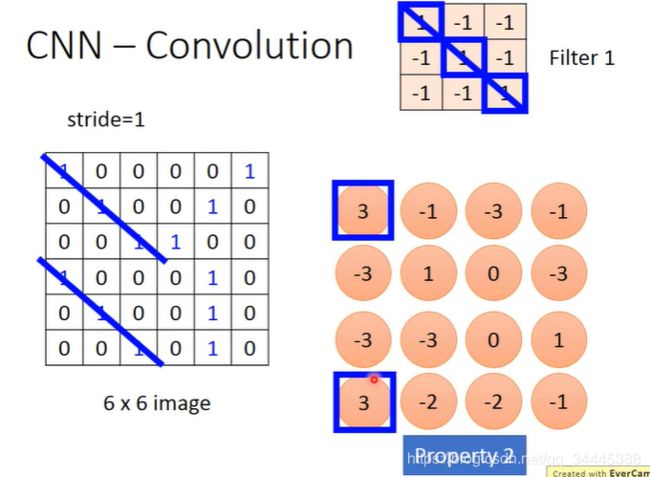

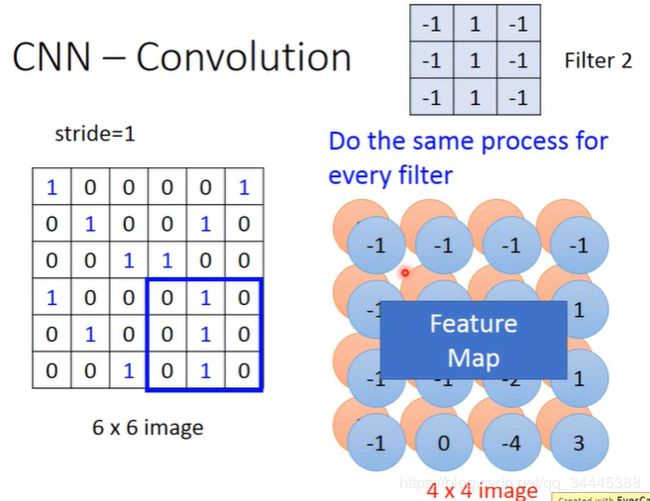

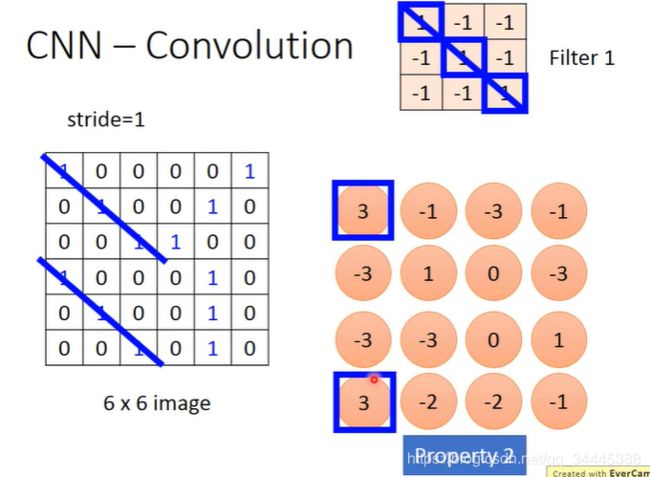

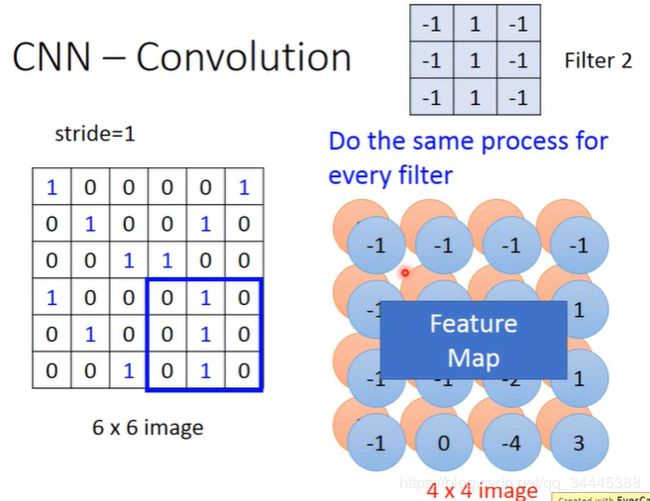

Convolution

- Take the inner product (dot product) of the filter with each image patch it covers.

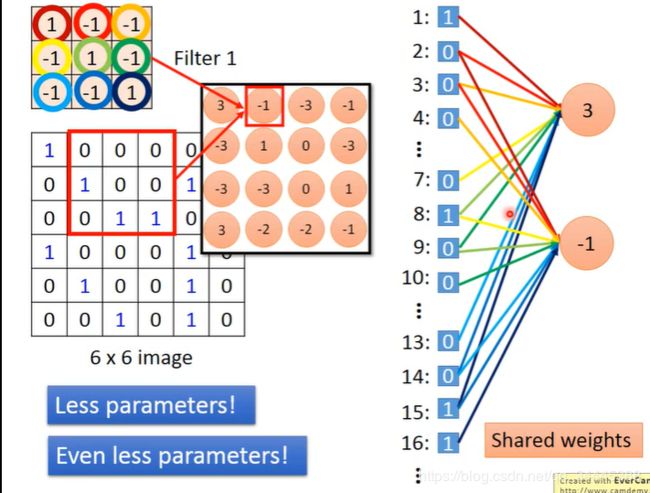

- The same feature, wherever it appears in the image, can be detected by a single convolution filter.

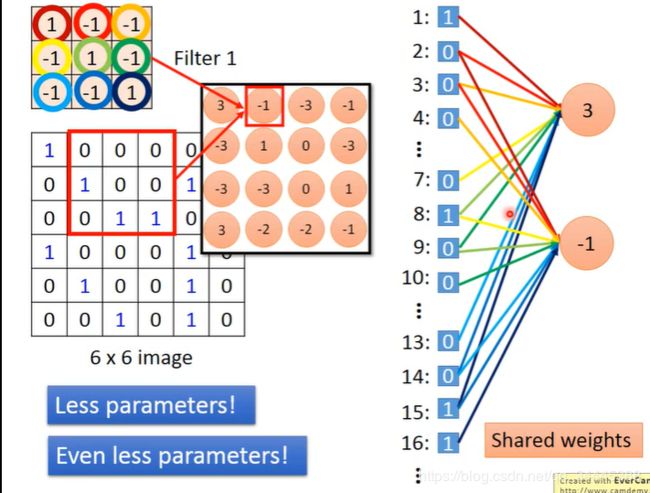

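- Each node in the next layer receives only 9 input values (one filter-sized patch) instead of every pixel, so the layer has far fewer parameters than a fully connected one.

- Those weights are also shared across all positions in the image, so the parameter count is smaller still.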

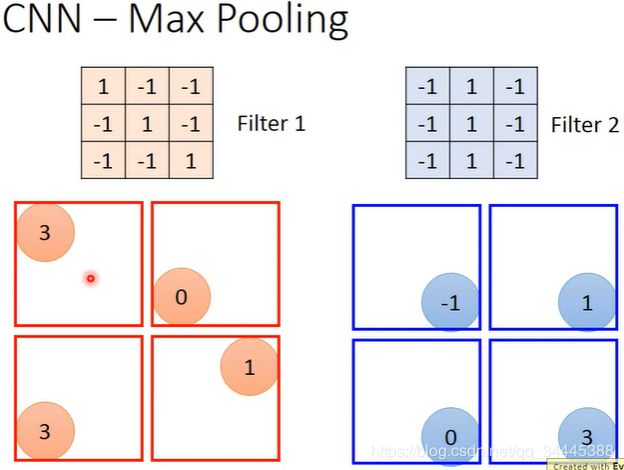

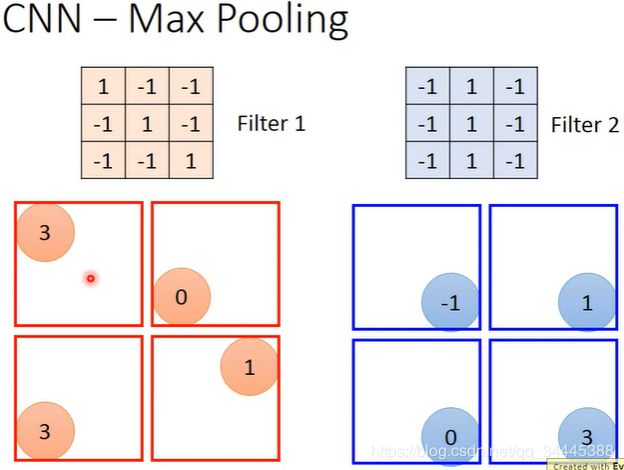
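
The points above can be sketched in a few lines of PyTorch. This is only an illustration under stated assumptions: the 6x6 binary image and the 3x3 filter are made-up values, and `conv2d_manual` is a hypothetical helper written for this example. It slides the filter over the image, takes the inner product at each position, and checks the result against `F.conv2d`.

```python
import torch
import torch.nn.functional as F

# Hypothetical 6x6 image and 3x3 filter, chosen only for illustration.
image = torch.tensor([
    [1, 0, 0, 0, 0, 1],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 1, 0, 0],
    [1, 0, 0, 0, 1, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 0, 1, 0],
], dtype=torch.float32)
kernel = torch.tensor([
    [1, -1, -1],
    [-1, 1, -1],
    [-1, -1, 1],
], dtype=torch.float32)


def conv2d_manual(image, kernel):
    """Slide the kernel over the image (stride 1, no padding) and take inner products."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = torch.zeros(out_h, out_w)
    for i in range(out_h):
        for j in range(out_w):
            patch = image[i:i + kh, j:j + kw]
            out[i, j] = (patch * kernel).sum()  # inner product of patch and filter
    return out


manual = conv2d_manual(image, kernel)
# F.conv2d expects (batch, channels, H, W) input and (out_ch, in_ch, kH, kW) weights.
builtin = F.conv2d(image[None, None], kernel[None, None])[0, 0]

print(manual)
print(torch.allclose(manual, builtin))

# The diagonal pattern appears at the top-left and again three rows lower;
# the same 9 shared weights respond to both occurrences.
print(manual[0, 0].item(), manual[3, 0].item())  # 3.0 3.0

# Parameter comparison for this toy case (biases ignored):
fc_weights = image.numel() * manual.numel()  # every output connected to every pixel
conv_weights = kernel.numel()                # one shared 3x3 filter
print(fc_weights, conv_weights)              # 576 9
```

The two responses of 3.0 show one filter finding the same pattern in two places, and the last line shows how much locality plus weight sharing reduces the parameter count in this toy setting.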

Max Pooling

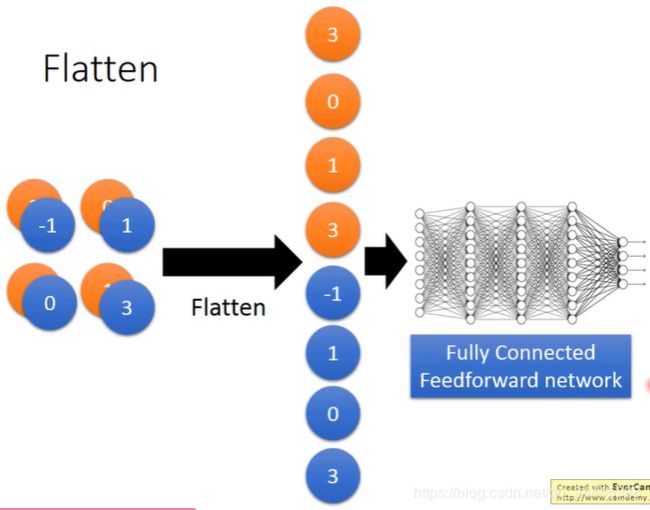

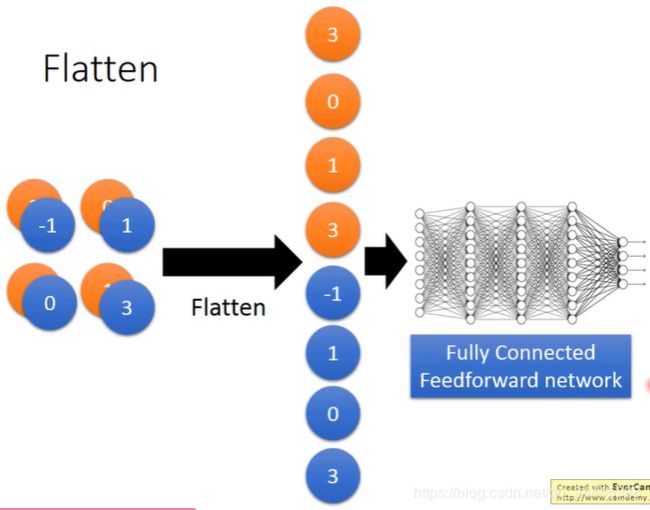
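
As a rough sketch of what max pooling does, the snippet below applies a 2x2 window with stride 2 to a small feature map and keeps the largest value in each window. The 4x4 input is a made-up example, not data taken from the figures.

```python
import torch
import torch.nn.functional as F

# Hypothetical 4x4 feature map, e.g. the output of one convolution filter.
feature_map = torch.tensor([
    [3., -1., -3., -1.],
    [-3., 1., 0., -3.],
    [-3., -3., 0., 1.],
    [3., -2., -2., -1.],
])

# max_pool2d expects (batch, channels, H, W), so add two leading dimensions.
pooled = F.max_pool2d(feature_map[None, None], kernel_size=2, stride=2)[0, 0]
print(pooled)
# tensor([[3., 0.],
#         [3., 1.]])
```

Each output value is the maximum of one non-overlapping 2x2 block, so the 4x4 map shrinks to 2x2 while the strongest responses are kept.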

Flatten
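
Flattening turns the stack of pooled feature maps into one vector per image so that it can be fed to fully connected layers. A minimal sketch, assuming a made-up batch of 2 images with 3 feature maps of size 2x2 each:

```python
import torch

# Hypothetical batch: 2 images, 3 feature maps per image, each 2x2.
feature_maps = torch.arange(2 * 3 * 2 * 2, dtype=torch.float32).reshape(2, 3, 2, 2)

# Keep the batch dimension and merge channels, height and width into one axis.
flat_view = feature_maps.view(feature_maps.size(0), -1)
flat_fn = torch.flatten(feature_maps, start_dim=1)

print(flat_view.shape)                 # torch.Size([2, 12])
print(torch.equal(flat_view, flat_fn))  # True
```

Both `view` and `torch.flatten` give the same result here; `view` requires a contiguous tensor, which is the case for the outputs of the pooling layers used in this post.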

Handwritten Digit Recognition in PyTorch

    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    import torch.optim as optim
    from torchvision import datasets, transforms
    import numpy as np

    print("PyTorch Version: ", torch.__version__)

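    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            # Conv2d(in_channels, out_channels, kernel_size, stride)
            self.conv1 = nn.Conv2d(1, 20, 5, 1)
            self.conv2 = nn.Conv2d(20, 50, 5, 1)
            # After two conv + pool stages, a 28x28 input becomes 50 maps of 4x4
            self.fc1 = nn.Linear(4*4*50, 500)
            self.fc2 = nn.Linear(500, 10)

        def forward(self, x):
            x = F.relu(self.conv1(x))    # (N, 1, 28, 28) -> (N, 20, 24, 24)
            x = F.max_pool2d(x, 2, 2)    # -> (N, 20, 12, 12)
            x = F.relu(self.conv2(x))    # -> (N, 50, 8, 8)
            x = F.max_pool2d(x, 2, 2)    # -> (N, 50, 4, 4)
            x = x.view(-1, 4*4*50)       # flatten to (N, 800)
            x = F.relu(self.fc1(x))
            x = self.fc2(x)
            return F.log_softmax(x, dim=1)  # log-probabilities over the 10 digits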
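
The `4*4*50` input size of `fc1` follows from the layer arithmetic: a 5x5 convolution with stride 1 and no padding shrinks each side by 4, and a 2x2 pooling with stride 2 halves it. The sketch below traces those shapes using the same layer settings as `Net`; the all-zero input tensor is just a placeholder with MNIST dimensions.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Same layer settings as in Net above.
conv1 = nn.Conv2d(1, 20, 5, 1)
conv2 = nn.Conv2d(20, 50, 5, 1)

x = torch.zeros(1, 1, 28, 28)  # placeholder for one 28x28 grayscale image
shapes = {"input": tuple(x.shape)}

x = F.relu(conv1(x))
shapes["conv1"] = tuple(x.shape)   # 28 - 5 + 1 = 24
x = F.max_pool2d(x, 2, 2)
shapes["pool1"] = tuple(x.shape)   # 24 / 2 = 12
x = F.relu(conv2(x))
shapes["conv2"] = tuple(x.shape)   # 12 - 5 + 1 = 8
x = F.max_pool2d(x, 2, 2)
shapes["pool2"] = tuple(x.shape)   # 8 / 2 = 4
x = x.view(-1, 4 * 4 * 50)
shapes["flatten"] = tuple(x.shape)

for name, shape in shapes.items():
    print(f"{name:8s} {shape}")
```

If the kernel size, stride, or input resolution changes, the flattened size changes with it, and `fc1` has to be adjusted accordingly.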

    def train(model, device, train_loader, optimizer, epoch, log_interval=100):
        model.train()
        for batch_idx, (data, target) in enumerate(train_loader):
            data, target = data.to(device), target.to(device)
            optimizer.zero_grad()
            output = model(data)
            # The model outputs log-probabilities, so NLL loss is the matching criterion
            loss = F.nll_loss(output, target)
            loss.backward()
            optimizer.step()
            if batch_idx % log_interval == 0:
                print("Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}".format(
                    epoch, batch_idx * len(data), len(train_loader.dataset),
                    100. * batch_idx / len(train_loader), loss.item()
                ))
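
The training step pairs `F.log_softmax` in the model with `F.nll_loss` in the loop. As a small check, assuming random made-up logits and labels, the sketch below shows that this pair gives the same value as applying `F.cross_entropy` directly to the raw logits.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 10)           # hypothetical raw scores: 4 samples, 10 classes
target = torch.tensor([3, 0, 9, 1])   # hypothetical labels

# What the model and training loop do: log_softmax followed by nll_loss.
loss_two_step = F.nll_loss(F.log_softmax(logits, dim=1), target)

# The equivalent single call on raw logits.
loss_one_step = F.cross_entropy(logits, target)

print(loss_two_step.item(), loss_one_step.item())
print(torch.allclose(loss_two_step, loss_one_step))  # True
```

Either formulation works; the important part is not to mix them, for example by feeding `log_softmax` outputs into `F.cross_entropy`, which would apply the softmax twice.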

    def test(model, device, test_loader):
        model.eval()
        test_loss = 0
        correct = 0
        with torch.no_grad():
            for data, target in test_loader:
                data, target = data.to(device), target.to(device)
                output = model(data)
                # Sum per-sample losses so the average can be taken over the whole set
                test_loss += F.nll_loss(output, target, reduction='sum').item()
                # Predicted class = index of the highest log-probability
                pred = output.argmax(dim=1, keepdim=True)
                correct += pred.eq(target.view_as(pred)).sum().item()

        test_loss /= len(test_loader.dataset)
        print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
            test_loss, correct, len(test_loader.dataset),
            100. * correct / len(test_loader.dataset)))
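
The accuracy bookkeeping in `test` can be followed on a tiny batch. The scores and labels below are made up for illustration; the three lines that compute `pred` and `correct` mirror the ones in the function.

```python
import torch

# Hypothetical model outputs for 4 samples and 3 classes, with their labels.
output = torch.tensor([
    [0.1, 2.0, -1.0],
    [1.5, 0.2, 0.3],
    [0.0, 0.1, 3.0],
    [0.9, 0.8, 0.7],
])
target = torch.tensor([1, 0, 2, 1])

pred = output.argmax(dim=1, keepdim=True)              # shape (4, 1): predicted class per row
correct = pred.eq(target.view_as(pred)).sum().item()   # reshape labels to (4, 1) and compare

print(pred.squeeze(1).tolist())  # [1, 0, 2, 0]
print(correct)                   # 3
print(100. * correct / len(target))  # 75.0
```

`keepdim=True` leaves `pred` with shape `(N, 1)`, which is why the labels are reshaped with `view_as` before the comparison.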

    torch.manual_seed(53113)

    use_cuda = torch.cuda.is_available()
    device = torch.device("cuda" if use_cuda else "cpu")
    batch_size = test_batch_size = 32
    # num_workers is the number of data-loading subprocesses; lower it on machines with fewer CPU cores
    kwargs = {'num_workers': 40, 'pin_memory': True} if use_cuda else {}
    train_loader = torch.utils.data.DataLoader(
        datasets.MNIST('./mnist_data', train=True, download=True,
                       transform=transforms.Compose([
                           transforms.ToTensor(),
                           transforms.Normalize((0.1307,), (0.3081,))
                       ])),
        batch_size=batch_size, shuffle=True, **kwargs)
    test_loader = torch.utils.data.DataLoader(
        datasets.MNIST('./mnist_data', train=False, transform=transforms.Compose([
                           transforms.ToTensor(),
                           transforms.Normalize((0.1307,), (0.3081,))
                       ])),
        batch_size=test_batch_size, shuffle=True, **kwargs)
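
The `Normalize((0.1307,), (0.3081,))` step subtracts a mean and divides by a standard deviation for each channel; these two numbers are the values commonly quoted for the MNIST training set. The sketch below reproduces that arithmetic with plain tensor operations on a few made-up pixel values, without calling torchvision.

```python
import torch

mean, std = 0.1307, 0.3081  # the constants passed to Normalize above

# Hypothetical pixel values after ToTensor(), which scales them to [0, 1]:
# a black pixel, a pixel at the mean intensity, and a white pixel.
pixels = torch.tensor([0.0, 0.1307, 1.0])

normalized = (pixels - mean) / std  # same per-channel formula that Normalize applies
print(normalized)
```

After this step a black background pixel maps to roughly -0.42 and a fully white pixel to roughly 2.82, so the inputs are centered near zero instead of lying in [0, 1].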

    lr = 0.01
    momentum = 0.5
    model = Net().to(device)
    optimizer = optim.SGD(model.parameters(), lr=lr, momentum=momentum)
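
To make the `lr` and `momentum` settings concrete, here is a small sketch that compares `optim.SGD` with a hand-written update on a toy problem. The two-element weight vector and the loss `sum(w**2)` are assumptions chosen for the example; the manual rule uses a velocity `v = momentum * v + grad` followed by `w = w - lr * v`, which matches PyTorch's SGD when dampening, weight decay, and Nesterov are left at their defaults.

```python
import torch
import torch.optim as optim

lr, momentum = 0.01, 0.5

# Toy parameter optimized by PyTorch.
w = torch.tensor([1.0, -2.0], requires_grad=True)
optimizer = optim.SGD([w], lr=lr, momentum=momentum)

# The same parameter updated by hand.
w_manual = w.detach().clone()
velocity = torch.zeros_like(w_manual)

for _ in range(3):
    # PyTorch update for loss = sum(w^2)
    optimizer.zero_grad()
    loss = (w ** 2).sum()
    loss.backward()
    optimizer.step()

    # Manual update: the gradient of sum(w^2) is 2w
    grad = 2 * w_manual
    velocity = momentum * velocity + grad
    w_manual = w_manual - lr * velocity

print(w.detach(), w_manual)
print(torch.allclose(w.detach(), w_manual, atol=1e-6))  # True
```

The velocity term accumulates past gradients, so consecutive steps in the same direction get larger than they would with plain gradient descent.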

    epochs = 2
    for epoch in range(1, epochs + 1):
        train(model, device, train_loader, optimizer, epoch)
        test(model, device, test_loader)

    save_model = True
    if save_model:
        torch.save(model.state_dict(), "mnist_cnn.pt")
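
Since only the `state_dict` is saved, loading requires building the network first and then copying the weights into it. The following is a minimal round-trip sketch, not part of the original script: it repeats the `Net` definition so that it runs on its own, uses an untrained model as a stand-in for the trained one, and writes to a temporary directory instead of the working directory.

```python
import os
import tempfile

import torch
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    """Same architecture as in the training script."""

    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(1, 20, 5, 1)
        self.conv2 = nn.Conv2d(20, 50, 5, 1)
        self.fc1 = nn.Linear(4 * 4 * 50, 500)
        self.fc2 = nn.Linear(500, 10)

    def forward(self, x):
        x = F.max_pool2d(F.relu(self.conv1(x)), 2, 2)
        x = F.max_pool2d(F.relu(self.conv2(x)), 2, 2)
        x = x.view(-1, 4 * 4 * 50)
        x = F.relu(self.fc1(x))
        return F.log_softmax(self.fc2(x), dim=1)


# Stand-in for the trained model; in the real script this is the model after training.
model = Net()
path = os.path.join(tempfile.mkdtemp(), "mnist_cnn.pt")
torch.save(model.state_dict(), path)

# Rebuild the architecture, then load the saved weights into it.
restored = Net()
restored.load_state_dict(torch.load(path, map_location="cpu"))
restored.eval()  # switch to inference mode before predicting

x = torch.randn(1, 1, 28, 28)  # placeholder input with MNIST dimensions
with torch.no_grad():
    same = torch.allclose(model(x), restored(x))
    digit = restored(x).argmax(dim=1).item()

print(same)   # True: the restored model reproduces the original outputs
print(digit)  # predicted class index for the placeholder input
```

`map_location="cpu"` lets a checkpoint that was saved from a GPU run be loaded on a machine without CUDA.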