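- Just how hard is a marriage of petty score-keeping?

白心之岂必有为

People often message me privately to ask which group has the most petty, score-keeping people. I always answer that they are very common among people whose marriages have run into trouble. In my years of experience, among people whose marriages have fallen apart, petty score-keepers account for 20-30% or more; that is, at least 2 to 3 of every 10 people with a troubled marriage. Troubled marriages include a great many psychologically unbalanced, sharp-tongued, resentful wives. Pettiness in marriage comes in just two types: the first is material, the other is emotional. Stinginess in material and emotional matters has already seriously affected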

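- Emotional awareness diary, day 37

露露_e800

Today was the last day of stage two of the family relationship planner course. Teacher 慧萍 worked through a case with me, helped me deal with a fear I had buried for many years, and gave me deep strength! While I have been away studying these past few days, my parents came to my in-laws' home to help look after my child. Out of love, my mother tidied my things and ended up in conflict with my husband and mother-in-law; she felt they had not taken good care of me. When I got home tonight and saw my mother, I appreciated and praised her. She said she wanted to sleep beside me tonight and I said yes. As the two of us lay in bed about to sleep, I held her hand and said: Mom, you have worked so hard these past few days. Look how capable you are, you have tidied our home so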

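- QQ group collection assistant, an essential tool for targeted lead generation

2401_87347160

other, experience sharing

Feature overview: The WeChat group search and filter tool is an auxiliary tool designed for WeChat users. Through keyword search it helps users quickly find relevant WeChat groups, and it can filter groups by whether they require verification. Main features: Keyword search: the user enters a keyword and the tool automatically finds WeChat groups containing it. Filtering: the user can choose to show only groups that require verification or only groups that do not. Targeted lead generation: with the features above, users can find target groups more precisely and carry out effective lead generation. 3. Device requirements: The tool can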

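- The relationship and differences between machine learning and deep learning

ℒℴѵℯ心·动ꦿ໊ོ꫞

artificial intelligence, learning, deep learning, python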

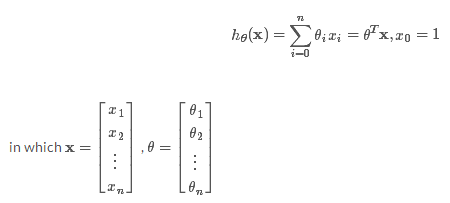

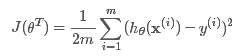

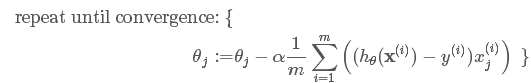

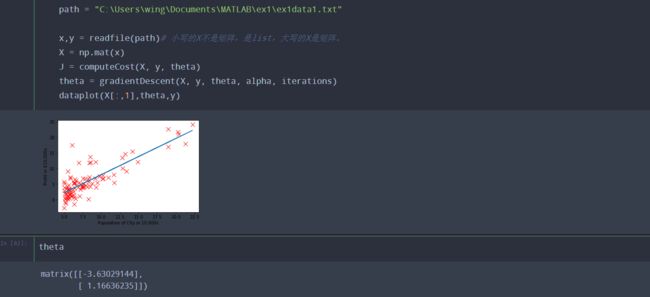

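I. Machine learning overview. Definition: Machine learning (Machine Learning, ML) is a data-driven approach that uses statistics and computational algorithms to train models, so that computers can learn from data and make predictions or decisions automatically. By analyzing large numbers of data samples, it identifies patterns and regularities and then makes judgments about new data. At its core, the training process lets the model keep optimizing and improving its prediction accuracy. Main types: 1. Supervised learning (Supervised Learning): supervised learning means that the training dataset contains input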

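- How hard is it to rise from the bottom? If you refuse to settle for the ordinary, are you ready? Let Wu Qi tell you

造命者说

My name is Wu Qi. I was born in 440 BC, in the early Warring States period, just as rival powers were rising and the realm was in constant conflict. Later generations call me a military strategist, statesman, and reformer, a representative figure of the School of the Military. They say that over my life I served three states, Lu, Wei, and Chu, mastered the thought of three schools, Military, Legalist, and Confucian, and achieved a great deal in both domestic government and military affairs. In the 21st year of King An of Zhou (381 BC), I was shot dead in a volley of arrows for offending the conservative nobility with my reforms. I was born in the state of Wey (卫国) into a wealthy family "with ten thousand in gold", and from my youth I refused to settle for the ordinary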

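- Thank you all, I love you!

鹿游儿

Yesterday the family went to the hot springs and took the two children along. The evening before we left, I hurried home from work to pack with the children. After dinner I took out a pen and a notebook (the one I used for journaling on our last trip to Macau), wrote down 1\2\3\4\5\6\7\8\9, and then asked 小壹 to think about what to bring on the trip. Next to each number she drew: swimsuit, swim ring, 肖恩, underwear, tapuy, slippers... When she finished, I had her go through the notebook herself and pack each item one by one. To my surprise, the book she brought this time was again 《便便工厂》 (in the evening her great-aunt sent photos; little sister was so tired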

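- December 19, 2021: reflections on the 春蕾教育集团 team-building event, by 黄晓丹

黄错错加油

Reflections: 1. A journey from unfamiliar to familiar. The games gave us practice in a relaxed atmosphere and taught us quite a lot. 2. In the games, what we contributed was individual effort, and what showed was the strength of the team. What gets worked out is often not just familiarity with the work but a closer fit in outlook. 3. The same holds at work. Only when each person, in their own post, knows their place, does their own job, makes full use of their abilities, and pulls together in one direction can the greatest success be achieved. New insights: 1. Team spirit needs constant innovation. In the past, people saw innovation as taking risks; now people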

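- Memoirs of a Planning Manager, Part 2

路基雅虎

So three years turned into six; I got carried away, carried away... In the blink of an eye it was May 2013, and 老吴 returned to his hometown, 油城, to restart his career of workplace daydreaming. Fortunately, this was an ambitious rising real-estate developer, willing to experiment and too strong to stay low-key: 金源置业 (formerly 泰源置业). Even more fortunately, its first project was one of the benchmarks on 油城's 十路: 金源盛世. From May 2013 to November 2015, two years together ended in a big breakout: 2,000 deposits at 50,000 each, bringing in 100 million straight away!!! This... made me start to take a serious look at this seemingly fifth-tier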

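- Long values differ between back end and front end

igotyback

front end

In the response sent to the front end, the Long values shown in the browser console's response tab are correct, but once they reach front-end code they no longer match. Mismatched data types between front end and back end are a common problem: when the back end uses Java's Long type (64-bit) and the front end uses JavaScript's Number type (maximum safe integer 2^53-1, a 16-digit number), precision is easily lost. This is because JavaScript's Number type cannot safely represent integers above that limit. To solve this pro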
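The precision loss described above can be reproduced without a browser, because a JavaScript Number is an IEEE 754 double, the same format as a Python `float`. The following is only a sketch: `order_id`, `to_js_number`, and `serialize_ids` are hypothetical names, and sending large IDs as strings is one common workaround, not necessarily the fix the original article goes on to describe.

```python
import json

MAX_SAFE_INTEGER = 2**53 - 1  # same value as JavaScript's Number.MAX_SAFE_INTEGER


def to_js_number(value: int) -> int:
    """Round-trip an integer through a double, as a JavaScript Number would store it."""
    return int(float(value))


def serialize_ids(record: dict) -> str:
    """Serialize a record, emitting integers outside the safe range as strings."""
    safe = {}
    for key, val in record.items():
        is_int = isinstance(val, int) and not isinstance(val, bool)
        if is_int and abs(val) > MAX_SAFE_INTEGER:
            safe[key] = str(val)  # too large for a double: send as text
        else:
            safe[key] = val
    return json.dumps(safe)


order_id = 1234567890123456789  # hypothetical 64-bit ID from a Java Long

print(to_js_number(order_id) == order_id)  # False: low digits are lost
print(serialize_ids({"id": order_id}))     # the ID survives as a string
```

On the receiving side the string can be shown as-is or parsed with a big-integer type when arithmetic is needed.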

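- Swagger access paths

igotyback

swagger

Swagger 2.x access URL: http://{ip}:{port}/{context-path}/swagger-ui.html. {ip} is your server's IP address. {port} is your application's service port, usually 8080. {context-path} is your application's context path; it is empty if the application is deployed at the root path. Swagger 3.x: for Swagger 3.x (also called OpenAPI 3), the access URL is: http://{ip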

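- 30 days of style exercises, DAY 2

黄希夷

Day 2 (Double Entry) On a Sunday/the last day of the week, I came to a shopping center/mall in the city center/the bustling downtown area; inside it was crowded/teeming with people. I noticed/saw a young girl walking alone/by herself, with eye-catching/longer-than-waist-length hair, wearing on her upper body a dark red/deeper-than-true-red garment/thing worn on the body. Going down the escalator she fell/tumbled toward the ground, and just as she was about to stand up/lift her body off the ground, her overly long/longer-than-most-people's hair was pressed/pinned under the palm supporting her body/torso, and she hurriedly used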

- How to use some uni-app methods in HTML

某公司摸鱼前端

html, uni-app, front end

Sample code: Document console.log(window); Result: That's it; the relevant methods can now be called through uni.

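- Common ArcGIS Raster Calculator formulas (assigning values, converting between 0 and null, filling null cells)

研学随笔

arcgis, experience sharing

The Raster Calculator comes up often when working in ArcGIS. Here are a few formulas I use regularly, for reference.
1. Assign 0 to a specific value (e.g. -9999): Con("raster"==-9999,0,"raster")
2. Assign a specific value (e.g. 0) to null cells: Con(IsNull("raster"),0,"raster")
3. Set a specific raster value (e.g. 1) to null and keep all other values: SetNull("raster"==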

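- Advanced programming: XML + socket exercises

masa010

java, programming language

1. Beijing, North China, 21.148 million people; Shanghai, East China, 25 million people; Guangzhou, South China, 12.9268 million people; Chengdu, West China, 14.17 million people. (1) Use dom4j to store the information in XML. (2) Read the information and print it to the console. (3) Add a city node with child nodes. (4) Using sockets over TCP, write a server and a client; the client enters a city ID and the server responds with that city's information. (5) Using sockets over TCP, write a server and a client; the client asks the user to enter a city object, and the server receives it and uses dom4j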

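- Happy

蒋泳频

From fiercely resisting coming to class, to acceptance, being moved, and gaining something~ Watching 波哥 grow, and 晶晶's happy, beaming smile. I feel that what I am doing is very meaningful, and I am very happy! Three recruiting targets remain, which means three more kindred spirits, haha. Off to recruit tomorrow. Tomorrow's plan: 8:30-11:00 small charity work; 11:00-21:00 at work, recruiting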

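- 2018-07-23, hypnosis-day assignment, #A Different 31 Days#, 66 小鹿

小鹿_33

Prophecy day: people always meet their fate on the very road they take to avoid it. Psychology has a famous term, the self-fulfilling prophecy; economics has a well-known law called Murphy's law; and in spiritual circles there is a famous principle called the law of attraction. These terms from three fields look different, but they all describe the same phenomenon: the more you worry about something, the more likely it is to happen. By the same logic, the more you want something, the more actively you should work to create it. Whether it is the self-fulfilling prophecy, Murphy's law, or the law of attraction, each affects people along 2 dimensions, positive and negative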

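- Second practice session this week

2cfbdfe28a51

中原焦点团队, Intermediate 24 / Beginner 26, 刘霞, 2021.12.3, practice session 161, sharing day 368. Although the client came with a problem, the session showed that she had reached this point through her own continual adjustment and effort. Seeing how hard it has been for the client, finding exceptions, and discovering resources brings a sense of strength with it. Making the scene more vivid, or revisiting it, gives the client a deeper experience.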

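- Backtracking: Leetcode 332, Reconstruct Itinerary

mmaerd

Leetcode practice notes, leetcode, algorithms, career and development

Reconstruct Itinerary, Leetcode 332, study notes from 代码随想录. You are given a list of airline tickets, where tickets[i]=[fromi,toi] gives the departure and arrival airports of one flight. Reconstruct the itinerary in order. All of the tickets belong to a man who departs from JFK (Kennedy International Airport), so the itinerary must begin with JFK. If there are multiple valid itineraries, return the one that is smallest in lexical order. For example, the itinerary [“JFK”,“LGA”] and [“JFK”,“LGB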
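As an illustration of the backtracking approach named in the title, here is a minimal Python sketch. It is not the code from the original post; `find_itinerary` and its helper names are chosen for this example. Sorting each airport's destinations first means the first complete path found is also the lexically smallest one.

```python
from collections import defaultdict


def find_itinerary(tickets):
    """Return the lexically smallest itinerary that starts at JFK and uses every ticket once."""
    graph = defaultdict(list)
    for src, dst in sorted(tickets):  # sorted, so each destination list is in order
        graph[src].append(dst)
    used = {src: [False] * len(dsts) for src, dsts in graph.items()}
    path = ["JFK"]
    target_len = len(tickets) + 1  # n tickets visit n + 1 airports

    def backtrack():
        if len(path) == target_len:
            return True
        here = path[-1]
        dsts = graph.get(here, [])
        for i, dst in enumerate(dsts):
            if used[here][i]:
                continue
            # Skip a duplicate ticket if its identical twin was just tried and failed.
            if i > 0 and dsts[i] == dsts[i - 1] and not used[here][i - 1]:
                continue
            used[here][i] = True
            path.append(dst)
            if backtrack():
                return True
            path.pop()  # undo the choice and try the next destination
            used[here][i] = False
        return False

    backtrack()
    return path


print(find_itinerary([["MUC", "LHR"], ["JFK", "MUC"], ["SFO", "SJC"], ["LHR", "SFO"]]))
```

The problem guarantees that at least one valid itinerary exists, so the search always ends with a full path.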

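- Today's couplet matching, 0306

诗图佳得

Self-matched couplet: 烟销皓月临江浒,水漫金山荡塔裙。(肖士平, 2020.3.6.) 1. Attempt at matching Teacher 肖's line: 烟销皓月临江浒,夜笼寒沙梦晚舟。(耀哥, corrections welcome) 2. Attempt at matching Teacher 萧's line: 烟销浩月临江浒,雾散乾坤解汉城。(秀霞, a practice piece; corrections from all teachers welcome) 3. Self-matched couplet: 烟销皓月临江浒,水漫金山荡塔裙。(肖士平, 2020.3.6.) 4. Attempt at matching Teacher 肖's opening line: 烟销皓月临江浒,雾锁寒林缈葉丛。(小智, corrections welcome [抱拳]) 5. Attempt at matching Teacher 肖's line: 烟销皓月临江浒;风卷乱云入峰巅。(五品) 6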

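- Daily problem: No. 89

互联网打工人no1

C programming, daily practice, C language

Problem: find and extract the numbers in a string and count how many integers were found. For a123xxyu23&8889, the integers found are 123, 23, 8889. // Idea: #include #include #include int main(){ char str[]="a123xxyu23&8889"; int count=0; int num=0; // temporarily holds the integer currently being built. bool inNum=false; // marks whether an integer is currently being read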
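The C excerpt above is cut off, so here is a short Python sketch of the same idea: scan character by character, accumulate digits into a running number, and close the number when a non-digit appears. `extract_integers` is a name chosen for this example; the variables `num` and `in_num` mirror the `num` and `inNum` of the excerpt.

```python
def extract_integers(text):
    """Collect every maximal run of ASCII digits in text as an integer."""
    numbers = []
    num = 0          # the integer currently being built
    in_num = False   # whether we are inside a run of digits
    for ch in text:
        if "0" <= ch <= "9":
            num = num * 10 + (ord(ch) - ord("0"))
            in_num = True
        elif in_num:
            numbers.append(num)  # a non-digit ends the current integer
            num = 0
            in_num = False
    if in_num:
        numbers.append(num)      # the string ended while still inside a number
    return numbers


found = extract_integers("a123xxyu23&8889")
print(found, len(found))  # [123, 23, 8889] 3
```

The final check after the loop matters: without it, a number at the very end of the string (8889 here) would be dropped.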

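- Daily problem: No. 84

互联网打工人no1

C programming, daily practice, C language

Problem: write functions that 1. read in the names and employee IDs of 10 employees; 2. sort by employee ID in descending order, reordering the names to match; 3. given an employee ID, use binary search to find that employee's name. #define _CRT_SECURE_NO_WARNINGS #include #include #define MAX_EMPLOYEES 10 typedef struct{ int id; char name[50]; } Empolyee; void inputEmploye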

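- "At Xiaoman, Fine Rain Lightly Dampens the Dust"

快乐的人ZZM

"At Xiaoman, Fine Rain Lightly Dampens the Dust", by 快乐的人zzm. At Xiaoman, fine rain lightly dampens the dust / Pomegranate blossoms open and fall in drifts / Fallen petals are not unfeeling things / They sink into the soil to nourish the roots. 2018-5-23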

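- Several ways to data-bind the ComboBox control in WPF

互联网打工人no1

wpf, c#

1. Assigning a dictionary to ItemsSource (this binding is used in many places, so it is worth mastering). In XAML: In the CS file: private void BindData(){ Dictionary dicItem=new Dictionary(); dicItem.Add(1,"北京"); dicItem.Add(2,"上海"); dicItem.Add(3,"广州"); cmb_list.ItemsSource=dicItem; cmb_l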

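- os.environ in Python: basic introduction and usage

鹤冲天Pro

#Python, python, server, programming language

Contents: os.environ in Python; Introduction to os.environ; Adding, deleting, updating, and querying environment variables with os.environ; Using os.environ in Python in detail: 1. Introduction 2. Key fields in detail 2.1 Common key fields 3. Using os.environ.get() 4. Adding, deleting, updating, and querying environment variables, and checking whether they exist 4.1 Adding an environment variable 4.2 Updating an environment variable 4.3 Getting an environment variable 4.4 Deleting an environment variable 4.5 Checking whether an environment variable exists. os.envi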
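The operations listed in the contents (add, update, get, delete, check existence) map onto ordinary mapping operations on `os.environ`. The sketch below uses a made-up variable name, `DEMO_APP_MODE`, purely for illustration; changes made this way affect only the current process and its children.

```python
import os

KEY = "DEMO_APP_MODE"  # hypothetical variable name used only in this example

os.environ[KEY] = "dev"               # add (values must be strings)
os.environ[KEY] = "prod"              # update: assign again
mode = os.environ.get(KEY, "unset")   # get, with a default if the key is missing
exists_before = KEY in os.environ     # check existence

os.environ.pop(KEY, None)             # delete; no error if it is already gone
exists_after = KEY in os.environ
fallback = os.environ.get(KEY, "unset")

print(mode, exists_before, exists_after, fallback)  # prod True False unset
```

Using `os.environ.get()` rather than `os.environ[KEY]` avoids a `KeyError` when the variable has not been set.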

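- How do you sue someone who borrowed money and will not repay it? How do you sue someone over an unpaid debt?

影子爱学习

If you run into a legal problem that is hard to resolve, we can match you with a professional lawyer, for example for: marriage and family (divorce disputes), criminal defense, contract disputes, creditor's rights and debts, property (inheritance) disputes, traffic accidents, labor disputes, personal injury, and company legal affairs (legal counsel). For consultation, phone/WeChat: 15633770876 [cases nationwide accepted]. What materials are needed to sue over an unpaid loan: to sue over unpaid debt, the materials generally needed include IOUs, receipts, promissory notes, payment vouchers, and other evidence, as well as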

- 2019-12-22-22:30

涓涓1016

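Today is the winter solstice. I am writing my daily post because the past two days of study have been so full of energy. They let me see myself and another possibility for my future, and also the source of the pain I have endured over the past few years. The root of everything, and of the pain, comes from life and family: your family of origin, your father and mother. It was your soul that, in that moment, chose them as your father and mother, so you have to accept them. It is their very existence that brings this pain to your learning and growth, and that is a process you must go through. When you come to accept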

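- linux sdl windows.h: installing SDL on Windows

奔跑吧linux内核

linux, sdl, windows.h

First, download and install the SDL development package. If it is installed on drive C, the path is C:\SDL1.2.5. On WINDOWS you can follow these steps: 1. Open VC++ and click "Tools", then Options. 2. Click the Directories tab. 3. Select "Include files" and add a new path: "C:\SDL1.2.5\include". 4. Now select "Library files" and add "C:\SDL1.2.5\lib". Now you can start writing your fir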

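- Python tutorial: handling XPath with Python in one article

旦莫

advanced Python, python, programming language

Contents: 1. Environment setup 1.1 Installing lxml 1.2 Verifying the installation 2. XPath basics 2.1 What is XPath? 2.2 XPath syntax 2.3 Sample XML document 3. Parsing XML with lxml 3.1 Parsing an XML document 3.2 Inspecting the parse result 4. XPath queries 4.1 Basic path queries 4.2 Querying by attribute 4.3 Querying multiple nodes 5. Advanced XPath usage 5.1 Using logical operators 5.2 Using functions 6. Practical example 6.1 Scraping data from a web page 6.1.1 Installing the Requests library 6.1.2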

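- Believe in the power of belief

孙丽_cdb3

孙丽, Intermediate class 10, sharing day 345. There is a particularly philosophical story: an eagle laid an egg, and somehow the egg rolled into a chicken coop. The hen had laid a clutch of eggs too, and she hatched them all. Once the chicks had grown a little, they thought the eaglet that had hatched from the eagle's egg looked strange, and they all mocked it: so ugly, so stupid, hideous. The eaglet felt it resembled no one and really was unattractive. Later the mother hen came to dislike it as well: how did I hatch a child like you? So annoying. The chicks and the eaglet went on living together, and one day, an eagle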

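- Gratitude journal 133

马姐读书

Grateful to myself for buying a robot vacuum today; from now on I am freed up to read more, and this smart little robot can do the work for me. Grateful that my child has made great progress recently: going to school on time every day, listening carefully in class, memorizing texts diligently, and taking the initiative to finish the homework the teacher assigns. Grateful to myself for recognizing that I am easily affected by a certain person and fall into a bad mood; whenever that happens I ease off, practice gratitude, detach as quickly as I can, and think of the good side. Grateful that when I reminded my son of something today, he said "thank you, Mom, for reminding me, I understand" instead of calling me a meddling nag; he has become more sensible and more grateful. Projecting onto parents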

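- Getting started with MongoDB

木zi_鸣

mongodb

To start mongodb on Windows, write a .bat file:

mongod --dbpath D:\software\MongoDBDATA

mongod --help lists the available configuration options

Configuration goes in mongob

Run the batch file to start the server. 27017 is the native port, used for shell operations and monitoring; 28017 is the extended port, used for web operations

Starting with a configuration file:

Data is more flexible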

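- System architecture for large, high-concurrency, high-load websites

bijian1013

high concurrency, load balancing

Scaling web applications

I. Concepts

Put simply, if a system is scalable, you can improve its performance by scaling it. This means the system can handle higher load and larger datasets, and that it remains maintainable. Scaling is independent of language and of any particular technology. Scaling comes in two kinds: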

1.

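- The DISPLAY variable and xhost (original)

czmmiao

display

DISPLAY

On Linux/Unix-like operating systems, DISPLAY sets where graphics are displayed. When you log in directly to the graphical interface, or log in at the command line and start graphics with startx, the DISPLAY environment variable is automatically set to :0.0. You can then open a terminal and type the name of a graphical program (for example xclock) to start it, and its window appears on the local display. Running printenv in the terminal shows the current environment variables, and the output includes: DISPLAY=:0.0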

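- Getting the client IP in a B/S application

周凡杨

java, programming, jsp, Web, browser

Recently I wanted to write a chat system with a B/S architecture. Since I had previously built a QQ-style chat system with a C/S architecture, the Socket communication programming is just consolidation. In a C/S chat system there is a Java application on the client, so the client's IP can be obtained directly in code, using: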

String ip = InetAddress.getLocalHost().getHostAddress();

For the WEB, however,

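- A brief look at classes and objects

朱辉辉33

programming

A class is a general term for a category of things, while an object describes the characteristics of one particular thing; a class is an abstraction of objects. Put simply, a class is abstract and takes up no memory, while an object is concrete and occupies storage space.

A class consists of attributes and methods. The basic format is public class ClassName{

//define attributes

private/public DataType attributeName;

//define methods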

publ

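- Lifecycle issues with an Android Activity and ViewPager + Fragment

肆无忌惮_

viewpager

An Activity contains a ViewPager, and the ViewPager holds two Fragments.

On first entering this Activity, a service is started and then bound in the onResume method; the Service is then initialized, which involves reading a property of one of the Fragments.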

super.onResume();

bindService(intent, conn, BIND_AUTO_CREATE);

- Encoding an image with base64Encode

843977358

base64, image, encoder

/**

* Encode an image with base64encoder

*

* @author mrZhang

* @param path

* @return

*/

public static String encodeImage(String path) {

BASE64Encoder encoder = null;

byte[] b = null;

I

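- Introduction to request headers

aigo

servlet

When a client (usually a browser) sends a request to a Web server, it sends a request line, generally a GET or POST command. When sending a POST command, it must also send the server a request header (Request Header) called "Content-Length" to indicate the length of the request data. Besides Content-Length, it can send the server other headers as well, such as: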

- HttpClient 4.3: creating an HttpClient object for the SSL protocol

alleni123

httpclient, crawler, ssl

public class HttpClientUtils

{

public static CloseableHttpClient createSSLClientDefault(CookieStore cookies){

SSLContext sslContext=null;

try

{

sslContext=new SSLContextBuilder().l

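- Java bitwise NOT, right shift, left shift, and unsigned right shift: a discussion

百合不是茶

bitwise operators, shifts

Bitwise NOT:

In binary, the first bit is the sign bit: 1 means negative, 0 means positive

byte a = -1;

Sign-magnitude: 10000001

Ones' complement: 11111110

Two's complement: 11111111

// after bitwise NOT: 00000000

byte b = -2;

Sign-magnitude: 10000010

Ones' complement: 11111101

Two's complement: 11111110

// after bitwise NOT: 00000001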
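The three 8-bit encodings listed above can be checked with a few lines of Python. This is a sketch for illustration only: the helper names are invented here, and Python integers are unbounded, so each result is masked to 8 bits to imitate a Java `byte`.

```python
WIDTH = 8
MASK = (1 << WIDTH) - 1  # 0xFF, keeps the low 8 bits


def bits(value):
    """Format the low 8 bits of value as a binary string."""
    return format(value & MASK, "08b")


def sign_magnitude(value):
    """Sign bit followed by the magnitude (原码)."""
    sign = 1 << (WIDTH - 1) if value < 0 else 0
    return bits(sign | abs(value))


def ones_complement(value):
    """For negatives, invert every bit of the magnitude (反码)."""
    return bits(~abs(value)) if value < 0 else bits(value)


def twos_complement(value):
    """What a Java byte actually stores (补码)."""
    return bits(value)


for v in (-1, -2):
    print(v, sign_magnitude(v), ones_complement(v), twos_complement(v), bits(~v))
# -1 10000001 11111110 11111111 00000000
# -2 10000010 11111101 11111110 00000001
```

The last column applies bitwise NOT to the stored two's-complement pattern, which is why `~(-1)` is 0 and `~(-2)` is 1.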

- What join does in Java multithreading and how to use it

bijian1013

java, multithreading

Regarding JAVA's join, the JDK says: join public final void join (long millis )throws InterruptedException Waits at most millis milliseconds for this thread to die. A timeout of 0 means t
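The behaviour quoted from the JDK, waiting at most a given time for a thread to finish, can be tried out with Python's `threading` module, used here only as an analogue of Java's `Thread.join(long millis)`. Note one difference that is easy to trip over: Python takes the timeout in seconds and uses `None` (the default) to wait indefinitely. The worker function and timings below are made up for the demonstration.

```python
import threading
import time


def worker(results):
    """Simulate a slow task, then record that it finished."""
    time.sleep(0.3)
    results.append("done")


results = []
t = threading.Thread(target=worker, args=(results,))
t.start()

t.join(timeout=0.01)           # wait at most 10 ms; returns even if t is still running
still_running = t.is_alive()   # True: the timeout expired first

t.join()                       # no timeout: block until the thread has finished
finished = not t.is_alive()

print(still_running, finished, results)
```

As in Java, a timed `join` does not report whether the thread ended; the caller has to check `is_alive()` afterwards.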

- Sending HTTP requests in Java (GET and POST)

bijian1013

java, spring

PostRequest.java

package com.bijian.study;

import java.io.BufferedReader;

import java.io.DataOutputStream;

import java.io.IOException;

import java.io.InputStreamReader;

import java.net.HttpURL

- [Struts2, Part 2] Default values of the action configuration items under package in struts.xml

bit1129

struts.xml

Part one defined the struts.xml file, as shown below:

<!DOCTYPE struts PUBLIC

"-//Apache Software Foundation//DTD Struts Configuration 2.3//EN"

"http://struts.apache.org/dtds/struts

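- [Kafka, Part 13] Kafka Simple Consumer

bit1129

simple

In the code, Host and Port are handled separately, so this example cannot run against a pseudo-distributed Kafka cluster on a single machine.

In practice, host and port need to be bound together,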

package kafka.examples.lowlevel;

import kafka.api.FetchRequest;

import kafka.api.FetchRequestBuilder;

impo

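- Learning the nodejs API

ronin47

nodejs api

NodeJS basics: what is NodeJS

JS is a scripting language, and every scripting language needs an interpreter to run. For JS written in an HTML page, the browser plays the role of interpreter. For JS that needs to run on its own, NodeJS is the interpreter.

Every interpreter is a runtime environment: it not only lets JS define data structures and perform computations, it also lets JS do things with the built-in objects and methods the environment provides. For example, JS running in a browser is used to manipulate the DOM, so the browser provides docum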

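- java-64. Finding the Nth ugly number

bylijinnan

java

public class UglyNumber {

/**

* 64. Find the Nth ugly number

For the approach, see [url] http://zhedahht.blog.163.com/blog/static/2541117420094245366965/[/url]

*

Problem: numbers whose only factors are

2, 3, and 5 are called ugly numbers (Ugly Number). For example, 6 and 8 are ugly numbers, but 14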
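Since the Java listing is cut off, here is a short Python sketch of one standard way to generate ugly numbers in order, the three-pointer merge. It is not necessarily the approach of the original post or of the linked article, and it follows the usual convention that 1 counts as the first ugly number, which the excerpt does not state.

```python
def is_ugly(n):
    """True if n has no prime factors other than 2, 3, and 5."""
    if n <= 0:
        return False
    for p in (2, 3, 5):
        while n % p == 0:
            n //= p
    return n == 1


def nth_ugly(n):
    """Return the n-th ugly number (1-indexed, treating 1 as the first)."""
    ugly = [1]
    i2 = i3 = i5 = 0  # index of the next value to multiply by 2, 3, and 5
    while len(ugly) < n:
        nxt = min(ugly[i2] * 2, ugly[i3] * 3, ugly[i5] * 5)
        ugly.append(nxt)
        # Advance every pointer that produced nxt, so duplicates are skipped.
        if nxt == ugly[i2] * 2:
            i2 += 1
        if nxt == ugly[i3] * 3:
            i3 += 1
        if nxt == ugly[i5] * 5:
            i5 += 1
    return ugly[n - 1]


print([nth_ugly(k) for k in range(1, 11)])  # [1, 2, 3, 4, 5, 6, 8, 9, 10, 12]
```

Each new ugly number is an earlier one times 2, 3, or 5, so the merge runs in O(n) time instead of testing every integer with `is_ugly`.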

- Printing a 2D array (matrix) along its diagonals

bylijinnan

2D array

/**

Diagonal output of a 2D array, in both directions

For example, for the array:

{ 1, 2, 3, 4 },

{ 5, 6, 7, 8 },

{ 9, 10, 11, 12 },

{ 13, 14, 15, 16 },

Output in the slash direction:

1

5 2

9 6 3

13 10 7 4

14 11 8

15 12

16

Output in the backslash direction:

4

3
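The slash-direction output shown above can be produced by walking each anti-diagonal from its bottom-left cell up and to the right. The sketch below is in Python rather than the Java of the original listing, and `slash_diagonals` is a name chosen here; the backslash direction is left out because its expected output is cut off in the excerpt.

```python
def slash_diagonals(matrix):
    """Return the diagonals in the slash direction, each read from bottom-left to top-right."""
    rows, cols = len(matrix), len(matrix[0])
    result = []
    for d in range(rows + cols - 1):
        r = min(d, rows - 1)  # start as low as possible on this diagonal
        c = d - r
        diagonal = []
        while r >= 0 and c < cols:
            diagonal.append(matrix[r][c])
            r -= 1            # move up
            c += 1            # and to the right
        result.append(diagonal)
    return result


grid = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
for diagonal in slash_diagonals(grid):
    print(" ".join(str(x) for x in diagonal))
```

For the 4x4 example this prints 1, then 5 2, then 9 6 3, and so on down to 16, matching the listing.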

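- [JWFD open-source workflow design] Key points in developing the workflow jump mode (updated today)

comsci

workflow

Since we build open-source software, our aim is to share designs and code, so I will now explain the design principle of this jump mode in simple, concise terms

If you have used JWFD's ARC (automatic run controller) or read its code, you will know that the ARC algorithm module contains a function called SAN(), which is ARC's core controller. To implement jump mode, the SAN function must operate on the LN linked-list data structure. First, write a piece of code that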

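- Common redis usage

cuityang

common redis usage

redis is usually regarded as a data structure server, mainly because of its rich data structures: strings, map, list, sets, sorted sets

Add the jar package jedis-2.1.0.jar (download provided at the bottom of this article)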

package redistest;

import redis.clients.jedis.Jedis;

public class Listtest

- Configuring multiple redis instances

dalan_123

redis

Configuring multiple redis clients

<?xml version="1.0" encoding="UTF-8"?><beans xmlns="http://www.springframework.org/schema/beans" xmlns:xsi=&quo

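- The attrib command

dcj3sjt126com

attr

The attrib command modifies file attributes. Common file attributes are read-only, archive, hidden, and system.

The read-only attribute means the file can only be read and cannot be written to; it is write protection for the file.

The archive attribute marks that a file has changed, that is, that it has been modified since the last backup. Some backup software backs up only files that carry the archive attribute.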

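- Using shared functions in Yii

dcj3sjt126com

yii

In a website project there is no need to wrap shared functions in a utility class; sometimes a procedural style is simply more convenient. Add require_once('protected/function.php'); to the entry file index.php to include it as a shared collection of functions. function.php looks like this: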

<?php /** * This is the shortcut to D

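- Viewing linux system resources (free, uname, uptime, netstat)

eksliang

netstat, linux uname, linux uptime, linux free

Viewing linux system resources

When reposting, please cite the source: http://eksliang.iteye.com/blog/2167081

http://eksliang.iteye.com I. free: viewing memory usage

Syntax:

free [-b][-k][-m][-g] [-t]

Parameter meanings

-b: when free is run with no options, the unit shown is KB; we can use b (bytes), m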

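- Bitwise operators in JAVA

greemranqq

bitwise operations, JAVA, shifts, <<, >>>

Lately I have found the various number bases and bitwise operators rather fuzzy and not fully mastered, so here I get reacquainted with them.

1. Bitwise operators:

Bitwise operators manipulate individual bits (binary digits) of the primitive data types. They apply Boolean algebra to the corresponding bits of two operands to produce a result.

AND (&) operation: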

1&1 = 1, 1&0 = 0, 0&0 &

- Web front-end learning sites

ihuning

Web

Web front-end learning sites

Beginner learning (菜鸟学习): http://www.w3cschool.cc/

JQuery Chinese site: http://www.jquerycn.cn/

OutOfMemory (内存溢出): http://outofmemory.cn/#csdn.blog

http://www.icoolxue.com/

http://www.jikexue

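- A strong alliance: the author of FluxBB joins Flarum

justjavac

r

Original: FluxBB Joins Forces With Flarum. Author: Toby Zerner. Translation: 强强联合:FluxBB 作者加盟 Flarum. Translator: justjavac

FluxBB is fast, lightweight forum software whose developer is Franz Liedke, a German PHP whiz. The next version of FluxBB (2.0) is being completely rewritten and has been in development for some time. FluxBB looks very promising,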

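- Counting online users in Java (with login information stored in the session)

macroli

java, Web

This is the third time I have written this post; the first two attempts failed to publish. So frustrating!

This comes up often in web development, so I am recording it here as a memo!

I store login information in the session, so here I do it by implementing the HttpSessionAttributeListener interface.

1. Implement the interface in a class and configure the listener class in web.xml so that the class can do its job.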

public class Ses

- A first look at bootstrap carousel: quickly building an image slideshow

qiaolevip

a little progress every day, learning never ends, bootstrap, 纵观千象

img{

border: 1px solid white;

box-shadow: 2px 2px 12px #333;

_width: expression(this.width > 600 ? "600px" : this.width + "px");

_height: expression(this.width &

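- Reading HBase data with SparkSQL through a custom external data source

superlxw1234

spark, sparksql, reading hbase with sparksql, sparksql external data source

Keywords: reading HBase with SparkSQL, SparkSQL custom external data source

An earlier article covered how SparkSQL operates on HBase tables through Hive.

SparkSQL has supported custom external data sources (External DataSource) since 1.2, so you can implement your own external data source through the API. Based on Spark 1.4.0, this post briefly introduces SparkSQL custom external data sources, to access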

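- Spring Boot 1.3.0.M1 released

wiselyman

spring boot

Spring Boot 1.3.0.M1 was released on 6.12 and can now be downloaded from the Spring milestone repository. This version is based on Spring Framework 4.2.0.RC1 and provides a large number of improvements and new features on top of Spring Boot 1.2. The main ones include:

1. Provides a new sprin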