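- Text-to-speech playback in Python with pyttsx3

l8947943

python issues, speech recognition, artificial intelligence, pyttsx3, python read-aloud

1. What is pyttsx3? pyttsx3 is a text-to-speech library for Python that can read text aloud. 2. Installing pyttsx3: pip install pyttsx3 3. A pyttsx3 demo: import pyttsx3 pyttsx3.speak("Are you ok?") pyttsx3.speak("最近有许多打工人都说打工好难") Put on your headphones and run it. Simple, isn't it? But what if we want to customize the speech rate, or the handling of Chinese and English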

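- Fixing the Python error img2pdf.AlphaChannelError: Refusing to work on images with alpha channel

定星照空

python, artificial intelligence

img2pdf.AlphaChannelError: Refusing to work on images with alpha channel - solved. Fixing the img2pdf module's refusal to accept images with alpha-channel transparency, and the alpha-channel error raised when img2pdf converts PNG images to PDF. Contents: Preface. I. Why does AlphaChannelError occur? II. How to fix this error. 1. Method 1: convert the image to another format 2. Method 2: remove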

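- Developing a drawing-view locking tool with PyCATIA: a practical walkthrough

Python×CATIA工业智造

CATIA secondary development, python, automation

Introduction: To address accidental view edits in CATIA drawing design, this article develops a lightweight view-locking tool based on PySide6 and the PyCATIA library. Through Python secondary development it implements quick locking of all views or selected views, a modeless interactive UI, and real-time status feedback, improving the efficiency of working with drawings of large assemblies. The article analyzes the code architecture, the key technical implementation, and the engineering value, and provides a complete development methodology. I. Tool features and engineering scenarios. 1.1 Core feature modules. Feature module | Technical specification | Application scenario. Lock all views | Batch operation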

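- Converting text to speech MP3 files with the Pyttsx3 library in Python

定星照空

python

Introduction to Pyttsx3: pyttsx3 is a powerful, easy-to-use local text-to-speech library for Python. It can convert text into speech MP3 files offline, and it can also speak text aloud on Windows, macOS, and Linux. You can also adjust the speech rate, volume, and voice. Installation and basic usage. Installation: run pip install pyttsx3 in the cmd command line. Basic usage example: import pyttsx3 # initialize the speech engine engine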

- Custom JSON serializer and deserializer for Kafka in Spring Boot

zhou_zhao_xu

Kafka, spring

1. Serializer: package com.springboot.kafkademo.serialization; import com.alibaba.fastjson.JSON; import com.alibaba.fastjson.JSONObject; import org.apache.kafka.common.serialization.Serializer; import java.util.Map; /**

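- Building a Transformer neural network with PyTorch: getting started

DASA13

pytorch, transformer, neural networks

1. Introduction: The Transformer is a powerful neural network architecture that has achieved great success in natural language processing and many other fields. This tutorial walks you through building a Transformer model from scratch with PyTorch. We explain each component step by step and provide detailed code. 2. Environment setup: First, make sure Python is installed on your system (3.7+ recommended). Then install PyTorch and the other required libraries: pip install torch numpy matplotlib 3.P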

- The custom example agent in openai-agents

ZHOU_CAMP

oi_agents, artificial intelligence

Code: pip show openai-agents Name: openai-agents Version: 0.0.4 Summary: OpenAI Agents SDK Home-page: https://github.com/openai/openai-agents-python Author: Author-email: OpenAI License-Expression: MIT Location: d:\soft\ana

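- Using Faiss, a vector search library, in Python

懒大王爱吃狼

python, python, programming languages, automation, Python basics, python tutorial

Faiss (Facebook AI Similarity Search) is a library developed by Facebook AI Research for efficient search and clustering over large sets of vectors. Faiss can search hundreds of millions of vectors in a few milliseconds, which makes it well suited to approximate nearest neighbor (ANN) search, useful in many applications such as image retrieval, recommender systems, and natural language processing. The basic steps and examples for using Faiss follow: 1. Install Faiss. First, you need to install Faiss. You can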

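- Syncing Hive audit logs from the Ranger plugin to Doris for analysis

fzip

Doris, Hive, doris, audit, hive

The following are detailed steps for syncing Ranger Hive audit logs into Apache Doris, combining the audit log plugin with a data import strategy: I. Preparing the Doris environment. 1. Create the audit log database and table. Following the table design from the search results, adjust the CREATE statement to the Ranger log fields: CREATE DATABASE IF NOT EXISTS ranger_audit; CREATE TABLE IF NOT EXISTS ranger_audit_hive_log (repoTyp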

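- A practical guide to deploying Python applications to the cloud: AWS, Google Cloud, and Azure

清水白石008

python, Python question bank, python, aws, azure

With cloud computing developing rapidly, deploying Python applications to a cloud platform has become the first choice for most developers and companies. Whether you are building web services, API endpoints, or scheduled automation tasks, cloud platforms offer high reliability, elastic scaling, and simple management. This article explains in detail how to deploy Python applications to AWS, Google Cloud, and Azure, and introduces the deployment tools involved on each platform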

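- Python programming: why use synchronization primitives

林十一npc

Python language, python, programming languages

1. Synchronization primitives. Synchronization primitive: a mechanism in computer science for synchronizing processes or threads. Purpose: to control the execution order of multiple processes or threads so that they access shared resources in a consistent way. In multithreaded or multiprocess programs, several execution units may access a shared resource at the same time, which causes race conditions. Synchronization primitives coordinate the execution order to guarantee data consistency and atomic operations. 2. Python's core synchronization primitives. Primitive | Purpose | Typical use | Module. Lock (mutex) | Ensures that at any moment only one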
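
The race condition described above can be sketched with `threading.Lock` from the standard library. This is a minimal illustration, not code from the article: the names `counter`, `add_many`, and `run_workers` are hypothetical, and the worker count and iteration count are arbitrary.

```python
import threading

counter = 0                      # shared resource (hypothetical example state)
counter_lock = threading.Lock()  # mutex guarding every update to counter


def add_many(times):
    """Increment the shared counter `times` times, one locked step at a time."""
    global counter
    for _ in range(times):
        # Only one thread at a time may run the read-modify-write below.
        with counter_lock:
            counter += 1


def run_workers(worker_count=4, times=10_000):
    """Start several threads that all update the same counter, then wait."""
    global counter
    counter = 0
    threads = [
        threading.Thread(target=add_many, args=(times,))
        for _ in range(worker_count)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter


print(run_workers())  # 40000
```

Because every increment happens while the lock is held, the final value always equals `worker_count * times`, whatever order the threads run in.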

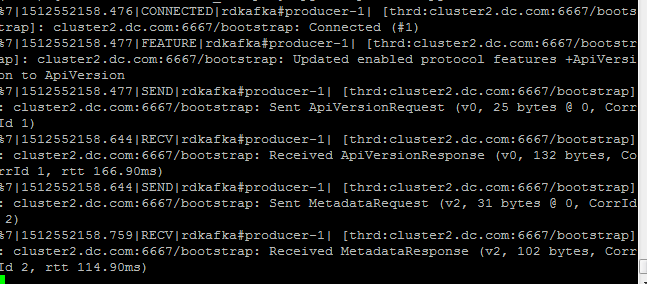

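- Kafka producer fails to send: ...has passed since batch creation plus linger time

Lichenpar

#bug fix log, kafka, network security, java

Background: my company plans to use Huawei Cloud's Kafka service. I was responsible for the technical evaluation, with a Kafka component to be wrapped later. I downloaded the demo from Huawei Cloud and set up the configuration files exactly as the developer documentation describes, but got the following error. org.apache.kafka.common.errors.TimeoutException: Expiring 10 record(s) for topic-0: 30015 ms has passed since batch creation plus l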

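- Python function closures and recursion_Closures and recursion_Personal article - SegmentFault 思否

weixin_39830313

Python function closures and recursion

JS variable scope: global scope (global variables): variables declared outside any function. Lifetime (from declaration to destruction): from when the page opens until it closes. Local scope (local variables): variables declared inside a function. Lifetime: from the start of the function call until the function finishes. 1. Using closures. 1. Introduction to closures. 1.1 A closure is a function that can access a function's internal variables from outside that function -> a closure is a function. 1.2 What closures do: they allow access to a function's internal variables from outside it -> they extend the lifetime of local variables. 1.3 Closure syntax: -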

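- Python function closures and recursion_Python function basics 3: closures + recursion + callbacks

weixin_39532019

Python function closures and recursion

I. Closures. 1. Nested functions: def outer(): print("outer function") def inner(): print("inner function") return inner() outer() Flowchart of nested functions. 2. Closures. The form a closure takes: a function is nested inside another function, and the outer function returns the inner function's name; this is called a closure. def outer(): print("outer function") def inner(): print("inner function") return inner ret = outer(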
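
The difference between `return inner()` and `return inner` can be sketched as follows. `outer` and `inner` mirror the teaser; `make_counter` is a hypothetical addition showing that the returned inner function keeps access to the outer function's local variable.

```python
def outer():
    print("outer function")

    def inner():
        print("inner function")

    # Return the function object itself (no parentheses), not its result.
    return inner


def make_counter():
    """Hypothetical helper: each call to the returned function bumps a private count."""
    count = 0

    def increment():
        nonlocal count   # rebind the variable captured from make_counter
        count += 1
        return count

    return increment


ret = outer()            # prints "outer function" and returns inner
ret()                    # prints "inner function"

counter = make_counter()
print(counter(), counter())  # 1 2
```

Each call to `make_counter` creates a separate `count`, so two counters built this way do not share state.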

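- Exploring a new frontier in data security: a deep dive into the Apache Spark SQL Ranger Security plugin

乌昱有Melanie

Project: https://gitcode.com/gh_mirrors/sp/spark-ranger As big data grows explosively, data security has become a core issue that enterprises cannot ignore. Against this background, the Apache Spark SQL Ranger Security Plugin stands out for its strong data access control and has become a star in the data processing field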

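- A summary of recursion, callback functions, and closures in Python

敲代码敲到头发茂密

Python growth path, python, programming languages

Contents: I. Recursion. Example 1: sum the numbers 1 to 10 with a recursive function. Example 2: compute 10 factorial with a recursive function. II. Callback functions. Note: calls made inside a function fall into the following cases: 1. synchronous callbacks 2. asynchronous callbacks. III. Closures. I. Recursion. Purpose: a function calls itself a number of times from inside its own body. Example 1: sum the numbers 1 to 10 with a recursive function: def sum_num(num): if num >= 1: sum = num + sum_num(num - 1) else: sum = 0 return sum print(sum_n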
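
The two recursion examples named in the contents can be written out as a short runnable sketch. `sum_num` follows the truncated code in the teaser; `factorial` is an assumed name for Example 2, which the teaser does not show.

```python
def sum_num(num):
    """Return 1 + 2 + ... + num by recursion; 0 is the base case."""
    if num >= 1:
        return num + sum_num(num - 1)
    return 0


def factorial(num):
    """Return num! by recursion; values of 1 or less yield 1."""
    if num <= 1:
        return 1
    return num * factorial(num - 1)


print(sum_num(10))    # 55
print(factorial(10))  # 3628800
```

Both functions stop because each call moves `num` one step closer to the base case.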

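- Implementing a memory puzzle game with Pygame

点我头像干啥

Ai, pygame, python, programming languages

Introduction: The memory puzzle is a classic brain game in which the player flips cards to match identical patterns. Games like this exercise the player's memory and are a lot of fun. This article explains in detail how to implement a simple memory puzzle game with the Pygame library. We start with Pygame basics and build each part of the game step by step until it is complete. 1. About Pygame: Pygame is a Python library for writing video games, built on the SDL library (Simple DirectMedia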

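- "Python in Practice: Advanced" No28: Remote server management with Paramiko

带娃的IT创业者

Python in Practice: Advanced, python, servers, programming languages

No28: Remote server management with Paramiko. Abstract: In modern development and operations, remote server management is essential. Over the SSH protocol we can connect securely to remote servers and perform all kinds of operations. Python's Paramiko module is a powerful tool for automating tasks such as code deployment, batch command execution, and file transfer. This installment covers Paramiko's core features in depth and shows through practical cases how to manage remote servers efficiently. Core concepts and key points: basics of the SSH protocol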

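- Cloud Native Weekly | CIO insights: Kubernetes unlocks a new era of AI

KubeSphere 云原生

cloud native, kubernetes, artificial intelligence

Recommended open source projects. DRANET: DRANET is a K8s network driver developed by Google that uses K8s Dynamic Resource Allocation (DRA) to provide high-performance networking for high-throughput, low-latency applications. It aims to optimize resource management so that network resources in a K8s cluster are allocated efficiently on demand. DRANET is released under the Apache-2.0 open source license, encourages community contribution and extension, and is an innovative solution for improving network performance in cloud native environments. Lazyjournal: Lazyjournal is a terminal user interface written in Go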

- Python interview staples (1) -- FBV, CBV

suohanfjiusbis

databases, python

Introduction: An FBV is a function-based view. def FBV(request): if request.method == 'GET': return HttpResponse("GET") elif request.method == 'POST': return HttpResponse("POST") A CBV is a class-based view. class CBV(View): def get(self, request): return HttpResponse("G

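- [Pure-Profession Groups: reasoning]

Kent_J_Truman

Lanqiao Cup, algorithms

Problem. Approach: 15th Lanqiao Cup provincial contest, Python Group B, Problem H [Pure-Profession Groups] solution (AC)_Lanqiao Cup pure-profession groups - CSDN Blog. Code: #include using namespace std; using ll = long long; int main() { ios::sync_with_stdio(0); cin.tie(0); int t; cin >> t; while (t--) { int n; ll k; cin >> n >> k; unordered_map h; f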

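- 15th Lanqiao Cup provincial contest, Python Group B, Problem B [Number of Digit Strings] solution (AC)

信奥郭老师

Lanqiao Cup, career and development

Let $n = 10000$. Method 1: Enumerate the number of 3s and the number of 7s. Suppose there are $i$ 3s and $j$ 7s; then the number of characters other than 3 and 7 is $n-i-j$. The number of ways to choose $i$ positions in a string of length $n$ is $C_n^i$, the number of ways to choose $j$ of the remaining $n-i$ positions is $C_{n-i}^j$, and the number of remaining positions is $n-i-j$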

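- ModuleNotFoundError: No module named 'h5py'

Hardess-god

python

The error ModuleNotFoundError: No module named 'h5py' means that the h5py module is not installed in your Python environment. h5py is a Python interface to the HDF5 binary data format, widely used for storing and manipulating data at scale. Solution: install h5py. To fix the problem, install h5py in your Python environment. The steps for different environments follow. Installing with pip: if you use the pip package manager, you can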

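- CSP-J exam prep must-do problems (C++) | AcWing 1253 Family Tree

热爱编程的通信人

c++, programming languages

The must-do problems shared here are hand-picked from well-known practice platforms such as Lanqiao Cloud Course, Luogu, and AcWing, and are systematically classified using each platform's algorithm tags and difficulty levels. They cover algorithms and data structures from basic to advanced, aiming to give learners at every stage a clear, steady path for improvement. Feel free to subscribe to my column: Algorithm Solutions: C++ and Python implementations! Index post: Must-do problems for algorithm contest prep (C++) | Index. [Problem source] AcWing: 1253. Family Tree - AcWing problem bank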

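- Lanqiao Cup 2024, 15th provincial contest problem: Magic Tour (Python)

罄竹_

python problem practice, python, Lanqiao Cup, algorithms

Preface: This article draws on the approach in FJ_EYoungOneC's article and corrects some misunderstandings in it. I. Problem. Source: dotcpp. Description: In the Lanqiao Kingdom, two magic envoys, Xiao Lan and Xiao Qiao, are charged with maintaining the order of space and time. Each of them holds N rune stones endowed with great power, and each stone is engraved with a number between 1 and $10^9$. Xiao Lan's stones are labeled s1, s2, ..., sN and Xiao Qiao's are labeled t1, t2, ..., tN. The two envoys' task is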

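- Want to generate docx documents automatically with Dify? After trying everything, python-docx still seems more reliable

几道之旅

artificial intelligence, AI agents and digital employees, artificial intelligence

Preface: the pain points of automatic document generation. In software development, writing requirements documents, design documents, and similar material is work no developer can avoid. I recently got a task to batch-generate standardized requirements documents. After trying the popular low-code tool Dify, I found that for documents with even slightly complex formatting (something as simple as centering text, for example) I ended up going back to a solution based on the python-docx library. This article compares the two approaches in practice. I. Pitfalls with Dify. I tried markdown to doc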

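- Sorting lists in Python

hedgehog"

python, python, list

Ways to sort a list in Python: 1. the sort() method 2. the sorted() function. 1. The sort() method returns nothing. Main parameters: (1) key: a function applied to each element to produce the value used for comparison. (2) reverse: the sort order; reverse=True sorts in descending order, reverse=False sorts in ascending order (the default). Example 1: list1 = [5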
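
The contrast between `sort()` and `sorted()`, along with the `key` and `reverse` parameters, can be seen in a few lines. The sample lists below are hypothetical, since the teaser's own example is cut off.

```python
numbers = [5, 2, 9, 1]           # hypothetical sample data
result = numbers.sort()          # sorts in place; the return value is None
numbers.sort(reverse=True)       # descending order, still in place

words = ["pear", "fig", "banana"]
by_length = sorted(words, key=len)   # returns a new list; words is unchanged

print(result)      # None
print(numbers)     # [9, 5, 2, 1]
print(by_length)   # ['fig', 'pear', 'banana']
print(words)       # ['pear', 'fig', 'banana']
```

Use `sort()` when the list itself should stay sorted, and `sorted()` when the original order still matters or the input is not a list.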

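- Python list sorting

rainynights

Python

Working with lists is very common in practice. In many situations we need to arrange the data in a list to meet a particular display requirement. Today, let's look at list sorting in Python. Sometimes we want the list to keep the sorted result, but often we only want to display a sorted view temporarily. List sorting can therefore be divided into permanent sorting and temporary sorting. sort() sort(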

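- A detailed guide to building retrieval-augmented generation (RAG) applications with Python and LangChain

m0_57781768

python, langchain, search engines

Introduction: In artificial intelligence and natural language processing, building sophisticated question-answering (Q&A) systems with large language models (LLMs) is an important application. Retrieval Augmented Generation (RAG) is a technique that augments an LLM by combining the model's knowledge with additional data, so that it can answer questions about specific source information. These applications are not limited to public data; they can also handle private data and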

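- Huawei OD coding test - Relatively Open Syllables - regular expressions (Python/JS/C/C++ 2024 Paper E, 100 points)

哪 吒

Huawei OD, regular expressions, python

The Huawei OD coding test 2024 Paper E problem bank is being collected rapidly; click here to practice. Column guide: This column is part of "Huawei OD Coding Test Problems (Python/JS/C/C++)". The more problems you practice, the higher your chance of drawing one you have seen. Message 哪吒 with the note "Huawei OD" to join the Huawei OD practice group. Every problem comes with a detailed approach, detailed code comments, 3 test cases, why the problem uses algorithm XX, and where algorithm XX applies; new problems are added as they are found. I. Problem description: A relatively open syllable has the structure consonant + vowel (aeiou) + consonant (except r) +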

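- Nginx load balancing

510888780

nginx, application servers

Nginx load balancing: some basics:

nginx's upstream currently supports 4 allocation methods

1) Round robin (default)

Each request is assigned to a different backend server in turn, in order of arrival; if a backend server goes down, it is removed automatically.

2) weight

Sets the polling weight; a server's share of requests is proportional to its weight.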
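
How a weight translates into a share of requests can be sketched in Python. This is a deliberately simplified model, not nginx's actual scheduling algorithm: the function `weighted_cycle` and the server names `backend_a` and `backend_b` are hypothetical, and each server is simply repeated in the rotation as many times as its weight.

```python
from collections import Counter
from itertools import cycle, islice


def weighted_cycle(weights):
    """Yield server names forever, each appearing `weight` times per rotation."""
    expanded = [name for name, weight in weights.items() for _ in range(weight)]
    return cycle(expanded)


# Hypothetical pool: backend_a has weight 3, backend_b has weight 1.
picks = list(islice(weighted_cycle({"backend_a": 3, "backend_b": 1}), 8))
share = Counter(picks)

print(picks)
print(share["backend_a"], share["backend_b"])  # 6 2
```

Over 8 requests the weight-3 server receives 6 and the weight-1 server receives 2, which matches the 3:1 ratio of the weights.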

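- Installing rabbitmq on RedHat 6.4

bylijinnan

erlang, rabbitmq, redhat

Installing software on Linux is a hassle. First, the test machine had no internet access, so I had to ask ops to open it up. Once that was done, the test machine's yum turned out not to work, so I had to configure a yum repository. Finally, I tried two ways of installing rabbitmq before it succeeded.

Machine version: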

[root@redhat1 rabbitmq]# lsb_release

LSB Version: :base-4.0-amd64:base-4.0-noarch:core

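- The FilenameUtils utility class

eksliang

FilenameUtils, common-io

When reposting, please cite the source: http://eksliang.iteye.com/blog/2217081 I. Overview

This is a commonly used Java library for file operations, Apache's wrapper around Java's IO package. It has two core classes, FilenameUtils and FileUtils: FilenameUtils wraps file name operations and FileUtils wraps file operations. Almost every file operation needed in development can be found in this library, and it is very convenient to use.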

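- Parsing XML files with SAX

不懂事的小屁孩

xml

XML parsing: there are four ways to parse an XML file,

1. Generating and parsing XML documents with DOM (parsing based on the XML document tree structure)

2. Generating and parsing XML documents with SAX (parsing based on an event stream)

3. Generating and parsing XML documents with DOM4J

4. Generating and parsing XML with JDOM

This article parses with the second method, SAX, using DefaultHandler, which is commonly used on Android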

import org.xml.sax.Attributes;

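- Periodically dropping and creating MySQL partitions with a scheduled task, partitioned by day here

酷的飞上天空

mysql

A Python script serves as the command script, and a Linux scheduled task runs it every day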

#!/usr/bin/python

# -*- coding: utf8 -*-

import pymysql

import datetime

import calendar

# table to partition

table_name = 'my_table'

# database connection info

host,user,passwd,db =

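- How to build a data lake architecture? Hear what the experts say

蓝儿唯美

architecture

A few years ago Edo Interactive ran into a big problem: the company used transaction data to help retailers and restaurants run personalized promotions, but its data warehouse did not have enough time to process all the credit card and debit card transaction data

"It took us 27 hours to process each day's data," said Tim Garnto, Edo's senior vice president of infrastructure and information systems. "So in 2013 we abandoned our existing PostgreSQL-based relational database system and adopted a Hadoop cluster as the company's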

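- Learning Spring: inversion of control and dependency injection

a-john

spring

Inversion of Control (IoC) is an important object-oriented programming principle for reducing coupling in computer programs, and it is the core of the lightweight Spring framework. Inversion of control generally takes two forms: Dependency Injection (DI) and Dependency Lookup. Dependency injection is the more widely used.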
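
The idea of dependency injection can be sketched without any framework. The example below is plain Python rather than Spring, and every class name in it (`SmtpSender`, `FakeSender`, `Notifier`) is hypothetical: the point is only that `Notifier` receives its collaborator from the outside instead of constructing it.

```python
class SmtpSender:
    """Hypothetical production dependency."""

    def send(self, recipient, text):
        return f"smtp:{recipient}:{text}"


class FakeSender:
    """Hypothetical stand-in, e.g. for tests; same interface as SmtpSender."""

    def send(self, recipient, text):
        return f"fake:{recipient}:{text}"


class Notifier:
    def __init__(self, sender):
        # The dependency is injected through the constructor, so Notifier
        # never decides which concrete sender it uses.
        self.sender = sender

    def notify(self, user):
        return self.sender.send(user, "welcome")


print(Notifier(SmtpSender()).notify("amy"))  # smtp:amy:welcome
print(Notifier(FakeSender()).notify("amy"))  # fake:amy:welcome
```

A container such as Spring automates the wiring step, but the reduced coupling comes from the same inversion: the caller, not the class, chooses the implementation.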

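- Generating text files with spool + unix shell

aijuans

xshell

For example, we write the statements that export the scott.dept table to a text file as dept.sql, with the following contents: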

set pages 50000;

set lines 200;

set trims on;

set heading off;

spool /oracle_backup/log/test/dept.lst;

select deptno||','||dname||','||loc

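- 1. Basics: terminology (OOA/OOD/OOP)

asia007

learning, fundamentals

OOA: Object-Oriented Analysis

After the business investigation of a system has been carried out during development, the problem is analyzed following object-oriented thinking. OOA differs considerably from structured analysis. OOA emphasizes classifying, analyzing, and organizing the material that the OO method needs, on the basis of the system investigation data, rather than analyzing the current state and methods of the business being managed.

The OOA (object-oriented analysis) model consists of 5 layers (subject layer, object-class layer, structure layer, attribute layer, and service layer)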

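- A brief look at converting Java to JSON encoding

百合不是茶

json encoding, converting java to json encoding

JSON encoding is a lightweight format for data storage and transfer

In Java you need to bring in the JSON packages; placing them in the project's lib directory is enough

Converting between JSON and Java data (JSON stands for JavaScript Object Notation; it is a lightweight data interchange format, well suited to exchanging data between a server and JavaScript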

- Spring configuration in web.xml (Spring + Struts + Ibatis)

bijian1013

java, web.xml, SSI, spring configuration

Specify the location of the Spring configuration files

<context-param>

<param-name>contextConfigLocation</param-name>

<param-value>

/WEB-INF/spring-dao-bean.xml,/WEB-INF/spring-resources.xml,

/WEB-INF/

- Installing SonarQube(Fail to download libraries from server)

sunjing

InstallSonar

1. Download and unzip the SonarQube distribution

2. Starting the Web Server

The default port is "9000" and the context path is "/". These values can be changed in &l

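- [MongoDB study notes 11] Basic add, remove, and query operations on a Mongo replica set

bit1129

mongodb

I. Creating a replica set

Assuming mongod and mongo are on the system PATH, open three command-line windows and run the following commands, one in each: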

mongod --port 27017 --dbpath data1 --replSet rs0

mongod --port 27018 --dbpath data2 --replSet rs0

mongod --port 27019 -

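- Anychart chart series, part 2: Flash and HTML5 rendering

白糖_

Flash

Today I introduce Anychart's Flash and HTML5 rendering

HTML5

Starting with the first 6.0 release, Anychart has gradually begun to support HTML5 rendering for its various chart types. In other words, even without the Flash plugin installed, you can see Anychart's charts as long as your browser supports HTML5 (although this requires some configuration).

A reminder: Anychart 6.0's HTML5 support is not yet very mature and is currently still in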

- Laravel upgrade error 4.2.8 -> 4.2.9: Declaration of ... CompilerEngine ... should be compa

bozch

laravel

Yesterday, while upgrading laravel to the latest version, the following error suddenly appeared:

ErrorException thrown with message "Declaration of Illuminate\View\Engines\CompilerEngine::handleViewException() should be compatible with Illuminate\View\Eng

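- The Beauty of Programming: NIM game analysis: how the first mover can guarantee a win when the total number of stones is odd

bylijinnan

The Beauty of Programming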

import java.util.Arrays;

import java.util.Random;

public class Nim {

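/** The Beauty of Programming: NIM game analysis

Problem:

There are N stones and two players, A and B. Player A first splits the stones at random into several piles, and then the players take stones in turn in the order BABA...;

the player who takes all the remaining stones in one move wins. On each turn, a player may choose only one of the piles,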

- Creating an index and running simple queries with Lucene

chengxuyuancsdn

query, index creation, Lucene

import java.io.File;

import java.io.IOException;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.analysis.standard.StandardAnalyzer;

import org.apache.lucene.document.Docume

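- [IT and investment] Insist on independent research into core technology

comsci

it

When you co-develop a product with others, the collaboration is hard to sustain if the two sides' technical levels differ, and it usually becomes a way for the stronger party to control the weaker one.

So when the weaker party runs into a technical problem, it is better not to turn to the stronger party for help right away, because on this planet all living things have a tendency to control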

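- Flashback transaction query

daizj

oracle, sql, flashback transaction

Flashback transaction query differs from flashback query in the following 3 ways:

(1) To work properly it needs not only undo data but also minimal supplemental logging enabled in advance.

(2) The result returned is not the earlier "old" data but the undo SQL statements that can change the current data back to its earlier state.

(3) Queries are made centrally against flashback_transaction_query, rather than against individual tables using "as of" or "vers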

- Java I/O: listing files with a given extension under a path using FilenameFilter

游其是你

FilenameFilter

This is an example of using FilenameFilter: it lists all files under the path "c:\\folder" that have the ".jpg" extension.

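- Learning C, part 5: functions, forward declarations of functions, and how to design functions sensibly in software development to solve real problems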

dcj3sjt126com

c

# include <stdio.h>

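int f(void) // void in the parentheses means the function takes no arguments; int is the return type

{

return 10; // return 10 to the calling function

}

void g(void) // void before the function name means the function returns no value

{

//return 10; // error: conflicts with the void at the start of line 8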

}

in

- Installing with yum in the test environment today, I hit a problem: Error: Cannot retrieve metalink for repository: epel. Pl

dcj3sjt126com

centos

Installing with yum in the test environment today, I hit a problem:

Error: Cannot retrieve metalink for repository: epel. Please verify its path and try again

The fix is simple: edit the file "/etc/yum.repos.d/epel.repo", uncomment baseurl, and comment out mirrorlist.

- Singleton pattern

shuizhaosi888

Singleton pattern

Singleton pattern, lazy initialization

public class RunMain {

/**

* Private constructor

*/

private RunMain() {

}

/**

* Inner class used as a holder; only

*/

private static class SingletonRunMain {

priv

- Spring Security(09)——Filter

234390216

Spring Security

Filter

Contents

1.1 Filter order

1.2 Adding a Filter to the FilterChain

1.3 DelegatingFilterProxy

1.4 FilterChainProxy

1.5

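- Company project NODEJS practice 0.1

逐行分析JS源代码

mongodb, nginx, ubuntu, nodejs

I. Preface

How can a front-end developer independently implement simple registration and login with Node.js? Is nodejs + sql enough? It can be done that way, but it is still some distance from production use, so what does it take to make it actually usable?

There are plenty of nod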

- java.lang.Math

liuhaibo_ljf

java, Math, lang

System.out.println(Math.PI);

System.out.println(Math.abs(1.2));

System.out.println(Math.abs(1.2));

System.out.println(Math.abs(1));

System.out.println(Math.abs(111111111));

System.out.println(Mat

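- Time synchronization on Linux

nonobaba

ntp

Today, while setting up an HBase cluster on Linux, I found that hmaster started successfully, but entering the shell with the hbase command reported an error: PleaseHoldException: Master is initializing. The logs roughly said that the master and slave clocks were out of sync. With no alternative, I first synced them by hand, but with about 10 machines deployed in total and manual syncing leaving a fairly large drift, I decided to look online for a ready-made solu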

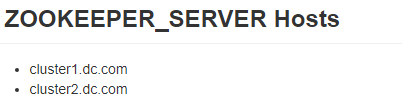

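- Cluster deployment of ZooKeeper 3.4.6

roadrunners

zookeeper, cluster deployment

ZooKeeper is an Apache open source project that is widely used in distributed services. It mainly addresses data management problems that distributed applications often run into, such as unified naming, state synchronization, cluster management, configuration file management, synchronization locks, and queues. This article covers deploying ZooKeeper in a cluster.

1. Preparation

We prepare 3 machines for the ZooKeeper cluster and create the directories ZooKeeper needs on each of them.

Data storage directory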

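- Reading large files efficiently in Java

tomcat_oracle

java

The standard way to read a file's lines is to read them into memory. Guava and Apache Commons IO both provide quick methods for reading a file's lines, as shown here: Files.readLines(new File(path), Charsets.UTF_8); FileUtils.readLines(new File(path)); The problem with this approach is that all of the file's lines are held in memory, so when the file is large enough it quickly leads to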

- Converting the XML returned by the WeChat Pay API into a Map

xu3508620

xml, map, WeChat, api

An example:

<xml>

<return_code><![CDATA[SUCCESS]]></return_code>

<return_msg><![CDATA[OK]]></return_msg>

<appid><