Distributed Deep Learning Technologies: AllReduce

(If you only want the core ideas of AllReduce and Ring-AllReduce, reading the passages marked in red is enough.)

Hello, I am Yuichiro Ueno. I participated in a summer internship program at PFN in 2017, and I currently work as a part-time engineer. I am an undergraduate student at Tokyo Institute of Technology, and my research topic is High-Performance, Parallel and Distributed Computing.

In this blog post, I will describe our recent study on algorithms for AllReduce, a communication operation used for distributed deep learning.


What is Distributed Deep Learning?

Currently, one of the significant challenges of deep learning is that it is a very time-consuming process. Designing a deep learning model requires design space exploration of a large number of hyper-parameters and processing big data. Thus, accelerating the training process is critical for our research and development. Distributed deep learning is one of the essential technologies in reducing training time.

We have deployed a private supercomputer “MN-1” to accelerate our research and development process. It is equipped with 1024 NVIDIA® Tesla® P100 GPUs and Mellanox® InfiniBand FDR interconnect and is the most powerful supercomputer in the industry segment in Japan. By leveraging MN-1, we completed training a ResNet-50 model on the ImageNet dataset in 15 minutes.

Communication among GPUs is one of the many challenges when training distributed deep learning models in a large-scale environment. The latency of exchanging gradients over all GPUs is a severe bottleneck in data-parallel synchronized distributed deep learning.


For an explanation of data-parallel synchronous distributed deep learning, see this article.

How is the communication performed in distributed deep learning? Also, why is the communication so time-consuming?

The Importance of AllReduce in Distributed Deep Learning

In synchronized data-parallel distributed deep learning, the major computation steps are:

- Compute the gradient of the loss function using a minibatch on each GPU.
- Compute the mean of the gradients by inter-GPU communication.
- Update the model.

To compute the mean of the gradients across GPUs in the second step above, we use a collective communication operation called “AllReduce.”
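The three steps above can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions: the per-GPU gradients are given as ordinary lists, the update is plain SGD, and the names `mean_gradients`, `sgd_step`, and `lr` are hypothetical rather than taken from ChainerMN or any other library.

```python
def mean_gradients(per_gpu_grads):
    """Step 2: element-wise mean of the gradient vectors held by each worker."""
    num_workers = len(per_gpu_grads)
    return [sum(column) / num_workers for column in zip(*per_gpu_grads)]


def sgd_step(params, per_gpu_grads, lr=0.1):
    """Step 3: apply the averaged gradient (plain SGD is an assumption here)."""
    avg = mean_gradients(per_gpu_grads)
    return [w - lr * g for w, g in zip(params, avg)]


# Step 1 is assumed to have produced these gradients, one list per GPU.
grads = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
new_params = sgd_step([0.0, 0.0], grads, lr=0.5)
```

In a real cluster the averaging in `mean_gradients` is the part that requires communication, which is where AllReduce comes in.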

As of now, one of the fastest collective communication libraries for GPU clusters is NVIDIA Collective Communication Library: NCCL[3]. It achieves far better communication performance than MPI, which is the de-facto standard communication library in the HPC community. NCCL is indispensable for achieving high performance in distributed deep learning using ChainerMN. Without it, the ImageNet 15-min feat could not have been achieved[2].

Our researchers and engineers were curious about NCCL’s excellent performance. Since NCCL is not an open source library, we tried to understand the high performance of the library by developing and optimizing an experimental AllReduce library.

Algorithms of AllReduce

First, let’s take a look at the AllReduce algorithms.

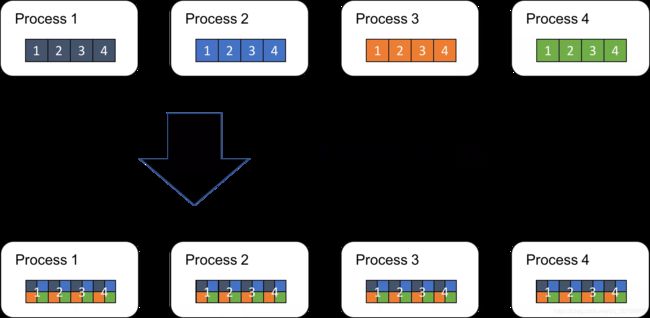

AllReduce is an operation that reduces the target arrays in all processes to a single array and returns the resultant array to all processes.

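(Put differently, “All” refers to the target arrays in all processes, and “Reduce” to combining them into a single array that is then returned to every process. For example, the gradient arrays held on all GPUs are combined by a reduction into one array, and that array is returned to every GPU.)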

Now, let $P$ be the total number of processes. Each process has an array of length $N$ called $A_p$. The $i$-th element of the array of process $p$ ($1 \le p \le P$) is $A_{p,i}$.

The resulting array B is to be:

$$B_i = A_{1,i} \;\mathrm{Op}\; A_{2,i} \;\mathrm{Op}\; \dots \;\mathrm{Op}\; A_{P,i}$$

Here, Op is a binary operator. SUM, MAX, and MIN are frequently used. In distributed deep learning, the SUM operation is used to compute the mean of gradients. In the rest of this blog post, we assume that the reduction operation is SUM. Figure 1 illustrates how the AllReduce operation works by using an example of P=4 and N=4.

Fig.1 AllReduce Operation
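As a reference for the definition above, here is a minimal single-machine sketch that computes what every process should hold after AllReduce. It involves no communication at all; `allreduce_reference` is a hypothetical helper name, `arrays[p][i]` plays the role of $A_{p,i}$ (with 0-based indices instead of the 1-based ones in the text), and the sample values are made up for a $P=4$, $N=4$ case.

```python
from functools import reduce
import operator


def allreduce_reference(arrays, op=operator.add):
    """Return one copy of the reduced array B per process."""
    # Column i collects A_{1,i}, ..., A_{P,i}; reduce folds them with Op.
    result = [reduce(op, column) for column in zip(*arrays)]
    return [list(result) for _ in arrays]


arrays = [
    [1, 2, 3, 4],
    [10, 20, 30, 40],
    [100, 200, 300, 400],
    [1000, 2000, 3000, 4000],
]
sums = allreduce_reference(arrays)           # SUM, as assumed in this post
maxima = allreduce_reference(arrays, max)    # MAX works the same way
```

Any distributed AllReduce algorithm, including the ones discussed next, should produce the same per-process result as this reference.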

There are several algorithms to implement the operation. For example, a straightforward one is to select one process as a master, gather all arrays into the master, perform reduction operations locally in the master, and then distribute the resulting array to the rest of the processes. (In the deep learning setting, each process corresponds to a GPU, and each array element could be, for example, the gradient of one parameter.)

Although this algorithm is simple and easy to implement, it is not scalable. The master process is a performance bottleneck because its communication and reduction costs increase in proportion to the number of total processes.
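To make the bottleneck concrete, the following sketch simulates the master-based approach on one machine and counts how many array elements pass through the master. The function name `master_allreduce` and its return format are assumptions made for this illustration, and the reduction is SUM.

```python
def master_allreduce(arrays, master=0):
    """Gather to one master, reduce there, then broadcast the result.

    Returns (per-process results, elements received by the master,
    elements sent by the master).
    """
    num_procs = len(arrays)
    total = list(arrays[master])
    received = 0
    for p in range(num_procs):
        if p == master:
            continue
        received += len(arrays[p])  # the master receives one whole array
        total = [a + b for a, b in zip(total, arrays[p])]
    sent = (num_procs - 1) * len(total)  # result goes to every other process
    results = [list(total) for _ in range(num_procs)]
    return results, received, sent


results, received, sent = master_allreduce([[1, 2, 3, 4]] * 4)
```

With four processes and four elements each, the master receives 12 elements and sends 12, and both counts grow linearly as more processes join.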


Faster and more scalable algorithms have been proposed. They eliminate the bottleneck by carefully distributing the computation and communication over the participant processes.

Such algorithms include Ring-AllReduce and Rabenseifner’s algorithm[4].

We will focus on the Ring-AllReduce algorithm in this blog post. This algorithm is also employed by NCCL[5] and baidu-allreduce[6].

Ring-AllReduce

Fig.2 Example of a process ring

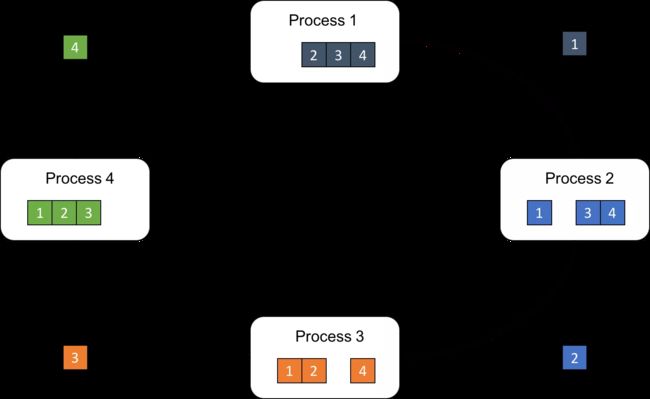

First, each process divides its own array into P subarrays, which we refer to as “chunks”. Let chunk[p] be the p-th chunk.

Next, let us focus on process p. The process sends chunk[p] to the next process, while it simultaneously receives chunk[p-1] from the previous process (Fig.3). For example, in Fig.3, process 2 sends chunk 2 to process 3 and receives chunk 1 from process 1.

Fig.3 Each process sends its chunk[p] to the next process [p+1]

Then, process p applies the reduction operation to the received chunk[p-1] and its own chunk[p-1], and sends the reduced chunk to the next process p+1 (Fig.4). For example, in Fig.4, process 2 reduces (e.g., sums) the chunk 1 it received from process 1 with its own chunk 1 and then sends the result to process 3.

Fig.4 Each process sends a reduced chunk to the next process

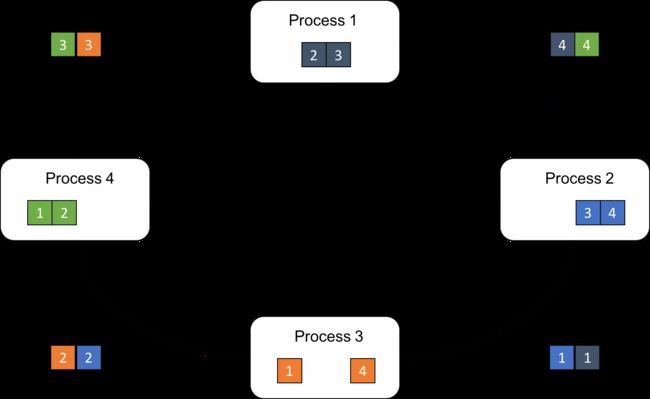

By repeating the receive-reduce-send steps P-1 times, each process obtains a different portion of the resulting array (Fig.5). (In this example, which assumes four parameters in total, each portion is the reduced value of one parameter.)

Fig.5 After P-1 steps, each process has a reduced subarray.

In other words, each process adds its local chunk to a received chunk and sends it to the next process. Put differently, every chunk travels around the ring and accumulates the corresponding chunk of each process it visits. After visiting all processes once, it becomes a portion of the final result array, and the last-visited process holds the chunk.

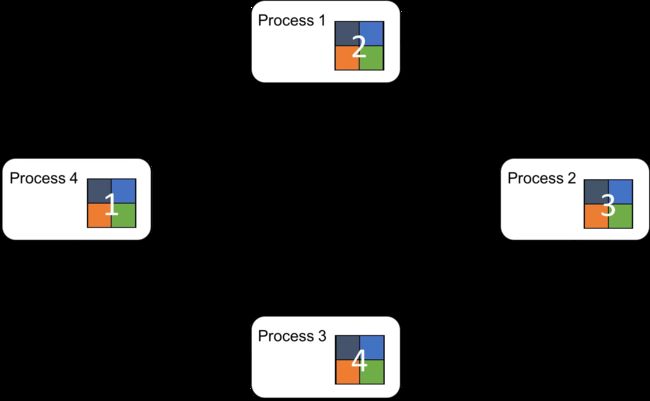

Finally, all processes can obtain the complete array by sharing the distributed partial results among them. This is achieved by doing the circulating step again without reduction operations, i.e., merely overwriting the received chunk to the corresponding local chunk in each process. The AllReduce operation completes when all processes obtain all portions of the final array.
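The two phases described above can be simulated on a single machine as follows. This is a sketch for building intuition, not the implementation discussed in this post: processes are entries of a Python list, "sending" is a list copy, indices are 0-based (the text uses 1-based), the reduction is SUM, and the helper names `chunk_bounds`, `ring_allreduce`, and `exchange` are hypothetical.

```python
def chunk_bounds(length, num_chunks):
    """Offsets that split `length` elements into `num_chunks` near-equal chunks."""
    return [(length * k) // num_chunks for k in range(num_chunks + 1)]


def ring_allreduce(arrays):
    """Simulate Ring-AllReduce (SUM) over equal-length per-process arrays.

    Returns (per-process results, number of elements each process sent).
    """
    num_procs = len(arrays)
    data = [list(a) for a in arrays]  # each process's private buffer
    bounds = chunk_bounds(len(data[0]), num_procs)
    sent = [0] * num_procs

    def exchange(chunk_to_send, combine):
        # Collect all messages first so that every process sends "simultaneously".
        messages = []
        for p in range(num_procs):
            c = chunk_to_send(p)
            lo, hi = bounds[c], bounds[c + 1]
            messages.append((c, data[p][lo:hi]))
            sent[p] += hi - lo
        for p in range(num_procs):
            c, payload = messages[(p - 1) % num_procs]  # from the previous process
            lo, hi = bounds[c], bounds[c + 1]
            data[p][lo:hi] = combine(data[p][lo:hi], payload)

    # Phase 1: receive-reduce-send, P-1 steps.
    for step in range(num_procs - 1):
        exchange(lambda p: (p - step) % num_procs,
                 lambda own, recv: [a + b for a, b in zip(own, recv)])
    # Phase 2: circulate the reduced chunks again, overwriting instead of reducing.
    for step in range(num_procs - 1):
        exchange(lambda p: (p + 1 - step) % num_procs,
                 lambda own, recv: list(recv))
    return data, sent


arrays = [[1, 2, 3, 4], [10, 20, 30, 40], [100, 200, 300, 400], [1000, 2000, 3000, 4000]]
results, sent = ring_allreduce(arrays)
```

After phase 1, process p (0-based) holds the fully reduced chunk (p+1) mod P, which is why phase 2 starts by sending that chunk. In this example every process ends with the same summed array and has sent 6 elements in total.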



Let’s compare the amount of communication of Ring-AllReduce to that of the simple algorithm we mentioned above.

In the simple algorithm, the master process receives all the arrays from all other processes, which means the total amount of received data is $(P-1) \times N$. After the reduction operation, it sends the arrays back to all the processes, which is again $(P-1) \times N$ data. Thus, the amount of communication of the master process is proportional to $P$.

In the Ring-AllReduce algorithm, we can calculate the amount of communication in each process in the following way. In the earlier half of the algorithm, each process sends an array of size $N/P$, $P-1$ times. Next, each process again sends an array of the same size $P-1$ times. The total amount of data each process sends throughout the algorithm is $2N(P-1)/P$, which is practically independent of $P$.
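The two formulas can be compared numerically with a short script. The function names are hypothetical, the counts are in array elements rather than bytes, and the ring formula is evaluated for sizes where P divides N evenly.

```python
def master_traffic(num_procs, length):
    """Elements the master receives plus sends in the simple algorithm."""
    return 2 * (num_procs - 1) * length


def ring_traffic(num_procs, length):
    """Elements each process sends in Ring-AllReduce: 2N(P-1)/P."""
    return 2 * length * (num_procs - 1) // num_procs


for num_procs in (2, 8, 64):
    print(num_procs, master_traffic(num_procs, 1024), ring_traffic(num_procs, 1024))
```

For N = 1024, the master's traffic grows from 2048 to 129024 elements as P goes from 2 to 64, while the per-process ring traffic only moves from 1024 to 2016 and never exceeds 2N = 2048.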

Thus, the Ring-AllReduce algorithm is more efficient than the simple algorithm because it eliminates the bottleneck process by distributing computation and communication evenly over all participant processes. Many AllReduce implementations adopt Ring-AllReduce, and it is suitable for distributed deep learning workloads as well.

Implementation and Optimization

The Ring-AllReduce algorithm is simple to implement if basic send and receive routines are given. baidu-allreduce[6] is built on top of MPI using MPI_Send and MPI_Recv.

However, we tried to do further optimizations by using InfiniBand Verbs API instead of MPI. To fully utilize hardware resources, the algorithm has multiple stages such as memory registration (pinning), cuda-memcpy, send, reduction, receive, and memory deregistration, and they are processed in a software pipeline. Here, “registration” and “deregistration” are pre- and post-processing stages for DMA data transfer. Such low-level operations are abstracted out in MPI send/receive routines, and we are not able to split them into pipeline stages. To increase the granularity of the communication and computation, we further divide chunks into smaller sub-chunks. Also, we introduce a memory pool to hide memory allocation overhead.
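One way to see why splitting chunks into sub-chunks helps a software pipeline is a simple timing model. The model below is an assumption made for illustration, not a measurement or a description of the prototype: it supposes that every stage takes the same time per chunk, that this time divides evenly across sub-chunks, and that stages overlap perfectly with no per-message overhead. The function name `pipelined_time` is hypothetical.

```python
def pipelined_time(num_stages, num_subchunks, chunk_stage_time):
    """Idealized time to push one chunk through a multi-stage pipeline.

    With K sub-chunks and S stages, the last sub-chunk leaves the pipeline
    after S + K - 1 slots, each lasting chunk_stage_time / K.
    """
    per_subchunk = chunk_stage_time / num_subchunks
    return (num_stages + num_subchunks - 1) * per_subchunk


# Six stages, as listed above: registration, cuda-memcpy, send,
# reduction, receive, deregistration.
unsplit = pipelined_time(6, 1, 8.0)   # no overlap between stages
split = pipelined_time(6, 8, 8.0)     # eight sub-chunks in flight
```

Under these assumptions the unsplit chunk takes 48.0 time units and the split one 13.0. Real stages have unequal costs and fixed overheads, so the actual gain would be smaller and the best sub-chunk size has to be found experimentally.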

Performance Evaluation

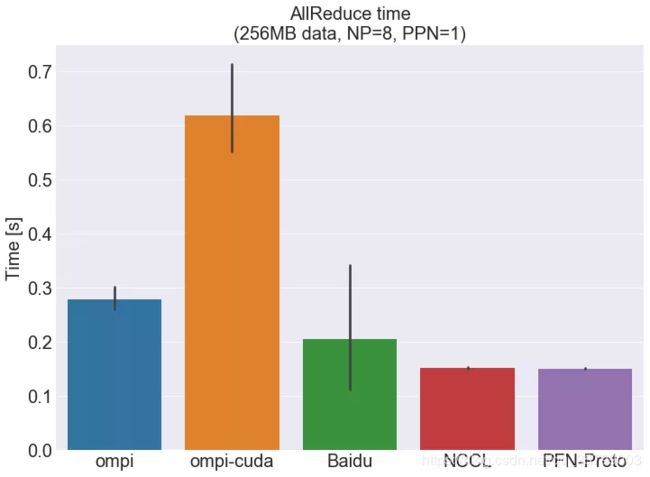

For performance evaluation, we compared our prototype (called PFN-Proto) to several AllReduce implementations shown in the Appendix.

Our prototype implementation currently focuses on inter-node communication; it is not optimized for intra-node communication using shared memory or GPU-to-GPU DMA data transfer. We evaluated the implementations in a one-process-per-node configuration. For Open MPI [7], we have not yet adopted the latest 3.x series because it has a minor issue related to GPUDirect, so we used version 2.1.3 instead.

We used our private supercomputer MN-1 for this experiment, as shown in the “Experimental environment” below. Eight processes were run, where one process ran on one computing node. The target data size is 256MB.

Fig.6 AllReduce Execution Time

Figure 6 shows the result of the evaluation. Each bar indicates the median of 10 runs. The error bar indicates confidence intervals. The details of each library are shown in the “software versions” below.

First, let’s look at the median values. Our experimental implementation, PFN-Proto, showed the fastest time, which is approximately 82%, 286%, 28%, and 1.6% better than ompi, ompi-cuda, Baidu, and NCCL, respectively. One thing worth mentioning, which is not in the graph, is that Baidu achieved the fastest single-run time, 0.097 s, among all five libraries.

Next, we focus on the variance of the performance. Maximum and minimum runtimes of PFN-Proto and NCCL are within +/- 3% and +/- 6% of their medians, respectively. In contrast, Baidu’s maximum value is 7.5x its median, because its first run takes a very long time. Its maximum runtime excluding the first run is +9.6% over the median, which is still larger than those of NCCL and PFN-Proto.

Our hypothesis is that the performance variances of MPI and MPI-based routines are attributed to MPI’s internal behavior related to memory operations. MPI’s programming interface hides memory allocation and registration operations for InfiniBand communication. Timings of such operations are not controllable from those AllReduce implementations.

Summary

We described the AllReduce communication pattern, which is very important for distributed deep learning. In particular, we implemented the Ring-AllReduce algorithm in our experimental communication library, and it achieved performance comparable to the NCCL library released by NVIDIA. The implementation efficiently utilizes available hardware resources through advanced optimizations such as using the InfiniBand Verbs API and software pipelining. We continue our research and development on accelerating distributed deep learning.

Caveats: our implementation is experimental, and we only demonstrated the performance on our in-house cluster. NCCL is a highly practical and usable library thanks to its performance suitability and availability on a wide range of IB-connected NVIDIA GPU clusters.

Acknowledgement

I would like to thank my mentors and the team for their kind support and feedback. Since my internship period last year, I have been given access to rich computation resources, and it has been a fantastic experience.

From Mentors:

This project started with a question: “how does NCCL achieve such high and stable performance?” It is an advanced and experimental topic, but Mr. Ueno achieved a remarkable result with his high motivation and technical skills.

PFN is looking for talents, not only in the deep learning/machine learning field but a full range of technical areas from hardware to software. Please visit https://www.preferred-networks.jp/en/jobs for more information.

For students who are interested in high-performance computing and other technologies, PFN offers international internship opportunities, as well as domestic programs for Japanese students. The application period has finished this year, but be ready for the next opportunity!

References

[1] Preferred Networks officially released ChainerMN version 1.0.0

[2] Akiba, et al., “Extremely Large Minibatch SGD: Training ResNet-50 on ImageNet in 15 Minutes”

[3] NVIDIA Collective Communications Library

[4] Rabenseifner, “Optimization of Collective Reduction Operations”, ICCS 2004

[5] Jeaugey, “Optimized Inter-GPU Collective Operations with NCCL”, GTC 2017

[6] baidu-allreduce

[7] Open MPI

[8] New ChainerMN functions for improved performance in cloud environments and performance testing results on AWS

[9] Tsuzuku, et al., “Variance-based Gradient Compression for Efficient Distributed Deep Learning”, In Proceedings of ICLR 2018 (Workshop Track)

Original post: Technologies behind Distributed Deep Learning: AllReduce