PyTorch for Beginners: Training an Image Classifier

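Contents

- 0 What we will do
- 1 Download the training and test sets
- 2 Define a convolutional neural network
- 3 Define the loss function and optimizer
- 4 Training
- 5 Testing
- Running on a GPU?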

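0 What we will do

- Use torchvision to download the CIFAR10 training and test sets
- Define a convolutional neural network
- Define a loss function and an optimizer
- Train the network on the training set
- Evaluate the network on the test set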

1 Download the training and test sets

With ``torchvision``, loading CIFAR10 takes only a few lines.

import torch
import torchvision
import torchvision.transforms as transforms

########################################################################
# torchvision datasets return PIL images; ToTensor() converts them to
# float Tensors in the range [0, 1].
# We then normalize them to the range [-1, 1].

transform = transforms.Compose(
    [transforms.ToTensor(),
     # First tuple: per-channel mean; second tuple: per-channel standard
     # deviation (not variance). (x - 0.5) / 0.5 maps [0, 1] to [-1, 1].
     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

trainset = torchvision.datasets.CIFAR10(root='./data', train=True,
                                        download=True, transform=transform)
# DataLoader groups the samples into batches of batch_size Tensors.
# shuffle=True reshuffles the data every epoch; it is normally used for training data.
# num_workers is the number of worker subprocesses used for loading;
# 0 means the data is loaded in the main process.
trainloader = torch.utils.data.DataLoader(trainset, batch_size=4,
                                          shuffle=True, num_workers=0)

testset = torchvision.datasets.CIFAR10(root='./data', train=False,
                                       download=True, transform=transform)
testloader = torch.utils.data.DataLoader(testset, batch_size=4,
                                         shuffle=False, num_workers=0)

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

########################################################################
# Let us show some of the training images, for fun.

import matplotlib.pyplot as plt
import numpy as np

# function to show an image
def imshow(img):
    img = img / 2 + 0.5     # unnormalize: maps [-1, 1] back to [0, 1]
    npimg = img.numpy()
    # matplotlib expects (height, width, channels); Tensors are (channels, height, width)
    plt.imshow(np.transpose(npimg, (1, 2, 0)))
    plt.show()

# get some random training images
dataiter = iter(trainloader)
# the built-in next() works across PyTorch versions, unlike dataiter.next()
images, labels = next(dataiter)

# show images
imshow(torchvision.utils.make_grid(images))
# print labels
print(' '.join('%5s' % classes[labels[j]] for j in range(4)))

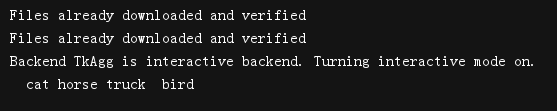
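The normalization and the "unnormalize" step in `imshow` are inverse mappings of each other. A minimal sketch of the arithmetic on single pixel values, in plain Python rather than on Tensors; the helper names `normalize` and `unnormalize` are made up for illustration and are not part of torchvision:

```python
# Per-channel statistics taken from the transforms.Normalize(...) call above.
MEAN = 0.5
STD = 0.5


def normalize(x):
    """The arithmetic Normalize applies to one value: (x - mean) / std."""
    return (x - MEAN) / STD


def unnormalize(y):
    """Inverse mapping, as in imshow(): y * std + mean, i.e. y / 2 + 0.5."""
    return y * STD + MEAN


# Walk a few pixel intensities from [0, 1] through both mappings.
for pixel in (0.0, 0.25, 0.5, 1.0):
    y = normalize(pixel)
    print(pixel, '->', y, '->', unnormalize(y))
```

A pixel value of 0 maps to -1 and a value of 1 maps to 1, which is why the normalized range is [-1, 1].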

2 Define a convolutional neural network

Code:

########################################################################
# 2. Define a Convolutional Neural Network
#
# Copy the neural network from the Neural Networks section before and modify it to
# take 3-channel images (instead of 1-channel images as it was defined).

import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(3, 6, 5)        # 3 input channels, 6 output channels, 5x5 kernel
        self.pool = nn.MaxPool2d(2, 2)         # 2x2 max pooling with stride 2
        self.conv2 = nn.Conv2d(6, 16, 5)
        self.fc1 = nn.Linear(16 * 5 * 5, 120)  # 16 channels of 5x5 feature maps
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)           # 10 output classes

    def forward(self, x):
        x = self.pool(F.relu(self.conv1(x)))
        x = self.pool(F.relu(self.conv2(x)))
        x = x.view(-1, 16 * 5 * 5)             # flatten to (batch_size, 400)
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)
        return x


net = Net()
print(net)

# Print the network parameters to inspect their shapes
params = list(net.parameters())
print(len(params))
for i in range(len(params)):
    print(i, ' : ', params[i].size())
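The `16 * 5 * 5` input size of `fc1` follows from the 32x32 size of CIFAR10 images and the layer settings above. The sketch below re-derives it, and the number of learnable parameters, with plain arithmetic. The helper functions are hypothetical and written only for this illustration; they assume no padding and the kernel sizes and strides used in `Net`:

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output width/height of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


size = 32                                   # CIFAR10 images are 32x32
size = conv_out(size, kernel=5)             # conv1: 32 -> 28
size = conv_out(size, kernel=2, stride=2)   # pool:  28 -> 14
size = conv_out(size, kernel=5)             # conv2: 14 -> 10
size = conv_out(size, kernel=2, stride=2)   # pool:  10 -> 5
flat_features = 16 * size * size            # 16 channels of 5x5 maps


def conv_params(c_in, c_out, k):
    """Weights plus one bias per output channel."""
    return c_in * c_out * k * k + c_out


def linear_params(n_in, n_out):
    """Weights plus one bias per output unit."""
    return n_in * n_out + n_out


layer_params = {
    'conv1': conv_params(3, 6, 5),
    'conv2': conv_params(6, 16, 5),
    'fc1': linear_params(flat_features, 120),
    'fc2': linear_params(120, 84),
    'fc3': linear_params(84, 10),
}
total_params = sum(layer_params.values())

print(flat_features)
print(layer_params)
print(total_params)
```

Each of the five layers contributes one weight Tensor and one bias Tensor, so `len(params)` in the code above should be 10.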

3 Define the loss function and optimizer

import torch.optim as optim

criterion = nn.CrossEntropyLoss()  # use cross-entropy as the loss function
optimizer = optim.SGD(net.parameters(), lr=0.001, momentum=0.9)  # SGD (stochastic gradient descent) with momentum

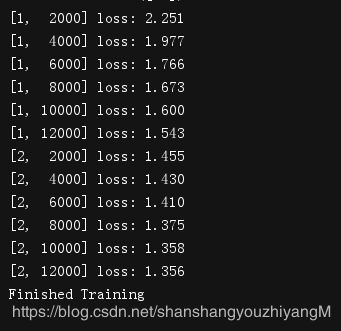
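To make these two objects less of a black box, here is a rough sketch of what they compute, in plain Python on scalars. It assumes the default settings: cross-entropy on raw scores for a single sample, and SGD with momentum but without dampening, weight decay, or Nesterov momentum. The function names are made up for this illustration.

```python
import math


def cross_entropy(logits, target):
    """Cross-entropy for one sample: -log(softmax(logits)[target])."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def sgd_momentum_step(param, grad, velocity, lr=0.001, momentum=0.9):
    """One scalar update: v = momentum * v + grad; param = param - lr * v."""
    velocity = momentum * velocity + grad
    param = param - lr * velocity
    return param, velocity


# An untrained network that scores all 10 classes equally has loss ln(10).
uniform_loss = cross_entropy([0.0] * 10, target=3)
print(round(uniform_loss, 3))

# Two updates with the same gradient: the second step is larger
# because the velocity accumulates.
p, v = 1.0, 0.0
p, v = sgd_momentum_step(p, grad=2.0, velocity=v)
print(p, v)
p, v = sgd_momentum_step(p, grad=2.0, velocity=v)
print(p, v)
```

The value ln(10) ≈ 2.303 is a useful reference point: a loss near it at the start of training means the network is still guessing among the 10 classes.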

4 Training

########################################################################
# 4. Train the network
#
# This is when things start to get interesting.
# We simply have to loop over our data iterator, and feed the inputs to the
# network and optimize.

for epoch in range(2):  # loop over the dataset multiple times

    running_loss = 0.0
    # enumerate() pairs each batch with its index, starting from 0
    for i, data in enumerate(trainloader, 0):
        # get the inputs
        inputs, labels = data

        # zero the parameter gradients
        optimizer.zero_grad()

        # forward + backward + optimize
        outputs = net(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()

        # print statistics
        running_loss += loss.item()
        if i % 2000 == 1999:    # print every 2000 mini-batches
            print('[%d, %5d] loss: %.3f' %
                  (epoch + 1, i + 1, running_loss / 2000))
            running_loss = 0.0

print('Finished Training')

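Notes:

epoch: one full pass over all training samples; the number of epochs is how many times the whole training set is seen

iter (iteration): one parameter update on a single batch; the number of iterations per epoch depends on batch_size and sample_num

batch_size: the number of images used in one iteration

iter * batch_size = sample_num, where iter is the number of iterations in one epoch (exact when sample_num is divisible by batch_size)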

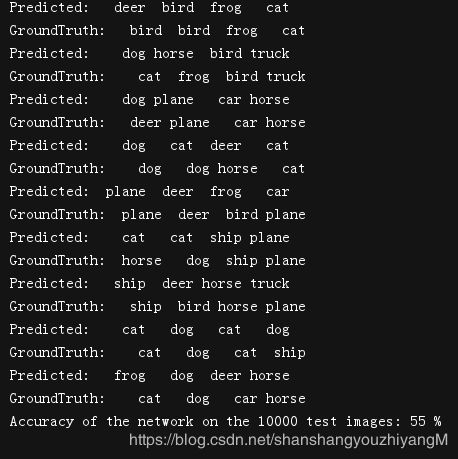
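Plugging in the numbers from this post makes the relationship concrete. A small worked example, assuming the 50000 CIFAR10 training images and the `batch_size=4`, 2 epochs, and "print every 2000 mini-batches" settings used above:

```python
import math

sample_num = 50000    # CIFAR10 training images
batch_size = 4
epochs = 2
print_every = 2000    # the training loop reports the loss every 2000 mini-batches

# ceil() covers the general case where the last batch is smaller than batch_size.
iters_per_epoch = math.ceil(sample_num / batch_size)
total_iters = epochs * iters_per_epoch
prints_per_epoch = iters_per_epoch // print_every
unreported_batches = iters_per_epoch % print_every

print(iters_per_epoch)     # iterations in one epoch
print(total_iters)         # parameter updates over the whole run
print(prints_per_epoch)    # loss lines printed per epoch
print(unreported_batches)  # trailing batches per epoch whose loss is never printed
```

Because `running_loss` is reset at the start of every epoch, the loss of the trailing batches in each epoch is accumulated but never reported.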

5 Testing

########################################################################
# 5. Test the network on the test data
#
# We have trained the network for 2 passes over the training dataset.
# But we need to check if the network has learnt anything at all.
#
# We will check this by predicting the class label that the neural network
# outputs, and checking it against the ground-truth. If the prediction is
# correct, we add the sample to the list of correct predictions.
#
# Okay, first step. Let us display an image from the test set to get familiar.

# dataiter = iter(testloader)
# images, labels = next(dataiter)
#
# # print images
# imshow(torchvision.utils.make_grid(images))
# print('GroundTruth: ', ' '.join('%5s' % classes[labels[j]] for j in range(4)))

########################################################################
# Okay, now let us see what the neural network thinks these examples above are:

# outputs = net(images)

########################################################################
# The outputs are energies for the 10 classes.
# The higher the energy for a class, the more the network
# thinks that the image is of the particular class.
# So, let's get the index of the highest energy:

# _, predicted = torch.max(outputs, 1)
#
# print('Predicted: ', ' '.join('%5s' % classes[predicted[j]]
#                               for j in range(4)))

########################################################################
# The results seem pretty good.
#
# Let us look at how the network performs on the whole dataset.

correct = 0
total = 0
with torch.no_grad():  # no gradients are needed for evaluation
    for data in testloader:
        images, labels = data
        outputs = net(images)
        _, predicted = torch.max(outputs, 1)  # index of the highest score for each image
        # print the predictions and the ground truth for this batch of 4 images
        print('Predicted: ', ' '.join('%5s' % classes[predicted[j]]
                                      for j in range(4)))
        print('GroundTruth: ', ' '.join('%5s' % classes[labels[j]] for j in range(4)))
        total += labels.size(0)
        correct += (predicted == labels).sum().item()

print('Accuracy of the network on the 10000 test images: %d %%' % (
    100 * correct / total))

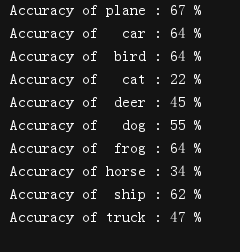
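The core of the loop above is "take the index of the largest score in each row, then count the matches". `torch.max(outputs, 1)` returns both the maximum values and their indices, and only the indices are used. A toy version in plain Python, with made-up scores for 4 images and 3 classes, shows the same bookkeeping:

```python
def argmax(scores):
    """Index of the largest score in one row (first one wins on ties)."""
    return max(range(len(scores)), key=lambda k: scores[k])


# Made-up network outputs: one row of class scores per image.
outputs = [
    [0.1, 2.0, -1.0],
    [1.5, 0.2, 0.3],
    [-0.5, 0.0, 0.9],
    [0.4, 0.3, 0.2],
]
labels = [1, 0, 1, 0]  # made-up ground truth

predicted = [argmax(row) for row in outputs]
correct = sum(int(p == t) for p, t in zip(predicted, labels))
total = len(labels)
accuracy = 100 * correct / total

print(predicted)
print('Accuracy: %d %%' % accuracy)
```

Only the third image is misclassified here, so 3 of the 4 predictions match the labels.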

The following prints the accuracy for each class:

########################################################################
# That looks waaay better than chance, which is 10% accuracy (randomly picking
# a class out of 10 classes).
# Seems like the network learnt something.
#
# Hmmm, what are the classes that performed well, and the classes that did
# not perform well:

class_correct = list(0. for i in range(10))
class_total = list(0. for i in range(10))
with torch.no_grad():
    for data in testloader:
        images, labels = data
        outputs = net(images)
        _, predicted = torch.max(outputs, 1)
        c = (predicted == labels).squeeze()
        for i in range(4):  # 4 is the batch size
            label = labels[i].item()  # class index as a plain Python int
            class_correct[label] += c[i].item()
            class_total[label] += 1

for i in range(10):
    print('Accuracy of %5s : %2d %%' % (
        classes[i], 100 * class_correct[i] / class_total[i]))
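The inner loop above assumes that every batch holds exactly 4 images and that every class occurs at least once. The sketch below shows the same tally on plain Python lists without those assumptions. `per_class_accuracy` is a hypothetical helper written for this illustration, and the predictions and labels are made up.

```python
def per_class_accuracy(predicted, labels, num_classes):
    """Return the accuracy in percent for each class, or None if the class never occurs."""
    correct = [0] * num_classes
    total = [0] * num_classes
    for p, t in zip(predicted, labels):  # works for any number of samples
        total[t] += 1
        correct[t] += int(p == t)
    return [100 * c / n if n else None for c, n in zip(correct, total)]


predicted = [0, 1, 1, 2, 0, 1]
labels = [0, 1, 2, 2, 0, 0]
acc = per_class_accuracy(predicted, labels, num_classes=4)

for class_index, value in enumerate(acc):
    if value is None:
        print('class %d: no samples' % class_index)
    else:
        print('class %d: %2d %%' % (class_index, value))
```

Iterating with `zip` over the predictions and labels of a batch also handles a final batch that is smaller than `batch_size`.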

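Running on a GPU?

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# If a CUDA device is available this prints cuda:0, otherwise cpu:
print(device)

# Move the network's parameters and buffers to the device
net.to(device)

# The inputs and labels of every batch must be moved as well, so this line
# belongs inside the training (and test) loop, right after `inputs, labels = data`:
inputs, labels = inputs.to(device), labels.to(device)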
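To show where the `.to(device)` calls sit in a training loop, here is a minimal, self-contained sketch. It is not the network or data from this post: `model` is a hypothetical single-layer stand-in for `Net`, and `batches` is random data standing in for `trainloader`, so the snippet runs without downloading CIFAR10 and falls back to the CPU when no GPU is present.

```python
import torch
import torch.nn as nn
import torch.optim as optim

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Hypothetical stand-in for Net: one linear layer on flattened 3x32x32 images.
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 32 * 32, 10)).to(device)
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.001, momentum=0.9)

# Random batches standing in for trainloader (4 images and 4 labels each).
torch.manual_seed(0)
batches = [(torch.randn(4, 3, 32, 32), torch.randint(0, 10, (4,)))
           for _ in range(3)]

losses = []
for inputs, labels in batches:
    # Move every batch to the same device as the model before the forward pass.
    inputs, labels = inputs.to(device), labels.to(device)

    optimizer.zero_grad()
    outputs = model(inputs)
    loss = criterion(outputs, labels)
    loss.backward()
    optimizer.step()
    losses.append(loss.item())

print(device, losses)
```

The same per-batch move is needed in the test loops, otherwise the model and its inputs end up on different devices.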