[YOLACT] Training on Your Own Dataset

Environment

Windows 10, CUDA 10, PyTorch 1.2, Python 3.7

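Data Format

My labels were created with labelme, but to make the code run I reorganized all of my data into the layout described below.

Code for converting labelme annotations into usable images:

Link: https://pan.baidu.com/s/1gVQ1tQZ7Sdve4AlhKlIz5A

Extraction code: xrj5

The train folder has three subfolders: image, mask, and yaml.

image holds the three-channel RGB images: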

image1.png

image2.png

image3.png

...

mask holds npy files with the same names as the files in image; only the extension differs:

image1.npy

image2.npy

image3.npy

...

yaml holds yaml files with the same names as the files in image; only the extension differs:

image1.yaml

image2.yaml

image3.yaml

...
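Since every image needs a mask and a yaml file with the same base name, it can help to check the folders before training. The sketch below is one possible way to do that, assuming the image/mask/yaml layout described above; the helper name `find_unmatched` and the path in the usage note are hypothetical and not part of the original code.

```python
import os

def find_unmatched(root):
    """Return base names that have an image but no mask or no yaml file.

    Assumes root/image/*.png, root/mask/*.npy and root/yaml/*.yaml
    (hypothetical helper, not part of YOLACT).
    """
    def stems(sub, ext):
        folder = os.path.join(root, sub)
        return {os.path.splitext(f)[0] for f in os.listdir(folder) if f.endswith(ext)}

    images = stems("image", ".png")
    masks = stems("mask", ".npy")
    yamls = stems("yaml", ".yaml")
    return {
        "missing_mask": sorted(images - masks),
        "missing_yaml": sorted(images - yamls),
    }
```

Calling `find_unmatched("path/to/train")` returns two lists; both should be empty for a consistent dataset.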

The following looks at image1.png, image1.npy, and image1.yaml from the image, mask, and yaml folders in detail.

image1.png is shown below; the image size is 513*604:

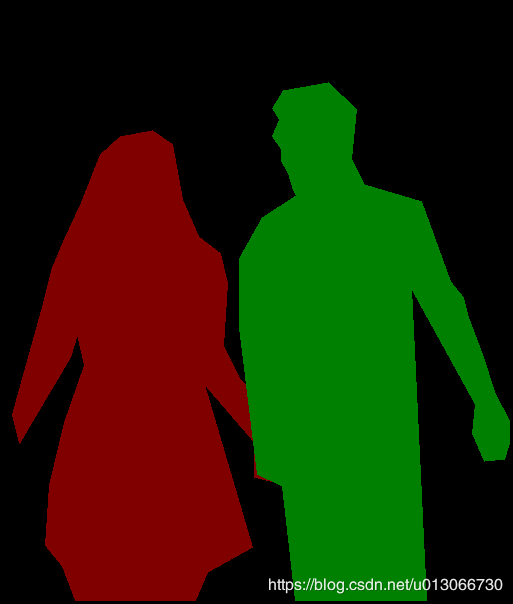

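image1.npy stores the mask. I had planned to save it as a PNG, but an image could contain more than 255 objects, in which case an 8-bit grayscale image could not hold all the IDs, so I save an npy file directly instead.

The picture above is not what the npy file actually stores; it is only there to make the mask easy to see. The npy file stores the instance segmentation labels of the two people. The red person1 is the number 1 in the label, so the whole red region is filled with 1; the green person2 is the number 2, filling the green region. The label is defined to be single-channel, so the npy file is a 513*604 matrix that holds 0 for the background, 1 for person1, and 2 for person2.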
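To make the layout concrete, here is a small sketch that builds such a single-channel instance-ID matrix, saves it as npy, and splits it back into one binary mask per instance. The toy 4*6 shape, the file name `toy_mask.npy` (written to a temporary folder), and the helper name `split_instances` are assumptions for illustration; `uint16` is chosen only so that IDs above 255 would also fit.

```python
import os
import tempfile
import numpy as np

def split_instances(id_mask):
    """Turn an (H, W) instance-ID matrix into an (N, H, W) stack of binary masks.

    ID 0 is background; instance k (1-based) ends up in slice k - 1.
    """
    num_obj = int(id_mask.max())
    masks = np.zeros((num_obj,) + id_mask.shape, dtype=np.uint8)
    for k in range(num_obj):
        masks[k][id_mask == k + 1] = 1
    return masks

# Toy example: background is 0, person1 is 1, person2 is 2.
id_mask = np.zeros((4, 6), dtype=np.uint16)
id_mask[0:2, 0:3] = 1
id_mask[2:4, 3:6] = 2

path = os.path.join(tempfile.mkdtemp(), "toy_mask.npy")
np.save(path, id_mask)

loaded = np.load(path)
masks = split_instances(loaded)
print(masks.shape)  # (2, 4, 6)
```

The per-instance stack is the form the dataset class needs later, since each instance gets its own bounding box and mask.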

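The content of image1.yaml is:

label_names:
- _background_
- person1
- person2

I originally wrote the following rule, which I have since relaxed (see the next paragraph): the order in this yaml file must match the numbers in image1.npy. Whatever class the number 1 stands for in the npy file must be the first class listed, and so on. Do not get the order wrong, or all of the training that follows will be wrong.

After testing, I found that the correspondence does not have to be exact, because the code does not take coordinates as input. You only need to know that person1 in image1.yaml corresponds to a region of the image that is a person (it may be the region drawn as person2 or as person1; either is fine as long as there is a match). If this is unclear, simply follow the stricter rule in the previous paragraph.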
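The dataset code further down asserts that the number of non-background labels equals the largest ID in the mask. A minimal pre-check along those lines is sketched here; `labels_match_mask` is a hypothetical helper, and the label list is passed in directly rather than read from a yaml file.

```python
import numpy as np

def labels_match_mask(label_names, id_mask):
    """Check that the label count agrees with the largest instance ID.

    `label_names` is the list from the yaml file with `_background_` first;
    `id_mask` is the (H, W) instance-ID matrix from the npy file.
    """
    num_labels = len(label_names) - 1  # drop _background_
    return num_labels == int(np.max(id_mask))

id_mask = np.array([[0, 1, 1],
                    [2, 2, 0]])
print(labels_match_mask(["_background_", "person1", "person2"], id_mask))  # True
print(labels_match_mask(["_background_", "person1"], id_mask))             # False
```

Running a check like this over every file pair before training surfaces mismatches early instead of in the middle of an epoch.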

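Adding and Modifying Files

To leave the COCO training files untouched, I created a new training script train_cell.py and a new evaluation script eval_cell.py, added cell.py under the data folder, and modified config.py and __init__.py in the data folder.

To run this on your own machine, remember to change the paths in config.py, at around line 174.

Create train_cell.py in the yolact-master folder (lines marked with a long run of ##################### are the ones I changed):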

parser = argparse.ArgumentParser(
    description='Yolact Training Script')
parser.add_argument('--batch_size', default=16, type=int,
                    help='Batch size for training') #####################
parser.add_argument('--resume', default=None, type=str,
                    help='Checkpoint state_dict file to resume training from. If this is "interrupt"'\
                         ', the model will resume training from the interrupt file.') #####################
parser.add_argument('--start_iter', default=-1, type=int,
                    help='Resume training at this iter. If this is -1, the iteration will be '\
                         'determined from the file name.')
parser.add_argument('--num_workers', default=4, type=int,
                    help='Number of workers used in dataloading') #####################
parser.add_argument('--cuda', default=True, type=str2bool,
                    help='Use CUDA to train model')
parser.add_argument('--lr', '--learning_rate', default=None, type=float,
                    help='Initial learning rate. Leave as None to read this from the config.')
parser.add_argument('--momentum', default=None, type=float,
                    help='Momentum for SGD. Leave as None to read this from the config.')
parser.add_argument('--decay', '--weight_decay', default=None, type=float,
                    help='Weight decay for SGD. Leave as None to read this from the config.')
parser.add_argument('--gamma', default=None, type=float,
                    help='For each lr step, what to multiply the lr by. Leave as None to read this from the config.')
parser.add_argument('--save_folder', default='weights/',
                    help='Directory for saving checkpoint models.')
parser.add_argument('--log_folder', default='logs/',
                    help='Directory for saving logs.')
parser.add_argument('--config', default="yolact_base_cell_config", #####################
                    help='The config object to use.')
parser.add_argument('--save_interval', default=50, type=int,
                    help='The number of iterations between saving the model.') #####################
parser.add_argument('--validation_size', default=50, type=int,
                    help='The number of images to use for validation.') #####################
parser.add_argument('--validation_epoch', default=2, type=int,
                    help='Output validation information every n iterations. If -1, do no validation.')
parser.add_argument('--keep_latest', dest='keep_latest', action='store_true',
                    help='Only keep the latest checkpoint instead of each one.')
parser.add_argument('--keep_latest_interval', default=100000, type=int,
                    help='When --keep_latest is on, don\'t delete the latest file at these intervals. This should be a multiple of save_interval or 0.')
parser.add_argument('--dataset', default=None, type=str,
                    help='If specified, override the dataset specified in the config with this one (example: coco2017_dataset).')
parser.add_argument('--no_log', dest='log', action='store_false',
                    help='Don\'t log per iteration information into log_folder.')
parser.add_argument('--log_gpu', dest='log_gpu', action='store_true',
                    help='Include GPU information in the logs. Nvidia-smi tends to be slow, so set this with caution.')
parser.add_argument('--no_interrupt', dest='interrupt', action='store_false',
                    help='Don\'t save an interrupt when KeyboardInterrupt is caught.')
parser.add_argument('--batch_alloc', default=None, type=str,
                    help='If using multiple GPUS, you can set this to be a comma separated list detailing which GPUs should get what local batch size (It should add up to your total batch size).')
parser.add_argument('--no_autoscale', dest='autoscale', action='store_false',
                    help='YOLACT will automatically scale the lr and the number of iterations depending on the batch size. Set this if you want to disable that.')

def train():
    if not os.path.exists(args.save_folder):
        os.mkdir(args.save_folder)

    dataset = CellDetection(image_path=cfg.dataset.train_images, #####################
                            mask_path=cfg.dataset.train_mask,
                            yaml_path=cfg.dataset.train_yaml,
                            transform=SSDAugmentation(MEANS))

    if args.validation_epoch > 0:
        setup_eval()
        val_dataset = CellDetection(image_path=cfg.dataset.valid_images, #####################
                                    mask_path=cfg.dataset.valid_mask,
                                    yaml_path=cfg.dataset.valid_yaml,
                                    transform=BaseTransform(MEANS))

    # Parallel wraps the underlying module, but when saving and loading we don't want that
    yolact_net = Yolact()
    net = yolact_net
    net.train()

    if args.log:
        log = Log(cfg.name, args.log_folder, dict(args._get_kwargs()),
                  overwrite=(args.resume is None), log_gpu_stats=args.log_gpu)

Create eval_cell.py in the yolact-master folder (lines marked with a very long run of ##################### are the ones I changed):
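The excerpts that follow switch the input/output separator of `--images` to `::`. The post does not say why, but presumably it is because Windows paths already contain a colon after the drive letter, so splitting on a single `:` cannot separate the two folders. A quick sketch using the default paths from the excerpt (the helper name `split_io` is made up for illustration):

```python
def split_io(arg, sep="::"):
    """Split an 'input<sep>output' argument into its two folders."""
    inp, out = arg.split(sep)
    return inp, out

arg = "F:/pic_complete_ai::E:/yolact-master/results"
print(split_io(arg))  # ('F:/pic_complete_ai', 'E:/yolact-master/results')

# With a single ':' separator the drive letters add extra pieces,
# so unpacking into exactly two names would raise ValueError.
print(len("F:/in:E:/out".split(":")))  # 4
```

On Linux or macOS paths without drive letters, the original single-colon format would not run into this problem.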

parser.add_argument('--images', default="F:/pic_complete_ai::E:/yolact-master/results", type=str,
                    help='An input folder of images and output folder to save detected images. Should be in the format input::output.') #####################
# parser.add_argument('--images', default=None, type=str,
#                     help='An input folder of images and output folder to save detected images. Should be in the format input->output.')

if args.image is not None:
    if ':' in args.image:
        inp, out = args.image.split(':')
        evalimage(net, inp, out)
    else:
        evalimage(net, args.image)
    return
elif args.images is not None:
    inp, out = args.images.split('::') #####################
    evalimages(net, inp, out)
    return
elif args.video is not None:
    if ':' in args.video:
        inp, out = args.video.split(':')
        evalvideo(net, inp, out)
    else:
        evalvideo(net, args.video)
    return

if args.shuffle:
    random.shuffle(dataset_indices)
elif not args.no_sort: #####################
    # Do a deterministic shuffle based on the image ids
    #
    # I do this because on python 3.5 dictionary key order is *random*, while in 3.6 it's
    # the order of insertion. That means on python 3.6, the images come in the order they are in
    # in the annotations file. For some reason, the first images in the annotations file are
    # the hardest. To combat this, I use a hard-coded hash function based on the image ids
    # to shuffle the indices we use. That way, no matter what python version or how pycocotools
    # handles the data, we get the same result every time.
    # hashed = [badhash(x) for x in dataset.ids]
    # dataset_indices.sort(key=lambda x: hashed[x])
    pass

Create cell.py in the yolact-master/data folder (this whole file is my own code):

import os
import os.path as osp
import sys
import torch
import torch.utils.data as data
import torch.nn.functional as F
import cv2
import numpy as np
from .config import cfg
from pycocotools import mask as maskUtils
import random
import yaml
from PIL import Image

# def get_label_map():
#     label_map = {"cell":1}
#     return label_map

def get_label_map():
    if cfg.dataset.label_map is None:
        return {x+1: x+1 for x in range(len(cfg.dataset.class_names))}
    else:
        return cfg.dataset.label_map

class CellDetection(data.Dataset):
    """Instance-segmentation dataset read from image, npy mask and yaml label folders.

    Args:
        image_path (string): Folder containing the .png images.
        mask_path (string): Folder containing the .npy instance-ID masks.
        yaml_path (string): Folder containing the .yaml label files.
        transform (callable, optional): A function/transform that augments the
                                        raw images, masks, boxes and labels.
        dataset_name (string): Name of the dataset.
    """

    def __init__(self, image_path, mask_path, yaml_path, transform=None,
                 dataset_name='Cell'):
        self.image_path = image_path
        self.ids = os.listdir(self.image_path)
        self.mask_path = mask_path
        self.yaml_path = yaml_path
        self.label_map = get_label_map()
        self.transform = transform
        self.name = dataset_name

    def __getitem__(self, index):
        """
        Args:
            index (int): Index
        Returns:
            tuple: Tuple (image, (target, masks, num_crowds)).
                   target is an array with one [x_min, y_min, x_max, y_max, class] row per instance.
        """
        im, gt, masks, h, w, num_crowds = self.pull_item(index)
        return im, (gt, masks, num_crowds)

    def __len__(self):
        return len(self.ids)

    def get_obj_index(self, mask):
        # The largest ID in the mask is the number of instances
        n = np.max(mask)
        return n

    '''
    Label format:
    label_names:
    - gland1
    - gland2
    - gland3
    '''
    def from_yaml_get_class(self, yaml_path):
        with open(yaml_path) as f:
            temp = yaml.load(f.read(), Loader=yaml.FullLoader)
            labels = temp['label_names']
            # Drop the first entry (_background_)
            del labels[0]
        return labels

    def get_bbox_from_mask(self, mask):
        coordis = np.where(mask)  # (rows, cols), so coordis[0] is y and coordis[1] is x
        height, width = mask.shape[0], mask.shape[1]
        # Box coordinates are normalized to [0, 1]
        x_min = np.min(coordis[1]) * 1. / width
        x_max = np.max(coordis[1]) * 1. / width
        y_min = np.min(coordis[0]) * 1. / height
        y_max = np.max(coordis[0]) * 1. / height
        return [x_min, y_min, x_max, y_max]

    # Rewritten draw_mask
    def get_mask_bbox(self, num_obj, mask, image):
        # `image` is the instance-ID matrix loaded with np.load, i.e. a NumPy array,
        # so it is indexed directly (the PIL-only getpixel() does not exist on it).
        bbox = []
        for index in range(num_obj):
            # Instance IDs start at 1; 0 is background
            mask[index][image == index + 1] = 1
            bbox.append(self.get_bbox_from_mask(mask[index, :, :]))
        return mask, np.array(bbox)

    def pull_item(self, index):
        """
        Args:
            index (int): Index
        Returns:
            tuple: Tuple (image, target, masks, height, width, num_crowds).
                   target is an array with one [x_min, y_min, x_max, y_max, class] row per instance.
                   This dataset has no crowd annotations, so num_crowds is 0.
        """
        image_name = self.ids[index]
        single_image_path = os.path.join(self.image_path, image_name)
        img = cv2.imread(single_image_path)
        height, width, _ = img.shape

        single_mask_path = os.path.join(self.mask_path, image_name.replace(".png", ".npy"))
        mask = np.load(single_mask_path)
        num_obj = self.get_obj_index(mask)

        single_yaml_path = os.path.join(self.yaml_path, image_name.replace(".png", ".yaml"))
        labels = self.from_yaml_get_class(single_yaml_path)
        assert num_obj == len(labels)

        mask_temple = np.zeros([num_obj, height, width], dtype=np.uint8)
        mask, bbox = self.get_mask_bbox(num_obj, mask_temple, mask)

        # # This part guards against overlapping labels: starting from the last mask layer,
        # # each earlier layer has the regions that overlap the current and all later layers removed
        # occlusion = np.logical_not(mask[-1, :, :]).astype(np.uint8)
        # for i in range(num_obj - 2, -1, -1):
        #     mask[i, :, :] = mask[i, :, :] * occlusion
        #     occlusion = np.logical_and(occlusion, np.logical_not(mask[i, :, :]))

        labels_form = []
        for i in range(len(labels)):
            if labels[i].find("cell") != -1:
                labels_form.append("cell")

        target = np.zeros((len(labels_form), 5))
        for i in range(len(labels_form)):
            target[i, :4] = bbox[i]
            target[i, 4] = self.label_map[labels_form[i]]

        img, mask, boxes, labels = self.transform(img, mask, target[:, :4],
                                                  {'num_crowds': 0, 'labels': target[:, 4]})
        num_crowds = labels['num_crowds']
        labels = labels['labels']
        target = np.hstack((boxes, np.expand_dims(labels, axis=1)))

        return torch.from_numpy(img).permute(2, 0, 1), target, mask, height, width, num_crowds

    def pull_image(self, index):
        pass

    def pull_anno(self, index):
        pass

    def __repr__(self):
        fmt_str = 'Dataset ' + self.__class__.__name__ + '\n'
        fmt_str += ' Number of datapoints: {}\n'.format(self.__len__())
        fmt_str += ' Root Location: {}\n'.format(self.image_path)
        tmp = ' Transforms (if any): '
        fmt_str += '{0}{1}\n'.format(tmp, self.transform.__repr__().replace('\n', '\n' + ' ' * len(tmp)))
        return fmt_str

Modify config.py in the yolact-master/data folder (lines marked with a long run of ################### are the ones I changed):

from backbone import ResNetBackbone, VGGBackbone, ResNetBackboneGN, DarkNetBackbone

from math import sqrt

import torch

# for making bounding boxes pretty

COLORS = ((244, 67, 54),

(233, 30, 99),

(156, 39, 176),

(103, 58, 183),

( 63, 81, 181),

( 33, 150, 243),

( 3, 169, 244),

( 0, 188, 212),

( 0, 150, 136),

( 76, 175, 80),

(139, 195, 74),

(205, 220, 57),

(255, 235, 59),

(255, 193, 7),

(255, 152, 0),

(255, 87, 34),

(121, 85, 72),

(158, 158, 158),

( 96, 125, 139))

# These are in BGR and are for ImageNet

MEANS = (103.94, 116.78, 123.68)

STD = (57.38, 57.12, 58.40)

COCO_CLASSES = ('person', 'bicycle', 'car', 'motorcycle', 'airplane', 'bus',

'train', 'truck', 'boat', 'traffic light', 'fire hydrant',

'stop sign', 'parking meter', 'bench', 'bird', 'cat', 'dog',

'horse', 'sheep', 'cow', 'elephant', 'bear', 'zebra', 'giraffe',

'backpack', 'umbrella', 'handbag', 'tie', 'suitcase', 'frisbee',

'skis', 'snowboard', 'sports ball', 'kite', 'baseball bat',

'baseball glove', 'skateboard', 'surfboard', 'tennis racket',

'bottle', 'wine glass', 'cup', 'fork', 'knife', 'spoon', 'bowl',

'banana', 'apple', 'sandwich', 'orange', 'broccoli', 'carrot',

'hot dog', 'pizza', 'donut', 'cake', 'chair', 'couch',

'potted plant', 'bed', 'dining table', 'toilet', 'tv', 'laptop',

'mouse', 'remote', 'keyboard', 'cell phone', 'microwave', 'oven',

'toaster', 'sink', 'refrigerator', 'book', 'clock', 'vase',

'scissors', 'teddy bear', 'hair drier', 'toothbrush')

COCO_LABEL_MAP = { 1: 1, 2: 2, 3: 3, 4: 4, 5: 5, 6: 6, 7: 7, 8: 8,

9: 9, 10: 10, 11: 11, 13: 12, 14: 13, 15: 14, 16: 15, 17: 16,

18: 17, 19: 18, 20: 19, 21: 20, 22: 21, 23: 22, 24: 23, 25: 24,

27: 25, 28: 26, 31: 27, 32: 28, 33: 29, 34: 30, 35: 31, 36: 32,

37: 33, 38: 34, 39: 35, 40: 36, 41: 37, 42: 38, 43: 39, 44: 40,

46: 41, 47: 42, 48: 43, 49: 44, 50: 45, 51: 46, 52: 47, 53: 48,

54: 49, 55: 50, 56: 51, 57: 52, 58: 53, 59: 54, 60: 55, 61: 56,

62: 57, 63: 58, 64: 59, 65: 60, 67: 61, 70: 62, 72: 63, 73: 64,

74: 65, 75: 66, 76: 67, 77: 68, 78: 69, 79: 70, 80: 71, 81: 72,

82: 73, 84: 74, 85: 75, 86: 76, 87: 77, 88: 78, 89: 79, 90: 80}

CELL_LABEL_MAP = {"cell":0,} #########################################################

CELL_CLASS_NAMES = ('cell',) # Never remove this comma; without it this is just the string 'cell', and len() returns its length, 4

# ----------------------- CONFIG CLASS ----------------------- #

class Config(object):

"""

Holds the configuration for anything you want it to.

To get the currently active config, call get_cfg().

To use, just do cfg.x instead of cfg['x'].

I made this because doing cfg['x'] all the time is dumb.

"""

def __init__(self, config_dict):

for key, val in config_dict.items():

self.__setattr__(key, val)

def copy(self, new_config_dict={}):

"""

Copies this config into a new config object, making

the changes given by new_config_dict.

"""

ret = Config(vars(self))

for key, val in new_config_dict.items():

ret.__setattr__(key, val)

return ret

def replace(self, new_config_dict):

"""

Copies new_config_dict into this config object.

Note: new_config_dict can also be a config object.

"""

if isinstance(new_config_dict, Config):

new_config_dict = vars(new_config_dict)

for key, val in new_config_dict.items():

self.__setattr__(key, val)

def print(self):

for k, v in vars(self).items():

print(k, ' = ', v)

# ----------------------- DATASETS ----------------------- #

dataset_base = Config({

'name': 'Base Dataset',

# Training images and annotations

'train_images': './data/coco/images/',

'train_info': 'path_to_annotation_file',

# Validation images and annotations.

'valid_images': './data/coco/images/',

'valid_info': 'path_to_annotation_file',

# Whether or not to load GT. If this is False, eval.py quantitative evaluation won't work.

'has_gt': True,

# A list of names for each of your classes.

'class_names': COCO_CLASSES,

# COCO class ids aren't sequential, so this is a bandage fix. If your ids aren't sequential,

# provide a map from category_id -> index in class_names + 1 (the +1 is there because it's 1-indexed).

# If not specified, this just assumes category ids start at 1 and increase sequentially.

'label_map': None

})

coco2014_dataset = dataset_base.copy({

'name': 'COCO 2014',

'train_info': './data/coco/annotations/instances_train2014.json',

'valid_info': './data/coco/annotations/instances_val2014.json',

'label_map': COCO_LABEL_MAP

})

coco2017_dataset = dataset_base.copy({

'name': 'COCO 2017',

'train_info': './data/coco/annotations/instances_train2017.json',

'valid_info': './data/coco/annotations/instances_val2017.json',

'label_map': COCO_LABEL_MAP

})

coco2017_testdev_dataset = dataset_base.copy({

'name': 'COCO 2017 Test-Dev',

'valid_info': './data/coco/annotations/image_info_test-dev2017.json',

'has_gt': False,

'label_map': COCO_LABEL_MAP

})

PASCAL_CLASSES = ("aeroplane", "bicycle", "bird", "boat", "bottle",

"bus", "car", "cat", "chair", "cow", "diningtable",

"dog", "horse", "motorbike", "person", "pottedplant",

"sheep", "sofa", "train", "tvmonitor")

pascal_sbd_dataset = dataset_base.copy({

'name': 'Pascal SBD 2012',

'train_images': './data/sbd/img',

'valid_images': './data/sbd/img',

'train_info': './data/sbd/pascal_sbd_train.json',

'valid_info': './data/sbd/pascal_sbd_val.json',

'class_names': PASCAL_CLASSES,

})

################################### cell: added configuration ##################################
cell_dataset = dataset_base.copy({
    'name': 'cell',

    'train_images': 'F:/img',
    'train_mask': 'F:/mask',
    'train_yaml': 'F:/yaml',

    'valid_images': 'F:/img',
    'valid_mask': 'F:/mask',
    'valid_yaml': 'F:/yaml',

    'train_info': 'None',
    'valid_info': 'None',

    'class_names': CELL_CLASS_NAMES,
    'label_map': CELL_LABEL_MAP
})

# ----------------------- TRANSFORMS ----------------------- #

resnet_transform = Config({

'channel_order': 'RGB',

'normalize': True,

'subtract_means': False,

'to_float': False,

})

vgg_transform = Config({

# Note that though vgg is traditionally BGR,

# the channel order of vgg_reducedfc.pth is RGB.

'channel_order': 'RGB',

'normalize': False,

'subtract_means': True,

'to_float': False,

})

darknet_transform = Config({

'channel_order': 'RGB',

'normalize': False,

'subtract_means': False,

'to_float': True,

})

# ----------------------- BACKBONES ----------------------- #

backbone_base = Config({

'name': 'Base Backbone',

'path': 'path/to/pretrained/weights',

'type': object,

'args': tuple(),

'transform': resnet_transform,

'selected_layers': list(),

'pred_scales': list(),

'pred_aspect_ratios': list(),

'use_pixel_scales': False,

'preapply_sqrt': True,

'use_square_anchors': False,

})

resnet101_backbone = backbone_base.copy({

'name': 'ResNet101',

'path': 'resnet101_reducedfc.pth',

'type': ResNetBackbone,

'args': ([3, 4, 23, 3],),

'transform': resnet_transform,

'selected_layers': list(range(2, 8)),

'pred_scales': [[1]]*6,

'pred_aspect_ratios': [ [[0.66685089, 1.7073535, 0.87508774, 1.16524493, 0.49059086]] ] * 6,

})

resnet101_gn_backbone = backbone_base.copy({

'name': 'ResNet101_GN',

'path': 'R-101-GN.pkl',

'type': ResNetBackboneGN,

'args': ([3, 4, 23, 3],),

'transform': resnet_transform,

'selected_layers': list(range(2, 8)),

'pred_scales': [[1]]*6,

'pred_aspect_ratios': [ [[0.66685089, 1.7073535, 0.87508774, 1.16524493, 0.49059086]] ] * 6,

})

resnet101_dcn_inter3_backbone = resnet101_backbone.copy({

'name': 'ResNet101_DCN_Interval3',

'args': ([3, 4, 23, 3], [0, 4, 23, 3], 3),

})

resnet50_backbone = resnet101_backbone.copy({

'name': 'ResNet50',

'path': 'resnet50-19c8e357.pth',

'type': ResNetBackbone,

'args': ([3, 4, 6, 3],),

'transform': resnet_transform,

})

resnet50_dcnv2_backbone = resnet50_backbone.copy({

'name': 'ResNet50_DCNv2',

'args': ([3, 4, 6, 3], [0, 4, 6, 3]),

})

darknet53_backbone = backbone_base.copy({

'name': 'DarkNet53',

'path': 'darknet53.pth',

'type': DarkNetBackbone,

'args': ([1, 2, 8, 8, 4],),

'transform': darknet_transform,

'selected_layers': list(range(3, 9)),

'pred_scales': [[3.5, 4.95], [3.6, 4.90], [3.3, 4.02], [2.7, 3.10], [2.1, 2.37], [1.8, 1.92]],

'pred_aspect_ratios': [ [[1, sqrt(2), 1/sqrt(2), sqrt(3), 1/sqrt(3)][:n], [1]] for n in [3, 5, 5, 5, 3, 3] ],

})

vgg16_arch = [[64, 64],

[ 'M', 128, 128],

[ 'M', 256, 256, 256],

[('M', {'kernel_size': 2, 'stride': 2, 'ceil_mode': True}), 512, 512, 512],

[ 'M', 512, 512, 512],

[('M', {'kernel_size': 3, 'stride': 1, 'padding': 1}),

(1024, {'kernel_size': 3, 'padding': 6, 'dilation': 6}),

(1024, {'kernel_size': 1})]]

vgg16_backbone = backbone_base.copy({

'name': 'VGG16',

'path': 'vgg16_reducedfc.pth',

'type': VGGBackbone,

'args': (vgg16_arch, [(256, 2), (128, 2), (128, 1), (128, 1)], [3]),

'transform': vgg_transform,

'selected_layers': [3] + list(range(5, 10)),

'pred_scales': [[5, 4]]*6,

'pred_aspect_ratios': [ [[1], [1, sqrt(2), 1/sqrt(2), sqrt(3), 1/sqrt(3)][:n]] for n in [3, 5, 5, 5, 3, 3] ],

})

# ----------------------- MASK BRANCH TYPES ----------------------- #

mask_type = Config({

# Direct produces masks directly as the output of each pred module.

# This is denoted as fc-mask in the paper.

# Parameters: mask_size, use_gt_bboxes

'direct': 0,

# Lincomb produces coefficients as the output of each pred module then uses those coefficients

# to linearly combine features from a prototype network to create image-sized masks.

# Parameters:

# - masks_to_train (int): Since we're producing (near) full image masks, it'd take too much

# vram to backprop on every single mask. Thus we select only a subset.

# - mask_proto_src (int): The input layer to the mask prototype generation network. This is an

# index in backbone.layers. Use to use the image itself instead.

# - mask_proto_net (list): A list of layers in the mask proto network with the last one

# being where the masks are taken from. Each conv layer is in

# the form (num_features, kernel_size, **kwdargs). An empty

# list means to use the source for prototype masks. If the

# kernel_size is negative, this creates a deconv layer instead.

# If the kernel_size is negative and the num_features is None,

# this creates a simple bilinear interpolation layer instead.

# - mask_proto_bias (bool): Whether to include an extra coefficient that corresponds to a proto

# mask of all ones.

# - mask_proto_prototype_activation (func): The activation to apply to each prototype mask.

# - mask_proto_mask_activation (func): After summing the prototype masks with the predicted

# coeffs, what activation to apply to the final mask.

# - mask_proto_coeff_activation (func): The activation to apply to the mask coefficients.

# - mask_proto_crop (bool): If True, crop the mask with the predicted bbox during training.

# - mask_proto_crop_expand (float): If cropping, the percent to expand the cropping bbox by

# in each direction. This is to make the model less reliant

# on perfect bbox predictions.

# - mask_proto_loss (str [l1|disj]): If not None, apply an l1 or disjunctive regularization

# loss directly to the prototype masks.

# - mask_proto_binarize_downsampled_gt (bool): Binarize GT after downsampling during training?

# - mask_proto_normalize_mask_loss_by_sqrt_area (bool): Whether to normalize mask loss by sqrt(sum(gt))

# - mask_proto_reweight_mask_loss (bool): Reweight mask loss such that background is divided by

# #background and foreground is divided by #foreground.

# - mask_proto_grid_file (str): The path to the grid file to use with the next option.

# This should be a numpy.dump file with shape [numgrids, h, w]

# where h and w are w.r.t. the mask_proto_src convout.

# - mask_proto_use_grid (bool): Whether to add extra grid features to the proto_net input.

# - mask_proto_coeff_gate (bool): Add an extra set of sigmoided coefficients that is multiplied

# into the predicted coefficients in order to "gate" them.

# - mask_proto_prototypes_as_features (bool): For each prediction module, downsample the prototypes

# to the convout size of that module and supply the prototypes as input

# in addition to the already supplied backbone features.

# - mask_proto_prototypes_as_features_no_grad (bool): If the above is set, don't backprop gradients to

# to the prototypes from the network head.

# - mask_proto_remove_empty_masks (bool): Remove masks that are downsampled to 0 during loss calculations.

# - mask_proto_reweight_coeff (float): The coefficient to multiply the foreground pixels with if reweighting.

# - mask_proto_coeff_diversity_loss (bool): Apply coefficient diversity loss on the coefficients so that the same

# instance has similar coefficients.

# - mask_proto_coeff_diversity_alpha (float): The weight to use for the coefficient diversity loss.

# - mask_proto_normalize_emulate_roi_pooling (bool): Normalize the mask loss to emulate roi pooling's effect on loss.

# - mask_proto_double_loss (bool): Whether to use the old loss in addition to any special new losses.

# - mask_proto_double_loss_alpha (float): The alpha to weight the above loss.

# - mask_proto_split_prototypes_by_head (bool): If true, this will give each prediction head its own prototypes.

# - mask_proto_crop_with_pred_box (bool): Whether to crop with the predicted box or the gt box.

'lincomb': 1,

})

# ----------------------- ACTIVATION FUNCTIONS ----------------------- #

activation_func = Config({

'tanh': torch.tanh,

'sigmoid': torch.sigmoid,

'softmax': lambda x: torch.nn.functional.softmax(x, dim=-1),

'relu': lambda x: torch.nn.functional.relu(x, inplace=True),

'none': lambda x: x,

})

# ----------------------- FPN DEFAULTS ----------------------- #

fpn_base = Config({

# The number of features to have in each FPN layer

'num_features': 256,

# The upsampling mode used

'interpolation_mode': 'bilinear',

# The number of extra layers to be produced by downsampling starting at P5

'num_downsample': 1,

# Whether to down sample with a 3x3 stride 2 conv layer instead of just a stride 2 selection

'use_conv_downsample': False,

# Whether to pad the pred layers with 1 on each side (I forgot to add this at the start)

# This is just here for backwards compatibility

'pad': True,

# Whether to add relu to the downsampled layers.

'relu_downsample_layers': False,

# Whether to add relu to the regular layers

'relu_pred_layers': True,

})

# ----------------------- CONFIG DEFAULTS ----------------------- #

coco_base_config = Config({

'dataset': coco2014_dataset,

'num_classes': 81, # This should include the background class

'max_iter': 400000,

# The maximum number of detections for evaluation

'max_num_detections': 100,

# dw' = momentum * dw - lr * (grad + decay * w)

'lr': 1e-3,

'momentum': 0.9,

'decay': 5e-4,

# For each lr step, what to multiply the lr with

'gamma': 0.1,

'lr_steps': (280000, 360000, 400000),

# Initial learning rate to linearly warmup from (if until > 0)

'lr_warmup_init': 1e-4,

# If > 0 then increase the lr linearly from warmup_init to lr each iter for until iters

'lr_warmup_until': 500,

# The terms to scale the respective loss by

'conf_alpha': 1,

'bbox_alpha': 1.5,

'mask_alpha': 0.4 / 256 * 140 * 140, # Some funky equation. Don't worry about it.

# Eval.py sets this if you just want to run YOLACT as a detector

'eval_mask_branch': True,

# Top_k examples to consider for NMS

'nms_top_k': 200,

# Examples with confidence less than this are not considered by NMS

'nms_conf_thresh': 0.05,

# Boxes with IoU overlap greater than this threshold will be culled during NMS

'nms_thresh': 0.5,

# See mask_type for details.

'mask_type': mask_type.direct,

'mask_size': 16,

'masks_to_train': 100,

'mask_proto_src': None,

'mask_proto_net': [(256, 3, {}), (256, 3, {})],

'mask_proto_bias': False,

'mask_proto_prototype_activation': activation_func.relu,

'mask_proto_mask_activation': activation_func.sigmoid,

'mask_proto_coeff_activation': activation_func.tanh,

'mask_proto_crop': True,

'mask_proto_crop_expand': 0,

'mask_proto_loss': None,

'mask_proto_binarize_downsampled_gt': True,

'mask_proto_normalize_mask_loss_by_sqrt_area': False,

'mask_proto_reweight_mask_loss': False,

'mask_proto_grid_file': 'data/grid.npy',

'mask_proto_use_grid': False,

'mask_proto_coeff_gate': False,

'mask_proto_prototypes_as_features': False,

'mask_proto_prototypes_as_features_no_grad': False,

'mask_proto_remove_empty_masks': False,

'mask_proto_reweight_coeff': 1,

'mask_proto_coeff_diversity_loss': False,

'mask_proto_coeff_diversity_alpha': 1,

'mask_proto_normalize_emulate_roi_pooling': False,

'mask_proto_double_loss': False,

'mask_proto_double_loss_alpha': 1,

'mask_proto_split_prototypes_by_head': False,

'mask_proto_crop_with_pred_box': False,

# SSD data augmentation parameters

# Randomize hue, vibrance, etc.

'augment_photometric_distort': True,

# Have a chance to scale down the image and pad (to emulate smaller detections)

'augment_expand': True,

# Potentially sample a random crop from the image and put it in a random place

'augment_random_sample_crop': True,

# Mirror the image with a probability of 1/2

'augment_random_mirror': True,

# Flip the image vertically with a probability of 1/2

'augment_random_flip': False,

# With uniform probability, rotate the image [0,90,180,270] degrees

'augment_random_rot90': False,

# Discard detections with width and height smaller than this (in absolute width and height)

'discard_box_width': 4 / 550,

'discard_box_height': 4 / 550,

# If using batchnorm anywhere in the backbone, freeze the batchnorm layer during training.

# Note: any additional batch norm layers after the backbone will not be frozen.

'freeze_bn': False,

# Set this to a config object if you want an FPN (inherit from fpn_base). See fpn_base for details.

'fpn': None,

# Use the same weights for each network head

'share_prediction_module': False,

# For hard negative mining, instead of using the negatives that are least confidently background,

# use negatives that are most confidently not background.

'ohem_use_most_confident': False,

# Use focal loss as described in https://arxiv.org/pdf/1708.02002.pdf instead of OHEM

'use_focal_loss': False,

'focal_loss_alpha': 0.25,

'focal_loss_gamma': 2,

# The initial bias toward foreground objects, as specified in the focal loss paper

'focal_loss_init_pi': 0.01,

# Keeps track of the average number of examples for each class, and weights the loss for that class accordingly.

'use_class_balanced_conf': False,

# Whether to use sigmoid focal loss instead of softmax, all else being the same.

'use_sigmoid_focal_loss': False,

# Use class[0] to be the objectness score and class[1:] to be the softmax predicted class.

# Note: at the moment this is only implemented if use_focal_loss is on.

'use_objectness_score': False,

# Adds a global pool + fc layer to the smallest selected layer that predicts the existence of each of the 80 classes.

# This branch is only evaluated during training time and is just there for multitask learning.

'use_class_existence_loss': False,

'class_existence_alpha': 1,

# Adds a 1x1 convolution directly to the biggest selected layer that predicts a semantic segmentations for each of the 80 classes.

# This branch is only evaluated during training time and is just there for multitask learning.

'use_semantic_segmentation_loss': False,

'semantic_segmentation_alpha': 1,

# Adds another branch to the network to predict Mask IoU.

'use_mask_scoring': False,

'mask_scoring_alpha': 1,

# Match gt boxes using the Box2Pix change metric instead of the standard IoU metric.

# Note that the threshold you set for iou_threshold should be negative with this setting on.

'use_change_matching': False,

# Uses the same network format as mask_proto_net, except this time it's for adding extra head layers before the final

# prediction in prediction modules. If this is none, no extra layers will be added.

'extra_head_net': None,

# What params should the final head layers have (the ones that predict box, confidence, and mask coeffs)

'head_layer_params': {'kernel_size': 3, 'padding': 1},

# Add extra layers between the backbone and the network heads

# The order is (bbox, conf, mask)

'extra_layers': (0, 0, 0),

# During training, to match detections with gt, first compute the maximum gt IoU for each prior.

# Then, any of those priors whose maximum overlap is over the positive threshold, mark as positive.

# For any priors whose maximum is less than the negative iou threshold, mark them as negative.

# The rest are neutral and not used in calculating the loss.

'positive_iou_threshold': 0.5,

'negative_iou_threshold': 0.5,

# When using ohem, the ratio between positives and negatives (3 means 3 negatives to 1 positive)

'ohem_negpos_ratio': 3,

# If less than 1, anchors treated as a negative that have a crowd iou over this threshold with

# the crowd boxes will be treated as a neutral.

'crowd_iou_threshold': 1,

# This is filled in at runtime by Yolact's __init__, so don't touch it

'mask_dim': None,

# Input image size.

'max_size': 300,

# Whether or not to do post processing on the cpu at test time

'force_cpu_nms': True,

# Whether to use mask coefficient cosine similarity nms instead of bbox iou nms

'use_coeff_nms': False,

# Whether or not to have a separate branch whose sole purpose is to act as the coefficients for coeff_diversity_loss

# Remember to turn on coeff_diversity_loss, or these extra coefficients won't do anything!

# To see their effect, also remember to turn on use_coeff_nms.

'use_instance_coeff': False,

'num_instance_coeffs': 64,

# Whether or not to tie the mask loss / box loss to 0

'train_masks': True,

'train_boxes': True,

# If enabled, the gt masks will be cropped using the gt bboxes instead of the predicted ones.

# This speeds up training time considerably but results in much worse mAP at test time.

'use_gt_bboxes': False,

# Whether or not to preserve aspect ratio when resizing the image.

# If True, this will resize all images to be max_size^2 pixels in area while keeping aspect ratio.

# If False, all images are resized to max_size x max_size

'preserve_aspect_ratio': False,

# Whether or not to use the prediction module (c) from DSSD

'use_prediction_module': False,

# Whether or not to use the predicted coordinate scheme from Yolo v2

'use_yolo_regressors': False,

# For training, bboxes are considered "positive" if their anchors have a 0.5 IoU overlap

# or greater with a ground truth box. If this is true, instead of using the anchor boxes

# for this IoU computation, the matching function will use the predicted bbox coordinates.

# Don't turn this on if you're not using yolo regressors!

'use_prediction_matching': False,

# A list of settings to apply after the specified iteration. Each element of the list should look like

# (iteration, config_dict) where config_dict is a dictionary you'd pass into a config object's init.

'delayed_settings': [],

# Use command-line arguments to set this.

'no_jit': False,

'backbone': None,

'name': 'base_config',

# Fast Mask Re-scoring Network

# Inspired by Mask Scoring R-CNN (https://arxiv.org/abs/1903.00241)

# Do not crop out the mask with bbox but slide a convnet on the image-size mask,

# then use global pooling to get the final mask score

'use_maskiou': False,

# Architecture for the mask iou network. A (num_classes-1, 1, {}) layer is appended to the end.

'maskiou_net': [],

# Discard predicted masks whose area is less than this

'discard_mask_area': -1,

'maskiou_alpha': 1.0,

'rescore_mask': False,

'rescore_bbox': False,

'maskious_to_train': -1,

})

# ----------------------- YOLACT v1.0 CONFIGS ----------------------- #

yolact_base_config = coco_base_config.copy({

'name': 'yolact_base',

# Dataset stuff, coco config

'dataset': coco2017_dataset,

'num_classes': len(coco2017_dataset.class_names) + 1,

# # Dataset stuff, cell config

# 'dataset': cell_dataset,

# 'num_classes': len(cell_dataset.class_names) + 1,

# Image Size

'max_size': 550,

# Training params

'lr_steps': (280000, 600000, 700000, 750000),

'max_iter': 800000,

# Backbone Settings

'backbone': resnet101_backbone.copy({

'selected_layers': list(range(1, 4)),

'use_pixel_scales': True,

'preapply_sqrt': False,

'use_square_anchors': True, # This is for backward compatibility with a bug

'pred_aspect_ratios': [ [[1, 1/2, 2]] ]*5,

'pred_scales': [[24], [48], [96], [192], [384]],

}),

# FPN Settings

'fpn': fpn_base.copy({

'use_conv_downsample': True,

'num_downsample': 2,

}),

# Mask Settings

'mask_type': mask_type.lincomb,

'mask_alpha': 6.125,

'mask_proto_src': 0,

'mask_proto_net': [(256, 3, {'padding': 1})] * 3 + [(None, -2, {}), (256, 3, {'padding': 1})] + [(32, 1, {})],

'mask_proto_normalize_emulate_roi_pooling': True,

# Other stuff

'share_prediction_module': True,

'extra_head_net': [(256, 3, {'padding': 1})],

'positive_iou_threshold': 0.5,

'negative_iou_threshold': 0.4,

'crowd_iou_threshold': 0.7,

'use_semantic_segmentation_loss': True,

})
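The comments in the base config describe how priors are labelled against the ground truth: a prior whose best IoU clears `positive_iou_threshold` is positive, one below `negative_iou_threshold` is negative, and anything in between is neutral and ignored by the loss. The sketch below illustrates that rule with the 0.5 / 0.4 values set in `yolact_base_config`. The function `label_priors` is a hypothetical helper written for this note, not part of YOLACT, and the handling of values exactly on a threshold is an assumption.

```python
def label_priors(max_ious, pos_thresh=0.5, neg_thresh=0.4):
    """Label each prior from its best IoU with any ground-truth box.

    Hypothetical helper for illustration only. Boundary handling
    (>= for positive, < for negative) is an assumption.
    """
    labels = []
    for iou in max_ious:
        if iou >= pos_thresh:
            labels.append('positive')   # used as a matched example
        elif iou < neg_thresh:
            labels.append('negative')   # treated as background
        else:
            labels.append('neutral')    # excluded from the loss
    return labels


labels = label_priors([0.7, 0.45, 0.1, 0.5])
print(labels)
```

With both thresholds at 0.5, as in `coco_base_config`, the neutral band disappears; lowering the negative threshold to 0.4 is what creates it.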

yolact_im400_config = yolact_base_config.copy({

'name': 'yolact_im400',

'max_size': 400,

'backbone': yolact_base_config.backbone.copy({

'pred_scales': [[int(x[0] / yolact_base_config.max_size * 400)] for x in yolact_base_config.backbone.pred_scales],

}),

})

yolact_im700_config = yolact_base_config.copy({

'name': 'yolact_im700',

'masks_to_train': 300,

'max_size': 700,

'backbone': yolact_base_config.backbone.copy({

'pred_scales': [[int(x[0] / yolact_base_config.max_size * 700)] for x in yolact_base_config.backbone.pred_scales],

}),

})
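The `pred_scales` expressions in the im400 and im700 configs rescale each anchor size by the ratio of the new input size to the base size of 550 and truncate the result to an integer. The arithmetic can be checked in isolation with the sketch below; `rescale_pred_scales` is a hypothetical name introduced here, while the numbers are copied from `yolact_base_config`.

```python
# Values copied from yolact_base_config.
base_max_size = 550
base_pred_scales = [[24], [48], [96], [192], [384]]


def rescale_pred_scales(pred_scales, base_size, new_size):
    """Scale every anchor size by new_size / base_size, truncating to int."""
    return [[int(s[0] / base_size * new_size)] for s in pred_scales]


scales_400 = rescale_pred_scales(base_pred_scales, base_max_size, 400)
scales_700 = rescale_pred_scales(base_pred_scales, base_max_size, 700)
print(scales_400)  # [[17], [34], [69], [139], [279]]
print(scales_700)  # [[30], [61], [122], [244], [488]]
```

If you change `max_size` for your own dataset, the same scaling is probably worth applying to `pred_scales`, so that the anchors keep the same size relative to the image.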

yolact_darknet53_config = yolact_base_config.copy({

'name': 'yolact_darknet53',

'backbone': darknet53_backbone.copy({

'selected_layers': list(range(2, 5)),

'pred_scales': yolact_base_config.backbone.pred_scales,

'pred_aspect_ratios': yolact_base_config.backbone.pred_aspect_ratios,

'use_pixel_scales': True,

'preapply_sqrt': False,

'use_square_anchors': True, # This is for backward compatibility with a bug

}),

})

yolact_resnet50_config = yolact_base_config.copy({

'name': 'yolact_resnet50',

'backbone': resnet50_backbone.copy({

'selected_layers': list(range(1, 4)),

'pred_scales': yolact_base_config.backbone.pred_scales,

'pred_aspect_ratios': yolact_base_config.backbone.pred_aspect_ratios,

'use_pixel_scales': True,

'preapply_sqrt': False,

'use_square_anchors': True, # This is for backward compatibility with a bug

}),

})

yolact_resnet50_pascal_config = yolact_resnet50_config.copy({

'name': None, # Will default to yolact_resnet50_pascal

# Dataset stuff

'dataset': pascal_sbd_dataset,

'num_classes': len(pascal_sbd_dataset.class_names) + 1,

'max_iter': 120000,

'lr_steps': (60000, 100000),

'backbone': yolact_resnet50_config.backbone.copy({

'pred_scales': [[32], [64], [128], [256], [512]],

'use_square_anchors': False,

})

})

############################################################################################################

yolact_base_cell_config = yolact_base_config.copy({

'name': 'yolact_base',

# Dataset stuff, cell config

'dataset': cell_dataset,

'num_classes': len(cell_dataset.class_names) + 1,

# Image Size

'max_size': 550,

# Training params

'lr_steps': (400, 1000, 1600, 2200),

'max_iter': 3000,

'lr': 1e-3,

'momentum': 0.9,

'decay': 5e-4,

# Backbone Settings

'backbone': resnet101_backbone.copy({

'selected_layers': list(range(1, 4)),

'use_pixel_scales': True,

'preapply_sqrt': False,

'use_square_anchors': True, # This is for backward compatibility with a bug

'pred_aspect_ratios': [[[1, 1 / 2, 2]]] * 5,

'pred_scales': [[24], [48], [96], [192], [384]],

}),

# FPN Settings

'fpn': fpn_base.copy({

'use_conv_downsample': True,

'num_downsample': 2,

}),

# Mask Settings

'mask_type': mask_type.lincomb,

'mask_alpha': 6.125,

'mask_proto_src': 0,

'mask_proto_net': [(256, 3, {'padding': 1})] * 3 + [(None, -2, {}), (256, 3, {'padding': 1})] + [(32, 1, {})],

'mask_proto_normalize_emulate_roi_pooling': True,

# Other stuff

'share_prediction_module': True,

'extra_head_net': [(256, 3, {'padding': 1})],

'positive_iou_threshold': 0.5,

'negative_iou_threshold': 0.4,

'crowd_iou_threshold': 0.7,

'use_semantic_segmentation_loss': True,

})
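To see what the `lr_steps` and `max_iter` values in the cell config imply, here is a minimal sketch of a step schedule. It assumes the learning rate is multiplied by a decay factor `gamma = 0.1` each time the iteration count reaches one of the `lr_steps`, and it ignores any warm-up phase. Both the factor and the function `lr_at` are assumptions made for illustration; check them against the training script you actually run.

```python
def lr_at(iteration, base_lr=1e-3, lr_steps=(400, 1000, 1600, 2200), gamma=0.1):
    """Learning rate under a plain step schedule (assumed gamma, no warm-up)."""
    # Count how many decay points have already been reached.
    steps_passed = sum(1 for step in lr_steps if iteration >= step)
    return base_lr * gamma ** steps_passed


for it in (0, 400, 1000, 1600, 2200, 2999):
    print(it, lr_at(it))
```

Under these assumptions the rate falls by four orders of magnitude by iteration 2200, so the last 800 iterations change the weights very little. If the loss is still decreasing at that point, moving the later steps closer to `max_iter` may be worth trying.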

# ----------------------- YOLACT++ CONFIGS ----------------------- #

yolact_plus_base_config = yolact_base_config.copy({

'name': 'yolact_plus_base',

'backbone': resnet101_dcn_inter3_backbone.copy({

'selected_layers': list(range(1, 4)),

'pred_aspect_ratios': [ [[1, 1/2, 2]] ]*5,

'pred_scales': [[i * 2 ** (j / 3.0) for j in range(3)] for i in [24, 48, 96, 192, 384]],

'use_pixel_scales': True,

'preapply_sqrt': False,

'use_square_anchors': False,

}),

'use_maskiou': True,

'maskiou_net': [(8, 3, {'stride': 2}), (16, 3, {'stride': 2}), (32, 3, {'stride': 2}), (64, 3, {'stride': 2}), (128, 3, {'stride': 2})],

'maskiou_alpha': 25,

'rescore_bbox': False,

'rescore_mask': True,

'discard_mask_area': 5*5,

})

yolact_plus_resnet50_config = yolact_plus_base_config.copy({

'name': 'yolact_plus_resnet50',

'backbone': resnet50_dcnv2_backbone.copy({

'selected_layers': list(range(1, 4)),

'pred_aspect_ratios': [ [[1, 1/2, 2]] ]*5,

'pred_scales': [[i * 2 ** (j / 3.0) for j in range(3)] for i in [24, 48, 96, 192, 384]],

'use_pixel_scales': True,

'preapply_sqrt': False,

'use_square_anchors': False,

}),

})

# Default config

cfg = yolact_base_config.copy()

def set_cfg(config_name:str):
    """ Sets the active config. Works even if cfg is already imported! """
    global cfg

    # Note this is not just an eval because I'm lazy, but also because it can
    # be used like ssd300_config.copy({'max_size': 400}) for extreme fine-tuning
    cfg.replace(eval(config_name))

    if cfg.name is None:
        cfg.name = config_name.split('_config')[0]

def set_dataset(dataset_name:str):
    """ Sets the dataset of the current config. """
    cfg.dataset = eval(dataset_name)
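Every config above is built with `copy({...})`, and `set_cfg` switches the active config with `cfg.replace(...)`. The sketch below shows why that pattern works: `copy` returns a new object with a few keys overridden, while `replace` updates the existing `cfg` object in place, so modules that imported `cfg` earlier see the new values. `SimpleConfig` is a simplified, hypothetical stand-in written for this note; it is not the actual class from config.py, and the keys are a small subset chosen for illustration.

```python
class SimpleConfig:
    """Hypothetical, simplified stand-in for the config object in config.py."""

    def __init__(self, config_dict):
        for key, val in config_dict.items():
            setattr(self, key, val)

    def copy(self, overrides=None):
        # New object with the same attributes (shallow copy), then overrides.
        new = SimpleConfig(vars(self))
        for key, val in (overrides or {}).items():
            setattr(new, key, val)
        return new

    def replace(self, other):
        # Overwrite attributes in place so existing references stay valid.
        for key, val in vars(other).items():
            setattr(self, key, val)


base_config = SimpleConfig({'name': 'base', 'max_size': 550, 'max_iter': 800000})
cell_config = base_config.copy({'name': 'cell', 'max_iter': 3000})

cfg = base_config.copy()
alias = cfg                # what another module would hold after importing cfg
cfg.replace(cell_config)   # switch the active config in place

print(alias.name, alias.max_size, alias.max_iter)
```

In the real file, calling `set_cfg('yolact_base_cell_config')` plays the role of the `cfg.replace(cell_config)` line here. Because the copy is shallow, nested objects such as the backbone are shared unless they are copied explicitly, which is why the configs above call `backbone.copy({...})` before changing backbone fields.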

Modify the __init__.py file in the yolact-master/data folder

from .config import *

from .coco import *

from .cell import * ##############################################################

import torch

import cv2

import numpy as np